365-Day Deep Learning Training Camp - Week P5: Sneaker Recognition

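- This post is a study log from the 365-Day Deep Learning Training Camp

- Reference article: PyTorch in Practice | Week P5: Sneaker Recognition

- Original author: K同学啊 | tutoring and custom projects available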

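Requirements:

Understand how to set a dynamic learning rate (key point)

Adjust the code so that test-set accuracy reaches 84%.

Advanced (optional):

Save the best model weights seen during training

Adjust the code so that test-set accuracy reaches 86%.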

My environment:

Language: Python 3.8

Editor: Jupyter Lab

Deep learning framework: PyTorch

Dataset: K同学啊's Baidu Netdisk, 和鲸 (Heywhale)

I. Preparation

- Set up the GPU

```python
import os
import pathlib

import PIL
import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

device
```

Use the GPU if the machine supports one; otherwise, fall back to the CPU.

- Import the data

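```python
import os, PIL, random, pathlib

data_dir = './data/5-data/'
data_dir = pathlib.Path(data_dir)

# Each subfolder of train/ is one class; Path.name returns the folder name on any OS
data_paths = list((data_dir / 'train').glob('*'))
classeNames = sorted(path.name for path in data_paths)

classeNames
```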
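Splitting the path string on `"\\"` only works when the path uses Windows separators. On Linux or macOS the whole path comes back as a single piece, so index `[2]` raises `IndexError`. `pathlib`'s `.name` returns the last path component on any OS.

The check below uses the pure path classes, so it behaves the same on any machine. The folder name `adidas` is only an illustrative value.

```python
from pathlib import PurePosixPath, PureWindowsPath

# The same relative path, rendered with each OS's separator
win_path = PureWindowsPath("data/5-data/train/adidas")
posix_path = PurePosixPath("data/5-data/train/adidas")

win_parts = str(win_path).split("\\")      # Windows paths use backslashes
posix_parts = str(posix_path).split("\\")  # no backslashes, so nothing is split

print(win_parts)    # ['data', '5-data', 'train', 'adidas']
print(posix_parts)  # ['data/5-data/train/adidas']
print(win_path.name, posix_path.name)  # adidas adidas
```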

```python
# For more on transforms.Compose, see: https://blog.csdn.net/qq_38251616/article/details/124878863
train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),        # resize every input image to the same size
    # transforms.RandomHorizontalFlip(),  # random horizontal flip
    transforms.ToTensor(),                # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(                 # standardize each channel, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])        # the standard per-channel ImageNet mean and std
])

test_transform = transforms.Compose([
    transforms.Resize([224, 224]),        # same preprocessing as training, without augmentation
    transforms.ToTensor(),
    transforms.Normalize(
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])
])

train_dataset = datasets.ImageFolder("./data/5-data/train/", transform=train_transforms)
# Evaluate the test set with test_transform (no augmentation)
test_dataset = datasets.ImageFolder("./data/5-data/test/", transform=test_transform)

train_dataset.class_to_idx
```

II. Build a Simple CNN

```python
batch_size = 32

train_dl = torch.utils.data.DataLoader(train_dataset,
                                       batch_size=batch_size,
                                       shuffle=True,
                                       num_workers=1)

test_dl = torch.utils.data.DataLoader(test_dataset,
                                      batch_size=batch_size,
                                      shuffle=True,
                                      num_workers=1)
```

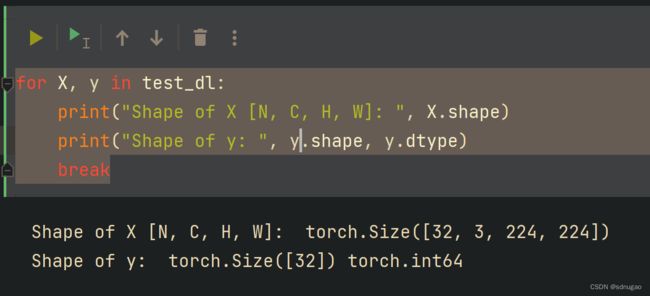

```python
for X, y in test_dl:
    print("Shape of X [N, C, H, W]: ", X.shape)
    print("Shape of y: ", y.shape, y.dtype)
    break
```

III. Train the Model

```python
import torch.nn.functional as F

class Model(nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 12, kernel_size=5, padding=0),   # 12*220*220
            nn.BatchNorm2d(12),
            nn.ReLU())
        self.conv2 = nn.Sequential(
            nn.Conv2d(12, 12, kernel_size=5, padding=0),  # 12*216*216
            nn.BatchNorm2d(12),
            nn.ReLU())
        self.pool3 = nn.Sequential(
            nn.MaxPool2d(2))                              # 12*108*108
        self.conv4 = nn.Sequential(
            nn.Conv2d(12, 24, kernel_size=5, padding=0),  # 24*104*104
            nn.BatchNorm2d(24),
            nn.ReLU())
        self.conv5 = nn.Sequential(
            nn.Conv2d(24, 24, kernel_size=5, padding=0),  # 24*100*100
            nn.BatchNorm2d(24),
            nn.ReLU())
        self.pool6 = nn.Sequential(
            nn.MaxPool2d(2))                              # 24*50*50
        self.dropout = nn.Sequential(
            nn.Dropout(0.2))
        self.fc = nn.Sequential(
            nn.Linear(24*50*50, len(classeNames)))

    def forward(self, x):
        batch_size = x.size(0)
        x = self.conv1(x)    # conv-BN-ReLU
        x = self.conv2(x)    # conv-BN-ReLU
        x = self.pool3(x)    # pooling
        x = self.conv4(x)    # conv-BN-ReLU
        x = self.conv5(x)    # conv-BN-ReLU
        x = self.pool6(x)    # pooling
        x = self.dropout(x)
        x = x.view(batch_size, -1)  # flatten for the fully connected layer: (batch, 24*50*50); -1 is inferred as 24*50*50
        x = self.fc(x)
        return x

device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = Model().to(device)
model
```

```
Model(
  (conv1): Sequential(
    (0): Conv2d(3, 12, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (conv2): Sequential(
    (0): Conv2d(12, 12, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (pool3): Sequential(
    (0): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (conv4): Sequential(
    (0): Conv2d(12, 24, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (conv5): Sequential(
    (0): Conv2d(24, 24, kernel_size=(5, 5), stride=(1, 1))
    (1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (2): ReLU()
  )
  (pool6): Sequential(
    (0): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (dropout): Sequential(
    (0): Dropout(p=0.2, inplace=False)
  )
  (fc): Sequential(
    (0): Linear(in_features=60000, out_features=2, bias=True)
  )
)
```
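The `in_features=60000` in the printout comes from tracking the feature-map size through the layers. With no padding, each 5×5 convolution (stride 1) shrinks height and width by 4, and each `MaxPool2d(2)` halves them. Both follow the usual output-size formula floor((size + 2·padding - kernel) / stride) + 1.

The small helper below reproduces the sizes in the model's comments. The name `out_size` is ours for illustration and is not a PyTorch function.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Output height/width of a conv or pooling layer (floor division, no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1

sizes = [224]
layers = [("conv1", 5, 1), ("conv2", 5, 1), ("pool3", 2, 2),
          ("conv4", 5, 1), ("conv5", 5, 1), ("pool6", 2, 2)]
for name, kernel, stride in layers:
    sizes.append(out_size(sizes[-1], kernel, stride))
    print(f"{name}: {sizes[-1]}x{sizes[-1]}")

in_features = 24 * sizes[-1] * sizes[-1]  # 24 channels after conv5/pool6
print(in_features)  # 60000
```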

- Write the training function

```python
# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches, ceil(size / batch_size)

    train_loss, train_acc = 0, 0    # accumulated training loss and correct-prediction count

    for X, y in dataloader:         # fetch images and their labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)             # network output
        loss = loss_fn(pred, y)     # loss between the network output and the ground-truth labels y

        # Backpropagation
        optimizer.zero_grad()       # reset the gradients
        loss.backward()             # backpropagate
        optimizer.step()            # update the weights

        # Record accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss
```

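- Write the test function

The test function is largely the same as the training function. Because it does not run gradient descent to update the network weights, it does not take an optimizer.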

```python
def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches, ceil(size / batch_size)
    test_loss, test_acc = 0, 0

    # No training here, so disable gradient tracking to save memory and computation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss
```

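- Set a dynamic learning rate

✨ Custom learning-rate schedule

The function below sets the new learning rate directly in each of the optimizer's parameter groups. Calling PyTorch's official dynamic learning-rate interface (for example `LambdaLR`, covered in Section VI) produces an equivalent schedule.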

```python
def adjust_learning_rate(optimizer, epoch, start_lr):
    # Every 2 epochs, decay the learning rate to 0.92x its previous value
    lr = start_lr * (0.92 ** (epoch // 2))
    for param_group in optimizer.param_groups:
        param_group['lr'] = lr

learn_rate = 1e-4  # initial learning rate
optimizer = torch.optim.SGD(model.parameters(), lr=learn_rate)
```
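Because of the integer division `epoch // 2`, the learning rate stays constant for two consecutive epochs and then shrinks by a factor of 0.92. Evaluating the same formula in plain Python for the first six epoch indices gives the values in the `Lr` column of the training log below.

```python
start_lr = 1e-4

# Same formula as adjust_learning_rate, evaluated for epoch indices 0..5
lrs = [start_lr * (0.92 ** (epoch // 2)) for epoch in range(6)]
formatted = ["{:.2E}".format(lr) for lr in lrs]  # same format as the training log
print(formatted)
# ['1.00E-04', '1.00E-04', '9.20E-05', '9.20E-05', '8.46E-05', '8.46E-05']
```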

- Train the model

```python
loss_fn = nn.CrossEntropyLoss()  # create the loss function
epochs = 40

train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    # Update the learning rate (when using the custom schedule)
    adjust_learning_rate(optimizer, epoch, learn_rate)

    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)
    # scheduler.step()  # update the learning rate (when using the official scheduler interface)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Get the current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss,
                          epoch_test_acc*100, epoch_test_loss, lr))
print('Done')
```

```
Epoch: 1, Train_acc:53.8%, Train_loss:0.749, Test_acc:51.3%, Test_loss:0.719, Lr:1.00E-04
Epoch: 2, Train_acc:61.4%, Train_loss:0.659, Test_acc:63.2%, Test_loss:0.600, Lr:1.00E-04
Epoch: 3, Train_acc:64.5%, Train_loss:0.634, Test_acc:71.1%, Test_loss:0.554, Lr:9.20E-05
Epoch: 4, Train_acc:69.9%, Train_loss:0.578, Test_acc:76.3%, Test_loss:0.541, Lr:9.20E-05
Epoch: 5, Train_acc:75.3%, Train_loss:0.534, Test_acc:73.7%, Test_loss:0.517, Lr:8.46E-05
Epoch: 6, Train_acc:75.7%, Train_loss:0.505, Test_acc:77.6%, Test_loss:0.488, Lr:8.46E-05
Epoch: 7, Train_acc:78.3%, Train_loss:0.477, Test_acc:77.6%, Test_loss:0.527, Lr:7.79E-05
Epoch: 8, Train_acc:77.3%, Train_loss:0.474, Test_acc:71.1%, Test_loss:0.499, Lr:7.79E-05
Epoch: 9, Train_acc:81.5%, Train_loss:0.456, Test_acc:78.9%, Test_loss:0.465, Lr:7.16E-05
Epoch:10, Train_acc:82.5%, Train_loss:0.429, Test_acc:81.6%, Test_loss:0.478, Lr:7.16E-05
Epoch:11, Train_acc:83.7%, Train_loss:0.417, Test_acc:81.6%, Test_loss:0.450, Lr:6.59E-05
Epoch:12, Train_acc:85.1%, Train_loss:0.395, Test_acc:82.9%, Test_loss:0.481, Lr:6.59E-05
Epoch:13, Train_acc:84.3%, Train_loss:0.387, Test_acc:82.9%, Test_loss:0.449, Lr:6.06E-05
Epoch:14, Train_acc:87.5%, Train_loss:0.362, Test_acc:81.6%, Test_loss:0.455, Lr:6.06E-05
Epoch:15, Train_acc:87.5%, Train_loss:0.362, Test_acc:82.9%, Test_loss:0.436, Lr:5.58E-05
Epoch:16, Train_acc:88.6%, Train_loss:0.353, Test_acc:82.9%, Test_loss:0.431, Lr:5.58E-05
Epoch:17, Train_acc:89.2%, Train_loss:0.350, Test_acc:82.9%, Test_loss:0.419, Lr:5.13E-05
Epoch:18, Train_acc:87.8%, Train_loss:0.346, Test_acc:81.6%, Test_loss:0.404, Lr:5.13E-05
Epoch:19, Train_acc:89.8%, Train_loss:0.322, Test_acc:80.3%, Test_loss:0.443, Lr:4.72E-05
Epoch:20, Train_acc:87.3%, Train_loss:0.340, Test_acc:84.2%, Test_loss:0.452, Lr:4.72E-05
Epoch:21, Train_acc:89.2%, Train_loss:0.336, Test_acc:82.9%, Test_loss:0.434, Lr:4.34E-05
Epoch:22, Train_acc:91.8%, Train_loss:0.314, Test_acc:81.6%, Test_loss:0.436, Lr:4.34E-05
Epoch:23, Train_acc:92.4%, Train_loss:0.306, Test_acc:81.6%, Test_loss:0.402, Lr:4.00E-05
Epoch:24, Train_acc:90.6%, Train_loss:0.309, Test_acc:81.6%, Test_loss:0.445, Lr:4.00E-05
Epoch:25, Train_acc:92.6%, Train_loss:0.295, Test_acc:84.2%, Test_loss:0.377, Lr:3.68E-05
Epoch:26, Train_acc:92.4%, Train_loss:0.298, Test_acc:82.9%, Test_loss:0.412, Lr:3.68E-05
Epoch:27, Train_acc:93.2%, Train_loss:0.298, Test_acc:81.6%, Test_loss:0.389, Lr:3.38E-05
Epoch:28, Train_acc:93.4%, Train_loss:0.284, Test_acc:81.6%, Test_loss:0.426, Lr:3.38E-05
Epoch:29, Train_acc:93.2%, Train_loss:0.284, Test_acc:84.2%, Test_loss:0.405, Lr:3.11E-05
Epoch:30, Train_acc:94.0%, Train_loss:0.278, Test_acc:82.9%, Test_loss:0.452, Lr:3.11E-05
Epoch:31, Train_acc:92.8%, Train_loss:0.286, Test_acc:82.9%, Test_loss:0.419, Lr:2.86E-05
Epoch:32, Train_acc:94.4%, Train_loss:0.275, Test_acc:82.9%, Test_loss:0.434, Lr:2.86E-05
Epoch:33, Train_acc:95.2%, Train_loss:0.269, Test_acc:82.9%, Test_loss:0.385, Lr:2.63E-05
Epoch:34, Train_acc:94.2%, Train_loss:0.269, Test_acc:82.9%, Test_loss:0.412, Lr:2.63E-05
Epoch:35, Train_acc:93.2%, Train_loss:0.273, Test_acc:82.9%, Test_loss:0.426, Lr:2.42E-05
Epoch:36, Train_acc:94.2%, Train_loss:0.275, Test_acc:82.9%, Test_loss:0.386, Lr:2.42E-05
Epoch:37, Train_acc:94.4%, Train_loss:0.262, Test_acc:82.9%, Test_loss:0.393, Lr:2.23E-05
Epoch:38, Train_acc:95.2%, Train_loss:0.264, Test_acc:82.9%, Test_loss:0.375, Lr:2.23E-05
Epoch:39, Train_acc:94.2%, Train_loss:0.265, Test_acc:82.9%, Test_loss:0.422, Lr:2.05E-05
Epoch:40, Train_acc:94.6%, Train_loss:0.257, Test_acc:82.9%, Test_loss:0.379, Lr:2.05E-05
Done
```

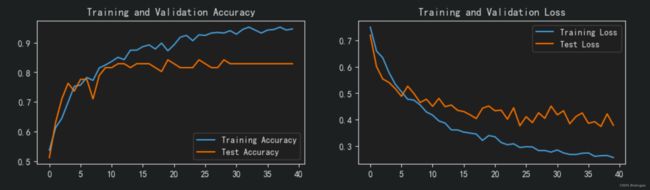

IV. Visualize the Results

- Loss and accuracy curves

```python
import matplotlib.pyplot as plt
# Hide warnings
import warnings
warnings.filterwarnings("ignore")             # ignore warning messages
plt.rcParams['font.sans-serif'] = ['SimHei']  # display Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False    # display the minus sign correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))
plt.subplot(1, 2, 1)

plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')
plt.show()
```

![Training/test accuracy and loss curves](output_28_0.png)

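- Predict a specified image

⭐ torch.squeeze() explained

Compresses a tensor's shape by removing dimensions of size 1.

Function signature:

torch.squeeze(input, dim=None, *, out=None)

Key parameters:

input (Tensor): the input tensor

dim (int, optional): if given, the input is squeezed only in this dimension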
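Here is a quick illustration on a dummy tensor of shape (2, 1, 3, 1). The shape is arbitrary; it was chosen only because it has two size-1 dimensions.

```python
import torch

x = torch.zeros(2, 1, 3, 1)

a = torch.squeeze(x)         # remove every size-1 dimension
b = torch.squeeze(x, dim=1)  # remove only dimension 1 (size 1)
c = torch.squeeze(x, dim=0)  # dimension 0 has size 2, so nothing changes

print(a.shape)  # torch.Size([2, 3])
print(b.shape)  # torch.Size([2, 3, 1])
print(c.shape)  # torch.Size([2, 1, 3, 1])
```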


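⭐ torch.unsqueeze()

Expands a tensor's shape by inserting a dimension of size 1 at the specified position.

Function signature:

torch.unsqueeze(input, dim)

Key parameters:

input (Tensor): the input tensor

dim (int): the index at which to insert the singleton dimension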
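In `predict_one_image` below, `unsqueeze(0)` turns a single transformed image of shape [C, H, W] into a batch of one, [1, C, H, W], which is the shape the model expects. Here is a sketch with a dummy tensor the same size as this post's inputs.

```python
import torch

img = torch.zeros(3, 224, 224)   # one image after transforms: [C, H, W]

batch = torch.unsqueeze(img, 0)  # insert a size-1 batch dimension at index 0
last = img.unsqueeze(-1)         # negative indices count from the end

print(batch.shape)  # torch.Size([1, 3, 224, 224])
print(last.shape)   # torch.Size([3, 224, 224, 1])
print(torch.squeeze(batch, 0).shape)  # torch.Size([3, 224, 224]), undoing the unsqueeze
```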


```python
from PIL import Image

classes = list(train_dataset.class_to_idx)

def predict_one_image(image_path, model, transform, classes):
    test_img = Image.open(image_path).convert('RGB')
    # plt.imshow(test_img)  # show the image being predicted

    test_img = transform(test_img)
    img = test_img.to(device).unsqueeze(0)  # add a batch dimension: [C, H, W] -> [1, C, H, W]

    model.eval()
    with torch.no_grad():
        output = model(img)

    _, pred = torch.max(output, 1)
    pred_class = classes[pred.item()]
    print(f'Prediction: {pred_class}')

# Predict one image from the test set
predict_one_image(image_path='./data/5-data/test/adidas/1.jpg',
                  model=model,
                  transform=test_transform,
                  classes=classes)
```

V. Save and Load the Model

```python
# Save the model
PATH = './model.pth'  # file name for the saved parameters
torch.save(model.state_dict(), PATH)

# Load the parameters back into the model
model.load_state_dict(torch.load(PATH, map_location=device))
```
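The optional task asks you to keep the best weights seen during training. One common pattern, sketched here under assumptions, is to snapshot `model.state_dict()` whenever the test accuracy improves and then save that snapshot at the end.

The following are hypothetical placeholders, not part of the original code:

- the helper `update_best`
- the toy `nn.Linear` model
- the file name `best_model.pth`
- the accuracy values

In the real loop you would pass `epoch_test_acc` after each call to `test(...)`.

```python
import copy
import torch
import torch.nn as nn

def update_best(model, acc, best_acc, best_state):
    """Hypothetical helper: return (best_acc, best_state), snapshotting weights when acc improves."""
    if acc > best_acc:
        # deepcopy is needed: state_dict() returns tensors that share storage with the
        # live parameters, so later in-place optimizer updates would overwrite them.
        return acc, copy.deepcopy(model.state_dict())
    return best_acc, best_state

# Toy stand-in for the training loop: the weights are overwritten each "epoch",
# and the accuracies are illustrative placeholders, not real results.
model = nn.Linear(4, 2)
best_acc, best_state = 0.0, None
for epoch, acc in enumerate([0.50, 0.80, 0.70]):
    with torch.no_grad():
        model.weight.fill_(float(epoch))  # simulate an optimizer update
    best_acc, best_state = update_best(model, acc, best_acc, best_state)

torch.save(best_state, "best_model.pth")             # persist the best snapshot
model.load_state_dict(torch.load("best_model.pth"))  # restore it later
print(best_acc, model.weight[0, 0].item())           # 0.8 1.0
```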

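VI. Dynamic Learning Rate

- torch.optim.lr_scheduler.StepLR

Decays the learning rate at fixed intervals: every step_size epochs, the learning rate is multiplied by gamma.

Function signature:

torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

Key parameters:

optimizer (Optimizer): the optimizer instance defined earlier

step_size (int): the decay period; the learning rate decays once every step_size epochs

gamma (float): multiplicative factor of learning-rate decay. Default: 0.1

Usage example: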

```python
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=5, gamma=0.1)
```
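To see the schedule this produces, the sketch below steps the scheduler once per epoch and records the learning rate in effect during each epoch. A toy `nn.Linear` stands in for the CNN, and the real training batches are omitted. `optimizer.step()` is still called so that the call order matches real training.

```python
import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # toy stand-in for the CNN
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=5, gamma=0.1)

lrs = []
for epoch in range(12):
    lrs.append(optimizer.param_groups[0]["lr"])  # lr used in this epoch
    optimizer.step()   # the training batches would go here
    scheduler.step()   # advance the schedule once per epoch

# Expect 0.001 for epochs 0-4, 0.0001 for epochs 5-9, 0.00001 for epochs 10-11
print(lrs)
```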

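- lr_scheduler.LambdaLR

Updates the learning rate with a user-defined function.

Function signature:

torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1, verbose=False)

Key parameters:

optimizer (Optimizer): the optimizer instance defined earlier

lr_lambda (function): a function that takes the epoch index and returns a multiplicative factor; the learning rate is set to the initial learning rate times this factor

Usage example: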

```python
lambda1 = lambda epoch: 0.92 ** (epoch // 2)  # multiply the initial lr by 0.92 every 2 epochs
optimizer = torch.optim.SGD(model.parameters(), lr=learn_rate)
scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=lambda1)  # choose the schedule
```
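`LambdaLR` sets each group's learning rate to the initial learning rate times `lr_lambda(epoch)`. With `lambda1`, it therefore reproduces the schedule of the custom `adjust_learning_rate` function used during training.

The minimal check below uses a toy model in place of the CNN and omits the training step.

```python
import torch
import torch.nn as nn

learn_rate = 1e-4
model = nn.Linear(4, 2)  # toy stand-in for the CNN
optimizer = torch.optim.SGD(model.parameters(), lr=learn_rate)
lambda1 = lambda epoch: 0.92 ** (epoch // 2)
scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=lambda1)

scheduled = []
for epoch in range(10):
    scheduled.append(optimizer.param_groups[0]["lr"])  # lr used in this epoch
    optimizer.step()   # the training batches would go here
    scheduler.step()

# The formula used by adjust_learning_rate
manual = [learn_rate * (0.92 ** (epoch // 2)) for epoch in range(10)]
print(all(abs(a - b) < 1e-12 for a, b in zip(scheduled, manual)))  # True
```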

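- lr_scheduler.MultiStepLR

Adjusts the learning rate at specific epochs.

Function signature:

torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1, verbose=False)

Key parameters:

optimizer (Optimizer): the optimizer instance defined earlier

milestones (list): a list of epoch indices at which the learning rate is decayed; must be in increasing order

gamma (float): multiplicative factor of learning-rate decay. Default: 0.1

Usage example: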


```python
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)
scheduler = torch.optim.lr_scheduler.MultiStepLR(optimizer,
                                                 milestones=[2, 6, 15],  # epochs at which to decay the learning rate
                                                 gamma=0.1)
```
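The same kind of check works for `MultiStepLR` with the milestones above: the learning rate is multiplied by gamma when the epoch counter reaches 2, 6 and 15. As before, a toy model stands in for the CNN and the training step is omitted.

```python
import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # toy stand-in for the CNN
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)
scheduler = torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones=[2, 6, 15], gamma=0.1)

lrs = []
for epoch in range(17):
    lrs.append(optimizer.param_groups[0]["lr"])  # lr used in this epoch
    optimizer.step()   # the training batches would go here
    scheduler.step()

# Expect 1e-3 for epochs 0-1, 1e-4 for 2-5, 1e-5 for 6-14, 1e-6 for 15-16
print(lrs)
```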

For more of the official learning-rate schedulers, see: https://pytorch.org/docs/stable/optim.html

Example of calling the official interface:

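```python
import torch
import torch.nn as nn
from torch.optim import SGD
from torch.optim.lr_scheduler import ExponentialLR

model = nn.Linear(2, 2)                          # toy model for illustration
optimizer = SGD(model.parameters(), lr=0.1)
scheduler = ExponentialLR(optimizer, gamma=0.9)  # multiply the lr by 0.9 after every epoch
loss_fn = nn.MSELoss()
dataset = [(torch.randn(4, 2), torch.randn(4, 2)) for _ in range(5)]  # synthetic (input, target) batches

for epoch in range(20):
    for inputs, targets in dataset:
        optimizer.zero_grad()
        output = model(inputs)
        loss = loss_fn(output, targets)
        loss.backward()
        optimizer.step()
    scheduler.step()  # step the scheduler once per epoch, after the optimizer updates
```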