YOLOv5 Study Notes (6): Optimizing the Network Architecture

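Contents

1 Overall architecture analysis

1.1 Focus

1.2 Conv module

1.3 Bottleneck module

1.4 C3 module: cross-scale connection

1.5 SPP: spatial pyramid pooling

1.6 Concat

2 Modifying the network architecture

2.2 Small objects

2.1 Lightweighting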

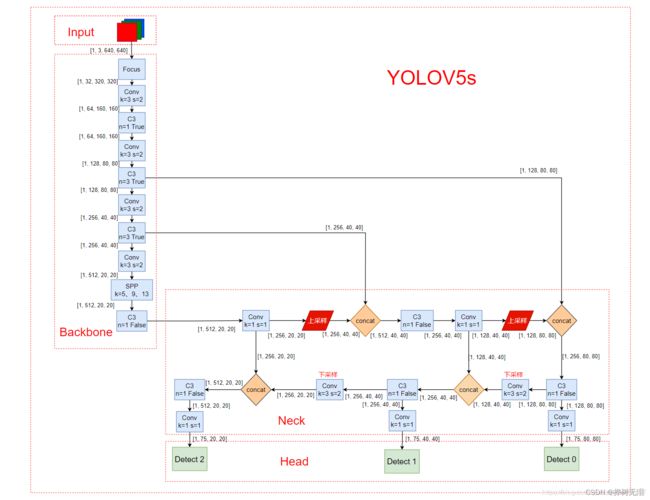

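1 Overall architecture analysis

Backbone: extracts features.

Neck: mixes and combines the features and passes them on to the prediction layers.

Head: produces the final predictions.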
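
Each row of the configuration listed in this section has the form `[from, number, module, args]`. As a rough sketch of how such a row could be scaled for a particular model size, the snippet below applies a depth multiplier to `number` and a width multiplier to the first argument (taken to be the output channel count), rounded up to a multiple of 8. The multipliers 0.33 and 0.50 are an assumption, chosen because they reproduce the per-layer values noted in the YAML comments (9 repeats becoming 3, 64 channels becoming 32). `scale_row` is a hypothetical helper written for illustration, not a YOLOv5 function.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scale_row(row, depth_multiple, width_multiple):
    """Scale one [from, number, module, args] row (hypothetical helper)."""
    src, number, module, args = row
    # Repeats are only scaled when the module is repeated more than once.
    repeats = max(round(number * depth_multiple), 1) if number > 1 else number
    # The first argument is assumed to be the output channel count.
    out_channels = make_divisible(args[0] * width_multiple)
    return [src, repeats, module, [out_channels, *args[1:]]]


rows = [
    [-1, 1, "Focus", [64, 3]],
    [-1, 1, "Conv", [128, 3, 2]],
    [-1, 3, "C3", [128]],
    [-1, 9, "C3", [256]],
]
scaled = [scale_row(r, depth_multiple=0.33, width_multiple=0.50) for r in rows]
for row in scaled:
    print(row)
```

With these assumed multipliers, the C3 row with 9 repeats and 256 channels comes out as 3 repeats and 128 channels, which lines up with the `[128, 128, 3]` annotation next to layer 4.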

# anchors

anchors:

- [10,13, 16,30, 33,23] # P3/8 stride=8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

backbone:

# [from, number, module, args]

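# from: which layer's output feeds this module; -1 means the output of the previous layer

# number: how many times this module is repeated; 1 means a single instance, 3 means three repeats

# module: module name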

[[-1, 1, Focus, [64, 3]], # 0-P1/2 [3, 32, 3]

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4 [32, 64, 3, 2]

[-1, 3, C3, [128]], # 2 [64, 64, 1]

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8 [64, 128, 3, 2]

[-1, 9, C3, [256]], # 4 [128, 128, 3]

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16 [128, 256, 3, 2]

[-1, 9, C3, [512]], # 6 [256, 256, 3]

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32 [256, 512, 3, 2]

[-1, 1, SPP, [1024, [5, 9, 13]]], # 8 [512, 512, [5, 9, 13]]

[-1, 3, C3, [1024, False]], # 9 [512, 512, 1, False]

# [nc, anchors, output channels of the 3 Detect layers]

# [1, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [128, 256, 512]]

]

head:

[[-1, 1, Conv, [512, 1, 1]], # 10 [512, 256, 1, 1]

[-1, 1, nn.Upsample, [None, 2, 'nearest']], # 11 [None, 2, 'nearest']

[[-1, 6], 1, Concat, [1]], # 12 cat backbone P4 [1]

[-1, 3, C3, [512, False]], # 13 [512, 256, 1, False]

[-1, 1, Conv, [256, 1, 1]], # 14 [256, 128, 1, 1]

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #15 [None, 2, 'nearest']

[[-1, 4], 1, Concat, [1]], # 16 cat backbone P3 [1]

[-1, 3, C3, [256, False]], # 17 (P3/8-small) [256, 128, 1, False]

[-1, 1, Conv, [256, 3, 2]], # 18 [128, 128, 3, 2]

[[-1, 14], 1, Concat, [1]], # 19 cat head P4 [1]

[-1, 3, C3, [512, False]], # 20 (P4/16-medium) [256, 256, 1, False]

[-1, 1, Conv, [512, 3, 2]], # 21 [256, 256, 3, 2]

[[-1, 10], 1, Concat, [1]], # 22 cat head P5 [1]

[-1, 3, C3, [1024, False]], # 23 (P5/32-large) [512, 512, 1, False]

[[17, 20, 23], 1, Detect, [nc, anchors]], # 24 Detect(P3, P4, P5)

]

1.1 Focus

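Purpose: downsampling

The Focus module slices the image, which acts like downsampling. It samples every second pixel at four different offsets, turning the 640×640×3 image into a 320×320×12 feature map, and then applies a 3×3 convolution with 32 output channels to produce a 320×320×32 feature map. This costs about 4 times the computation of an ordinary convolution, but the downsampling loses no information.

Input: 3×640×640

Output: 32×320×320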
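
The slicing step can be illustrated without any framework. The sketch below works on a nested list shaped `[C][H][W]` and stacks the four offset samplings along the channel axis; `focus_slice` and the toy 4×4 image are made up for this example, and the order of the four slices is an assumption for illustration.

```python
def focus_slice(image):
    """Space-to-depth on a nested list shaped [C][H][W] (H and W must be even)."""
    out = []
    # Four sampling offsets: (row start, column start).
    for row_start, col_start in [(0, 0), (1, 0), (0, 1), (1, 1)]:
        for channel in image:
            # Keep every second row and every second column from the offset.
            out.append([row[col_start::2] for row in channel[row_start::2]])
    return out


# Single-channel 4x4 toy image holding the values 0..15.
image = [[[r * 4 + c for c in range(4)] for r in range(4)]]
sliced = focus_slice(image)

print(len(sliced), len(sliced[0]), len(sliced[0][0]))  # channels, height, width
for plane in sliced:
    print(plane)
```

Every input value appears exactly once in the output, which is the sense in which this kind of downsampling loses no information: the channel count grows by 4 while height and width are halved.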

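1.2 Conv module

Purpose: convolution; a stride of 2 downsamples, a stride of 1 keeps the size unchanged

Applies convolution, batch normalization (BN), and an activation function to the input feature map. In newer versions of YOLOv5 the author uses SiLU as the activation function.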
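
To make the stride behaviour concrete, here is a small sketch of the output-size arithmetic together with the SiLU formula. It assumes the convolution is padded with `kernel // 2`, which is what makes stride 1 size-preserving; both helper names are invented for this example.

```python
import math


def conv_out_size(size, kernel, stride):
    """Output height/width of a convolution padded with kernel // 2 (assumed)."""
    padding = kernel // 2
    return (size + 2 * padding - kernel) // stride + 1


def silu(x):
    """SiLU activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


print(conv_out_size(320, kernel=3, stride=2))  # stride 2 halves the map
print(conv_out_size(320, kernel=3, stride=1))  # stride 1 keeps the size
print(silu(0.0), round(silu(1.0), 4))
```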

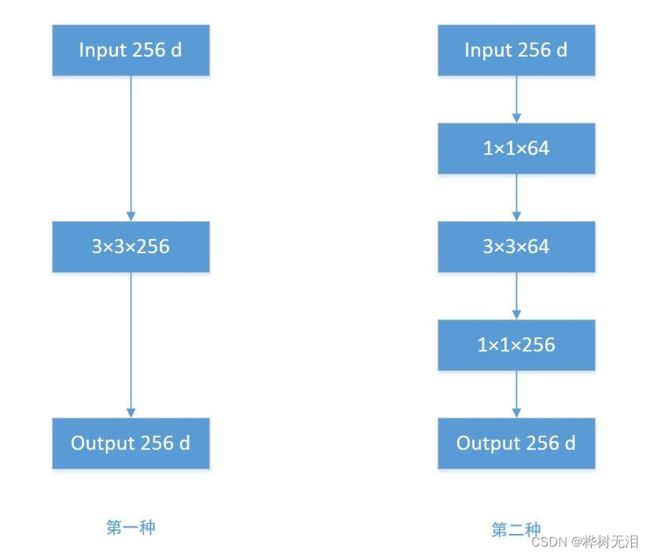

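1.3 Bottleneck module

Purpose: reduce the parameter count

Several small convolution kernels replace one large one: the channel count is first reduced and then expanded (by default it is reduced to half). Concretely, a 1×1 convolution halves the channels, then a 3×3 convolution doubles them again while extracting features (two standard convolution modules in total), so the input and output channel counts are the same.

- Parameters of a direct 3x3 convolution: 256×3×3×256 = 589824

- Parameters of a 1x1 convolution, then a 3x3 convolution, then a final 1x1 convolution: 256×1×1×64 + 64×3×3×64 + 64×1×1×256 = 69632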
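
The two parameter counts above can be checked with a few lines of arithmetic. The sketch also computes the two-convolution variant described in the prose (1×1 halving the channels, then 3×3 restoring them), assuming bias-free convolutions and 256 input/output channels; `conv_params` is a helper written for this example.

```python
def conv_params(c_in, c_out, kernel):
    """Number of weights in a bias-free kernel x kernel convolution."""
    return c_in * kernel * kernel * c_out


# One direct 3x3 convolution, 256 -> 256 channels.
direct = conv_params(256, 256, 3)

# Three-step bottleneck from the list: 1x1 (256 -> 64), 3x3 (64 -> 64), 1x1 (64 -> 256).
three_step = conv_params(256, 64, 1) + conv_params(64, 64, 3) + conv_params(64, 256, 1)

# Two-step variant from the prose: 1x1 (256 -> 128), then 3x3 (128 -> 256).
two_step = conv_params(256, 128, 1) + conv_params(128, 256, 3)

print(direct, three_step, two_step)
```

Under these assumptions the two-convolution form saves less than the three-step form, but it still uses fewer weights than the single direct 3×3 convolution.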

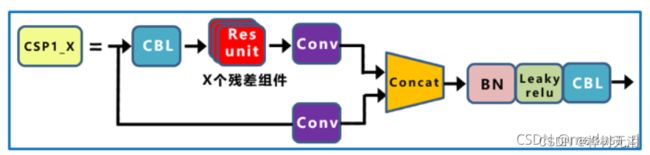

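1.4 C3 module: cross-scale connection

Purpose: a residual structure that lets the model learn more features.

- Compared with the BottleneckCSP module, C3 drops the Conv module that followed the residual output, and the activation in the standard convolution module after the concat changes from LeakyReLU to SiLU (as above).

- This is the main module for learning residual features. It has two branches: one passes through a stack of the specified number of Bottlenecks, the other passes through only a single basic convolution module, and the two branches are then concatenated. The module uses 3 standard convolution layers in total.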
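
A channel-count trace helps to see how the two branches fit together. The sketch below assumes each branch is first reduced to a hidden width of half the output channels, that the Bottleneck stack keeps that width, and that a final convolution maps the concatenated result to the output width; `c3_channels` is an illustrative helper, not code from the repository.

```python
def c3_channels(c_in, c_out, expansion=0.5):
    """Trace channel counts through a C3-style block (illustrative assumptions)."""
    hidden = int(c_out * expansion)
    main_branch = hidden      # 1x1 conv, then the Bottleneck stack (width unchanged)
    shortcut_branch = hidden  # a single 1x1 conv
    concatenated = main_branch + shortcut_branch
    return {
        "in": c_in,
        "hidden": hidden,
        "concat": concatenated,
        "out": c_out,         # final conv maps concat -> c_out
    }


trace = c3_channels(c_in=128, c_out=128)
print(trace)
```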

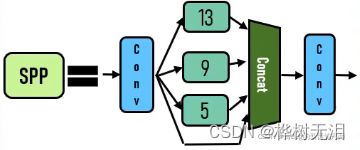

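1.5 SPP: spatial pyramid pooling

Purpose: in its original form, SPP converts feature maps of any size into a fixed-size feature vector; here the pooled maps keep their spatial size and are concatenated, so features from several receptive-field sizes are mixed

- SPP stands for spatial pyramid pooling. It first halves the input channels with a standard convolution module, then applies max pooling with kernel sizes 5, 9, and 13 (the padding adapts to each kernel size).

- The results of the three max-pooling operations are concatenated with the un-pooled data, so the channel count after merging is 2 times the original.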
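
The two bullets can be verified with simple arithmetic. This sketch assumes stride-1 max pooling with padding `kernel // 2` (one reading of "adaptive" padding) and a hidden width of half the input channels; both function names are invented here.

```python
def pool_out_size(size, kernel, stride=1):
    """Output size of max pooling padded with kernel // 2 (assumed)."""
    padding = kernel // 2
    return (size + 2 * padding - kernel) // stride + 1


def spp_concat_channels(c_in, kernels=(5, 9, 13)):
    """Channels after concatenating the un-pooled map with one map per kernel."""
    hidden = c_in // 2  # the first conv halves the channels
    return hidden * (len(kernels) + 1)


sizes = [pool_out_size(20, k) for k in (5, 9, 13)]
print(sizes)                     # spatial size after each pooling branch
print(spp_concat_channels(512))  # channels after the concat
```

With a 20×20 input of 512 channels, every pooling branch stays 20×20 and the concatenation has 1024 channels, i.e. 2 times the input.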

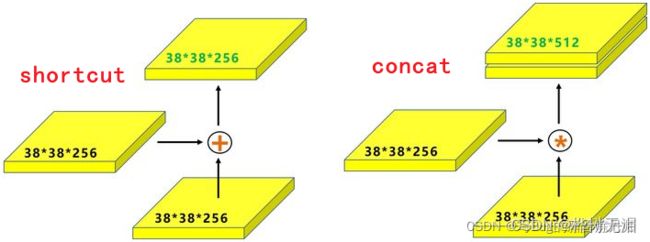

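1.6 Concat

Purpose: fuse two layers

Two layers with the same spatial size are stacked along the channel dimension, so their channel counts add up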

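2 Modifying the network architecture

2.2 Small objects

Add a detection layer for small objects at 160*160. The channel counts are chosen mainly to match those of the layer being concatenated with, so that the concat is possible.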
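
To see what the extra 160*160 layer adds, the sketch below computes the grid size and the number of anchor boxes per detection scale for a 640×640 input. It assumes 3 anchors per scale (each anchor row in the configuration holds three width/height pairs) and strides of 8, 16, 32 for the standard model versus 4, 8, 16, 32 with the added layer; `detection_grids` is a hypothetical helper.

```python
def detection_grids(image_size, strides, anchors_per_scale=3):
    """Map each stride to (grid side, number of anchor boxes at that scale)."""
    grids = {}
    for stride in strides:
        side = image_size // stride
        grids[stride] = (side, side * side * anchors_per_scale)
    return grids


three_heads = detection_grids(640, [8, 16, 32])
four_heads = detection_grids(640, [4, 8, 16, 32])

print(three_heads, sum(n for _, n in three_heads.values()))
print(four_heads, sum(n for _, n in four_heads.values()))
```

The stride-4 scale alone contributes far more candidate boxes than the other three combined, which is why the extra head helps with small objects but also raises the cost of the head and of post-processing.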

# YOLOv5 by Ultralytics, GPL-3.0 license

# Parameters

nc: 10 # number of classes

depth_multiple: 1.0 # model depth multiple

width_multiple: 1.0 # layer channel multiple

anchors:

- [5,6, 8,14, 15,11] # P2/4

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

backbone:

# [from, number, module, args]

#640*640*3

[[-1, 1, Focus, [64, 3]], # 0-P1/2

#320*320*32

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4

#160*160*64

[-1, 3, C3, [128]], #160*160

#160*160*64

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8

#80*80*128

[-1, 9, C3, [256]], #80*80

#80*80*128

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16

#40*40*256

[-1, 9, C3, [512]], #40*40

#40*40*256

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32

#20*20*512

[-1, 1, SPP, [1024, [5, 9, 13]]],

[-1, 3, C3, [1024, False]], # 9 20*20

#20*20*512

]

# YOLOv5 v6.0 head

# channels double after concat

head:

[[-1, 1, Conv, [512, 1, 1]], #20*20*256

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #40*40*256

[[-1, 6], 1, Concat, [1]], # cat backbone P4 40*40*512

[-1, 1, C3, [512, False]], # 13 40*40*256

[-1, 1, Conv, [256, 1, 1]], # 40*40*128

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #80*80*128

[[-1, 4], 1, Concat, [1]], # cat backbone P3 80*80*256

[-1, 1, C3, [256, False]], # 17 (P3/8-small) 80*80*128

[-1, 1, Conv, [128, 1, 1]], # 80*80*64

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #160*160*64

[[-1, 2], 1, Concat, [1]], # cat backbone P2 160*160*128

[-1, 1, C3, [128, False]], # 21 (P2/4-xsmall) 160*160*64

[-1, 1, Conv, [128, 3, 2]], #80*80*64

[[-1, 18], 1, Concat, [1]], # cat head P3 80*80*128

[-1, 1, C3, [256, False]], # 24 (P3/8-small) 80*80*128

[-1, 1, Conv, [256, 3, 2]], # 40*40*128

[[-1, 14], 1, Concat, [1]], # cat head P4 40*40*256

[-1, 1, C3, [512, False]], # 27 (P4/16-medium) 40*40*256

[-1, 1, Conv, [512, 3, 2]], # 20*20*256

[[-1, 10], 1, Concat, [1]], # cat head P5 20*20*512

[-1, 1, C3, [1024, False]], # 30 (P5/32-large) 20*20*512

[[21, 24, 27, 30], 1, Detect, [nc, anchors]], # Detect(P2, P3, P4, P5)

]

2.1 Lightweighting

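ShuffleNetV2

ShuffleNetV2, a lightweight convolutional neural network from Megvii, proposes four design guidelines for lightweight networks based on extensive experiments. It analyzes in detail how the input/output channel counts, the number of groups in group convolution, the degree of network fragmentation, and element-wise operations affect speed and memory access cost (MAC) on different hardware:

Guideline 1: MAC is minimized when the input and output channel counts are equal

MobileNetV2 violates this: its inverted residual structure has unequal input and output channel counts

Guideline 2: group convolution with too many groups increases MAC

ShuffleNetV1 violates this: it uses group convolution (GConv)

Guideline 3: fragmented operations (multiple paths that make the network very wide) are unfriendly to parallel acceleration

The Inception family of networks is an example

Guideline 4: the memory and time cost of element-wise operations (e.g. ReLU, shortcut add) cannot be ignored

ShuffleNetV1 violates this: it uses the add operation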
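
Guideline 1 can be illustrated numerically for a 1×1 convolution. The sketch uses the memory access cost MAC = hw(c1 + c2) + c1·c2 (input map, output map, and weights) and compares channel pairs with the same product c1·c2, i.e. the same amount of computation. The 56×56 feature map and the channel pairs are arbitrary values picked for this example.

```python
def mac_1x1(h, w, c_in, c_out):
    """Memory access cost of a 1x1 conv: input map + output map + weights."""
    return h * w * (c_in + c_out) + c_in * c_out


# All pairs have c_in * c_out == 16384, so the FLOPs are identical.
pairs = [(128, 128), (64, 256), (32, 512), (16, 1024)]
costs = {pair: mac_1x1(56, 56, *pair) for pair in pairs}

for pair, cost in costs.items():
    print(pair, cost)
print("lowest MAC:", min(costs, key=costs.get))
```

The balanced pair has the lowest MAC, and the cost grows as the ratio between input and output channels becomes more lopsided.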

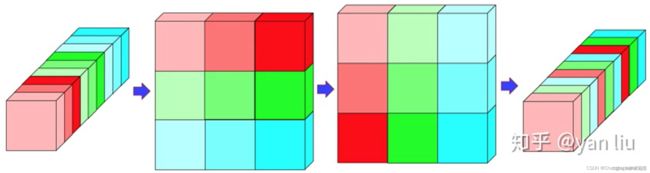

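Based on these four guidelines, the authors proposed the ShuffleNetV2 model, which replaces group convolution with Channel Split, follows the four design guidelines, and achieves a better trade-off between speed and accuracy.

ShuffleNetV2 has two building blocks: the basic unit and the unit for spatial down sampling (2×)

basic unit: the input and output channel counts are the same, and the spatial size is unchanged

unit for spatial down sampling: the output channel count doubles and the spatial size is halved (downsampling)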
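
The shape behaviour of the two units can be written down as a small function. This is a sketch under the assumption that the stride-2 unit uses a 3×3 depthwise convolution with padding 1 and that each of its two branches emits half of the output channels; `shuffle_unit_shape` is a made-up helper that only tracks shapes and does not build any layers.

```python
def shuffle_unit_shape(channels, size, out_channels, stride):
    """Return (channels, spatial size) after a ShuffleNetV2-style unit."""
    if stride == 1:
        # Basic unit: half the channels bypass, half go through the conv branch.
        if channels != out_channels:
            raise ValueError("the basic unit keeps the channel count")
        return out_channels, size
    # Down-sampling unit: both branches see the full input, each emits
    # out_channels // 2 channels, and the 3x3 stride-2 conv shrinks the map.
    new_size = (size + 2 * 1 - 3) // stride + 1
    return 2 * (out_channels // 2), new_size


print(shuffle_unit_shape(128, 80, 128, stride=1))
print(shuffle_unit_shape(128, 80, 256, stride=2))
```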

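The overall design of ShuffleNetV2 sticks closely to the four lightweight guidelines proposed in the paper; apart from guideline 4, the violations are largely avoided.

Group convolution (GConv) extracts features only within each group, so different groups exchange no information. To address this, ShuffleNetV2 uses the Channel Shuffle operation to reorder the channels and let information flow across groups.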
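
The reordering performed by a channel shuffle is easy to see on channel indices alone. The sketch below mimics the reshape, transpose, flatten sequence on a plain list; `shuffle_order` is a helper invented for this illustration.

```python
def shuffle_order(num_channels, groups):
    """Channel order after a shuffle: reshape to [groups][per_group], transpose, flatten."""
    per_group = num_channels // groups
    # Row g holds the channel indices that belong to group g.
    grid = [list(range(g * per_group, (g + 1) * per_group)) for g in range(groups)]
    # Reading column by column interleaves the groups.
    return [grid[g][i] for i in range(per_group) for g in range(groups)]


print(shuffle_order(6, 2))
print(shuffle_order(8, 4))
```

With 6 channels in 2 groups, the channels of the first group (0, 1, 2) end up interleaved with those of the second group (3, 4, 5), so the next grouped or split operation sees channels from both.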

1. Modify common.py: add the following code at the very bottom of the file

# ---------------------------- ShuffleBlock start -------------------------------
# Uses torch and torch.nn (as nn), which common.py already imports.

# Channel shuffle: reorder channels so information flows across groups
def channel_shuffle(x, groups):
    batchsize, num_channels, height, width = x.size()
    channels_per_group = num_channels // groups

    # reshape to (batch, groups, channels_per_group, H, W)
    x = x.view(batchsize, groups, channels_per_group, height, width)
    # swap the group axis and the per-group channel axis
    x = torch.transpose(x, 1, 2).contiguous()

    # flatten back to (batch, channels, H, W)
    x = x.view(batchsize, -1, height, width)
    return x


class conv_bn_relu_maxpool(nn.Module):
    # Stem: 3x3 stride-2 conv + BN + ReLU, followed by a 3x3 stride-2 max pool
    def __init__(self, c1, c2):  # ch_in, ch_out
        super(conv_bn_relu_maxpool, self).__init__()
        self.conv = nn.Sequential(
            nn.Conv2d(c1, c2, kernel_size=3, stride=2, padding=1, bias=False),
            nn.BatchNorm2d(c2),
            nn.ReLU(inplace=True),
        )
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

    def forward(self, x):
        return self.maxpool(self.conv(x))


class Shuffle_Block(nn.Module):
    def __init__(self, inp, oup, stride):
        super(Shuffle_Block, self).__init__()

        if not (1 <= stride <= 3):
            raise ValueError('illegal stride value')
        self.stride = stride

        branch_features = oup // 2
        # a stride-1 unit splits its input in half, so inp must equal 2 * branch_features
        assert (self.stride != 1) or (inp == branch_features << 1)

        # branch1 exists only in the down-sampling unit (stride > 1)
        if self.stride > 1:
            self.branch1 = nn.Sequential(
                self.depthwise_conv(inp, inp, kernel_size=3, stride=self.stride, padding=1),
                nn.BatchNorm2d(inp),
                nn.Conv2d(inp, branch_features, kernel_size=1, stride=1, padding=0, bias=False),
                nn.BatchNorm2d(branch_features),
                nn.ReLU(inplace=True),
            )

        # branch2: 1x1 conv -> 3x3 depthwise conv -> 1x1 conv
        self.branch2 = nn.Sequential(
            nn.Conv2d(inp if (self.stride > 1) else branch_features,
                      branch_features, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(branch_features),
            nn.ReLU(inplace=True),
            self.depthwise_conv(branch_features, branch_features, kernel_size=3, stride=self.stride, padding=1),
            nn.BatchNorm2d(branch_features),
            nn.Conv2d(branch_features, branch_features, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(branch_features),
            nn.ReLU(inplace=True),
        )

    @staticmethod
    def depthwise_conv(i, o, kernel_size, stride=1, padding=0, bias=False):
        # groups=i makes the convolution depthwise (one filter per input channel)
        return nn.Conv2d(i, o, kernel_size, stride, padding, bias=bias, groups=i)

    def forward(self, x):
        if self.stride == 1:
            x1, x2 = x.chunk(2, dim=1)  # split in half along dimension 1 (channels)
            out = torch.cat((x1, self.branch2(x2)), dim=1)
        else:
            out = torch.cat((self.branch1(x), self.branch2(x)), dim=1)

        out = channel_shuffle(out, 2)
        return out

# ---------------------------- ShuffleBlock end --------------------------------

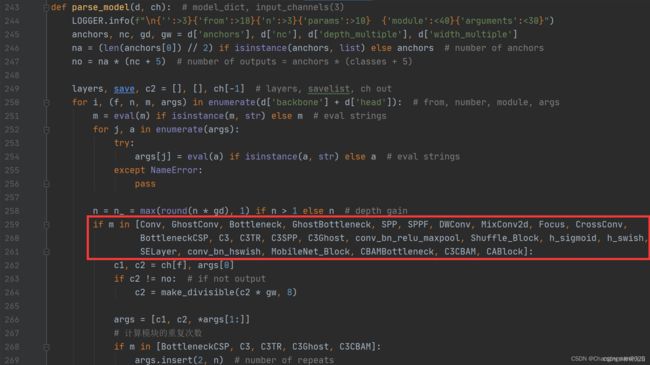

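2. Modify yolo.py: in the parse_model function of yolo.py, add the two modules conv_bn_relu_maxpool and Shuffle_Block

3. Create a new yaml file: create yolov5-shufflenetv2.yaml under the models directory and copy in the following configuration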

# YOLOv5 by Ultralytics, GPL-3.0 license

# Parameters

nc: 10 # number of classes

depth_multiple: 1.0 # model depth multiple

width_multiple: 1.0 # layer channel multiple

anchors:

- [5,6, 8,14, 15,11] #4

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

backbone:

#640*640*3

[[ -1, 1, conv_bn_relu_maxpool, [ 32 ] ], # 0-P2/4

#320*320*32

[ -1, 1, Shuffle_Block, [ 128, 2 ] ], # 1-P3/8

#160*160*64

[ -1, 3, Shuffle_Block, [ 128, 1 ] ], # 2

#160*160*64

[ -1, 1, Shuffle_Block, [ 256, 2 ] ], # 3-P4/16

#80*80*128

[ -1, 7, Shuffle_Block, [ 256, 1 ] ], # 4

#80*80*128

[ -1, 1, Shuffle_Block, [ 512, 2 ] ], # 5-P5/32

#40*40*256

[ -1, 3, Shuffle_Block, [ 512, 1 ] ], # 6

#40*40*256

[ -1, 1, Shuffle_Block, [ 1024, 2 ] ], # 7

#20*20*512

[ -1, 3, Shuffle_Block, [ 1024, 1 ] ], # 8

#20*20*512

]

# YOLOv5 v6.0 head

# channels double after concat

head:

[[-1, 1, Conv, [512, 1, 1]], #20*20*256

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #40*40*256

[[-1, 6], 1, Concat, [1]], # cat backbone P4 40*40*512

[-1, 1, C3, [512, False]], # 12 40*40*256

[-1, 1, Conv, [256, 1, 1]], # 40*40*128

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #80*80*128

[[-1, 4], 1, Concat, [1]], # cat backbone P3 80*80*256

[-1, 1, C3, [256, False]], # 16 (P3/8-small) 80*80*128

[-1, 1, Conv, [128, 1, 1]], # 80*80*64

[-1, 1, nn.Upsample, [None, 2, 'nearest']], #160*160*64

[[-1, 2], 1, Concat, [1]], # cat backbone P3 160*160*128

[-1, 1, C3, [128, False]], # 20 (P3/8-small) 160*160*64

[-1, 1, Conv, [128, 3, 2]], #80*80*64

[[-1, 17], 1, Concat, [1]], # cat head P4 80*80*128

[-1, 1, C3, [256, False]], # 23 (P4/16-medium) 80*80*128

[-1, 1, Conv, [256, 3, 2]], # 40*40*128

[[-1, 13], 1, Concat, [1]], # cat head P4 40*40*256

[-1, 1, C3, [512, False]], # 26 (P4/16-medium) 40*40*256

[-1, 1, Conv, [512, 3, 2]], # 20*20*256

[[-1, 9], 1, Concat, [1]], # cat head P5 20*20*512

[-1, 1, C3, [1024, False]], # 29 (P5/32-large) 20*20*512

[[20, 23,26,29], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]

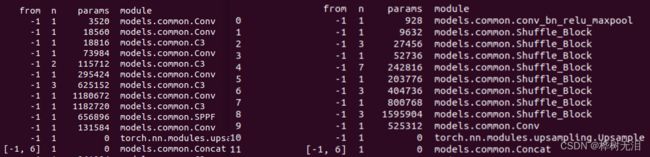

The right-hand side shows the lightweight model; its parameter count is clearly much lower

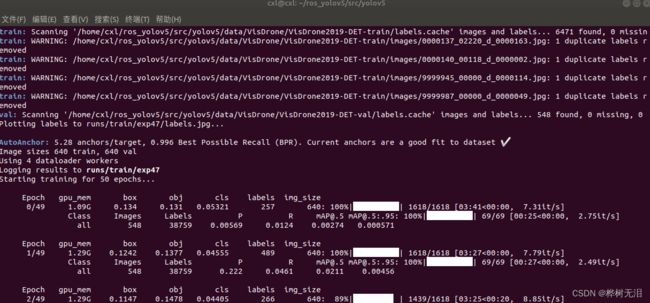

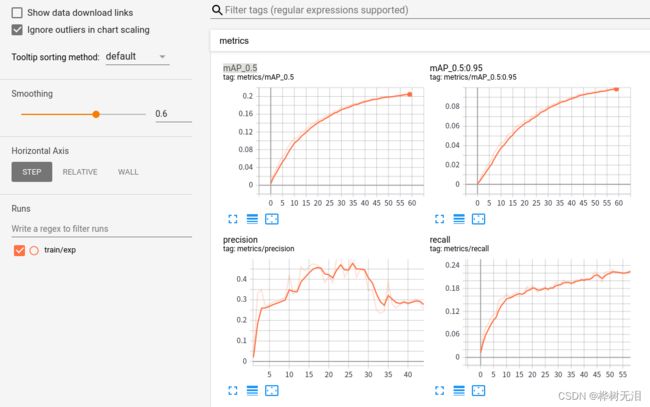

4. Run training

python train.py --data data/VisDrone.yaml --cfg models/yolov5s-tiny.yaml --weights weights/yolov5s.pt --batch-size 4 --epochs 50