OpenCV4 Classic Case Studies: Hands-On Tutorial Notes

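Over the past few days I have been working through the OpenCV4 classic case-study tutorial. These notes record what I learned along the way.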

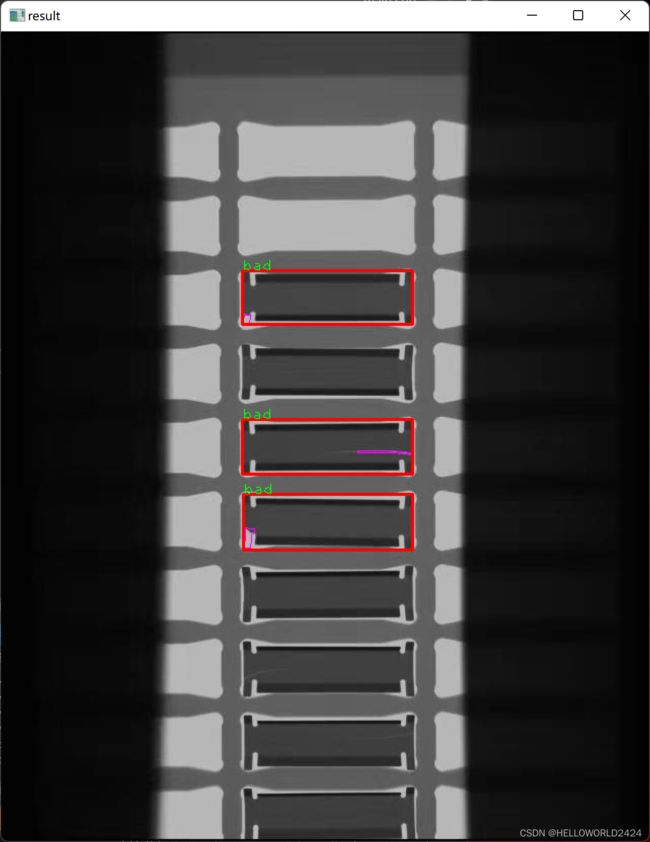

Case 1: Blade Defect Detection

The goal is to find the defective blades, as shown in the figure below.

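Overview of the approach: first binarize the image and find contours to locate every blade, then move on to defect recognition. Defect recognition picks a defect-free blade as a template and subtracts each blade's binary image from the template's; what survives the subtraction is the defect. A morphological opening removes part of the noise, and area and position constraints exclude the remaining false positives, which completes the detection.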
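The heart of the check is a saturating subtraction (template minus blade) followed by counting the pixels that survive. The sketch below shows just that step in plain C++, assuming both binary images are already the same size and stored row-major as 0/255 bytes. `subtractAndCount` is a hypothetical helper, and the opening and contour filtering steps are omitted.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: saturating subtraction (tpl - roi), as cv::subtract does
// for 8-bit images, then count the pixels that remain 255.
// Both inputs are row-major 0/255 byte buffers of identical size.
int subtractAndCount(const std::vector<std::uint8_t>& tpl,
                     const std::vector<std::uint8_t>& roi,
                     std::vector<std::uint8_t>& diff) {
    diff.assign(tpl.size(), 0);
    int count = 0;
    for (std::size_t i = 0; i < tpl.size(); ++i) {
        int d = static_cast<int>(tpl[i]) - static_cast<int>(roi[i]);
        diff[i] = static_cast<std::uint8_t>(d > 0 ? d : 0);  // saturate at 0
        if (diff[i] == 255) {
            ++count;  // white in the template, black in the blade
        }
    }
    return count;
}
```

Because the subtraction saturates at 0, pixels that are white in the blade but black in the template vanish. A single `tpl - roi` pass therefore only flags pixels that are white in the template and black in the blade.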

Here is the implementation:

#include <opencv2/opencv.hpp>

using namespace cv;

using namespace std;

void sort_box(vector<Rect> &boxes);

void detect_defect(Mat &src, Mat &binary, vector<Rect> rects, vector<Rect> &defect);

Mat tpl;

int Advance::blade() {

Mat src = imread("D:/images/ce_01.jpg");

if (src.empty()) {

printf("could not load image file...");

return -1;

}

namedWindow("input", WINDOW_AUTOSIZE);

imshow("input", src);

// Binarize the image (inverted Otsu threshold)

Mat gray, binary;

cvtColor(src, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY_INV | THRESH_OTSU);

imshow("binary", binary);

// Define a 3x3 structuring element and apply an opening to remove small noise

Mat se = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1));

morphologyEx(binary, binary, MORPH_OPEN, se);

imshow("open-binary", binary);

// Find contours

vector<vector<Point>> contours;

vector<Vec4i> hirarchy;

vector<Rect> rects;

findContours(binary, contours, hirarchy, RETR_LIST, CHAIN_APPROX_SIMPLE);

int height = src.rows;

for (size_t t = 0; t < contours.size(); ++t) {

Rect rect = boundingRect(contours[t]);

double area = contourArea(contours[t]);

// Skip contours taller than half the image and small noise regions (logical ||, not bitwise |)

if (rect.height > (height / 2) || area < 150) {

continue;

}

rects.push_back(rect);

//rectangle(src, rect, Scalar(0, 0, 255), 2);

//drawContours(src, contours, t, Scalar(0, 0, 255), 2);

}

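sort_box(rects);

// Use the second blade from the top (index 1) as the defect-free template

if (rects.size() < 2) {

printf("not enough blades found...\n");

return -1;

}

tpl = binary(rects[1]);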

//for (int i = 0; i < rects.size(); ++i) {

// putText(src, format("%d", i), rects[i].tl(), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 0));

//}

vector<Rect> defects;

detect_defect(src, binary, rects, defects);

for (int i = 0; i < defects.size(); i++) {

rectangle(src, defects[i], Scalar(0, 0, 255), 2);

putText(src, "bad", defects[i].tl(), FONT_HERSHEY_PLAIN, 1.0, Scalar(0, 255, 0));

}

imshow("result", src);

waitKey(0);

return 0;

}

void sort_box(vector<Rect> &boxes) {

int size = boxes.size();

for (int i = 0; i < size - 1; ++i) {

for (int j = i + 1; j < size; j++) {

// Move the box with the smallest y (top-most) into position i

if (boxes[j].y < boxes[i].y) {

Rect temp = boxes[i];

boxes[i] = boxes[j];

boxes[j] = temp;

}

}

}

}

void detect_defect(Mat &src, Mat &binary, vector<Rect> rects, vector<Rect> &defect) {

int h = tpl.rows;

int w = tpl.cols;

int size = rects.size();

for (int i = 0; i < size; ++i) {

// Build the difference image: template minus the blade ROI (resized to the template size)

Mat roi = binary(rects[i]);

resize(roi, roi, tpl.size());

Mat mask;

subtract(tpl, roi, mask);

Mat se = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1));

morphologyEx(mask, mask, MORPH_OPEN, se);

threshold(mask, mask, 0, 255, THRESH_BINARY);

// Count the white pixels left in the thresholded difference image

int count = 0;

for (int row = 0; row < h; ++row) {

for (int col = 0; col < w; ++col) {

int pv = mask.at<uchar>(row, col);

if (pv == 255) {

count++;

}

}

}

// Pad the mask with a 1-pixel black border so regions touching the edge still form closed contours

int mh = mask.rows + 2;

int mw = mask.cols + 2;

Mat m1 = Mat::zeros(Size(mw, mh), mask.type());

Rect mroi;

mroi.x = 1;

mroi.y = 1;

mroi.height = mask.rows;

mroi.width = mask.cols;

mask.copyTo(m1(mroi));

// Contour analysis

vector<vector<Point>> contours;

vector<Vec4i> hierarchy;

findContours(m1, contours, hierarchy, RETR_LIST, CHAIN_APPROX_SIMPLE);

bool find = false;

for (size_t t = 0; t < contours.size(); ++t) {

Rect rect = boundingRect(contours[t]);

float ratio = (float)rect.width / ((float)rect.height);

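// Ignore long, thin regions near the top or bottom edge (likely edge artifacts from imperfect alignment with the template)

if (ratio > 4.0 && (rect.y < 5 || (m1.rows - (rect.height + rect.y)) < 10)) {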

continue;

}

double area = contourArea(contours[t]);

if (area > 10) {

printf("index: %d, ratio: %.2f, area: %.2f\n", i, ratio, area);

find = true;

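// Draw the defect: offset (-1, -1) undoes the padding, but contours are in template coordinates, so the overlay is exact only when blade and template have the same size

Mat sroi = src(rects[i]);

drawContours(sroi, contours, static_cast<int>(t), Scalar(255, 0, 255), 1, LINE_8, noArray(), INT_MAX, Point(-1, -1));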

imshow("sroi", sroi);

}

}

if (count > 50 && find == true) {

printf("index: %d, count: %d\n", i, count);

defect.push_back(rects[i]);

}

imshow("mask", mask);

waitKey(0);

}

// Results are returned through `defect`

destroyAllWindows();

}
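`sort_box` orders the blades top to bottom with an O(n²) exchange sort on each rectangle's top edge `y`. `std::sort` (or `std::stable_sort`) with a comparator gives the same ordering. The sketch below uses a hypothetical `Box` struct in place of `cv::Rect` so it compiles without OpenCV; with `cv::Rect` the comparator body would be identical.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical stand-in for cv::Rect (only the fields used here).
struct Box {
    int x, y, width, height;
};

// Order boxes from top to bottom by their top edge, like sort_box.
// std::stable_sort also keeps boxes with equal y in their original order,
// which the exchange sort in sort_box does not guarantee.
void sortBoxesByY(std::vector<Box>& boxes) {
    std::stable_sort(boxes.begin(), boxes.end(),
                     [](const Box& a, const Box& b) { return a.y < b.y; });
}
```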

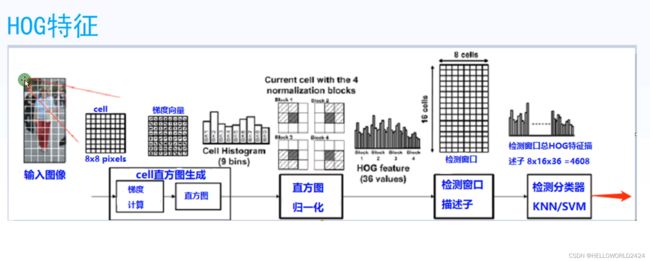

Case 2: Meter Recognition with HOG Features and an SVM

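This case uses HOG to extract features from an image and an SVM to decide whether a detection window contains a meter, a classic (traditional) object-detection approach. The data is split into three sets: positive (images that contain a meter), negative (images without a meter), and test (test scene images).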
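Because the SVM below uses `SVM::LINEAR`, scoring a window boils down to the sign of a dot product between its HOG vector and a learned weight vector, plus a bias. The sketch shows only that decision rule in plain C++. The weights are caller-supplied placeholders, not values from the trained model. OpenCV's internal sign convention may differ, so treat this as an illustration of the idea rather than of `SVM::predict` itself.

```cpp
#include <cstddef>
#include <vector>

// Linear SVM decision value: f(x) = w . x + b.
double linearDecision(const std::vector<double>& w, double b,
                      const std::vector<double>& x) {
    double f = b;
    for (std::size_t i = 0; i < w.size() && i < x.size(); ++i) {
        f += w[i] * x[i];
    }
    return f;
}

// Class label: +1 ("meter") if f(x) > 0, otherwise -1 ("no meter").
int linearPredict(const std::vector<double>& w, double b,
                  const std::vector<double>& x) {
    return linearDecision(w, b, x) > 0.0 ? 1 : -1;
}
```

The kernel is a plain dot product, so a gamma parameter has nowhere to enter this formula.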

Below is one of the sample images.

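All training images are normalized to 64 × 128 (width × height). With 8 × 8-pixel cells, a 64 × 128 window contains 8 × 16 cells (width × height). In the default HOG layout, each 16 × 16 block covers 2 × 2 cells with 9 orientation bins, giving 36 values per block. Sliding the block with an 8-pixel stride gives 7 × 15 block positions, for a total of 7 × 15 × 36 = 3780 features. Each image therefore produces one feature row of shape (1, 3780), where 1 is the batch size. The figure's notation differs slightly from this description; follow the text.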
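The 3780 figure follows from the window, block, stride, and cell sizes. The small function below reproduces the arithmetic, assuming the `cv::HOGDescriptor` defaults (window 64 × 128, block 16 × 16, block stride 8 × 8, cell 8 × 8, 9 bins). `hogDescriptorSize` is a hypothetical name. In OpenCV, `HOGDescriptor::getDescriptorSize()` reports the same number for a configured descriptor.

```cpp
// Number of HOG features for one detection window: blocks slide across the
// window with a fixed stride, each block holds (block / cell)^2 cells, and
// each cell contributes nbins histogram values.
int hogDescriptorSize(int winW, int winH, int blockW, int blockH,
                      int strideW, int strideH, int cellW, int cellH,
                      int nbins) {
    int blocksX = (winW - blockW) / strideW + 1;              // 7 for 64 / 16 / 8
    int blocksY = (winH - blockH) / strideH + 1;              // 15 for 128 / 16 / 8
    int cellsPerBlock = (blockW / cellW) * (blockH / cellH);  // 2 x 2 = 4
    return blocksX * blocksY * cellsPerBlock * nbins;         // 7 * 15 * 4 * 9
}
```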

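#include <opencv2/opencv.hpp>

#include <opencv2/ml.hpp>

using namespace cv;

using namespace cv::ml;

using namespace std;

string positive_dir = "D:/images/elec_watch/positive";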

string negative_dir = "D:/images/elec_watch/negative";

void get_hog_descriptor(Mat &image, vector<float> &desc);

void generate_dataset(Mat &trainData, Mat &label);

void svm_train(Mat &trainData, Mat &labels);

int Advance::instrument() {

// Read the images and build the dataset (the 26 rows assume 26 training images in total)

Mat trainData = Mat::zeros(Size(3780, 26), CV_32FC1);

Mat labels = Mat::zeros(Size(1, 26), CV_32SC1);

generate_dataset(trainData, labels);

// SVM train, and save model

svm_train(trainData, labels);

// load model

Ptr<SVM> svm = SVM::load("D:/images/elec_watch/test.xml");

// detect object

Mat test = imread("D:/images/elec_watch/test/scene_01.jpg");

resize(test, test, Size(0, 0), 0.2, 0.2);

imshow("input", test);

Rect winRect;

winRect.width = 64;

winRect.height = 128;

int sum_x = 0;

int sum_y = 0;

int count = 0;

// Sliding-window detection (64 x 128 window, 4-pixel stride)

for (int row = 64; row < test.rows - 64; row += 4) {

for (int col = 32; col < test.cols - 32; col += 4) {

winRect.x = col - 32;

winRect.y = row - 64;

vector<float> fv;

Mat test_win = test(winRect);

get_hog_descriptor(test_win, fv);

Mat one_row = Mat::zeros(Size(fv.size(), 1), CV_32FC1);

for (int i = 0; i < fv.size(); ++i) {

one_row.at<float>(0, i) = fv[i];

}

float result = svm->predict(one_row);

if (result > 0) {

//rectangle(test, winRect, Scalar(0, 0, 255));

sum_x += winRect.x;

sum_y += winRect.y;

count++;

}

}

}

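// Average the top-left corners of all positive windows (skip if nothing was detected, to avoid dividing by zero)

if (count > 0) {

winRect.x = sum_x / count;

winRect.y = sum_y / count;

rectangle(test, winRect, Scalar(255, 0, 0));

}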

imshow("object detection result", test);

waitKey(0);

return 0;

}

void get_hog_descriptor(Mat &image, vector<float> &desc) {

HOGDescriptor hog;

int h = image.rows;

int w = image.cols;

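// Scale to a width of 64 keeping the aspect ratio; clamp the height to [1, 128] so the ROI below fits the 64 x 128 canvas (very tall images get squashed)

float rate = 64.0f / w;

Mat img, gray;

int new_h = std::max(1, std::min(128, int(rate * h)));

resize(image, img, Size(64, new_h));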

cvtColor(img, gray, COLOR_BGR2GRAY);

// Pad to 64 x 128 (width x height) with gray (127), centering the image vertically

Mat result = Mat::zeros(Size(64, 128), CV_8UC1);

result = Scalar(127);

Rect roi;

roi.x = 0;

roi.width = 64;

roi.y = (128 - gray.rows) / 2;

roi.height = gray.rows;

gray.copyTo(result(roi));

// cell = 8 x 8 pixels

// 64 x 128 window = 8 x 16 cells (width x height)

// total: 7 * 15 blocks * 36 values = 3780 features

hog.compute(result, desc, Size(8, 8), Size(0, 0));

printf("desc len: %zu\n", desc.size());

}

void generate_dataset(Mat &trainData, Mat &labels) {

vector<string> images;

glob(positive_dir, images);

int pos_num = images.size();

for (int i = 0; i < images.size(); ++i) {

Mat image = imread(images[i].c_str());

vector<float> fv;

get_hog_descriptor(image, fv);

for (int j = 0; j < fv.size(); ++j) {

trainData.at<float>(i, j) = fv[j];

}

labels.at<int>(i, 0) = 1;

}

images.clear();

glob(negative_dir, images);

for (int i = 0; i < images.size(); ++i) {

Mat image = imread(images[i].c_str());

vector<float> fv;

get_hog_descriptor(image, fv);

for (int j = 0; j < fv.size(); ++j) {

trainData.at<float>(i+pos_num, j) = fv[j];

}

labels.at<int>(i+pos_num, 0) = -1;

}

}

void svm_train(Mat &trainData, Mat &labels) {

printf("\n start SVM training... \n");

Ptr<SVM> svm = SVM::create();

svm->setC(2.67);

svm->setType(SVM::C_SVC);

svm->setKernel(SVM::LINEAR);

// Gamma is only used by the POLY, RBF, SIGMOID, and CHI2 kernels; it has no effect with LINEAR

svm->setGamma(5.383);

svm->train(trainData, ROW_SAMPLE, labels);

clog << "...[Done]" << endl;

printf("end train...\n");

svm->save("D:/images/elec_watch/test.xml");

}
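The detection loop in `instrument()` slides a 64 × 128 window with a 4-pixel stride, keeps the windows the SVM accepts, and reports their average top-left corner. The C++17 sketch below isolates that logic. `classify` is a hypothetical callback standing in for HOG extraction plus `svm->predict`. Returning `std::nullopt` when nothing is accepted avoids a division by zero. The loop bounds here also admit a window flush with the right or bottom edge, which the original loop excludes.

```cpp
#include <functional>
#include <optional>
#include <utility>

// Slide a winW x winH window over an imgW x imgH image with the given stride,
// call classify(x, y) with each window's top-left corner, and return the mean
// top-left corner of the accepted windows (nullopt if none were accepted).
std::optional<std::pair<int, int>> averageDetection(
    int imgW, int imgH, int winW, int winH, int stride,
    const std::function<bool(int, int)>& classify) {
    long long sumX = 0, sumY = 0;
    int count = 0;
    for (int y = 0; y + winH <= imgH; y += stride) {
        for (int x = 0; x + winW <= imgW; x += stride) {
            if (classify(x, y)) {
                sumX += x;
                sumY += y;
                ++count;
            }
        }
    }
    if (count == 0) {
        return std::nullopt;  // no window accepted: nothing to average
    }
    return std::make_pair(static_cast<int>(sumX / count),
                          static_cast<int>(sumY / count));
}
```

Averaging is a very simple way to merge overlapping hits. Non-maximum suppression is the more common alternative when several objects can appear in one scene.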

Case 3: QR Code Detection

Key topics: QR code features, image binarization, contour extraction, perspective transform, geometric analysis.

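Core idea: binarize the image, extract contours with findContours, and straighten each candidate with a perspective transform. Candidates are first filtered by the shape of their minimum-area bounding rectangle: it must be nearly square (short side / long side > 0.85) and smaller than a quarter of the image in each dimension. The intrinsic structure of a QR code is then used to find the three square finder patterns at the top-left, top-right, and bottom-left corners. As in the figure above, a horizontal line through a finder pattern's center crosses runs in the ratio b1x:w1x:xb:w2x:b2x = 1:1:3:1:1 (black, white, black, white, black). This check rules out the other contours and leaves the correct ones.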
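The ratio test in `isXCorner` scales the five measured run lengths to a total of 7 modules, rounds each one, and compares the result with 1:1:3:1:1. The sketch below is a standalone version that takes the runs directly; `matchesFinderRatio` is a hypothetical name. Like the original, it accepts a center run of 3 or 4 modules to tolerate rounding.

```cpp
#include <array>

// runs = {b1x, w1x, xb, w2x, b2x}: black, white, black (center), white, black.
// Each run is expressed in units of 1/7 of the total width and rounded,
// as in isXCorner; a finder pattern should come out as 1:1:3:1:1.
bool matchesFinderRatio(const std::array<int, 5>& runs) {
    double sum = 0.0;
    for (int r : runs) sum += r;
    if (sum <= 0.0) return false;
    std::array<int, 5> m{};
    for (int i = 0; i < 5; ++i) {
        m[i] = static_cast<int>(runs[i] / sum * 7.0 + 0.5);  // round to modules
    }
    bool centerOk = (m[2] == 3 || m[2] == 4);
    return centerOk && m[0] == 1 && m[1] == 1 && m[3] == 1 && m[4] == 1;
}
```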

Code:

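#include <opencv2/opencv.hpp>

using namespace cv;

using namespace std;

// Forward declarations: these helpers are defined after scanAndDetectQRCode

Mat transformCorner(Mat &image, RotatedRect &rect);

bool isXCorner(Mat &image);

bool isYCorner(Mat &image);

void scanAndDetectQRCode(Mat &image) {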

Mat gray, binary;

cvtColor(image, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

imshow("binary", binary);

// detect rectangle now

vector<vector<Point>> contours;

vector<Vec4i> hireachy;

findContours(binary.clone(), contours, hireachy, RETR_LIST, CHAIN_APPROX_SIMPLE, Point());

Mat result = Mat::zeros(image.size(), CV_8UC1);

for (size_t t = 0; t < contours.size(); t++) {

double area = contourArea(contours[t]);

if (area < 100) continue;

RotatedRect rect = minAreaRect(contours[t]);

float w = rect.size.width;

float h = rect.size.height;

float rate = min(w, h) / max(w, h);

if (rate > 0.85 && w < image.cols / 4 && h < image.rows / 4) {

Mat qr_roi = transformCorner(image, rect);

// Geometric analysis of the straightened candidate (1:1:3:1:1 check)

if (isXCorner(qr_roi)) {

drawContours(image, contours, static_cast<int>(t), Scalar(255, 0, 0), 2, 8);

drawContours(result, contours, static_cast<int>(t), Scalar(255), 2, 8);

}

}

}

// Collect every pixel on the outlines of the detected finder patterns

vector<Point> pts;

for (int row = 0; row < result.rows; row++) {

for (int col = 0; col < result.cols; col++) {

int pv = result.at<uchar>(row, col);

if (pv == 255) {

pts.push_back(Point(col, row));

}

}

}

if (pts.empty()) {

printf("no QR code finder patterns found\n");

return;

}

RotatedRect rrt = minAreaRect(pts);

Point2f vertices[4];

rrt.points(vertices);

pts.clear();

for (int i = 0; i < 4; i++) {

line(image, vertices[i], vertices[(i + 1) % 4], Scalar(0, 255, 0), 2);

pts.push_back(vertices[i]);

}

Mat mask = Mat::zeros(result.size(), result.type());

vector<vector<Point>> cpts;

cpts.push_back(pts);

drawContours(mask, cpts, 0, Scalar(255), -1, 8);

Mat dst;

bitwise_and(image, image, dst, mask);

imshow("detect result", image);

imshow("result-mask", mask);

imshow("qrcode-roi", dst);

//imshow("contour-image", image);

//imshow("result", result);

}

bool isXCorner(Mat &image) {

Mat gray, binary;

cvtColor(image, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

int xb = 0, yb = 0;

int w1x = 0, w2x = 0;

int b1x = 0, b2x = 0;

int width = binary.cols;

int height = binary.rows;

int cy = height / 2;

int cx = width / 2;

int pv = binary.at<uchar>(cy, cx);

if (pv == 255) return false;

// verify finder pattern

bool findleft = false, findright = false;

int start = 0, end = 0;

int offset = 0;

while (true) {

offset++;

if ((cx - offset) <= width / 8 || (cx + offset) >= width - 1) {

start = -1;

end = -1;

break;

}

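pv = binary.at<uchar>(cy, cx - offset);

// Record only the first white pixel on each side; later hits must not overwrite it

if (!findleft && pv == 255) {

start = cx - offset;

findleft = true;

}

pv = binary.at<uchar>(cy, cx + offset);

if (!findright && pv == 255) {

end = cx + offset;

findright = true;

}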

if (findleft&&findright) {

break;

}

}

if (start <= 0 || end <= 0) {

return false;

}

xb = end - start;

for (int col = start; col > 0; col--) {

pv = binary.at<uchar>(cy, col);

if (pv == 0) {

w1x = start - col;

break;

}

}

for (int col = end; col < width - 1; col++) {

pv = binary.at<uchar>(cy, col);

if (pv == 0) {

w2x = col - end;

break;

}

}

for (int col = (end + w2x); col < width; col++) {

pv = binary.at<uchar>(cy, col);

if (pv == 255) {

b2x = col - end - w2x;

break;

}

else {

b2x++;

}

}

for (int col = start - w1x; col > 0; col--) {

pv = binary.at<uchar>(cy, col);

if (pv == 255) {

b1x = start - w1x - col;

break;

}

else {

b1x++;

}

}

float sum = xb + b1x + b2x + w1x + w2x;

//printf("xb : %d, b1x = %d, b2x = %d, w1x = %d, w2x = %d\n", xb, b1x, b2x, w1x, w2x);

xb = static_cast<int>((xb / sum)*7.0 + 0.5);

b1x = static_cast<int>((b1x / sum)*7.0 + 0.5);

b2x = static_cast<int>((b2x / sum)*7.0 + 0.5);

w1x = static_cast<int>((w1x / sum)*7.0 + 0.5);

w2x = static_cast<int>((w2x / sum)*7.0 + 0.5);

printf("xb : %d, b1x = %d, b2x = %d, w1x = %d, w2x = %d\n", xb, b1x, b2x, w1x, w2x);

if ((xb == 3 || xb == 4) && b1x == b2x && w1x == w2x && w1x == b1x && b1x == 1) { // 1:1:3:1:1

return true;

}

else {

return false;

}

}

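// Vertical check (not called by scanAndDetectQRCode): walks up from the center, counting the central black run and the white pixels beyond it; rejects when twice the black run does not exceed the white count

bool isYCorner(Mat &image) {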

Mat gray, binary;

cvtColor(image, gray, COLOR_BGR2GRAY);

threshold(gray, binary, 0, 255, THRESH_BINARY | THRESH_OTSU);

int width = binary.cols;

int height = binary.rows;

int cy = height / 2;

int cx = width / 2;

int pv = binary.at<uchar>(cy, cx);

int bc = 0, wc = 0;

bool found = true;

for (int row = cy; row > 0; row--) {

pv = binary.at<uchar>(row, cx);

if (pv == 0 && found) {

bc++;

}

else if (pv == 255) {

found = false;

wc++;

}

}

bc = bc * 2;

if (bc <= wc) {

return false;

}

return true;

}

Mat transformCorner(Mat &image, RotatedRect &rect) {

int width = static_cast<int>(rect.size.width);

int height = static_cast<int>(rect.size.height);

Mat result = Mat::zeros(height, width, image.type());

Point2f vertices[4];

rect.points(vertices);

// RotatedRect::points() returns the corners as bottomLeft, topLeft, topRight, bottomRight;

// map each one to the matching corner of the output so edge lengths are preserved (float points, no truncation)

vector<Point2f> src_corners(vertices, vertices + 4);

vector<Point2f> dst_corners;

dst_corners.push_back(Point2f(0, height));

dst_corners.push_back(Point2f(0, 0));

dst_corners.push_back(Point2f(width, 0));

dst_corners.push_back(Point2f(width, height));

Mat h = getPerspectiveTransform(src_corners, dst_corners);

warpPerspective(image, result, h, result.size());

return result;

}

The program's output is shown in the figure below:

Note the output of the perspective-transform call, shown in the figure below:

After the perspective transform, all three finder squares of the QR code are upright, so the 1:1:3:1:1 feature check can be applied next.
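The perspective transform that straightens a candidate is a 3 × 3 homography determined by four corner correspondences. Fixing its last entry to 1 leaves an 8 × 8 linear system, the same formulation `getPerspectiveTransform` solves. The sketch below builds and solves that system with Gauss-Jordan elimination in plain C++. `solveHomography` and `applyHomography` are hypothetical names, and degenerate (e.g. collinear) corners are only caught by a near-zero-pivot check.

```cpp
#include <array>
#include <cmath>
#include <utility>

using Augmented = std::array<std::array<double, 9>, 8>;  // 8 equations, 8 unknowns + RHS

// Find h0..h7 (h8 = 1) such that each src point maps to its dst point:
//   u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1)
//   v = (h3 x + h4 y + h5) / (h6 x + h7 y + 1)
// Returns false if the system is (numerically) singular.
bool solveHomography(const double src[4][2], const double dst[4][2],
                     std::array<double, 9>& h) {
    Augmented a{};
    for (int i = 0; i < 4; ++i) {
        double x = src[i][0], y = src[i][1], u = dst[i][0], v = dst[i][1];
        a[i]     = {x, y, 1, 0, 0, 0, -x * u, -y * u, u};
        a[i + 4] = {0, 0, 0, x, y, 1, -x * v, -y * v, v};
    }
    // Gauss-Jordan elimination with partial pivoting.
    for (int col = 0; col < 8; ++col) {
        int pivot = col;
        for (int r = col + 1; r < 8; ++r)
            if (std::fabs(a[r][col]) > std::fabs(a[pivot][col])) pivot = r;
        if (std::fabs(a[pivot][col]) < 1e-12) return false;
        std::swap(a[col], a[pivot]);
        for (int r = 0; r < 8; ++r) {
            if (r == col) continue;
            double f = a[r][col] / a[col][col];
            for (int c = col; c < 9; ++c) a[r][c] -= f * a[col][c];
        }
    }
    for (int i = 0; i < 8; ++i) h[i] = a[i][8] / a[i][i];
    h[8] = 1.0;
    return true;
}

// Map one point through the homography (projective division by w).
std::pair<double, double> applyHomography(const std::array<double, 9>& h,
                                          double x, double y) {
    double w = h[6] * x + h[7] * y + h[8];
    return {(h[0] * x + h[1] * y + h[2]) / w, (h[3] * x + h[4] * y + h[5]) / w};
}
```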

Case 4: K-Means Clustering

4.1 How K-Means Works

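The example below generates random 2-D points on an image (Gaussian blobs around two randomly chosen centers) and then clusters them by position with K-Means.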
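K-Means alternates two steps until the labels stop changing. First, every point is assigned to its nearest center; then each center moves to the mean of its points (Lloyd's algorithm). The plain C++ sketch below implements those two steps for 2-D points. It is not OpenCV's implementation: `cv::kmeans` adds k-means++ seeding (`KMEANS_PP_CENTERS`), multiple attempts, and the `TermCriteria` epsilon test. Here the centers are simply seeded with evenly spaced samples. `lloydKMeans` and `Pt` are hypothetical names.

```cpp
#include <cstddef>
#include <limits>
#include <vector>

struct Pt {
    double x, y;
};

// Lloyd's k-means on 2-D points. Precondition: 0 < k <= pts.size().
// Returns the cluster label of every point; final centers go into `centers`.
std::vector<int> lloydKMeans(const std::vector<Pt>& pts, int k, int maxIter,
                             std::vector<Pt>& centers) {
    const std::size_t n = pts.size();
    centers.assign(k, Pt{0, 0});
    for (int c = 0; c < k; ++c) centers[c] = pts[c * n / k];  // evenly spaced seeds
    std::vector<int> labels(n, -1);
    for (int iter = 0; iter < maxIter; ++iter) {
        bool changed = false;
        // Assignment step: each point joins its nearest center.
        for (std::size_t i = 0; i < n; ++i) {
            int best = 0;
            double bestD = std::numeric_limits<double>::max();
            for (int c = 0; c < k; ++c) {
                double dx = pts[i].x - centers[c].x, dy = pts[i].y - centers[c].y;
                double d = dx * dx + dy * dy;
                if (d < bestD) { bestD = d; best = c; }
            }
            if (labels[i] != best) { labels[i] = best; changed = true; }
        }
        if (!changed) break;  // converged: no label moved
        // Update step: each center moves to the mean of its points.
        std::vector<Pt> sum(k, Pt{0, 0});
        std::vector<int> cnt(k, 0);
        for (std::size_t i = 0; i < n; ++i) {
            sum[labels[i]].x += pts[i].x;
            sum[labels[i]].y += pts[i].y;
            ++cnt[labels[i]];
        }
        for (int c = 0; c < k; ++c)
            if (cnt[c] > 0) centers[c] = Pt{sum[c].x / cnt[c], sum[c].y / cnt[c]};
    }
    return labels;
}
```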

void kmeans_data_demo() {

Mat img(500, 500, CV_8UC3);

RNG rng(12345);

Scalar colorTab[] = {

Scalar(0, 0, 255),

Scalar(255, 0, 0),

};

int numCluster = 2;

int sampleCount = rng.uniform(5, 500);

Mat points(sampleCount, 1, CV_32FC2);

for (int k = 0; k<numCluster; ++k)

{

Point center;

center.x = rng.uniform(0, img.cols);

center.y = rng.uniform(0, img.rows);

Mat pointChunk = points.rowRange(k*sampleCount / numCluster,

k == numCluster - 1 ? sampleCount : (k + 1)*sampleCount / numCluster);

rng.fill(pointChunk, RNG::NORMAL, Scalar(center.x, center.y), Scalar(img.cols*0.05, img.rows*0.05));

};

randShuffle(points, 1, &rng);

// Run K-Means (k-means++ seeding, 3 attempts)

Mat labels;

Mat centers;

kmeans(points, numCluster, labels, TermCriteria(TermCriteria::EPS + TermCriteria::COUNT, 10, 0.1), 3, KMEANS_PP_CENTERS, centers);

// Draw each point in its cluster's color

img = Scalar::all(255);

for (int i = 0; i < sampleCount; i++) {

int index = labels.at<int>(i);

Point p = points.at<Point2f>(i);

circle(img, p, 2, colorTab[index], -1, 8);

}

// Draw a circle around each cluster center

for (int i = 0; i < centers.rows; i++) {

int x = centers.at<float>(i, 0);

int y = centers.at<float>(i, 1);

printf("c.x= %d, c.y=%d\n", x, y);

circle(img, Point(x, y), 40, colorTab[i], 1, LINE_AA);

}

imshow("KMeans-Data-Demo", img);

waitKey(0);

}

4.2 Image Segmentation with K-Means

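The code below segments an image by clustering its pixels with K-Means. Each pixel's (B, G, R) value is one sample, and every pixel is then painted with its cluster's color.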
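`src.reshape(3, sampleCount)` turns an H × W image into H·W samples, one per pixel, in row-major order. Sample `row * width + col` is therefore pixel (row, col), which is exactly the index the painting loop uses. The sketch below shows that round trip in plain C++, with an interleaved BGR byte buffer standing in for `cv::Mat`. `flattenPixels` and `paintLabels` are hypothetical helpers.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <vector>

using BGR = std::array<std::uint8_t, 3>;

// Interleaved BGR image (height x width x 3, row-major) -> one float sample per pixel.
std::vector<std::array<float, 3>> flattenPixels(const std::vector<std::uint8_t>& img,
                                                int width, int height) {
    std::vector<std::array<float, 3>> samples(static_cast<std::size_t>(width) * height);
    for (std::size_t i = 0; i < samples.size(); ++i)
        for (int ch = 0; ch < 3; ++ch)
            samples[i][ch] = static_cast<float>(img[i * 3 + ch]);
    return samples;
}

// Paint each pixel with the palette color of its cluster;
// labels[row * width + col] is the label of pixel (row, col).
std::vector<BGR> paintLabels(const std::vector<int>& labels, int width, int height,
                             const std::vector<BGR>& palette) {
    std::vector<BGR> out(static_cast<std::size_t>(width) * height);
    for (int row = 0; row < height; ++row)
        for (int col = 0; col < width; ++col) {
            std::size_t index = static_cast<std::size_t>(row) * width + col;
            out[index] = palette[labels[index]];
        }
    return out;
}
```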

void kmeans_image_demo() {

Mat src = imread("D:/images/toux.jpg");

if (src.empty()) {

printf("could not load image...\n");

return;

}

namedWindow("input image", WINDOW_AUTOSIZE);

imshow("input image", src);

Vec3b colorTab[] = {

Vec3b(0, 0, 255),

Vec3b(0, 255, 0),

Vec3b(255, 0, 0),

Vec3b(0, 255, 255),

Vec3b(255, 0, 255)

};

int width = src.cols;

int height = src.rows;

int dims = src.channels();

int sampleCount = width * height;

int clusterCount = 3;

Mat labels;

Mat centers;

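// One row per pixel with 3 channels (B, G, R); reshape needs a continuous Mat, which imread returns

Mat sample_data = src.reshape(3, sampleCount);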

Mat data;

sample_data.convertTo(data, CV_32F);

TermCriteria criteria = TermCriteria(TermCriteria::EPS + TermCriteria::COUNT, 10, 0.1);

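// The 5th argument is the number of attempts (here reusing clusterCount = 3); K itself is the 2nd argument

kmeans(data, clusterCount, labels, criteria, clusterCount, KMEANS_PP_CENTERS, centers);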

int index = 0;

Mat result = Mat::zeros(src.size(), src.type());

for (int row = 0; row < height; ++row) {

for (int col = 0; col < width; ++col) {

index = row * width + col;

int label = labels.at<int>(index, 0);

result.at<Vec3b>(row, col) = colorTab[label];

}

}

imshow("KMeans-image-Demo", result);

waitKey(0);

}

4.3 Background Replacement with K-Means

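Segment the image with K-Means, then replace the background. The code treats the cluster containing the top-left pixel (0, 0) as the background. It softens the mask edge with dilation and a Gaussian blur, then blends in a new background color.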
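Two ideas carry this example: the background mask is "same label as pixel (0, 0)", and the blurred mask acts as a per-pixel weight w = mask / 255 in out = w · background + (1 − w) · source. The sketch below expresses both in plain C++ on row-major buffers. `backgroundMask` and `blendChannel` are hypothetical helpers, and the blend result is rounded and clamped to [0, 255].

```cpp
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Background = every pixel whose label equals the label of pixel (0, 0).
// labels is row-major, one entry per pixel; background pixels get 255.
std::vector<std::uint8_t> backgroundMask(const std::vector<int>& labels) {
    std::vector<std::uint8_t> mask(labels.size(), 0);
    if (labels.empty()) return mask;
    const int bg = labels[0];
    for (std::size_t i = 0; i < labels.size(); ++i)
        if (labels[i] == bg) mask[i] = 255;
    return mask;
}

// Blend one channel: w = mask / 255, out = w * background + (1 - w) * source.
std::uint8_t blendChannel(std::uint8_t src, std::uint8_t mask, std::uint8_t bg) {
    double w = mask / 255.0;
    double v = w * bg + (1.0 - w) * src;
    long r = std::lround(v);
    if (r < 0) r = 0;
    if (r > 255) r = 255;
    return static_cast<std::uint8_t>(r);
}
```

Blurring the mask turns its hard 0/255 edge into intermediate weights, so boundary pixels get a mix of foreground and new background instead of a jagged cut.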

void kmeans_background_replace() {

Mat src = imread("D:/images/toux.jpg");

if (src.empty()) {

printf("could not load image...\n");

return;

}

namedWindow("input image", WINDOW_AUTOSIZE);

imshow("input image", src);

int width = src.cols;

int height = src.rows;

int dims = src.channels();

// Initialization

int sampleCount = width * height;

int clusterCount = 3;

Mat labels;

Mat centers;

Mat sample_data = src.reshape(3, sampleCount);

Mat data;

sample_data.convertTo(data, CV_32F);

// Run K-Means

TermCriteria criteria = TermCriteria(TermCriteria::EPS + TermCriteria::COUNT, 10, 0.1);

kmeans(data, clusterCount, labels, criteria, clusterCount, KMEANS_PP_CENTERS, centers);

// Build the mask: pixels in the same cluster as the top-left pixel (0, 0) are treated as background

Mat mask = Mat::zeros(src.size(), CV_8UC1);

int index = labels.at<int>(0, 0);

labels = labels.reshape(1, height);

for (int row = 0; row < height; row++) {

for (int col = 0; col < width; col++) {

int c = labels.at<int>(row, col);

if (c == index) {

mask.at<uchar>(row, col) = 255;

}

}

}

imshow("mask", mask);

Mat se = getStructuringElement(MORPH_RECT, Size(3, 3), Point(-1, -1));

dilate(mask, mask, se);

// Gaussian-blur the mask so its edge blends smoothly

GaussianBlur(mask, mask, Size(5, 5), 0);

imshow("mask-blur", mask);

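// Blend using the blurred mask as per-pixel weights; background pixels become (255, 0, 255) in BGR, i.e. magenta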

Mat result = Mat::zeros(src.size(), CV_8UC3);

for (int row = 0; row < height; ++row) {

for (int col = 0; col < width; ++col) {

float w1 = mask.at<uchar>(row, col) / 255.0;

Vec3b bgr = src.at<Vec3b>(row, col);

bgr[0] = saturate_cast<uchar>(w1 * 255.0 + bgr[0] * (1.0 - w1));

bgr[1] = saturate_cast<uchar>(w1 * 0 + bgr[1] * (1.0 - w1));

bgr[2] = saturate_cast<uchar>(w1 * 255.0 + bgr[2] * (1.0 - w1));

result.at<Vec3b>(row, col) = bgr;

}

}

imshow("background-replacement-demo", result);

waitKey(0);

}

4.4 Generating a Color Card with K-Means

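Unlike 4.1, which clusters points by position, this example clusters by pixel value. After clustering, the labels are used to count how many pixels fall into each color cluster. The color card then draws each cluster's center color with a width proportional to that count.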
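The card layout is simple arithmetic: each cluster's segment width is its share of the pixels times the card width. Rounding each share independently can leave a gap or overshoot at the right edge. The sketch below therefore gives the last segment whatever width remains, clamped at 0. It is plain C++, and `colorCardWidths` is a hypothetical helper.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Segment widths for a color card: proportional to each cluster's pixel share.
// The last segment takes the remaining width so the card is filled exactly,
// unless earlier rounding already overshot (then it is clamped to 0).
std::vector<int> colorCardWidths(const std::vector<int>& labels, int k, int cardWidth) {
    std::vector<int> counts(k, 0);
    for (int label : labels) ++counts[label];
    std::vector<int> widths(k, 0);
    int used = 0;
    for (int c = 0; c < k; ++c) {
        if (c == k - 1) {
            widths[c] = std::max(0, cardWidth - used);
        } else {
            double share = labels.empty() ? 0.0
                                          : static_cast<double>(counts[c]) / labels.size();
            widths[c] = static_cast<int>(std::lround(share * cardWidth));
            used += widths[c];
        }
    }
    return widths;
}
```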

void kmeans_color_card() {

Mat src = imread("D:/images/master.jpg");

if (src.empty()) {

printf("could not load image...\n");

return;

}

namedWindow("input image", WINDOW_AUTOSIZE);

imshow("input image", src);

int width = src.cols;

int height = src.rows;

int dims = src.channels();

// Initialization

int sampleCount = width * height;

int clusterCount = 4;

Mat labels;

Mat centers;

Mat sample_data = src.reshape(3, sampleCount);

Mat data;

sample_data.convertTo(data, CV_32F);

// Run K-Means

TermCriteria criteria = TermCriteria(TermCriteria::EPS + TermCriteria::COUNT, 10, 0.1);

kmeans(data, clusterCount, labels, criteria, clusterCount, KMEANS_PP_CENTERS, centers);

Mat card = Mat::zeros(Size(width, 50), CV_8UC3);

vector<float> clusters(clusterCount);

for (int i = 0; i<labels.rows; i++){

clusters[labels.at<int>(i, 0)]++;

}

for (int i = 0; i < clusters.size(); i++) {

clusters[i] = clusters[i] / sampleCount;

}

int x_offset = 0;

cout << centers << endl;

for (int x = 0; x < clusterCount; ++x) {

Rect rect;

rect.x = x_offset;

rect.y = 0;

rect.height = 50;

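// Width proportional to the cluster's pixel share; the last segment takes the remaining width so rounding leaves no gap

rect.width = (x == clusterCount - 1) ? std::max(0, width - x_offset) : cvRound(clusters[x] * width);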

x_offset += rect.width;

float b = centers.at<float>(x, 0);

float g = centers.at<float>(x, 1);

float r = centers.at<float>(x, 2);

rectangle(card, rect, Scalar(b, g, r), -1, 8, 0);

}

imshow("Image Color Card", card);

waitKey(0);

}