Deep Neural Network

For personal study use only.

Dependencies

import numpy as np
import h5py
import matplotlib.pyplot as plt
import testCases  # from the resource pack, or copy it from the bottom of the referenced article
from dnn_utils import sigmoid, sigmoid_backward, relu, relu_backward  # from the resource pack
import lr_utils  # from the resource pack, or copy it from the bottom of the referenced article
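The `dnn_utils` module is not reproduced in these notes. Judging only from how its helpers are called in the code here (`A, Z = sigmoid(Z)` and `relu_backward(dA, Z)`), each activation seems to return its output together with the pre-activation `Z` as a cache, and each backward helper maps `dA` and `Z` to `dZ`. The following is a minimal sketch under that assumption; the real file in the resource pack may differ in detail.

```python
import numpy as np

def sigmoid(Z):
    """Assumed interface: return the activation and Z itself as the cache."""
    A = 1 / (1 + np.exp(-Z))
    return A, Z

def relu(Z):
    """Assumed interface: return max(0, Z) and Z itself as the cache."""
    A = np.maximum(0, Z)
    return A, Z

def sigmoid_backward(dA, Z):
    """dZ = dA * sigmoid'(Z), with sigmoid'(Z) = s * (1 - s)."""
    s = 1 / (1 + np.exp(-Z))
    return dA * s * (1 - s)

def relu_backward(dA, Z):
    """dZ = dA where Z > 0, and 0 elsewhere."""
    dZ = np.array(dA, copy=True)
    dZ[Z <= 0] = 0
    return dZ
```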

Parameter initialization

def initialize_parameters_deep(layers_dims):
    # layers_dims lists the size of every layer, input layer first
    np.random.seed(3)
    parameters = {}
    L = len(layers_dims)
    for l in range(1, L):
        # Scale the weights by 1/sqrt(number of inputs to the layer)
        parameters["W" + str(l)] = np.random.randn(layers_dims[l], layers_dims[l - 1]) / np.sqrt(layers_dims[l - 1])
        parameters["b" + str(l)] = np.zeros((layers_dims[l], 1))
    return parameters
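The division by `np.sqrt(layers_dims[l - 1])` in the initializer keeps the spread of `Z = W·A_prev` roughly independent of the layer width. A small sketch of that effect, using made-up layer sizes and standard-normal inputs (both are assumptions for illustration, not values from the dataset):

```python
import numpy as np

rng = np.random.default_rng(0)
n_prev, n, m = 1000, 50, 200  # hypothetical layer sizes and batch size

A_prev = rng.standard_normal((n_prev, m))
W_raw = rng.standard_normal((n, n_prev))   # unscaled weights
W_scaled = W_raw / np.sqrt(n_prev)         # scaling used in the initializer

z_raw_std = (W_raw @ A_prev).std()         # grows like sqrt(n_prev)
z_scaled_std = (W_scaled @ A_prev).std()   # stays close to 1

print("unscaled:", z_raw_std, "scaled:", z_scaled_std)
```

With unscaled weights the pre-activations would be large enough to saturate a sigmoid and to produce very large ReLU outputs in deeper layers.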

Forward propagation

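def linear_activation_forward(A_prev, W, b, activation):
    # Linear step
    Z = np.dot(W, A_prev) + b
    # Activation step; the helpers return the activation together with Z as a cache
    if activation == "sigmoid":
        A, Z = sigmoid(Z)
    elif activation == "relu":
        A, Z = relu(Z)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")
    assert(A.shape == (W.shape[0], A_prev.shape[1]))
    cache = (A_prev, W, b, Z)  # everything the backward pass needs for this layer
    return A, cache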

def L_model_forward(X, parameters):
    caches = []  # [(X, W1, b1, Z1), (A1, W2, b2, Z2), ...]
    A = X
    L = len(parameters) // 2  # number of layers (each has one W and one b)
    # Layers 1 .. L-1 use ReLU
    for l in range(1, L):
        A_prev = A
        A, cache = linear_activation_forward(A_prev, parameters['W' + str(l)], parameters['b' + str(l)], "relu")
        caches.append(cache)
    # The output layer L uses sigmoid
    AL, cache = linear_activation_forward(A, parameters['W' + str(L)], parameters['b' + str(L)], "sigmoid")
    caches.append(cache)
    assert(AL.shape == (1, X.shape[1]))
    return AL, caches

Cost function

def compute_cost(AL, Y):
    m = Y.shape[1]  # number of examples
    # Mean binary cross-entropy over the m examples
    cost = -np.sum(np.multiply(np.log(AL), Y) + np.multiply(np.log(1 - AL), 1 - Y)) / m
    cost = np.squeeze(cost)
    assert(cost.shape == ())
    return cost

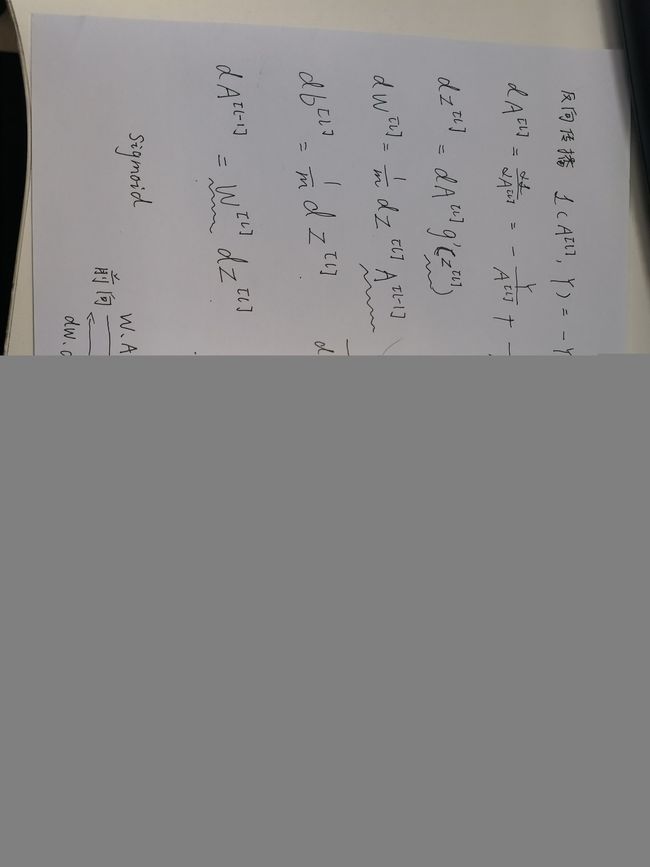
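For reference, the quantity computed by `compute_cost` is the mean binary cross-entropy. Written out with $a^{(i)}$ for the $i$-th entry of `AL` and $y^{(i)}$ for the $i$-th entry of `Y` (this notation is introduced here to match the code, not taken from the figure):

```latex
J = -\frac{1}{m} \sum_{i=1}^{m} \left[ y^{(i)} \log a^{(i)} + \left(1 - y^{(i)}\right) \log\left(1 - a^{(i)}\right) \right]
```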

Backward propagation

def linear_activation_backward(dA, cache, activation="relu"):
    # cache is (A_prev, W, b, Z); for example, the layer-2 cache is (A1, W2, b2, Z2)
    A_prev, W, b, Z = cache
    m = A_prev.shape[1]
    # Gradient with respect to the pre-activation Z
    if activation == "relu":
        dZ = relu_backward(dA, Z)
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, Z)
    else:
        raise ValueError("activation must be 'relu' or 'sigmoid'")
    # The linear part is identical for both activations
    dW = np.dot(dZ, A_prev.T) / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = np.dot(W.T, dZ)
    return dA_prev, dW, db
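In equation form, one call to `linear_activation_backward` for layer $l$ can be summarized as follows. The superscript notation and the symbol $g$ for the layer's activation are introduced here for illustration; $\odot$ is the element-wise product and $A^{[l-1]}$ corresponds to `A_prev`.

```latex
\begin{aligned}
dZ^{[l]} &= dA^{[l]} \odot g'\!\left(Z^{[l]}\right) \\
dW^{[l]} &= \frac{1}{m}\, dZ^{[l]} \left(A^{[l-1]}\right)^{T} \\
db^{[l]} &= \frac{1}{m} \sum_{i=1}^{m} dZ^{[l](i)} \\
dA^{[l-1]} &= \left(W^{[l]}\right)^{T} dZ^{[l]}
\end{aligned}
```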

def L_model_backward(AL, Y, caches):
    grads = {}
    L = len(caches)  # number of layers
    m = AL.shape[1]
    Y = Y.reshape(AL.shape)
    # Derivative of the cross-entropy cost with respect to AL
    dAL = - (np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))
    # Output layer (sigmoid).
    # Note: grads["dA" + str(l)] holds the gradient with respect to the input of layer l.
    current_cache = caches[L-1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(dAL, current_cache, "sigmoid")
    # Hidden layers (ReLU), from layer L-1 down to layer 1
    for l in reversed(range(L-1)):  # reversed() iterates in reverse order
        current_cache = caches[l]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(grads["dA" + str(l + 2)], current_cache, "relu")
        grads["dA" + str(l + 1)] = dA_prev_temp
        grads["dW" + str(l + 1)] = dW_temp
        grads["db" + str(l + 1)] = db_temp
    return grads
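A quick sanity check on the output layer: combining the `dAL` expression with the sigmoid derivative should collapse to `AL - Y`. The sketch below verifies this numerically on random values (the inputs are made up for illustration, and the sigmoid is written inline so the snippet does not depend on `dnn_utils`).

```python
import numpy as np

rng = np.random.default_rng(1)
Z = rng.standard_normal((1, 5))            # hypothetical pre-activations
Y = np.array([[1.0, 0.0, 1.0, 1.0, 0.0]])  # hypothetical labels

AL = 1 / (1 + np.exp(-Z))                  # sigmoid output
dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))
dZ = dAL * AL * (1 - AL)                   # dAL times sigmoid'(Z)

matches = np.allclose(dZ, AL - Y)
print(matches)
```

This identity is useful when debugging: if the first `dZ` in the backward pass does not equal `AL - Y`, the problem is in the cost derivative or the sigmoid helper rather than in the later layers.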

Parameter update

def update_parameters(parameters, grads, learning_rate):
    L = len(parameters) // 2  # number of layers (integer division)
    # Loop through layer L inclusive so that the output layer is updated too
    for l in range(1, L + 1):
        parameters["W" + str(l)] = parameters["W" + str(l)] - learning_rate * grads["dW" + str(l)]
        parameters["b" + str(l)] = parameters["b" + str(l)] - learning_rate * grads["db" + str(l)]
    return parameters
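As a small worked example of one gradient-descent step, the sketch below applies the update rule to a hypothetical two-layer parameter set (all values are made up). The point to notice is that the loop has to reach layer `L` itself, otherwise the output layer would keep its initial weights.

```python
import numpy as np

# Hypothetical parameters and gradients for a 3 -> 2 -> 1 network
parameters = {"W1": np.ones((2, 3)), "b1": np.zeros((2, 1)),
              "W2": np.ones((1, 2)), "b2": np.zeros((1, 1))}
grads = {"dW1": np.full((2, 3), 0.5), "db1": np.ones((2, 1)),
         "dW2": np.full((1, 2), 2.0), "db2": np.ones((1, 1))}
learning_rate = 0.1

L = len(parameters) // 2  # 2 layers
for l in range(1, L + 1):  # layers 1 and 2, output layer included
    parameters[f"W{l}"] = parameters[f"W{l}"] - learning_rate * grads[f"dW{l}"]
    parameters[f"b{l}"] = parameters[f"b{l}"] - learning_rate * grads[f"db{l}"]

print(parameters["W1"][0, 0], parameters["W2"][0, 0])  # 0.95 0.8
```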

Multi-layer neural network

def L_layer_model(X, Y, layers_dims, learning_rate=0.0075, num_iterations=3000, print_cost=False, isPlot=True):
    np.random.seed(1)
    costs = []
    parameters = initialize_parameters_deep(layers_dims)
    for i in range(0, num_iterations):
        AL, caches = L_model_forward(X, parameters)
        cost = compute_cost(AL, Y)
        grads = L_model_backward(AL, Y, caches)
        parameters = update_parameters(parameters, grads, learning_rate)
        # Every 100 iterations, record the cost
        if i % 100 == 0:
            costs.append(cost)
            # Print the cost only when print_cost is True
            if print_cost:
                print("Iteration", i, "cost:", np.squeeze(cost))
    # After training, optionally plot the recorded costs
    if isPlot:
        plt.plot(np.squeeze(costs))
        plt.ylabel('cost')
        plt.xlabel('iterations (per hundreds)')
        plt.title("Learning rate = " + str(learning_rate))
        plt.show()
    return parameters

def predict(X, y, parameters):
    m = X.shape[1]  # number of examples
    n = len(parameters) // 2  # number of layers in the network
    p = np.zeros((1, m))
    # Forward propagation with the given parameters
    probas, caches = L_model_forward(X, parameters)
    # Threshold the output probabilities at 0.5
    for i in range(0, probas.shape[1]):
        if probas[0, i] > 0.5:
            p[0, i] = 1
        else:
            p[0, i] = 0
    print("Accuracy: " + str(float(np.sum((p == y)) / m)))
    return p
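The thresholding loop in `predict` can also be written without an explicit loop. A minimal sketch on made-up probabilities and labels (the values are hypothetical, chosen only to show the boundary case at exactly 0.5):

```python
import numpy as np

probas = np.array([[0.2, 0.7, 0.5, 0.91]])  # hypothetical network outputs
y = np.array([[0, 1, 1, 1]])                # hypothetical labels

# Strictly greater than 0.5, the same rule as the loop version
p = (probas > 0.5).astype(float)
accuracy = float(np.mean(p == y))

print(p, accuracy)  # [[0. 1. 0. 1.]] 0.75
```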

train_set_x_orig , train_set_y , test_set_x_orig , test_set_y , classes = lr_utils.load_dataset()

train_x_flatten = train_set_x_orig.reshape(train_set_x_orig.shape[0], -1).T

test_x_flatten = test_set_x_orig.reshape(test_set_x_orig.shape[0], -1).T

train_x = train_x_flatten / 255

train_y = train_set_y

test_x = test_x_flatten / 255

test_y = test_set_y
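The `reshape(..., -1).T` step above turns an array of images into a matrix with one column per image. The sketch below shows the resulting shapes on a tiny made-up array of two 4×4 RGB images; the first entry of `layers_dims`, 12288, would correspond to 64×64×3 inputs, which is assumed rather than checked here.

```python
import numpy as np

# Hypothetical stand-in for the dataset: 2 images, 4x4 pixels, 3 channels
images = np.arange(2 * 4 * 4 * 3).reshape(2, 4, 4, 3)

flat = images.reshape(images.shape[0], -1).T  # shape (48, 2): one column per image
normalized = flat / 255                       # scale pixel values into [0, 1]

print(flat.shape)  # (48, 2)
```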

layers_dims = [12288, 20, 7, 5, 1]  # 4-layer model: 12288 inputs, hidden layers of 20, 7 and 5 units, 1 output

parameters = L_layer_model(train_x, train_y, layers_dims, num_iterations = 2500, print_cost = True, isPlot=True)

pred_train = predict(train_x, train_y, parameters)  # training set

pred_test = predict(test_x, test_y, parameters)  # test set

Reference: https://blog.csdn.net/u013733326/article/details/79767169