- 机器学习与深度学习间关系与区别

ℒℴѵℯ心·动ꦿ໊ོ꫞

人工智能学习深度学习python

一、机器学习概述定义机器学习(MachineLearning,ML)是一种通过数据驱动的方法,利用统计学和计算算法来训练模型,使计算机能够从数据中学习并自动进行预测或决策。机器学习通过分析大量数据样本,识别其中的模式和规律,从而对新的数据进行判断。其核心在于通过训练过程,让模型不断优化和提升其预测准确性。主要类型1.监督学习(SupervisedLearning)监督学习是指在训练数据集中包含输入

- 将cmd中命令输出保存为txt文本文件

落难Coder

Windowscmdwindow

最近深度学习本地的训练中我们常常要在命令行中运行自己的代码,无可厚非,我们有必要保存我们的炼丹结果,但是复制命令行输出到txt是非常麻烦的,其实Windows下的命令行为我们提供了相应的操作。其基本的调用格式就是:运行指令>输出到的文件名称或者具体保存路径测试下,我打开cmd并且ping一下百度:pingwww.baidu.com>./data.txt看下相同目录下data.txt的输出:如果你再

- 数字里的世界17期:2021年全球10大顶级数据中心,中国移动榜首

张三叨

你知道吗?2016年,全球的数据中心共计用电4160亿千瓦时,比整个英国的发电量还多40%!前言每天,我们都会创造超过250万TB的数据。并且随着物联网(IOT)的不断普及,这一数据将持续增长。如此庞大的数据被存储在被称为“数据中心”的专用设施中。虽然最早的数据中心建于20世纪40年代,但直到1997-2000年的互联网泡沫期间才逐渐成为主流。当前人类的技术,比如人工智能和机器学习,已经将我们推向

- nosql数据库技术与应用知识点

皆过客,揽星河

NoSQLnosql数据库大数据数据分析数据结构非关系型数据库

Nosql知识回顾大数据处理流程数据采集(flume、爬虫、传感器)数据存储(本门课程NoSQL所处的阶段)Hdfs、MongoDB、HBase等数据清洗(入仓)Hive等数据处理、分析(Spark、Flink等)数据可视化数据挖掘、机器学习应用(Python、SparkMLlib等)大数据时代存储的挑战(三高)高并发(同一时间很多人访问)高扩展(要求随时根据需求扩展存储)高效率(要求读写速度快)

- Python开发常用的三方模块如下:

换个网名有点难

python开发语言

Python是一门功能强大的编程语言,拥有丰富的第三方库,这些库为开发者提供了极大的便利。以下是100个常用的Python库,涵盖了多个领域:1、NumPy,用于科学计算的基础库。2、Pandas,提供数据结构和数据分析工具。3、Matplotlib,一个绘图库。4、Scikit-learn,机器学习库。5、SciPy,用于数学、科学和工程的库。6、TensorFlow,由Google开发的开源机

- Python实现简单的机器学习算法

master_chenchengg

pythonpython办公效率python开发IT

Python实现简单的机器学习算法开篇:初探机器学习的奇妙之旅搭建环境:一切从安装开始必备工具箱第一步:安装Anaconda和JupyterNotebook小贴士:如何配置Python环境变量算法初体验:从零开始的Python机器学习线性回归:让数据说话数据准备:从哪里找数据编码实战:Python实现线性回归模型评估:如何判断模型好坏逻辑回归:从分类开始理论入门:什么是逻辑回归代码实现:使用skl

- BART&BERT

Ambition_LAO

深度学习

BART和BERT都是基于Transformer架构的预训练语言模型。模型架构:BERT(BidirectionalEncoderRepresentationsfromTransformers)主要是一个编码器(Encoder)模型,它使用了Transformer的编码器部分来处理输入的文本,并生成文本的表示。BERT特别擅长理解语言的上下文,因为它在预训练阶段使用了掩码语言模型(MLM)任务,即

- 遥感影像的切片处理

sand&wich

计算机视觉python图像处理

在遥感影像分析中,经常需要将大尺寸的影像切分成小片段,以便于进行详细的分析和处理。这种方法特别适用于机器学习和图像处理任务,如对象检测、图像分类等。以下是如何使用Python和OpenCV库来实现这一过程,同时确保每个影像片段保留正确的地理信息。准备环境首先,确保安装了必要的Python库,包括numpy、opencv-python和xml.etree.ElementTree。这些库将用于图像处理

- 推荐3家毕业AI论文可五分钟一键生成!文末附免费教程!

小猪包333

写论文人工智能AI写作深度学习计算机视觉

在当前的学术研究和写作领域,AI论文生成器已经成为许多研究人员和学生的重要工具。这些工具不仅能够帮助用户快速生成高质量的论文内容,还能进行内容优化、查重和排版等操作。以下是三款值得推荐的AI论文生成器:千笔-AIPassPaper、懒人论文以及AIPaperPass。千笔-AIPassPaper千笔-AIPassPaper是一款基于深度学习和自然语言处理技术的AI写作助手,旨在帮助用户快速生成高质

- AI大模型的架构演进与最新发展

季风泯灭的季节

AI大模型应用技术二人工智能架构

随着深度学习的发展,AI大模型(LargeLanguageModels,LLMs)在自然语言处理、计算机视觉等领域取得了革命性的进展。本文将详细探讨AI大模型的架构演进,包括从Transformer的提出到GPT、BERT、T5等模型的历史演变,并探讨这些模型的技术细节及其在现代人工智能中的核心作用。一、基础模型介绍:Transformer的核心原理Transformer架构的背景在Transfo

- ai绘画工具midjourney怎么下载?附作品管理教程

设计师早上好

Midjourney是一款功能强大的AI绘画工具,它使用机器学习技术和深度神经网络等算法,可以生成各种艺术风格的绘画作品。在创意设计、广告宣传等方面有着广泛的应用前景。那么,ai绘画工具midjourney怎么下载?本文将为您介绍Midjourney的下载以及作品的相关管理。一、Midjourney下载Midjourney的下载非常简单,只需打开Midjourney官网(点击“GetMidjour

- [实践应用] 深度学习之模型性能评估指标

YuanDaima2048

深度学习工具使用深度学习人工智能损失函数性能评估pytorchpython机器学习

文章总览:YuanDaiMa2048博客文章总览深度学习之模型性能评估指标分类任务回归任务排序任务聚类任务生成任务其他介绍在机器学习和深度学习领域,评估模型性能是一项至关重要的任务。不同的学习任务需要不同的性能指标来衡量模型的有效性。以下是对一些常见任务及其相应的性能评估指标的详细解释和总结。分类任务分类任务是指模型需要将输入数据分配到预定义的类别或标签中。以下是分类任务中常用的性能指标:准确率(

- [实践应用] 深度学习之优化器

YuanDaima2048

深度学习工具使用pytorch深度学习人工智能机器学习python优化器

文章总览:YuanDaiMa2048博客文章总览深度学习之优化器1.随机梯度下降(SGD)2.动量优化(Momentum)3.自适应梯度(Adagrad)4.自适应矩估计(Adam)5.RMSprop总结其他介绍在深度学习中,优化器用于更新模型的参数,以最小化损失函数。常见的优化函数有很多种,下面是几种主流的优化器及其特点、原理和PyTorch实现:1.随机梯度下降(SGD)原理:随机梯度下降通过

- 机器学习-聚类算法

不良人龍木木

机器学习机器学习算法聚类

机器学习-聚类算法1.AHC2.K-means3.SC4.MCL仅个人笔记,感谢点赞关注!1.AHC2.K-means3.SC传统谱聚类:个人对谱聚类算法的理解以及改进4.MCL目前仅专注于NLP的技术学习和分享感谢大家的关注与支持!

- 生成式地图制图

Bwywb_3

深度学习机器学习深度学习生成对抗网络

生成式地图制图(GenerativeCartography)是一种利用生成式算法和人工智能技术自动创建地图的技术。它结合了传统的地理信息系统(GIS)技术与现代生成模型(如深度学习、GANs等),能够根据输入的数据自动生成符合需求的地图。这种方法在城市规划、虚拟环境设计、游戏开发等多个领域具有应用前景。主要特点:自动化生成:通过算法和模型,系统能够根据输入的地理或空间数据自动生成地图,而无需人工逐

- 未来软件市场是怎么样的?做开发的生存空间如何?

cesske

软件需求

目录前言一、未来软件市场的发展趋势二、软件开发人员的生存空间前言未来软件市场是怎么样的?做开发的生存空间如何?一、未来软件市场的发展趋势技术趋势:人工智能与机器学习:随着技术的不断成熟,人工智能将在更多领域得到应用,如智能客服、自动驾驶、智能制造等,这将极大地推动软件市场的增长。云计算与大数据:云计算服务将继续普及,大数据技术的应用也将更加广泛。企业将更加依赖云计算和大数据来优化运营、提升效率,并

- 轻量级模型解读——轻量transformer系列

lishanlu136

#图像分类轻量级模型transformer图像分类

先占坑,持续更新。。。文章目录1、DeiT2、ConViT3、Mobile-Former4、MobileViTTransformer是2017谷歌提出的一篇论文,最早应用于NLP领域的机器翻译工作,Transformer解读,但随着2020年DETR和ViT的出现(DETR解读,ViT解读),其在视觉领域的应用也如雨后春笋般渐渐出现,其特有的全局注意力机制给图像识别领域带来了重要参考。但是tran

- 吴恩达深度学习笔记(30)-正则化的解释

极客Array

正则化(Regularization)深度学习可能存在过拟合问题——高方差,有两个解决方法,一个是正则化,另一个是准备更多的数据,这是非常可靠的方法,但你可能无法时时刻刻准备足够多的训练数据或者获取更多数据的成本很高,但正则化通常有助于避免过拟合或减少你的网络误差。如果你怀疑神经网络过度拟合了数据,即存在高方差问题,那么最先想到的方法可能是正则化,另一个解决高方差的方法就是准备更多数据,这也是非常

- 个人学习笔记7-6:动手学深度学习pytorch版-李沐

浪子L

深度学习深度学习笔记计算机视觉python人工智能神经网络pytorch

#人工智能##深度学习##语义分割##计算机视觉##神经网络#计算机视觉13.11全卷积网络全卷积网络(fullyconvolutionalnetwork,FCN)采用卷积神经网络实现了从图像像素到像素类别的变换。引入l转置卷积(transposedconvolution)实现的,输出的类别预测与输入图像在像素级别上具有一一对应关系:通道维的输出即该位置对应像素的类别预测。13.11.1构造模型下

- python中zeros用法_Python中的numpy.zeros()用法

江平舟

python中zeros用法

numpy.zeros()函数是最重要的函数之一,广泛用于机器学习程序中。此函数用于生成包含零的数组。numpy.zeros()函数提供给定形状和类型的新数组,并用零填充。句法numpy.zeros(shape,dtype=float,order='C'参数形状:整数或整数元组此参数用于定义数组的尺寸。此参数用于我们要在其中创建数组的形状,例如(3,2)或2。dtype:数据类型(可选)此参数用于

- 深度学习-点击率预估-研究论文2024-09-14速读

sp_fyf_2024

深度学习人工智能

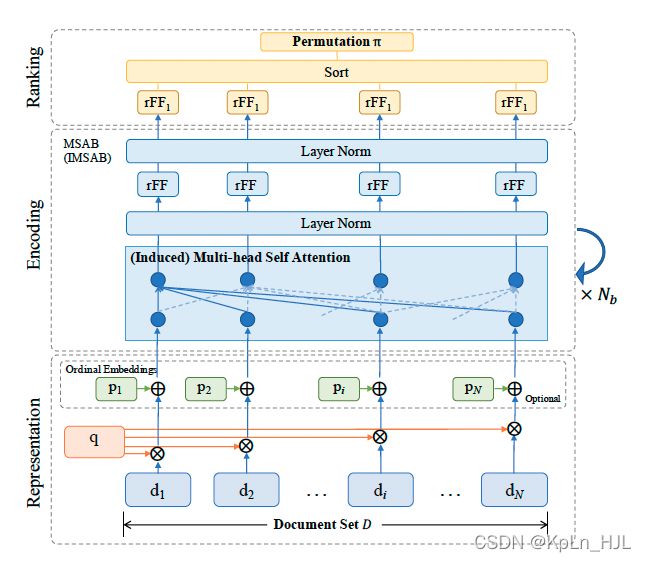

深度学习-点击率预估-研究论文2024-09-14速读1.DeepTargetSessionInterestNetworkforClick-ThroughRatePredictionHZhong,JMa,XDuan,SGu,JYao-2024InternationalJointConferenceonNeuralNetworks,2024深度目标会话兴趣网络用于点击率预测摘要:这篇文章提出了一种新

- 【NumPy】深入解析numpy.zeros()函数

二七830

numpy

欢迎莅临我的个人主页这里是我深耕Python编程、机器学习和自然语言处理(NLP)领域,并乐于分享知识与经验的小天地!博主简介:我是二七830,一名对技术充满热情的探索者。多年的Python编程和机器学习实践,使我深入理解了这些技术的核心原理,并能够在实际项目中灵活应用。尤其是在NLP领域,我积累了丰富的经验,能够处理各种复杂的自然语言任务。技术专长:我熟练掌握Python编程语言,并深入研究了机

- 【中国国际航空-注册_登录安全分析报告】

风控牛

验证码接口安全评测系列安全行为验证极验网易易盾智能手机

前言由于网站注册入口容易被黑客攻击,存在如下安全问题:1.暴力破解密码,造成用户信息泄露2.短信盗刷的安全问题,影响业务及导致用户投诉3.带来经济损失,尤其是后付费客户,风险巨大,造成亏损无底洞所以大部分网站及App都采取图形验证码或滑动验证码等交互解决方案,但在机器学习能力提高的当下,连百度这样的大厂都遭受攻击导致点名批评,图形验证及交互验证方式的安全性到底如何?请看具体分析一、中国国际航空PC

- 机器学习 流形数据降维:UMAP 降维算法

小嗷犬

Python机器学习#数据分析及可视化机器学习算法人工智能

✅作者简介:人工智能专业本科在读,喜欢计算机与编程,写博客记录自己的学习历程。个人主页:小嗷犬的个人主页个人网站:小嗷犬的技术小站个人信条:为天地立心,为生民立命,为往圣继绝学,为万世开太平。本文目录UMAP简介理论基础特点与优势应用场景在Python中使用UMAP安装umap-learn库使用UMAP可视化手写数字数据集UMAP简介UMAP(UniformManifoldApproximatio

- 损失函数与反向传播

Star_.

PyTorchpytorch深度学习python

损失函数定义与作用损失函数(lossfunction)在深度学习领域是用来计算搭建模型预测的输出值和真实值之间的误差。1.损失函数越小越好2.计算实际输出与目标之间的差距3.为更新输出提供依据(反向传播)常见的损失函数回归常见的损失函数有:均方差(MeanSquaredError,MSE)、平均绝对误差(MeanAbsoluteErrorLoss,MAE)、HuberLoss是一种将MSE与MAE

- 七.正则化

愿风去了

吴恩达机器学习之正则化(Regularization)http://www.cnblogs.com/jianxinzhou/p/4083921.html从数学公式上理解L1和L2https://blog.csdn.net/b876144622/article/details/81276818虽然在线性回归中加入基函数会使模型更加灵活,但是很容易引起数据的过拟合。例如将数据投影到30维的基函数上,模

- 探索创新科技: Lite-Mono - 简约高效的小型化Mono框架

杭律沛Meris

探索创新科技:Lite-Mono-简约高效的小型化Mono框架Lite-Mono[CVPR2023]Lite-Mono:ALightweightCNNandTransformerArchitectureforSelf-SupervisedMonocularDepthEstimation项目地址:https://gitcode.com/gh_mirrors/li/Lite-Mono如果你在寻找一个轻

- 机器学习-------数据标准化

罔闻_spider

数据分析算法机器学习人工智能

什么是归一化,它与标准化的区别是什么?一作用在做训练时,需要先将特征值与标签标准化,可以防止梯度防炸和过拟合;将标签标准化后,网络预测出的数据是符合标准正态分布的—StandarScaler(),与真实值有很大差别。因为StandarScaler()对数据的处理是(真实值-平均值)/标准差。同时在做预测时需要将输出数据逆标准化提升模型精度:标准化/归一化使不同维度的特征在数值上更具比较性,提高分类

- 分享一个基于python的电子书数据采集与可视化分析 hadoop电子书数据分析与推荐系统 spark大数据毕设项目(源码、调试、LW、开题、PPT)

计算机源码社

Python项目大数据大数据pythonhadoop计算机毕业设计选题计算机毕业设计源码数据分析spark毕设

作者:计算机源码社个人简介:本人八年开发经验,擅长Java、Python、PHP、.NET、Node.js、Android、微信小程序、爬虫、大数据、机器学习等,大家有这一块的问题可以一起交流!学习资料、程序开发、技术解答、文档报告如需要源码,可以扫取文章下方二维码联系咨询Java项目微信小程序项目Android项目Python项目PHP项目ASP.NET项目Node.js项目选题推荐项目实战|p

- 【深度学习】训练过程中一个OOM的问题,太难查了

weixin_40293999

深度学习深度学习人工智能

现象:各位大佬又遇到过ubuntu的这个问题么?现象是在训练过程中,ssh上不去了,能ping通,没死机,但是ubunutu的pc侧的显示器,鼠标啥都不好用了。只能重启。问题原因:OOM了95G,尼玛!!!!pytorch爆内存了,然后journald假死了,在journald被watchdog干掉之后,系统就崩溃了。这种规模的爆内存一般,即使被oomkill了,也要卡半天的,确实会这样,能不能配

- eclipse maven

IXHONG

eclipse

eclipse中使用maven插件的时候,运行run as maven build的时候报错

-Dmaven.multiModuleProjectDirectory system propery is not set. Check $M2_HOME environment variable and mvn script match.

可以设一个环境变量M2_HOME指

- timer cancel方法的一个小实例

alleni123

多线程timer

package com.lj.timer;

import java.util.Date;

import java.util.Timer;

import java.util.TimerTask;

public class MyTimer extends TimerTask

{

private int a;

private Timer timer;

pub

- MySQL数据库在Linux下的安装

ducklsl

mysql

1.建好一个专门放置MySQL的目录

/mysql/db数据库目录

/mysql/data数据库数据文件目录

2.配置用户,添加专门的MySQL管理用户

>groupadd mysql ----添加用户组

>useradd -g mysql mysql ----在mysql用户组中添加一个mysql用户

3.配置,生成并安装MySQL

>cmake -D

- spring------>>cvc-elt.1: Cannot find the declaration of element

Array_06

springbean

将--------

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3

- maven发布第三方jar的一些问题

cugfy

maven

maven中发布 第三方jar到nexus仓库使用的是 deploy:deploy-file命令

有许多参数,具体可查看

http://maven.apache.org/plugins/maven-deploy-plugin/deploy-file-mojo.html

以下是一个例子:

mvn deploy:deploy-file -DgroupId=xpp3

- MYSQL下载及安装

357029540

mysql

好久没有去安装过MYSQL,今天自己在安装完MYSQL过后用navicat for mysql去厕测试链接的时候出现了10061的问题,因为的的MYSQL是最新版本为5.6.24,所以下载的文件夹里没有my.ini文件,所以在网上找了很多方法还是没有找到怎么解决问题,最后看到了一篇百度经验里有这个的介绍,按照其步骤也完成了安装,在这里给大家分享下这个链接的地址

- ios TableView cell的布局

张亚雄

tableview

cell.imageView.image = [UIImage imageNamed:[imageArray objectAtIndex:[indexPath row]]];

CGSize itemSize = CGSizeMake(60, 50);

&nbs

- Java编码转义

adminjun

java编码转义

import java.io.UnsupportedEncodingException;

/**

* 转换字符串的编码

*/

public class ChangeCharset {

/** 7位ASCII字符,也叫作ISO646-US、Unicode字符集的基本拉丁块 */

public static final Strin

- Tomcat 配置和spring

aijuans

spring

简介

Tomcat启动时,先找系统变量CATALINA_BASE,如果没有,则找CATALINA_HOME。然后找这个变量所指的目录下的conf文件夹,从中读取配置文件。最重要的配置文件:server.xml 。要配置tomcat,基本上了解server.xml,context.xml和web.xml。

Server.xml -- tomcat主

- Java打印当前目录下的所有子目录和文件

ayaoxinchao

递归File

其实这个没啥技术含量,大湿们不要操笑哦,只是做一个简单的记录,简单用了一下递归算法。

import java.io.File;

/**

* @author Perlin

* @date 2014-6-30

*/

public class PrintDirectory {

public static void printDirectory(File f

- linux安装mysql出现libs报冲突解决

BigBird2012

linux

linux安装mysql出现libs报冲突解决

安装mysql出现

file /usr/share/mysql/ukrainian/errmsg.sys from install of MySQL-server-5.5.33-1.linux2.6.i386 conflicts with file from package mysql-libs-5.1.61-4.el6.i686

- jedis连接池使用实例

bijian1013

redisjedis连接池jedis

实例代码:

package com.bijian.study;

import java.util.ArrayList;

import java.util.List;

import redis.clients.jedis.Jedis;

import redis.clients.jedis.JedisPool;

import redis.clients.jedis.JedisPoo

- 关于朋友

bingyingao

朋友兴趣爱好维持

成为朋友的必要条件:

志相同,道不合,可以成为朋友。譬如马云、周星驰一个是商人,一个是影星,可谓道不同,但都很有梦想,都要在各自领域里做到最好,当他们遇到一起,互相欣赏,可以畅谈两个小时。

志不同,道相合,也可以成为朋友。譬如有时候看到两个一个成绩很好每次考试争做第一,一个成绩很差的同学是好朋友。他们志向不相同,但他

- 【Spark七十九】Spark RDD API一

bit1129

spark

aggregate

package spark.examples.rddapi

import org.apache.spark.{SparkConf, SparkContext}

//测试RDD的aggregate方法

object AggregateTest {

def main(args: Array[String]) {

val conf = new Spar

- ktap 0.1 released

bookjovi

kerneltracing

Dear,

I'm pleased to announce that ktap release v0.1, this is the first official

release of ktap project, it is expected that this release is not fully

functional or very stable and we welcome bu

- 能保存Properties文件注释的Properties工具类

BrokenDreams

properties

今天遇到一个小需求:由于java.util.Properties读取属性文件时会忽略注释,当写回去的时候,注释都没了。恰好一个项目中的配置文件会在部署后被某个Java程序修改一下,但修改了之后注释全没了,可能会给以后的参数调整带来困难。所以要解决这个问题。

&nb

- 读《研磨设计模式》-代码笔记-外观模式-Facade

bylijinnan

java设计模式

声明: 本文只为方便我个人查阅和理解,详细的分析以及源代码请移步 原作者的博客http://chjavach.iteye.com/

/*

* 百度百科的定义:

* Facade(外观)模式为子系统中的各类(或结构与方法)提供一个简明一致的界面,

* 隐藏子系统的复杂性,使子系统更加容易使用。他是为子系统中的一组接口所提供的一个一致的界面

*

* 可简单地

- After Effects教程收集

cherishLC

After Effects

1、中文入门

http://study.163.com/course/courseMain.htm?courseId=730009

2、videocopilot英文入门教程(中文字幕)

http://www.youku.com/playlist_show/id_17893193.html

英文原址:

http://www.videocopilot.net/basic/

素

- Linux Apache 安装过程

crabdave

apache

Linux Apache 安装过程

下载新版本:

apr-1.4.2.tar.gz(下载网站:http://apr.apache.org/download.cgi)

apr-util-1.3.9.tar.gz(下载网站:http://apr.apache.org/download.cgi)

httpd-2.2.15.tar.gz(下载网站:http://httpd.apac

- Shell学习 之 变量赋值和引用

daizj

shell变量引用赋值

本文转自:http://www.cnblogs.com/papam/articles/1548679.html

Shell编程中,使用变量无需事先声明,同时变量名的命名须遵循如下规则:

首个字符必须为字母(a-z,A-Z)

中间不能有空格,可以使用下划线(_)

不能使用标点符号

不能使用bash里的关键字(可用help命令查看保留关键字)

需要给变量赋值时,可以这么写:

- Java SE 第一讲(Java SE入门、JDK的下载与安装、第一个Java程序、Java程序的编译与执行)

dcj3sjt126com

javajdk

Java SE 第一讲:

Java SE:Java Standard Edition

Java ME: Java Mobile Edition

Java EE:Java Enterprise Edition

Java是由Sun公司推出的(今年初被Oracle公司收购)。

收购价格:74亿美金

J2SE、J2ME、J2EE

JDK:Java Development

- YII给用户登录加上验证码

dcj3sjt126com

yii

1、在SiteController中添加如下代码:

/**

* Declares class-based actions.

*/

public function actions() {

return array(

// captcha action renders the CAPTCHA image displ

- Lucene使用说明

dyy_gusi

Lucenesearch分词器

Lucene使用说明

1、lucene简介

1.1、什么是lucene

Lucene是一个全文搜索框架,而不是应用产品。因此它并不像baidu或者googleDesktop那种拿来就能用,它只是提供了一种工具让你能实现这些产品和功能。

1.2、lucene能做什么

要回答这个问题,先要了解lucene的本质。实际

- 学习编程并不难,做到以下几点即可!

gcq511120594

数据结构编程算法

不论你是想自己设计游戏,还是开发iPhone或安卓手机上的应用,还是仅仅为了娱乐,学习编程语言都是一条必经之路。编程语言种类繁多,用途各 异,然而一旦掌握其中之一,其他的也就迎刃而解。作为初学者,你可能要先从Java或HTML开始学,一旦掌握了一门编程语言,你就发挥无穷的想象,开发 各种神奇的软件啦。

1、确定目标

学习编程语言既充满乐趣,又充满挑战。有些花费多年时间学习一门编程语言的大学生到

- Java面试十问之三:Java与C++内存回收机制的差别

HNUlanwei

javaC++finalize()堆栈内存回收

大家知道, Java 除了那 8 种基本类型以外,其他都是对象类型(又称为引用类型)的数据。 JVM 会把程序创建的对象存放在堆空间中,那什么又是堆空间呢?其实,堆( Heap)是一个运行时的数据存储区,从它可以分配大小各异的空间。一般,运行时的数据存储区有堆( Heap)和堆栈( Stack),所以要先看它们里面可以分配哪些类型的对象实体,然后才知道如何均衡使用这两种存储区。一般来说,栈中存放的

- 第二章 Nginx+Lua开发入门

jinnianshilongnian

nginxlua

Nginx入门

本文目的是学习Nginx+Lua开发,对于Nginx基本知识可以参考如下文章:

nginx启动、关闭、重启

http://www.cnblogs.com/derekchen/archive/2011/02/17/1957209.html

agentzh 的 Nginx 教程

http://openresty.org/download/agentzh-nginx-tutor

- MongoDB windows安装 基本命令

liyonghui160com

windows安装

安装目录:

D:\MongoDB\

新建目录

D:\MongoDB\data\db

4.启动进城:

cd D:\MongoDB\bin

mongod -dbpath D:\MongoDB\data\db

&n

- Linux下通过源码编译安装程序

pda158

linux

一、程序的组成部分 Linux下程序大都是由以下几部分组成: 二进制文件:也就是可以运行的程序文件 库文件:就是通常我们见到的lib目录下的文件 配置文件:这个不必多说,都知道 帮助文档:通常是我们在linux下用man命令查看的命令的文档

二、linux下程序的存放目录 linux程序的存放目录大致有三个地方: /etc, /b

- WEB开发编程的职业生涯4个阶段

shw3588

编程Web工作生活

觉得自己什么都会

2007年从学校毕业,凭借自己原创的ASP毕业设计,以为自己很厉害似的,信心满满去东莞找工作,找面试成功率确实很高,只是工资不高,但依旧无法磨灭那过分的自信,那时候什么考勤系统、什么OA系统、什么ERP,什么都觉得有信心,这样的生涯大概持续了约一年。

根本不是自己想的那样

2008年开始接触很多工作相关的东西,发现太多东西自己根本不会,都需要去学,不管是asp还是js,

- 遭遇jsonp同域下变作post请求的坑

vb2005xu

jsonp同域post

今天迁移一个站点时遇到一个坑爹问题,同一个jsonp接口在跨域时都能调用成功,但是在同域下调用虽然成功,但是数据却有问题. 此处贴出我的后端代码片段

$mi_id = htmlspecialchars(trim($_GET['mi_id ']));

$mi_cv = htmlspecialchars(trim($_GET['mi_cv ']));

贴出我前端代码片段:

$.aj