[Roundup] Fixing errors when NLTK resource packages cannot be downloaded

LookupError:

**********************************************************************

Resource xxx not found.

Please use the NLTK Downloader to obtain the resource:

>>> import nltk

>>> nltk.download('xxx')

For various reasons, the NLTK data sometimes cannot be downloaded. In that case, you can add the required resources by hand.

0. Corpus data

I collected four resources: punkt, omw-1.4, stopwords, and wordnet.

Download link
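Before copying anything, it can help to see which of the four resources are already in place. The sketch below is a rough stand-in, not NLTK's own lookup (`nltk.data.find` is the authoritative check). It only tests whether an unpacked folder exists at the location named in the "Attempted to load" lines of the error messages in this post. The names `RESOURCE_LOCATIONS` and `find_missing` are made up for this illustration.

```python
from pathlib import Path

# Resource name -> location relative to an nltk_data directory,
# taken from the "Attempted to load" lines in the error messages.
RESOURCE_LOCATIONS = {
    "punkt": "tokenizers/punkt",
    "wordnet": "corpora/wordnet",
    "omw-1.4": "corpora/omw-1.4",
    "stopwords": "corpora/stopwords",
}


def find_missing(search_dirs, locations=RESOURCE_LOCATIONS):
    """Return the names of resources with no unpacked folder in any search dir."""
    missing = []
    for name, rel in locations.items():
        found = any(
            (Path(root) / rel).is_dir()
            for root in search_dirs
            if root  # skip the empty '' entry seen in the "Searched in" list
        )
        if not found:
            missing.append(name)
    return missing
```

For example, `find_missing(["/home/username/miniconda3/envs/tf/nltk_data"])` checks the directory used in this post. If NLTK is installed, passing `nltk.data.path` checks every directory NLTK itself searches.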

1. word_tokenize

1.1 Code that raises the error

from nltk.tokenize import word_tokenize

a = "hello, world!"

print(word_tokenize(a))

1.2 Error message

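The punkt resource is missing. My environment is a miniconda environment named tf on Ubuntu, and my username is username. I chose the second of the search paths listed below; adjust this to your own setup.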

LookupError:

**********************************************************************

Resource punkt not found.

Please use the NLTK Downloader to obtain the resource:

>>> import nltk

>>> nltk.download('punkt')

For more information see: https://www.nltk.org/data.html

Attempted to load tokenizers/punkt/PY3/english.pickle

Searched in:

- '/home/username/nltk_data'

- '/home/username/miniconda3/envs/tf/nltk_data'

- '/home/username/miniconda3/envs/tf/share/nltk_data'

- '/home/username/miniconda3/envs/tf/lib/nltk_data'

- '/usr/share/nltk_data'

- '/usr/local/share/nltk_data'

- '/usr/lib/nltk_data'

- '/usr/local/lib/nltk_data'

- ''

**********************************************************************

1.3 Solution

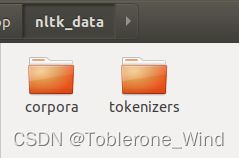

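In the environment you are using (tf in my case), create an nltk_data folder, create a tokenizers folder inside it, and put the punkt folder there. The final path is as follows (I chose the second of the search paths; adjust this to your own setup):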

/home/username/miniconda3/envs/tf/nltk_data/tokenizers/punkt

2. lemmatize

2.1 Code that raises the error

from nltk.stem.wordnet import WordNetLemmatizer

stem_wordnet = WordNetLemmatizer()

print(stem_wordnet.lemmatize("goes"))

2.2 Error message

The wordnet resource is missing. My environment is a miniconda environment named tf on Ubuntu, and my username is username.

LookupError:

**********************************************************************

Resource wordnet not found.

Please use the NLTK Downloader to obtain the resource:

>>> import nltk

>>> nltk.download('wordnet')

For more information see: https://www.nltk.org/data.html

Attempted to load corpora/wordnet

Searched in:

- '/home/username/nltk_data'

- '/home/username/miniconda3/envs/tf/nltk_data'

- '/home/username/miniconda3/envs/tf/share/nltk_data'

- '/home/username/miniconda3/envs/tf/lib/nltk_data'

- '/usr/share/nltk_data'

- '/usr/local/share/nltk_data'

- '/usr/lib/nltk_data'

- '/usr/local/lib/nltk_data'

**********************************************************************

The omw-1.4 resource is missing. My environment is a miniconda environment named tf on Ubuntu, and my username is username.

LookupError:

**********************************************************************

Resource omw-1.4 not found.

Please use the NLTK Downloader to obtain the resource:

>>> import nltk

>>> nltk.download('omw-1.4')

For more information see: https://www.nltk.org/data.html

Attempted to load corpora/omw-1.4

Searched in:

- '/home/username/nltk_data'

- '/home/username/miniconda3/envs/tf/nltk_data'

- '/home/username/miniconda3/envs/tf/share/nltk_data'

- '/home/username/miniconda3/envs/tf/lib/nltk_data'

- '/usr/share/nltk_data'

- '/usr/local/share/nltk_data'

- '/usr/lib/nltk_data'

- '/usr/local/lib/nltk_data'

**********************************************************************

2.3 Solution

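In the environment you are using (tf in my case), create an nltk_data folder, create a corpora folder inside it, and put the wordnet and omw-1.4 folders there. The final paths are as follows (I chose the second of the search paths; adjust this to your own setup):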

/home/username/miniconda3/envs/tf/nltk_data/corpora/wordnet

/home/username/miniconda3/envs/tf/nltk_data/corpora/omw-1.4

3. stopwords

3.1 Code that raises the error

from nltk.corpus import stopwords

stop_words = set(stopwords.words('english'))

3.2 Error message

The stopwords resource is missing. My environment is a miniconda environment named tf on Ubuntu, and my username is username.

LookupError:

**********************************************************************

Resource stopwords not found.

Please use the NLTK Downloader to obtain the resource:

>>> import nltk

>>> nltk.download('stopwords')

For more information see: https://www.nltk.org/data.html

Attempted to load corpora/stopwords

Searched in:

- '/home/username/nltk_data'

- '/home/username/miniconda3/envs/tf/nltk_data'

- '/home/username/miniconda3/envs/tf/share/nltk_data'

- '/home/username/miniconda3/envs/tf/lib/nltk_data'

- '/usr/share/nltk_data'

- '/usr/local/share/nltk_data'

- '/usr/lib/nltk_data'

- '/usr/local/lib/nltk_data'

**********************************************************************

3.3 Solution

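In the environment you are using (tf in my case), create an nltk_data folder, create a corpora folder inside it, and put the stopwords folder there. The final path is as follows (I chose the second of the search paths; adjust this to your own setup):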

/home/username/miniconda3/envs/tf/nltk_data/corpora/stopwords
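The three manual steps above follow the same pattern: create nltk_data, create the category folder (tokenizers or corpora), and drop the resource folder into it. As a minimal sketch, assuming each resource has already been downloaded and unpacked into a folder named after it, the copy could be scripted with the standard library alone. The helper name `install_resource` and the `CATEGORY` table are hypothetical and are not part of NLTK.

```python
import shutil
from pathlib import Path

# Resource folder name -> category folder under nltk_data,
# following the final paths given in sections 1.3, 2.3 and 3.3.
CATEGORY = {
    "punkt": "tokenizers",
    "wordnet": "corpora",
    "omw-1.4": "corpora",
    "stopwords": "corpora",
}


def install_resource(src_dir, nltk_data_dir):
    """Copy an unpacked resource folder to nltk_data/<category>/<name>."""
    src = Path(src_dir)
    name = src.name
    if name not in CATEGORY:
        raise ValueError(f"unknown resource folder: {name}")
    if not src.is_dir():
        raise FileNotFoundError(f"not a folder: {src}")
    dest = Path(nltk_data_dir) / CATEGORY[name] / name
    # Create nltk_data and the category folder if they do not exist yet.
    dest.parent.mkdir(parents=True, exist_ok=True)
    # dirs_exist_ok (Python 3.8+) allows the copy to be re-run safely.
    shutil.copytree(src, dest, dirs_exist_ok=True)
    return dest
```

A call might look like `install_resource("/home/username/Downloads/punkt", "/home/username/miniconda3/envs/tf/nltk_data")`, where the Downloads location is only an assumed example. If you would rather keep the data somewhere outside the listed search paths, appending that directory to `nltk.data.path` before loading a resource should also let NLTK find it.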