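Porting a seq2seq implementation from TensorFlow to PyTorch

I am practicing rewriting TensorFlow code in PyTorch.

This post ports an earlier TensorFlow seq2seq implementation to PyTorch.

The task is as follows:

Translate a Chinese-format date (yy-mm-dd) into an English-format date (dd/Mon/yyyy). The input carries only a two-digit year, so it does not say whether the year is, for example, 1980 or 2080; the model has to work that out by itself.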
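To make the two formats concrete, here is a small sketch that uses the same `strftime` patterns as the TensorFlow data generator in this post (`%y-%m-%d` and `%d/%b/%Y`). The helper name `make_pair` is hypothetical, and `%b` is assumed to yield English month abbreviations, which holds under the default C/English locale.

```python
import datetime

def make_pair(day):
    """Return (Chinese-style, English-style) strings for one date."""
    cn = day.strftime("%y-%m-%d")   # two-digit year
    en = day.strftime("%d/%b/%Y")   # four-digit year, abbreviated month name
    return cn, en

cn, en = make_pair(datetime.date(2031, 4, 26))
print(cn, "->", en)

# The century is lost in the input: 1980 and 2080 produce the same string.
print(make_pair(datetime.date(1980, 1, 1))[0],
      make_pair(datetime.date(2080, 1, 1))[0])
```

This is why the model has to learn which century is plausible from the training data rather than read it from the input.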

The TensorFlow code already exists.

Full TensorFlow code

# -*- coding: utf-8 -*-

"""

Created on Fri May 21 10:25:14 2021

@author: zhongxi

"""

import tensorflow as tf

from tensorflow import keras

import numpy as np

import tensorflow_addons as tfa

import datetime

PAD_ID = 0

class DateData:
    def __init__(self, n):  # n: number of samples to generate
        np.random.seed(1)
        self.date_cn = []
        self.date_en = []
        for timestamp in np.random.randint(143835585, 2043835585, n):
            # build a Chinese and an English date string from a random day
            # between 1974-07-24 and 2034-10-07
            date = datetime.datetime.fromtimestamp(timestamp)
            self.date_cn.append(date.strftime("%y-%m-%d"))
            self.date_en.append(date.strftime("%d/%b/%Y"))
        # map every digit, month abbreviation and special symbol to an index
        self.vocab = set(
            [str(i) for i in range(0, 10)] + ["-", "/", "<GO>", "<EOS>"] + [
                i.split("/")[1] for i in self.date_en])
        self.v2i = {v: i for i, v in enumerate(sorted(list(self.vocab)), start=1)}
        # add the "<PAD>" token
        self.v2i["<PAD>"] = PAD_ID
        self.vocab.add("<PAD>")
        self.i2v = {i: v for v, i in self.v2i.items()}
        self.x, self.y = [], []
        for cn, en in zip(self.date_cn, self.date_en):
            self.x.append([self.v2i[v] for v in cn])
            self.y.append(
                [self.v2i["<GO>"], ] + [self.v2i[v] for v in en[:3]] + [
                    self.v2i[en[3:6]], ] + [self.v2i[v] for v in en[6:]] + [
                    self.v2i["<EOS>"], ])
        # cn and en are now index sequences; the model trains on indices, not characters
        self.x, self.y = np.array(self.x), np.array(self.y)
        self.start_token = self.v2i["<GO>"]   # start symbol
        self.end_token = self.v2i["<EOS>"]    # end symbol

    def sample(self, n=64):
        bi = np.random.randint(0, len(self.x), size=n)  # random indices for one batch
        bx, by = self.x[bi], self.y[bi]
        decoder_len = np.full((len(bx),), by.shape[1] - 1, dtype=np.int32)
        return bx, by, decoder_len

    def idx2str(self, idx):
        x = []
        for i in idx:
            x.append(self.i2v[i])
            if i == self.end_token:
                break
        return "".join(x)

    @property
    def num_word(self):
        return len(self.vocab)

class Seq2SeqTranslation(keras.Model):
    def __init__(self, enc_v_dim, dec_v_dim, emb_dim, units, max_pred_len, start_token, end_token):
        super().__init__()
        self.units = units

        # encoder
        self.enc_embeddings = keras.layers.Embedding(
            input_dim=enc_v_dim, output_dim=emb_dim,  # [enc_n_vocab, emb_dim]
            embeddings_initializer=tf.initializers.RandomNormal(0., 0.1),
        )
        # convolution layers: three kernel heights, from 2 to 4
        self.conv2ds = [
            keras.layers.Conv2D(16, (n, emb_dim), padding="valid", activation=keras.activations.relu)
            for n in range(2, 5)]
        # pooling layers
        self.max_pools = [keras.layers.MaxPool2D((n, 1)) for n in [7, 6, 5]]
        # fully connected layer with ReLU activation
        self.encoder = keras.layers.Dense(units, activation=keras.activations.relu)

        # the decoder is unchanged
        # embedding layer with an explicit initializer
        self.dec_embeddings = keras.layers.Embedding(
            input_dim=dec_v_dim, output_dim=emb_dim,  # [dec_n_vocab, emb_dim]
            embeddings_initializer=tf.initializers.RandomNormal(0., 0.1),
        )
        # LSTM cell
        self.decoder_cell = keras.layers.LSTMCell(units=units)
        # fully connected output layer
        decoder_dense = keras.layers.Dense(dec_v_dim)

        # train decoder
        # BasicDecoder receives an LSTM cell and an output layer. At each step it computes
        # the current output from the previous cell state and the current input, then
        # passes it through output_layer to build the output sequence.
        self.decoder_train = tfa.seq2seq.BasicDecoder(
            cell=self.decoder_cell,
            sampler=tfa.seq2seq.sampler.TrainingSampler(),  # sampler for train
            output_layer=decoder_dense
        )
        # predict decoder
        self.decoder_eval = tfa.seq2seq.BasicDecoder(
            cell=self.decoder_cell,
            sampler=tfa.seq2seq.sampler.GreedyEmbeddingSampler(),  # sampler for predict
            output_layer=decoder_dense
        )

        # loss
        self.cross_entropy = keras.losses.SparseCategoricalCrossentropy(from_logits=True)
        # optimizer
        self.opt = keras.optimizers.Adam(0.01)
        self.max_pred_len = max_pred_len
        self.start_token = start_token
        self.end_token = end_token

    def encode(self, x):
        embedded = self.enc_embeddings(x)  # [n, step, emb]
        o = tf.expand_dims(embedded, axis=3)  # [n, step=8, emb=16, 1]
        co = [conv2d(o) for conv2d in self.conv2ds]  # [n, 7, 1, 16], [n, 6, 1, 16], [n, 5, 1, 16]
        co = [self.max_pools[i](co[i]) for i in range(len(co))]  # [n, 1, 1, 16] * 3
        co = [tf.squeeze(c, axis=[1, 2]) for c in co]  # [n, 16] * 3
        o = tf.concat(co, axis=1)  # [n, 16*3]
        h = self.encoder(o)  # [n, units]
        # An RNN encoder yields two state vectors [h, c] per sentence; the CNN encoder
        # yields only one, so h is returned twice to stay compatible with the LSTM decoder.
        return [h, h]

    def inference(self, x):
        s = self.encode(x)
        done, i, s = self.decoder_eval.initialize(
            self.dec_embeddings.variables[0],
            start_tokens=tf.fill([x.shape[0], ], self.start_token),
            end_token=self.end_token,
            initial_state=s,
        )
        pred_id = np.zeros((x.shape[0], self.max_pred_len), dtype=np.int32)
        for l in range(self.max_pred_len):
            o, s, i, done = self.decoder_eval.step(
                time=l, inputs=i, state=s, training=False)
            pred_id[:, l] = o.sample_id
        return pred_id

    def train_logits(self, x, y, seq_len):
        s = self.encode(x)
        dec_in = y[:, :-1]  # ignore <EOS>

Before porting to PyTorch you need to understand how seq2seq works. There are plenty of explanations online, so I will not repeat them here.

import numpy as np

import torch

import torch.nn as nn

import torch.optim as optim

import random

PAD_ID = 0  # index of the "<PAD>" token

class DateData:
    def __init__(self, date_cn, date_en):
        self.vocab = set(
            [str(i) for i in range(0, 10)] + ["-", "/", "<GO>", "<EOS>"] + [
                i.split("/")[1] for i in date_en])
        self.v2i = {v: i for i, v in enumerate(sorted(list(self.vocab)), start=1)}  # indices start from 1
        self.v2i["<PAD>"] = PAD_ID  # index 0 is reserved for padding
        self.vocab.add("<PAD>")
        self.i2v = {i: v for v, i in self.v2i.items()}
        # i2v and v2i allow lookups in both directions
        self.x, self.y = [], []
        for cn, en in zip(date_cn, date_en):
            self.x.append([self.v2i[v] for v in cn])
            self.y.append(
                [self.v2i["<GO>"], ] + [self.v2i[v] for v in en[:3]] + [
                    self.v2i[en[3:6]], ] + [self.v2i[v] for v in en[6:]] + [
                    self.v2i["<EOS>"], ])
        self.x, self.y = np.array(self.x), np.array(self.y)
        self.start_token = self.v2i["<GO>"]
        self.end_token = self.v2i["<EOS>"]

    def sample(self, n=64):
        bi = np.random.randint(0, len(self.x), size=n)
        bx, by = self.x[bi], self.y[bi]
        decoder_len = np.full((len(bx),), by.shape[1] - 1, dtype=np.int32)
        return bx, by, decoder_len

    def idx2str(self, idx):
        x = []
        for i in idx:
            x.append(self.i2v[i])
            if i == self.end_token:
                break
        return "".join(x)

    @property
    def num_word(self):
        return len(self.vocab)

The data-preparation stage barely changes:

with open(r"data.txt", 'r') as f:
    date = f.readlines()
date = [i.strip().split(' ') for i in date]
# print(date)
date_cn = [i[0] for i in date]
date_en = [i[1] for i in date]
date = DateData(date_cn=date_cn, date_en=date_en)
print("Chinese time order: yy/mm/dd ", date_cn[:3], "\nEnglish time order: dd/M/yyyy ", date_en[:3])
print("vocabularies: ", date.vocab)
print("v2i:\n", date.v2i)
print("x index sample: \n{}\n{}".format(date.idx2str(date.x[0]), date.x[0]),
      "\ny index sample: \n{}\n{}".format(date.idx2str(date.y[0]), date.y[0]))

Printing the data confirms that it looks right:

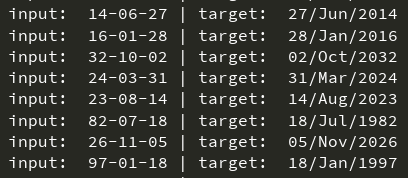

Chinese time order: yy/mm/dd ['31-04-26', '04-07-18', '33-06-06']

English time order: dd/M/yyyy ['26/Apr/2031', '18/Jul/2004', '06/Jun/2033']

vocabularies: {'Feb', 'Nov', '<PAD>', 'Jul', '8', 'Dec', 'Jan', 'Jun', 'Mar', '/', '<GO>', 'May', '0', '4', 'Oct', '2', '<EOS>', '7', '5', '6', 'Sep', '-', '9', 'Aug', '3', 'Apr', '1'}

v2i:

{'-': 1, '/': 2, '0': 3, '1': 4, '2': 5, '3': 6, '4': 7, '5': 8, '6': 9, '7': 10, '8': 11, '9': 12, '<EOS>': 13, '<GO>': 14, 'Apr': 15, 'Aug': 16, 'Dec': 17, 'Feb': 18, 'Jan': 19, 'Jul': 20, 'Jun': 21, 'Mar': 22, 'May': 23, 'Nov': 24, 'Oct': 25, 'Sep': 26, '<PAD>': 0}

x index sample:

31-04-26

[6 4 1 3 7 1 5 9]

y index sample:

<GO>26/Apr/2031<EOS>

[14 5 9 2 15 2 5 3 6 4 13]
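The index assignment in the v2i table follows directly from `sorted()`, which orders strings by code point. The sketch below rebuilds the table without any data file. It assumes the special tokens are spelled `<GO>`, `<EOS>` and `<PAD>`, and that all twelve month abbreviations occur in the data; both are assumptions for illustration.

```python
months = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]
vocab = set([str(i) for i in range(10)] + ["-", "/", "<GO>", "<EOS>"] + months)

# sorted() compares code points: "-" and "/" come before the digits,
# "<" comes after "9" and before the upper-case letters.
v2i = {v: i for i, v in enumerate(sorted(vocab), start=1)}
v2i["<PAD>"] = 0  # index 0 is reserved for padding

print(v2i["-"], v2i["/"], v2i["0"], v2i["9"])   # 1 2 3 12
print(v2i["<EOS>"], v2i["<GO>"], v2i["Apr"])    # 13 14 15
print(len(v2i))                                 # 27
```

With this ordering the y sample starts with 14 (the start token) and ends with 13 (the end token), as in the printed output.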

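Define the encoder, the decoder, and the seq2seq model that ties them together

Encoder

The encoder takes the index of each token in the input sequence and returns the final hidden state and cell state of its LSTM: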

class Encoder(nn.Module):
    # A custom network must subclass nn.Module and implement both __init__ and forward.
    def __init__(self, input_dim, emb_dim, hid_dim, n_layers, dropout):
        super().__init__()
        self.input_dim = input_dim
        self.emb_dim = emb_dim
        self.hid_dim = hid_dim
        self.n_layers = n_layers
        self.embedding = nn.Embedding(input_dim, emb_dim)  # 27 --> 16
        # nn.LSTM applies its dropout argument only between stacked layers,
        # so it has no effect when n_layers == 1
        self.rnn = nn.LSTM(emb_dim, hid_dim, n_layers, dropout=dropout)  # 16 --> 32
        self.dropout = nn.Dropout(dropout)  # applied to the embeddings; the shape is unchanged

    def forward(self, src):
        # src: (sent_len, batch_size)
        embedded = self.dropout(self.embedding(src))
        # embedded: (sent_len, batch_size, emb_dim)
        outputs, (hidden, cell) = self.rnn(embedded)
        # outputs: (sent_len, batch_size, hid_dim)
        # hidden: (n_layers, batch_size, hid_dim)
        # cell: (n_layers, batch_size, hid_dim)
        return hidden, cell

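Do not forget to inherit from nn.Module. I left it out at first, and print(model) then showed nothing when I tried to inspect the model structure.

Decoder

The decoder starts from the hidden state and cell state produced by the encoder. At each step it takes the previous token together with the current states and predicts the next token.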

class Decoder(nn.Module):
    def __init__(self, output_dim, emb_dim, hid_dim, n_layers, dropout):
        super().__init__()
        self.emb_dim = emb_dim
        self.hid_dim = hid_dim
        self.output_dim = output_dim
        self.n_layers = n_layers
        self.embedding = nn.Embedding(output_dim, emb_dim)  # 27 --> 16
        self.rnn = nn.LSTM(emb_dim, hid_dim, n_layers, dropout=dropout)  # 16 --> 32
        self.out = nn.Linear(hid_dim, output_dim)  # 32 --> 27
        self.dropout = nn.Dropout(dropout)

    def forward(self, input, hidden, cell):
        # input: (batch_size) -> (1, batch_size)
        input = input.unsqueeze(0)
        # embedded: (1, batch_size, emb_dim)
        embedded = self.dropout(self.embedding(input))
        # hidden: (n_layers, batch_size, hid_dim)
        # cell: (n_layers, batch_size, hid_dim)
        # output: (1, batch_size, hid_dim)
        output, (hidden, cell) = self.rnn(embedded, (hidden, cell))
        # prediction: (batch_size, output_dim)
        prediction = self.out(output.squeeze(0))
        return prediction, hidden, cell

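Why is the target not part of the decoder itself? Because with teacher forcing the target is fed to the decoder only with a certain probability, and that choice is made in the loop that calls the decoder.

The teacher-forcing loop looks like this:

for t in range(1, max_len):
    # the hidden and cell passed in differ from the ones returned
    output, hidden, cell = self.decoder(input, hidden, cell)  # hidden, cell: torch.Size([1, 32, 32]) each
    # print(hidden.shape, cell.shape)
    # output: (32, 27), one row of vocabulary logits per batch element at each step
    outputs[t] = output
    # True with probability teacher_forcing_ratio
    teacher_force = random.random() < teacher_forcing_ratio
    # With the default ratio of 0.5, the next input is the previous prediction half of
    # the time and the true previous token the other half.
    # The decoder normally feeds on its own previous prediction. Early in training those
    # predictions are mostly wrong, so later steps would compound the errors.
    # Teacher forcing is introduced to speed up training.
    top1 = output.max(1)[1]
    input = (trg[t] if teacher_force else top1)
    # print(top1)

Here the ground-truth token is used as the next decoder input only with a certain probability. With teacher_forcing_ratio=0.5, each step has a 50% chance of feeding the model's own prediction and a 50% chance of feeding the true previous token. The reason is that early in training the predictions are mostly wrong, so feeding them back compounds the errors. You could also always feed the ground truth instead of the previous prediction. I guess that trains faster, but the model may then cope less well with its own mistakes when it has to decode on its own (this is only my guess). To always use the ground truth, set teacher_forcing_ratio=1. teacher_forcing_ratio=0 means every input is the model's own prediction, which is what the test phase uses.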
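The effect of the ratio can be seen without any model. The sketch below uses plain Python lists in place of tensors; the function name `next_inputs` and the token ids are hypothetical. Unlike the model's loop, which draws one random number per time step for the whole batch, this sketch works on a single sequence.

```python
import random

def next_inputs(targets, predictions, teacher_forcing_ratio, rng):
    """Choose each step's next decoder input: the ground truth with
    probability teacher_forcing_ratio, otherwise the model's prediction."""
    chosen = []
    for truth, pred in zip(targets, predictions):
        use_truth = rng.random() < teacher_forcing_ratio
        chosen.append(truth if use_truth else pred)
    return chosen

targets = [5, 9, 2, 15]        # hypothetical ground-truth token ids
predictions = [5, 3, 2, 20]    # hypothetical model outputs
rng = random.Random(0)

print(next_inputs(targets, predictions, 1.0, rng))  # always the targets
print(next_inputs(targets, predictions, 0.0, rng))  # always the predictions
print(next_inputs(targets, predictions, 0.5, rng))  # a mix of both
```

Because `random()` returns a value in [0, 1), a ratio of 1 always picks the target and a ratio of 0 never does.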

The seq2seq model that ties the two together

class Seq2Seq(nn.Module):
    def __init__(self, encoder, decoder, device):
        super().__init__()
        self.encoder = encoder
        self.decoder = decoder
        self.device = device
        assert encoder.hid_dim == decoder.hid_dim, \
            "Hidden dimensions of encoder and decoder must be equal!"
        assert encoder.n_layers == decoder.n_layers, \
            "Encoder and decoder must have equal number of layers!"

    def forward(self, src, trg, teacher_forcing_ratio=0.5):
        # src: (sent_len, batch_size)
        # trg: (sent_len, batch_size)
        batch_size = trg.shape[1]
        max_len = trg.shape[0]
        trg_vocab_size = self.decoder.output_dim
        # tensor that collects the decoder outputs
        outputs = torch.zeros(max_len, batch_size, trg_vocab_size).to(self.device)
        hidden, cell = self.encoder(src)  # torch.Size([1, 32, 32]) each: (n_layers, batch_size, hid_dim)
        # print(hidden.shape, cell.shape)
        # the first decoder input is the start-of-sequence token <GO>
        input = trg[0, :]
        for t in range(1, max_len):
            # the hidden and cell passed in differ from the ones returned
            output, hidden, cell = self.decoder(input, hidden, cell)
            # output: (32, 27), one row of vocabulary logits per batch element
            outputs[t] = output
            # True with probability teacher_forcing_ratio: feed the true token,
            # otherwise feed the model's own prediction
            teacher_force = random.random() < teacher_forcing_ratio
            top1 = output.max(1)[1]
            input = (trg[t] if teacher_force else top1)
            # print(top1)
        # outputs: (11, 32, 27); outputs[0] is never written and stays all zeros
        return outputs

Next, define the hyperparameters and the model

"""
Hyperparameters
"""
INPUT_DIM = len(date.vocab)  # 27
OUTPUT_DIM = len(date.vocab)  # 27
ENC_EMB_DIM = 16
DEC_EMB_DIM = 16
HID_DIM = 32
N_LAYERS = 1
ENC_DROPOUT = 0.5
DEC_DROPOUT = 0.5
BATCH_SIZE = 32
device = "cpu"
"""
Each token enters as an integer index (equivalent to a 27-dim one-hot vector),
the embedding turns it into a 16-dim vector, and the LSTM encodes that into a 32-dim state.
"""
enc = Encoder(INPUT_DIM, ENC_EMB_DIM, HID_DIM, N_LAYERS, ENC_DROPOUT)
dec = Decoder(OUTPUT_DIM, DEC_EMB_DIM, HID_DIM, N_LAYERS, DEC_DROPOUT)
# .to() moves the model to the chosen device (CPU or GPU)
model = Seq2Seq(enc, dec, device).to(device)
print(model)

# count the trainable parameters
def count_parameters(model):
    return sum(p.numel() for p in model.parameters() if p.requires_grad)

print(f'The model has {count_parameters(model):,} trainable parameters')

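The encoder looks up a 16-dimensional embedding for each input token (equivalent to multiplying a 27-dimensional one-hot vector by the embedding matrix) and feeds those vectors into the LSTM, which produces 32-dimensional states. The dropout rate is 0.5.

The decoder works the same way, with an extra linear layer that maps the 32-dimensional LSTM output back to 27 vocabulary scores.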
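The dimensions above are enough to work out the parameter count by hand. The sketch below assumes a vocabulary of 27, single-layer LSTMs, and PyTorch's `nn.LSTM` layout (four gates, each with input weights, recurrent weights and two bias vectors); `lstm_params` is a hypothetical helper. The total should agree with what `count_parameters(model)` prints.

```python
def lstm_params(input_size, hidden_size):
    # four gates, each with an input weight matrix, a recurrent weight
    # matrix and two bias vectors (bias_ih and bias_hh)
    return 4 * hidden_size * (input_size + hidden_size) + 2 * 4 * hidden_size

VOCAB, EMB, HID = 27, 16, 32
embedding = VOCAB * EMB         # 432
lstm = lstm_params(EMB, HID)    # 6400
linear = HID * VOCAB + VOCAB    # 891, the decoder's output layer

encoder_total = embedding + lstm
decoder_total = embedding + lstm + linear
print(encoder_total, decoder_total, encoder_total + decoder_total)  # 6832 7723 14555
```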

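Define the loss and the optimizer

# optimizer
optimizer = optim.Adam(model.parameters())
# loss
# Note: CrossEntropyLoss takes the raw logits (27 scores per token, no softmax applied)
# and the target class indices
criterion = nn.CrossEntropyLoss(ignore_index=PAD_ID)  # sequences in a batch are padded to equal length with PAD (index 0), which the loss ignores

Unlike a loss that compares class labels with class labels, this cross-entropy compares the target class index with a score vector over all 27 classes. nn.CrossEntropyLoss applies log-softmax internally, so it must be given the unnormalized logits rather than softmax probabilities.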
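To show what the loss computes from logits and class indices, here is a pure-Python sketch of the same calculation: log-softmax of each row, the negative log-probability of the target class, and a mean over the positions that are not padding. It illustrates the formula and is not PyTorch's implementation; the logits are made-up numbers.

```python
import math

def cross_entropy(logits, targets, ignore_index=0):
    """Mean negative log-likelihood over the non-ignored targets."""
    total, count = 0.0, 0
    for row, target in zip(logits, targets):
        if target == ignore_index:
            continue  # padding positions do not contribute
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[target]  # equals -log softmax(row)[target]
        count += 1
    return total / count

logits = [[2.0, 0.5, 0.1], [0.2, 0.2, 3.0], [1.0, 1.0, 1.0]]
targets = [1, 2, 0]  # the last position is padding and is skipped
print(round(cross_entropy(logits, targets), 4))
```

With uniform logits over three classes, the loss for any non-padding target is log(3), a handy sanity check for an untrained model.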

Training

loss_record = []
for epoch in range(7000):
    # model.train(): switch to training mode, which enables Dropout
    # (and batch normalization, which this model does not use)
    model.train()
    epoch_loss = 0
    bx, by, decoder_len = date.sample(BATCH_SIZE)
    # one batch holds 32 samples: bx are Chinese-date index sequences, by are English-date index sequences
    # print(bx.shape)  # (32, 8)
    # print(by.shape)  # (32, 11)
    # print(decoder_len)  # output length - 1: [10, 10, 10, ...], 32 tens
    bx_tensor = torch.LongTensor(bx).to(device).t()  # torch.Size([8, 32])
    by_tensor = torch.LongTensor(by).to(device).t()  # torch.Size([11, 32])
    optimizer.zero_grad()  # clear the gradients left over from the previous batch
    output = model(bx_tensor, by_tensor)  # (11, 32, 27)
    # drop the start position and keep the remaining 10 steps (10, 32, 27);
    # view plays the role of reshape in TensorFlow and NumPy
    output = output[1:].view(-1, output.shape[-1])  # torch.Size([320, 27])
    # print(output[:10])
    by_tensor = by_tensor[1:].contiguous().view(-1)  # torch.Size([320])
    # print(by_tensor[:10])
    loss = criterion(output, by_tensor)
    loss.backward()
    torch.nn.utils.clip_grad_norm_(model.parameters(), 1)  # gradient clipping to prevent exploding gradients
    optimizer.step()
    epoch_loss += loss.item()
    loss_record.append(epoch_loss)

sample() returns an encoder input of shape (32, 8) and a decoder input of shape (32, 11). The LSTMs were created without batch_first=True, so they expect the sequence dimension first, and both tensors are therefore transposed with .t(). For the loss, every output position except the start token is used.
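The two shape manipulations, transposing to time-first order and flattening everything after the start token, can be traced on a tiny example. The sketch below uses nested lists instead of tensors; `transpose` and `flatten_targets` are hypothetical helpers that mimic `.t()` and `[1:].view(-1)`, and the token ids are made up.

```python
def transpose(batch_first):
    """(batch, seq_len) -> (seq_len, batch), like tensor.t()."""
    return [list(step) for step in zip(*batch_first)]

def flatten_targets(time_first):
    """Drop the start token at step 0, then flatten like tensor[1:].view(-1)."""
    return [tok for step in time_first[1:] for tok in step]

by = [[14, 5, 9, 13],   # hypothetical batch of two short target sequences
      [14, 3, 7, 13]]
time_first = transpose(by)
print(time_first)                   # [[14, 14], [5, 3], [9, 7], [13, 13]]
print(flatten_targets(time_first))  # [5, 3, 9, 7, 13, 13]
```

The flattened targets are ordered step by step across the batch, which matches the row order of the flattened logits, so each row of scores lines up with its target index.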

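Testing the model's translation ability

Every 200 training batches, print the prediction for one sample:

    # still inside the training loop
    if epoch % 200 == 0:
        target = date.idx2str(by[0, 1:-1])
        # model.eval(): switch to evaluation mode, which disables dropout
        # (and batch normalization, which this model does not use)
        model.eval()
        # print(by[0])
        # teacher_forcing_ratio=0: every decoder input is the previous prediction
        output = model(torch.LongTensor([bx[0]]).to(device).t(), torch.LongTensor([by[0]]).to(device).t(), 0)
        # With teacher forcing off, the model decodes on its own instead of being guided
        # token by token. by is still passed in, but only its first token (the start
        # token) and its length are used.
        # tensor_out = [out.max(1)[1] for out in output]
        tensor_out = [torch.max(output[i], 1)[1] for i in range(1, output.shape[0])]
        # outputs[0] is never written by forward and stays all zeros, so its argmax is
        # meaningless; skip it and read the logits from position 1 onwards.
        # torch.max(t, 1) returns (values, indices): [1] selects the indices (the argmax),
        # [0] would select the maximum values.
        pred = [int(tensor_out[i][0]) for i in range(len(tensor_out))]
        res = date.idx2str(pred)
        src = date.idx2str(bx[0])
        print(
            "t: ", epoch,
            "| loss: %.3f" % loss.item(),
            "| input: ", src,
            "| target: ", target,
            "| inference: ", res,
        )

At test time the encoder still receives a sequence of 8 indices and the decoder still receives a sequence of 11 indices, but only the first of those, the start token, is actually fed to the decoder; every later input is the model's own prediction.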
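The read-out step (argmax per position, then stop after the end token) can be sketched without PyTorch. The lookup table below is a subset of the i2v mapping, assuming index 13 is the end token and 14 the start token as in the y sample shown earlier; `one_hot`, `argmax` and `decode` are hypothetical helpers, and the one-hot rows stand in for real logits.

```python
I2V = {2: "/", 3: "0", 4: "1", 5: "2", 6: "3", 9: "6",
       13: "<EOS>", 14: "<GO>", 15: "Apr"}  # subset of the i2v table

def one_hot(idx, size=27):
    return [1.0 if i == idx else 0.0 for i in range(size)]

def argmax(row):
    return max(range(len(row)), key=row.__getitem__)

def decode(step_logits, end_token=13):
    """Greedy read-out: take the argmax of each step and stop after <EOS>."""
    out = []
    for row in step_logits:
        idx = argmax(row)
        out.append(I2V[idx])
        if idx == end_token:
            break  # anything after the end token is ignored
    return "".join(out)

steps = [one_hot(i) for i in [5, 9, 2, 15, 2, 5, 3, 6, 4, 13, 7]]
print(decode(steps))  # 26/Apr/2031<EOS>
```

This mirrors what `idx2str` does with the predicted indices: the end token is appended and everything after it is dropped.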

Full PyTorch code

import numpy as np

import torch

import torch.nn as nn

import torch.optim as optim

import random

PAD_ID = 0  # index of the "<PAD>" token

class DateData:
    def __init__(self, date_cn, date_en):
        self.vocab = set(
            [str(i) for i in range(0, 10)] + ["-", "/", "<GO>", "<EOS>"] + [
                i.split("/")[1] for i in date_en])
        self.v2i = {v: i for i, v in enumerate(sorted(list(self.vocab)), start=1)}  # indices start from 1
        self.v2i["<PAD>"] = PAD_ID  # index 0 is reserved for padding
        self.vocab.add("<PAD>")
        self.i2v = {i: v for v, i in self.v2i.items()}
        # i2v and v2i allow lookups in both directions
        self.x, self.y = [], []
        for cn, en in zip(date_cn, date_en):
            self.x.append([self.v2i[v] for v in cn])
            self.y.append(
                [self.v2i["<GO>"], ] + [self.v2i[v] for v in en[:3]] + [
                    self.v2i[en[3:6]], ] + [self.v2i[v] for v in en[6:]] + [
                    self.v2i["<EOS>"], ])
        self.x, self.y = np.array(self.x), np.array(self.y)
        self.start_token = self.v2i["<GO>"]
        self.end_token = self.v2i["<EOS>"]

    def sample(self, n=64):
        bi = np.random.randint(0, len(self.x), size=n)
        bx, by = self.x[bi], self.y[bi]
        decoder_len = np.full((len(bx),), by.shape[1] - 1, dtype=np.int32)
        return bx, by, decoder_len

    def idx2str(self, idx):
        x = []
        for i in idx:
            x.append(self.i2v[i])
            if i == self.end_token:
                break
        return "".join(x)

    @property
    def num_word(self):
        return len(self.vocab)

"""

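(1) Layers with learnable parameters (fully connected, convolutional, and so on) normally go in the constructor __init__(); layers without parameters may be placed there as well.

(2) Layers without learnable parameters (such as ReLU or dropout) may be created in the constructor or left out of it; if they are left out, use the nn.functional equivalents inside forward. BatchNorm does not belong to this group: it has learnable scale and shift parameters, so create it in __init__.

(3) forward must be overridden; it implements the model's computation and defines how the layers connect.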

"""

class Encoder(nn.Module):
    # A custom network must subclass nn.Module and implement both __init__ and forward.
    def __init__(self, input_dim, emb_dim, hid_dim, n_layers, dropout):
        super().__init__()
        self.input_dim = input_dim
        self.emb_dim = emb_dim
        self.hid_dim = hid_dim
        self.n_layers = n_layers
        self.embedding = nn.Embedding(input_dim, emb_dim)  # 27 --> 16
        # nn.LSTM applies its dropout argument only between stacked layers,
        # so it has no effect when n_layers == 1
        self.rnn = nn.LSTM(emb_dim, hid_dim, n_layers, dropout=dropout)  # 16 --> 32
        self.dropout = nn.Dropout(dropout)  # applied to the embeddings; the shape is unchanged

    def forward(self, src):
        # src: (sent_len, batch_size)
        embedded = self.dropout(self.embedding(src))
        # embedded: (sent_len, batch_size, emb_dim)
        outputs, (hidden, cell) = self.rnn(embedded)
        # outputs: (sent_len, batch_size, hid_dim)
        # hidden: (n_layers, batch_size, hid_dim)
        # cell: (n_layers, batch_size, hid_dim)
        return hidden, cell

class Decoder(nn.Module):
    def __init__(self, output_dim, emb_dim, hid_dim, n_layers, dropout):
        super().__init__()
        self.emb_dim = emb_dim
        self.hid_dim = hid_dim
        self.output_dim = output_dim
        self.n_layers = n_layers
        self.embedding = nn.Embedding(output_dim, emb_dim)  # 27 --> 16
        self.rnn = nn.LSTM(emb_dim, hid_dim, n_layers, dropout=dropout)  # 16 --> 32
        self.out = nn.Linear(hid_dim, output_dim)  # 32 --> 27
        self.dropout = nn.Dropout(dropout)

    def forward(self, input, hidden, cell):
        # input: (batch_size) -> (1, batch_size)
        input = input.unsqueeze(0)
        # embedded: (1, batch_size, emb_dim)
        embedded = self.dropout(self.embedding(input))
        # hidden: (n_layers, batch_size, hid_dim)
        # cell: (n_layers, batch_size, hid_dim)
        # output: (1, batch_size, hid_dim)
        output, (hidden, cell) = self.rnn(embedded, (hidden, cell))
        # prediction: (batch_size, output_dim)
        prediction = self.out(output.squeeze(0))
        return prediction, hidden, cell

class Seq2Seq(nn.Module):
    def __init__(self, encoder, decoder, device):
        super().__init__()
        self.encoder = encoder
        self.decoder = decoder
        self.device = device
        assert encoder.hid_dim == decoder.hid_dim, \
            "Hidden dimensions of encoder and decoder must be equal!"
        assert encoder.n_layers == decoder.n_layers, \
            "Encoder and decoder must have equal number of layers!"

    def forward(self, src, trg, teacher_forcing_ratio=0.5):
        # src: (sent_len, batch_size)
        # trg: (sent_len, batch_size)
        batch_size = trg.shape[1]
        max_len = trg.shape[0]
        trg_vocab_size = self.decoder.output_dim
        # tensor that collects the decoder outputs
        outputs = torch.zeros(max_len, batch_size, trg_vocab_size).to(self.device)
        hidden, cell = self.encoder(src)  # torch.Size([1, 32, 32]) each: (n_layers, batch_size, hid_dim)
        # print(hidden.shape, cell.shape)
        # the first decoder input is the start-of-sequence token <GO>
        input = trg[0, :]
        for t in range(1, max_len):
            # the hidden and cell passed in differ from the ones returned
            output, hidden, cell = self.decoder(input, hidden, cell)
            # output: (32, 27), one row of vocabulary logits per batch element
            outputs[t] = output
            # True with probability teacher_forcing_ratio: feed the true token,
            # otherwise feed the model's own prediction. Early predictions are mostly
            # wrong and would compound, so teacher forcing speeds up training.
            teacher_force = random.random() < teacher_forcing_ratio
            top1 = output.max(1)[1]
            input = (trg[t] if teacher_force else top1)
            # print(top1)
        # outputs: (11, 32, 27); outputs[0] is never written and stays all zeros
        return outputs

if __name__ == "__main__":
    with open(r"data.txt", 'r') as f:
        date = f.readlines()
    date = [i.strip().split(' ') for i in date]
    # print(date)
    date_cn = [i[0] for i in date]
    date_en = [i[1] for i in date]
    date = DateData(date_cn=date_cn, date_en=date_en)
    print("Chinese time order: yy/mm/dd ", date_cn[:3], "\nEnglish time order: dd/M/yyyy ", date_en[:3])
    print("vocabularies: ", date.vocab)
    print("v2i:\n", date.v2i)
    print("x index sample: \n{}\n{}".format(date.idx2str(date.x[0]), date.x[0]),
          "\ny index sample: \n{}\n{}".format(date.idx2str(date.y[0]), date.y[0]))

    """
    Hyperparameters
    """
    INPUT_DIM = len(date.vocab)  # 27
    OUTPUT_DIM = len(date.vocab)  # 27
    ENC_EMB_DIM = 16
    DEC_EMB_DIM = 16
    HID_DIM = 32
    N_LAYERS = 1
    ENC_DROPOUT = 0.5
    DEC_DROPOUT = 0.5
    BATCH_SIZE = 32
    device = "cpu"
    """
    Each token enters as an integer index (equivalent to a 27-dim one-hot vector),
    the embedding turns it into a 16-dim vector, and the LSTM encodes that into a 32-dim state.
    """
    enc = Encoder(INPUT_DIM, ENC_EMB_DIM, HID_DIM, N_LAYERS, ENC_DROPOUT)
    dec = Decoder(OUTPUT_DIM, DEC_EMB_DIM, HID_DIM, N_LAYERS, DEC_DROPOUT)
    # .to() moves the model to the chosen device (CPU or GPU)
    model = Seq2Seq(enc, dec, device).to(device)
    print(model)

    # initialize the weights
    def init_weights(m):
        for name, param in m.named_parameters():
            nn.init.uniform_(param.data, -0.08, 0.08)

    model.apply(init_weights)

    # count the trainable parameters
    def count_parameters(model):
        return sum(p.numel() for p in model.parameters() if p.requires_grad)

    print(f'The model has {count_parameters(model):,} trainable parameters')

    # optimizer
    optimizer = optim.Adam(model.parameters())
    # loss: CrossEntropyLoss takes the raw logits (27 scores per token, no softmax
    # applied) and the target class indices
    criterion = nn.CrossEntropyLoss(ignore_index=PAD_ID)  # padding (index 0) is ignored by the loss

    loss_record = []
    for epoch in range(7000):
        # model.train(): switch to training mode, which enables Dropout
        # (and batch normalization, which this model does not use)
        model.train()
        epoch_loss = 0
        bx, by, decoder_len = date.sample(BATCH_SIZE)
        # one batch holds 32 samples: bx are Chinese-date index sequences, by are English-date index sequences
        # print(bx.shape)  # (32, 8)
        # print(by.shape)  # (32, 11)
        # print(decoder_len)  # output length - 1: [10, 10, 10, ...], 32 tens
        bx_tensor = torch.LongTensor(bx).to(device).t()  # torch.Size([8, 32])
        by_tensor = torch.LongTensor(by).to(device).t()  # torch.Size([11, 32])
        optimizer.zero_grad()  # clear the gradients left over from the previous batch
        output = model(bx_tensor, by_tensor)  # (11, 32, 27)
        # drop the start position and keep the remaining 10 steps (10, 32, 27);
        # view plays the role of reshape in TensorFlow and NumPy
        output = output[1:].view(-1, output.shape[-1])  # torch.Size([320, 27])
        # print(output[:10])
        by_tensor = by_tensor[1:].contiguous().view(-1)  # torch.Size([320])
        # print(by_tensor[:10])
        loss = criterion(output, by_tensor)
        loss.backward()
        torch.nn.utils.clip_grad_norm_(model.parameters(), 1)  # gradient clipping to prevent exploding gradients
        optimizer.step()
        epoch_loss += loss.item()
        loss_record.append(epoch_loss)

        if epoch % 200 == 0:
            target = date.idx2str(by[0, 1:-1])
            # model.eval(): switch to evaluation mode, which disables dropout
            model.eval()
            # print(by[0])
            # teacher_forcing_ratio=0: every decoder input is the previous prediction
            output = model(torch.LongTensor([bx[0]]).to(device).t(), torch.LongTensor([by[0]]).to(device).t(), 0)
            # by is still passed in, but only its first token (the start token)
            # and its length are used
            # tensor_out = [out.max(1)[1] for out in output]
            tensor_out = [torch.max(output[i], 1)[1] for i in range(1, output.shape[0])]
            # outputs[0] is never written by forward and stays all zeros, so its argmax is
            # meaningless; skip it and read the logits from position 1 onwards.
            # torch.max(t, 1) returns (values, indices): [1] selects the indices.
            pred = [int(tensor_out[i][0]) for i in range(len(tensor_out))]
            res = date.idx2str(pred)
            src = date.idx2str(bx[0])
            print(
                "t: ", epoch,
                "| loss: %.3f" % loss.item(),
                "| input: ", src,
                "| target: ", target,
                "| inference: ", res,
            )

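Training results:

We can also set teacher_forcing_ratio to 1, so that the decoder always receives the ground-truth token during training and never uses its own prediction as the input of the next time step: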

def forward(self, src, trg, teacher_forcing_ratio=1):