PyTorch: Using TensorBoard

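Table of Contents

- TensorBoard
- Basic usage of TensorBoard
  - Interface for creating event files
  - Interface for recording scalars
  - Starting the web interface
  - Demo
- Using other interfaces
  - add_histogram()
  - Using the interfaces above to visualize the parameters of a classification model
  - add_image()
  - make_grid()
  - Using the interfaces above to display a model's convolution kernels and feature maps
  - add_graph()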

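TensorBoard

TensorBoard is the powerful visualization tool from TensorFlow.

The data that a Python program wants to visualize is first saved to disk as event files. TensorBoard then reads those files and renders them, so the results can be viewed in a web browser.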

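Basic usage of TensorBoard

Interface for creating event files

- SummaryWriter
  - log_dir: output directory for the event file
  - comment: suffix appended to the directory name when log_dir is not specified; in that case the directory is also placed inside a parent runs folder
  - filename_suffix: suffix appended to the event file name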
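As a minimal sketch of how these parameters affect what lands on disk, the snippet below creates a writer with an explicit log_dir and a filename_suffix, then lists the resulting event file. The temporary directory, the `_demo` suffix and the variable names are assumptions chosen for illustration only.

```python
import os
import tempfile

from torch.utils.tensorboard import SummaryWriter

# Illustrative location only: a throwaway directory instead of the default runs/ folder
log_dir = tempfile.mkdtemp()

# log_dir is given explicitly, so no runs/ folder is created;
# filename_suffix is appended to the event file name
writer = SummaryWriter(log_dir=log_dir, filename_suffix="_demo")
writer.close()

event_files = sorted(os.listdir(log_dir))
print(event_files)
```

The single file printed should start with `events.out.tfevents` and end with the chosen suffix.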

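Interface for recording scalars

- add_scalar:
  - tag: label of the chart, which uniquely identifies it
  - scalar_value: the scalar to record
  - global_step: x-axis value
- add_scalars:
  - main_tag: label of the chart
  - tag_scalar_dict: a dictionary whose keys are the tags of the variables and whose values are the variables' values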

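Starting the web interface

tensorboard --logdir=yourpath --port=<port> --bind_all

The last flag binds the server to all network interfaces, so that it can be reached through the port from other IP addresses and not only from 127.0.0.1.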

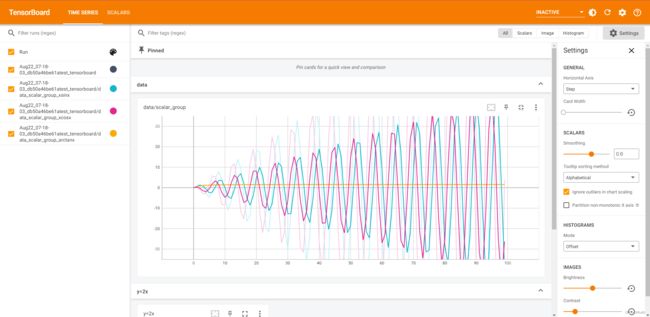

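Demo

import numpy as np
from torch.utils.tensorboard import SummaryWriter

# No log_dir is given, so the run is written under runs/ with the comment as suffix
writer = SummaryWriter(comment='test_tensorboard')

for x in range(100):
    # One curve per tag; x is passed as global_step (the x-axis)
    writer.add_scalar('y=2x', x * 2, x)
    writer.add_scalar('y=pow(2, x)', 2 ** x, x)

    # Several curves drawn in one chart under the same main_tag
    writer.add_scalars('data/scalar_group', {"xsinx": x * np.sin(x),
                                             "xcosx": x * np.cos(x),
                                             "arctanx": np.arctan(x)}, x)

writer.close()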

After the code above finishes, it produces the folder runs/Aug22_07-18-03_db50a46be61atest_tensorboard, which contains the event file and several subfolders for the scalars.
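One way to check what was actually written, sketched here under the assumption that the `tensorboard` package is installed alongside PyTorch, is to load the event file back with `EventAccumulator`. The temporary directory and the shortened loop are illustrative choices, not part of the demo above.

```python
import tempfile

from tensorboard.backend.event_processing.event_accumulator import EventAccumulator
from torch.utils.tensorboard import SummaryWriter

log_dir = tempfile.mkdtemp()  # illustrative location, not the runs/ folder from the demo

writer = SummaryWriter(log_dir=log_dir)
for x in range(5):
    writer.add_scalar("y=2x", x * 2, x)
writer.close()  # flushes pending events to disk

# Load the event file back and read the points stored under the tag
accumulator = EventAccumulator(log_dir)
accumulator.Reload()
points = [(event.step, event.value) for event in accumulator.Scalars("y=2x")]
print(points)
```

Each point pairs the global_step with the recorded scalar_value, which is exactly what the web interface plots on the x- and y-axes.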

Using other interfaces

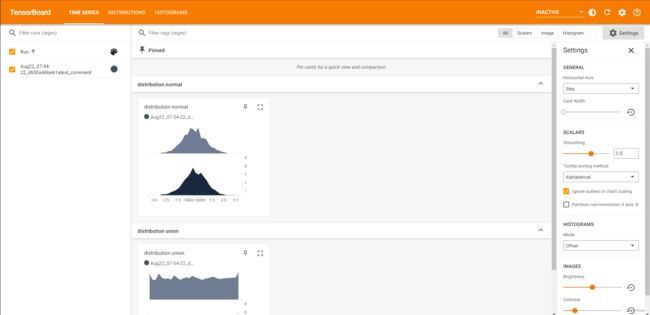

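add_histogram()

- Purpose: plot histograms and multi-quantile line charts
- tag: label of the chart, which uniquely identifies it
- values: the values to collect statistics on
- global_step: y-axis value
- bins: how the histogram bins are chosen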

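import numpy as np
import matplotlib.pyplot as plt
from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter(comment='test_comment', filename_suffix="test_suffix")

for x in range(2):
    np.random.seed(x)

    data_union = np.arange(100)                # evenly spread values 0..99
    data_normal = np.random.normal(size=1000)  # samples from a standard normal

    # x is the global_step: each step adds one histogram under the same tag
    writer.add_histogram('distribution union', data_union, x)
    writer.add_histogram('distribution normal', data_normal, x)

    # Plot the same data with matplotlib for comparison
    plt.subplot(121).hist(data_union, label="union")
    plt.subplot(122).hist(data_normal, label="normal")
    plt.legend()
    plt.show()

writer.close()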

The global_step parameter mainly determines how many histograms a single tag contains; they are laid out along the y-axis, as in the figure above.
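To make the bins parameter more concrete, the sketch below applies `np.histogram` to the same kind of data as the demo. It is not the code that add_histogram runs internally; it only illustrates how a binning rule such as `'auto'`, one of the NumPy rule names that the PyTorch documentation lists for bins, turns raw values into bin edges and counts.

```python
import numpy as np

np.random.seed(0)
data_normal = np.random.normal(size=1000)

# 'auto' lets NumPy choose the bin width from the data
counts, edges = np.histogram(data_normal, bins="auto")

# An explicit integer asks for that many equal-width bins
counts_10, edges_10 = np.histogram(data_normal, bins=10)

print(len(counts), counts.sum())
print(len(counts_10), counts_10.sum())
```

Whatever rule is used, every sample falls into exactly one bin, so the counts always add up to the number of values.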

Using the interfaces above to visualize the parameters of a classification model

import torch
from torch.utils.tensorboard import SummaryWriter

# net, criterion, optimizer, scheduler, train_loader, valid_loader,
# MAX_EPOCH, log_interval and val_interval are assumed to be defined beforehand.

train_curve = list()
valid_curve = list()

iter_count = 0

# Build the SummaryWriter
writer = SummaryWriter(comment='test_your_comment', filename_suffix="_test_your_filename_suffix")

for epoch in range(MAX_EPOCH):

    loss_mean = 0.
    correct = 0.
    total = 0.

    net.train()
    for i, data in enumerate(train_loader):

        iter_count += 1

        # forward
        inputs, labels = data
        outputs = net(inputs)

        # backward
        optimizer.zero_grad()
        loss = criterion(outputs, labels)
        loss.backward()

        # update weights
        optimizer.step()

        # Count correct classifications
        _, predicted = torch.max(outputs.data, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

        # Print training information
        loss_mean += loss.item()
        train_curve.append(loss.item())
        if (i+1) % log_interval == 0:
            loss_mean = loss_mean / log_interval
            print("Training:Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                epoch, MAX_EPOCH, i+1, len(train_loader), loss_mean, correct / total))
            loss_mean = 0.

        # Record the data in the event file
        writer.add_scalars("Loss", {"Train": loss.item()}, iter_count)
        writer.add_scalars("Accuracy", {"Train": correct / total}, iter_count)

    # Once per epoch, record the gradients and the weights
    for name, param in net.named_parameters():
        writer.add_histogram(name + '_grad', param.grad, epoch)
        writer.add_histogram(name + '_data', param, epoch)

    scheduler.step()  # update the learning rate

    # validate the model
    if (epoch+1) % val_interval == 0:

        correct_val = 0.
        total_val = 0.
        loss_val = 0.
        net.eval()
        with torch.no_grad():
            for j, data in enumerate(valid_loader):
                inputs, labels = data
                outputs = net(inputs)
                loss = criterion(outputs, labels)

                _, predicted = torch.max(outputs.data, 1)
                total_val += labels.size(0)
                correct_val += (predicted == labels).sum().item()

                loss_val += loss.item()

            loss_mean_epoch = loss_val / len(valid_loader)  # mean loss over this epoch
            valid_curve.append(loss_mean_epoch)  # record the loss of each epoch
            print("Valid:\t Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                epoch, MAX_EPOCH, j+1, len(valid_loader), loss_mean_epoch, correct_val / total_val))

            # Record the data in the event file
            writer.add_scalars("Loss", {"Valid": loss_mean_epoch}, iter_count)
            writer.add_scalars("Accuracy", {"Valid": correct_val / total_val}, iter_count)

writer.close()  # flush everything to the event file once training ends
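The training loop above depends on a model and data loaders defined elsewhere. As a self-contained sketch of the same per-epoch histogram logging, the snippet below swaps in a tiny linear layer and random data; the model, the data, the temporary log directory and the `logged_tags` bookkeeping are all hypothetical stand-ins.

```python
import os
import tempfile

import torch
import torch.nn as nn
from torch.utils.tensorboard import SummaryWriter

torch.manual_seed(0)

# Hypothetical stand-ins for the real model and data
net = nn.Linear(4, 2)
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(net.parameters(), lr=0.1)
inputs = torch.randn(16, 4)
labels = torch.randint(0, 2, (16,))

log_dir = tempfile.mkdtemp()  # illustrative location
writer = SummaryWriter(log_dir=log_dir)

logged_tags = set()
for epoch in range(3):
    optimizer.zero_grad()
    loss = criterion(net(inputs), labels)
    loss.backward()
    optimizer.step()

    writer.add_scalars("Loss", {"Train": loss.item()}, epoch)

    # Once per epoch: distribution of every parameter and of its gradient
    for name, param in net.named_parameters():
        writer.add_histogram(name + "_grad", param.grad, epoch)
        writer.add_histogram(name + "_data", param.detach(), epoch)
        logged_tags.update({name + "_grad", name + "_data"})

writer.close()
print(sorted(logged_tags))
```

For a single linear layer this yields four histogram tags, one for the data and one for the gradient of both weight and bias, each with one histogram per epoch.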

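add_image()

- Purpose: record images
- tag: label of the image, which uniquely identifies it
- img_tensor: the image data; pay attention to the value scale
- global_step: x-axis value
- dataformats: layout of the data: CHW, HWC or HW

The last parameter exists mainly because images differ in how their dimensions are arranged and in their number of channels; some are grayscale images (a single channel).

As for the second parameter: if an image has been normalized for the model, its values are no longer in 0-255 but floats in 0-1. When the data is found to lie entirely in 0-1, it is multiplied by 255 for visualization.

This method shows the images in a single panel with a slider: dragging along the x-axis displays the images one by one, as shown in the figure: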
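The layout and scaling conventions described above can be sketched with plain NumPy. The helper `to_hwc_uint8` below is hypothetical and is not TensorBoard's own code; it only mimics the idea of bringing CHW, HWC or HW input into one common layout and scaling float data, assumed here to lie in 0-1, up to 0-255.

```python
import numpy as np


def to_hwc_uint8(img, dataformats="CHW"):
    """Illustrative helper (not part of TensorBoard): convert an image to HWC uint8."""
    img = np.asarray(img)
    if dataformats == "CHW":
        img = img.transpose(1, 2, 0)   # move the channel axis last
    elif dataformats == "HW":
        img = img[:, :, np.newaxis]    # grayscale: add a channel axis
    elif dataformats != "HWC":
        raise ValueError("unsupported dataformats: " + dataformats)
    if img.dtype != np.uint8:
        # Float data is assumed to be in [0, 1]; scale it to 0-255
        img = (img * 255).clip(0, 255).astype(np.uint8)
    return img


chw = np.random.rand(3, 4, 5)   # channels first
hwc = np.random.rand(4, 5, 3)   # channels last
gray = np.ones((4, 5))          # single-channel image without a channel axis

shape_chw = to_hwc_uint8(chw, "CHW").shape
shape_hwc = to_hwc_uint8(hwc, "HWC").shape
gray_out = to_hwc_uint8(gray, "HW")
print(shape_chw, shape_hwc, gray_out.shape, gray_out.max())
```

With a real writer, the same three arrays would be passed as `writer.add_image(tag, array, global_step, dataformats=...)`, using the matching dataformats string for each.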

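make_grid()

This is not a method of TensorBoard's SummaryWriter object but a function from torchvision.utils.

Typically this function is used to build a grid image, which is then added as a single image with SummaryWriter's add_image.

Purpose: build a grid of images

- tensor: the image data, in B×C×H×W form
- nrow: number of images per row of the grid (the number of rows is computed automatically)
- padding: spacing between images, in pixels
- normalize: whether to normalize the pixel values
- range: the normalization range (named value_range in newer torchvision releases)
- scale_each: whether to normalize each image individually
- pad_value: pixel value used for the padding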

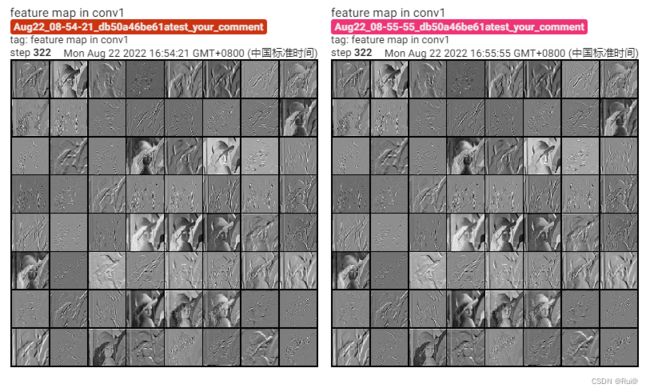

The result is shown in the figure below:

Using the interfaces above to display a model's convolution kernels and feature maps

import os
import sys

import torch
import torch.nn as nn
from PIL import Image
import torchvision.transforms as transforms
import torchvision.utils as vutils
import torchvision.models as models
from torch.utils.tensorboard import SummaryWriter

# Make the project root importable so the local helper module can be found
hello_pytorch_DIR = os.path.abspath(os.path.dirname(__file__)+os.path.sep+".."+os.path.sep+"..")
sys.path.append(hello_pytorch_DIR)

from tools.common_tools import set_seed  # helper from the local project

set_seed(1)  # set the random seed

# ----------------------------------- kernel visualization -----------------------------------
# flag = 0
flag = 1
if flag:
    writer = SummaryWriter(comment='test_your_comment', filename_suffix="_test_your_filename_suffix")

    alexnet = models.alexnet(pretrained=True)

    kernel_num = -1
    vis_max = 1  # only the first two conv layers are visualized

    # torch.no_grad() is added to work around a small bug in PyTorch 1.7
    with torch.no_grad():
        for sub_module in alexnet.modules():
            if isinstance(sub_module, nn.Conv2d):
                kernel_num += 1
                if kernel_num > vis_max:
                    break
                kernels = sub_module.weight
                # Conv2d weights have shape (out_channels, in_channels, height, width)
                c_out, c_int, k_h, k_w = tuple(kernels.shape)

                for o_idx in range(c_out):
                    # make_grid expects BCHW. The slice is one kernel's CHW, so its C is
                    # treated as B and a new C dimension of size 1 is inserted.
                    kernel_idx = kernels[o_idx, :, :, :].unsqueeze(1)
                    kernel_grid = vutils.make_grid(kernel_idx, normalize=True, scale_each=True, nrow=c_int)
                    writer.add_image('{}_Convlayer_split_in_channel'.format(kernel_num), kernel_grid, global_step=o_idx)

                kernel_all = kernels.view(-1, 3, k_h, k_w)  # (N, 3, h, w)
                kernel_grid = vutils.make_grid(kernel_all, normalize=True, scale_each=True, nrow=8)  # (c, h, w)
                writer.add_image('{}_all'.format(kernel_num), kernel_grid, global_step=322)

                print("{}_convlayer shape:{}".format(kernel_num, tuple(kernels.shape)))

        writer.close()

# ----------------------------------- feature map visualization -----------------------------------
# flag = 0
flag = 1
if flag:
    with torch.no_grad():
        writer = SummaryWriter(comment='test_your_comment', filename_suffix="_test_your_filename_suffix")

        # Data
        path_img = "./lena.png"  # your path to image
        normMean = [0.49139968, 0.48215827, 0.44653124]
        normStd = [0.24703233, 0.24348505, 0.26158768]

        norm_transform = transforms.Normalize(normMean, normStd)
        img_transforms = transforms.Compose([
            transforms.Resize((224, 224)),
            transforms.ToTensor(),
            norm_transform
        ])

        img_pil = Image.open(path_img).convert('RGB')
        if img_transforms is not None:
            img_tensor = img_transforms(img_pil)
        img_tensor.unsqueeze_(0)  # chw --> bchw

        # Model
        alexnet = models.alexnet(pretrained=True)

        # forward
        convlayer1 = alexnet.features[0]
        fmap_1 = convlayer1(img_tensor)

        # Preprocessing: bchw=(1, 64, 55, 55) --> (64, 1, 55, 55),
        # i.e. the 64 channels become the batch so each one is shown as its own image
        fmap_1.transpose_(0, 1)
        fmap_1_grid = vutils.make_grid(fmap_1, normalize=True, scale_each=True, nrow=8)

        writer.add_image('feature map in conv1', fmap_1_grid, global_step=322)
        writer.close()

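add_graph()

Purpose: visualize the model's computation graph

- model: the model, which must be an nn.Module
- input_to_model: the data fed into the model as input
- verbose: whether to print the structure of the computation graph

import torch
from torch.utils.tensorboard import SummaryWriter

# LeNet is assumed to be defined or imported elsewhere in the project
writer = SummaryWriter(comment='test_your_comment', filename_suffix="_test_your_filename_suffix")

# Model and a fake input batch of shape (B, C, H, W)
fake_img = torch.randn(1, 3, 32, 32)

lenet = LeNet(classes=2)

writer.add_graph(lenet, fake_img)

writer.close()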
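Since LeNet is not defined in the snippet above, here is a self-contained sketch of the same call using a small made-up network. `TinyNet`, its layers and the temporary log directory are hypothetical and only serve to make the example runnable.

```python
import os
import tempfile

import torch
import torch.nn as nn
from torch.utils.tensorboard import SummaryWriter


class TinyNet(nn.Module):
    """Hypothetical small CNN used only for this illustration."""

    def __init__(self, classes=2):
        super().__init__()
        self.conv = nn.Conv2d(3, 6, kernel_size=5)
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Linear(6, classes)

    def forward(self, x):
        x = torch.relu(self.conv(x))
        x = self.pool(x).flatten(1)
        return self.fc(x)


log_dir = tempfile.mkdtemp()  # illustrative location
writer = SummaryWriter(log_dir=log_dir)

model = TinyNet(classes=2)
fake_img = torch.randn(1, 3, 32, 32)  # input_to_model: one fake RGB image
output_shape = tuple(model(fake_img).shape)

# The example input is used to trace the model and record its graph
writer.add_graph(model, fake_img)
writer.close()
print(output_shape)
```

After pointing `tensorboard --logdir` at the directory, the traced structure should appear under the Graphs tab of the web interface.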