Advanced Decision Trees: Continuous Attribute Values

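Contents

1. Decision tree overview

1.1 Decision tree concept

1.2 Steps for building a decision tree

1.3 Classification principle

2. Classification criteria

2.1 Discrete and continuous attributes

2.2 Handling continuous values

2.3 How continuous values are split

3. Implementation

3.1 Creating the dataset

3.2 Computing information gain

3.2.1 Information entropy

3.2.2 Conditional entropy

3.2.3 Information gain

3.4 Using the information gain function to choose the root node

Run results

3.5 Discretizing the continuous value

3.6 Defining the node class

3.7 Decision tree

3.7.1 Defining the decision tree

3.7.2 Building the tree and predicting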

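1. Decision tree overview

1.1 Decision tree concept

A decision tree is a basic method for both classification and regression. The model has a tree structure; in classification it represents the process of classifying instances based on their features. It can be viewed as a set of if-then rules, or as a conditional probability distribution defined over the feature space and the class space.

A decision tree describes how instances are classified: each internal node tests one attribute, each branch corresponds to one outcome of that test, and each leaf node represents a class, so the whole model is a tree built from many test nodes. Formally, a decision tree consists of nodes and directed edges. There are two kinds of nodes: internal nodes, which represent a feature or attribute, and leaf nodes, which represent a class.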
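To make the "set of if-then rules" view concrete, here is a minimal Python sketch. The tree is hand-written and hypothetical (it is not learned from any data in this article), and the nested-dict representation and the names `classify` and `classify_with_rules` are our own assumptions. Walking the tree and evaluating the if-then rules give the same answer for every input.

```python
# A hypothetical, hand-written tree (not learned from the article's data).
# Internal node: {'feature': name, 'branches': {value: subtree}}; leaf: a class label.
tree = {
    'feature': 'plan',
    'branches': {
        'job': 'no',
        'civil_service': 'no',
        'grad_school': {'feature': 'major',
                        'branches': {'cs': 'no', 'education': 'yes', 'finance': 'yes'}},
    },
}


def classify(node, sample):
    """Walk from the root: each internal node tests one feature, each leaf is a class."""
    while isinstance(node, dict):
        node = node['branches'][sample[node['feature']]]
    return node


def classify_with_rules(sample):
    """The same decisions written as if-then rules."""
    if sample['plan'] in ('job', 'civil_service'):
        return 'no'
    if sample['major'] == 'cs':
        return 'no'
    return 'yes'


print(classify(tree, {'plan': 'grad_school', 'major': 'finance'}))  # yes
```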

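1.2 Steps for building a decision tree

Building a decision tree usually involves three steps: feature selection, tree generation, and pruning.

Feature selection: choose one feature of the training data as the splitting criterion for the current node (different feature-selection criteria give rise to different decision-tree algorithms).

Tree generation: using the chosen criterion, recursively generate child nodes from the top down, and stop growing the tree once the dataset can no longer be split.

Pruning: decision trees overfit easily, so pruning (pre-pruning or post-pruning) is used to reduce the size and complexity of the tree.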

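Basic algorithm:

- Put all the data at the root node.

- Choose the best feature and use it to partition the training data into subsets, so that each subset is classified as well as possible under the current conditions.

- Recurse until every subset is (essentially) correctly classified, or no suitable feature remains.

The recursion returns in three cases:

(1) all samples at the current node belong to the same class;

(2) the current attribute set is empty, or all samples take identical values on every attribute, so no split is possible;

(3) the sample set at the current node is empty.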
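The flow and the three stopping conditions above can be sketched as one small recursive function. This is a minimal ID3-style sketch under our own assumptions: rows are dicts, information gain is the splitting criterion, and a `domains` dict lists every possible value of each feature. The names `build_tree`, `info_gain` and `domains` are hypothetical and not taken from the article's code. Case (3) only arises because a branch is created for every possible value of the chosen feature, including values absent from the current subset.

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def info_gain(rows, labels, f):
    """g(D, f) = H(D) - sum_v |D_v|/|D| * H(D_v)."""
    groups = {}
    for r, y in zip(rows, labels):
        groups.setdefault(r[f], []).append(y)
    n = len(labels)
    return entropy(labels) - sum(len(g) / n * entropy(g) for g in groups.values())


def build_tree(rows, labels, features, domains, parent_majority=None):
    """Return a class label (leaf) or {'feature': f, 'branches': {value: subtree}}."""
    # (3) empty sample set: label the leaf with the parent's majority class
    if not rows:
        return parent_majority
    majority = Counter(labels).most_common(1)[0][0]
    # (1) all samples belong to the same class
    if len(set(labels)) == 1:
        return labels[0]
    # (2) no features left, or all samples identical on every remaining feature
    if not features or all(len({r[f] for r in rows}) == 1 for f in features):
        return majority
    # Split on the feature with the largest information gain
    best = max(features, key=lambda f: info_gain(rows, labels, f))
    rest = [f for f in features if f != best]
    node = {'feature': best, 'branches': {}}
    for v in domains[best]:  # one branch per possible value, even if unseen here
        idx = [i for i, r in enumerate(rows) if r[best] == v]
        node['branches'][v] = build_tree([rows[i] for i in idx], [labels[i] for i in idx],
                                         rest, domains, majority)
    return node


# Hypothetical toy data: 'a' fully determines the class, 'b' is noise
rows = [{'a': 0, 'b': 0}, {'a': 0, 'b': 1}, {'a': 1, 'b': 0}, {'a': 1, 'b': 1}]
labels = ['no', 'no', 'yes', 'yes']
domains = {'a': [0, 1, 2], 'b': [0, 1]}  # value 2 of 'a' never occurs, triggering case (3)
print(build_tree(rows, labels, ['a', 'b'], domains))
# {'feature': 'a', 'branches': {0: 'no', 1: 'yes', 2: 'no'}}
```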

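1.3 Classification principle

Information gain measures how much knowing feature A reduces the uncertainty of the sample set. The information entropy of dataset D is:

$$H(D) = -\sum_{k=1}^{K}\frac{|C_k|}{|D|}\log_2\frac{|C_k|}{|D|}$$

where $C_k$ is the subset of samples in $D$ that belong to class $k$, and $K$ is the number of classes.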

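For a feature A, the conditional entropy H(D|A) of dataset D is:

$$H(D|A) = \sum_{i=1}^{n}\frac{|D_i|}{|D|}H(D_i) = -\sum_{i=1}^{n}\frac{|D_i|}{|D|}\sum_{k=1}^{K}\frac{|D_{ik}|}{|D_i|}\log_2\frac{|D_{ik}|}{|D_i|}$$

where the $n$ distinct values of A partition $D$ into subsets $D_1, \dots, D_n$, and $D_{ik}$ is the set of samples in $D_i$ that belong to class $k$.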

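Information gain is the information entropy minus the conditional entropy:

$$g(D, A) = H(D) - H(D|A)$$

The larger the information gain, the larger the "purity improvement" obtained by splitting on feature A.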
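The three formulas can be checked on a tiny example. This sketch assumes a hypothetical four-sample dataset (feature `A`, labels `Y`); the helper names are illustrative and independent of the implementation in section 3.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """H(D) = -sum_k |C_k|/|D| * log2(|C_k|/|D|)."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def conditional_entropy(values, labels):
    """H(D|A) = sum_i |D_i|/|D| * H(D_i), grouping samples by the value of A."""
    n = len(labels)
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(v, []).append(y)
    return sum(len(g) / n * entropy(g) for g in groups.values())


def information_gain(values, labels):
    """g(D, A) = H(D) - H(D|A)."""
    return entropy(labels) - conditional_entropy(values, labels)


# Hypothetical toy data: feature A and class labels of 4 samples
A = ['x', 'x', 'y', 'y']
Y = ['yes', 'yes', 'no', 'yes']
print(round(entropy(Y), 3))                  # H(D)   -> 0.811
print(round(conditional_entropy(A, Y), 3))   # H(D|A) -> 0.5
print(round(information_gain(A, Y), 3))      # g(D,A) -> 0.311
```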

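2. Classification criteria

2.1 Discrete and continuous attributes

Jimei University (集美大学) surveyed whether students go back to their dormitory at night. We classify whether a student returns to the dormitory (是否回宿舍) using the discrete attributes gender, major and post-graduation plan (性别, 专业, 毕业去向) and the continuous attribute academic score (学习成绩).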

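2.2 Handling continuous values

Continuous values cannot be split directly with these criteria. For example, suppose 5 samples have scores (60, 61, 62, 63, 90). If every distinct score is treated as its own category (60, 61, and so on), each branch holds exactly one sample, i.e. 1/5 of the data. Every branch is then trivially pure, so the conditional entropy is 0 and the information gain is as large as it can be, yet this split is unreasonable: scores around 60 clearly belong together, and a new score such as 64 matches no branch at all. Continuous values are therefore split into two parts (bi-partition) instead.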
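A short sketch of the problem, assuming hypothetical labels for the five scores (the text gives only the scores): when each distinct score forms its own branch, the conditional entropy is 0 whatever the labels are, so the score looks like a perfect feature.

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def conditional_entropy(values, labels):
    # Group samples by their exact value: every distinct score is its own branch
    n = len(labels)
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(v, []).append(y)
    return sum(len(g) / n * entropy(g) for g in groups.values())


scores = [60, 61, 62, 63, 90]
labels = ['no', 'yes', 'no', 'yes', 'no']   # hypothetical labels
print(conditional_entropy(scores, labels))  # 0.0: five one-sample branches, all "pure"
print(round(entropy(labels) - conditional_entropy(scores, labels), 3))  # 0.971, the gain equals H(D)
print(64 in set(scores))                    # False: an unseen score has no branch to follow
```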

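2.3 How continuous values are split

- Sort all values $a^1 \le a^2 \le \dots \le a^n$ and take the midpoint of each pair of adjacent values, $\frac{a^i + a^{i+1}}{2}$ for $1 \le i \le n-1$, as a candidate threshold;

- For each candidate threshold, compute the empirical conditional entropy of the resulting two-way split;

- Compute the entropy of the current node and, from it, the information gain of each candidate threshold;

- Choose the threshold with the largest gain as the split point: values less than or equal to it become 0, values above it become 1.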
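The steps above can be sketched as a standalone function. This is a minimal version under our own assumptions: the name `best_threshold` is hypothetical, it works on plain Python lists, and it forms midpoints from distinct values only (midpoints between equal values would not create new partitions). It is run on the scores and labels of the dataset in section 3.1.

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, gain) of the best two-way split of a continuous feature."""
    n = len(labels)
    base = entropy(labels)                        # entropy of the current node
    distinct = sorted(set(values))
    best_t, best_gain = None, float('-inf')
    for lo, hi in zip(distinct, distinct[1:]):
        t = (lo + hi) / 2                         # candidate: midpoint of adjacent values
        left = [y for v, y in zip(values, labels) if v <= t]   # branch 0
        right = [y for v, y in zip(values, labels) if v > t]   # branch 1
        cond = len(left) / n * entropy(left) + len(right) / n * entropy(right)
        if base - cond > best_gain:               # keep the first threshold with the largest gain
            best_t, best_gain = t, base - cond
    return best_t, best_gain


# Scores (学习成绩) and labels (是否回宿舍) of the 18 rows in create_data()
scores = [78, 80, 79, 79, 79, 83, 77, 76, 75, 76, 79, 85, 88, 87, 88, 78, 90, 79]
labels = ['是', '是', '否', '否', '是', '否', '是', '否', '否',
          '是', '是', '否', '否', '是', '否', '是', '否', '否']
t, g = best_threshold(scores, labels)
binary = [0 if s <= t else 1 for s in scores]    # values <= t become 0, the rest 1
print(t)  # 87.5
```

For this data the best midpoint is 87.5, with a gain of roughly 0.160.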

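3. Implementation

3.1 Creating the dataset

```python
import numpy as np
import pandas as pd
from math import log


def create_data():
    # Columns: gender, score, major, post-graduation plan, returns to dorm (class label)
    datasets = [['男', '78', '计算机', '考研', '是'],
                ['男', '80', '师范', '考研', '是'],
                ['男', '79', '计算机', '就业', '否'],
                ['男', '79', '师范', '就业', '否'],
                ['男', '79', '财经', '考研', '是'],
                ['男', '83', '计算机', '考公', '否'],
                ['男', '77', '财经', '考研', '是'],
                ['男', '76', '师范', '就业', '否'],
                ['男', '75', '计算机', '考研', '否'],
                ['女', '76', '计算机', '考研', '是'],
                ['女', '79', '师范', '考研', '是'],
                ['女', '85', '计算机', '就业', '否'],
                ['女', '88', '师范', '就业', '否'],
                ['女', '87', '财经', '考研', '是'],
                ['女', '88', '计算机', '考公', '否'],
                ['女', '78', '财经', '考研', '是'],
                ['女', '90', '师范', '就业', '否'],
                ['女', '79', '计算机', '考研', '否'],
                ]
    labels = [u'性别', u'学习成绩', u'专业', u'毕业去向', u'是否回宿舍']
    # Store the scores as floats so later code can recognize the continuous attribute
    for row in datasets:
        row[1] = float(row[1])
    # Return the dataset and the name of each column
    return datasets, labels
```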

3.2 Computing information gain

3.2.1 Information entropy

```python
from math import log


# Information entropy H(D) of the class labels (last column)
def calc_ent(datasets):
    data_length = len(datasets)
    label_count = {}
    for i in range(data_length):
        label = datasets[i][-1]
        if label not in label_count:
            label_count[label] = 0
        label_count[label] += 1
    ent = -sum([(p / data_length) * log(p / data_length, 2) for p in label_count.values()])
    return ent
```

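3.2.2 Conditional entropy

```python
# Empirical conditional entropy H(D|A) of the feature in column `axis`
def cond_ent(datasets, axis=0):
    data_length = len(datasets)
    feature_sets = {}
    # Group the samples by their value of the feature
    for i in range(data_length):
        feature = datasets[i][axis]
        if feature not in feature_sets:
            feature_sets[feature] = []
        feature_sets[feature].append(datasets[i])
    # Weighted sum of the entropy of each group
    cond_ent = sum([(len(p) / data_length) * calc_ent(p) for p in feature_sets.values()])
    return cond_ent
```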

3.2.3 Information gain

```python
# Information gain g(D, A) = H(D) - H(D|A)
def info_gain(ent, cond_ent):
    return ent - cond_ent
```

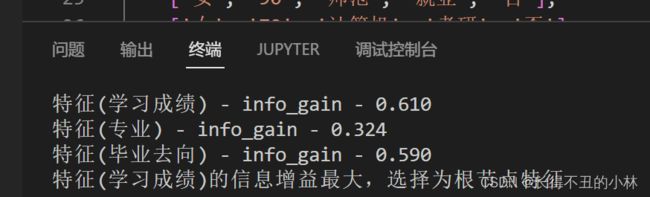

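3.4 Using the information gain function to choose the root node

```python
# Compute the information gain of every feature and pick the largest as the root
def info_gain_train(datasets, labels):
    count = len(datasets[0]) - 1  # number of features (the last column is the label)
    ent = calc_ent(datasets)
    best_feature = []
    for c in range(count):
        c_info_gain = info_gain(ent, cond_ent(datasets, axis=c))
        best_feature.append((c, c_info_gain))
        print('Feature ({}) - info_gain - {:.3f}'.format(labels[c], c_info_gain))
    # Feature with the largest gain
    best_ = max(best_feature, key=lambda x: x[-1])
    return 'Feature ({}) has the largest information gain and is chosen as the root feature'.format(labels[best_[0]])


datasets, labels = create_data()
data_df = pd.DataFrame(datasets, columns=labels)
a = info_gain_train(np.array(datasets), labels)
print(a)
```

Run results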

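Running this prints the information gain of each feature. 学习成绩 (score) has the largest gain, so it would be chosen as the root node. Note, however, that at this point the score is still treated as a discrete attribute: every distinct score forms its own branch, which is exactly the situation described in 2.2 and inflates the score's gain. This is why the continuous value is binarized next.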
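As a rough check of that note, the sketch below recomputes the root-level gains by hand from the same 18 rows, once with the raw score treated as a category and once with the score binarized at 87.5, the midpoint that maximizes the gain for this data under the procedure of 2.3. The helper names are our own; only the data comes from `create_data()`.

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def gain(values, labels):
    """g(D, A) with every distinct value of A treated as its own branch."""
    n = len(labels)
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(v, []).append(y)
    return entropy(labels) - sum(len(g) / n * entropy(g) for g in groups.values())


# The 18 rows of create_data(), column by column
gender = ['男'] * 9 + ['女'] * 9
scores = [78, 80, 79, 79, 79, 83, 77, 76, 75, 76, 79, 85, 88, 87, 88, 78, 90, 79]
major = ['计算机', '师范', '计算机', '师范', '财经', '计算机', '财经', '师范', '计算机',
         '计算机', '师范', '计算机', '师范', '财经', '计算机', '财经', '师范', '计算机']
plan = ['考研', '考研', '就业', '就业', '考研', '考公', '考研', '就业', '考研',
        '考研', '考研', '就业', '就业', '考研', '考公', '考研', '就业', '考研']
labels = ['是', '是', '否', '否', '是', '否', '是', '否', '否',
          '是', '是', '否', '否', '是', '否', '是', '否', '否']

print('性别', round(gain(gender, labels), 3))               # about 0.000
print('学习成绩 (raw)', round(gain(scores, labels), 3))      # about 0.610
print('专业', round(gain(major, labels), 3))                 # about 0.324
print('毕业去向', round(gain(plan, labels), 3))              # about 0.590
binary = [0 if s <= 87.5 else 1 for s in scores]
print('学习成绩 (<= 87.5)', round(gain(binary, labels), 3))  # about 0.160
```

With these numbers, once the score is binarized its gain drops to about 0.160, and 毕业去向 (about 0.590) has the largest gain at the root.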

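3.5 Discretizing the continuous value

For simplicity, this experiment uses only one continuous attribute.

The function first checks whether a continuous attribute exists. So that the threshold can be reused at prediction time, it returns both the transformed dataset and the chosen threshold.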

```python
import numpy as np


# Convert the continuous attribute into a discrete 0/1 attribute.
# `datasets` must be a 2-D object array, e.g. np.array(datasets, dtype=object),
# so that float scores stay floats; the last column is the class label.
def trainsform(datasets):
    pre_change = -1
    # Find the continuous (float-valued) feature column, skipping the label column
    for i in range(len(datasets[0]) - 1):
        if isinstance(datasets[0][i], float):
            pre_change = i
    # No continuous attribute
    if pre_change == -1:
        return datasets, -1
    ent = calc_ent(datasets)
    pre_feature = datasets[:, pre_change].astype(float)
    # Candidate thresholds: midpoints of adjacent values after sorting
    # (duplicates are removed first; they would not create new partitions)
    values = np.unique(pre_feature)
    datasets = np.copy(datasets)
    if len(values) < 2:
        # Only one distinct value: nothing to split on
        datasets[:, pre_change] = 0
        return datasets, values[0]
    eta_ls = (values[:-1] + values[1:]) / 2
    ent_count = []
    for eta in eta_ls:
        tmp_datasets = np.copy(datasets)
        # Binarize in the original row order so each value stays next to its label
        tmp_datasets[:, pre_change] = (pre_feature > eta).astype(int)
        gain = info_gain(ent, cond_ent(tmp_datasets, axis=pre_change))
        ent_count.append(gain)
    # Keep the threshold with the largest information gain
    best = int(np.argmax(ent_count))
    datasets[:, pre_change] = (pre_feature > eta_ls[best]).astype(int)
    return datasets, eta_ls[best]
```

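3.6 Defining the node class

```python
# Tree node: multi-way split for discrete features, two-way split for the continuous one
class Node:
    def __init__(self, root=True, label=None, feature_name=None, feature=None, threshold=None):
        self.root = root                  # True: this node is a leaf
        self.label = label                # class label of a leaf
        self.feature_name = feature_name  # name of the splitting feature
        self.feature = feature            # index of that feature in the full feature vector
        self.threshold = threshold        # split point if the feature is continuous, else None
        self.tree = {}                    # branch value -> child Node
        self.result = {'label': self.label, 'feature': self.feature,
                       'threshold': self.threshold, 'tree': self.tree}

    def __repr__(self):
        return '{}'.format(self.result)

    def add_node(self, val, node):
        self.tree[val] = node

    def predict(self, features):
        if self.root is True:
            return self.label
        value = features[self.feature]
        if self.threshold is not None:
            # Continuous feature: map the raw value to branch 0 (<= threshold) or 1
            value = 0 if float(value) <= self.threshold else 1
        return self.tree[value].predict(features)
```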

3.7 Decision tree

3.7.1 Defining the decision tree

```python
import numpy as np
import pandas as pd
from math import log


class DTree:
    def __init__(self, epsilon=0.1):
        self.epsilon = epsilon  # minimum information gain required to split a node
        self._tree = {}
        self.features = []      # names of all features, set in fit()

    # Entropy H(D)
    @staticmethod
    def calc_ent(datasets):
        data_length = len(datasets)
        label_count = {}
        for i in range(data_length):
            label = datasets[i][-1]
            if label not in label_count:
                label_count[label] = 0
            label_count[label] += 1
        ent = -sum([(p / data_length) * log(p / data_length, 2) for p in label_count.values()])
        return ent

    # Empirical conditional entropy H(D|A) of the feature in column `axis`
    def cond_ent(self, datasets, axis=0):
        data_length = len(datasets)
        feature_sets = {}
        for i in range(data_length):
            feature = datasets[i][axis]
            if feature not in feature_sets:
                feature_sets[feature] = []
            feature_sets[feature].append(datasets[i])
        cond_ent = sum([(len(p) / data_length) * self.calc_ent(p) for p in feature_sets.values()])
        return cond_ent

    # Information gain g(D, A) = H(D) - H(D|A)
    @staticmethod
    def info_gain(ent, cond_ent):
        return ent - cond_ent

    # Convert the continuous column to 0/1; returns (datasets, column index, threshold)
    def trainsform(self, datasets):
        pre_change = -1
        for i in range(len(datasets[0]) - 1):  # skip the label column
            if isinstance(datasets[0][i], float):
                pre_change = i
        if pre_change == -1:
            return datasets, -1, None
        ent = self.calc_ent(datasets)
        pre_feature = datasets[:, pre_change].astype(float)
        values = np.unique(pre_feature)  # sorted distinct values
        datasets = np.copy(datasets)
        if len(values) < 2:  # constant column: nothing to split on
            datasets[:, pre_change] = 0
            return datasets, pre_change, values[0]
        eta_ls = (values[:-1] + values[1:]) / 2  # candidate thresholds (midpoints)
        ent_count = []
        for eta in eta_ls:
            tmp_datasets = np.copy(datasets)
            # Binarize in the original row order so values stay aligned with labels
            tmp_datasets[:, pre_change] = (pre_feature > eta).astype(int)
            ent_count.append(self.info_gain(ent, self.cond_ent(tmp_datasets, axis=pre_change)))
        eta = eta_ls[int(np.argmax(ent_count))]
        datasets[:, pre_change] = (pre_feature > eta).astype(int)
        return datasets, pre_change, eta

    # Index and information gain of the best feature
    def info_gain_train(self, datasets):
        count = len(datasets[0]) - 1
        ent = self.calc_ent(datasets)
        best_feature = []
        for c in range(count):
            c_info_gain = self.info_gain(ent, self.cond_ent(datasets, axis=c))
            best_feature.append((c, c_info_gain))
        best_ = max(best_feature, key=lambda x: x[-1])
        return best_

    def train(self, train_data):
        """
        input: dataset D (DataFrame, last column = label), feature set A, threshold epsilon
        output: decision tree T
        """
        y_train, features = train_data.iloc[:, -1], train_data.columns[:-1]
        majority = y_train.value_counts().index[0]  # most frequent class in D
        # 1. All instances in D belong to one class Ck: single-node tree labelled Ck
        if len(y_train.value_counts()) == 1:
            return Node(root=True, label=y_train.iloc[0])
        # 2. A is empty: single-node tree labelled with the majority class of D
        if len(features) == 0:
            return Node(root=True, label=majority)
        # 3. Ag = feature with the largest information gain; the continuous
        #    feature (if any) is binarized first, using this subset's best threshold
        datasets, cont_col, eta = self.trainsform(np.array(train_data, dtype=object))
        max_feature, max_info_gain = self.info_gain_train(datasets)
        max_feature_name = features[max_feature]
        # 4. Gain of Ag below epsilon: single-node tree labelled with the majority class
        if max_info_gain < self.epsilon:
            return Node(root=True, label=majority)
        # 5. Split D on Ag; `feature` is Ag's index in the full feature vector,
        #    so prediction still works after columns are dropped below
        is_cont = max_feature == cont_col
        node_tree = Node(root=False, feature_name=max_feature_name,
                         feature=self.features.index(max_feature_name),
                         threshold=eta if is_cont else None)
        if is_cont:
            col = train_data[max_feature_name].astype(float)
            subsets = {0: train_data[col <= eta], 1: train_data[col > eta]}
        else:
            subsets = {f: train_data[train_data[max_feature_name] == f]
                       for f in train_data[max_feature_name].unique()}
        for f, sub_train_df in subsets.items():
            if len(sub_train_df) > 0:
                # 6. Recursively build a subtree on D_i without Ag
                sub_tree = self.train(sub_train_df.drop([max_feature_name], axis=1))
                node_tree.add_node(f, sub_tree)
        return node_tree

    def fit(self, train_data):
        self.features = list(train_data.columns[:-1])
        self._tree = self.train(train_data)
        return self._tree

    def predict(self, X_test):
        # X_test: feature values in training-column order; Node.predict maps the
        # continuous value to branch 0/1 with the threshold stored in each node
        return self._tree.predict(X_test)
```

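3.7.2 Building the tree and predicting

```python
datasets, labels = create_data()
data_df = pd.DataFrame(datasets, columns=labels)
dt = DTree()
tree = dt.fit(data_df)
print(tree)  # nested view of the learned tree
# Feature values in training-column order: gender, score, major, plan
print(dt.predict(['男', '79', '计算机', '考研']))
```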