Implementing a Classifier with PyTorch

Learning objectives

- Understand the classifier task and the format of the data

- Learn how to implement a classifier with PyTorch

Classifier task and data overview

- Build a neural network classifier that takes an input image and assigns it to one of several classes.

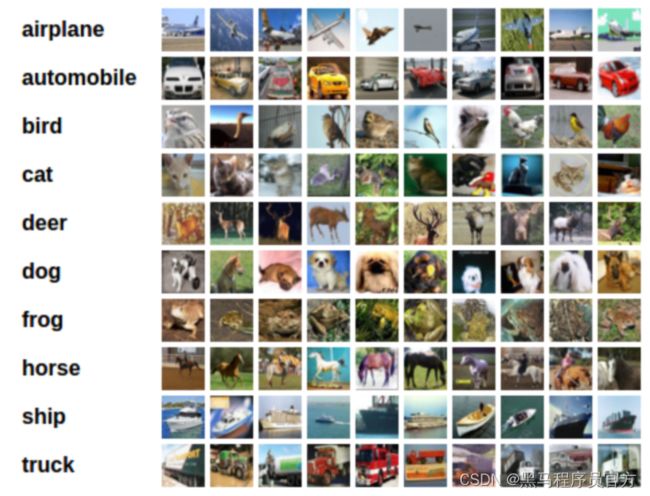

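- This example uses the CIFAR10 dataset as the raw image data.

About CIFAR10: each image in the dataset has shape 3 * 32 * 32, that is, 3 color channels of 32 * 32 pixels each.

CIFAR10 contains 10 classes: "airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck".

- Sample images from the CIFAR10 dataset are shown in the figure below: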

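Steps for training the classifier

- 1: Download the CIFAR10 dataset with torchvision

- 2: Define a convolutional neural network

- 3: Define the loss function

- 4: Train the model on the training set

- 5: Test the model on the test set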

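- 1: Download the CIFAR10 dataset with torchvision

- Import torchvision to help download the dataset

import torch
import torchvision
import torchvision.transforms as transforms

- Download the dataset and preprocess the images. torchvision datasets return PIL images; ToTensor converts them to tensors with values in [0, 1], and Normalize then maps each channel to the standard range [-1, 1].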

transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

- Output:

Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to ./data/cifar-10-python.tar.gz

Extracting ./data/cifar-10-python.tar.gz to ./data

Files already downloaded and verified

- Note:

- If you run the code above on Windows and get a "BrokenPipeError", try setting num_workers to 0 in torch.utils.data.DataLoader().
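To make the normalization step concrete, the sketch below reproduces by hand the arithmetic that Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)) applies, using a random tensor as a stand-in for a real image. The helper names `normalize` and `unnormalize` are illustrative assumptions and are not part of torchvision.

```python
import torch

def normalize(img, mean=0.5, std=0.5):
    # Same formula Normalize applies per channel: (x - mean) / std
    return (img - mean) / std

def unnormalize(img, mean=0.5, std=0.5):
    # Inverse mapping: x * std + mean (equivalent to img / 2 + 0.5 here)
    return img * std + mean

# Random stand-in for a ToTensor() output: shape 3 x 32 x 32, values in [0, 1)
img = torch.rand(3, 32, 32)

norm = normalize(img)          # values now lie in [-1, 1]
restored = unnormalize(norm)   # back to the original [0, 1] range

print(norm.min().item() >= -1.0, norm.max().item() <= 1.0)
print(torch.allclose(restored, img, atol=1e-6))
```

The inverse mapping is what a display helper needs before plotting, since matplotlib expects float images in [0, 1].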

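- Display a few images from the training set

# Import the plotting package and numpy
import matplotlib.pyplot as plt
import numpy as np

# Helper function that displays an image
def imshow(img):
    img = img / 2 + 0.5  # undo the normalization: [-1, 1] -> [0, 1]
    npimg = img.numpy()
    # reorder (channels, height, width) to (height, width, channels) for matplotlib
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()

# Read one batch of images from the data iterator
dataiter = iter(trainloader)
images, labels = next(dataiter)

# Show the images
imshow(torchvision.utils.make_grid(images))

# Print the labels
print(' '.join('%5s' % classes[labels[j]] for j in range(4)))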

- Image output:

- Label output:

bird truck cat cat

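- 2: Define a convolutional neural network

- The class here is modeled on the one from Section 2.1; the only difference is that the input now has 3 channels.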

import torch.nn as nn
import torch.nn.functional as F

class Net(nn.Module):

    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x

net = Net()
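The input size of fc1, 16 * 5 * 5, follows from how each layer shrinks the 32 * 32 image. The sketch below traces that arithmetic with the standard output-size formula for convolution and pooling layers; the helper name `conv_out` is an illustrative assumption.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

size = 32                                   # CIFAR10 images are 32 x 32
size = conv_out(size, kernel=5)             # conv1 (5x5 kernel): 32 -> 28
size = conv_out(size, kernel=2, stride=2)   # max pool (2x2):     28 -> 14
size = conv_out(size, kernel=5)             # conv2 (5x5 kernel): 14 -> 10
size = conv_out(size, kernel=2, stride=2)   # max pool (2x2):     10 -> 5

# conv2 produces 16 channels, so the flattened feature vector has 16 * 5 * 5 entries
flat_features = 16 * size * size
print(size, flat_features)  # 5 400
```

If the kernel size, padding, or input resolution changes, the first argument of fc1 has to be recomputed the same way.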

- 3: Define the loss function

- Use the cross-entropy loss and a stochastic gradient descent (SGD) optimizer with momentum.

import torch.optim as optim

criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)
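As a rough illustration of what the cross-entropy loss computes, the sketch below compares nn.CrossEntropyLoss with a manual calculation: a log-softmax over the raw network outputs followed by the mean negative log-likelihood of the true classes. The logits and labels are random stand-ins, not real model outputs.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Stand-in for a batch of 4 network outputs over 10 classes (raw scores, no softmax)
logits = torch.randn(4, 10)
labels = torch.tensor([3, 8, 8, 0])

# Built-in loss: expects raw logits and integer class labels
builtin = nn.CrossEntropyLoss()(logits, labels)

# Manual version: log-softmax, then pick the log-probability of each true class
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(4), labels].mean()

print(builtin.item(), manual.item())
```

Because the softmax is applied inside the loss, the network's last layer returns raw scores without any activation.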

- 4: Train the model on the training set

- Optimization algorithms based on gradient descent need many iterations over the training data.

for epoch in range(2):  # loop over the dataset multiple times

    running_loss = 0.0
    for i, data in enumerate(trainloader, 0):
        # data contains the input image tensor inputs and the label tensor labels
        inputs, labels = data

        # First reset the gradients held by the optimizer to zero
        optimizer.zero_grad()

        # Feed the image tensor through the network to get outputs
        outputs = net(inputs)

        # Compute the loss from the network outputs and the labels
        loss = criterion(outputs, labels)

        # Backpropagation followed by a parameter update: the standard training step
        loss.backward()
        optimizer.step()

        # Print the epoch, the batch index, and the average loss over the last 2000 batches
        running_loss += loss.item()
        if (i + 1) % 2000 == 0:
            print('[%d, %5d] loss: %.3f' %
                  (epoch + 1, i + 1, running_loss / 2000))
            running_loss = 0.0

print('Finished Training')

- Output:

[1, 2000] loss: 2.227

[1, 4000] loss: 1.884

[1, 6000] loss: 1.672

[1, 8000] loss: 1.582

[1, 10000] loss: 1.526

[1, 12000] loss: 1.474

[2, 2000] loss: 1.407

[2, 4000] loss: 1.384

[2, 6000] loss: 1.362

[2, 8000] loss: 1.341

[2, 10000] loss: 1.331

[2, 12000] loss: 1.291

Finished Training
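The training loop resets the gradients at the start of every step because PyTorch accumulates gradients across backward() calls. The small sketch below shows that behavior on a single parameter tensor; it calls `w.grad.zero_()` directly as a stand-in for optimizer.zero_grad().

```python
import torch

w = torch.tensor([1.0, 2.0], requires_grad=True)

# First backward pass: d/dw sum(w^2) = 2 * w
(w ** 2).sum().backward()
first = w.grad.clone()

# Second backward pass without zeroing: the new gradient is added to the old one
(w ** 2).sum().backward()
accumulated = w.grad.clone()

# Zero the gradient, then run backward again: only the fresh gradient remains
w.grad.zero_()
(w ** 2).sum().backward()
fresh = w.grad.clone()

print(first.tolist(), accumulated.tolist(), fresh.tolist())
# [2.0, 4.0] [4.0, 8.0] [2.0, 4.0]
```

Skipping the reset would make each update use the sum of the gradients from all earlier batches.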

- Save the model:

# First set the path where the model will be saved
PATH = './cifar_net.pth'

# Save the model's state dictionary
torch.save(net.state_dict(), PATH)
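A state dictionary maps parameter names to tensors, so saving and reloading it restores the weights but not the class definition. The sketch below shows the round trip on a tiny nn.Linear model, writing to an in-memory buffer instead of a file; both the model and the buffer are assumptions made to keep the example self-contained.

```python
import io

import torch
import torch.nn as nn

model = nn.Linear(4, 2)
print(list(model.state_dict().keys()))  # ['weight', 'bias']

# Save the state dictionary to an in-memory buffer (a file path works the same way)
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
buffer.seek(0)

# A fresh instance has different random weights until the state dict is loaded
restored = nn.Linear(4, 2)
restored.load_state_dict(torch.load(buffer))

same = all(
    torch.equal(a, b)
    for a, b in zip(model.state_dict().values(), restored.state_dict().values())
)
print(same)
```

This is why a new model object has to be created from the same class before its saved weights can be loaded.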

- 5: Test the model on the test set

- First, display a few images from the test set

dataiter = iter(testloader)
images, labels = next(dataiter)

# Show the original images
imshow(torchvision.utils.make_grid(images))

# Print the ground-truth labels
print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

- Image output:

- Label output:

GroundTruth: cat ship ship plane

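- Second, load the model and predict the classes of the test images

# First instantiate the model class
net = Net()

# Load the state dictionary saved during training
net.load_state_dict(torch.load(PATH))

# Run the model on the images
outputs = net(images)

# There are 10 classes; take the class with the highest output score as the prediction
_, predicted = torch.max(outputs, 1)

# Print the predicted labels
print('Predicted: ', ' '.join('%5s' % classes[predicted[j]] for j in range(4)))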

- Output:

Predicted: cat ship ship plane
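To clarify the prediction step, the sketch below shows what torch.max(outputs, 1) returns on a small hand-written tensor. The logits are made-up values standing in for real network outputs.

```python
import torch
import torch.nn.functional as F

# Stand-in for network outputs: 2 samples, 3 classes (raw scores)
logits = torch.tensor([[0.1, 2.0, -1.0],
                       [1.5, 0.3, 0.2]])

# torch.max along dim 1 returns (max values, indices of the max values)
values, predicted = torch.max(logits, 1)
print(predicted.tolist())  # [1, 0]

# Softmax turns scores into probabilities but does not change which class is largest
probs = F.softmax(logits, dim=1)
print(torch.equal(probs.argmax(dim=1), predicted))  # True
```

Since softmax preserves the ordering of the scores, the predicted class can be read directly from the raw outputs.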

- Next, evaluate the model on the entire test set

correct = 0
total = 0

# No gradients are needed for evaluation
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %d %%' % (
    100 * correct / total))

- Output:

Accuracy of the network on the 10000 test images: 53 %

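- Analysis: for a dataset with 10 classes, random guessing gives about 10% accuracy. The model reaches 53%, well above chance, which shows that it has learned something meaningful.

- To see in more detail which classes the model handles well and which it handles poorly, we compute the accuracy separately for each class.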

class_correct = list(0. for i in range(10))
class_total = list(0. for i in range(10))

with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)
        c = (predicted == labels)
        # Iterate over the actual batch size rather than a hard-coded 4
        for i in range(labels.size(0)):
            label = labels[i].item()
            class_correct[label] += c[i].item()
            class_total[label] += 1

for i in range(10):
    print('Accuracy of %5s : %2d %%' % (
        classes[i], 100 * class_correct[i] / class_total[i]))

- Output:

Accuracy of plane : 62 %

Accuracy of car : 62 %

Accuracy of bird : 45 %

Accuracy of cat : 36 %

Accuracy of deer : 52 %

Accuracy of dog : 25 %

Accuracy of frog : 69 %

Accuracy of horse : 60 %

Accuracy of ship : 70 %

Accuracy of truck : 48 %
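The per-class counts can also be computed without an inner Python loop. The sketch below is one possible vectorized variant using torch.bincount on a small made-up batch with 3 classes; it is an alternative illustration, not the approach used in the code above.

```python
import torch

num_classes = 3

# Made-up ground-truth labels and predictions for 6 samples
labels = torch.tensor([0, 1, 1, 2, 2, 2])
predicted = torch.tensor([0, 1, 0, 2, 2, 1])

# Number of samples per class
class_total = torch.bincount(labels, minlength=num_classes)

# Number of correctly predicted samples per class: count labels where prediction matches
class_correct = torch.bincount(labels[predicted == labels], minlength=num_classes)

accuracy = class_correct.float() / class_total.float()
print(class_total.tolist(), class_correct.tolist())  # [1, 2, 3] [1, 1, 2]
```

On a full test set the two count tensors would be accumulated across batches before the division.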

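Training the model on a GPU

- To take full advantage of PyTorch tensors and speed up training, we can move the training process to a GPU.

- First define the device: it is set to the GPU if CUDA is available, and to the CPU otherwise.

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(device)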

- Output:

cuda:0

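- During training, move the model to the GPU, and also move the input images and labels to the GPU.

# Move the model to the GPU
net.to(device)

# Inside the training loop, move the input image tensor and the label tensor to the GPU
inputs, labels = data[0].to(device), data[1].to(device)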
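Putting these pieces together, the sketch below runs one complete training step on whichever device is available. To stay self-contained it uses a small linear model and a random batch as stand-ins for Net and the CIFAR10 loader; both are assumptions for illustration only.

```python
import torch
import torch.nn as nn
import torch.optim as optim

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Stand-in model; .to(device) moves a module's parameters in place
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 10)).to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

# Stand-in batch: 4 random "images" and 4 random labels
inputs = torch.randn(4, 3, 32, 32)
labels = torch.randint(0, 10, (4,))

# For tensors, .to(device) returns a new tensor, so the result must be reassigned
inputs, labels = inputs.to(device), labels.to(device)

# One standard training step, identical to the CPU version
optimizer.zero_grad()
outputs = model(inputs)
loss = criterion(outputs, labels)
loss.backward()
optimizer.step()

print(outputs.device, loss.item())
```

The model and every tensor passed to it must live on the same device; mixing CPU and GPU tensors raises a runtime error.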