FAIRNESS IN MACHINE LEARNING: A SURVEY 阅读笔记

论文链接

刚读完一篇关于机器学习领域研究公平性的综述,这篇综述想必与其有许多共通之处,重合部分不再整理笔记,可详见上一篇论文的笔记:

A Survey on Bias and Fairness in Machine Learning 阅读笔记_Catherine_he_ye的博客

Section 1 引言

这篇文章试图在机器学习文献中提供不同的思想流派和减轻(社会)偏见和增加公平的方法的概述。它将方法组织成广泛接受的预处理、在处理和后处理方法框架,再细分为11个方法领域。尽管大多数文献强调二元分类,但是关于回归、推荐系统、无监督学习和自然语言处理方面的公平性的讨论也与当前可用的开源库一起提供。最后本文总结了公平研究面临的四个难题。

Section 2 机器学习中的公平性:关键的方法论组成部分

虽然不是所有的公平ML方法都符合下面这个框架,但它提供了一个很好理解的参考点,并作为ML中公平方法分类中的一个维度。

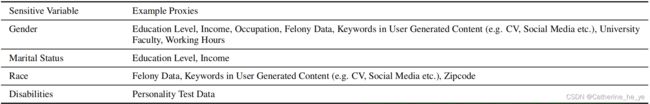

2.1 Sensitive and Protected Variables and (Un)privileged Groups

Most approaches to mitigate unfairness, bias, or discrimination are based on the notion of protected or sensitive variables (we will use the terms interchangeably) and on (un)privileged groups: groups (often defifined by one or more sensitive variables) that are disproportionately (less) more likely to be positively classifified.

① Metrics usually either emphasize individual (e.g. everyone is treated equal), or group fairness, where the latter is further differentiated to within group (e.g. women vs. men) and between group (e.g. young women vs. black men) fairness.

② Increasing fairness often results in lower overall accuracy or related metrics, leading to the necessity of analyzing potentially achievable trade-offs in a given scenario.

2.3 Pre-processing 预处理

在一个“已修复的”数据集上训练一个模型,预处理被认为是数据科学流水线中最灵活的部分,因为它对随后应用的建模技术的选择不做任何假设。

① A distinct advantage of pre- and post-processing approaches is that they do not modify the ML method explicitly. However, they have no direct control over the optimization function of the ML model itself.② This means that (open source) ML libraries can be leveraged unchanged for model training. Only in-processing approaches can optimize notions of fairness during model training. Yet, this requires the optimization function to be either accessible, replaceable, and/or modififiable, which may not always be the case.

Section 3 度量公平与偏见

3.1 Abstract Fairness Criteria

大多数关于公平性的定量定义都围绕着一个(二类)分类器的三个基本方面:

① 敏感变量S(区别受保护群体和非保护群体);② 目标变量Y(真实的类别);③ 分类分数R(预测的分类结果)

基于此三要素,general fairness desiderata被分为三个“非歧视”标准:

① Independence:评分R独立于敏感变量S,e.g., Statistical/Demographic Parity.

② Separation:在已知目标变量Y值的条件下,评分R独立于敏感变量S,e.g., Equalized Odds和Equal Opportunity.

③ Suffificiency:在已知评分R的条件下,目标变量Y独立于敏感变量S.

Parity-based metrics typically consider the predicted positive rates, i.e.,, across different groups.

While parity-based metrics typically consider variants of the predicted positive rate, confusion matrix-based metrics take into consideration additional aspects such as True Positive Rate (TPR), True Negative Rate (TNR), False Positive Rate (FPR), and False Negative Rate (FNR).

e.g., Equal Opportunity: 考虑真阳性,![]()

Overall accuracy equality: 考虑准确性,![]()

Conditional use accuracy equality: 有点不太懂,但是公式在这:![]()

Calibration-based metrics take the predicted probability, or score, into account.

consider the outcome for each participating individual

Section 4 二分类场景下的公平性研究

|

Blinding

|

the approach of making a classififier “immune” to one or more sensitive variables

|

|

Causal Methods

|

A key objective is to uncover causal relationships in the data and fifind dependencies between sensitive and non-sensitive variables.

也用于敏感变量的代理变量的识别,训练数据的去偏。

|

|

Sampling and Subgroup Analysis

|

① 纠正训练数据;② 通过subgroup analysis找到分类器不利的一方 因此,寻求创建公平训练样本的方法在抽样策略中包含进公平的概念。 subgroup analysis 也可用于模型评估,例如分析某一子组是否受歧视,确认某一因素是否影响模型公平性;Statistical hypothesis testing 统计假设检验评价某一模型是否稳健符合公平性指标;通过对敏感变量的抽样,还提出了公平性度量的概率验证,以在某些(小的)置信范围内评估训练过的模型。 |

|

Transformation

|

对数据进行映射或投影以确保公平性。往往部分转换以寻求公平与准确性的trade-off。 虽然转换主要是一种预处理方法,但它也可以在后处理阶段中应用。 |

|

Relabelling and Perturbation

|

作为转换方法的一个子集。 重新标记涉及修改训练数据实例的标签; Perturbation often aligns with notions of “repairing” some aspect(s) of the data with regard to notions of fairness.

Sensitivity analysis explores how various aspects of the feature vector affect a given outcome. 虽然敏感性分析并不是一种提高公平性的方法,但它可以帮助更好地理解关于公平性的不确定性。

|

|

Reweighing

|

reweighing为训练数据的实例分配权重,而保持数据本身不变。 |

|

Regularization and Constraint Optimisation

|

当应用于公平性时,正则化方法添加一个或多个惩罚项,以惩罚分类器的歧视性行为。 约束优化方法在模型训练过程中在分类器损失函数中包含公平性项。 |

|

Adversarial Learning

|

When applied to applications of fairness in ML, an adversary instead seeks to determine whether the training process is fair, and when not, feedback from the adversary is used to improve the model.

|

|

Bandits

|

Bandit approaches frame the fairness problem as a stochastic multi-armed bandit framework, assigning either individuals to arms, or groups of “similar” individuals to arms, and fairness quality as a reward represented as regret.

The two main notions of fairness that have emerged from the application of bandits are meritocratic fairness(group agnostic) and subjective fairness(emphasises fairness in each time period t of the bandit framework).

|

|

Calibration

|

Calibration is the process of ensuring that the proportion of positive predictions is equal to the proportion of positive examples.

|

|

Thresholding

|

后处理方法。 Thresholding is a post-processing approach which is motivated on the basis that discriminatory decisions are often made close to decision making boundaries because of a decision maker’s bias [157] and that humans apply threshold rules when making decisions.

|

Section 5 二分类以外场景下的公平性方法

|

Fair Regression

|

公平回归的主要目标是最小化一个损失函数 |

|

Recommender Systems

|

“C-fairness” for fair user/consumer recommendation (user-based)

“P-fairness” for fairness of producer recommendation (item-based)

之后会有一篇关于推荐系统公平性的综述要读,可以参考。 |

|

Unsupervised Methods

|

1) fair clustering

2) investigating the presence and detection of discrimination in association rule mining

3) transfer learning 迁移学习

|

| NLP |

Unintended biases have also been noticed in NLP; these are often gender or race focused.

|

Section 6 Current Platforms 开源工具

|

Project

|

Features

|

|---|---|

|

AIF360

|

Set of tools that provides several pre-, in-, and post-processing approaches for binary classifification as well as several pre-implemented datasets that are commonly used in Fairness research

|

|

Fairlean

|

Implements several parity-based fairness measures and algorithms for binary classifification and regression as well as a dashboard to visualize disparity in accuracy and parity.

|

|

Aequitas

|

Open source bias audit toolkit. Focuses on standard ML metrics and their evaluation for different subgroups of a protective attribute.

|

|

Responsibly

|

Provides datasets, metrics, and algorithms to measure and mitigate bias in classifification as well as NLP (bias in word embeddings).

|

|

Fairness

|

Tool that provides commonly used fairness metrics (e.g., statistical parity, equalized odds) for R projects.

|

|

FairTest

|

Generic framework that provides measures and statistical tests to detect unwanted associations between the output of an algorithm and a sensitive attribute.

|

|

Fairness Measures

|

Project that considers quantitative defifinitions of discrimination in classifification and ranking scenarios. Provides datasets, measures, and algorithms (for ranking) that investigate fairness.

|

|

Audit AI

|

Implements various statistical signifificance tests to detect discrimination between groups and bias from standard machine learning procedures.

|

|

Dataset Nutrition Label

|

Generates qualitative and quantitative measures and descriptions of dataset health to assess the quality of a dataset used for training and building ML models.

|

|

ML Fairness Gym

|

Part of Google’s Open AI project, a simulation toolkit to study long-run impacts of ML decisions. Analyzes how algorithms that take fairness into consideration change the underlying data (previous classififications) over time.

|

Section 7 Concluding Remarks: The Fairness Dilemmas 公平困境

① Balancing the tradeoff between fairness and model performance

② Quantitative notions of fairness permit model optimization, yet cannot balance different notions of fairness, i.e individual vs. group fairness

③ Tensions between fairness, situational, ethical, and sociocultural context, and policy

④ Recent advances to the state of the art have increased the skills gap inhibiting “man-on-the-street”

and industry uptake

![GEI=\frac{1}{n\alpha (\alpha -1)}\sum_{i=1}^{n}[(\frac{b_i}{\mu })^\alpha -1],\ b_i=\widehat{y_i}-y_i+1 \ and \ \mu =\frac{\sum_{i}^{}b_i}{n}](http://img.e-com-net.com/image/info8/bd5fc21dd7984e1695de870707c20854.gif)