TensorRT(C++)部署 Pytorch模型

众所周知,python训练pytorch模型得到.pt模型。但在实际项目应用中,特别是嵌入式端部署时,受限于语言、硬件算力等因素,往往需要优化部署,而tensorRT是最常用的一种方式。本文以yolov5的部署为例,说明模型部署在x86架构上的电脑端的流程。(部署在Arm架构的嵌入式端的流程类似)。

一、环境安装

1. 安装tensorRT

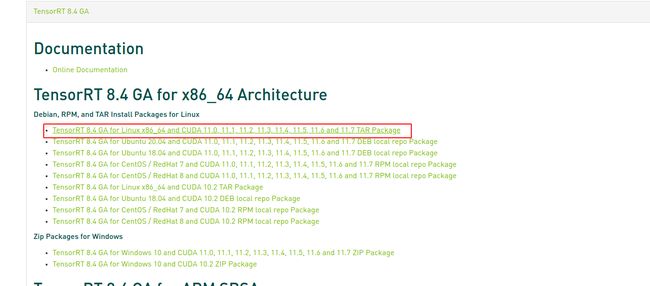

根据自己的系统Ubuntu版本、CPU架构、CUDA版本来选择tensorrt的下载包,连接如下

https://developer.nvidia.com/nvidia-tensorrt-8x-download

注意如果是自己的cuda不是用.deb安装的,最好是下载tensorrt的.tar包进行安装。笔者因为事先配置过pytorch开发环境,安装过cuda(.run)方式,所以使用.deb安装tensorrt时一直报错且未解决,后来使用.tar安装就好了。下载tensorrt的时候要注意版本对应关系,这里的版本对应关系包括cuda和cudnn的版本。如tensorrt使用的TensorRT-8.4.1.5.Linux.x86_64-gnu.cuda-11.6.cudnn8.4.tar.gz,则cuda要使用11.6,cudnn要使用8.4.1,否则编译tensorrt时会报错。

下载后文件为 TensorRT-8.4.1.5.Linux.x86_64-gnu.cuda-11.6.cudnn8.4.tar.gz

执行下列命令

# 解压

tar zxf TensorRT-8.4.1.5.Linux.x86_64-gnu.cuda-11.6.cudnn8.4.tar.gz

# 换个目录

cp TensorRT-8.4.1.5 /opt编辑.bashrc文件把tensorrt的库文件添加到环境变量

# 开大bashrc文件

sudo gedit ~./bashrc

# 在文件末尾添加下列一行

export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/opt/TensorRT-8.4.1.5/lib保存关闭后,完成配置更新环境

source ~/.bashrc复制tensorRT目录下 lib、include文件夹到系统文件夹(或者将这两个文件夹路径添加到.bashrc文件中)

sudo cp -r ./lib/* /usr/lib

sudo cp -r ./include/* /user/include安装完成后,测试是否安装成功,这里使用 /opt/TensorRT-8.4.1.5/samples/sampleMNIST来测试

cd /opt/TensorRT-8.4.1.5/samples/sampleMNIST

sudo make编译之后会在 /opt/TensorRT-8.4.1.5/bin生成对应的可执行文件,此时直接运行sample_mnist

sudo ./sample_mnist得到下列结果即为成功

2. 安装opencv

具体参考我的另一篇博客

ubuntu编译安装opencv4.5.1(C++)_专业女神杀手的博客-CSDN博客

二、tensorrt的yolov5部署

tensorrt是一个推理引擎架构,会将pytorch用到的网络模块,如卷积,池化等用tensorrt进行重写。pytorch模型转换为.engine后就可以进行推理。

在github上下载tensorrt, 注意tensorrt版本和yolov5版本的匹配关系,如tensorrt5.0对应yolov5的 v5.0

GitHub - wang-xinyu/tensorrtx at yolov5-v5.0

基本上搭建好环境后,根据github上这个开源项目的readme文件进行tensorrt部署就可以了。

1. generate .wts from pytorch with .pt, or download .wts from model zoo

```

git clone -b v5.0 https://github.com/ultralytics/yolov5.git

git clone https://github.com/wang-xinyu/tensorrtx.git

// download https://github.com/ultralytics/yolov5/releases/download/v5.0/yolov5s.pt

cp {tensorrtx}/yolov5/gen_wts.py {ultralytics}/yolov5

cd {ultralytics}/yolov5

python gen_wts.py -w yolov5s.pt -o yolov5s.wts

// a file 'yolov5s.wts' will be generated.

```

2. build tensorrtx/yolov5 and run

```

cd {tensorrtx}/yolov5/

// update CLASS_NUM in yololayer.h if your model is trained on custom dataset

mkdir build

cd build

cp {ultralytics}/yolov5/yolov5s.wts {tensorrtx}/yolov5/build

cmake ..

make

sudo ./yolov5 -s [.wts] [.engine] [s/m/l/x/s6/m6/l6/x6 or c/c6 gd gw] // serialize model to plan file

sudo ./yolov5 -d [.engine] [image folder] // deserialize and run inference, the images in [image folder] will be processed.

// For example yolov5s

sudo ./yolov5 -s yolov5s.wts yolov5s.engine s

sudo ./yolov5 -d yolov5s.engine ../samples

// For example Custom model with depth_multiple=0.17, width_multiple=0.25 in yolov5.yaml

sudo ./yolov5 -s yolov5_custom.wts yolov5.engine c 0.17 0.25

sudo ./yolov5 -d yolov5.engine ../samples

```注意:我的环境是

- ubuntu20.04

- cuda11.6

- cudnn8.4.1

- TensorRT-8.4.1.5.Linux.x86_64-gnu.cuda-11.6.cudnn8.4

- wang-xinyu/tensorrtx/tree/yolov5-v5.0

- ultralytics/yolov5/tree/v5.0

上述环境是可以正常运行的。但我使用wang-xinyu/tensorrt/tree/yolov5-v3.0和ultralytics/yolov5/tree/v3.0时,make总出错,这是因为tensorrt的版本造成的。总之,这套流程的版本匹配还挺不容易的,坑比较多。

三、封装tensorrt(yolov5)算法接口供其他项目调用

在实际项目使用中,yolov5往往作为一个算法调用接口使用,这里通过改写一下tensorrt的yolov5代码,实现一个例子,可以在其他项目C++项目中调用yolv5。

本例子把源码yolov5.cpp拆成yolo_infer.hpp和yolo_infer.cpp,修改下CMakelists然后写一个main函数调用。(文件夹yolov5里的其他文件都不用变)

yolo_infer.hpp

#ifndef _YOLO_INFER_H

#define _YOLO_INFER_H

#include

static int get_width(int x, float gw, int divisor = 8);

static int get_depth(int x, float gd);

//void doInference(IExecutionContext& context, cudaStream_t& stream, void **buffers, float* input, float* output, int batchSize);

// load engine model(buffers and stream)

void yolov5_init(std::string engine_file);

// release engine model

void yolov5_destroy();

// recognize

cv::Mat yolov5_recognize(cv::Mat& frame);

#endif yolo_infer.cpp

#include

#include

#include

#include "cuda_utils.h"

#include "logging.h"

#include "common.hpp"

#include "utils.h"

#include "calibrator.h"

#define USE_FP16 // set USE_INT8 or USE_FP16 or USE_FP32

#define DEVICE 0 // GPU id

#define NMS_THRESH 0.4

#define CONF_THRESH 0.5

#define BATCH_SIZE 1

// stuff we know about the network and the input/output blobs

static const int INPUT_H = Yolo::INPUT_H;

static const int INPUT_W = Yolo::INPUT_W;

static const int CLASS_NUM = Yolo::CLASS_NUM;

static const int OUTPUT_SIZE = Yolo::MAX_OUTPUT_BBOX_COUNT * sizeof(Yolo::Detection) / sizeof(float) + 1; // we assume the yololayer outputs no more than MAX_OUTPUT_BBOX_COUNT boxes that conf >= 0.1

const char* INPUT_BLOB_NAME = "data";

const char* OUTPUT_BLOB_NAME = "prob";

static Logger gLogger;

char categories[] = {"buoy"};

void* buffers[2];

cudaStream_t stream;

int inputIndex;

int outputIndex;

IExecutionContext* context;

ICudaEngine* engine;

IRuntime* runtime;

static int get_width(int x, float gw, int divisor = 8) {

return int(ceil((x * gw) / divisor)) * divisor;

}

static int get_depth(int x, float gd) {

if (x == 1) return 1;

int r = round(x * gd);

if (x * gd - int(x * gd) == 0.5 && (int(x * gd) % 2) == 0) {

--r;

}

return std::max(r, 1);

}

void doInference(IExecutionContext& context, cudaStream_t& stream, void **buffers, float* input, float* output, int batchSize) {

// DMA input batch data to device, infer on the batch asynchronously, and DMA output back to host

CUDA_CHECK(cudaMemcpyAsync(buffers[0], input, batchSize * 3 * INPUT_H * INPUT_W * sizeof(float), cudaMemcpyHostToDevice, stream));

context.enqueue(batchSize, buffers, stream, nullptr);

CUDA_CHECK(cudaMemcpyAsync(output, buffers[1], batchSize * OUTPUT_SIZE * sizeof(float), cudaMemcpyDeviceToHost, stream));

cudaStreamSynchronize(stream);

}

// load engine model(buffers and stream)

void yolov5_init(std::string engine_file)

{

cudaSetDevice(DEVICE);

std::string engine_name = engine_file;

// deserialize the .engine and run inference

std::ifstream file(engine_name, std::ios::binary);

if (!file.good()) {

std::cerr << "read " << engine_name << " error!" << std::endl;

return;

}

char *trtModelStream = nullptr;

size_t size = 0;

file.seekg(0, file.end);

size = file.tellg();

file.seekg(0, file.beg);

trtModelStream = new char[size];

assert(trtModelStream);

file.read(trtModelStream, size);

file.close();

runtime = createInferRuntime(gLogger);

assert(runtime != nullptr);

engine = runtime->deserializeCudaEngine(trtModelStream, size);

assert(engine != nullptr);

context = engine->createExecutionContext();

assert(context != nullptr);

delete[] trtModelStream;

assert(engine->getNbBindings() == 2);

// In order to bind the buffers, we need to know the names of the input and output tensors.

// Note that indices are guaranteed to be less than IEngine::getNbBindings()

inputIndex = engine->getBindingIndex(INPUT_BLOB_NAME);

outputIndex = engine->getBindingIndex(OUTPUT_BLOB_NAME);

assert(inputIndex == 0);

assert(outputIndex == 1);

// Create GPU buffers on device

CUDA_CHECK(cudaMalloc(&buffers[inputIndex], BATCH_SIZE * 3 * INPUT_H * INPUT_W * sizeof(float)));

CUDA_CHECK(cudaMalloc(&buffers[outputIndex], BATCH_SIZE * OUTPUT_SIZE * sizeof(float)));

// Create stream

CUDA_CHECK(cudaStreamCreate(&stream));

}

void yolov5_destroy()

{

// Release stream and buffers

cudaStreamDestroy(stream);

CUDA_CHECK(cudaFree(buffers[inputIndex]));

CUDA_CHECK(cudaFree(buffers[outputIndex]));

// Destroy the engine

context->destroy();

engine->destroy();

runtime->destroy();

}

cv::Mat yolov5_recognize(cv::Mat& frame)

{

// prepare input data ---------------------------

static float data[BATCH_SIZE * 3 * INPUT_H * INPUT_W];

//for (int i = 0; i < 3 * INPUT_H * INPUT_W; i++)

// data[i] = 1.0;

static float prob[BATCH_SIZE * OUTPUT_SIZE];

int fcount = 1;

int b = 0;

//if (frame.empty()) return;

cv::Mat pr_img = preprocess_img(frame, INPUT_W, INPUT_H); // letterbox BGR to RGB

int i = 0;

for (int row = 0; row < INPUT_H; ++row) {

uchar* uc_pixel = pr_img.data + row * pr_img.step;

for (int col = 0; col < INPUT_W; ++col) {

data[b * 3 * INPUT_H * INPUT_W + i] = (float)uc_pixel[2] / 255.0;

data[b * 3 * INPUT_H * INPUT_W + i + INPUT_H * INPUT_W] = (float)uc_pixel[1] / 255.0;

data[b * 3 * INPUT_H * INPUT_W + i + 2 * INPUT_H * INPUT_W] = (float)uc_pixel[0] / 255.0;

uc_pixel += 3;

++i;

}

}

// Run inference

auto start = std::chrono::system_clock::now();

doInference(*context, stream, buffers, data, prob, BATCH_SIZE);

auto end = std::chrono::system_clock::now();

std::cout << std::chrono::duration_cast(end - start).count() << "ms" << std::endl;

std::vector> batch_res(fcount);

auto& res = batch_res[b];

nms(res, &prob[b * OUTPUT_SIZE], CONF_THRESH, NMS_THRESH);

std::cout << res.size() << std::endl;

cv::Mat img = frame;

for (size_t j = 0; j < res.size(); j++) {

cv::Rect r = get_rect(img, res[j].bbox);

cv::rectangle(img, r, cv::Scalar(0x27, 0xC1, 0x36), 2);

cv::putText(img, "buoy "+std::to_string((float)res[j].conf), cv::Point(r.x, r.y - 1), cv::FONT_HERSHEY_PLAIN, 1.2, cv::Scalar(0xFF, 0xFF, 0xFF), 2);

}

//cv::imwrite("re.jpg", img);

return img;

}

main.cpp

#include

#include

#include"yolo_infer.hpp"

int main(int argc, char** argv)

{

std::string img_path = std::string(argv[1]);

std::cout< CMakelists.txt

cmake_minimum_required(VERSION 2.6)

project(yolov5_tinktek)

add_definitions(-std=c++11)

add_definitions(-DAPI_EXPORTS)

option(CUDA_USE_STATIC_CUDA_RUNTIME OFF)

set(CMAKE_CXX_STANDARD 11)

set(CMAKE_BUILD_TYPE Debug)

find_package(CUDA REQUIRED)

if(WIN32)

enable_language(CUDA)

endif(WIN32)

message("PROJECT_SOURCE_DIR: ${PROJECT_SOURCE_DIR}")

include_directories(${PROJECT_SOURCE_DIR}/include)

# include and link dirs of cuda and tensorrt, you need adapt them if yours are different

# cuda

include_directories(/usr/local/cuda/include)

link_directories(/usr/local/cuda/lib64)

# tensorrt

include_directories(/usr/include/x86_64-linux-gnu/)

link_directories(/usr/lib/x86_64-linux-gnu/)

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11 -Wall -Ofast -Wfatal-errors -D_MWAITXINTRIN_H_INCLUDED")

cuda_add_library(myplugins SHARED ${PROJECT_SOURCE_DIR}/yololayer.cu)

target_link_libraries(myplugins nvinfer cudart)

find_package(OpenCV)

include_directories(${OpenCV_INCLUDE_DIRS})

add_executable(yolov5 ${PROJECT_SOURCE_DIR}/calibrator.cpp ${PROJECT_SOURCE_DIR}/yolo_infer.cpp ${PROJECT_SOURCE_DIR}/main.cpp)

target_link_libraries(yolov5 nvinfer)

target_link_libraries(yolov5 cudart)

target_link_libraries(yolov5 myplugins)

target_link_libraries(yolov5 ${OpenCV_LIBS})

if(UNIX)

add_definitions(-O2 -pthread)

endif(UNIX)

使用流程如下:

- 使用pythoy训练出.pt模型

- 使用tensorrt里的文件gen_wts.py把.pt变成.wts

- 使用tensorrt里的yolv5源码把.wts转换为.engine

- 使用我修改后的例子,把yolov5源码封装成函数,供给项目调用。

踩坑不少,记录一下。。。。