【目标检测】YOLOv5跑通VOC2007数据集(修复版)

前言

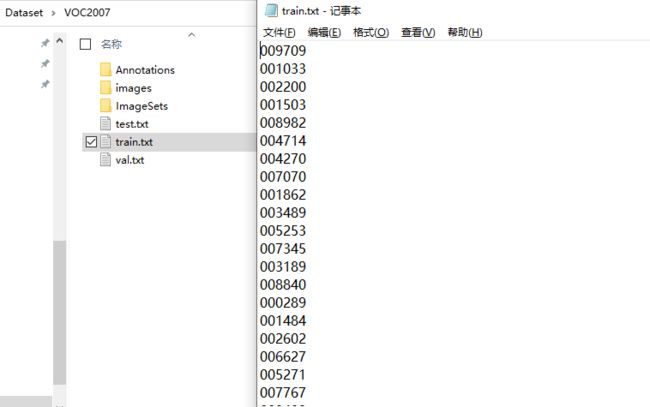

在【目标检测】YOLOv5跑通VOC2007数据集一文中,我写了个脚本来提取VOC中Segmentation划分好的数据集,但是经过观察发现,这个train.txt中仅有209条数据,而VOC2007的图片有9963张,这意味着大量的图片被浪费,没有输入到模型中进行训练。

因此,本篇就来重新修改数据集处理的流程,以解决这一问题。

数据集划分

我处理的思路是直接根据图片来进行划分,不用管ImageSets这个文件夹的信息。

在根目录下创建split_data.py

import os

import random

img_path = 'D:/Dataset/VOC2007/images/' # 图片文件夹路径(最后斜杠不要漏)

label_path = 'D:/Dataset/VOC2007/' # 生成划分数据路径

img_list = os.listdir(img_path)

train_ratio = 0.8 # 训练集比例

val_ratio = 0.1 # 验证集比例

shuffle = True # 是否随机划分

def data_split(full_list, train_ratio, val_ratio, shuffle=True):

n_total = len(full_list)

train_set_num = int(n_total * train_ratio)

val_set_num = int(n_total * val_ratio)

if shuffle:

random.shuffle(full_list)

train_set = full_list[:train_set_num]

val_set = full_list[train_set_num:(train_set_num + val_set_num)]

test_set = full_list[(train_set_num + val_set_num):]

return train_set, val_set, test_set

if __name__ == '__main__':

train_set, val_set, test_set = data_split(img_list, train_ratio, val_ratio, shuffle=True)

with open(label_path + 'train.txt', 'w') as f:

for img_name in train_set:

f.write(img_name.split('.jpg')[0] + '\n')

with open(label_path + 'val.txt', 'w') as f:

for img_name in val_set:

f.write(img_name.split('.jpg')[0] + '\n')

with open(label_path + 'test.txt', 'w') as f:

for img_name in test_set:

f.write(img_name.split('.jpg')[0] + '\n')

这里我设置训练集/验证集/测试集的比例为:8:1:1,并且划分时进行可随机打乱,如有需要可以进行修改。

运行之后,在数据集文件夹下生成划分好的数据:

标签转换

和【目标检测】YOLOv5跑通VOC2007数据集文中一样,我们可以依旧采用之前的脚本进行转换,不同的是数据划分集的指向路径发生变化。

在根目录下新建voc2yolo.py文件

import xml.etree.ElementTree as ET

import os

sets = ['train', 'test', 'val']

Imgpath = 'D:/Dataset/VOC2007/images/'

xmlfilepath = 'D:/Dataset/VOC2007/Annotations/'

ImageSets_path = 'D:/Dataset/VOC2007/'

Label_path = 'D:/Dataset/VOC2007/'

classes = ["aeroplane", "bicycle", "bird", "boat", "bottle", "bus", "car", "cat", "chair", "cow", "diningtable", "dog", "horse", "motorbike", "person", "pottedplant", "sheep", "sofa", "train", "tvmonitor"]

def convert(size, box):

dw = 1. / size[0]

dh = 1. / size[1]

x = (box[0] + box[1]) / 2.0

y = (box[2] + box[3]) / 2.0

w = box[1] - box[0]

h = box[3] - box[2]

x = x * dw

w = w * dw

y = y * dh

h = h * dh

return (x, y, w, h)

def convert_annotation(image_id):

in_file = open(xmlfilepath + '%s.xml' % (image_id))

out_file = open(Label_path + 'labels/%s.txt' % (image_id), 'w')

tree = ET.parse(in_file)

root = tree.getroot()

size = root.find('size')

w = int(size.find('width').text)

h = int(size.find('height').text)

for obj in root.iter('object'):

difficult = obj.find('difficult').text

cls = obj.find('name').text

if cls not in classes or int(difficult) == 1:

continue

cls_id = classes.index(cls)

xmlbox = obj.find('bndbox')

b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text), float(xmlbox.find('ymin').text),

float(xmlbox.find('ymax').text))

bb = convert((w, h), b)

out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')

for image_set in sets:

if not os.path.exists(Label_path + 'labels/'):

os.makedirs(Label_path + 'labels/')

image_ids = open(ImageSets_path + '%s.txt' % (image_set)).read().strip().split()

list_file = open(Label_path + '%s.txt' % (image_set), 'w')

for image_id in image_ids:

list_file.write(Imgpath + '%s.jpg\n' % (image_id))

convert_annotation(image_id)

list_file.close()

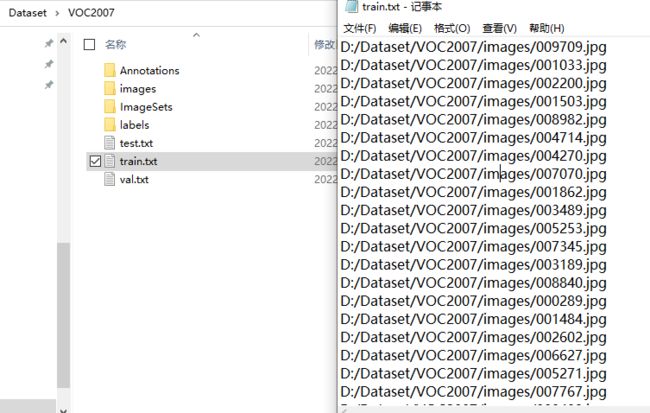

运行之后,数据集文件下生成对应的labels,并且数据集指向发生变化。

训练准备

在data文件夹下新建mydata.yaml

train: D:/Dataset/VOC2007/train.txt

val: D:/Dataset/VOC2007/val.txt

test: D:/Dataset/VOC2007/test.txt

# number of classes

nc: 20

# class names

names: [ 'aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat', 'chair', 'cow', 'diningtable', 'dog', 'horse', 'motorbike', 'person', 'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor' ]

数据的指向修改为自己的路径。

剩下的步骤和前文一样。