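Complete Guide to Setting Up Pseudo-Distributed Hadoop on CentOS 7

I. Installing CentOS 7 in a virtual machine and configuring a shared folder

II. Complete guide to setting up pseudo-distributed Hadoop on CentOS 7

III. Working with HDFS from Python on the host machine: setup and common problems

IV. Setting up MapReduce

V. Setting up mapper-reducer programming

Setting up pseudo-distributed Hadoop on CentOS 7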

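- I. Environment setup
  - 1. Install the required packages
  - 2. Disable SELinux and open port 50070 in the firewall
- II. Host configuration
  - 1. Set the hostname
  - 2. Set up the hosts list
- III. Installing Java
  - 1. Download the Java package
  - 2. Install Java
  - 3. Configure the Java environment variables in /etc/profile
  - 4. Verify the Java installation
- IV. Installing Hadoop
  - 1. Download the Hadoop package
  - 2. Extract Hadoop
  - 3. Update the environment variables
  - 4. Edit the Hadoop configuration files
    - 4.1 Configure core-site.xml
    - 4.2 Configure hdfs-site.xml
    - 4.3 Configure hadoop-env.sh
- V. Startup
  - 1. Format the NameNode
  - 2. Start the cluster
  - 3. Start the NameNode and DataNode
  - 4. Check the services
  - 5. Web access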

I. Environment setup

1. Install the required packages

yum -y install gcc* readline* python-devel cmake net-tools psmisc vim* bash-completion.noarch lrzsz

The install may fail with an error like this:

gcc-go-4.8.5-44.el7.x86_64.rpm FAILED

http://mirrors.aliyun.com/centos/7.9.2009/os/x86_64/Packages/gcc-go-4.8.5-44.el7.x86_64.rpm: [Errno 12] Timeout on http://mirrors.aliyun.com/centos/7.9.2009/os/x86_64/Packages/gcc-go-4.8.5-44.el7.x86_64.rpm: (28, 'Operation too slow. Less than 1000 bytes/sec transferred the last 30 seconds')

Trying other mirror.

gcc-go-4.8.5-44.el7.x86_64.rpm FAILED

http://mirrors.bfsu.edu.cn/centos/7.9.2009/os/x86_64/Packages/gcc-go-4.8.5-44.el7.x86_64.rpm: [Errno -1] Package does not match intended download. Suggestion: run yum --enablerepo=base clean metadata

As the message suggests, clear the cached metadata and then rerun the install:

yum --enablerepo=base clean metadata

yum -y install gcc* readline* python-devel cmake net-tools psmisc vim* bash-completion.noarch lrzsz

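2. Disable SELinux and open port 50070 in the firewall

Reference: https://blog.csdn.net/hanwenshan123/article/details/78717782

Apply the fixes described under Problem 2 and Problem 3 in that article.

Afterwards, check whether anything is listening on port 50070. Nothing is expected to show up until Hadoop is started later in this guide. Note that ps only matches process command lines, so netstat (from the net-tools package installed above) is the more reliable check:

netstat -tlnp | grep 50070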
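The linked article is not reproduced here. As a rough sketch of what this step usually amounts to on CentOS 7, assuming the default firewalld service is in use, the commands below switch SELinux to permissive mode for the current boot and open TCP port 50070. The `run` wrapper, the `open_port` function, and the `DRY_RUN` variable are illustrative helpers introduced for this example, not part of any tool; with the default `DRY_RUN=1` the script only prints what it would execute.

```shell
#!/bin/sh
# Sketch only: assumes CentOS 7 with firewalld. `run`, `open_port` and
# DRY_RUN are hypothetical helpers introduced for this example.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "would run: $*"
  else
    "$@"
  fi
}

# Usage: open_port PORT
open_port() {
  # Permissive mode lasts until the next reboot; a permanent change
  # means editing the SELINUX= line in /etc/selinux/config.
  run sudo setenforce 0
  # Add the rule to the permanent configuration, then reload so it
  # takes effect in the running firewall.
  run sudo firewall-cmd --permanent --add-port="$1/tcp"
  run sudo firewall-cmd --reload
}

open_port 50070
```

Running the script with `DRY_RUN=0` applies the changes instead of printing them.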

II. Host configuration

1. Set the hostname

hostnamectl set-hostname hadoop4

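2. Set up the hosts list

Check the current IP address:

ifconfig

Open /etc/hosts for editing:

sudo gedit /etc/hosts

Append the following line to the end of the file, replacing 192.168.137.134 with the address reported by ifconfig:

192.168.137.134 hadoop4

Confirm that the name resolves:

ping hadoop4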
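If this step is repeated (for example after the IP address changes), it is easy to end up with duplicate lines in the hosts file. A minimal sketch of an idempotent append follows; `add_host_entry` is a hypothetical helper written for this example, and the demo works on a scratch file rather than the real /etc/hosts.

```shell
#!/bin/sh
# Sketch only: add_host_entry is a hypothetical helper, not a system tool.
# Usage: add_host_entry HOSTS_FILE IP HOSTNAME
add_host_entry() {
  # Look for a line whose first field is the IP and whose later fields
  # include the hostname; awk exits 0 only when such a line exists.
  if awk -v ip="$2" -v name="$3" \
       '$1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }' "$1"; then
    echo "already present: $2 $3"
  else
    printf '%s %s\n' "$2" "$3" >> "$1"
    echo "added: $2 $3"
  fi
}

# Demonstrate on a scratch file; the second call leaves it unchanged.
demo_hosts="$(mktemp)"
printf '127.0.0.1 localhost\n' > "$demo_hosts"
add_host_entry "$demo_hosts" 192.168.137.134 hadoop4
add_host_entry "$demo_hosts" 192.168.137.134 hadoop4
cat "$demo_hosts"
```

Applying it to the real file requires root, since /etc/hosts is not writable by ordinary users.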

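III. Installing Java

1. Download the Java package

Baidu Netdisk link: https://pan.baidu.com/s/1bXLZ20yr8egVmLEwYBSLMA

Extraction code: hl99

2. Install Java

Change to the directory that contains the downloaded archive (replace filepath with its actual location):

cd filepath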

sudo tar -zxf jdk-8u212-linux-x64.tar.gz -C /usr/local/

sudo mv /usr/local/jdk1.8.0_212/ /usr/local/java

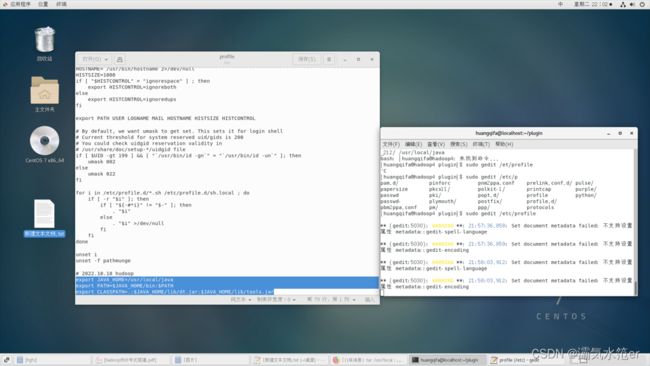

3. Configure the Java environment variables in /etc/profile

sudo gedit /etc/profile

Append the following to the end of the file:

export JAVA_HOME=/usr/local/java

export PATH=$JAVA_HOME/bin:$PATH

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

Reload /etc/profile:

source /etc/profile


4. Verify the Java installation

java -version

The output should report version 1.8.0_212.

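IV. Installing Hadoop

1. Download the Hadoop package

Baidu Netdisk link: https://pan.baidu.com/s/1o826SqMe3PnnoO8fVUwCfw

Extraction code: hl99

2. Extract Hadoop

Change to the directory that contains the downloaded archive (replace filepath with its actual location):

cd filepath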

sudo tar -zxf hadoop-2.7.7.tar.gz -C /usr/local/

sudo mv /usr/local/hadoop-2.7.7/ /usr/local/hadoop

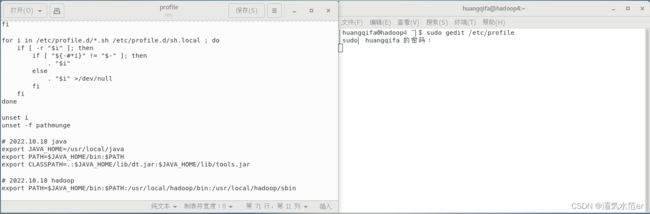

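3. Update the environment variables

sudo gedit /etc/profile

Append the following to the end of the file ($JAVA_HOME/bin is already on PATH from the Java step, so only the Hadoop directories need to be added):

export PATH=$PATH:/usr/local/hadoop/bin:/usr/local/hadoop/sbin

Reload the file:

source /etc/profile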
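Each `source /etc/profile` re-evaluates the export lines, so the same directories can pile up in PATH over a long session. One way to avoid that is to append a directory only when it is not already listed. The sketch below assumes a `HADOOP_HOME` variable and a helper named `path_append`; neither is defined in the original setup, and both are introduced here purely for illustration.

```shell
#!/bin/sh
# Sketch only: path_append and HADOOP_HOME are names introduced for this
# example; the original profile uses literal paths instead.
HADOOP_HOME=/usr/local/hadoop

# Append a directory to PATH unless it is already listed.
path_append() {
  case ":$PATH:" in
    *":$1:"*) ;;              # already present, nothing to do
    *) PATH="$PATH:$1" ;;     # not present, add it at the end
  esac
}

path_append "$HADOOP_HOME/bin"
path_append "$HADOOP_HOME/sbin"
export PATH
```

With this in /etc/profile, sourcing the file repeatedly leaves PATH unchanged after the first time.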

4. Edit the Hadoop configuration files

4.1 Configure core-site.xml

sudo gedit /usr/local/hadoop/etc/hadoop/core-site.xml

Set the <configuration> element to:

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoop4:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/huangqifa/data/hadoop/tmp</value>
  </property>
</configuration>

4.2 Configure hdfs-site.xml

sudo gedit /usr/local/hadoop/etc/hadoop/hdfs-site.xml

Set the <configuration> element to:

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/home/huangqifa/data/hadoop/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/home/huangqifa/data/hadoop/datanode</value>
  </property>
</configuration>

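The directories referenced in core-site.xml and hdfs-site.xml do not need to be created beforehand; they are created automatically when the commands in the following steps are run.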
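A malformed tag in these files is easy to miss, so it can help to read each property back after editing. The sketch below uses a hypothetical helper, `get_prop`, that pulls a value out of a Hadoop-style XML file with awk. It is a naive line-based reader that assumes each `<name>` and `<value>` element sits on a single line, not a real XML parser, and the demo runs against a scratch file rather than the live configuration.

```shell
#!/bin/sh
# Sketch only: get_prop is a hypothetical helper written for this example.
# Usage: get_prop CONFIG_FILE PROPERTY_NAME
get_prop() {
  awk -v key="$2" '
    # Remember the most recent property name seen.
    /<name>/  { n = $0; sub(/.*<name>/, "", n);  sub(/<\/name>.*/, "", n) }
    # Print the value that follows the requested name, then stop.
    /<value>/ { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
                if (n == key) { print v; exit } }
  ' "$1"
}

# Scratch file with the same layout as hdfs-site.xml.
demo_conf="$(mktemp)"
cat > "$demo_conf" <<'EOF'
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/home/huangqifa/data/hadoop/namenode</value>
  </property>
</configuration>
EOF

get_prop "$demo_conf" dfs.replication
get_prop "$demo_conf" dfs.namenode.name.dir
```

On the real installation, `get_prop /usr/local/hadoop/etc/hadoop/core-site.xml fs.defaultFS` should print hdfs://hadoop4:9000 if the file was edited as described. Hadoop's own `hdfs getconf -confKey fs.defaultFS` reads the effective configuration and is the more robust check once the environment variables are in place.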

4.3 Configure hadoop-env.sh

sudo gedit /usr/local/hadoop/etc/hadoop/hadoop-env.sh

Append the following to the end of the file:

export JAVA_HOME=/usr/local/java

V. Startup

1. Format the NameNode

hadoop namenode -format

2. Start the cluster

sh /usr/local/hadoop/sbin/start-all.sh

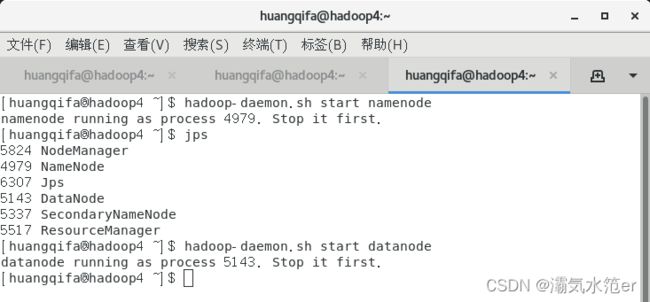

3. Start the NameNode and DataNode

hadoop-daemon.sh start namenode

hadoop-daemon.sh start datanode

If an error occurs, check the corresponding log file (by default under /usr/local/hadoop/logs).

4. Check the services

jps

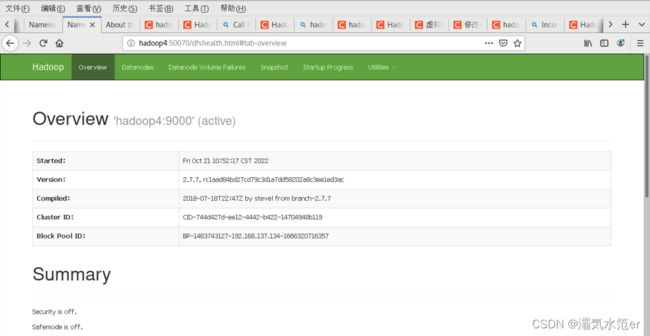
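Reading the jps listing by eye works, but the check can also be scripted. The sketch below defines a hypothetical helper, `missing_daemons`, that reads jps output on standard input and prints every expected daemon that is absent. The sample listing and its PIDs are made up for the demonstration.

```shell
#!/bin/sh
# Sketch only: missing_daemons is a hypothetical helper written for this
# example. It reads `jps` output on stdin and prints each expected daemon
# that does not appear in it.
# Usage: jps | missing_daemons NameNode DataNode
missing_daemons() {
  listing="$(cat)"
  for daemon in "$@"; do
    # jps prints "<pid> <ClassName>"; compare the second field exactly so
    # that SecondaryNameNode is not mistaken for NameNode.
    if ! printf '%s\n' "$listing" |
         awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }'; then
      echo "$daemon"
    fi
  done
}

# Made-up sample output in which the DataNode failed to start.
sample='4321 NameNode
4555 SecondaryNameNode
4990 Jps'
printf '%s\n' "$sample" | missing_daemons NameNode DataNode
```

For the sample above the function prints DataNode. On the real machine, `jps | missing_daemons NameNode DataNode` prints nothing when both daemons are up; other names such as ResourceManager can be added to the argument list as needed.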

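5. Web access

Open the NameNode web UI in a browser, using either the IP address or the hostname:

192.168.137.134:50070

hadoop4:50070

The YARN ResourceManager web UI is on port 8088:

192.168.137.134:8088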

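After every reboot, the /home/huangqifa/data directory (the storage path set in core-site.xml and hdfs-site.xml) has to be deleted and steps 1-4 above repeated before the web UI becomes reachable again. Note that deleting this directory and reformatting the NameNode erases everything stored in HDFS.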

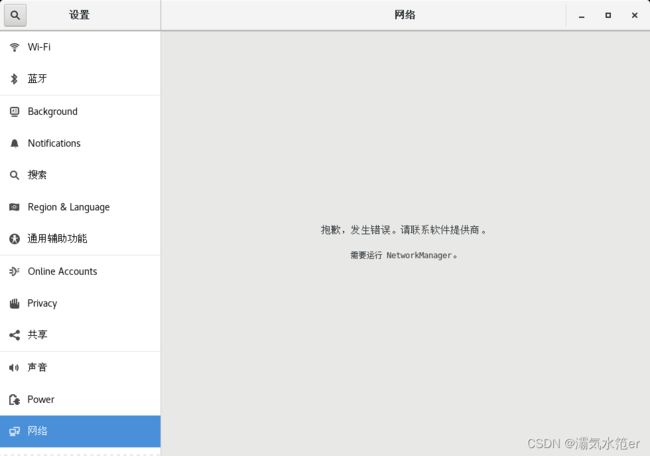

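Note: the configuration above may cause the problem shown in the screenshot, but networking still works normally and is not affected. Workaround: systemctl start NetworkManager.service. After running it the IP address changed, for reasons unknown; fortunately it reverts automatically after each reboot, also for reasons unknown.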
