Building a Jenkins Continuous Integration Platform on K8s (Deployment Walkthrough) (Repost)

Reposted from: https://blog.csdn.net/m0_59430185/article/details/123394853

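Contents

- I. Drawbacks of the traditional Jenkins Master-Slave setup
- II. K8s + Docker + Jenkins continuous integration architecture
  - 1. Architecture diagram
  - 2. Advantages of this approach
- III. Deploying the K8s cluster
  - 1. Environment setup
  - 2. Installing kubelet, kubeadm and kubectl
  - 3. Configuring the Master node
  - 4. Installing Calico
  - 5. Slave nodes
  - 6. Verifying the deployment
- IV. Deploying and configuring NFS
  - 1. Installing the NFS service
  - 2. Creating the shared directory
  - 3. Starting the service
  - 4. Checking the NFS shared directory
- V. Installing Jenkins-Master on K8s
  - 1. Creating the NFS client provisioner
  - 2. Installing Jenkins-Master
  - 3. Configuring the Jenkins console
- VI. Integrating Jenkins with Kubernetes
  - 1. Connecting Jenkins to K8s
  - 2. Building a custom Jenkins-Slave image
  - 3. Testing a Jenkins-Slave pipeline project
- VII. Deploying microservices on Kubernetes
  - 1. Pulling the code and building images
  - 2. Configuring the eureka service
  - 3. zuul
  - 4. admin
  - 5. gathering
  - 6. Building the three sub-services in turn
    - 6.1 zuul
    - 6.2 admin
    - 6.3 gathering
  - 7. Testing the database with Postman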

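I. Drawbacks of the traditional Jenkins Master-Slave setup

- The Master node is a single point of failure: if it goes down, the whole pipeline becomes unavailable.
- Each Slave node carries its own environment so that it can compile and package a particular language, and these per-node differences make the fleet awkward to manage and laborious to maintain.
- Resources are allocated unevenly: jobs queue on some Slave nodes while other Slave nodes sit idle.
- Resources are wasted: each Slave node may be a physical machine or a VM, and an idle Slave node never fully releases its resources.

Kubernetes can be introduced to address these problems.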

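II. K8s + Docker + Jenkins continuous integration architecture

1. Architecture diagram

Rough workflow: manual or automatic build trigger -> Jenkins calls the K8s API -> a Jenkins Slave pod is created dynamically -> the Slave pod pulls the code from Git, compiles it and builds the image -> the image is pushed to the Harbor registry -> the Slave finishes and its pod is destroyed automatically -> the application is deployed to the test or production Kubernetes platform. (Fully automated, with no manual intervention.)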

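2. Advantages of this approach

- High availability: when the Jenkins Master fails, Kubernetes automatically creates a new Jenkins Master container and attaches the existing Volume to it, so no data is lost and the service stays available.
- Dynamic scaling and sensible resource use: each time a job runs, a Jenkins Slave is created automatically. When the job finishes, the Slave unregisters itself and its container is deleted, which releases the resources. Kubernetes also schedules each Slave onto a node with spare capacity, so jobs are less likely to queue on a node that is already heavily loaded.
- Good scalability: when the Kubernetes cluster runs so short of resources that jobs start queuing, a new Kubernetes Node can easily be added to the cluster.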

III. Deploying the K8s cluster

1. Environment setup

| Host | IP address | Installed software |
|---|---|---|
| GitLab server | 192.168.74.11 | Gitlab-12.4.2 |
| Harbor registry server | 192.168.74.7 | Harbor1.9.2 |
| master | 192.168.74.4 | kube-apiserver, kube-controller-manager, kube-scheduler, docker, etcd, calico, NFS |
| node1 | 192.168.74.5 | kubelet, kube-proxy, Docker18.06.1-ce |
| node2 | 192.168.74.6 | kubelet, kube-proxy, Docker18.06.1-ce |

Run the following on all three K8s servers.

Install Docker:

# Set the hostname (run the matching line on each machine)
hostnamectl set-hostname master && su
hostnamectl set-hostname node1 && su
hostnamectl set-hostname node2 && su

# Configure DNS: add this line to /etc/resolv.conf
vim /etc/resolv.conf
nameserver 114.114.114.114

# Disable the firewall, SELinux and swap
systemctl stop firewalld && systemctl disable firewalld
setenforce 0
# To disable SELinux permanently, set this in /etc/selinux/config
vim /etc/selinux/config
SELINUX=disabled
swapoff -a
# To disable swap permanently, comment out the following entry in /etc/fstab
vim /etc/fstab
....
/dev/mapper/cl-swap swap swap defaults 0 0
....

# Install dependencies
yum install -y yum-utils device-mapper-persistent-data lvm2
# Add the Aliyun Docker CE repository
cd /etc/yum.repos.d/
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# Install Docker CE (community edition)
yum install -y docker-ce
systemctl start docker
systemctl enable docker

# Configure a registry mirror; see https://help.aliyun.com/document_detail/60750.html
mkdir -p /etc/docker
# Paste the following directly into the shell:
sudo tee /etc/docker/daemon.json <<-'EOF'
{
"registry-mirrors": ["https://wrdun890.mirror.aliyuncs.com"]
}
EOF

# Add the Harbor address to Docker's insecure-registries list (not needed on the Harbor server itself)
vim /etc/docker/daemon.json
{
"registry-mirrors": ["https://wrdun890.mirror.aliyuncs.com"],
"insecure-registries": ["192.168.74.7:85"]
}
systemctl daemon-reload
systemctl restart docker

# Enable IP forwarding
vim /etc/sysctl.conf
net.ipv4.ip_forward=1
sysctl -p
systemctl restart network
systemctl restart docker
docker version
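The manual /etc/fstab edit above can also be scripted. The following is a minimal sketch, assuming a standard whitespace-separated fstab layout; the helper name `disable_swap_entries` is hypothetical, and the demo runs against a scratch copy rather than the real /etc/fstab.

```shell
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
disable_swap_entries() {
  # Match lines that do not start with '#' and whose third field is "swap",
  # then prefix them with '#'.
  sed -i -E 's/^([^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' "$1"
}

# Demo on a scratch file (assumed sample content, not the real /etc/fstab).
demo_fstab="$(mktemp)"
printf '%s\n' \
  '/dev/mapper/cl-root / xfs defaults 0 0' \
  '/dev/mapper/cl-swap swap swap defaults 0 0' > "$demo_fstab"
disable_swap_entries "$demo_fstab"
cat "$demo_fstab"
```

On a real host the same function would be pointed at /etc/fstab after taking a backup.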

Configure the base environment

cat >> /etc/hosts << EOF
192.168.74.4 master
192.168.74.5 node1
192.168.74.6 node2
EOF

# Load the br_netfilter kernel module
modprobe br_netfilter
# Create a sysctl file that enables IP forwarding and passes bridged traffic to iptables
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
vm.swappiness = 0
EOF
sysctl -p /etc/sysctl.d/k8s.conf # apply the settings

# Prerequisites for running kube-proxy in ipvs mode
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

2. Installing kubelet, kubeadm and kubectl

kubeadm: the command used to bootstrap the cluster.

kubelet: runs on every node in the cluster and starts pods and containers.

kubectl: the command-line tool for talking to the cluster.

Configure this on all three K8s servers:

# Clear the yum cache
yum clean all
# Add the Kubernetes yum repository
cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
# Install
yum install -y kubelet-1.17.0 kubeadm-1.17.0 kubectl-1.17.0
# Enable kubelet at boot (do not start it yet; starting it now fails)
systemctl enable kubelet
# Check the version
kubelet --version

3. Configuring the Master node

# Run the init command
kubeadm init --kubernetes-version=1.17.0 \
--apiserver-advertise-address=192.168.74.4 \
--image-repository registry.aliyuncs.com/google_containers \
--service-cidr=10.1.0.0/16 \
--pod-network-cidr=10.244.0.0/16
# This takes a while. At the end it prints the command for joining nodes; copy it, because it is needed later when the nodes join the cluster.
# Note: apiserver-advertise-address must be the IP of the master machine.

Common errors:

Error 1:

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".

Fix:

# Add the following entry to Docker's configuration (next to any keys already in the file), then restart Docker
vim /etc/docker/daemon.json
{
"exec-opts":["native.cgroupdriver=systemd"]
}
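The cgroup-driver fix touches the same /etc/docker/daemon.json that already holds the registry mirror and the Harbor entry, so the three settings need to end up in one JSON object. A minimal sketch of the merged file follows; the helper name `write_daemon_json` is hypothetical, and the demo writes to a scratch path instead of /etc/docker/daemon.json.

```shell
# Hypothetical helper: write a merged Docker daemon.json to the given path.
write_daemon_json() {
  cat > "$1" <<'EOF'
{
  "registry-mirrors": ["https://wrdun890.mirror.aliyuncs.com"],
  "insecure-registries": ["192.168.74.7:85"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Demo: write to a scratch file and show the result.
scratch_json="$(mktemp)"
write_daemon_json "$scratch_json"
cat "$scratch_json"
```

After placing such a file at /etc/docker/daemon.json, Docker still has to be restarted with `systemctl daemon-reload` and `systemctl restart docker`, as in the earlier steps.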

Error 2:

[ERROR NumCPU]: the number of available CPUs 1 is less than the required 2

Fix: give the machine at least two CPU cores.

Start kubelet

systemctl restart kubelet

Configure the kubectl tool

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

4. Installing Calico

On the master node:

mkdir /root/k8s
cd /root/k8s
# Download calico.yaml without verifying the certificate
wget --no-check-certificate https://docs.projectcalico.org/v3.10/getting-started/kubernetes/installation/hosted/kubernetes-datastore/calico-networking/1.7/calico.yaml
# Change the pod network address to match --pod-network-cidr so that the nodes can communicate
sed -i 's/192.168.0.0/10.244.0.0/g' calico.yaml
kubectl apply -f calico.yaml
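The sed substitution above rewrites Calico's default pool so that it matches the `--pod-network-cidr` given to `kubeadm init`. A slightly stricter variant is sketched below, assuming the manifest carries the default value `192.168.0.0/16` under `CALICO_IPV4POOL_CIDR`; the helper name `set_calico_pod_cidr` is hypothetical and the demo works on a two-line scratch file, not the real calico.yaml.

```shell
# Hypothetical helper: replace Calico's default pool CIDR with the cluster's pod CIDR.
set_calico_pod_cidr() {
  # $1 = manifest path, $2 = pod CIDR.
  # The dots are escaped so they match literally, and '#' is the sed delimiter
  # because CIDRs contain '/'.
  sed -i "s#192\.168\.0\.0/16#$2#g" "$1"
}

# Demo on a scratch fragment of the manifest.
demo_manifest="$(mktemp)"
printf '%s\n' '- name: CALICO_IPV4POOL_CIDR' '  value: "192.168.0.0/16"' > "$demo_manifest"
set_calico_pod_cidr "$demo_manifest" "10.244.0.0/16"
cat "$demo_manifest"
```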

Check the status of all pods and make sure every pod is Running:

kubectl get pod --all-namespaces -o wide

5. Slave nodes

Join the cluster with the command that the Master node printed earlier:

kubeadm join 192.168.74.4:6443 --token 22hiwz.xu5n0ekvkcy7lmsd \
--discovery-token-ca-cert-hash sha256:f4006c9dae8838d1ee151671ad43ec8305d6b4e86b00f3e3206b356767be0958
# Start kubelet
systemctl start kubelet

6. Verifying the deployment

Back on the Master node, list the nodes. If every node's STATUS is Ready, the cluster is up.

kubectl get nodes
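The readiness check can be automated by parsing the table that `kubectl get nodes` prints. This is a minimal sketch: the function name `all_nodes_ready` is hypothetical, and the sample table is illustrative rather than output captured from this cluster.

```shell
# Hypothetical helper: succeed only if every node row on stdin has STATUS "Ready".
all_nodes_ready() {
  awk 'NR > 1 { seen = 1; if ($2 != "Ready") bad = 1 }
       END { if (seen && !bad) exit 0; exit 1 }'
}

# Illustrative sample output (not taken from the article's cluster).
sample_nodes='NAME     STATUS     ROLES    AGE   VERSION
master   Ready      master   10m   v1.17.0
node1    Ready      <none>   5m    v1.17.0
node2    NotReady   <none>   1m    v1.17.0'

if printf '%s\n' "$sample_nodes" | all_nodes_ready; then
  echo "cluster ready"
else
  echo "some nodes are not ready yet"
fi
```

Against a live cluster the function would be fed directly: `kubectl get nodes | all_nodes_ready`.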

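IV. Deploying and configuring NFS

NFS (Network File System) lets different machines and different operating systems share files with each other over the network.

Here NFS is used to share the Jenkins configuration files, the Maven repository dependencies and similar data.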

1. Installing the NFS service

Install it on every K8s node:

yum install -y nfs-utils

2. Creating the shared directory

mkdir -p /opt/nfs/jenkins
vim /etc/exports # add the following line
/opt/nfs/jenkins *(rw,no_root_squash)
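Because /etc/exports is edited again later for the Maven repository, it can help to add entries idempotently instead of by hand. The sketch below assumes the same export options as above; the helper name `add_nfs_export` is hypothetical and the demo uses a scratch file in place of /etc/exports.

```shell
# Hypothetical helper: append an export entry only if the directory is not exported yet.
add_nfs_export() {
  # $1 = exports file, $2 = directory to export.
  if ! grep -q "^$2[[:space:]]" "$1" 2>/dev/null; then
    echo "$2 *(rw,no_root_squash)" >> "$1"
  fi
}

# Demo on a scratch file; the second call changes nothing.
demo_exports="$(mktemp)"
add_nfs_export "$demo_exports" /opt/nfs/jenkins
add_nfs_export "$demo_exports" /opt/nfs/jenkins
cat "$demo_exports"
```

On the real server, `exportfs -r` (or restarting the NFS service) makes the new entry take effect.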

3. Starting the service

systemctl enable nfs
systemctl start nfs

4. Checking the NFS shared directory

showmount -e 192.168.74.4

All three servers must be able to see the export.

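V. Installing Jenkins-Master on K8s

1. Creating the NFS client provisioner

nfs-client-provisioner is a simple external NFS provisioner for Kubernetes. It does not provide NFS itself; it needs an existing NFS server to supply the storage.

Upload the nfs-client-provisioner build files: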

[root@master ~]# rz -E

rz waiting to receive.

[root@master ~]# unzip nfs-client.zip

Archive: nfs-client.zip

creating: nfs-client/

inflating: nfs-client/class.yaml

inflating: nfs-client/deployment.yaml

inflating: nfs-client/rbac.yaml

[root@master ~]# ls

anaconda-ks.cfg k8s nfs-client.zip 模板 图片 下载 桌面

initial-setup-ks.cfg nfs-client 公共 视频 文档 音乐

[root@master ~]# rm -rf nfs-client.zip

[root@master ~]# cd nfs-client/

[root@master nfs-client]# ls

class.yaml deployment.yaml rbac.yaml

Edit deployment.yaml so that it points at the NFS server and directory configured earlier.

Create the nfs-client-provisioner pod resources:

cd nfs-client/
kubectl create -f . # note the trailing dot

Check that the pod was created:

kubectl get pods

2. 安装 Jenkins-Master

上传Jenkins-Master构建文件并解压

![]()

![]()

- 在StatefulSet.yaml文件,声明了利用nfs-client-provisioner进行Jenkins-Master文件存储

![]()

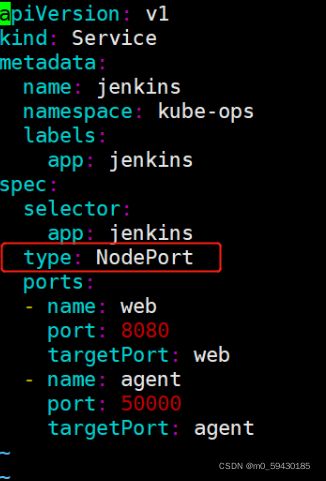

- Service发布方法采用NodePort,会随机产生节点访问端口

创建kube-ops的namespace

把Jenkins-Master的pod放到kube-ops下

kubectl create namespace kube-ops

1

构建Jenkins-Master的pod资源

kubectl create -f . #小数点

1

查看pod是否创建成功,需要耐心等待创建成功

kubectl get pods -n kube-ops

1

![]()

查看Pod运行在那个Node上,并使用自动分发的端口访问

kubectl describe pods -n kube-ops

kubectl get service -n kube-ops

12
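Since the NodePort is assigned at random, it has to be read from the PORT(S) column of `kubectl get service`. The sketch below pulls it out with awk; the function name `node_port_for` is hypothetical, and the sample row is illustrative (only the service name `jenkins`, port 8080 and NodePort 31017 come from this article; the cluster IP and the second port pair are made up).

```shell
# Hypothetical helper: print the NodePort mapped to a service port.
# $1 = service name, $2 = service port; reads the kubectl table from stdin.
node_port_for() {
  awk -v svc="$1" -v port="$2" '$1 == svc {
    n = split($5, pairs, ",")
    for (i = 1; i <= n; i++) {
      split(pairs[i], parts, /[:\/]/)      # "8080:31017/TCP" -> 8080, 31017, TCP
      if (parts[1] == port) print parts[2]
    }
  }'
}

# Illustrative sample output.
sample_svc='NAME      TYPE       CLUSTER-IP    EXTERNAL-IP   PORT(S)                          AGE
jenkins   NodePort   10.1.10.20    <none>        8080:31017/TCP,50000:30123/TCP   5m'

printf '%s\n' "$sample_svc" | node_port_for jenkins 8080
```

With a live cluster the input would come from `kubectl get service -n kube-ops`.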

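The address is the IP of a node plus the assigned port:

http://192.168.74.4:31017

A password is required. Find the initial admin password and paste it into the web page to log in:

cat /opt/nfs/jenkins/kube-ops-jenkins-home-jenkins-0-pvc-6319468f-6ccf-4781-af84-df992eb7f96b/secrets/initialAdminPassword

Note: the identifier after pvc in the directory name differs on every installation, so the path cannot be copied verbatim.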
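Because the PVC suffix changes per installation, the password file can be located by pattern instead of by a hard-coded path. This is a minimal sketch; the function name `find_admin_password_file` is hypothetical, and the demo builds a scratch directory with a made-up PVC id that only mimics the layout shown above.

```shell
# Hypothetical helper: print the path of initialAdminPassword under a base directory,
# whatever the PVC suffix happens to be.
find_admin_password_file() {
  find "$1" -path '*/secrets/initialAdminPassword' -type f 2>/dev/null | head -n 1
}

# Demo on a scratch tree (the PVC id "pvc-0000" and the password are made up).
demo_root="$(mktemp -d)"
mkdir -p "$demo_root/kube-ops-jenkins-home-jenkins-0-pvc-0000/secrets"
echo "example-password" > "$demo_root/kube-ops-jenkins-home-jenkins-0-pvc-0000/secrets/initialAdminPassword"
cat "$(find_admin_password_file "$demo_root")"
```

On the NFS server the call would be `find_admin_password_file /opt/nfs/jenkins`.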

Jenkins is now deployed on Kubernetes.

3. Configuring the Jenkins console

Set the plugin download address:

cd /opt/nfs/jenkins/kube-ops-jenkins-home-jenkins-0-pvc-6319468f-6ccf-4781-af84-df992eb7f96b/updates/
sed -i 's/http:\/\/updates.jenkins-ci.org\/download/https:\/\/mirrors.tuna.tsinghua.edu.cn\/jenkins/g' default.json && sed -i 's/http:\/\/www.google.com/https:\/\/www.baidu.com/g' default.json

Under Manage Plugins -> Advanced, change Update Site to a mirror in China:

https://mirrors.tuna.tsinghua.edu.cn/jenkins/updates/update-center.json

Install the required plugins:

Chinese
Git
Pipeline
Extended Choice Parameter
Kubernetes

VI. Integrating Jenkins with Kubernetes

1. Connecting Jenkins to K8s

Manage Jenkins -> Configure System -> Cloud -> Add a new cloud -> Kubernetes

# The Kubernetes address uses the cluster's internal service discovery name:
https://kubernetes.default.svc.cluster.local
Set the namespace to kube-ops.
# Click Test Connection. If "Connection test successful" appears, Jenkins can communicate with Kubernetes.
# Jenkins URL:
http://jenkins.kube-ops.svc.cluster.local:8080

2. Building a custom Jenkins-Slave image

- When Jenkins-Master runs a job, Kubernetes creates a Jenkins-Slave pod to carry out the build.
- The Jenkins-Slave image used here is based on the officially recommended jenkins/jnlp-slave:latest. That image has no Maven environment, so for convenience a custom image is built on top of it.

Upload the archive -> unpack it -> inspect the contents.

The Dockerfile reads:

FROM jenkins/jnlp-slave:latest
MAINTAINER itcast
# Switch to the root account
USER root
# Install Maven
COPY apache-maven-3.6.2-bin.tar.gz .
RUN tar -zxf apache-maven-3.6.2-bin.tar.gz && \
    mv apache-maven-3.6.2 /usr/local && \
    rm -f apache-maven-3.6.2-bin.tar.gz && \
    ln -s /usr/local/apache-maven-3.6.2/bin/mvn /usr/bin/mvn && \
    ln -s /usr/local/apache-maven-3.6.2 /usr/local/apache-maven && \
    mkdir -p /usr/local/apache-maven/repo
COPY settings.xml /usr/local/apache-maven/conf/settings.xml
USER jenkins

Build the image:

docker build -t jenkins-slave-maven:latest .

Tag the image and push it to the Harbor registry:

docker login -u admin -p Harbor12345 192.168.74.7:85
docker tag jenkins-slave-maven:latest 192.168.74.7:85/library/jenkins-slave-maven:latest
docker push 192.168.74.7:85/library/jenkins-slave-maven:latest

3. Testing a Jenkins-Slave pipeline project

Create a pipeline project.

Add the GitLab credentials.

Use the Pipeline Syntax generator to produce the checkout step.

Write the pipeline, which pulls the code from GitLab over HTTP:

def git_address = "http://192.168.74.11:82/web_demo/tensquare_back.git"
def git_auth = "2736b833-5457-4aa1-bb97-6d353bb5977d"
// Create a pod template with the label jenkins-slave
podTemplate(label: 'jenkins-slave', cloud: 'kubernetes', containers: [
    containerTemplate(
        name: 'jnlp',
        image: "192.168.74.7:85/library/jenkins-slave-maven:latest"
    )
  ]
)
{
  // Use the jenkins-slave pod template to create the Jenkins-Slave pod
  node("jenkins-slave"){
    stage('pull code'){
      checkout([$class: 'GitSCM', branches: [[name: '*/master']], extensions: [], userRemoteConfigs: [[credentialsId: "${git_auth}", url: "${git_address}"]]])
    }
  }
}

While the build runs, watch from another terminal: the pod is scheduled on node1.

During the build a slave node is created and the build runs on it. Once the build finishes, the slave node disappears.

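VII. Deploying microservices on Kubernetes

1. Pulling the code and building images

Create a pipeline project.

Create an NFS shared directory so that every Jenkins-Slave build points at the same shared Maven repository on NFS:

vim /etc/exports
# Add:
/opt/nfs/jenkins *(rw,no_root_squash)
/opt/nfs/maven *(rw,no_root_squash)
systemctl restart nfs

Write the build pipeline. The project selection parameter (read by the script as project_name) takes values of the form name@port:

tensquare_eureka_server@10086,tensquare_zuul@10020,tensquare_admin_service@9001,tensquare_gathering@9002

with the matching descriptions:

Service registry, service gateway, authentication center, activity microservice

All commas above must be ASCII commas, not full-width ones.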

Next, add the string parameters.

Configure the Harbor credentials.

The pipeline script:

def git_address = "http://192.168.8.18:82/gl/tensquare_back.git"
def git_auth = "da4091ce-712f-42fa-a3a8-d8aad6a166c1"
// Tag (version) of the build
def tag = "latest"
// Harbor registry address
def harbor_url = "192.168.8.20:85"
// Harbor project name
def harbor_project_name = "tensquare"
// Harbor credentials ID
def harbor_auth = "c77a32ac-33a1-4855-947c-b0c4d788c555"

podTemplate(label: 'jenkins-slave', cloud: 'kubernetes', containers: [
    containerTemplate(
        name: 'jnlp',
        image: "192.168.8.20:85/library/jenkins-slave-maven:latest"
    ),
    containerTemplate(
        name: 'docker',
        image: "docker:stable",
        ttyEnabled: true,
        command: 'cat'
    ),
  ],
  volumes: [
    hostPathVolume(mountPath: '/var/run/docker.sock', hostPath: '/var/run/docker.sock'),
    nfsVolume(mountPath: '/usr/local/apache-maven/repo', serverAddress: '192.168.8.12' , serverPath: '/opt/nfs/maven'),
  ],
)
{
  node("jenkins-slave"){
    // Step 1
    stage('pull code'){
      checkout([$class: 'GitSCM', branches: [[name: '*/master']], extensions: [], userRemoteConfigs: [[credentialsId: "${git_auth}", url: "${git_address}"]]])
    }
    // Step 2
    stage('make public sub project'){
      // Compile and install the shared module
      sh "mvn -f tensquare_common clean install"
    }
    // Step 3
    stage('make image'){
      // Turn the selected projects into an array
      def selectedProjects = "${project_name}".split(',')
      for(int i=0;i<selectedProjects.size();i++){
        // Take the name and port of each project
        def currentProject = selectedProjects[i];
        // Project name
        def currentProjectName = currentProject.split('@')[0]
        // Port the project listens on
        def currentProjectPort = currentProject.split('@')[1]
        // Image name
        def imageName = "${currentProjectName}:${tag}"
        // Compile and build the local image
        sh "mvn -f ${currentProjectName} clean package dockerfile:build"
        container('docker') {
          // Tag the image
          sh "docker tag ${imageName} ${harbor_url}/${harbor_project_name}/${imageName}"
          // Log in to Harbor and push the image
          withCredentials([usernamePassword(credentialsId: "${harbor_auth}", passwordVariable: 'password', usernameVariable: 'username')])
          {
            // Log in
            sh "docker login -u ${username} -p ${password} ${harbor_url}"
            // Push the image
            sh "docker push ${harbor_url}/${harbor_project_name}/${imageName}"
          }
          // Remove the local images
          sh "docker rmi -f ${imageName}"
          sh "docker rmi -f ${harbor_url}/${harbor_project_name}/${imageName}"
        }
      }
    }
  }
}

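During the build, the Maven repository directory cannot be created because the NFS shared directory lacks the required permissions. Fix the permissions:

mkdir -p /opt/nfs/maven
chmod -R 777 /opt/nfs/maven
# Permission to run Docker commands (on the node machines)
chmod 777 /var/run/docker.sock

Run a test build.

The first build downloads the Maven components, so it takes a while.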

2. Configuring the eureka service

Install the Kubernetes Continuous Deploy plugin.

Add the K8s credentials:

[root@master ~]# cd .kube/
[root@master .kube]# ls
cache config http-cache
[root@master .kube]# cat config

Copy the credential ID.

Write the pipeline script:

def git_address = "http://192.168.74.11:82/web_demo/tensquare_back.git"
def git_auth = "2736b833-5457-4aa1-bb97-6d353bb5977d"
// Tag (version) of the build
def tag = "latest"
// Harbor registry address
def harbor_url = "192.168.74.7:85"
// Harbor project name
def harbor_project_name = "tensquare"
// Harbor credentials ID
def harbor_auth = "4b806397-44dd-42c9-8a4c-38d298c1f86b"
// K8s credentials ID
def k8s_auth="a74bad1a-3efb-4143-8816-a4f5a0a3cf3b"
// Name of the K8s secret used to pull from Harbor
def secret_name="registry-auth-secret"

podTemplate(label: 'jenkins-slave', cloud: 'kubernetes', containers: [
    containerTemplate(
        name: 'jnlp',
        image: "192.168.74.7:85/library/jenkins-slave-maven:latest"
    ),
    containerTemplate(
        name: 'docker',
        image: "docker:stable",
        ttyEnabled: true,
        command: 'cat'
    ),
  ],
  volumes: [
    hostPathVolume(mountPath: '/var/run/docker.sock', hostPath: '/var/run/docker.sock'),
    nfsVolume(mountPath: '/usr/local/apache-maven/repo', serverAddress: '192.168.74.4' , serverPath: '/opt/nfs/maven'),
  ],
)
{
  node("jenkins-slave"){
    // Step 1
    stage('pull code'){
      checkout([$class: 'GitSCM', branches: [[name: '*/master']], extensions: [], userRemoteConfigs: [[credentialsId: "${git_auth}", url: "${git_address}"]]])
    }
    // Step 2
    stage('make public sub project'){
      // Compile and install the shared module
      sh "mvn -f tensquare_common clean install"
    }
    // Step 3
    stage('make image'){
      // Turn the selected projects into an array
      def selectedProjects = "${project_name}".split(',')
      for(int i=0;i<selectedProjects.size();i++){
        // Take the name and port of each project
        def currentProject = selectedProjects[i];
        // Project name
        def currentProjectName = currentProject.split('@')[0]
        // Port the project listens on
        def currentProjectPort = currentProject.split('@')[1]
        // Image name
        def imageName = "${currentProjectName}:${tag}"
        // Compile and build the local image
        sh "mvn -f ${currentProjectName} clean package dockerfile:build"
        container('docker') {
          // Tag the image
          sh "docker tag ${imageName} ${harbor_url}/${harbor_project_name}/${imageName}"
          // Log in to Harbor and push the image
          withCredentials([usernamePassword(credentialsId: "${harbor_auth}", passwordVariable: 'password', usernameVariable: 'username')])
          {
            // Log in
            sh "docker login -u ${username} -p ${password} ${harbor_url}"
            // Push the image
            sh "docker push ${harbor_url}/${harbor_project_name}/${imageName}"
          }
          // Remove the local images
          sh "docker rmi -f ${imageName}"
          sh "docker rmi -f ${harbor_url}/${harbor_project_name}/${imageName}"
        }
        def deploy_image_name = "${harbor_url}/${harbor_project_name}/${imageName}"
        // Deploy to K8s
        sh """
        sed -i 's#\$IMAGE_NAME#${deploy_image_name}#' ${currentProjectName}/deploy.yml
        sed -i 's#\$SECRET_NAME#${secret_name}#' ${currentProjectName}/deploy.yml
        """
        kubernetesDeploy configs: "${currentProjectName}/deploy.yml", kubeconfigId: "${k8s_auth}"
      }
    }
  }
}
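The two sed calls in the deploy step fill the `$IMAGE_NAME` and `$SECRET_NAME` placeholders that each project's deploy.yml contains. The standalone sketch below reproduces that substitution so a template can be checked before it is committed; the function name `render_deploy_yml` is hypothetical, and the demo operates on a two-line scratch fragment.

```shell
# Hypothetical helper: fill the $IMAGE_NAME and $SECRET_NAME placeholders in a deploy.yml.
# $1 = file, $2 = image reference, $3 = pull-secret name.
render_deploy_yml() {
  # '#' is the sed delimiter because image references contain '/';
  # \$ keeps the dollar sign literal instead of an end-of-line anchor.
  sed -i -e 's#\$IMAGE_NAME#'"$2"'#' -e 's#\$SECRET_NAME#'"$3"'#' "$1"
}

# Demo on a scratch fragment of a deploy.yml template.
demo_yml="$(mktemp)"
printf '%s\n' '      - name: $SECRET_NAME' '        image: $IMAGE_NAME' > "$demo_yml"
render_deploy_yml "$demo_yml" "192.168.74.7:85/tensquare/tensquare_zuul:latest" "registry-auth-secret"
cat "$demo_yml"
```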

Create a deploy.yml file in the eureka directory:

---
apiVersion: v1
kind: Service
metadata:
  name: eureka
  labels:
    app: eureka
spec:
  type: NodePort
  ports:
    - port: 10086
      name: eureka
      targetPort: 10086
  selector:
    app: eureka
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: eureka
spec:
  serviceName: "eureka"
  replicas: 2
  selector:
    matchLabels:
      app: eureka
  template:
    metadata:
      labels:
        app: eureka
    spec:
      imagePullSecrets:
        - name: $SECRET_NAME
      containers:
        - name: eureka
          image: $IMAGE_NAME
          ports:
            - containerPort: 10086
          env:
            - name: MY_POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: EUREKA_SERVER
              value: "http://eureka-0.eureka:10086/eureka/,http://eureka-1.eureka:10086/eureka/"
            - name: EUREKA_INSTANCE_HOSTNAME
              value: ${MY_POD_NAME}.eureka
  podManagementPolicy: "Parallel"
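The `EUREKA_SERVER` value follows from how a StatefulSet names its pods: replica i of the set `eureka` behind the service `eureka` is reachable as `eureka-i.eureka`. A minimal sketch that derives the peer list from the replica count is shown below; the function name `eureka_peer_urls` is hypothetical.

```shell
# Hypothetical helper: build the comma-separated peer list for a StatefulSet named "eureka".
# $1 = replica count, $2 = port.
eureka_peer_urls() {
  replicas="$1"; port="$2"; urls=""; i=0
  while [ "$i" -lt "$replicas" ]; do
    # Append a comma only when the list already has an entry.
    urls="${urls}${urls:+,}http://eureka-${i}.eureka:${port}/eureka/"
    i=$((i + 1))
  done
  printf '%s\n' "$urls"
}

eureka_peer_urls 2 10086
```

If the replica count in deploy.yml changes, the `EUREKA_SERVER` value has to be regenerated to match.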

Update the application.yml configuration file:

server:
  port: ${PORT:10086}
spring:
  application:
    name: eureka
eureka:
  server:
    # Eviction interval: how often expired services are scanned for (default 60*1000 ms)
    eviction-interval-timer-in-ms: 5000
    enable-self-preservation: false
    use-read-only-response-cache: false
  client:
    # How often the eureka client fetches the registry (default 30 s)
    registry-fetch-interval-seconds: 5
    serviceUrl:
      defaultZone: ${EUREKA_SERVER:http://127.0.0.1:${server.port}/eureka/}
  instance:
    # Heartbeat interval: how long to wait after one heartbeat before sending the next (default 30 s)
    lease-renewal-interval-in-seconds: 5
    # How long to wait for the next heartbeat after receiving one; it must exceed the heartbeat interval. This is the lease expiration time (default 90 s)
    lease-expiration-duration-in-seconds: 10
    instance-id: ${EUREKA_INSTANCE_HOSTNAME:${spring.application.name}}:${server.port}@${random.long(1000000,9999999)}
    hostname: ${EUREKA_INSTANCE_HOSTNAME:${spring.application.name}}

Commit the modified configuration file and the yml file.

K8s needs a secret in order to pull from Harbor:

docker login -u tom -p Abcd1234 192.168.74.7:85
kubectl create secret docker-registry registry-auth-secret --docker-server=192.168.74.7:85 --docker-username=tom --docker-password=Abcd1234 --docker-email=[email protected]
kubectl get secrets
kubectl get pods
kubectl get service

kubectl get pods shows whether the build succeeded.

Access port 30708 on either of the two nodes.

Then update the yml configuration files of the remaining three sub-services.

3. zuul

In application.yml, change the eureka address to:

http://eureka-0.eureka:10086/eureka/,http://eureka-1.eureka:10086/eureka/

Create deploy.yml as follows (for the other projects, only the name and port change):

---
apiVersion: v1
kind: Service
metadata:
  name: zuul
  labels:
    app: zuul
spec:
  type: NodePort
  ports:
    - port: 10020
      name: zuul
      targetPort: 10020
  selector:
    app: zuul
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zuul
spec:
  serviceName: "zuul"
  replicas: 2
  selector:
    matchLabels:
      app: zuul
  template:
    metadata:
      labels:
        app: zuul
    spec:
      imagePullSecrets:
        - name: $SECRET_NAME
      containers:
        - name: zuul
          image: $IMAGE_NAME
          ports:
            - containerPort: 10020
  podManagementPolicy: "Parallel"

4. admin

In application.yml, change the eureka address to:

http://eureka-0.eureka:10086/eureka/,http://eureka-1.eureka:10086/eureka/

deploy.yml

---
apiVersion: v1
kind: Service
metadata:
  name: admin
  labels:
    app: admin
spec:
  type: NodePort
  ports:
    - port: 9001
      name: admin
      targetPort: 9001
  selector:
    app: admin
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: admin
spec:
  serviceName: "admin"
  replicas: 2
  selector:
    matchLabels:
      app: admin
  template:
    metadata:
      labels:
        app: admin
    spec:
      imagePullSecrets:
        - name: $SECRET_NAME
      containers:
        - name: admin
          image: $IMAGE_NAME
          ports:
            - containerPort: 9001
  podManagementPolicy: "Parallel"

5. gathering

In application.yml, change the eureka address to:

http://eureka-0.eureka:10086/eureka/,http://eureka-1.eureka:10086/eureka/

deploy.yml

---
apiVersion: v1
kind: Service
metadata:
  name: gathering
  labels:
    app: gathering
spec:
  type: NodePort
  ports:
    - port: 9002
      name: gathering
      targetPort: 9002
  selector:
    app: gathering
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: gathering
spec:
  serviceName: "gathering"
  replicas: 2
  selector:
    matchLabels:
      app: gathering
  template:
    metadata:
      labels:
        app: gathering
    spec:
      imagePullSecrets:
        - name: $SECRET_NAME
      containers:
        - name: gathering
          image: $IMAGE_NAME
          ports:
            - containerPort: 9002
  podManagementPolicy: "Parallel"

When everything is done, commit all of it to the GitLab repository.

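6. Building the three sub-services in turn

6.1 zuul

6.2 admin

6.3 gathering

7. Testing the database with Postman

The application.yml of each microservice points at the MySQL database at 192.168.74.8, so the database on the machine from the earlier experiments is reused.

The production server address is now the address of a K8s node, and the access port is the mapped NodePort.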
