Logistic Regression in Python


- Step 1: import the libraries and the data;

- Step 2: process the data;

- Step 3: build the model;

- Finally, evaluate the model.

Step 1: import the libraries and the data.

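```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

# Use a font that can render Chinese characters (SimHei) in plots,
# and keep minus signs displaying correctly with that font
plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['axes.unicode_minus'] = False
```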

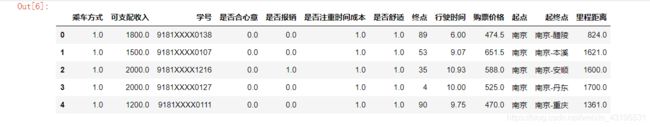

data = pd.read_excel('F:\\Desktop\\数据.xlsx')

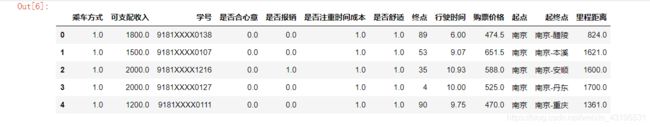

data[:5]
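The Excel file above lives on the author's machine and is not distributed with the post. To try the remaining steps without it, a minimal sketch like the following builds a hypothetical stand-in `data` frame with the same column names the later code expects. Every value is random placeholder data, not the author's dataset, and the 0/1 coding of `乘车方式` and of the `是否…` columns is an assumption the original does not confirm.

```python
import numpy as np
import pandas as pd

# Hypothetical placeholder data: random values, NOT the author's dataset
rng = np.random.default_rng(0)
n = 200
places = ['A', 'B', 'C', 'D']  # hypothetical place names

data = pd.DataFrame({
    # "origin-destination" strings, the format the Step 2 split expects
    '起终点': [f'{rng.choice(places)}-{rng.choice(places)}' for _ in range(n)],
    '乘车方式': rng.integers(0, 2, n),          # travel mode (target), assumed 0/1
    '里程距离': rng.uniform(50, 1500, n),       # distance
    '行驶时间': rng.uniform(0.5, 20, n),        # travel time
    '是否合心意': rng.integers(0, 2, n),        # suits the traveller? (assumed 0/1)
    '可支配收入': rng.uniform(1000, 20000, n),  # disposable income
    '是否报销': rng.integers(0, 2, n),          # fare reimbursed? (assumed 0/1)
    '购票价格': rng.uniform(20, 1500, n),       # ticket price
    '是否舒适': rng.integers(0, 2, n),          # comfortable? (assumed 0/1)
    '是否注重时间成本': rng.integers(0, 2, n),  # cares about time cost? (assumed 0/1)
})
print(data.head())
```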

Step 2: process the data.

```python
# Split the '起终点' (origin-destination) strings, e.g. "X-Y", into
# an '起点' (origin) column and a '终点' (destination) column
parts = data['起终点'].str.split('-')
data['起点'] = parts.str[0]
data['终点'] = parts.str[1]

# Encode each destination as an integer: sorted unique labels -> 0, 1, 2, ...
class_mapping = {label: k for k, label in enumerate(np.unique(data['终点']))}
data['终点'] = data['终点'].map(class_mapping)

data.head()
```
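The dictionary built above gives each distinct destination an integer code in sorted order, starting at 0. As a comparison on a small hypothetical column (the city names are made up), scikit-learn's `LabelEncoder`, which also sorts the unique values, should produce the same codes. Keep in mind that a single integer column makes a linear model treat destinations as if they were ordered; one-hot encoding with `pd.get_dummies`, shown at the end, is a common alternative that the original code does not use.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical origin-destination strings
od = pd.Series(['Beijing-Shanghai', 'Beijing-Wuhan', 'Xian-Shanghai', 'Wuhan-Beijing'])

# Same split as in Step 2: parts before and after the '-'
parts = od.str.split('-')
origin, dest = parts.str[0], parts.str[1]

# Dictionary encoding from Step 2: sorted unique labels -> 0, 1, 2, ...
class_mapping = {label: k for k, label in enumerate(np.unique(dest))}
codes_dict = dest.map(class_mapping).to_numpy()

# LabelEncoder sorts the classes the same way, so the codes should match
codes_le = LabelEncoder().fit_transform(dest)
print(class_mapping, codes_dict, codes_le)

# Alternative (not used in the post): one indicator column per destination
one_hot = pd.get_dummies(dest, prefix='dest')
print(one_hot)
```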

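Step 3: build the model.

The target is `乘车方式` (travel mode). The features are `终点` (destination, encoded above), `里程距离` (distance), `行驶时间` (travel time), `是否合心意` (whether the option suits the traveller), `可支配收入` (disposable income), `是否报销` (whether the fare is reimbursed), `购票价格` (ticket price), `是否舒适` (whether it is comfortable) and `是否注重时间成本` (whether the traveller cares about time cost).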

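```python
# Target first, followed by the feature columns
data1 = data[['乘车方式', '终点', '里程距离', '行驶时间', '是否合心意', '可支配收入', '是否报销', '购票价格', '是否舒适', '是否注重时间成本']]
X = data1.iloc[:, 1:]  # features: every column except the first
y = data1.iloc[:, 0]   # target: 乘车方式
```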

```python
from sklearn.model_selection import train_test_split

# 70/30 train/test split with a fixed random seed
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)
```
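A quick sketch on a hypothetical 10-row array shows what `test_size` and `random_state` do in practice: 30% of 10 rows gives a 3-row test set, and repeating the call with the same `random_state` returns the identical split. The `stratify` argument at the end is an optional addition not used in the original code; it keeps the class proportions approximately the same in both parts.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical toy data: 10 rows, 2 features, balanced 0/1 labels
X = np.arange(20).reshape(10, 2)
y = np.array([0, 1] * 5)

# 30% of 10 rows -> 3 test rows, 7 training rows
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)
print(X_tr.shape, X_te.shape)

# The same random_state reproduces exactly the same split
X_tr2, X_te2, _, _ = train_test_split(X, y, test_size=0.3, random_state=0)
print(np.array_equal(X_te, X_te2))

# Optional: stratify=y keeps the 0/1 ratio similar in train and test
_, _, y_tr_s, y_te_s = train_test_split(X, y, test_size=0.3, random_state=0, stratify=y)
print(np.bincount(y_tr_s, minlength=2), np.bincount(y_te_s, minlength=2))
```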

```python
from sklearn.preprocessing import StandardScaler

# Standardize the features: fit on the training set, reuse it for the test set
stdsc = StandardScaler()
X_train_std = stdsc.fit_transform(X_train)
X_test_std = stdsc.transform(X_test)
```
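Standardization maps each feature value x to z = (x − μ) / σ, where μ and σ are the training-set mean and population standard deviation (ddof = 0). A minimal check on hypothetical numbers confirms that the test row is scaled with the training statistics rather than its own:

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical training and test values for a single feature
X_train = np.array([[1.0], [2.0], [3.0], [4.0]])
X_test = np.array([[5.0]])

stdsc = StandardScaler()
X_train_std = stdsc.fit_transform(X_train)  # learns mean and std from training data
X_test_std = stdsc.transform(X_test)        # reuses the training mean and std

# Manual equivalent using the population standard deviation (ddof=0)
mu = X_train.mean(axis=0)
sigma = X_train.std(axis=0)
print(stdsc.mean_, stdsc.scale_)
print(X_test_std, (X_test - mu) / sigma)
```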

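```python
from sklearn.linear_model import LogisticRegression

# C is the inverse regularization strength: larger C means weaker regularization
lr = LogisticRegression(C=10)
lr.fit(X_train_std, y_train)

print('Training accuracy:', lr.score(X_train_std, y_train))
print('Test accuracy:', lr.score(X_test_std, y_test))

# Fitted weights (one per feature) and intercept
print(lr.coef_)
print(lr.intercept_)
```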

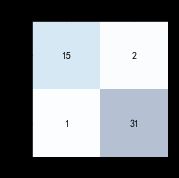

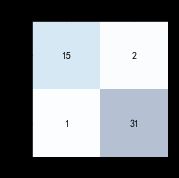
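For a binary target, logistic regression models the probability of the positive class as σ(w·x + b) with σ(z) = 1 / (1 + e^(−z)); `coef_` holds the weights w, one per feature column, and `intercept_` holds b. The sketch below uses hypothetical synthetic data from `make_classification` in place of the real features, rebuilds `predict_proba` from those two attributes, and checks that `score` is the fraction of correct predictions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Hypothetical synthetic binary data standing in for the real features
X, y = make_classification(n_samples=200, n_features=4, random_state=0)

lr = LogisticRegression(C=10)
lr.fit(X, y)

# Linear score w.x + b, passed through the logistic (sigmoid) function
z = X @ lr.coef_.ravel() + lr.intercept_[0]
p_manual = 1.0 / (1.0 + np.exp(-z))

# Column 1 of predict_proba is the probability of class lr.classes_[1]
p_sklearn = lr.predict_proba(X)[:, 1]
print(np.allclose(p_manual, p_sklearn))

# score() equals the share of correct predictions (accuracy)
acc_manual = np.mean(lr.predict(X) == y)
print(acc_manual, lr.score(X, y))
```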

Finally, evaluate the model.

```python
from sklearn.metrics import confusion_matrix

# Confusion matrix of the test-set predictions
y_pred = lr.predict(X_test_std)
confmat = confusion_matrix(y_true=y_test, y_pred=y_pred)
print(confmat)

# Draw the matrix and write each cell's count inside it
fig, ax = plt.subplots(figsize=(2.5, 2.5))
ax.matshow(confmat, cmap=plt.cm.Blues, alpha=0.3)
for i in range(confmat.shape[0]):
    for j in range(confmat.shape[1]):
        ax.text(x=j, y=i, s=confmat[i, j], va='center', ha='center')
plt.xlabel('Predicted label')
plt.ylabel('True label')
plt.show()

from sklearn.metrics import precision_score, recall_score, f1_score

# Binary metrics; label 1 is treated as the positive class
print('Precision: %.4f' % precision_score(y_true=y_test, y_pred=y_pred))
print('Recall: %.4f' % recall_score(y_true=y_test, y_pred=y_pred))
print('F1: %.4f' % f1_score(y_true=y_test, y_pred=y_pred))
```

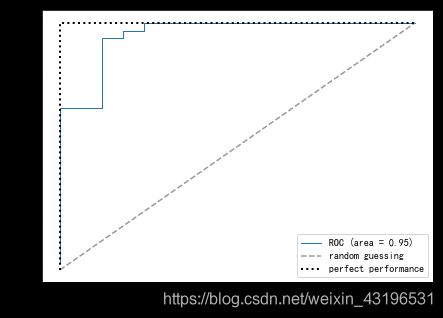

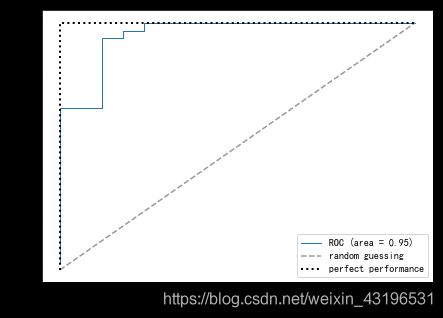
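For 0/1 labels, `confusion_matrix` puts the true classes in rows and the predicted classes in columns, so `.ravel()` returns the counts in the order TN, FP, FN, TP. The three scores printed above follow from those counts: precision = TP / (TP + FP), recall = TP / (TP + FN), and F1 is their harmonic mean. A sketch with hypothetical labels checks these formulas against scikit-learn:

```python
import numpy as np
from sklearn.metrics import confusion_matrix, precision_score, recall_score, f1_score

# Hypothetical true and predicted labels
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1, 1])
y_pred = np.array([0, 0, 0, 1, 0, 1, 1, 1, 1, 1])

# Rows = true class, columns = predicted class; ravel() -> TN, FP, FN, TP
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)

print(tn, fp, fn, tp)
print(precision, recall, f1)
```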

```python
from sklearn.metrics import roc_curve, auc

fig = plt.figure(figsize=(7, 5))

# Positive-class probabilities from the model fitted on standardized features,
# so the test set is passed in standardized form as well
probas = lr.predict_proba(X_test_std)

# ROC curve for class 1 and the area under it
fpr, tpr, thresholds = roc_curve(y_test, probas[:, 1], pos_label=1)
roc_auc = auc(fpr, tpr)
plt.plot(fpr, tpr, lw=1, label='ROC (area = %0.2f)' % roc_auc)

# Reference lines: random guessing (diagonal) and a perfect classifier
plt.plot([0, 1], [0, 1], linestyle='--', color=(0.6, 0.6, 0.6), label='random guessing')
plt.plot([0, 0, 1], [0, 1, 1], lw=2, linestyle=':', color='black', label='perfect performance')

plt.xlim([-0.05, 1.05])
plt.ylim([-0.05, 1.05])
plt.xlabel('False positive rate')
plt.ylabel('True positive rate')
plt.legend(loc='lower right')
plt.show()
```
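Each point on the ROC curve corresponds to one decision threshold on the predicted probability: every threshold yields a false-positive rate and a true-positive rate, and `auc` integrates the resulting curve with the trapezoidal rule. The small example below uses hypothetical scores for four samples to show the points `roc_curve` returns, and checks that `auc(fpr, tpr)` agrees with `roc_auc_score`.

```python
import numpy as np
from sklearn.metrics import roc_curve, auc, roc_auc_score

# Hypothetical labels and predicted positive-class probabilities
y_true = np.array([0, 0, 1, 1])
scores = np.array([0.1, 0.4, 0.35, 0.8])

fpr, tpr, thresholds = roc_curve(y_true, scores, pos_label=1)
print(fpr)  # false-positive rate at each threshold
print(tpr)  # true-positive rate at each threshold

roc_auc = auc(fpr, tpr)  # trapezoidal area under the ROC curve
print(roc_auc, roc_auc_score(y_true, scores))
```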