深度学习课件-实验1_PyTorch基本操作实验

文章目录

- 一、Pytorch基本操作考察

-

- 1.1

- 1.2

- 1.3

- 二、动手实现 logistic 回归

-

- 2.1

- 2.2

- 三、动手实现softmax回归

-

- 3.1

- 3.2

一、Pytorch基本操作考察

-

使用 初始化一个 × 的矩阵 和一个 × 的矩阵 ,对两矩阵进行减法操作(要求实现三种不同的形式),给出结果并分析三种方式的不同(如果出现报错,分析报错的原因),同时需要指出在计算过程中发生了什么

-

利用 创建两个大小分别 × 和 × 的随机数矩阵 和 ,要求服从均值为0,标准差0.01为的正态分布 2) 对第二步得到的矩阵 进行形状变换得到 的转置 ^ 3) 对上述得到的矩阵 和矩阵 ^ 求内积!

-

给定公式 _3=_1+_2=2+3,且 =1。利用学习所得到的Tensor的相关知识,求_3对的梯度,即(_3)/。要求在计算过程中,在计算^3 时中断梯度的追踪,观察结果并进行原因分析提示, 可使用 with torch.no_grad(), 举例:with torch.no_grad(): y2 = x * 3

1.1

使用 初始化一个 × 的矩阵 和一个 × 的矩阵 ,对两矩阵进行减法操作(要求实现三种不同的形式),给出结果并分析三种方式的不同(如果出现报错,分析报错的原因),同时需要指出在计算过程中发生了什么

使用Tensor初始化矩阵M、N

import torch

# 使用 tensor 库初始化矩阵

M = torch.Tensor([[1, 2, 3]]) # × 的矩阵

N = torch.Tensor([[4], [5]]) # × 的矩阵

方法一:两矩阵直接相减

result = M - N

result

tensor([[-3., -2., -1.],

[-4., -3., -2.]])

方法二:使用 tensor 库中的函数来对两个矩阵进行减法运算,例如使用 torch.sub() 函数

result = torch.sub(M, N)

result

tensor([[-3., -2., -1.],

[-4., -3., -2.]])

方法三:使用 map() 函数进行矩阵减法运算。

result = list(map(lambda x, y: [x_i - y_i for x_i, y_i in zip(x, y)], M, N))

result

[[tensor(-3.)]]

1.2

利用 创建两个大小分别 × 和 × 的随机数矩阵 和 ,要求服从均值为0,标准差0.01为的正态分布 2) 对第二步得到的矩阵 进行形状变换得到 的转置 ^ 3) 对上述得到的矩阵 和矩阵 ^ 求内积!

1)利用 创建两个大小分别 × 和 × 的随机数矩阵 和 ,要求服从均值为0,标准差0.01为的正态分布

import torch

# 利用 torch.randn() 函数创建随机数矩阵

P = torch.randn(3, 2, dtype=torch.float) * 0.01

Q = torch.randn(4, 2, dtype=torch.float) * 0.01

2)对第二步得到的矩阵 进行形状变换得到 的转置 ^

Q_T = Q.t()

- 对上述得到的矩阵 和矩阵 ^ 求内积!

result = torch.mm(P, Q_T)

result

tensor([[ 4.9330e-05, -1.1444e-04, -9.8972e-05, 1.1928e-04],

[-2.7478e-05, 7.1535e-05, 5.9566e-05, -7.8800e-05],

[ 2.1334e-04, -1.2982e-04, -2.2018e-04, -6.3379e-05]])

1.3

给定公式 _3=_1+_2=2+3,且 =1。利用学习所得到的Tensor的相关知识,求_3对的梯度,即(_3)/。要求在计算过程中,在计算^3 时中断梯度的追踪,观察结果并进行原因分析提示, 可使用 with torch.no_grad(), 举例:with torch.no_grad(): y2 = x * 3

import torch

x = torch.tensor(1.0,requires_grad = True)

y1 = x**2

with torch.no_grad():

y2 = x**3

y3 = y1+y2

y3.backward()

print(x.grad)

tensor(2.)

二、动手实现 logistic 回归

-

要求动手从0实现 logistic 回归(只借助Tensor和Numpy相关的库)在人工构造的数据集上进行训练和测试,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析

-

利用 torch.nn 实现 logistic 回归在人工构造的数据集上进行训练和测试,并对结果进行分析,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析

2.1

要求动手从0实现 logistic 回归(只借助Tensor和Numpy相关的库)在人工构造的数据集上进行训练和测试,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析

1)生成训练、测试数据集

import torch

import numpy as np

import matplotlib.pyplot as plt

num_inputs = 2

n_data = torch.ones(1000, num_inputs)

x1 = torch.normal(2 * n_data, 1)

y1 = torch.ones(1000)

x2 = torch.normal(-2 * n_data, 1)

y2 = torch.zeros(1000)

#划分训练和测试集

train_index = 800

trainfeatures = torch.cat((x1[:train_index], x2[:train_index]), 0).type(torch.FloatTensor)

trainlabels = torch.cat((y1[:train_index], y2[:train_index]), 0).type(torch.FloatTensor)

testfeatures = torch.cat((x1[train_index:], x2[train_index:]), 0).type(torch.FloatTensor)

testlabels = torch.cat((y1[train_index:], y2[train_index:]), 0).type(torch.FloatTensor)

print(len(trainfeatures))

1600

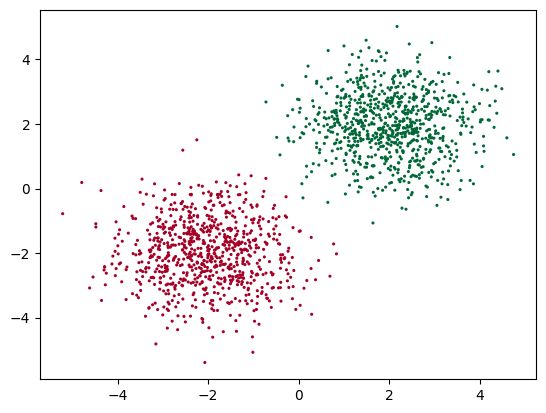

2)训练集数据可视化

plt.scatter(trainfeatures.data.numpy()[:, 0],

trainfeatures.data.numpy()[:, 1],

c=trainlabels.data.numpy(),

s=5, lw=0, cmap='RdYlGn' )

plt.show()

3)读取样本特征

def data_iter(batch_size,features,labels):

num_examples=len(features)

indices=list(range(num_examples))

np.random.shuffle(indices)

for i in range(0,num_examples,batch_size):

j=torch.LongTensor(indices[i:min(i+batch_size,num_examples)])

yield features.index_select(0,j),labels.index_select(0,j)

4)逻辑回归、二元交叉熵损失函数

def logits(X, w, b):

y = torch.mm(X, w) + b

return 1/(1+torch.pow(np.e,-y))

def logits_loss(y_hat, y):

y = y.view(y_hat.size())

return -y.mul(torch.log(y_hat))-(1-y).mul(torch.log(1-y_hat))

#优化函数

def sgd(params, lr, batch_size):

for param in params:

param.data -= lr * param.grad / batch_size # 注意这里更改param时用的param.data

- 测试集准确率

def evaluate_accuracy():

acc_sum,n,test_l_sum = 0.0,0 ,0

for X,y in data_iter(batch_size, testfeatures, testlabels):

y_hat = net(X, w, b)

y_hat = torch.squeeze(torch.where(y_hat>0.5,torch.tensor(1.0),torch.tensor(0.0)))

acc_sum += (y_hat==y).float().sum().item()

l = loss(y_hat,y).sum()

test_l_sum += l.item()

n+=y.shape[0]

return acc_sum/n,test_l_sum/n

6)模型参数初始化

w=torch.tensor(np.random.normal(0,0.01,(num_inputs,1)),dtype=torch.float32)

b=torch.zeros(1,dtype=torch.float32)

w.requires_grad_(requires_grad=True)

b.requires_grad_(requires_grad=True)

tensor([0.], requires_grad=True)

7)训练

lr = 0.0005

num_epochs = 10

net = logits

loss = logits_loss

batch_size = 50

test_acc,train_acc= [],[]

train_loss,test_loss =[],[]

for epoch in range(num_epochs): # 训练模型一共需要num_epochs个迭代周期

train_l_sum, train_acc_sum,n = 0.0,0.0,0

#在每一个迭代周期中,会使用训练数据集中所有样本一次

for X, y in data_iter(batch_size, trainfeatures, trainlabels): # x和y分别是小批量样本的特征和标签

y_hat = net(X, w, b)

l = loss(y_hat, y).sum() # l是有关小批量X和y的损失

l.backward() # 小批量的损失对模型参数求梯度

sgd([w, b], lr, batch_size) # 使用小批量随机梯度下降迭代模型参数

w.grad.data.zero_() # 梯度清零

b.grad.data.zero_() # 梯度清零

#计算每个epoch的loss

train_l_sum += l.item()

#计算训练样本的准确率

y_hat = torch.squeeze(torch.where(y_hat>0.5,torch.tensor(1.0),torch.tensor(0.0)))

train_acc_sum += (y_hat==y).sum().item()

#每一个epoch的所有样本数

n+= y.shape[0]

#train_l = loss(net(trainfeatures, w, b), trainlabels)

#计算测试样本的准确率

test_a,test_l = evaluate_accuracy()

test_acc.append(test_a)

test_loss.append(test_l)

train_acc.append(train_acc_sum/n)

train_loss.append(train_l_sum/n)

print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'

% (epoch + 1, train_loss[epoch], train_acc[epoch], test_acc[epoch]))

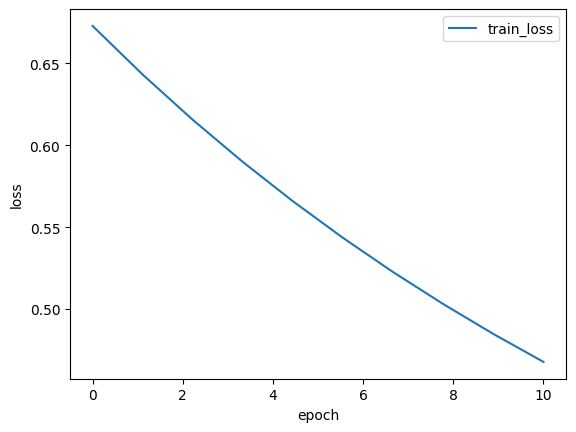

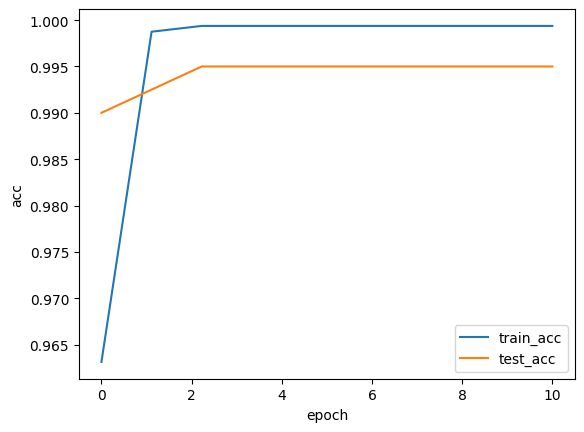

epoch 1, loss 0.6728, train acc 0.963, test acc 0.990

epoch 2, loss 0.6432, train acc 0.999, test acc 0.993

epoch 3, loss 0.6155, train acc 0.999, test acc 0.995

epoch 4, loss 0.5898, train acc 0.999, test acc 0.995

epoch 5, loss 0.5658, train acc 0.999, test acc 0.995

epoch 6, loss 0.5434, train acc 0.999, test acc 0.995

epoch 7, loss 0.5226, train acc 0.999, test acc 0.995

epoch 8, loss 0.5031, train acc 0.999, test acc 0.995

epoch 9, loss 0.4848, train acc 0.999, test acc 0.995

epoch 10, loss 0.4677, train acc 0.999, test acc 0.995

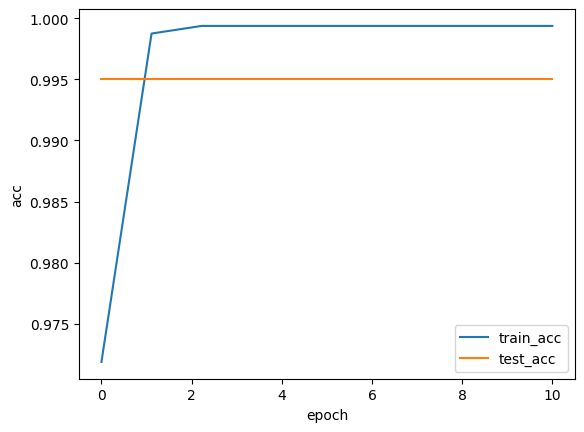

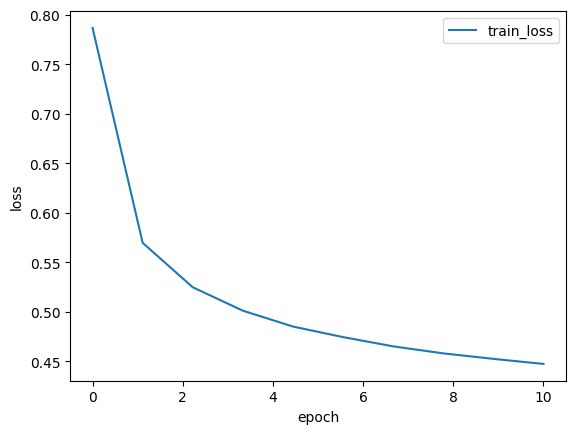

8)绘出损失、准确率

import matplotlib.pyplot as plt

def Draw_Curve(*args,xlabel = "epoch",ylabel = "loss"):#

for i in args:

x = np.linspace(0,len(i[0]),len(i[0]))

plt.plot(x,i[0],label=i[1],linewidth=1.5)

plt.xlabel(xlabel)

plt.ylabel(ylabel)

plt.legend()

plt.show()

Draw_Curve([train_loss,"train_loss"])

Draw_Curve([train_acc,"train_acc"],[test_acc,"test_acc"],ylabel = "acc")

2.2

利用 torch.nn 实现 logistic 回归在人工构造的数据集上进行训练和测试,并对结果进行分析,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析

import torch

import numpy as np

import matplotlib.pyplot as plt

import torch.utils.data as Data

from torch.nn import init

from torch import nn

1)读取数据

batch_size = 50

# 将训练数据的特征和标签组合

dataset = Data.TensorDataset(trainfeatures, trainlabels)

# 把 dataset 放入 DataLoader

train_data_iter = Data.DataLoader(dataset=dataset, # torch TensorDataset format

batch_size=batch_size, # mini batch size

shuffle=True, # 是否打乱数据 (训练集一般需要进行打乱)

num_workers=0, # 多线程来读数据, 注意在Windows下需要设置为0

)

# 将测试数据的特征和标签组合

dataset = Data.TensorDataset(testfeatures, testlabels)

# 把 dataset 放入 DataLoader

test_data_iter = Data.DataLoader(dataset=dataset, # torch TensorDataset format

batch_size=batch_size, # mini batch size

shuffle=False, # 是否打乱数据 (训练集一般需要进行打乱)

num_workers=0, # 多线程来读数据, 注意在Windows下需要设置为0

)

2)nn.Module定义模型

class LogisticRegression(nn.Module):

def __init__(self,n_features):

super(LogisticRegression, self).__init__()

self.lr = nn.Linear(n_features, 1)

self.sm = nn.Sigmoid()

def forward(self, x):

x = self.lr(x)

x = self.sm(x)

return x

3)初始化模型,定义损失函数、优化器

# 初始化模型

logistic_model = LogisticRegression(num_inputs)

# 损失函数

criterion = nn.BCELoss()

# 优化器

optimizer = torch.optim.SGD(logistic_model.parameters(), lr=1e-3)

4)参数初始化

init.normal_(logistic_model.lr.weight, mean=0, std=0.01)

init.constant_(logistic_model.lr.bias, val=0) #也可以直接修改bias的data: net[0].bias.data.fill_(0)

print(logistic_model.lr.weight)

print(logistic_model.lr.bias)

Parameter containing:

tensor([[ 0.0210, -0.0105]], requires_grad=True)

Parameter containing:

tensor([0.], requires_grad=True)

5)训练

num_epochs = 10

test_acc,train_acc= [],[]

train_loss,test_loss =[],[]

for epoch in range( num_epochs):

train_l_sum, train_acc_sum,n = 0.0,0.0,0

for X, y in train_data_iter:

y_hat = logistic_model(X)

l = criterion(y_hat, y.view(-1, 1))

optimizer.zero_grad() # 梯度清零,等价于logistic_model.zero_grad()

l.backward()

# update model parameters

optimizer.step()

#计算每个epoch的loss

train_l_sum += l.item()

#计算训练样本的准确率

y_hat = torch.squeeze(torch.where(y_hat>0.5,torch.tensor(1.0),torch.tensor(0.0)))

train_acc_sum += (y_hat==y).sum().item()

#每一个epoch的所有样本数

n+= y.shape[0]

#计算测试样本的准确率

test_a,test_l = evaluate_accuracy()

test_acc.append(test_a)

test_loss.append(test_l)

train_acc.append(train_acc_sum/n)

train_loss.append(train_l_sum/n)

print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'

% (epoch + 1, train_loss[epoch], train_acc[epoch], test_acc[epoch]))

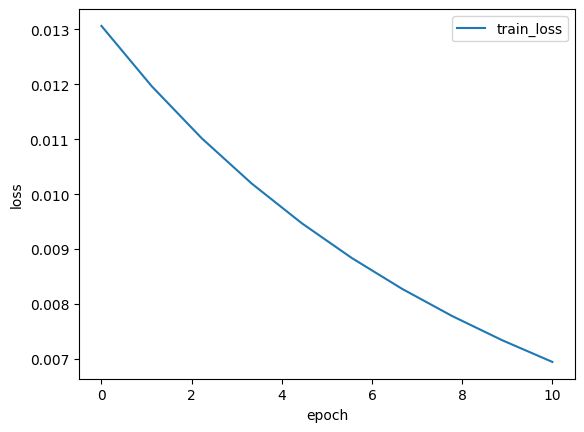

epoch 1, loss 0.0131, train acc 0.972, test acc 0.995

epoch 2, loss 0.0120, train acc 0.999, test acc 0.995

epoch 3, loss 0.0110, train acc 0.999, test acc 0.995

epoch 4, loss 0.0102, train acc 0.999, test acc 0.995

epoch 5, loss 0.0095, train acc 0.999, test acc 0.995

epoch 6, loss 0.0088, train acc 0.999, test acc 0.995

epoch 7, loss 0.0083, train acc 0.999, test acc 0.995

epoch 8, loss 0.0078, train acc 0.999, test acc 0.995

epoch 9, loss 0.0073, train acc 0.999, test acc 0.995

epoch 10, loss 0.0069, train acc 0.999, test acc 0.995

6)绘出损失、准确率

Draw_Curve([train_loss,"train_loss"])

Draw_Curve([train_acc,"train_acc"],[test_acc,"test_acc"],ylabel = "acc")

三、动手实现softmax回归

-

要求动手从0实现 softmax 回归(只借助Tensor和Numpy相关的库)在Fashion-MNIST数据集上进行训练和测试,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析(要求从零实现交叉熵损失函数

-

利用torch.nn实现 softmax 回归在Fashion-MNIST数据集上进行训练和测试,并从loss,训练集以及测试集上的准确率等多个角度对结果进行分析

3.1

要求动手从0实现 softmax 回归(只借助Tensor和Numpy相关的库)在Fashion-MNIST数据集上进行训练和测试,并从loss、训练集以及测试集上的准确率等多个角度对结果进行分析(要求从零实现交叉熵损失函数

import torchvision

import torchvision.transforms as transforms

1)读取数据

batch_size = 256

mnist_train = torchvision.datasets.FashionMNIST(root="../data", train=True, download=True, transform=transforms.ToTensor())

mnist_test = torchvision.datasets.FashionMNIST(root="../data", train=False, download=True, transform=transforms.ToTensor())

train_iter = torch.utils.data.DataLoader(mnist_train, batch_size=batch_size, shuffle=True, num_workers=0)

test_iter = torch.utils.data.DataLoader(mnist_test, batch_size=batch_size, shuffle=False, num_workers=0)

2)定义损失函数,优化函数

# 实现交叉熵损失函数

def cross_entropy(y_hat,y):

return - torch.log(y_hat.gather(1,y.view(-1,1)))

def sgd(params, lr, batch_size):

for param in params:

param.data -= lr * param.grad / batch_size # 注意这里更改param时用的param.data

3)初始化模型参数

# 初始化模型参数

num_inputs = 784 # 输入是28x28像素的图像,所以输入向量长度为28*28=784

num_outputs = 10 # 输出是10个图像类别

W = torch.tensor(np.random.normal(0,0.01,(num_inputs,num_outputs)),dtype=torch.float) # 权重参数为784x10

b = torch.zeros(num_outputs,dtype=torch.float) # 偏差参数为1x10

# 模型参数梯度

W.requires_grad_(requires_grad=True)

b.requires_grad_(requires_grad=True)

tensor([0., 0., 0., 0., 0., 0., 0., 0., 0., 0.], requires_grad=True)

4)实现softmax运算

# 实现softmax运算

def softmax(X):

X_exp = X.exp() # 通过exp函数对每个元素做指数运算

partition = X_exp.sum(dim=1, keepdim=True) # 对exp矩阵同行元素求和

return X_exp / partition # 矩阵每行各元素与该行元素之和相除 最终得到的矩阵每行元素和为1且非负

5)模型定义

# 模型定义

def net(X):

return softmax(torch.mm(X.view((-1,num_inputs)),W)+b)

6)定义精度评估函数

# 计算分类准确率

def evaluate_accuracy(data_iter,net):

acc_sum,n,test_l_sum= 0.0,0,0.0

for X,y in data_iter:

acc_sum += (net(X).argmax(dim = 1) == y).float().sum().item()

l = loss(net(X),y).sum()

test_l_sum += l.item()

n += y.shape[0]

return acc_sum/n,test_l_sum/n

7)开始训练

# 模型训练

num_epochs,lr = 10, 0.1

test_acc,train_acc= [],[]

train_loss,test_loss =[],[]

loss = cross_entropy

params = [W,b]

for epoch in range(num_epochs):

train_l_sum, train_acc_sum, n = 0.0, 0.0, 0

for X,y in train_iter:

y_hat = net(X)

l = loss(y_hat,y).sum()

# 梯度清零 梯度清零放在最后

#默认一开始梯度为零或者说没有梯度,所以讲梯度清零放在for循环的最后

l.backward()

sgd(params, lr, batch_size)

W.grad.data.zero_()

b.grad.data.zero_()

train_l_sum += l.item()

train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()

n += y.shape[0]

test_a,test_l = evaluate_accuracy(test_iter, net)

test_acc.append(test_a)

test_loss.append(test_l)

train_acc.append(train_acc_sum/n)

train_loss.append(train_l_sum/n)

print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'

% (epoch + 1, train_loss[epoch], train_acc[epoch], test_acc[epoch]))

epoch 1, loss 0.7865, train acc 0.748, test acc 0.794

epoch 2, loss 0.5697, train acc 0.813, test acc 0.812

epoch 3, loss 0.5249, train acc 0.826, test acc 0.814

epoch 4, loss 0.5012, train acc 0.831, test acc 0.823

epoch 5, loss 0.4852, train acc 0.837, test acc 0.828

epoch 6, loss 0.4746, train acc 0.840, test acc 0.830

epoch 7, loss 0.4652, train acc 0.843, test acc 0.828

epoch 8, loss 0.4582, train acc 0.845, test acc 0.833

epoch 9, loss 0.4526, train acc 0.846, test acc 0.834

epoch 10, loss 0.4475, train acc 0.848, test acc 0.833

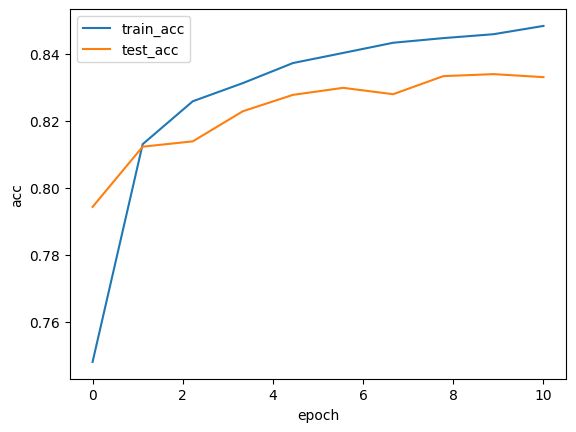

Draw_Curve([train_loss,"train_loss"])

Draw_Curve([train_acc,"train_acc"],[test_acc,"test_acc"],ylabel = "acc")

3.2

利用torch.nn实现 softmax 回归在Fashion-MNIST数据集上进行训练和测试,并从loss,训练集以及测试集上的准确率等多个角度对结果进行分析

import torchvision

import torchvision.transforms as transforms

1)读取数据

batch_size = 256

mnist_train = torchvision.datasets.FashionMNIST(root="../data", train=True, download=True, transform=transforms.ToTensor())

mnist_test = torchvision.datasets.FashionMNIST(root="../data", train=False, download=True, transform=transforms.ToTensor())

train_iter = torch.utils.data.DataLoader(mnist_train, batch_size=batch_size, shuffle=True, num_workers=0)

test_iter = torch.utils.data.DataLoader(mnist_test, batch_size=batch_size, shuffle=False, num_workers=0)

2)定义模型

#初始化模型

net = torch.nn.Sequential(nn.Flatten(),

nn.Linear(784,10))

3)初始化模型参数

def init_weights(m):#初始参数

if type(m) == nn.Linear:

nn.init.normal_(m.weight,std = 0.01)

net.apply(init_weights)

Sequential(

(0): Flatten(start_dim=1, end_dim=-1)

(1): Linear(in_features=784, out_features=10, bias=True)

)

4)损失函数、优化器

loss = nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(net.parameters(),lr = 0.1)

5)模型训练

#模型训练

num_epochs = 10

lr = 0.1

test_acc,train_acc= [],[]

train_loss,test_loss =[],[]

for epoch in range(num_epochs):

train_l_sum, train_acc_sum, n = 0.0, 0.0, 0

for X,y in train_iter:

y_hat = net(X)

l = loss(y_hat,y).sum()

optimizer.zero_grad()

l.backward()

optimizer.step()

train_l_sum += l.item()

train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()

n += y.shape[0]

test_a,test_l = evaluate_accuracy(test_iter, net)

test_acc.append(test_a)

test_loss.append(test_l)

train_acc.append(train_acc_sum/n)

train_loss.append(train_l_sum/n)

print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'%(epoch + 1, train_loss[epoch], train_acc[epoch], test_acc[epoch]))

epoch 1, loss 0.0031, train acc 0.748, test acc 0.786

epoch 2, loss 0.0022, train acc 0.812, test acc 0.805

epoch 3, loss 0.0021, train acc 0.826, test acc 0.820

epoch 4, loss 0.0020, train acc 0.832, test acc 0.819

epoch 5, loss 0.0019, train acc 0.836, test acc 0.823

epoch 6, loss 0.0019, train acc 0.840, test acc 0.825

epoch 7, loss 0.0018, train acc 0.843, test acc 0.830

epoch 8, loss 0.0018, train acc 0.845, test acc 0.833

epoch 9, loss 0.0018, train acc 0.847, test acc 0.832

epoch 10, loss 0.0018, train acc 0.848, test acc 0.832

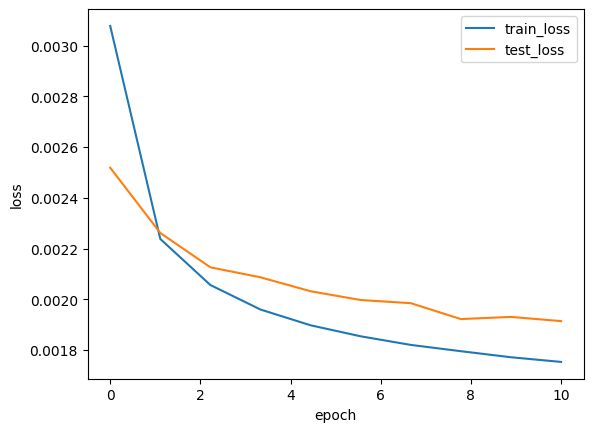

Draw_Curve([train_loss,"train_loss"],[test_loss,"test_loss"])