Python Asynchronous Crawlers (1): Multithreading

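- Multithreading and multiprocessing (not recommended)

Advantage: a separate thread or process can be started for each blocking operation, so the blocking operations run asynchronously.

Drawback: threads and processes cannot be created without limit.

- Principle: a thread pool should handle operations that are both blocking and time-consuming.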
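
The principle above can be illustrated with a minimal sketch. It assumes a pool of 3 workers and 8 simulated blocking tasks; `blocking_task` and the 0.1-second sleep are made up for the illustration. The point is that the pool reuses a fixed number of threads instead of starting one per task.

```python
import threading
import time
from multiprocessing.dummy import Pool  # thread-backed pool with the Pool API


def blocking_task(n):
    """Simulate a blocking operation and report which thread ran it."""
    time.sleep(0.1)  # stand-in for a slow network or disk call (assumed value)
    return n, threading.current_thread().name


pool = Pool(3)  # at most 3 worker threads, however many tasks arrive
results = pool.map(blocking_task, range(8))
pool.close()
pool.join()

# Collect the distinct thread names that actually did the work
worker_names = {thread_name for _, thread_name in results}
print(len(results), "tasks ran on", len(worker_names), "worker threads")
```

However many tasks are submitted, the number of distinct worker threads never exceeds the pool size.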

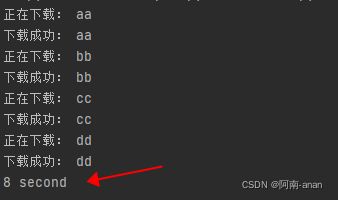

Single-threaded crawler example

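    import time

    def get_page(name):
        """Simulate downloading one page with a 2-second blocking call."""
        print("Downloading:", name)
        time.sleep(2)
        print("Downloaded:", name)

    name_list = ['aa', 'bb', 'cc', 'dd']

    start_time = time.time()
    for name in name_list:
        get_page(name)
    end_time = time.time()

    # The four downloads run one after another, so this takes about 8 seconds.
    print('%d second' % (end_time - start_time))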

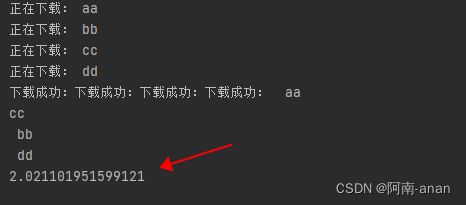

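    import time
    # Import the thread pool class; multiprocessing.dummy provides the Pool API backed by threads
    from multiprocessing.dummy import Pool

    def get_page(name):
        """Simulate downloading one page with a 2-second blocking call."""
        print("Downloading:", name)
        time.sleep(2)
        print("Downloaded:", name)

    name_list = ['aa', 'bb', 'cc', 'dd']

    start_time = time.time()
    # Create a thread pool with 4 worker threads
    pool = Pool(4)
    # Pass each element of the list to get_page; the calls run concurrently
    pool.map(get_page, name_list)
    pool.close()
    pool.join()
    end_time = time.time()

    # The four downloads overlap, so this takes about 2 seconds instead of 8.
    print(end_time - start_time)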
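
To compare the two versions side by side, the following sketch times both in one script. It assumes a shortened delay (`DELAY = 0.2`, not the 2 seconds used above) so that it finishes quickly; the exact timings will vary from machine to machine.

```python
import time
from multiprocessing.dummy import Pool

DELAY = 0.2  # shortened stand-in for the 2-second sleep (assumed value)


def get_page(name):
    """Simulate a blocking download."""
    time.sleep(DELAY)
    return name


name_list = ['aa', 'bb', 'cc', 'dd']

# Sequential version: each call waits for the previous one to finish
start = time.time()
for name in name_list:
    get_page(name)
sequential_time = time.time() - start

# Pooled version: the four calls block at the same time on separate threads
pool = Pool(4)
start = time.time()
pool.map(get_page, name_list)
pooled_time = time.time() - start
pool.close()
pool.join()

print('sequential: %.2f s, pooled: %.2f s' % (sequential_time, pooled_time))
```

The sequential time grows with the number of items, while the pooled time stays close to a single delay as long as the pool has a worker for each item.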

# Multithreaded crawler example

    import re

    import requests
    from lxml import etree
    from multiprocessing.dummy import Pool

    headers = {
        'User-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:80.0) Gecko/20100101 Firefox/80.0',
        'Content-type': 'application/json',
    }

    # Request the category page and parse out each video's detail-page URL and name
    url = "https://pearvideo.com/category_5"
    page_text = requests.get(url=url, headers=headers).text
    tree = etree.HTML(page_text)
    li_list = tree.xpath('//ul[@id="listvideoListUl"]/li')

    urls = []  # one dict per video: file name, video URL, and the Referer to send
    for li in li_list:
        detail_url = 'https://pearvideo.com/' + li.xpath('./div/a/@href')[0]
        name = li.xpath('./div/a/div[2]/text()')[0] + '.mp4'
        # The video address is looked up by content id through videoStatus.jsp, e.g.
        # https://pearvideo.com/videoStatus.jsp?contId=1751458&mrd=0.32392817067398805
        cont_id = re.findall(r'\d+', detail_url)[0]
        detail_video_url = 'https://pearvideo.com/videoStatus.jsp?contId=' + cont_id
        # This request sends the detail page as the Referer
        detail_headers = dict(headers, referer=detail_url)
        video_info = requests.get(url=detail_video_url, headers=detail_headers).json()
        video_url = video_info['videoInfo']['videos']['srcUrl']
        urls.append({'name': name, 'url': video_url, 'referer': detail_url})
        print(video_url)

    def get_video_data(dic):
        """Download one video and save it under dic['name']."""
        print(dic['name'], 'downloading...')
        # Send the Referer of this video's own detail page, not the last one seen by the loop
        data = requests.get(url=dic['url'], headers=dict(headers, referer=dic['referer'])).content
        # Persist the data to disk
        with open(dic['name'], 'wb') as fp:
            fp.write(data)
        print(dic['name'], 'downloaded')

    # Use a thread pool for the video requests (blocking and time-consuming)
    pool = Pool(4)
    pool.map(get_video_data, urls)
    pool.close()
    pool.join()
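
One thing to keep in mind with `pool.map` is that an exception raised inside any worker is re-raised in the caller, so a single failed download can hide the outcome of the others. The sketch below shows one possible way to report failures per item instead. It needs no network access: `fake_download`, `safe_download`, and the `example.com` URLs are hypothetical stand-ins, not part of the crawler above.

```python
from multiprocessing.dummy import Pool


def fake_download(item):
    """Hypothetical stand-in for a real download; fails when the URL is missing."""
    if item['url'] is None:
        raise ValueError('missing url')
    return len(item['url'])  # pretend this is the number of bytes written


def safe_download(item):
    """Run one download and return a status tuple instead of raising."""
    try:
        return item['name'], True, fake_download(item)
    except Exception as exc:
        return item['name'], False, str(exc)


items = [
    {'name': 'a.mp4', 'url': 'https://example.com/a'},
    {'name': 'b.mp4', 'url': None},  # deliberately broken entry
    {'name': 'c.mp4', 'url': 'https://example.com/c'},
]

pool = Pool(4)
outcomes = pool.map(safe_download, items)
pool.close()
pool.join()

# List the items that failed so they can be retried or logged
failed = [name for name, ok, _ in outcomes if not ok]
print('failed:', failed)  # prints: failed: ['b.mp4']
```

In the crawler, the same wrapper pattern could be applied around `get_video_data`, so the pool finishes every item and the failures can be retried afterwards.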