- Machine Learning and Deep Learning: Relationship and Differences

ℒℴѵℯ心·动ꦿ໊ོ꫞

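artificial intelligence, learning, deep learning, python

I. Overview of Machine Learning. Definition: Machine learning (ML) is a data-driven approach that uses statistics and computational algorithms to train models, enabling computers to learn from data and make predictions or decisions automatically. By analyzing large numbers of data samples, machine learning identifies the patterns and regularities in them and uses these to make judgments about new data. Its core idea is that the training process continually optimizes the model and improves its predictive accuracy. Main types: 1. Supervised learning. Supervised learning means that the training dataset contains inputs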

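- The World in Numbers, Issue 17: The World's Top 10 Data Centers in 2021, with China Mobile in First Place

张三叨

Did you know? In 2016, data centers worldwide consumed a total of 416 billion kWh of electricity, 40% more than the entire electricity generation of the United Kingdom! Preface: Every day we create more than 2.5 million TB of data, and as the Internet of Things (IoT) continues to spread, this figure will keep growing. This enormous volume of data is stored in dedicated facilities called "data centers". Although the earliest data centers were built in the 1940s, they did not become mainstream until the dot-com bubble of 1997-2000. Today's technologies, such as artificial intelligence and machine learning, have pushed us toward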

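- NoSQL Database Technology and Applications: Key Points

皆过客,揽星河

NoSQL, nosql, databases, big data, data analysis, data structures, non-relational databases

NoSQL review. Big data processing pipeline: data collection (Flume, web crawlers, sensors); data storage (the stage where this course's NoSQL material fits): HDFS, MongoDB, HBase, etc.; data cleaning (loading into the warehouse): Hive, etc.; data processing and analysis (Spark, Flink, etc.); data visualization; data mining and machine learning applications (Python, Spark MLlib, etc.). Storage challenges in the big data era (the "three highs"): high concurrency (many users accessing at the same time); high scalability (storage must be expandable on demand at any time); high efficiency (reads and writes must be fast).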

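- Commonly Used Third-Party Modules for Python Development

换个网名有点难

python, programming languages

Python is a powerful programming language with a rich set of third-party libraries that make developers' work much easier. Below are 100 commonly used Python libraries covering many fields: 1. NumPy, the fundamental library for scientific computing. 2. Pandas, which provides data structures and data analysis tools. 3. Matplotlib, a plotting library. 4. Scikit-learn, a machine learning library. 5. SciPy, a library for mathematics, science, and engineering. 6. TensorFlow, developed by Google, an open-source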

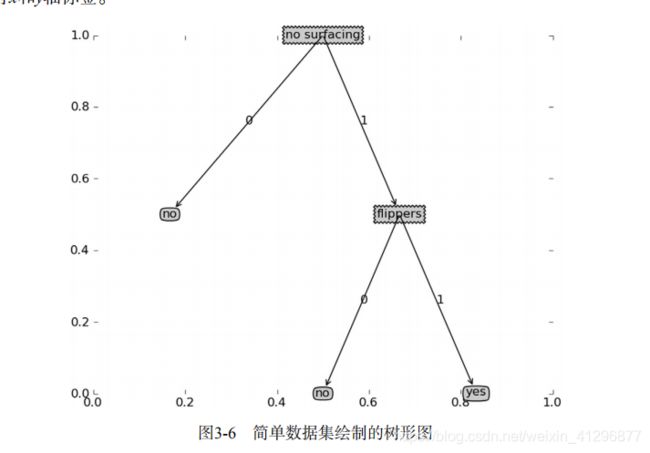

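- Implementing Simple Machine Learning Algorithms in Python

master_chenchengg

python, python office productivity, python development, IT

Implementing Simple Machine Learning Algorithms in Python. Opening: A First Look at the Wonderful Journey of Machine Learning. Setting Up the Environment: It All Starts with Installation. The Essential Toolbox. Step One: Installing Anaconda and Jupyter Notebook. Tip: How to Configure Python Environment Variables. A First Taste of Algorithms: Python Machine Learning from Scratch. Linear Regression: Letting the Data Speak. Data Preparation: Where to Find Data. Hands-On Coding: Implementing Linear Regression in Python. Model Evaluation: How to Judge Whether a Model Is Good. Logistic Regression: Starting with Classification. Theory Primer: What Is Logistic Regression. Code Implementation: Using skl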

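- Tiling Remote Sensing Imagery

sand&wich

computer vision, python, image processing

In remote sensing image analysis, large images often need to be split into smaller tiles for detailed analysis and processing. This approach is especially well suited to machine learning and image processing tasks such as object detection and image classification. The following shows how to do this with Python and the OpenCV library while ensuring that each tile retains the correct geographic information. Preparing the environment: First, make sure the required Python libraries are available, including numpy, opencv-python, and xml.etree.ElementTree (the last of which ships with the Python standard library and needs no installation). These libraries will be used for image processing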

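- How to Download the AI Art Tool Midjourney, with a Guide to Managing Your Artwork

设计师早上好

Midjourney is a powerful AI art tool that uses machine learning techniques and algorithms such as deep neural networks to generate artwork in a wide range of artistic styles. It has broad application prospects in creative design, advertising, and similar fields. So how do you download the AI art tool Midjourney? This article covers how to download Midjourney and how to manage your artwork. I. Downloading Midjourney: Downloading Midjourney is very simple. Just open the Midjourney website (click "GetMidjour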

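- [Practical Application] Model Performance Evaluation Metrics in Deep Learning

YuanDaima2048

deep learning tool usage, deep learning, artificial intelligence, loss functions, performance evaluation, pytorch, python, machine learning

Article index: YuanDaiMa2048 blog article index. Model Performance Evaluation Metrics in Deep Learning: classification tasks, regression tasks, ranking tasks, clustering tasks, generation tasks, other. Introduction: In machine learning and deep learning, evaluating model performance is a critical task. Different learning tasks require different metrics to measure a model's effectiveness. The following is a detailed explanation and summary of some common tasks and their corresponding evaluation metrics. Classification tasks: In a classification task, the model must assign input data to predefined classes or labels. Commonly used metrics for classification tasks include: Accuracy (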
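The teaser above is cut off just as it starts listing classification metrics. As a minimal sketch of what such metrics usually look like for binary labels, the following computes accuracy, precision, and recall. Only accuracy is named in the visible text; precision and recall, the function name `classification_metrics`, and the `positive` parameter are assumptions added for illustration.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return (accuracy, precision, recall) for binary labels.

    Illustrative helper, not taken from the original article.
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equally long")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    # Report 0.0 when a ratio is undefined (no predicted or no actual positives).
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return accuracy, precision, recall


acc, prec, rec = classification_metrics([1, 0, 1, 1, 0], [1, 0, 0, 1, 1])
print(f"accuracy={acc:.2f} precision={prec:.2f} recall={rec:.2f}")
```

Accuracy alone can mislead on imbalanced data, which is why precision and recall are commonly reported next to it.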

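- Machine Learning: Clustering Algorithms

不良人龍木木

machine learning, machine learning algorithms, clustering

Machine Learning: Clustering Algorithms. 1. AHC 2. K-means 3. SC 4. MCL. Personal notes only; thanks for the likes and follows! 1. AHC 2. K-means 3. SC: traditional spectral clustering, with my own understanding of the spectral clustering algorithm and some improvements. 4. MCL. For now I focus solely on learning and sharing NLP techniques. Thank you all for your attention and support!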

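- What Will the Future Software Market Look Like? How Much Room Will Developers Have to Survive?

cesske

software requirements

Contents: Preface; I. Development Trends in the Future Software Market; II. Room for Software Developers to Survive. Preface: What will the future software market look like? How much room will developers have to survive? I. Development trends in the future software market. Technology trends: Artificial intelligence and machine learning: as the technology matures, AI will be applied in more fields, such as intelligent customer service, autonomous driving, and smart manufacturing, which will greatly drive growth in the software market. Cloud computing and big data: cloud services will continue to spread, and big data technologies will be applied more widely. Enterprises will rely more heavily on cloud computing and big data to optimize operations, improve efficiency, and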

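- Using zeros in Python: numpy.zeros() in Python

江平舟

using zeros in python

numpy.zeros() is one of the most important functions and is widely used in machine learning programs. It returns a new array of the given shape and type, filled with zeros. Syntax: numpy.zeros(shape, dtype=float, order='C') Parameters: shape: int or tuple of ints. This parameter defines the dimensions of the array to create, for example (3, 2) or 2. dtype: data type (optional). This parameter is used to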

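- [NumPy] An In-Depth Look at the numpy.zeros() Function

二七830

numpy

Welcome to my personal homepage. This is my little corner where I dig deep into Python programming, machine learning, and natural language processing (NLP) and enjoy sharing knowledge and experience! About the author: I am 二七830, an explorer with a passion for technology. Years of hands-on Python programming and machine learning have given me a deep understanding of the core principles behind these technologies and the ability to apply them flexibly in real projects. In NLP in particular, I have accumulated extensive experience and can handle a variety of complex natural language tasks. Technical expertise: I am proficient in the Python programming language and have studied in depth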

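- [Air China: Registration and Login Security Analysis Report]

风控牛

CAPTCHA API security evaluation series, security, behavioral verification, GeeTest, NetEase Yidun, smartphones

Preface: Website registration entry points are easy targets for attackers and raise the following security problems: 1. Brute-force password cracking, which leaks user information. 2. Fraudulent triggering of SMS messages, which affects the business and leads to user complaints. 3. Financial losses, especially for postpaid customers, where the risk is enormous and the losses can become a bottomless pit. Most websites and apps therefore adopt interactive solutions such as image CAPTCHAs or slider CAPTCHAs. But now that machine learning capabilities have improved, even a major company like Baidu has been attacked and publicly criticized as a result. So how secure are image and interactive verification really? See the detailed analysis below. I. Air China PC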

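- Machine Learning: Dimensionality Reduction for Manifold Data with the UMAP Algorithm

小嗷犬

Python, machine learning, #data analysis and visualization, machine learning algorithms, artificial intelligence

✅ About the author: an undergraduate majoring in artificial intelligence who enjoys computers and programming and blogs to record the learning journey. Homepage: 小嗷犬's homepage. Website: 小嗷犬's tech site. Personal motto: "To ordain conscience for Heaven and Earth, to secure life and fortune for the people, to continue the lost teachings of past sages, and to establish peace for all future generations." Contents: Introduction to UMAP; Theoretical foundations; Features and advantages; Application scenarios; Using UMAP in Python; Installing the umap-learn library; Visualizing the handwritten digits dataset with UMAP. Introduction to UMAP: UMAP (UniformManifoldApproximatio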

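- VII. Regularization

愿风去了

Andrew Ng's Machine Learning: Regularization http://www.cnblogs.com/jianxinzhou/p/4083921.html Understanding L1 and L2 through the mathematical formulas https://blog.csdn.net/b876144622/article/details/81276818 Although adding basis functions to linear regression makes the model more flexible, it can easily lead to overfitting. For example, if the data are projected onto 30-dimensional basis functions, the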

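- Machine Learning: Data Standardization

罔闻_spider

data analysis, algorithms, machine learning, artificial intelligence

What is normalization, and how does it differ from standardization? I. Purpose: During training, the feature values and labels should be standardized first, which helps prevent exploding gradients and overfitting. Once the labels are standardized, the network's predictions are on the standardized scale (zero mean, unit variance) produced by StandardScaler() and differ greatly from the true values, because StandardScaler() transforms the data as (true value - mean) / standard deviation. At prediction time, the outputs therefore need to be inverse-standardized. Improving model accuracy: standardization/normalization makes features of different dimensions more comparable numerically, improving classification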
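The transform described above, (value - mean) / standard deviation, and the inverse step needed at prediction time can be sketched in plain Python. This is a hand-rolled illustration rather than scikit-learn's `StandardScaler` itself; the function names are hypothetical, and the population standard deviation (ddof = 0) is used on the assumption that this is what the scaler computes.

```python
import statistics


def fit_standardizer(values):
    """Return (mean, std) of the training values; std is the population std."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        raise ValueError("cannot standardize constant data")
    return mean, std


def standardize(values, mean, std):
    """Apply z = (x - mean) / std to every value."""
    return [(v - mean) / std for v in values]


def inverse_standardize(z_values, mean, std):
    """Undo the transform: x = z * std + mean."""
    return [z * std + mean for z in z_values]


labels = [2, 4, 4, 4, 5, 5, 7, 9]
mean, std = fit_standardizer(labels)
scaled = standardize(labels, mean, std)
restored = inverse_standardize(scaled, mean, std)
print(mean, std)
print(scaled)
print(restored)
```

The mean and standard deviation fitted on the training labels have to be stored, because the same two numbers are needed to map predictions back to the original scale.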

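- Sharing a Python-Based E-Book Data Collection and Visual Analysis Project: Hadoop E-Book Data Analysis and Recommendation System, Spark Big Data Graduation Project (Source Code, Debugging, Thesis, Proposal, PPT)

计算机源码社

Python projects, big data, big data, python, hadoop, computer science graduation project topics, computer science graduation project source code, data analysis, spark, graduation projects

Author: 计算机源码社. About me: I have eight years of development experience and work with Java, Python, PHP, .NET, Node.js, Android, WeChat mini programs, web crawlers, big data, machine learning, and more. If you have questions in these areas, feel free to get in touch! Study materials, software development, technical Q&A, documents and reports. If you need the source code, scan the QR code below the article to contact me. Java projects, WeChat mini program projects, Android projects, Python projects, PHP projects, ASP.NET projects, Node.js projects, topic recommendations, hands-on projects|p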

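- Two Ways to Tell Whether Python Is 32-bit or 64-bit

sanqima

Python programming, computers, python, programming languages

Since its release in 1991, Python has grown rapidly in web development, automated testing, artificial intelligence, image recognition, machine learning, and other fields, thanks to its concise, clear, readable syntax and its rich standard library and third-party tools. Python is a glue language: it links with C/C++ through Cython and with Java through Jython. Python is cross-platform and runs on many operating systems, including but not limited to Windows, Linux, and macOS. This means that with Py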
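The teaser is cut off before it reaches the two methods named in the title, so the following is only a sketch of two common standard-library checks, which may or may not be the ones the article uses. The function names are hypothetical.

```python
import struct
import sys


def bits_from_pointer_size():
    """Size of a C pointer ("P") in bytes, times 8, gives the interpreter's bitness."""
    return struct.calcsize("P") * 8


def bits_from_maxsize():
    """sys.maxsize exceeds 2**32 only on 64-bit builds."""
    return 64 if sys.maxsize > 2**32 else 32


print(bits_from_pointer_size(), bits_from_maxsize())
```

Both checks describe the running interpreter, not the operating system: a 32-bit Python installed on a 64-bit OS reports 32.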

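- Selecting Examples with Maximal Marginal Relevance (MMR): Improving the Diversity and Relevance of AI Models

aehrutktrjk

artificial intelligence, easyui, frontend, python

Selecting Examples with Maximal Marginal Relevance (MMR): Improving the Diversity and Relevance of AI Models. Introduction: In machine learning and natural language processing, choosing suitable training examples is critical to model performance. Maximal Marginal Relevance (MMR) is an effective example-selection method that considers not only how relevant each example is to the input but also how diverse the selected examples are. This article explores how to use MMR to select examples in order to improve the performance and generalization of AI models. What is Maximal Marginal Relevance (MM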
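The trade-off described above, relevance to the input versus diversity among the examples already chosen, can be sketched as a greedy loop. This is a generic MMR illustration under stated assumptions, not the article's code: vectors are plain Python lists, similarity is cosine similarity, and the names `mmr_select` and `lam` (the relevance/diversity weight) are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mmr_select(query, candidates, k, lam=0.5):
    """Greedily pick up to k candidate indices by MMR score.

    score(i) = lam * sim(query, c_i) - (1 - lam) * max_j sim(c_i, c_j),
    where j ranges over the candidates selected so far.
    """
    selected = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:
        def score(i):
            relevance = cosine(query, candidates[i])
            redundancy = max(
                (cosine(candidates[i], candidates[j]) for j in selected),
                default=0.0,
            )
            return lam * relevance - (1 - lam) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 1.0]
candidates = [[1.0, 0.9], [1.0, 0.8], [0.0, 1.0]]
print(mmr_select(query, candidates, k=2, lam=0.5))
print(mmr_select(query, candidates, k=2, lam=1.0))
```

With `lam=1.0` the selection reduces to plain relevance ranking; lowering `lam` penalizes candidates that resemble ones already picked, so a near-duplicate of the first pick loses out to a less relevant but different example.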

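- LangChain Integration Guide: How to Make Use of Diverse AI Providers

aehrutktrjk

artificial intelligence, langchain, python

LangChain Integration Guide: How to Make Use of Diverse AI Providers. Introduction: In artificial intelligence and machine learning, LangChain has become a powerful and flexible framework that lets developers easily integrate a variety of AI service providers. This article takes a close look at LangChain's integration capabilities, shows how to use different AI providers to enhance your applications, and provides practical code examples. Overview of LangChain integrations: LangChain supports integrations with many AI providers, which fall into two categories: Standalone package integrations: these providers have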

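- Machine Learning vs. Deep Learning

nfgo

machine learning

Machine learning (ML) and deep learning (DL) are two subfields of artificial intelligence (AI). They have much in common but also differ significantly in technical implementation and scope of application. The two are distinguished below from several angles: 1. Conceptual level. Machine learning: a technology that lets computers learn and improve automatically from data through algorithms. It relies on manually designed features and mathematical models for learning; common models include decision trees, support vector machines, and linear regression. Deep learning: a subfield of machine learning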

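- Big Data Graduation Project: Hadoop + Spark + Hive Knowledge Graph, Rental Housing Data Analysis and Visualization Dashboard; Rental Recommendation System; 58同城 Rental Listings Crawler; Listing Recommendation System; Housing Price Prediction System; Computer Science Graduation Project; Machine Learning; Deep Learning; Artificial Intelligence

2401_84572577

programmers, big data, hadoop, artificial intelligence

After so many years in development and teaching myself many programming languages, I know very well how important learning resources are when picking up a new language. Over the years I have collected a lot of useful Python material. I no longer need it myself, but for anyone preparing to teach themselves Python it may be a treasure trove that saves a great deal of time and effort. Stop studying haphazardly online. I have recently updated some of these resources, and as long as you follow me, this giveaway is yours to take. Let me first explain how to use these materials; you can grab them at the end of the article. (1) Learning roadmaps for all Python specializations (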

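- [Machine Learning with R] 1: Introduction to Machine Learning

苹果酱0567

interview questions roundup and analysis, java, middleware, programming languages, springboot, backend

1. Basic concepts. Machine learning: inventing algorithms that turn data into intelligent action. Data mining vs. machine learning: the former focuses on finding valuable information, while the latter focuses on performing known tasks. The latter is a preparatory step for the former. Process: data -> abstraction -> generalization. Or: collect data, reason about the data, generalize from the data, discover patterns. Abstraction: Training: the process of fitting a dataset with a particular model. Fitting observed data with equations: observe a phenomenon, present the data, build the model. Information is conceptualized through different formats. Generalization: turning abstracted knowledge into usable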

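- Python Frontier Technologies: Machine Learning and Artificial Intelligence

4.0啊

Python, artificial intelligence, python, machine learning

Python Frontier Technologies: Machine Learning and Artificial Intelligence. I. Introduction: With the rapid development of technology, machine learning and artificial intelligence (AI) have become hot topics in computer science. Python, an easy-to-learn, easy-to-use, and powerful programming language, has become one of the languages of choice in both fields. This article explores Python's applications in machine learning and AI, along with some cutting-edge techniques and tools. II. Python machine learning basics. 2.1 Overview of machine learning: Machine learning is a key subset of artificial intelligence (AI); its core lies in enabling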

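- ChatGPT-Empowered Python: How to Calculate the Mean in Python

tulingtest

ChatGpt, python, chatgpt, numpy, computers

How to Calculate the Mean in Python. Calculating the mean is a common task in data analysis, statistics, machine learning, and many other fields. As a powerful and easy-to-learn programming language, Python offers several ways to compute it. In this article we show how to calculate the mean in Python. What is the mean? Simply put, the mean is the sum of a set of numbers divided by how many numbers there are. For example, for the sequence 1, 3, 5, 7, 9, the mean is (1+3+5+7+9)/5 = 5. The mean is very useful in data analysis because it can provide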
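The definition above (sum divided by count) translates directly into code. Here is a minimal sketch using the teaser's own example sequence, first by hand and then with the standard library's `statistics.mean`; the helper name `mean` is chosen for illustration.

```python
import statistics


def mean(values):
    """Arithmetic mean: the sum of the values divided by how many there are."""
    if not values:
        raise ValueError("mean of an empty sequence is undefined")
    return sum(values) / len(values)


numbers = [1, 3, 5, 7, 9]
print(mean(numbers))             # (1 + 3 + 5 + 7 + 9) / 5
print(statistics.mean(numbers))  # standard-library equivalent
```

The hand-written version always returns a float because of true division, while `statistics.mean` preserves the input type where it can.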

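- Essentials for Python Beginners: What Is Anaconda? What Is It For? How Do You Use It?

懒大王爱吃狼

Python basics, python, programming languages, python basics, learning python, anaconda, anaconda installation, python tutorials

Beginners learning Python often come across the name Anaconda. What exactly is Anaconda, and why is it so popular? In this article we explore Anaconda, from installation through to everyday use. Anaconda is a free, open-source distribution of Python and R that includes thousands of packages for data science and machine learning. It also comes with tools such as Spyder and Jupyter Notebook, which beginners can use to learn Python, using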

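- Deep Learning with PyTorch in Five Minutes a Day: torch.optim, the Model Parameter Optimizer

幻风_huanfeng

deep learning frameworks, pytorch, deep learning, pytorch, artificial intelligence, neural networks, machine learning, optimization algorithms

Key points of this article: In machine learning and deep learning, we adjust parameters to minimize (or maximize) a loss function, and an optimization algorithm is a strategy for updating the model parameters. PyTorch defines optimizers in optim; we can use it to call ready-made optimization algorithms and pass in the neural network's parameters to optimize the model. This article is step 6 (optimizers) of the learning path; see the linked PyTorch learning roadmap. Stochastic gradient descent: In deep learning and machine learning, gradient descent is the most commonly used method for updating parameters. Its formula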
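The teaser breaks off before its formula, so the sketch below uses the textbook gradient descent update, theta <- theta - lr * gradient, which may differ in notation from what the article shows. It is written in plain Python rather than with `torch.optim`, and the function name, learning rate, and toy loss are all assumptions made for illustration.

```python
def gradient_descent(grad, theta, lr=0.1, steps=100):
    """Repeatedly apply theta <- theta - lr * grad(theta)."""
    for _ in range(steps):
        theta = theta - lr * grad(theta)
    return theta


def toy_grad(theta):
    """Gradient of the toy loss f(theta) = (theta - 3)**2, minimized at theta = 3."""
    return 2.0 * (theta - 3.0)


theta_star = gradient_descent(toy_grad, theta=0.0, lr=0.1, steps=100)
print(theta_star)
```

An optimizer object in a deep learning framework wraps this same loop body: it holds the parameters, reads their gradients after the backward pass, and applies the update on each step.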

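- Everything Is a Mapping: Decentralizing AI Through Integration with Blockchain Technology

AI大模型应用之禅

computational science, neural computing, deep learning, neural networks, big data, artificial intelligence, large language models, AI, AGI, LLM, Java, Python, architecture design, Agent, RPA

Everything Is a Mapping: Decentralizing AI Through Integration with Blockchain Technology. Author: 禅与计算机程序设计艺术 / Zen and the Art of Computer Programming. Keywords: AI, blockchain, decentralization, smart contracts, consensus mechanisms, data security, privacy protection, distributed ledger technology, machine learning, data privacy. 1. Background. 1.1 Origin of the problem: With the rapid development of artificial intelligence (AI), its applications are spreading across every field, from autonomous driving and smart healthcare to financial services, and AI is changing our lives.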

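- 5th MRI Machine Learning Course (Training Camp: June 5-17, 2023)

茗创科技

茗创科技 focuses on brain science data processing, covering EEG/ERP, fMRI, structural imaging, DTI, ASL, fNIRS, and more. Comments, discussion, and shares are welcome. You are also welcome to learn about our EEG courses, data processing services, and brain science workstation sales; for inquiries, add our engineer (WeChat ID MCKJ-zhouyi or 17373158786). ★ Course overview ★ Functional brain networks built from blood-oxygen-level-dependent (BOLD) functional magnetic resonance imaging (fMRI) data have shown that the brain is not merely something that, faced with external stimuli,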

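- How to Study Large AI Models Effectively

Python程序员罗宾

learning, artificial intelligence, language models, natural language processing, architecture

Learning large AI models is a systematic process that draws on knowledge from multiple disciplines. Here are some suggestions to help you study them more effectively. Foundations: Mathematics: study linear algebra, probability theory, statistics, and calculus, which form the mathematical basis for understanding machine learning algorithms. Programming skills: master at least one programming language, such as Python, since most AI models are implemented in Python. Theory: Machine learning basics: understand fundamental concepts such as supervised learning, unsupervised learning, and reinforcement learning. Deep learning: learn the basic structures of neural networks, such as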

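- Projection Queries in HQL

归来朝歌

HQL, Hibernate, query statements, projection queries

In HQL queries you often face this situation: for a multi-table query, should you retrieve a whole object from one table, or only a few fields from each table and display them together?

For the scenario above, if you need to retrieve a whole object:

Simply write the HQL statement as "from <entity>".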

Session session = HibernateUtil.openSession();

- Integrating Redis with Spring

bylijinnan

redis

pom.xml

<dependencies>

<!-- Spring Data - Redis Library -->

<dependency>

<groupId>org.springframework.data</groupId>

<artifactId>spring-data-redi

- org.hibernate.NonUniqueResultException: query did not return a unique result: 2

0624chenhong

Hibernate

Reference: http://blog.csdn.net/qingfeilee/article/details/7052736

org.hibernate.NonUniqueResultException: query did not return a unique result: 2

The project threw org.hiber

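- Android Animation Effects

不懂事的小屁孩

android, animation

A few days ago, while working with AlertDialog and PopupWindow, I used Android's animation effects. Today I looked into them specifically and am listing them here for future reference.

The Android platform provides two kinds of animation. The first is tween animation, which produces an animated effect by continuously transforming the objects in a scene (rotation, translation, scaling, and fading).

The second is frame animation, which plays back prepared images in sequence, much like an animated GIF.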

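- How delete Works in JS and How to Solve Its Memory Leak Problem

换个号韩国红果果

JavaScript

When delete removes a property, it only breaks the binding between the property and the object. So if the property's value is an object that is still referenced elsewhere, deleting the property does not free that object, which can lead to a memory leak (the object has not actually been deleted).

Example: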

var person={name:{firstname:'bob'}}

var p=person.name

delete person.name

p.firstname -->'bob'

// p.firstname is still accessible: the object has not been freed because p still references it (a potential memory leak)

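- Oracle Adds Zero-Touch Analytics to Its Network-as-a-Service Plans

蓝儿唯美

oracle

A demonstration project led by Oracle's communications technology division did not win an award at the TM Forum industry group's conference held earlier this month in Nice, France. Nonetheless, Oracle Communications officials are committed to building a network-as-a-service (NaaS) platform that supports zero-touch provisioning and orchestration, helping enterprises consume communications service providers' (CSPs') connectivity products in a more flexible, cloud-friendly way. The Oracle-led project was one of 19 projects in the Catalyst program showcased at the TM Forum Live! event. The Catalyst pro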

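- Learning Spring: Spring MVC (Part 2)

a-john

springMVC

Spring MVC provides very convenient support for file uploads.

1. Configuring Spring to support file uploads:

DispatcherServlet itself does not know how to handle multipart form data; it needs a multipart resolver to extract the multipart data from the POST request so that DispatcherServlet can pass it on to our controller. To register a multipart resolver in Spring, we need to declare one that implements Mul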

- POJ-2828-Buy Tickets

aijuans

ACM_POJ

POJ-2828-Buy Tickets

http://poj.org/problem?id=2828

Segment tree, inserting in reverse order

#include<iostream>
#include<cstdio>
#include<cstring>
#include<cstdlib>
using namespace std;
#define N 200010
struct
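The original C++ solution is truncated, so here is a minimal Python sketch of the "segment tree, reverse insertion" idea, under the assumption that the problem is the usual queue-jumping one: person i arrives with a value and a position `pos` meaning "stand right after the first `pos` people". Processed in reverse, the last arrival's position is final, so each person takes the (pos + 1)-th still-empty slot, and a segment tree over empty-slot counts finds that slot in O(log n). The function name and input format are illustrative.

```python
def buy_tickets(people):
    """people: list of (pos, val) in arrival order. Returns the final queue of vals."""
    n = len(people)
    size = 1
    while size < n:
        size *= 2

    # tree[node] = number of still-empty slots in the node's range.
    tree = [0] * (2 * size)
    for i in range(n):
        tree[size + i] = 1
    for node in range(size - 1, 0, -1):
        tree[node] = tree[2 * node] + tree[2 * node + 1]

    queue = [None] * n
    for pos, val in reversed(people):
        k = pos + 1  # this person takes the k-th empty slot from the left
        node = 1
        while node < size:
            tree[node] -= 1  # one empty slot below this node is about to be filled
            left = 2 * node
            if tree[left] >= k:
                node = left
            else:
                k -= tree[left]
                node = left + 1
        tree[node] -= 1
        queue[node - size] = val
    return queue


print(buy_tickets([(0, 77), (1, 51), (1, 33), (2, 69)]))
```

Simulating the insertions forward with a plain list costs O(n) per insertion because later arrivals shift earlier ones; working backward avoids the shifting entirely.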

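- Java Ant build.xml Explained

asia007

build.xml

1. What is Ant? Ant is a build tool. 2. What is a build? The concept is explained everywhere; put concretely, you take the code from somewhere, compile it, copy it somewhere else, and so on. There is more to it than that, of course, but this is mainly what it is used for. 3. Advantages of Ant: Cross-platform: Ant is implemented in Java, so it runs across platforms. Simple to use: compared with its sibling make. Clear syntax: again, compared with make. Powerful: Ant can do a great many things; you may have used it for a long time and still not know how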

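- Four Techniques for Button Listeners in Android

百合不是茶

android, xml configuration, listeners, implementing interfaces

Android development makes frequent use of all kinds of listeners, and the way listeners are written in Android differs in places from Java;

1. In the activity, implement the interface with an inner class, create an instance of the inner class, and attach it with an add method, much as in Java

Create the listener instance

myLis lis = new myLis();

Use the add method to attach the listener to the button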

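- A Software Architect Is Not the Same as a Senior Programmer

bijian1013

programmers, architects, architecture design

The author of this article, Armel Nene, is chief architect at ETAPIX Global. He lives in London and has contributed to open-source projects including Apache Lucene, Apache Nutch, Liferay, and Pentaho.

Today, many companies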

- TeamForge Wiki Syntax & CollabNet User Information Center

sunjing

TeamForge, How do, Attachement, Anchor, Wiki Syntax

the CollabNet user information center http://help.collab.net/

How do I create a new Wiki page?

A CollabNet TeamForge project can have any number of Wiki pages. All Wiki pages are linked, and

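- [Redis, Part 4] Redis Data Types

bit1129

redis

Overview

Redis is a high-performance data structure server. It is called a data structure server because it provides a rich set of data types to suit different application scenarios. This article summarizes Redis's data types and the operations available on them.

Commonly used Redis data types include string, set, list, hash, and sorted set. Redis itself is a key/value system; the data types here refer to the type of the value, not the key. Keys have only one type: string.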

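- SSH2 Integration, with Source Code

白糖_

eclipse, spring, tomcat, Hibernate, Google

Today I finally got the struts2 + hibernate + spring stack integrated in Eclipse.

I created a Tomcat project, which requires the Tomcat plugin. After importing the project, right-click it and choose Properties, find the "tomcat" entry, and check "Is a tomcat project". For the exact steps, see the jsp images in the source code; the SQL is in the source code as well.

Addendum 1: Some of the JAR files in the project are not the latest versions, which may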

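- [Repost] How to Study Open-Source Project Code

braveCS

study methods

Reposted from:

http://blog.sina.com.cn/s/blog_693458530100lk5m.html

http://www.cnblogs.com/west-link/archive/2011/06/07/2074466.html

1) Read the features, to find out what capabilities the project has. 2) Think. Consider how you would architect a project with these features yourself. 3) Download and install d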

- Beauty of Programming: Maximum Subarray Sum (2D)

bylijinnan

Beauty of Programming

package beautyOfCoding;

import java.util.Arrays;

import java.util.Random;

public class MaxSubArraySum2 {

/**

* Beauty of Programming: maximum sum of a subarray (2D)

*/

private static final int ROW = 5;

private stat

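- Reading Notes 3

chengxuyuancsdn

jquery notes, resultMap configuration, ibatis one-to-many configuration

1. resultMap configuration

2. ibatis one-to-many configuration

3. jquery notes

1. resultMap configuration

The resultMap value in <select resultMap="topic_data">

and the id in <resultMap id="topic_data"> must match exactly.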

(1)<resultMap class="tblTopic&q

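- [Physics and Astronomy] New Advances in Physics

comsci

Suppose we must obtain a certain ore that does not exist on Earth in order to design and build certain energy-output devices; to obtain this ore we must first carry out deep-space exploration; and to carry out deep-space exploration we must first have such an energy-output device. This circular dependency would leave the Earth Alliance in a difficult position when establishing relations with civilizations elsewhere in the universe.

What can be done?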

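- New in Oracle 11g: Automatic Diagnostic Repository

daizj

oracle, ADR

The FDI (Fault Diagnosability Infrastructure) in Oracle Database 11g is a further enhancement in automated diagnostics.

A key component of FDI is the Automatic Diagnostic Repository (ADR).

In Oracle 11g, the alert log is stored in XML format, and a plain-text version of the alert file is provided as well.

These two log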

- Simple Sorting: Selection Sort

dieslrae

selection sort

public void selectSort(int[] array){

int select;

for(int i=0;i<array.length;i++){

select = i;

for(int k=i+1;k<array.leng
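The Java method above is cut off inside its inner loop. As a sketch of how selection sort typically continues, here is a Python version that reuses the names `array`, `select`, `i`, and `k` from the fragment. The ascending order and the swap at the end of each pass are assumptions, since the original comparison is not visible.

```python
def select_sort(array):
    """Sort the list in place in ascending order and return it."""
    n = len(array)
    for i in range(n - 1):
        select = i  # index of the smallest element seen in array[i:]
        for k in range(i + 1, n):
            if array[k] < array[select]:
                select = k
        if select != i:
            # Move the smallest remaining element to the front of the unsorted part.
            array[i], array[select] = array[select], array[i]
    return array


print(select_sort([5, 2, 9, 1, 5, 6]))
```

Each pass performs at most one swap, which is the main practical difference from bubble sort; the number of comparisons is still quadratic.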

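- Learning C, Part 6: The Classic Pointer Program, Swapping Two Numbers

dcj3sjt126com

c

Sample program: swap_1 and swap_2 are both wrong. The reasoning goes from 1 to 2, which still does not work, and then on to 3, which does.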

# include <stdio.h>

void swap_1(int, int);

void swap_2(int *, int *);

void swap_3(int *, int *);

int main(void)

{

int a = 3;

int b =

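- Commands for Restarting and Stopping php-fpm in PHP 5.4

dcj3sjt126com

PHP

Commands for restarting and stopping php-fpm in PHP 5.4:

Find where php is installed: which php (output: /usr/bin/php)

Count php-fpm processes: ps aux | grep -c php-fpm

Check memory settings: /usr/bin/php -i|grep mem

Restart php-fpm: /etc/init.d/php-fpm restart

In the phpinfo() output you can see php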

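- Thread Synchronization Utility Classes

shuizhaosi888

synchronization utility classes

Synchronization utility classes include semaphores (Semaphore), barriers (barrier), and latches (CountDownLatch)

Latch (CountDownLatch)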

public class RunMain {

public long timeTasks(int nThreads, final Runnable task) throws InterruptedException {

fin

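- What Does bleeding edge Mean?

haojinghua

DI

More than once I have seen this term in technical articles. Today I finally figured it out: using browsing software a friend gave me, I got onto the wiki.

Once again I feel that no dictionary explains things as considerately and clearly as the wiki does. To express my fondness for it, I can only lay the entry out here in Chinese and English side by side and give everyone a lesson.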

In computer science, bleeding edge is a term that

- Converting Between UTF-8 and GBK in C

jimmee

c, iconv, utf8 & gbk, encoding

#include <iconv.h>

#include <stdlib.h>

#include <stdio.h>

#include <unistd.h>

#include <fcntl.h>

#include <string.h>

#include <sys/stat.h>

int code_c

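- Architecture Design and Practice for Large-Scale Distributed Websites

lilin530

application servers, search engines

1. What are the characteristics of large-scale website software systems?

a. High concurrency and heavy traffic.

b. High availability.

c. Massive data volumes.

d. Widely distributed users and complex network conditions.

e. A hostile security environment.

f. Rapidly changing requirements and frequent releases.

g. Incremental growth.

2. How has large-scale website architecture evolved?

a. The initial website architecture.

The application, database, files, and all other resources sit on a single server.

b. Separating the application server from the data server.

c. Using caches to improve site performance.

d. Using application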

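- Reading attr Values from an Android Theme in Code

OliveExcel

android, theme

An Android theme is composed of various attrs. Each attr holds a reference for that attribute, and that reference can in turn point to all sorts of things.

In some cases we need to read the value of an attribute from a non-custom theme (for example, the system's default accent color, colorAccent). Here is one example of how to do it: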

int defaultColor = 0xFF000000;

int[] attrsArray = { android.R.

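- Distributed Shared Locks Based on ZooKeeper

roadrunners

zookeeper, distributed shared locks

First, our scenario: the order service is deployed as a cluster. When two or more nodes receive the creation command for the same order at the same time, a concurrency conflict arises and the system creates duplicate orders. And there are other scenarios like this. This is where the distributed shared lock comes in.

A shared lock is easy to implement within a single process, but hard to implement across processes or across servers. With ZooKeeper it becomes easy. The implementation principle is covered on the official site and translated on other sites, so I won't repeat it here.

Official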

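- Two Easily Overlooked Facts About MySQL

tomcat_oracle

mysql

1. How many Chinese characters, and how many letters or digits, can varchar(5) store? I suspect many people are like me: we are so familiar with this that we make quick decisions from experience, such as using varchar(200) to store URLs. But even if you have used it many times and know it well, you may still answer the question above incorrectly. I looked through a lot of material on this. Some say it can store 5 characters, or 2.5 Chinese characters (if each Chinese character takes two bytes); others say it depends on the version: 5.0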

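- zoj 3827 Information Entropy (Easy Problem)

阿尔萨斯

format

Problem link: zoj 3827 Information Entropy

Problem summary: compute the sum, with three possible logarithm bases.

Approach: calling the library functions is enough to compute it directly, but watch out for the case Pi = 0, although the problem statement actually mentions it too: $\lim_{p \to 0^+} p \log_b(p) = 0$, because p tends to 0 faster than log p diverges.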

#include <cstdio>

#include <cstring>

#include <cmath>
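The C++ solution is truncated after its includes, so the sketch below shows the calculation the notes describe: sum -p * log_b(p) over the distribution, skipping p = 0 terms because p * log_b(p) tends to 0 there. It assumes the input is a list of probabilities summing to 1 and that the three bases are 2, e, and 10 under the unit names "bit", "nat", and "dit"; both the input format and the unit names are assumptions, since the teaser does not show them.

```python
import math

# Assumed mapping from unit name to logarithm base.
BASES = {"bit": 2.0, "nat": math.e, "dit": 10.0}


def entropy(probabilities, unit="bit"):
    """Shannon entropy H = -sum(p * log_b(p)), treating 0 * log(0) as 0."""
    log_base = math.log(BASES[unit])
    total = 0.0
    for p in probabilities:
        if p > 0:  # p = 0 contributes nothing in the limit, and log(0) is undefined
            total -= p * math.log(p) / log_base
    return total


print(entropy([0.25, 0.25, 0.25, 0.25], "bit"))
print(entropy([0.5, 0.5, 0.0], "nat"))
```

Without the `p > 0` guard, `math.log(0)` raises a `ValueError`, which is the pitfall the notes warn about.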