PyTorch Learning (25): Implementing Multi-GPU Training from Scratch

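Contents

- 1. Data Parallelism

- 2. Implementing Multi-GPU Training from Scratch

  - 2.1 Modifying the LeNet Network

  - 2.2 Data Synchronization

  - 2.3 Data Distribution

  - 2.4 Training

- 3. Concise Implementation of Multi-GPU Training

- 4. Summary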

Based on Mu Li's Dive into Deep Learning, 2nd edition (D2L v2). I strongly recommend reading the book:

[1] https://zh-v2.d2l.ai/chapter_computational-performance/multiple-gpus.html

[2] https://zh-v2.d2l.ai/chapter_computational-performance/multiple-gpus-concise.html

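[3] https://blog.csdn.net/jerry_liufeng/article/details/119808916?spm=1001.2014.3001.5501: my own notes on CPU and GPU fundamentals. They are followed by the basics of single-machine multi-GPU parallelism, covering both data parallelism and model parallelism.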

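As long as each GPU has enough memory to hold a full copy of the model, data parallelism is the most convenient approach.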

1. Data Parallelism

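The idea is to split each minibatch across the GPUs (two in the running example) and compute minibatch stochastic gradient descent in parallel.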

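Training with k GPUs in parallel proceeds as follows:

- In each iteration, split the random minibatch into k parts and distribute them evenly across the GPUs.

- Each GPU computes the loss and the gradients of the model parameters on its own subset of the minibatch.

- The local gradients from the k GPUs are aggregated to obtain the stochastic gradient for the whole minibatch.

- The aggregated gradient is redistributed to every GPU.

- Each GPU uses this minibatch stochastic gradient to update the complete set of model parameters that it maintains.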
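These steps rely on one fact. When the loss is a sum over examples, adding up the per-shard gradients gives exactly the full-minibatch gradient.

Below is a minimal CPU-only sketch that checks this for a toy least-squares model. The model, the data, the shard count `k = 2`, and all names are illustrative assumptions. Each "GPU" is simulated by its own copy of the parameters.

```python
import torch

torch.manual_seed(0)
X = torch.randn(8, 3, dtype=torch.float64)  # minibatch: 8 examples, 3 features
y = torch.randn(8, dtype=torch.float64)
w = torch.randn(3, dtype=torch.float64)     # shared model parameters

def loss_fn(w, X, y):
    # Summed (not averaged) squared error, so per-example gradients simply add up
    return ((X @ w - y) ** 2).sum()

# Gradient of the whole minibatch, as a single device would compute it
w_full = w.clone().requires_grad_()
full_grad = torch.autograd.grad(loss_fn(w_full, X, y), w_full)[0]

# Split the minibatch into k shards; each simulated GPU holds its own replica of w
k = 2
shard_grads = []
for X_shard, y_shard in zip(X.chunk(k), y.chunk(k)):
    w_replica = w.clone().requires_grad_()
    shard_loss = loss_fn(w_replica, X_shard, y_shard)
    shard_grads.append(torch.autograd.grad(shard_loss, w_replica)[0])

# Aggregate the local gradients (the allreduce step)
aggregated = torch.stack(shard_grads).sum(dim=0)
print(torch.allclose(aggregated, full_grad))  # True
```

Because the loss is summed, the update step later divides by the full batch size rather than by the shard size.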

2. Implementing Multi-GPU Training from Scratch

```python
%matplotlib inline
import torch
from torch import nn
from torch.nn import functional as F
from d2l import torch as d2l
```

2.1 Modifying the LeNet Network

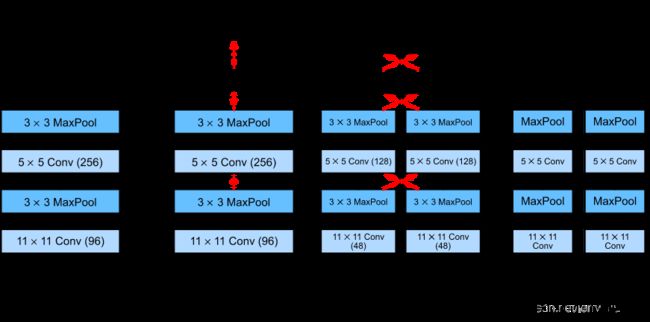

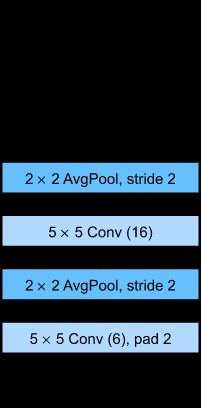

A simplified version of LeNet

```python
# Initialize the model parameters
scale = 0.01
W1 = torch.randn(size=(20, 1, 3, 3)) * scale
b1 = torch.zeros(20)
W2 = torch.randn(size=(50, 20, 5, 5)) * scale
b2 = torch.zeros(50)
W3 = torch.randn(size=(800, 128)) * scale
b3 = torch.zeros(128)
W4 = torch.randn(size=(128, 10)) * scale
b4 = torch.zeros(10)
params = [W1, b1, W2, b2, W3, b3, W4, b4]

# Define the model
def lenet(X, params):
    h1_conv = F.conv2d(input=X, weight=params[0], bias=params[1])
    h1_activation = F.relu(h1_conv)
    h1 = F.avg_pool2d(input=h1_activation, kernel_size=(2, 2), stride=(2, 2))
    h2_conv = F.conv2d(input=h1, weight=params[2], bias=params[3])
    h2_activation = F.relu(h2_conv)
    h2 = F.avg_pool2d(input=h2_activation, kernel_size=(2, 2), stride=(2, 2))
    h2 = h2.reshape(h2.shape[0], -1)  # flatten to (batch_size, 800)
    h3_linear = torch.mm(h2, params[4]) + params[5]
    h3 = F.relu(h3_linear)
    y_hat = torch.mm(h3, params[6]) + params[7]
    return y_hat

# Cross-entropy loss; reduction='none' keeps per-example losses so each GPU can sum its own
loss = nn.CrossEntropyLoss(reduction='none')
```
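Where does the 800 in `W3` come from? For a 28x28 Fashion-MNIST image (the dataset used later in this post), the sizes flow as shown below.

This CPU-only sketch re-creates the same parameter shapes and feeds in a batch of two random dummy images, purely to print the intermediate shapes. The dummy input is an assumption for illustration.

```python
import torch
from torch.nn import functional as F

scale = 0.01
params = [torch.randn(20, 1, 3, 3) * scale, torch.zeros(20),
          torch.randn(50, 20, 5, 5) * scale, torch.zeros(50),
          torch.randn(800, 128) * scale, torch.zeros(128),
          torch.randn(128, 10) * scale, torch.zeros(10)]

X = torch.randn(2, 1, 28, 28)  # dummy batch: 2 grayscale 28x28 images

# 3x3 conv: 28 -> 26, then 2x2 average pooling: 26 -> 13
h1 = F.avg_pool2d(F.relu(F.conv2d(X, params[0], params[1])), kernel_size=2, stride=2)
# 5x5 conv: 13 -> 9, then 2x2 average pooling: 9 -> 4 (rounded down)
h2 = F.avg_pool2d(F.relu(F.conv2d(h1, params[2], params[3])), kernel_size=2, stride=2)
flat = h2.reshape(h2.shape[0], -1)  # 50 channels * 4 * 4 = 800 features
y_hat = torch.mm(F.relu(torch.mm(flat, params[4]) + params[5]), params[6]) + params[7]

print(h1.shape)     # torch.Size([2, 20, 13, 13])
print(h2.shape)     # torch.Size([2, 50, 4, 4])
print(flat.shape)   # torch.Size([2, 800])
print(y_hat.shape)  # torch.Size([2, 10])
```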

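2.2 Data Synchronization

`get_params` copies the parameters to a given GPU and enables gradient tracking on the copy, so that each GPU trains its own replica.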

```python
def get_params(params, device):
    new_params = [p.clone().to(device) for p in params]
    for p in new_params:
        p.requires_grad_()  # enable gradient computation for this copy
    return new_params

new_params = get_params(params, d2l.try_gpu(0))
print('b1 weight', new_params[1])
print('b1 grad', new_params[1].grad)
```

```
b1 weight tensor([0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0.],
       device='cuda:0', requires_grad=True)
b1 grad None
```
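Because `get_params` uses `clone()`, each device gets its own independent copy of the parameters. Updating one replica does not touch the others or the original `params`.

The CPU-only sketch below demonstrates this property. It assumes two simulated devices that are both `torch.device('cpu')`, so it runs without a GPU.

```python
import torch

def get_params(params, device):
    # Copy every parameter to `device` and turn on gradient tracking for the copy
    new_params = [p.clone().to(device) for p in params]
    for p in new_params:
        p.requires_grad_()
    return new_params

params = [torch.zeros(3), torch.ones(2)]
devices = [torch.device('cpu'), torch.device('cpu')]  # stand-ins for two GPUs
replicas = [get_params(params, d) for d in devices]

with torch.no_grad():
    replicas[0][0] += 1.0  # change only the first replica

print(replicas[0][0].detach().tolist())  # [1.0, 1.0, 1.0]
print(replicas[1][0].detach().tolist())  # [0.0, 0.0, 0.0]
print(params[0].tolist())                # [0.0, 0.0, 0.0]
print(replicas[1][0].grad)               # None: no backward pass has run yet
```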

`allreduce` adds up the tensors from all GPUs and broadcasts the sum back to every GPU.

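```python
def allreduce(data):
    # Sum every tensor into data[0], moving each one to data[0]'s device first
    for i in range(1, len(data)):
        data[0][:] += data[i].to(data[0].device)
    # Broadcast: copy the sum back in place into each tensor on its own device
    for i in range(1, len(data)):
        data[i][:] = data[0].to(data[i].device)

data = [torch.ones((1, 2), device=d2l.try_gpu(i)) * (i + 1) for i in range(2)]
print('before allreduce:\n', data[0], '\n', data[1])
allreduce(data)
print('after allreduce:\n', data[0], '\n', data[1])
```

I only have one GPU, so `d2l.try_gpu(1)` falls back to the CPU. That is why the second tensor shows no device:

```
before allreduce:
 tensor([[1., 1.]], device='cuda:0')
 tensor([[2., 2.]])
after allreduce:
 tensor([[3., 3.]], device='cuda:0')
 tensor([[3., 3.]])
```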
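Why write `data[i][:] = ...` rather than `data[i] = ...`? In `train_batch`, the list passed to `allreduce` is built on the fly from `.grad` tensors. Rebinding a list slot would only change that temporary list and leave the parameters' real gradients untouched. The in-place slice assignment writes into the existing tensors instead.

The CPU-only sketch below contrasts the two variants. The two `grads` tensors stand in for gradients living on two GPUs, and the `.to(device)` calls are dropped because everything is on the CPU.

```python
import torch

def allreduce_rebind(data):
    # Problematic variant: rebinding list slots does not modify the original tensors
    for i in range(1, len(data)):
        data[0][:] += data[i]
    for i in range(1, len(data)):
        data[i] = data[0].clone()

def allreduce_inplace(data):
    # Slice assignment writes the sum into every existing tensor
    for i in range(1, len(data)):
        data[0][:] += data[i]
    for i in range(1, len(data)):
        data[i][:] = data[0]

g_rebind = [torch.ones(2) * 1, torch.ones(2) * 2]
allreduce_rebind(list(g_rebind))  # pass a fresh list, as train_batch does
print(g_rebind[0].tolist(), g_rebind[1].tolist())    # [3.0, 3.0] [2.0, 2.0]

g_inplace = [torch.ones(2) * 1, torch.ones(2) * 2]
allreduce_inplace(list(g_inplace))
print(g_inplace[0].tolist(), g_inplace[1].tolist())  # [3.0, 3.0] [3.0, 3.0]
```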

`nn.parallel.scatter` splits a tensor along its first dimension and sends one piece to each device:

```python
data = torch.arange(20).reshape(4, 5)
# I only have one GPU, so I list cuda:0 twice to stand in for two devices
devices = [torch.device('cuda:0'), torch.device('cuda:0')]
split = nn.parallel.scatter(data, devices)
print('input:', data)
print('load into', devices)
print('output:', split)
```

```
input: tensor([[ 0,  1,  2,  3,  4],
        [ 5,  6,  7,  8,  9],
        [10, 11, 12, 13, 14],
        [15, 16, 17, 18, 19]])
load into [device(type='cuda', index=0), device(type='cuda', index=0)]
output: (tensor([[0, 1, 2, 3, 4],
        [5, 6, 7, 8, 9]], device='cuda:0'), tensor([[10, 11, 12, 13, 14],
        [15, 16, 17, 18, 19]], device='cuda:0'))
```
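For an evenly divisible batch like this one, the result above amounts to cutting the tensor into equal chunks along dimension 0 and moving each chunk to its device.

The helper `scatter_like` below is a hypothetical CPU-only stand-in written for illustration. It is not PyTorch's implementation of `nn.parallel.scatter`.

```python
import torch

def scatter_like(tensor, devices):
    # Hypothetical helper: split along the batch dimension, one chunk per device
    chunks = torch.chunk(tensor, len(devices), dim=0)
    return tuple(chunk.to(device) for chunk, device in zip(chunks, devices))

data = torch.arange(20).reshape(4, 5)
devices = [torch.device('cpu'), torch.device('cpu')]  # stand-ins for two GPUs
split = scatter_like(data, devices)
for part in split:
    print(part.tolist())
# [[0, 1, 2, 3, 4], [5, 6, 7, 8, 9]]
# [[10, 11, 12, 13, 14], [15, 16, 17, 18, 19]]
```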

2.3 Data Distribution

`split_batch` splits both the inputs `X` and the labels `y` of a minibatch across multiple devices.

```python
def split_batch(X, y, devices):
    """Split X and y across multiple devices."""
    assert X.shape[0] == y.shape[0]
    return (nn.parallel.scatter(X, devices),
            nn.parallel.scatter(y, devices))
```
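Because `X` and `y` are scattered over the same devices, the i-th shard of the labels still matches the i-th shard of the images.

The CPU-only sketch below checks this with `split_batch_cpu`, a hypothetical stand-in for `split_batch` built on `torch.chunk`. The batch of 96 examples mirrors the size of the last Fashion-MNIST training batch when `batch_size=256`: 60000 = 234 * 256 + 96.

```python
import torch

def split_batch_cpu(X, y, num_devices):
    # Hypothetical CPU stand-in for split_batch: chunk inputs and labels the same way
    assert X.shape[0] == y.shape[0]
    return X.chunk(num_devices, dim=0), y.chunk(num_devices, dim=0)

X = torch.arange(96).reshape(96, 1).float()  # feature = example index
y = torch.arange(96)                         # label = example index
X_shards, y_shards = split_batch_cpu(X, y, num_devices=2)

for X_s, y_s in zip(X_shards, y_shards):
    # Each shard holds 48 examples, and every row still lines up with its label
    print(X_s.shape[0], bool((X_s[:, 0].long() == y_s).all()))
# 48 True
# 48 True
```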

2.4 Training

Multi-GPU training on a single minibatch:

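```python
def train_batch(X, y, device_params, devices, lr):
    # Scatter the minibatch across the GPUs; each GPU computes the summed loss on its shard
    X_shards, y_shards = split_batch(X, y, devices)
    ls = [loss(lenet(X_shard, devices_W), y_shard).sum()
          for X_shard, y_shard, devices_W in zip(X_shards, y_shards, device_params)]
    # Backpropagate on each GPU separately
    for l in ls:
        l.backward()
    # Add up the gradients from all GPUs to get the gradient of the whole minibatch,
    # then broadcast the result back to every GPU
    with torch.no_grad():
        for i in range(len(device_params[0])):
            allreduce([device_params[c][i].grad for c in range(len(devices))])
    # Each GPU updates its own copy of the parameters; divide by the full batch size
    for param in device_params:
        d2l.sgd(param, lr, X.shape[0])
```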
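A quick sanity check of the whole step: with summed per-shard losses, summed gradients, and an update divided by the full batch size, every replica should end up with the same parameters that a single device would get from one ordinary SGD step on the whole minibatch.

The sketch below runs on the CPU with two simulated devices. `linreg`, `sgd`, and the toy data are assumptions standing in for `lenet` and `d2l.sgd`. The `sgd` here follows the same update rule as `d2l.sgd`: subtract `lr * grad / batch_size`, then zero the gradient.

```python
import torch

torch.manual_seed(0)

def linreg(X, params):
    # Toy stand-in for lenet: a linear model with weight params[0] and bias params[1]
    return X @ params[0] + params[1]

def sgd(params, lr, batch_size):
    # Same rule as d2l.sgd: divide the summed gradient by the batch size
    with torch.no_grad():
        for p in params:
            p -= lr * p.grad / batch_size
            p.grad.zero_()

def allreduce(data):
    for i in range(1, len(data)):
        data[0][:] += data[i]
    for i in range(1, len(data)):
        data[i][:] = data[0]

X = torch.randn(8, 3, dtype=torch.float64)
y = torch.randn(8, dtype=torch.float64)
init = [torch.randn(3, dtype=torch.float64), torch.zeros(1, dtype=torch.float64)]
lr = 0.1

# Reference: one SGD step on the full minibatch with a single parameter copy
single = [p.clone().requires_grad_() for p in init]
((linreg(X, single) - y) ** 2).sum().backward()
sgd(single, lr, X.shape[0])

# Data parallel: two replicas, each sees half of the minibatch
replicas = [[p.clone().requires_grad_() for p in init] for _ in range(2)]
for X_s, y_s, rep in zip(X.chunk(2), y.chunk(2), replicas):
    ((linreg(X_s, rep) - y_s) ** 2).sum().backward()
with torch.no_grad():
    for i in range(len(init)):
        allreduce([rep[i].grad for rep in replicas])
for rep in replicas:
    sgd(rep, lr, X.shape[0])

for rep in replicas:
    # Prints True for each replica: it matches the single-device update
    print(all(torch.allclose(a.detach(), b.detach()) for a, b in zip(rep, single)))
```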

def train(num_gpus, batch_size, lr):

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

devices = [d2l.try_gpu(i) for i in range(num_gpus)]

# 将模型参数复制到`num_gpus`个GPU

device_params = [get_params(params, d) for d in devices]

num_epochs = 10

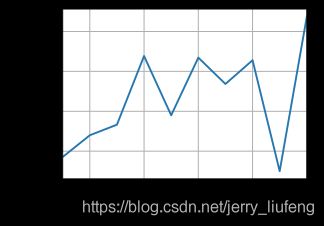

animator = d2l.Animator('epoch', 'test acc', xlim=[1, num_epochs])

timer = d2l.Timer()

for epoch in range(num_epochs):

timer.start()

for X, y in train_iter:

# 为单个小批量执行多GPU训练

train_batch(X, y, device_params, devices, lr)

torch.cuda.synchronize()

timer.stop()

# 在GPU 0上评估模型

animator.add(epoch + 1, (d2l.evaluate_accuracy_gpu(

lambda x: lenet(x, device_params[0]), test_iter, devices[0]),))

print(f'test acc: {animator.Y[0][-1]:.2f}, {timer.avg():.1f} sec/epoch '

f'on {str(devices)}')

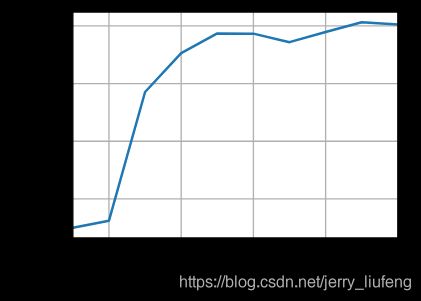

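```python
train(num_gpus=1, batch_size=256, lr=0.2)
```

```
test acc: 0.82, 2.9 sec/epoch on [device(type='cuda', index=0)]
```

Single-GPU performance

```python
train(num_gpus=2, batch_size=256, lr=0.2)
```

```
test acc: 0.80, 3.0 sec/epoch on [device(type='cuda', index=0), device(type='cuda', index=1)]
```

Dual-GPU performance. The time per epoch barely changes (3.0 vs. 2.9 sec). LeNet is small enough that the cost of splitting the data and synchronizing gradients likely offsets the extra compute.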

3. Concise Implementation of Multi-GPU Training

```python
import torch
from torch import nn
from d2l import torch as d2l
```

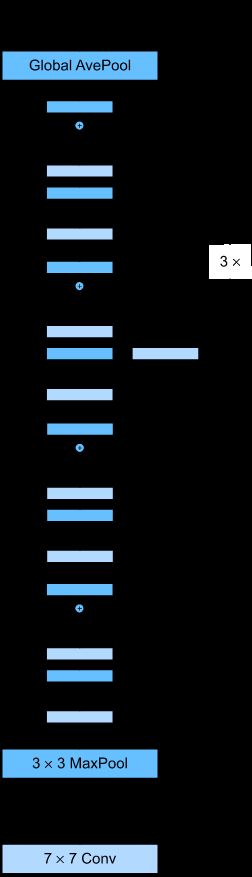

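We define a ResNet-18 network. Compared with the standard ResNet-18, its first layer uses a smaller convolution kernel, stride, and padding, and the max-pooling layer is removed.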

ResNet-18

```python
#@save
def resnet18(num_classes, in_channels=1):
    """A slightly modified ResNet-18 model."""
    def resnet_block(in_channels, out_channels, num_residuals,
                     first_block=False):
        blk = []
        for i in range(num_residuals):
            if i == 0 and not first_block:
                # d2l.Residual as defined in the D2L v2 (zh-v2) ResNet chapter
                blk.append(d2l.Residual(in_channels, out_channels,
                                        use_1x1conv=True, strides=2))
            else:
                blk.append(d2l.Residual(out_channels, out_channels))
        return nn.Sequential(*blk)

    # This model uses a smaller convolution kernel, stride, and padding,
    # and removes the max-pooling layer
    net = nn.Sequential(
        nn.Conv2d(in_channels, 64, kernel_size=3, stride=1, padding=1),
        nn.BatchNorm2d(64),
        nn.ReLU())
    net.add_module("resnet_block1", resnet_block(64, 64, 2, first_block=True))
    net.add_module("resnet_block2", resnet_block(64, 128, 2))
    net.add_module("resnet_block3", resnet_block(128, 256, 2))
    net.add_module("resnet_block4", resnet_block(256, 512, 2))
    net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))
    net.add_module("fc", nn.Sequential(nn.Flatten(),
                                       nn.Linear(512, num_classes)))
    return net

net = resnet18(10)
```
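One way to see why the first layer was changed is to track the spatial size of a 28x28 Fashion-MNIST image through the network. The usual conv/pool output formula is floor((n + 2p - k) / s) + 1.

The sketch below does this arithmetic in plain Python. The "standard" configuration is the original ImageNet ResNet-18 stem: a 7x7 convolution with stride 2 and padding 3, followed by 3x3 max pooling with stride 2 and padding 1. It is included only for comparison. That small inputs motivated the change is an interpretation, not something the source states.

```python
def conv_out(n, k, s, p):
    # Output side length of a conv/pool layer: floor((n + 2p - k) / s) + 1
    return (n + 2 * p - k) // s + 1

def trace(n, stem):
    sizes = [n]
    for k, s, p in stem:
        n = conv_out(n, k, s, p)
        sizes.append(n)
    # resnet_block1 keeps the size; blocks 2-4 each start with a 3x3, stride-2, padding-1 conv
    for _ in range(3):
        n = conv_out(n, 3, 2, 1)
        sizes.append(n)
    return sizes

modified = trace(28, [(3, 1, 1)])             # 3x3 conv, stride 1, padding 1, no max pooling
standard = trace(28, [(7, 2, 3), (3, 2, 1)])  # 7x7 stride-2 conv + 3x3 stride-2 max pooling
print(modified)  # [28, 28, 14, 7, 4]
print(standard)  # [28, 14, 7, 4, 2, 1]
```

With the standard stem, a 28x28 image shrinks to 1x1 before global average pooling. The modified stem keeps a 4x4 feature map.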

```python
# Get the list of available GPUs
devices = d2l.try_all_gpus()

# We initialize the network inside the training code
def train(net, num_gpus, batch_size, lr):
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
    devices = [d2l.try_gpu(i) for i in range(num_gpus)]
    def init_weights(m):
        if type(m) in [nn.Linear, nn.Conv2d]:
            nn.init.normal_(m.weight, std=0.01)
    net.apply(init_weights)
    # Set up the model on multiple GPUs; DataParallel expects the parameters on devices[0]
    net = net.to(devices[0])
    net = nn.DataParallel(net, device_ids=devices)  # tell DataParallel which GPUs to use
    trainer = torch.optim.SGD(net.parameters(), lr)
    loss = nn.CrossEntropyLoss()
    timer, num_epochs = d2l.Timer(), 10
    animator = d2l.Animator('epoch', 'test acc', xlim=[1, num_epochs])
    for epoch in range(num_epochs):
        net.train()
        timer.start()
        for X, y in train_iter:
            trainer.zero_grad()
            X, y = X.to(devices[0]), y.to(devices[0])
            l = loss(net(X), y)
            l.backward()
            trainer.step()
        timer.stop()
        animator.add(epoch + 1, (d2l.evaluate_accuracy_gpu(net, test_iter),))
    print(f'test acc: {animator.Y[0][-1]:.2f}, {timer.avg():.1f} sec/epoch '
          f'on {str(devices)}')
```

```python
train(net, num_gpus=1, batch_size=256, lr=0.1)
```

```
test acc: 0.93, 76.9 sec/epoch on [device(type='cuda', index=0)]
```

Single-GPU performance (concise PyTorch implementation)

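In short, the concise PyTorch implementation adds a single line to the training code: `net = nn.DataParallel(net, device_ids=devices)`. This line tells PyTorch which GPUs to run the network on.

`nn.DataParallel` then takes care of what we implemented by hand in Section 2. On each forward pass, it:

- splits the input batch across the listed GPUs;
- copies the model to each of them;
- runs the copies in parallel;
- gathers the outputs on the first device.

During the backward pass, the gradients from all copies are summed into the original parameters.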
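A minimal sketch of that one-line change, assuming a toy `nn.Linear` model in place of ResNet-18. It also runs on a machine without GPUs. In that case `nn.DataParallel` simply calls the wrapped module, so the example only demonstrates the calling pattern. The actual splitting happens only when GPUs are visible.

```python
import torch
from torch import nn

model = nn.Linear(4, 2)  # toy stand-in for ResNet-18
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
model = model.to(device)       # parameters must live on the first device
net = nn.DataParallel(model)   # by default uses all visible GPUs

X = torch.randn(8, 4, device=device)
out = net(X)          # with GPUs, the batch of 8 is split across them and gathered back
print(out.shape)      # torch.Size([8, 2])

out.sum().backward()  # gradients from all replicas end up in model.weight.grad
print(model.weight.grad.shape)  # torch.Size([2, 4])
```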

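4. Summary

- There are several ways to split the training of a deep network across multiple GPUs: between layers, across layers, or across the data. The first two require tightly choreographed data transfers, while the last one (splitting the data) is the simplest strategy.

- Data-parallel training itself is straightforward. It improves training efficiency by increasing the effective minibatch size.

- In data parallelism, the data is split across multiple GPUs and each GPU runs its own forward and backward pass. The gradients are then aggregated into one, and the aggregated result is broadcast back to all GPUs.

- With larger minibatches, the learning rate may need to be raised somewhat. Rule of thumb: the larger the batch size, the larger the learning rate should be.

- A neural network can be evaluated automatically on a single GPU (the one that holds the data). PyTorch and other frameworks have this built in, so you only need to call it.

- The network on each device must be initialized before you try to access its parameters on that device; otherwise you will get an error. Also check that you actually have the GPUs you ask for. I only have one, so I had to pretend I had several: a small moral victory.

- Optimization algorithms are automatically aggregated across multiple GPUs.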
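A common way to turn the batch-size rule of thumb into a number is the linear scaling heuristic: scale the learning rate in proportion to the total batch size. This is only a rule of thumb; neither this post nor the D2L chapter prescribes it.

The helper name `scaled_lr` is hypothetical. The baseline of `lr=0.2` at `batch_size=256` is taken from the LeNet runs above.

```python
def scaled_lr(base_lr, base_batch_size, batch_size):
    # Linear scaling heuristic: the learning rate grows in proportion to the batch size
    return base_lr * batch_size / base_batch_size

# Baseline from the LeNet runs: lr=0.2 with batch_size=256
print(scaled_lr(0.2, 256, 512))   # 0.4
print(scaled_lr(0.2, 256, 1024))  # 0.8
```

Treat the result as a starting point to tune, not a guarantee of the same accuracy.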