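Training a Slider CAPTCHA Detector with Deep Learning (YOLOv5)

Note: this article is for learning purposes only. If there is a problem, please contact the author.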

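Scenario

For most slider CAPTCHAs on the web today, a general-purpose solution still requires deep learning. Image-processing approaches work reasonably well for a single CAPTCHA type, but they are hard to apply across multiple types, such as the following:

Five different slider types are shown here. Our task is to locate the gap accurately so that the check can be passed.

I use YOLOv5 here.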

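Getting started

https://github.com/ultralytics/yolov5

This is the YOLOv5 GitHub repository, where you can learn more.

https://docs.ultralytics.com/

This is the official YOLOv5 documentation.

YOLO is an acronym for "You Only Look Once". It is an object detection algorithm that divides the image into a grid, and each grid cell is responsible for detecting the objects inside it.

Thanks to its speed and accuracy, YOLO is one of the best-known object detection algorithms.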

git clone https://github.com/ultralytics/yolov5.git

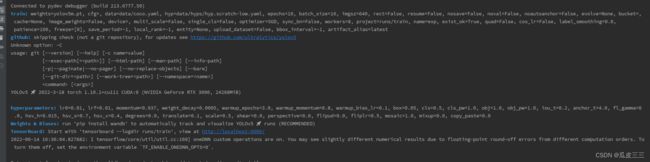

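After cloning, set up the environment and run train.py. Output like the following means YOLO is configured correctly.

You need a CUDA environment (training requires a GPU) plus torch, torchvision, numpy, and so on (set these up yourself).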

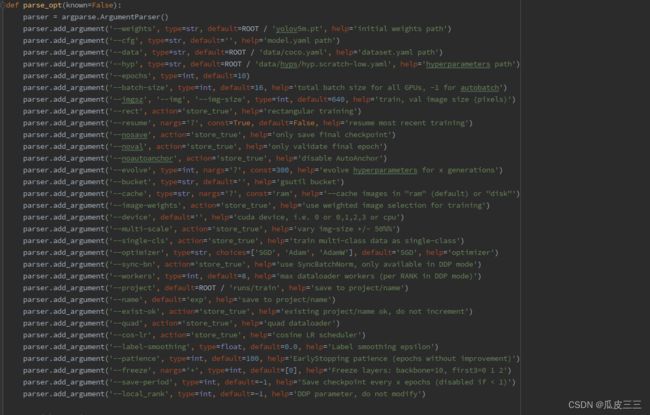

YOLOv5 hyperparameters

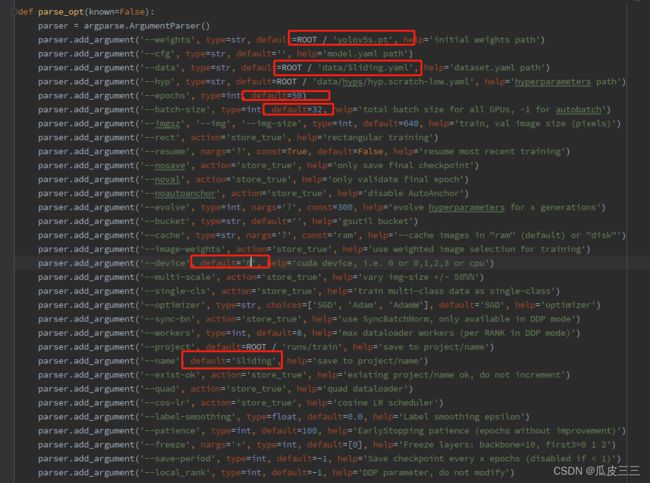

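The parse_opt function at line 454 of train.py defines many launch options. The commonly used ones are:

- weights: the pretrained model to start from, default ROOT / 'yolov5m.pt'
- cfg: path to the yaml file that defines the model configuration and network structure
- data: path to the dataset yaml file, which holds key information such as the dataset paths and class names
- hyp: path to the hyperparameter yaml file
- epochs: total number of training epochs
- batch-size: number of images per training batch
- imgsz: training image size
- resume: resume training from the given path/last.pt; if left empty, resume from the most recently saved path/last.pt
- device: which GPU to use
- single-cls: train all data as a single class (for example, a slider has only one gap, so there is only one class)
- optimizer: which optimizer to use
- name: name of the folder where training results are saved
- exist-ok: reuse the existing results folder instead of incrementing its name

Look up the remaining options yourself if you are interested.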
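As an illustration of how these options fit together, here is a minimal sketch that assembles a train.py command line. The weights file, epoch count, and run name follow the values used later in this article; the batch size of 16 and the helper name `build_train_command` are assumptions made for the example.

```python
import shlex


def build_train_command(weights="yolov5s.pt", data="data/Sliding.yaml",
                        epochs=50, batch_size=16, imgsz=640, name="Sliding"):
    """Return the argument list for launching YOLOv5's train.py."""
    return [
        "python", "train.py",
        "--weights", weights,
        "--data", data,
        "--epochs", str(epochs),
        "--batch-size", str(batch_size),  # assumed value; tune to your GPU memory
        "--imgsz", str(imgsz),
        "--single-cls",                   # the gap is the only class
        "--name", name,
        "--exist-ok",                     # reuse runs/train/<name> instead of incrementing
    ]


# Print the command so it can be pasted into a shell inside the yolov5 directory.
print(shlex.join(build_train_command()))
```

Passing the options on the command line avoids editing the defaults inside parse_opt, which some readers may prefer.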

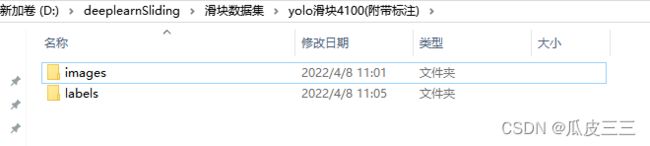

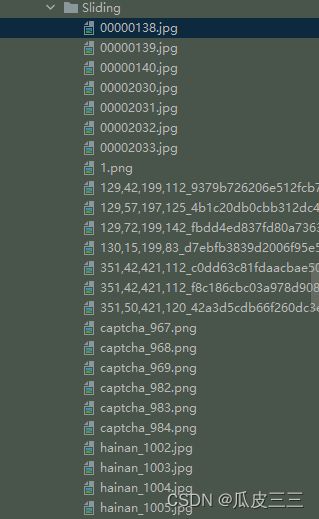

Slider CAPTCHA dataset

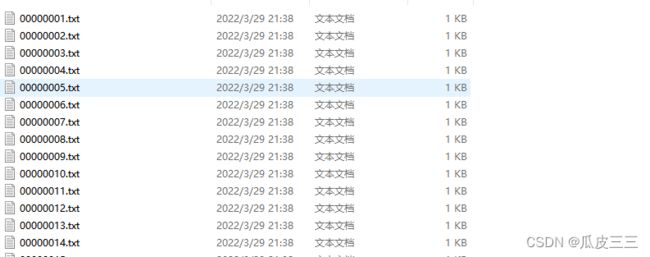

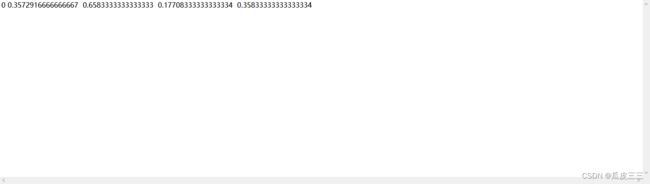

YOLO has its own dataset format.

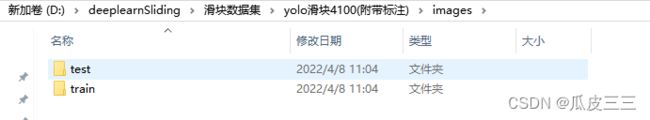

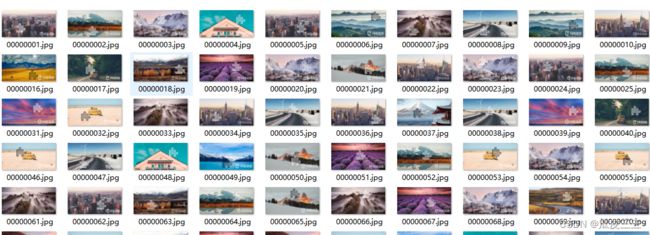

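I have already collected 4,100 images of the slider types shown above.

The dataset directory is split into two directories: images and labels.

The images and labels directories have the same layout: each contains a test directory and a train directory, holding the test set and the training set.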
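To check that a dataset follows this layout, a small sketch like the one below can list the images that have no matching label file. The function name and the set of accepted image suffixes are assumptions for illustration; only the images/labels and train/test directory names come from the layout described above.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # assumed image types


def images_without_labels(dataset_root, split):
    """Return names of images in images/<split> lacking labels/<split>/<stem>.txt."""
    root = Path(dataset_root)
    image_dir = root / "images" / split
    label_dir = root / "labels" / split
    missing = []
    for image_path in sorted(image_dir.iterdir()):
        if image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue  # skip non-image files
        if not (label_dir / (image_path.stem + ".txt")).is_file():
            missing.append(image_path.name)
    return missing
```

Calling `images_without_labels("path/to/dataset", "train")` returns an empty list when every training image is labeled. YOLOv5 treats an image without a label file as a background image, so a non-empty result is not necessarily an error.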

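This is the dataset layout YOLO expects, and it must look like this.

I have already assembled and annotated the dataset. If you want to train on your own images you can annotate them yourself, but the format must be the same.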
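For readers annotating their own images, the sketch below shows what one line of a YOLO label file contains: the class index followed by the box center, width, and height, all normalized by the image size. The function names and the sample box are made up for the example.

```python
def box_to_yolo_line(class_id, x1, y1, x2, y2, img_w, img_h):
    """Convert a pixel box (x1, y1, x2, y2) to one YOLO label line."""
    xc = (x1 + x2) / 2 / img_w   # normalized center x
    yc = (y1 + y2) / 2 / img_h   # normalized center y
    w = (x2 - x1) / img_w        # normalized width
    h = (y2 - y1) / img_h        # normalized height
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


def yolo_line_to_box(line, img_w, img_h):
    """Convert one YOLO label line back to (class_id, x1, y1, x2, y2) in pixels."""
    class_id, xc, yc, w, h = line.split()
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    return int(class_id), xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2


# A hypothetical 60x60 gap at (200, 40) in a 320x160 CAPTCHA image, class 0.
print(box_to_yolo_line(0, 200, 40, 260, 100, 320, 160))
```

Each image gets one .txt file with the same base name, containing one such line per annotated gap.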

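I recommend an annotation website that can export directly in YOLO format.

make-sense is an image annotation tool recommended by the YOLOv5 developers.

The tool is at https://www.makesense.ai/. For detailed usage, see this blogger's post, which is very thorough: https://blog.csdn.net/to_chariver/article/details/119619515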

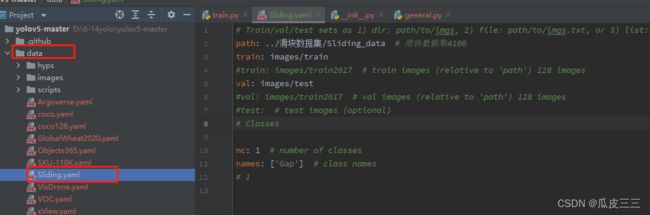

Configuring the dataset and hyperparameters

Create a configuration file named Sliding.yaml under the data directory.
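A minimal sketch of what such a file might contain is generated below. The keys (path, train, val, nc, names) are the ones YOLOv5 dataset files use; the dataset root `../datasets/Sliding` is a hypothetical location, and the class name `Gap` is taken from the prediction labels shown later in this article.

```python
def sliding_yaml(dataset_root, class_names=("Gap",)):
    """Build the text of a YOLOv5 dataset yaml for the slider dataset."""
    lines = [
        f"path: {dataset_root}",   # dataset root directory (hypothetical)
        "train: images/train",     # training images, relative to path
        "val: images/test",        # validation images, relative to path
        f"nc: {len(class_names)}",  # number of classes
        "names: [" + ", ".join(f"'{n}'" for n in class_names) + "]",
    ]
    return "\n".join(lines) + "\n"


# Print the text; save it as data/Sliding.yaml after adjusting the root path.
print(sliding_yaml("../datasets/Sliding"))
```

YOLOv5 finds the label files by replacing `images` with `labels` in each image path, which is why the two directories must mirror each other.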

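After configuring it, I changed a few hyperparameters as follows:

I chose yolov5s as the model to train. This is a comparison chart of the different YOLO models.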

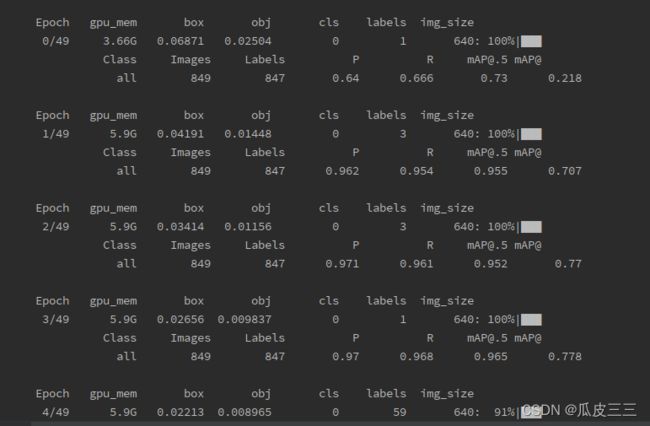

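Starting training and viewing the results

You can see that training information is already being printed. Once all 50 epochs have finished, we can look at the results.

Here is what each field in the output means: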

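- Epoch: the training epoch
- gpu_mem: GPU memory in use
- box: bounding-box loss. YOLOv5 uses GIoU loss for bounding boxes, so box is presumably the mean GIoU loss; the smaller it is, the more accurate the boxes
- obj: objectness loss, presumably the mean objectness loss; the smaller it is, the more accurate the detection
- cls: classification loss, presumably the mean classification loss; the smaller it is, the more accurate the classification (the gap is the only class, so this stays at 0)
- img_size: image size, 640 by default
- Images: number of images in the test set, 849
- Labels: number of labels in the test set, 847
- P: precision
- R: recall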
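To make the box loss more concrete, here is a small sketch of the GIoU computation for two boxes in (x1, y1, x2, y2) form; the corresponding loss is 1 - GIoU. This is a plain-Python illustration rather than YOLOv5's own code, and as far as I know recent YOLOv5 releases default to the closely related CIoU variant.

```python
def giou(box_a, box_b):
    """Generalized IoU of two (x1, y1, x2, y2) boxes with positive area."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Intersection area (zero when the boxes do not overlap)
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    iou = inter / union
    # Smallest box enclosing both inputs
    enclose = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))
    # Penalize the part of the enclosing box that is not covered by the union
    return iou - (enclose - union) / enclose


prediction = (0, 0, 2, 2)
target = (1, 1, 3, 3)
print("GIoU:", giou(prediction, target), "loss:", 1 - giou(prediction, target))
```

Unlike plain IoU, GIoU still changes when the boxes do not overlap at all, which gives the optimizer a useful signal early in training.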

When reading training results, the main things to watch are how precision and recall fluctuate.
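As a reminder of what the two numbers measure, the sketch below computes them from counts of true positives, false positives, and false negatives. The counts are invented for the example and are not taken from this training run.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of predicted gaps that are real
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real gaps that were found
    return precision, recall


# Hypothetical counts: 8 correct detections, 2 false alarms, 2 missed gaps.
p, r = precision_recall(tp=8, fp=2, fn=2)
print(f"P={p:.2f} R={r:.2f}")
```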

For a detailed explanation, see this blogger's post:

https://blog.csdn.net/sinat_37322535/article/details/117260081

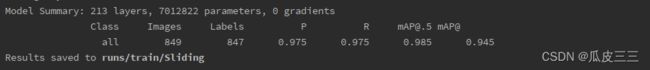

As you can see, both precision and recall look very good here.

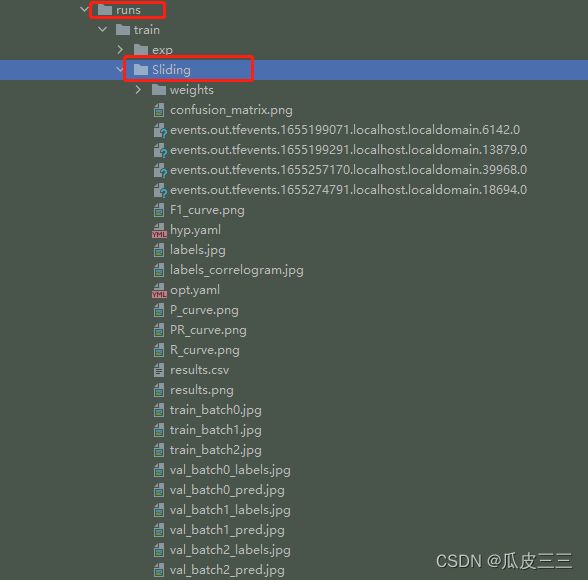

When training finishes, the result files are written to runs/train/Sliding (this directory is configurable).

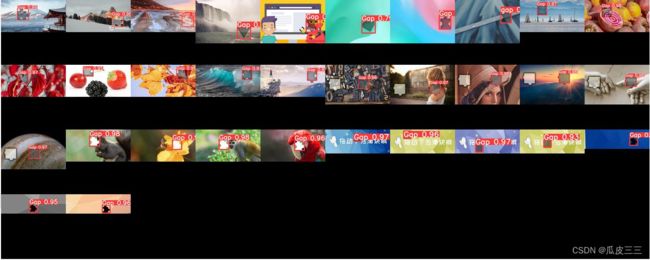

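Let's look at the predictions on the test set, in val_batch_pred.jpg.

Gap marks the region we need to find, shown together with its confidence.

The results.csv file holds the detailed training metrics.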

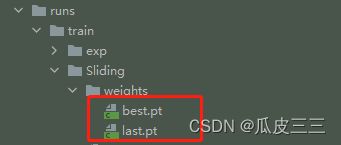

Most importantly, the weights directory contains two .pt models:

the best model and the last model. We can use either of them for validation later.

Converting the PT model to ONNX

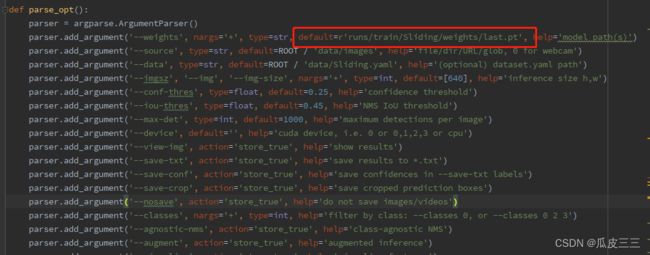

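At line 521 of the project's export.py, set the weights option to the path of the .pt model, for example:

The onnx module must be installed, otherwise the export fails with the error Onnx: No module named 'onnx'.

If that happens, install the onnx module manually and try again.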
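One way to catch this before running the export is to check for the modules up front. The sketch below uses only the standard library; the helper name `missing_modules` is made up, and the weights path in the printed command assumes the run was saved under runs/train/Sliding as described earlier.

```python
import importlib.util


def missing_modules(names):
    """Return the module names from `names` that cannot be imported."""
    return [n for n in names if importlib.util.find_spec(n) is None]


# onnx is needed for the export, onnxruntime for running the exported model later.
missing = missing_modules(["onnx", "onnxruntime"])
if missing:
    print("Install first: pip install " + " ".join(missing))
else:
    # The weights can also be passed on the command line instead of editing export.py.
    print("python export.py --weights runs/train/Sliding/weights/last.pt --include onnx")
```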

The last.onnx model now appears in this directory. The next step is to run the model on its own.

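Validating the model

The model can be tested by configuring detect.py.

The options are set at line 211.

Make sure the model path points to the model you have just trained.

Then put the images you want to test into data/images and run detect.py.

Here you can see that I saved the output to the runs/detect/Sliding directory.

The output images have the same file names as the ones in data/images.

The gap position and confidence are returned successfully. The predictions are correct, which shows that the model has been trained successfully.

I merged all the images into one so they are easier to look at.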

Image-merging code

    import os

    from PIL import Image


    def resize_by_width(infile, image_size):
        """Scale an image proportionally so that its width is about image_size."""
        im = Image.open(infile)
        (x, y) = im.size
        lv = round(x / image_size, 2) + 0.01
        x_s = int(x // lv)
        y_s = int(y // lv)
        print("x_s", x_s, y_s)
        # Image.ANTIALIAS was removed in Pillow 10; LANCZOS is the same filter
        out = im.resize((x_s, y_s), Image.LANCZOS)
        return out


    def get_new_img_xy(infile, image_size):
        """Return the width and height an image will have after scaling."""
        im = Image.open(infile)
        (x, y) = im.size
        lv = round(x / image_size, 2) + 0.01
        x_s = x // lv
        y_s = y // lv
        return x_s, y_s


    # Image stitching function
    def image_compose(image_colnum, image_size, image_rownum, image_names, image_save_path, x_new, y_new):
        to_image = Image.new('RGB', (image_colnum * x_new, image_rownum * y_new))  # create the canvas
        # Paste each image into its grid cell, row by row
        total_num = 0
        for y in range(1, image_rownum + 1):
            for x in range(1, image_colnum + 1):
                from_image = resize_by_width(image_names[image_colnum * (y - 1) + x - 1], image_size)
                to_image.paste(from_image, ((x - 1) * x_new, (y - 1) * y_new))
                total_num += 1
                if total_num == len(image_names):
                    break
        return to_image.save(image_save_path)  # save the merged image


    def get_image_list_fullpath(dir_path):
        """Recursively collect the full paths of all files under dir_path."""
        file_name_list = os.listdir(dir_path)
        image_fullpath_list = []
        for file_name_one in file_name_list:
            file_one_path = os.path.join(dir_path, file_name_one)
            if os.path.isfile(file_one_path):
                image_fullpath_list.append(file_one_path)
            else:
                img_path_list = get_image_list_fullpath(file_one_path)
                image_fullpath_list.extend(img_path_list)
        return image_fullpath_list


    def merge_images(image_dir_path, image_size, image_colnum):
        # Collect every image under the directory, skipping the "crops" subfolder
        image_fullpath_list = get_image_list_fullpath(image_dir_path)
        image_fullpath_list = [i for i in image_fullpath_list if "crops" not in i]
        print("image_fullpath_list", len(image_fullpath_list), image_fullpath_list, "========")
        image_save_path = r'{}.jpg'.format(image_dir_path)  # path of the merged image
        # Number of rows in the merged image, rounded up
        image_rownum_yu = len(image_fullpath_list) % image_colnum
        if image_rownum_yu == 0:
            image_rownum = len(image_fullpath_list) // image_colnum
        else:
            image_rownum = len(image_fullpath_list) // image_colnum + 1
        x_list = []
        y_list = []
        for img_file in image_fullpath_list:
            img_x, img_y = get_new_img_xy(img_file, image_size)
            x_list.append(img_x)
            y_list.append(img_y)
        x_list.sort()
        y_list.sort()
        print("x_list", x_list)
        print("y_list", y_list)
        # Use the width and height at the 80% position of the sorted sizes as the cell size
        x_new = int(x_list[len(x_list) // 5 * 4])
        y_new = int(y_list[len(y_list) // 5 * 4])
        image_compose(image_colnum, image_size, image_rownum, image_fullpath_list, image_save_path, x_new, y_new)


    if __name__ == '__main__':
        image_dir_path = r'/home/yons/pytorch/wang/yolov5-master/runs/detect/Sliding'  # image directory
        image_size = 128  # width of each thumbnail
        image_colnum = 10  # number of thumbnails per row in the merged image
        merge_images(image_dir_path, image_size, image_colnum)

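Testing a single image

YOLO ships with an API for testing in utils/flask_rest_api: restapi.py is the server and example_request.py is the client. You can try it yourself.

Here I focus on testing with a standalone script that runs without the YOLO code base and returns the coordinates and the annotated image.

The code was extracted from the YOLO source while debugging. Convert the .pt model to an ONNX model first, then call this script.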

    import os
    import sys
    import time
    from io import BytesIO

    import onnxruntime
    import torch
    import torchvision
    import numpy as np
    import cv2
    # Image handling
    from PIL import Image

    def padded_resize(im, new_shape=(640, 640), stride=32):
        """Letterbox an HWC image to new_shape and return a 1x3xHxW float array scaled to [0, 1]."""
        shape = im.shape[:2]  # current shape (height, width)
        r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])
        new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))
        dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]
        # dw, dh = np.mod(dw, stride), np.mod(dh, stride)
        dw /= 2  # split the padding between both sides
        dh /= 2
        if shape[::-1] != new_unpad:  # resize
            im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)
        top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
        left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
        im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=(114, 114, 114))  # add border
        # Convert
        im = im.transpose((2, 0, 1))[::-1]  # HWC to CHW, BGR to RGB
        im = np.ascontiguousarray(im)
        im = torch.from_numpy(im)
        im = im.float()
        im /= 255  # 0-255 to 0.0-1.0
        im = im[None]  # add the batch dimension
        im = im.cpu().numpy()  # torch to numpy
        return im

    def xywh2xyxy(x):
        # Convert nx4 boxes from [x, y, w, h] to [x1, y1, x2, y2] where xy1=top-left, xy2=bottom-right
        y = x.clone() if isinstance(x, torch.Tensor) else np.copy(x)
        y[:, 0] = x[:, 0] - x[:, 2] / 2  # top left x
        y[:, 1] = x[:, 1] - x[:, 3] / 2  # top left y
        y[:, 2] = x[:, 0] + x[:, 2] / 2  # bottom right x
        y[:, 3] = x[:, 1] + x[:, 3] / 2  # bottom right y
        return y


    def box_iou(box1, box2):
        """
        Return intersection-over-union (Jaccard index) of boxes.
        Both sets of boxes are expected to be in (x1, y1, x2, y2) format.
        Arguments:
            box1 (Tensor[N, 4])
            box2 (Tensor[M, 4])
        Returns:
            iou (Tensor[N, M]): the NxM matrix containing the pairwise
                IoU values for every element in boxes1 and boxes2
        """

        def box_area(box):
            # box = 4xn
            return (box[2] - box[0]) * (box[3] - box[1])

        area1 = box_area(box1.T)
        area2 = box_area(box2.T)
        # inter(N,M) = (rb(N,M,2) - lt(N,M,2)).clamp(0).prod(2)
        inter = (torch.min(box1[:, None, 2:], box2[:, 2:]) - torch.max(box1[:, None, :2], box2[:, :2])).clamp(0).prod(2)
        return inter / (area1[:, None] + area2 - inter)  # iou = inter / (area1 + area2 - inter)

    def non_max_suppression(prediction, conf_thres=0.25, iou_thres=0.45, classes=None, agnostic=False, multi_label=False,
                            labels=(), max_det=300):
        """Runs Non-Maximum Suppression (NMS) on inference results

        Returns:
            list of detections, on (n,6) tensor per image [xyxy, conf, cls]
        """
        nc = prediction.shape[2] - 5  # number of classes
        xc = prediction[..., 4] > conf_thres  # candidates

        # Checks
        assert 0 <= conf_thres <= 1, f'Invalid Confidence threshold {conf_thres}, valid values are between 0.0 and 1.0'
        assert 0 <= iou_thres <= 1, f'Invalid IoU {iou_thres}, valid values are between 0.0 and 1.0'

        # Settings
        min_wh, max_wh = 2, 7680  # (pixels) minimum and maximum box width and height
        max_nms = 30000  # maximum number of boxes into torchvision.ops.nms()
        time_limit = 10.0  # seconds to quit after
        redundant = True  # require redundant detections
        multi_label &= nc > 1  # multiple labels per box (adds 0.5ms/img)
        merge = False  # use merge-NMS

        t = time.time()
        output = [torch.zeros((0, 6), device=prediction.device)] * prediction.shape[0]
        for xi, x in enumerate(prediction):  # image index, image inference
            # Apply constraints
            x[((x[..., 2:4] < min_wh) | (x[..., 2:4] > max_wh)).any(1), 4] = 0  # width-height
            x = x[xc[xi]]  # confidence

            # Cat apriori labels if autolabelling
            if labels and len(labels[xi]):
                lb = labels[xi]
                v = torch.zeros((len(lb), nc + 5), device=x.device)
                v[:, :4] = lb[:, 1:5]  # box
                v[:, 4] = 1.0  # conf
                v[range(len(lb)), lb[:, 0].long() + 5] = 1.0  # cls
                x = torch.cat((x, v), 0)

            # If none remain process next image
            if not x.shape[0]:
                continue

            # Compute conf
            x[:, 5:] *= x[:, 4:5]  # conf = obj_conf * cls_conf

            # Box (center x, center y, width, height) to (x1, y1, x2, y2)
            box = xywh2xyxy(x[:, :4])

            # Detections matrix nx6 (xyxy, conf, cls)
            if multi_label:
                i, j = (x[:, 5:] > conf_thres).nonzero(as_tuple=False).T
                x = torch.cat((box[i], x[i, j + 5, None], j[:, None].float()), 1)
            else:  # best class only
                conf, j = x[:, 5:].max(1, keepdim=True)
                x = torch.cat((box, conf, j.float()), 1)[conf.view(-1) > conf_thres]

            # Filter by class
            if classes is not None:
                x = x[(x[:, 5:6] == torch.tensor(classes, device=x.device)).any(1)]

            # Apply finite constraint
            # if not torch.isfinite(x).all():
            #     x = x[torch.isfinite(x).all(1)]

            # Check shape
            n = x.shape[0]  # number of boxes
            if not n:  # no boxes
                continue
            elif n > max_nms:  # excess boxes
                x = x[x[:, 4].argsort(descending=True)[:max_nms]]  # sort by confidence

            # Batched NMS
            c = x[:, 5:6] * (0 if agnostic else max_wh)  # classes
            boxes, scores = x[:, :4] + c, x[:, 4]  # boxes (offset by class), scores
            i = torchvision.ops.nms(boxes, scores, iou_thres)  # NMS
            if i.shape[0] > max_det:  # limit detections
                i = i[:max_det]
            if merge and (1 < n < 3E3):  # Merge NMS (boxes merged using weighted mean)
                # update boxes as boxes(i,4) = weights(i,n) * boxes(n,4)
                iou = box_iou(boxes[i], boxes) > iou_thres  # iou matrix
                weights = iou * scores[None]  # box weights
                x[i, :4] = torch.mm(weights, x[:, :4]).float() / weights.sum(1, keepdim=True)  # merged boxes
                if redundant:
                    i = i[iou.sum(1) > 1]  # require redundancy

            output[xi] = x[i]
            if (time.time() - t) > time_limit:
                break  # time limit exceeded

        return output

    def xyxy2xywh(x):
        # Convert nx4 boxes from [x1, y1, x2, y2] to [x, y, w, h] where xy1=top-left, xy2=bottom-right
        y = x.clone() if isinstance(x, torch.Tensor) else np.copy(x)
        y[:, 0] = (x[:, 0] + x[:, 2]) / 2  # x center
        y[:, 1] = (x[:, 1] + x[:, 3]) / 2  # y center
        y[:, 2] = x[:, 2] - x[:, 0]  # width
        y[:, 3] = x[:, 3] - x[:, 1]  # height
        return y


    def is_ascii(s=''):
        # Is string composed of all ASCII (no UTF) characters? (note str().isascii() introduced in python 3.7)
        s = str(s)  # convert list, tuple, None, etc. to str
        return len(s.encode().decode('ascii', 'ignore')) == len(s)


    def box_label(self, box, label='', color=(128, 128, 128), txt_color=(255, 255, 255)):
        # Add one xyxy box to image with label
        if self.pil or not is_ascii(label):
            self.draw.rectangle(box, width=self.lw, outline=color)  # box
            if label:
                w, h = self.font.getsize(label)  # text width, height
                outside = box[1] - h >= 0  # label fits outside box
                self.draw.rectangle((box[0],
                                     box[1] - h if outside else box[1],
                                     box[0] + w + 1,
                                     box[1] + 1 if outside else box[1] + h + 1), fill=color)
                # self.draw.text((box[0], box[1]), label, fill=txt_color, font=self.font, anchor='ls')  # for PIL>8.0
                self.draw.text((box[0], box[1] - h if outside else box[1]), label, fill=txt_color, font=self.font)
        else:  # cv2
            p1, p2 = (int(box[0]), int(box[1])), (int(box[2]), int(box[3]))
            cv2.rectangle(self.im, p1, p2, color, thickness=self.lw, lineType=cv2.LINE_AA)
            if label:
                tf = max(self.lw - 1, 1)  # font thickness
                w, h = cv2.getTextSize(label, 0, fontScale=self.lw / 3, thickness=tf)[0]  # text width, height
                outside = p1[1] - h - 3 >= 0  # label fits outside box
                p2 = p1[0] + w, p1[1] - h - 3 if outside else p1[1] + h + 3
                cv2.rectangle(self.im, p1, p2, color, -1, cv2.LINE_AA)  # filled
                cv2.putText(self.im, label, (p1[0], p1[1] - 2 if outside else p1[1] + h + 2), 0, self.lw / 3, txt_color,
                            thickness=tf, lineType=cv2.LINE_AA)

    def return_coordinates(xyxy, conf):
        conf = float(conf.numpy())
        gain = 1.02
        pad = 10
        xyxy = torch.tensor(xyxy).view(-1, 4)
        b = xyxy2xywh(xyxy)  # boxes
        b[:, 2:] = b[:, 2:] * gain + pad  # box wh * gain + pad
        xyxy = xywh2xyxy(b).long()
        c1, c2 = (int(xyxy[0, 0]) + 6, int(xyxy[0, 1]) + 6), (int(xyxy[0, 2]) - 6, int(xyxy[0, 3]) - 6)
        # print(f"leftTop:{c1},rightBottom:{c2},Confidence:{conf*100}%")
        result_dict = {"leftTop": c1, "rightBottom": c2, "Confidence": conf}
        return result_dict


    def clip_coords(boxes, shape):
        # Clip bounding xyxy bounding boxes to image shape (height, width)
        if isinstance(boxes, torch.Tensor):  # faster individually
            boxes[:, 0].clamp_(0, shape[1])  # x1
            boxes[:, 1].clamp_(0, shape[0])  # y1
            boxes[:, 2].clamp_(0, shape[1])  # x2
            boxes[:, 3].clamp_(0, shape[0])  # y2
        else:  # np.array (faster grouped)
            boxes[:, [0, 2]] = boxes[:, [0, 2]].clip(0, shape[1])  # x1, x2
            boxes[:, [1, 3]] = boxes[:, [1, 3]].clip(0, shape[0])  # y1, y2


    def scale_coords(img1_shape, coords, img0_shape, ratio_pad=None):
        # Rescale coords (xyxy) from img1_shape to img0_shape
        if ratio_pad is None:  # calculate from img0_shape
            gain = min(img1_shape[0] / img0_shape[0], img1_shape[1] / img0_shape[1])  # gain = old / new
            pad = (img1_shape[1] - img0_shape[1] * gain) / 2, (img1_shape[0] - img0_shape[0] * gain) / 2  # wh padding
        else:
            gain = ratio_pad[0][0]
            pad = ratio_pad[1]

        coords[:, [0, 2]] -= pad[0]  # x padding
        coords[:, [1, 3]] -= pad[1]  # y padding
        coords[:, :4] /= gain
        clip_coords(coords, img0_shape)
        return coords

    def onnx_model_main(path):
        # Load the ONNX model ("model path" is a placeholder for your .onnx file)
        session = onnxruntime.InferenceSession("model path", providers=["CPUExecutionProvider"])
        start = time.time()
        with open(path, "rb") as f:
            image = f.read()
        # Decode with PIL; convert("RGB") also drops any alpha channel
        img = np.array(Image.open(BytesIO(image)).convert("RGB"))
        # PIL yields RGB, but padded_resize() reverses the channels as if the input
        # were BGR (as from cv2.imread), so convert to BGR first
        img = cv2.cvtColor(img, cv2.COLOR_RGB2BGR)
        # Preprocessing
        im = padded_resize(img)
        # Run the model
        pred = session.run([session.get_outputs()[0].name], {session.get_inputs()[0].name: im})[0]
        pred = torch.tensor(pred)
        pred = non_max_suppression(pred, conf_thres=0.60, iou_thres=0.60, max_det=1000)  # keep confidence above 60%
        coordinate_list = []
        for i, det in enumerate(pred):
            det[:, :4] = scale_coords(im.shape[2:], det[:, :4], img.shape).round()
            for *xyxy, conf, cls in reversed(det):
                # Coordinates and confidence of each detection
                coordinates = return_coordinates(xyxy, conf)
                coordinate_list.append(coordinates)
        # Sort detections by confidence, lowest first
        coordinate = sorted(coordinate_list, key=lambda a: a["Confidence"])
        # Elapsed time in seconds
        duration = str((time.time() - start))
        if len(coordinate) == 0:
            data = {'message': 'error', 'time': duration}
        else:
            coordinate = coordinate[-1]  # most confident detection
            x = coordinate.get('leftTop')[0]
            y = coordinate.get('leftTop')[1]
            w = coordinate.get('rightBottom')[0] - coordinate.get('leftTop')[0]
            h = coordinate.get('rightBottom')[1] - coordinate.get('leftTop')[1]
            point = f"{x}|{y}|{w}|{h}"
            data = {'message': 'success', 'time': duration, 'point': point}
            data.update(coordinate)
        print(data)
        return data

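    def drow_rectangle(coordinate, path):
        if coordinate.get("message") != "success":
            print("No gap detected, nothing to draw")
            return
        img = cv2.imread(path)
        # Draw the box
        result = cv2.rectangle(img, coordinate.get("leftTop"), coordinate.get("rightBottom"), (0, 0, 255), 2)
        cv2.imwrite("drow_rectangle.jpg", result)  # save the image with the gap marked
        print("Rectangle drawn successfully")


    if __name__ == '__main__':
        # "image path" is a placeholder for the CAPTCHA image to test
        coordinate_onnx = onnx_model_main("image path")
        drow_rectangle(coordinate_onnx, "image path")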
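The geometry behind padded_resize and scale_coords can be sketched without any image libraries: the image is scaled by one ratio so it fits inside the 640x640 input, the leftover space is padded equally on both sides, and predicted coordinates are mapped back by undoing the padding and then the scaling. The function names and the 320x160 example size below are assumptions, and the sketch leaves out the clipping step that scale_coords performs.

```python
def letterbox_params(orig_h, orig_w, target=640):
    """Return (scale, pad_x, pad_y) used to fit an image into a target x target square."""
    r = min(target / orig_h, target / orig_w)          # one ratio keeps the aspect ratio
    new_w, new_h = int(round(orig_w * r)), int(round(orig_h * r))
    pad_x = (target - new_w) / 2                        # padding on the left (and right)
    pad_y = (target - new_h) / 2                        # padding on the top (and bottom)
    return r, pad_x, pad_y


def to_original(x, y, r, pad_x, pad_y):
    """Map a point from model-input coordinates back to original image coordinates."""
    return (x - pad_x) / r, (y - pad_y) / r


# A hypothetical 320x160 CAPTCHA: scaled by 2, then padded 160 px above and below.
r, pad_x, pad_y = letterbox_params(orig_h=160, orig_w=320)
print(r, pad_x, pad_y)
print(to_original(100, 200, r, pad_x, pad_y))
```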

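Testing the result

Here we test with a single image.

Returned result:

It took 340 ms. With the model loaded in advance I would expect 100 to 200 ms, which is fast enough. The confidence is 98%. The coordinates of the top-left and bottom-right corners are returned as well.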
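The returned dictionary also carries a point string in the form x|y|w|h. A caller might unpack it as in the sketch below; the function name `parse_point` and the sample values are made up for illustration.

```python
def parse_point(point):
    """Split an 'x|y|w|h' string into integers and add the box center."""
    x, y, w, h = (int(v) for v in point.split("|"))
    return {
        "x": x, "y": y, "w": w, "h": h,
        "center_x": x + w / 2,   # horizontal center of the gap
        "center_y": y + h / 2,   # vertical center of the gap
    }


# Hypothetical value of data['point'] for a 60x60 gap whose top-left corner is (200, 40).
print(parse_point("200|40|60|60"))
```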


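Image with the gap position marked:

drow_rectangle.jpg:

After repeated testing, the model also generalizes well. For sliders with a single gap it works as a general-purpose model. If you run into a difficult type, annotate some samples and add them to the training set. Slider gaps are a fairly easy target for object detection because there is only one class, and a large dataset is not needed: a little over 4,000 images were enough for me to get very good results.

This article is only a hands-on walkthrough, meant to help readers with the same need get started faster. There is much more worth learning in YOLO, and those interested can dig into model inference and the source code on their own. If you would like the dataset, leave a comment.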