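HBU Neural Networks and Deep Learning, Experiment 6: Feedforward Neural Networks: Automatic Gradient Computation and Optimization Problems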

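Contents

- Preliminary notes
- I. Automatic gradient computation and predefined operators
  - 1. Reimplementing the feedforward neural network with predefined operators
  - 2. Completing the Runner class
  - 3. Model training
  - 4. Performance evaluation
- II. Optimization problems
  - 1. Parameter initialization
  - 2. The vanishing gradient problem
    - (1) Model construction
    - (2) Training with the Sigmoid function
    - (3) Training with the ReLU function
  - 3. The dying ReLU problem
    - (1) Training with ReLU
    - (2) Training with Leaky ReLU
- III. Summary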

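Preliminary notes

- This is the lab report for Experiment 6 of the HBU Neural Networks and Deep Learning course (Fall 2022). Its content largely follows [1] Github/前馈神经网络-上.ipynb; use the corresponding number when looking it up.

- This report draws on parts of "HBU-NNDL 实验五 前馈神经网络(2)自动梯度计算 & 优化问题" by 不是蒋承翰.

- The experiment is written in Python 3.10, using PyCharm.

- Heading levels in this report, from highest to lowest: I., 1., (1)

- My knowledge is limited and mistakes are likely; corrections are welcome.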

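I. Automatic gradient computation and predefined operators

Although assembling a neural network from modules works reasonably well, computing the gradients of each module by hand is still tedious and error-prone. Deep learning frameworks already provide automatic gradient computation, so we can focus on the model architecture instead of spending effort on deriving gradients.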
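As a minimal illustration of what automatic gradient computation does, the sketch below differentiates a small scalar function with PyTorch. The function y = x^2 + 2x and the variable names are chosen only for this example and do not come from the experiment.

```python
import torch

# A scalar input that autograd should track.
x = torch.tensor(3.0, requires_grad=True)

# Forward pass: y = x^2 + 2x
y = x ** 2 + 2 * x

# Backward pass: autograd stores dy/dx = 2x + 2 in x.grad
y.backward()

grad_value = x.grad.item()
print(grad_value)  # dy/dx evaluated at x = 3
```

No derivative was written by hand here; the same mechanism is what `trn_loss.backward()` relies on in the Runner class later in this report.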

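1. Reimplementing the feedforward neural network with predefined operators

Below we use PyTorch's predefined operators to reimplement the binary classification task.

The main predefined operator used is torch.nn.Linear:

class torch.nn.Linear(in_features, out_features, bias=True, device=None, dtype=None)

torch.nn.Linear accepts an input tensor of shape [batch_size, *, in_features], where "*" stands for any number of additional dimensions. It multiplies the input by the transpose of its weight matrix, which has shape [out_features, in_features], and produces an output tensor of shape [batch_size, *, out_features]. The layer has a bias parameter by default; pass bias=False to create it without one.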
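The shapes described above can be checked directly. This is a small sketch with arbitrarily chosen sizes (2 x 5 x 4 input, 3 output features); the sizes and variable names are assumptions for illustration only.

```python
import torch

# A linear layer mapping 4 input features to 3 output features.
layer = torch.nn.Linear(in_features=4, out_features=3)

# Input with one extra dimension between the batch and feature dimensions.
x = torch.randn(2, 5, 4)
out = layer(x)

weight_shape = tuple(layer.weight.shape)  # PyTorch stores [out_features, in_features]
out_shape = tuple(out.shape)

# The layer computes x @ W^T + b.
manual = x @ layer.weight.T + layer.bias
matches = torch.allclose(out, manual, atol=1e-6)

# With bias=False the layer has no bias parameter at all.
no_bias = torch.nn.Linear(4, 3, bias=False)

print(weight_shape, out_shape, matches, no_bias.bias)
```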

The implementation is as follows:

import torch.nn
import torch.nn.functional as F
from torch.nn.init import constant_, normal_


class Model_MLP_L2_V2(torch.nn.Module):
    def __init__(self, input_size, hidden_size, output_size):
        super(Model_MLP_L2_V2, self).__init__()
        # Define the linear layers with torch.nn.Linear.
        # The first argument (in_features) is the input dimension; the second (out_features) is the output dimension.
        # The weights are initialized in place from a Gaussian distribution with torch.nn.init.normal_.
        # The biases are initialized in place to a constant with torch.nn.init.constant_.
        self.fc1 = torch.nn.Linear(input_size, hidden_size)
        normal_(tensor=self.fc1.weight, mean=0., std=1.)
        constant_(tensor=self.fc1.bias, val=0.0)
        self.fc2 = torch.nn.Linear(hidden_size, output_size)
        normal_(tensor=self.fc2.weight, mean=0., std=1.)
        constant_(tensor=self.fc2.bias, val=0.0)
        # Use torch.nn.functional.sigmoid as the Logistic activation function
        # (PyTorch flags it as deprecated in favor of torch.sigmoid)
        self.act_fn = F.sigmoid

    # Forward computation
    def forward(self, inputs):
        z1 = self.fc1(inputs)
        a1 = self.act_fn(z1)
        z2 = self.fc2(a1)
        a2 = self.act_fn(z2)
        return a2

2. Completing the Runner class

Building on the RunnerV2_1 class from the previous section, the RunnerV2_2 class in this section uses automatic gradient computation during training. When saving the model it obtains the parameters with the state_dict method; when loading the model it restores them with the load_state_dict method.

import torch


class RunnerV2_2(object):
    def __init__(self, model, optimizer, metric, loss_fn, **kwargs):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric
        # Record the evaluation metric during training
        self.train_scores = []
        self.dev_scores = []
        # Record the loss during training
        self.train_loss = []
        self.dev_loss = []

    def train(self, train_set, dev_set, **kwargs):
        # Number of training epochs; defaults to 0 if not given
        num_epochs = kwargs.get("num_epochs", 0)
        # Logging frequency; defaults to 100 if not given
        log_epochs = kwargs.get("log_epochs", 100)
        # Path for saving the model; defaults to "best_model.pdparams" if not given
        save_path = kwargs.get("save_path", "best_model.pdparams")
        # Custom logging function; defaults to None if not given
        custom_print_log = kwargs.get("custom_print_log", None)
        # Best score seen so far
        best_score = 0
        # Train for num_epochs epochs
        for epoch in range(num_epochs):
            # Switch the model to training mode (evaluate() below switches it to evaluation mode)
            self.model.train()
            X, y = train_set
            # Get the model predictions
            logits = self.model(X)
            # Compute the cross-entropy loss
            trn_loss = self.loss_fn(logits, y)
            self.train_loss.append(trn_loss.item())
            # Compute the evaluation metric
            trn_score = self.metric(logits, y).item()
            self.train_scores.append(trn_score)
            # Compute the parameter gradients automatically
            trn_loss.backward()
            if custom_print_log is not None:
                # Print the gradients of each layer
                custom_print_log(self)
            # Update the parameters
            self.optimizer.step()
            # Clear the gradients
            self.optimizer.zero_grad()
            dev_score, dev_loss = self.evaluate(dev_set)
            # Save the model if the current score is the best so far
            if dev_score > best_score:
                self.save_model(save_path)
                print(f"[Evaluate] best accuracy performence has been updated: {best_score:.5f} --> {dev_score:.5f}")
                best_score = dev_score
            if log_epochs and epoch % log_epochs == 0:
                print(f"[Train] epoch: {epoch}/{num_epochs}, loss: {trn_loss.item()}")

    # Evaluation stage: torch.no_grad() disables gradient computation and storage
    @torch.no_grad()
    def evaluate(self, data_set):
        # Switch the model to evaluation mode
        self.model.eval()
        X, y = data_set
        # Compute the model output
        logits = self.model(X)
        # Compute the loss
        loss = self.loss_fn(logits, y).item()
        self.dev_loss.append(loss)
        # Compute the evaluation metric
        score = self.metric(logits, y).item()
        self.dev_scores.append(score)
        return score, loss

    # Test stage: torch.no_grad() disables gradient computation and storage
    @torch.no_grad()
    def predict(self, X):
        # Switch the model to evaluation mode
        self.model.eval()
        return self.model(X)

    # Get the model parameters with model.state_dict() and save them
    def save_model(self, saved_path):
        torch.save(self.model.state_dict(), saved_path)

    # Load the model parameters with model.load_state_dict()
    def load_model(self, model_path):
        state_dict = torch.load(model_path)
        self.model.load_state_dict(state_dict)
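The save and load pattern used by the Runner can be tried in isolation. The sketch below is a simplified round trip under stated assumptions: it uses two small stand-in layers (`source` and `target`, hypothetical names) and an in-memory buffer instead of a file on disk.

```python
import io

import torch

torch.manual_seed(0)
source = torch.nn.Linear(2, 1)  # stands in for the trained model
target = torch.nn.Linear(2, 1)  # a freshly initialized model with different weights

# Save the parameters of `source` into an in-memory buffer.
buffer = io.BytesIO()
torch.save(source.state_dict(), buffer)

# Load them back and copy them into `target`.
buffer.seek(0)
state_dict = torch.load(buffer)
target.load_state_dict(state_dict)

same_weights = torch.equal(source.weight, target.weight)
same_bias = torch.equal(source.bias, target.bias)
print(same_weights, same_bias)
```

Only the parameters are stored, so the receiving model must be constructed with the same architecture before load_state_dict is called.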

3. Model training

Instantiate the RunnerV2_2 class and pass in the training configuration. The code is as follows:

from metric import accuracy

# Set up the model
input_size = 2
hidden_size = 5
output_size = 1
model = Model_MLP_L2_V2(input_size=input_size, hidden_size=hidden_size, output_size=output_size)
# Set up the loss function
loss_fn = F.binary_cross_entropy
# Set up the optimizer
learning_rate = 0.2
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
# Set up the evaluation metric
metric = accuracy
# Other parameters
epoch_num = 1000
saved_path = 'best_model.pdparams'
# Instantiate RunnerV2_2 and pass in the training configuration
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=epoch_num, log_epochs=50, save_path=saved_path)

The code that creates X_train, y_train, X_dev and y_dev is given in the previous experiment.

Output:

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.51875

[Train] epoch: 0/1000, loss: 0.8497516512870789

[Evaluate] best accuracy performence has been updated: 0.51875 --> 0.53750

[Evaluate] best accuracy performence has been updated: 0.53750 --> 0.56875

[Evaluate] best accuracy performence has been updated: 0.56875 --> 0.58125

[Evaluate] best accuracy performence has been updated: 0.58125 --> 0.58750

[Evaluate] best accuracy performence has been updated: 0.58750 --> 0.59375

[Evaluate] best accuracy performence has been updated: 0.59375 --> 0.60000

[Evaluate] best accuracy performence has been updated: 0.60000 --> 0.61250

[Evaluate] best accuracy performence has been updated: 0.61250 --> 0.61875

[Evaluate] best accuracy performence has been updated: 0.61875 --> 0.62500

[Evaluate] best accuracy performence has been updated: 0.62500 --> 0.63125

[Evaluate] best accuracy performence has been updated: 0.63125 --> 0.65000

[Evaluate] best accuracy performence has been updated: 0.65000 --> 0.65625

[Evaluate] best accuracy performence has been updated: 0.65625 --> 0.66250

[Evaluate] best accuracy performence has been updated: 0.66250 --> 0.66875

[Evaluate] best accuracy performence has been updated: 0.66875 --> 0.67500

[Evaluate] best accuracy performence has been updated: 0.67500 --> 0.68125

[Train] epoch: 50/1000, loss: 0.556875467300415

[Evaluate] best accuracy performence has been updated: 0.68125 --> 0.68750

[Evaluate] best accuracy performence has been updated: 0.68750 --> 0.70000

[Evaluate] best accuracy performence has been updated: 0.70000 --> 0.70625

[Evaluate] best accuracy performence has been updated: 0.70625 --> 0.71250

[Evaluate] best accuracy performence has been updated: 0.71250 --> 0.71875

[Evaluate] best accuracy performence has been updated: 0.71875 --> 0.72500

[Evaluate] best accuracy performence has been updated: 0.72500 --> 0.73125

[Evaluate] best accuracy performence has been updated: 0.73125 --> 0.74375

[Evaluate] best accuracy performence has been updated: 0.74375 --> 0.75000

[Evaluate] best accuracy performence has been updated: 0.75000 --> 0.76250

[Evaluate] best accuracy performence has been updated: 0.76250 --> 0.76875

[Evaluate] best accuracy performence has been updated: 0.76875 --> 0.77500

[Evaluate] best accuracy performence has been updated: 0.77500 --> 0.78125

[Evaluate] best accuracy performence has been updated: 0.78125 --> 0.78750

[Train] epoch: 100/1000, loss: 0.48954516649246216

[Evaluate] best accuracy performence has been updated: 0.78750 --> 0.79375

[Evaluate] best accuracy performence has been updated: 0.79375 --> 0.80000

[Evaluate] best accuracy performence has been updated: 0.80000 --> 0.80625

[Train] epoch: 150/1000, loss: 0.4570175111293793

[Train] epoch: 200/1000, loss: 0.4402849078178406

[Train] epoch: 250/1000, loss: 0.4314705729484558

[Train] epoch: 300/1000, loss: 0.4267541766166687

[Evaluate] best accuracy performence has been updated: 0.80625 --> 0.81250

[Train] epoch: 350/1000, loss: 0.42418307065963745

[Train] epoch: 400/1000, loss: 0.4227420389652252

[Train] epoch: 450/1000, loss: 0.4218997359275818

[Train] epoch: 500/1000, loss: 0.4213765263557434

[Train] epoch: 550/1000, loss: 0.4210241734981537

[Train] epoch: 600/1000, loss: 0.4207640588283539

[Train] epoch: 650/1000, loss: 0.4205540120601654

[Train] epoch: 700/1000, loss: 0.4203713834285736

[Train] epoch: 750/1000, loss: 0.4202039837837219

[Train] epoch: 800/1000, loss: 0.4200451374053955

[Train] epoch: 850/1000, loss: 0.41989126801490784

[Train] epoch: 900/1000, loss: 0.4197402000427246

[Train] epoch: 950/1000, loss: 0.4195910096168518

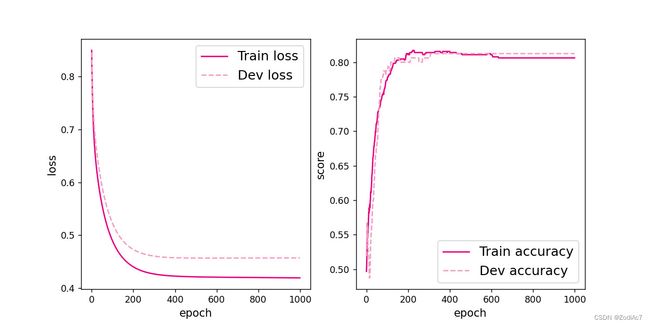

Visualize how the loss and accuracy on the training and validation sets change during training.

import matplotlib.pyplot as plt


# Plot the metrics on the training and validation sets
def plot(runner, fig_name):
    plt.figure(figsize=(10, 5))
    epochs = [i for i in range(len(runner.train_scores))]

    plt.subplot(1, 2, 1)
    plt.plot(epochs, runner.train_loss, color='#e4007f', label="Train loss")
    plt.plot(epochs, runner.dev_loss, color='#f19ec2', linestyle='--', label="Dev loss")
    # Draw the axis labels and legend
    plt.ylabel("loss", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='upper right', fontsize='x-large')

    plt.subplot(1, 2, 2)
    plt.plot(epochs, runner.train_scores, color='#e4007f', label="Train accuracy")
    plt.plot(epochs, runner.dev_scores, color='#f19ec2', linestyle='--', label="Dev accuracy")
    # Draw the axis labels and legend
    plt.ylabel("score", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='lower right', fontsize='x-large')

    plt.savefig(fig_name)
    plt.show()


plot(runner, 'fw-acc.pdf')

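4. Performance evaluation

Evaluate the best model saved during training on the test data, and observe its accuracy and loss on the test set. The code is as follows:

# Model evaluation
runner.load_model("best_model.pdparams")
score, loss = runner.evaluate([X_test, y_test])
print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

Output:

[Test] score/loss: 0.8500/0.3814

As the result shows, the model reaches a fairly high accuracy (0.85) on the test set.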
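The `accuracy` function imported from metric.py is not listed in this report. The following is a hypothetical sketch of what such a metric could look like, assuming the model outputs probabilities that are thresholded at 0.5 (the two lines of metric.py quoted in the warning messages later in this report suggest this approach). It is not the author's actual file.

```python
import torch


def accuracy(preds, labels):
    """Fraction of thresholded predictions that equal the 0/1 labels (illustrative sketch)."""
    # Turn probabilities into hard 0/1 predictions with a 0.5 threshold.
    hard_preds = (preds >= 0.5).to(torch.float32)
    # Compare element-wise with the labels and average the matches.
    correct = torch.eq(hard_preds, labels.to(torch.float32))
    return correct.to(torch.float32).mean()


# Four samples: the thresholded predictions are 1, 0, 1, 0 and the labels are 1, 0, 0, 0.
probs = torch.tensor([[0.9], [0.2], [0.6], [0.4]])
labels = torch.tensor([[1.0], [0.0], [0.0], [0.0]])
score = accuracy(probs, labels).item()
print(score)
```

The function returns a zero-dimensional tensor, which matches how the Runner calls `.item()` on the metric result.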

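II. Optimization problems

In this section we use experiments to uncover optimization problems in neural network models and consider how to address them.

1. Parameter initialization

Before a neural network is trained, its parameters must be initialized. If the weights and biases of every layer are initialized to 0, then in the first forward pass all hidden neurons produce the same activation, and in backpropagation all of their weights receive the same update. The hidden neurons therefore never differ from one another, which is known as the symmetric weight phenomenon.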
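The argument can be made concrete for a two-layer network with one sigmoid output. The following is a sketch under stated assumptions: the symbols $x$, $W^{(1)}$, $b^{(1)}$, $w^{(2)}$, $b^{(2)}$, $\sigma$, $\hat{y}$ and $\delta$ are notation introduced only for this illustration, the loss $\mathcal{L}$ is assumed to be binary cross-entropy, and a single sample $(x, y)$ is considered at the all-zero initialization.

```latex
\begin{aligned}
z^{(1)} &= W^{(1)} x + b^{(1)} = 0, &
a^{(1)}_j &= \sigma(0) = \tfrac{1}{2} \quad \text{for every hidden unit } j, \\
z^{(2)} &= \sum_j w^{(2)}_j a^{(1)}_j + b^{(2)}, &
\hat{y} &= \sigma\!\left(z^{(2)}\right), \\
\delta &= \frac{\partial \mathcal{L}}{\partial z^{(2)}} = \hat{y} - y, &
\frac{\partial \mathcal{L}}{\partial b^{(2)}} &= \delta, \\
\frac{\partial \mathcal{L}}{\partial w^{(2)}_j} &= \delta \, a^{(1)}_j = \tfrac{1}{2}\,\delta, &
\frac{\partial \mathcal{L}}{\partial W^{(1)}_{j,:}} &= \delta \, w^{(2)}_j \, \sigma'\!\left(z^{(1)}_j\right) x^{\top}.
\end{aligned}
```

Every $w^{(2)}_j$ receives the same gradient, half of the bias gradient. Because $w^{(2)}_j$ and $z^{(1)}_j$ are the same for all $j$, every row of $W^{(1)}$ also receives the same gradient (zero on the first step, since $w^{(2)}_j = 0$), so the hidden units stay identical after each update.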

Next we initialize all model parameters to 0 and look at the result. A new class, Model_MLP_L2_V4, is defined in which the parameters of both linear layers are initialized to 0.

class Model_MLP_L2_V4(torch.nn.Module):
    def __init__(self, input_size, hidden_size, output_size):
        super(Model_MLP_L2_V4, self).__init__()
        # Define the linear layers with torch.nn.Linear.
        # The first argument (in_features) is the input dimension; the second (out_features) is the output dimension.
        # Both weight and bias are initialized in place to a constant with torch.nn.init.constant_.
        self.fc1 = torch.nn.Linear(input_size, hidden_size)
        constant_(tensor=self.fc1.weight, val=0.0)
        constant_(tensor=self.fc1.bias, val=0.0)
        self.fc2 = torch.nn.Linear(hidden_size, output_size)
        constant_(tensor=self.fc2.weight, val=0.0)
        constant_(tensor=self.fc2.bias, val=0.0)
        # Use torch.nn.functional.sigmoid as the Logistic activation function
        self.act_fn = F.sigmoid

    # Forward computation
    def forward(self, inputs):
        z1 = self.fc1(inputs)
        a1 = self.act_fn(z1)
        z2 = self.fc2(a1)
        a2 = self.act_fn(z2)
        return a2


def print_weights(runner):
    print('The weights of the Layers:')
    # Each item is a (name, parameter) tuple
    for named_param in runner.model.named_parameters():
        print(named_param)

Train the model with the Runner class:

# Set up the model
input_size = 2
hidden_size = 5
output_size = 1
model = Model_MLP_L2_V4(input_size=input_size, hidden_size=hidden_size, output_size=output_size)
# Set up the loss function
loss_fn = F.binary_cross_entropy
# Set up the optimizer
learning_rate = 0.2  # 5e-2
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
# Set up the evaluation metric
metric = accuracy
# Other parameters
epoch = 2000
saved_path = 'best_model.pdparams'
# Instantiate RunnerV2_2 and pass in the training configuration
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=5, log_epochs=50, save_path="best_model.pdparams",
             custom_print_log=print_weights)

Output:

The weights of the Layers:

('fc1.weight', Parameter containing:

tensor([[0., 0.],

[0., 0.],

[0., 0.],

[0., 0.],

[0., 0.]], requires_grad=True))

('fc1.bias', Parameter containing:

tensor([0., 0., 0., 0., 0.], requires_grad=True))

('fc2.weight', Parameter containing:

tensor([[0.0008, 0.0008, 0.0008, 0.0008, 0.0008]], requires_grad=True))

('fc2.bias', Parameter containing:

tensor([0.0016], requires_grad=True))

The weights of the Layers:

('fc1.weight', Parameter containing:

tensor([[ 1.0144e-05, -7.4018e-06],

[ 1.0144e-05, -7.4018e-06],

[ 1.0144e-05, -7.4018e-06],

[ 1.0144e-05, -7.4018e-06],

[ 1.0144e-05, -7.4018e-06]], requires_grad=True))

('fc1.bias', Parameter containing:

tensor([2.7084e-07, 2.7084e-07, 2.7084e-07, 2.7084e-07, 2.7084e-07],

requires_grad=True))

C:\Users\Rex Mei\Desktop\MLE_Project\venv\lib\site-packages\torch\nn\functional.py:1960: UserWarning: nn.functional.sigmoid is deprecated. Use torch.sigmoid instead.

warnings.warn("nn.functional.sigmoid is deprecated. Use torch.sigmoid instead.")

C:\Users\Rex Mei\Desktop\DeepLearningExperiment\metric.py:17: UserWarning: To copy construct from a tensor, it is recommended to use sourceTensor.clone().detach() or sourceTensor.clone().detach().requires_grad_(True), rather than torch.tensor(sourceTensor).

preds = torch.tensor((preds >= 0.5), dtype=torch.float32)

C:\Users\Rex Mei\Desktop\DeepLearningExperiment\metric.py:21: UserWarning: To copy construct from a tensor, it is recommended to use sourceTensor.clone().detach() or sourceTensor.clone().detach().requires_grad_(True), rather than torch.tensor(sourceTensor).

return torch.mean(torch.tensor(torch.eq(preds, labels), dtype=torch.float32))

('fc2.weight', Parameter containing:

tensor([[0.0015, 0.0015, 0.0015, 0.0015, 0.0015]], requires_grad=True))

('fc2.bias', Parameter containing:

tensor([0.0029], requires_grad=True))

The weights of the Layers:

('fc1.weight', Parameter containing:

tensor([[ 2.9262e-05, -2.1386e-05],

[ 2.9262e-05, -2.1386e-05],

[ 2.9262e-05, -2.1386e-05],

[ 2.9262e-05, -2.1386e-05],

[ 2.9262e-05, -2.1386e-05]], requires_grad=True))

('fc1.bias', Parameter containing:

tensor([7.2455e-07, 7.2455e-07, 7.2455e-07, 7.2455e-07, 7.2455e-07],

requires_grad=True))

('fc2.weight', Parameter containing:

tensor([[0.0021, 0.0021, 0.0021, 0.0021, 0.0021]], requires_grad=True))

('fc2.bias', Parameter containing:

tensor([0.0042], requires_grad=True))

The weights of the Layers:

('fc1.weight', Parameter containing:

tensor([[ 5.6325e-05, -4.1226e-05],

[ 5.6325e-05, -4.1226e-05],

[ 5.6325e-05, -4.1226e-05],

[ 5.6325e-05, -4.1226e-05],

[ 5.6325e-05, -4.1226e-05]], requires_grad=True))

('fc1.bias', Parameter containing:

tensor([1.2953e-06, 1.2953e-06, 1.2953e-06, 1.2953e-06, 1.2953e-06],

requires_grad=True))

('fc2.weight', Parameter containing:

tensor([[0.0026, 0.0026, 0.0026, 0.0026, 0.0026]], requires_grad=True))

('fc2.bias', Parameter containing:

tensor([0.0053], requires_grad=True))

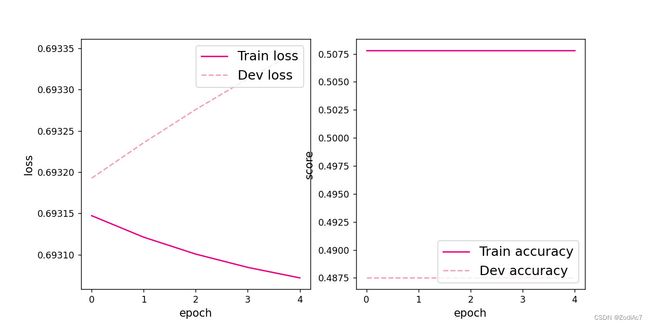

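Visualize the accuracy and loss on the training and validation sets:

plot(runner, "fw-zero.pdf")

The result is shown in the figure below:

The output shows that the binary classification accuracy stays at about 50%, which means the model has learned nothing. The training and validation losses barely decrease.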

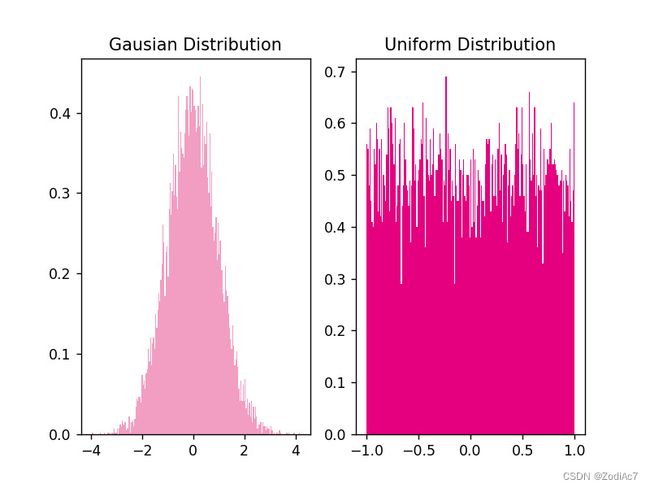

To avoid the symmetric weight phenomenon, the parameters of a neural network can be initialized from a Gaussian or a uniform distribution.

The code for sampling from and visualizing the Gaussian and uniform distributions is as follows:

import matplotlib.pyplot as plt
import torch

# Sample from a Gaussian distribution with torch.normal: 'mean' is the mean, 'std' the standard deviation, 'size' the output shape
gaussian_weights = torch.normal(mean=0.0, std=1.0, size=[10000])
# Sample uniformly from [min, max) with Tensor.uniform_
uniform_weights = torch.empty(10000).uniform_(-1, 1)
gaussian_weights = gaussian_weights.numpy()
uniform_weights = uniform_weights.numpy()
# Plot the two parameter distributions
plt.figure()
plt.subplot(1, 2, 1)
plt.title('Gaussian Distribution')
plt.hist(gaussian_weights, bins=200, density=True, color='#f19ec2')
plt.subplot(1, 2, 2)
plt.title('Uniform Distribution')
plt.hist(uniform_weights, bins=200, density=True, color='#e4007f')
plt.savefig('fw-gaussian-uniform.pdf')
plt.show()

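2. The vanishing gradient problem

When building a neural network, adding layers should in theory improve its fitting capacity. As the network gets deeper, however, parameter learning becomes harder and the vanishing gradient problem appears easily.

Because Sigmoid-type functions saturate, their derivatives in the saturated regions are close to 0, so the error shrinks every time it is propagated through a layer. In a very deep network the gradient keeps decaying and may vanish altogether, which makes the whole network hard to train. This is the vanishing gradient problem.

There are many ways to mitigate vanishing gradients in deep networks. A simple and effective one is to use an activation function with a larger derivative, such as ReLU.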
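A small numerical sketch shows how quickly the activation derivatives alone can shrink the backpropagated signal. The helper names and the depth of 4 are chosen for this illustration; the calculation ignores the weight matrices, which also scale the gradient in a real network.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_grad(z):
    # Derivative of the logistic function: s * (1 - s)
    s = sigmoid(z)
    return s * (1.0 - s)


# The derivative peaks at z = 0 and is much smaller in the saturated region.
max_grad = sigmoid_grad(0.0)
saturated_grad = sigmoid_grad(5.0)

# Upper bound on the product of activation derivatives across 4 sigmoid layers.
num_layers = 4
sigmoid_bound = max_grad ** num_layers

# For ReLU with positive pre-activations the derivative is 1 in every layer.
relu_factor = 1.0 ** num_layers

print(max_grad, saturated_grad, sigmoid_bound, relu_factor)
```

Even in the best case the sigmoid contributes a factor of at most 0.25 per layer, whereas an active ReLU unit contributes a factor of 1.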

The following simple experiment illustrates the vanishing gradient phenomenon in a feedforward neural network and a way to improve it.

(1) Model construction

Define a feedforward neural network with 4 hidden layers and 1 output layer; the activation function is selected through an argument. The code is as follows:

# Define a multi-layer feedforward neural network
class Model_MLP_L5(torch.nn.Module):
    def __init__(self, input_size, output_size, act='relu', mean_init=0., std_init=0.01, b_init=1.0):
        super(Model_MLP_L5, self).__init__()
        self.fc1 = torch.nn.Linear(input_size, 3)
        normal_(tensor=self.fc1.weight, mean=mean_init, std=std_init)
        constant_(tensor=self.fc1.bias, val=b_init)
        self.fc2 = torch.nn.Linear(3, 3)
        normal_(tensor=self.fc2.weight, mean=mean_init, std=std_init)
        constant_(tensor=self.fc2.bias, val=b_init)
        self.fc3 = torch.nn.Linear(3, 3)
        normal_(tensor=self.fc3.weight, mean=mean_init, std=std_init)
        constant_(tensor=self.fc3.bias, val=b_init)
        self.fc4 = torch.nn.Linear(3, 3)
        normal_(tensor=self.fc4.weight, mean=mean_init, std=std_init)
        constant_(tensor=self.fc4.bias, val=b_init)
        self.fc5 = torch.nn.Linear(3, output_size)
        normal_(tensor=self.fc5.weight, mean=mean_init, std=std_init)
        constant_(tensor=self.fc5.bias, val=b_init)
        # Select the activation function used by the hidden layers
        if act == 'sigmoid':
            self.act = F.sigmoid
        elif act == 'relu':
            self.act = F.relu
        elif act == 'lrelu':
            self.act = F.leaky_relu
        else:
            raise ValueError("Please enter sigmoid relu or lrelu!")

    def forward(self, inputs):
        outputs = self.fc1(inputs.to(torch.float32))
        outputs = self.act(outputs)
        outputs = self.fc2(outputs)
        outputs = self.act(outputs)
        outputs = self.fc3(outputs)
        outputs = self.act(outputs)
        outputs = self.fc4(outputs)
        outputs = self.act(outputs)
        outputs = self.fc5(outputs)
        # The output layer always uses the sigmoid function
        outputs = F.sigmoid(outputs)
        return outputs

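(2) Training with the Sigmoid function

Use the Sigmoid function as the activation function. To make the vanishing gradient easy to observe, only one round of optimization is run. The code is as follows:

# Define the gradient-printing function
def print_grads(runner):
    print('The gradient of the Layers:')
    for name, parms in runner.model.named_parameters():
        print('name:', name, ' grad_value:', parms.grad)


torch.random.manual_seed(102)
# Learning rate
lr = 0.01
# Define the network with the sigmoid activation function
model = Model_MLP_L5(input_size=2, output_size=1, act='sigmoid')
# Define the optimizer
optimizer = torch.optim.SGD(model.parameters(), lr=lr)
# Define the loss function: binary cross-entropy
loss_fn = F.binary_cross_entropy
from metric import accuracy
# Define the evaluation metric
metric = accuracy
# Specify the gradient-printing function
custom_print_log = print_grads

Instantiate the RunnerV2_2 class and pass in the training configuration. The code is as follows:

# Instantiate the Runner class
runner = RunnerV2_2(model, optimizer, metric, loss_fn)

Train the model and print the gradients of each layer (print_grads prints the full gradient tensors rather than their $\ell_2$ norms). The code is as follows:

# Start training
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=1, log_epochs=None, save_path="best_model.pdparams",
             custom_print_log=custom_print_log)

Output: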

The gradient of the Layers:

name: fc1.weight grad_value: tensor([[-4.7838e-12, 8.2888e-12],

[ 3.6502e-12, -6.3175e-12],

[ 6.4543e-12, -1.1160e-11]])

name: fc1.bias grad_value: tensor([ 7.6704e-12, -5.8654e-12, -1.0326e-11])

name: fc2.weight grad_value: tensor([[1.0832e-09, 1.0814e-09, 1.0843e-09],

[1.6404e-09, 1.6376e-09, 1.6421e-09],

[3.8894e-10, 3.8829e-10, 3.8935e-10]])

name: fc2.bias grad_value: tensor([1.4831e-09, 2.2461e-09, 5.3256e-10])

name: fc3.weight grad_value: tensor([[-1.3158e-06, -1.3159e-06, -1.3071e-06],

[-1.5598e-06, -1.5600e-06, -1.5495e-06],

[ 1.5137e-06, 1.5139e-06, 1.5037e-06]])

name: fc3.bias grad_value: tensor([-1.8021e-06, -2.1363e-06, 2.0732e-06])

name: fc4.weight grad_value: tensor([[-8.9001e-05, -8.9251e-05, -8.9278e-05],

[ 6.6012e-04, 6.6198e-04, 6.6217e-04],

[ 3.7251e-04, 3.7356e-04, 3.7367e-04]])

name: fc4.bias grad_value: tensor([-0.0001, 0.0009, 0.0005])

name: fc5.weight grad_value: tensor([[0.1657, 0.1658, 0.1662]])

name: fc5.bias grad_value: tensor([0.2274])

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.48750

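The results show that the gradient shrinks as it passes back through each layer. By the time it reaches the first layer it has almost completely vanished: the fc1 gradients are on the order of 1e-12, compared with about 1e-1 for fc5.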
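To compare layers with a single number each, the gradient tensors can be reduced to their $\ell_2$ norms. The helper below is a sketch, not part of the original experiment: `grad_l2_norms` is a hypothetical name, and the tiny two-layer network and random data exist only to make the example self-contained.

```python
import torch


def grad_l2_norms(model):
    """Return a dict mapping each parameter name to the l2 norm of its gradient."""
    norms = {}
    for name, param in model.named_parameters():
        if param.grad is not None:
            norms[name] = param.grad.norm(p=2).item()
    return norms


torch.manual_seed(0)
# A small stand-in network with sigmoid activations.
tiny = torch.nn.Sequential(
    torch.nn.Linear(2, 3), torch.nn.Sigmoid(),
    torch.nn.Linear(3, 1), torch.nn.Sigmoid(),
)
x = torch.randn(8, 2)
y = torch.randint(0, 2, (8, 1)).to(torch.float32)

loss = torch.nn.functional.binary_cross_entropy(tiny(x), y)
loss.backward()

norms = grad_l2_norms(tiny)
for name, value in norms.items():
    print(name, value)
```

With the Runner from this report, a function such as `lambda runner: print(grad_l2_norms(runner.model))` could be passed as `custom_print_log` to log norms instead of full tensors.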

(3) Training with the ReLU function

torch.random.manual_seed(102)
# Learning rate
lr = 0.01
# Define the network with the ReLU activation function
model = Model_MLP_L5(input_size=2, output_size=1, act='relu')
# Define the optimizer
optimizer = torch.optim.SGD(model.parameters(), lr=lr)
# Define the loss function: binary cross-entropy
loss_fn = F.binary_cross_entropy
from metric import accuracy
# Define the evaluation metric
metric = accuracy
# Specify the gradient-printing function
custom_print_log = print_grads
# Instantiate the Runner class
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=1, log_epochs=None, save_path="best_model.pdparams",
             custom_print_log=custom_print_log)

Output:

The gradient of the Layers:

name: fc1.weight grad_value: tensor([[-3.1846e-09, 5.5507e-09],

[ 2.3915e-09, -4.1683e-09],

[ 4.2657e-09, -7.4350e-09]])

name: fc1.bias grad_value: tensor([ 5.1574e-09, -3.8729e-09, -6.9082e-09])

name: fc2.weight grad_value: tensor([[1.9452e-07, 1.9331e-07, 1.9529e-07],

[2.9493e-07, 2.9310e-07, 2.9609e-07],

[6.7968e-08, 6.7547e-08, 6.8236e-08]])

name: fc2.bias grad_value: tensor([1.9524e-07, 2.9602e-07, 6.8220e-08])

name: fc3.weight grad_value: tensor([[-4.6332e-05, -4.6356e-05, -4.4789e-05],

[-5.5117e-05, -5.5146e-05, -5.3282e-05],

[ 5.3547e-05, 5.3575e-05, 5.1764e-05]])

name: fc3.bias grad_value: tensor([-4.6624e-05, -5.5464e-05, 5.3884e-05])

name: fc4.weight grad_value: tensor([[-0.0006, -0.0006, -0.0006],

[ 0.0046, 0.0046, 0.0046],

[ 0.0026, 0.0026, 0.0026]])

name: fc4.bias grad_value: tensor([-0.0006, 0.0046, 0.0026])

name: fc5.weight grad_value: tensor([[0.2249, 0.2261, 0.2286]])

name: fc5.bias grad_value: tensor([0.2288])

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.48750

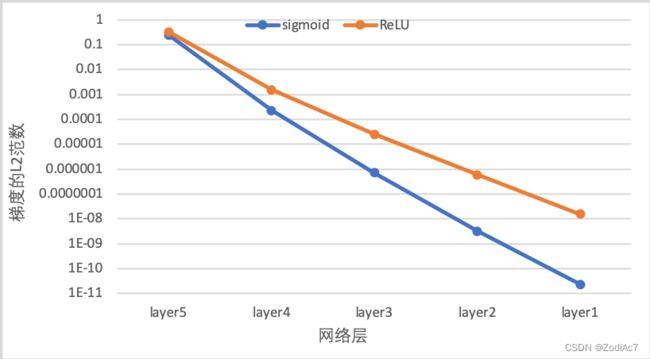

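The figure shows the $\ell_2$ norm of each layer's gradients under the different activation functions. When the 5-layer fully connected feedforward network uses the Sigmoid function as its activation, the gradient shrinks as it passes back through each layer and has almost completely vanished by the time it reaches the first layer. After switching to ReLU the vanishing gradient is alleviated: the gradients of every layer are larger (about 1e-9 instead of 1e-12 for fc1), although they still decrease toward the input.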

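3. The dying ReLU problem

ReLU mitigates the vanishing gradient problem to some extent, but in some situations it is prone to the dying ReLU problem, which makes the network hard to train. The reason is that the output of ReLU is always 0 when $x<0$. If, after an improper parameter update during training, a ReLU neuron cannot be activated on any training sample (that is, its output is always 0), the gradient of that neuron's own parameters will be 0 from then on, and the neuron can never be activated again during the rest of training. A simple and effective remedy is to replace the activation function with a ReLU variant such as Leaky ReLU or ELU.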
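The difference between the two activations is easiest to see from their derivatives. In the sketch below, $\gamma$ denotes the negative-side slope of Leaky ReLU; the symbol is notation assumed for this illustration.

```latex
\begin{aligned}
\mathrm{ReLU}(x) &= \max(0, x), &
\frac{d\,\mathrm{ReLU}(x)}{dx} &=
\begin{cases} 1, & x > 0 \\ 0, & x < 0 \end{cases} \\[6pt]
\mathrm{LeakyReLU}(x) &= \max(0, x) + \gamma \min(0, x), &
\frac{d\,\mathrm{LeakyReLU}(x)}{dx} &=
\begin{cases} 1, & x > 0 \\ \gamma, & x < 0 \end{cases}
\end{aligned}
```

For a neuron whose pre-activation is negative on every sample, ReLU passes back a gradient of exactly 0, so its parameters stop changing, while Leaky ReLU still passes back a fraction $\gamma$ of the gradient.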

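(1) Training with ReLU

Use the multi-layer fully connected feedforward network defined in the previous section, with ReLU as the activation function, to observe the dying ReLU phenomenon and its remedy. When the bias of a layer is initialized to a negative value that is large relative to the weights, the output of that layer is negative for the inputs, which leads to dying ReLU.

# Define the network and initialize the biases with a large negative value
model = Model_MLP_L5(input_size=2, output_size=1, act='relu', b_init=-8.0)
# Re-create the optimizer so that it updates the parameters of the new model
optimizer = torch.optim.SGD(model.parameters(), lr=lr)

Instantiate the RunnerV2_2 class, start training, and print the gradients of each layer. The code is as follows:

# Instantiate the Runner class
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
# Start training
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=1, log_epochs=0, save_path="best_model.pdparams",
             custom_print_log=custom_print_log)

Output: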

The gradient of the Layers:

name: fc1.weight grad_value: tensor([[0., 0.],

[0., 0.],

[0., 0.]])

name: fc1.bias grad_value: tensor([0., 0., 0.])

name: fc2.weight grad_value: tensor([[0., 0., 0.],

[0., 0., 0.],

[0., 0., 0.]])

name: fc2.bias grad_value: tensor([0., 0., 0.])

name: fc3.weight grad_value: tensor([[0., 0., 0.],

[0., 0., 0.],

[0., 0., 0.]])

name: fc3.bias grad_value: tensor([0., 0., 0.])

name: fc4.weight grad_value: tensor([[0., 0., 0.],

[0., 0., 0.],

[0., 0., 0.]])

name: fc4.bias grad_value: tensor([0., 0., 0.])

name: fc5.weight grad_value: tensor([[0., 0., 0.]])

name: fc5.bias grad_value: tensor([-0.5075])

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.51250

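The output shows that with ReLU as the activation function, the dying ReLU problem occurs once the condition above is met: the gradients of the ReLU neurons are 0 and their parameters cannot be updated. Only the bias of the output layer, which sits after the last ReLU, still receives a gradient.

A simple and effective remedy for the dying ReLU problem is to replace the activation function with a ReLU variant such as Leaky ReLU or ELU. Next we observe the gradients after switching to Leaky ReLU.

(2) Training with Leaky ReLU

Replace the activation function with Leaky ReLU, train the model, and observe the gradients. The code is as follows:

# Redefine the network with the Leaky ReLU activation function
model = Model_MLP_L5(input_size=2, output_size=1, act='lrelu', b_init=-8.0)
# Re-create the optimizer so that it updates the parameters of the new model
optimizer = torch.optim.SGD(model.parameters(), lr=lr)
# Instantiate the Runner class
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
# Start training; log_epochs is left at its default of 100, so the loss of epoch 0 is logged
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=1, save_path="best_model.pdparams",
             custom_print_log=custom_print_log)

Output: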

The gradient of the Layers:

name: fc1.weight grad_value: tensor([[-1.8774e-17, 2.5306e-18],

[-2.1469e-17, 2.8940e-18],

[ 6.5806e-18, -8.8704e-19]])

name: fc1.bias grad_value: tensor([-1.8644e-17, -2.1321e-17, 6.5351e-18])

name: fc2.weight grad_value: tensor([[ 3.9442e-14, 3.9619e-14, 3.9489e-14],

[-1.0858e-14, -1.0907e-14, -1.0871e-14],

[ 5.6145e-15, 5.6398e-15, 5.6213e-15]])

name: fc2.bias grad_value: tensor([-4.9409e-13, 1.3601e-13, -7.0334e-14])

name: fc3.weight grad_value: tensor([[-2.8899e-10, -2.8896e-10, -2.8899e-10],

[ 6.2649e-11, 6.2642e-11, 6.2648e-11],

[ 2.7410e-10, 2.7407e-10, 2.7410e-10]])

name: fc3.bias grad_value: tensor([ 3.6122e-09, -7.8308e-10, -3.4261e-09])

name: fc4.weight grad_value: tensor([[-5.2960e-07, -5.2960e-07, -5.2968e-07],

[-6.9280e-07, -6.9280e-07, -6.9289e-07],

[ 5.1386e-06, 5.1386e-06, 5.1392e-06]])

name: fc4.bias grad_value: tensor([ 6.6203e-06, 8.6604e-06, -6.4235e-05])

name: fc5.weight grad_value: tensor([[0.0406, 0.0406, 0.0406]])

name: fc5.bias grad_value: tensor([-0.5075])

[Evaluate] best accuracy performence has been updated: 0.00000 --> 0.51250

[Train] epoch: 0/1, loss: 4.06322717666626

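The output shows that after switching to Leaky ReLU the dying ReLU problem is alleviated: the gradients are no longer zero and the parameters can be updated. However, because the slope of Leaky ReLU for $x<0$ defaults to only 0.01, the gradient becomes smaller and smaller as it is propagated back through more layers. To improve this, increase the slope of Leaky ReLU for $x<0$.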
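The effect of the negative-side slope can be checked with the `negative_slope` argument of `F.leaky_relu`. The sketch below is illustrative only: the helper `grad_at`, the input value -2.0, the alternative slope 0.1 and the depth of 4 are assumptions for this example, and the per-layer product ignores the weight matrices.

```python
import torch
import torch.nn.functional as F


def grad_at(x_value, negative_slope):
    """Gradient of leaky_relu with the given slope, evaluated at x_value."""
    x = torch.tensor(x_value, requires_grad=True)
    y = F.leaky_relu(x, negative_slope=negative_slope)
    y.backward()
    return x.grad.item()


default_grad = grad_at(-2.0, 0.01)   # PyTorch's default slope
steeper_grad = grad_at(-2.0, 0.1)    # a larger slope for comparison
positive_grad = grad_at(2.0, 0.1)    # the positive side is unaffected

# Factor contributed by 4 hidden activations that are all in the negative region.
default_chain = default_grad ** 4
steeper_chain = steeper_grad ** 4

print(default_grad, steeper_grad, positive_grad, default_chain, steeper_chain)
```

One possible way to use a different slope in Model_MLP_L5 would be to assign `functools.partial(F.leaky_relu, negative_slope=0.1)` to `self.act` in the 'lrelu' branch; this is a suggestion, not something the original experiment did.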

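III. Summary

The main thing learned in this experiment is Leaky ReLU. It addresses the situation in which, after an improper parameter update, a ReLU neuron can no longer be activated on the training data and its gradient drops to 0, which makes the network hard to train. ELU is also said to converge faster in practice and can therefore speed up training, although it was not tested in this experiment.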