Training PyTorch on Your Own Dataset (Based on the 小土堆 / Xiaotudui Tutorials)

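After finishing Tudui's videos, I wanted to train a model on the ants-and-bees dataset from the start of the course. I searched online for a long time before I finally got it working.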

Importing and preparing the data

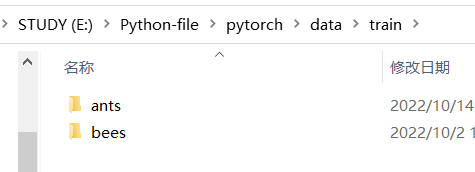

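My approach is to let the folder name act as the label: each folder holds all the training images of one class, as shown inside train in the figure. The test dataset is laid out the same way.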

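Next, load the data. First define the transforms, then load the images with ImageFolder, then wrap the dataset in a DataLoader. At this point the folder name determines the target: ImageFolder assigns each folder an integer class index, and that index is what the loader returns as the target.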

    import torch
    from torchvision import datasets, transforms

    data_transform = transforms.Compose([
        transforms.Resize(32),      # scale proportionally so the shorter side is 32
        transforms.CenterCrop(32),  # crop a 32x32 patch from the center
        transforms.ToTensor(),      # convert the image to a Tensor
    ])

    # Prepare the data
    train_data = datasets.ImageFolder(root=r"E:\Python-file\pytorch\data\train", transform=data_transform)
    test_data = datasets.ImageFolder(root=r"E:\Python-file\pytorch\data\val", transform=data_transform)

    # Dataset lengths
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print("Training set size: {}".format(train_data_size))
    print("Test set size: {}".format(test_data_size))

    # Wrap the datasets in DataLoaders
    train_dataloader = torch.utils.data.DataLoader(train_data, batch_size=32, shuffle=True)
    test_dataloader = torch.utils.data.DataLoader(test_data, batch_size=32, shuffle=True)
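
To make the folder-to-label step concrete, here is a minimal plain-Python sketch of the mapping rule, assuming that ImageFolder sorts the subfolder names and numbers them from 0 (this is what its `class_to_idx` attribute reports). The `ants` and `bees` folder names and the helper `folder_class_to_idx` are illustrative and are not part of the code above.

```python
import os
import tempfile


def folder_class_to_idx(root):
    """Map each subfolder of `root` to an integer label, in sorted name order."""
    classes = sorted(entry.name for entry in os.scandir(root) if entry.is_dir())
    return {name: index for index, name in enumerate(classes)}


# Build a throwaway directory tree shaped like data/train (hypothetical class names).
with tempfile.TemporaryDirectory() as root:
    for name in ("bees", "ants"):  # creation order does not matter, sorting does
        os.makedirs(os.path.join(root, name))
    mapping = folder_class_to_idx(root)

print(mapping)
```

With the real dataset, printing `train_data.class_to_idx` should show the equivalent mapping, and those integers are what arrive in `targets` during training.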

Full training code (based on Xiaotudui)

Because this is a two-class problem, the output size of the last linear layer in the network is changed to 2.
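
Only the last layer depends on the number of classes. The `64 * 4 * 4` input size of the first linear layer in the network comes from the image size instead. The sketch below walks through that arithmetic, assuming 32x32 inputs and the Conv2d(kernel 5, stride 1, padding 2) and MaxPool2d(2) settings used in the script; `conv_out` and `pool_out` are hypothetical helper names, not PyTorch functions.

```python
def conv_out(size, kernel, stride, padding):
    """Output side length of a convolution (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Output side length of max pooling whose stride equals its kernel size."""
    return (size - kernel) // kernel + 1


side = 32  # the transforms crop every image to 32x32
for _ in range(3):  # three Conv2d + MaxPool2d stages
    side = conv_out(side, kernel=5, stride=1, padding=2)  # padding 2 keeps the size
    side = pool_out(side, kernel=2)                       # pooling halves it

flattened = 64 * side * side  # 64 channels come out of the last convolution
print(side, flattened)
```

If the crop size in the transforms changes, this number changes with it, and the first `nn.Linear` has to be updated to match.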

    # Imports
    import torch
    from torch import nn
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter
    from torchvision import datasets, transforms

    # Define the model
    class Bao(nn.Module):
        def __init__(self):
            super(Bao, self).__init__()
            self.model = nn.Sequential(
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Flatten(),
                nn.Linear(64 * 4 * 4, 64),
                nn.Linear(64, 2)  # two outputs: one per class
            )

        def forward(self, x):
            x = self.model(x)
            return x

    bao = Bao()
    # bao = bao.cuda()

    data_transform = transforms.Compose([
        transforms.Resize(32),      # scale proportionally so the shorter side is 32
        transforms.CenterCrop(32),  # crop a 32x32 patch from the center
        transforms.ToTensor(),      # convert the image to a Tensor
    ])

    # Prepare the data
    train_data = datasets.ImageFolder(root=r"E:\Python-file\pytorch\data\train", transform=data_transform)
    test_data = datasets.ImageFolder(root=r"E:\Python-file\pytorch\data\val", transform=data_transform)

    # Dataset lengths
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print("Training set size: {}".format(train_data_size))
    print("Test set size: {}".format(test_data_size))

    # Wrap the datasets in DataLoaders
    train_dataloader = torch.utils.data.DataLoader(train_data, batch_size=32, shuffle=True)
    test_dataloader = torch.utils.data.DataLoader(test_data, batch_size=32, shuffle=True)

    # Loss function
    loss_fun = nn.CrossEntropyLoss()
    # loss_fun = loss_fun.cuda()

    # Optimizer
    learning_rate = 0.01
    optimizer = torch.optim.SGD(bao.parameters(), lr=learning_rate)

    # Training bookkeeping
    total_train_step = 0  # number of training steps so far
    total_test_step = 0   # number of test rounds so far
    epoch = 100           # number of epochs

    # TensorBoard writer
    writer = SummaryWriter("../logs")

    for i in range(epoch):
        print("----- Epoch {} training starts -----".format(i + 1))

        # Training phase
        bao.train()  # only matters for layers such as Dropout/BatchNorm, so optional here; see the official docs
        for data in train_dataloader:  # wrap the loader in tqdm to show a progress bar
            imgs, targets = data
            # imgs = imgs.cuda()
            # targets = targets.cuda()
            outputs = bao(imgs)
            loss = loss_fun(outputs, targets)

            # Let the optimizer update the model
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

            total_train_step += 1
            if total_train_step % 100 == 0:
                # .item() yields a plain number instead of a tensor
                print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
                writer.add_scalar("train_loss", loss.item(), total_train_step)

        # Test phase
        bao.eval()  # optional for this network, for the same reason as bao.train()
        total_test_loss = 0
        total_accuracy = 0  # number of correctly classified test images
        with torch.no_grad():
            for data in test_dataloader:
                imgs, targets = data
                # imgs = imgs.cuda()
                # targets = targets.cuda()
                outputs = bao(imgs)
                loss = loss_fun(outputs, targets)
                total_test_loss = total_test_loss + loss.item()
                # .item() keeps the running count a plain int
                accuracy = (outputs.argmax(1) == targets).sum().item()
                total_accuracy = total_accuracy + accuracy

        print(total_accuracy)
        print(test_data_size)
        print("Total loss on the test set: {}".format(total_test_loss))
        print("Accuracy on the test set: {}".format(total_accuracy / test_data_size))
        writer.add_scalar("test_loss", total_test_loss, total_test_step)
        writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
        total_test_step += 1

        # Save the whole model after every epoch
        torch.save(bao, "bao{}.pth".format(i))
        print("Model saved")

    writer.close()
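
The accuracy line in the test loop can be checked in isolation. The sketch below uses made-up logits and labels for a batch of four images (the numbers are illustrative and do not come from a real run) to show what `argmax(1)` and the comparison with `targets` produce.

```python
import torch

# Hypothetical network outputs for 4 images and 2 classes (one row per image).
outputs = torch.tensor([[2.0, 0.5],
                        [0.1, 1.2],
                        [0.3, 0.9],
                        [1.5, 1.0]])
targets = torch.tensor([0, 1, 0, 0])  # hypothetical true class indices

predictions = outputs.argmax(dim=1)               # index of the largest score in each row
correct = (predictions == targets).sum().item()   # number of matching predictions
accuracy = correct / len(targets)

print(predictions, correct, accuracy)
```

Summing `correct` over all test batches and dividing by the size of the test set gives the accuracy figure printed after each epoch.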

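The final result was not great: about 60% accuracy. I don't know whether the dataset is too small or whether the model's parameters need changing. There is always more to learn. My lousy dorm did not help: training on the GPU tripped the circuit breaker straight away.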

Validating on a single image

    import torch
    import torchvision.transforms
    from PIL import Image
    from torch import nn

    # Image path
    img_path = "./imgs/cans1.png"
    image = Image.open(img_path)
    print(image)

    # PNG images can have 4 channels (an extra alpha channel), so convert to 3-channel RGB
    image = image.convert('RGB')

    transform = torchvision.transforms.Compose([torchvision.transforms.Resize((32, 32)),
                                                torchvision.transforms.ToTensor()])
    image = transform(image)
    print(image.shape)

    # Load the model
    # The class must be defined here so that torch.load can unpickle the saved model.
    # Note: this example loads a three-class model, hence the 3 outputs in the last layer.
    class Bao(nn.Module):
        def __init__(self):
            super(Bao, self).__init__()
            self.model = nn.Sequential(
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Flatten(),
                nn.Linear(64 * 4 * 4, 64),
                nn.Linear(64, 3)
            )

        def forward(self, x):
            x = self.model(x)
            return x

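    # On PyTorch 2.6 and later, also pass weights_only=False, because torch.load
    # no longer unpickles whole saved models by default.
    model1 = torch.load("./0.62.pth", map_location=torch.device('cpu'))
    image = torch.reshape(image, (1, 3, 32, 32))  # add the batch dimension
    model1.eval()
    with torch.no_grad():
        output = model1(image)
    print(output)
    print(output.argmax(1))

    # Your own class labels, listed in class-index order
    target = ["can", "plastic bottle", "beer bottle"]
    print(target[output.argmax(1).item()])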
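
The `output` printed by the script is a row of unnormalized scores (logits), one per class, because `nn.CrossEntropyLoss` applies the softmax internally during training. The plain-Python sketch below shows how such scores can be turned into probabilities and a label. The logits are made up for illustration, the labels are English renderings of the three classes in the script, and `softmax` here is a hand-written helper rather than the PyTorch function.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


labels = ["can", "plastic bottle", "beer bottle"]
logits = [1.2, -0.3, 0.4]  # hypothetical scores for one image

probs = softmax(logits)
best = max(range(len(probs)), key=probs.__getitem__)  # same role as argmax(1)
print(labels[best], [round(p, 3) for p in probs])
```

The label list has to follow the class-index order used in training, which for ImageFolder means the sorted folder names.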