Dive into Deep Learning, Classic Convolutional Neural Networks: VGG

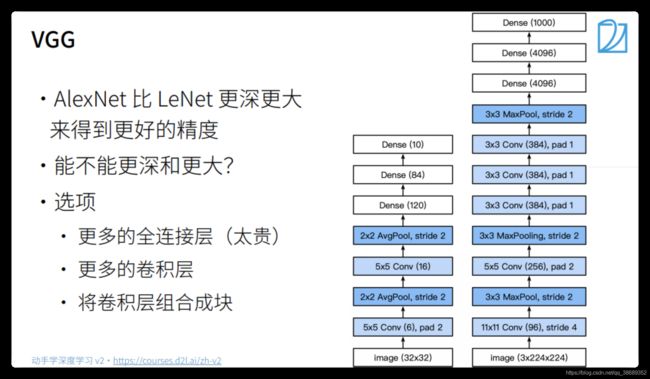

VGG

VGG block

- Deep vs. wide

  - 5 * 5 convolutions

  - 3 * 3 convolutions

  - Deep but narrow works better

- VGG block
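The deep-vs-wide comparison can be made concrete with a little arithmetic. The sketch below is not from the original slides: it assumes stride-1 convolutions with the same number of input and output channels (64 is an arbitrary illustrative value) and compares one 5 * 5 layer against two stacked 3 * 3 layers. The helper names `conv_params` and `receptive_field` are hypothetical.

```python
def conv_params(kernel_size, in_channels, out_channels):
    """Weights plus biases of a single 2D convolution layer."""
    return kernel_size * kernel_size * in_channels * out_channels + out_channels


def receptive_field(kernel_sizes):
    """Receptive field of a stack of stride-1 convolutions."""
    return 1 + sum(k - 1 for k in kernel_sizes)


channels = 64  # illustrative assumption, not a value from the slides

one_5x5 = conv_params(5, channels, channels)
two_3x3 = 2 * conv_params(3, channels, channels)

# Both options see the same 5 * 5 input window ...
print(receptive_field([5]), receptive_field([3, 3]))
# ... but the stacked 3 * 3 layers need fewer parameters.
print(one_5x5, two_3x3)
```

Under these assumptions the two stacked 3 * 3 layers cover the same 5 * 5 window with roughly 28% fewer parameters and one extra nonlinearity in between, which is one common explanation for why the deeper, narrower design tends to work better.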

VGG architecture

Progress

Summary

VGG code implementation

import torch
from torch import nn
from d2l import torch as d2l

# VGG block: num_convs 3x3 convolutions (padding 1, each followed by ReLU),
# then one 2x2 max-pooling layer with stride 2
def vgg_block(num_convs, in_channels, out_channels):
    layers = []
    for _ in range(num_convs):
        layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU())
        # Every convolution after the first maps out_channels -> out_channels
        in_channels = out_channels
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)
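To see why a block built this way halves the height and width, the standard output-size formula for convolution and pooling layers can be applied by hand. This is a minimal pure-Python sketch that does not call PyTorch; `conv_out` and `vgg_block_out` are hypothetical helper names introduced only for illustration.

```python
def conv_out(size, kernel_size, padding=0, stride=1):
    """Output height/width of a convolution or pooling layer."""
    return (size + 2 * padding - kernel_size) // stride + 1


def vgg_block_out(size, num_convs):
    """Spatial size after one VGG block, mirroring the layer settings in vgg_block."""
    for _ in range(num_convs):
        # 3x3 convolution with padding 1 keeps the spatial size unchanged
        size = conv_out(size, kernel_size=3, padding=1)
    # 2x2 max pooling with stride 2 halves the spatial size
    return conv_out(size, kernel_size=2, stride=2)


print(vgg_block_out(224, num_convs=1))
print(vgg_block_out(224, num_convs=2))
```

The number of convolutions in a block does not affect the spatial size; only the final pooling layer does.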

# VGG architecture: one (num_convs, out_channels) pair per VGG block
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

def vgg(conv_arch):
    conv_blks = []
    in_channel = 1  # single-channel (grayscale) input
    for (num_conv, out_channels) in conv_arch:
        conv_blks.append(vgg_block(num_conv, in_channel, out_channels))
        in_channel = out_channels
    return nn.Sequential(
        *conv_blks, nn.Flatten(),
        # Five blocks halve a 224 x 224 input five times, leaving 7 x 7 feature maps
        nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(),
        nn.Dropout(0.5), nn.Linear(4096, 4096), nn.ReLU(),
        nn.Dropout(0.5), nn.Linear(4096, 10)
    )

net = vgg(conv_arch)
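Two numbers in this definition can be checked directly from `conv_arch`: the layer count behind the name VGG-11, and the `7 * 7` in the first fully connected layer. The sketch below assumes the 224 * 224 input used in this post and counts only convolutional and fully connected layers as weight layers.

```python
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

# Weight layers: all convolutions plus the three fully connected layers
num_conv_layers = sum(num_convs for num_convs, _ in conv_arch)
num_fc_layers = 3
print(num_conv_layers + num_fc_layers)

# Each block halves height and width once, so five blocks divide by 2**5
input_size = 224  # assumed input resolution
final_size = input_size // 2 ** len(conv_arch)
final_channels = conv_arch[-1][1]
print(final_size, final_channels * final_size * final_size)
```

With 8 convolutional and 3 fully connected layers the network has 11 weight layers, and the flattened feature vector has 512 * 7 * 7 = 25088 entries.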

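X = torch.randn(size=(1, 1, 224, 224))

# The output below shows that every VGG block halves the height and width;
# the channel count doubles from 64 up to 512 and then stays at 512 in the last block
for blk in net:
    X = blk(X)
    print(blk.__class__.__name__, 'output shape:\t', X.shape)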

Sequential output shape: torch.Size([1, 64, 112, 112])

Sequential output shape: torch.Size([1, 128, 56, 56])

Sequential output shape: torch.Size([1, 256, 28, 28])

Sequential output shape: torch.Size([1, 512, 14, 14])

Sequential output shape: torch.Size([1, 512, 7, 7])

Flatten output shape: torch.Size([1, 25088])

Linear output shape: torch.Size([1, 4096])

ReLU output shape: torch.Size([1, 4096])

Dropout output shape: torch.Size([1, 4096])

Linear output shape: torch.Size([1, 4096])

ReLU output shape: torch.Size([1, 4096])

Dropout output shape: torch.Size([1, 4096])

Linear output shape: torch.Size([1, 10])
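The shapes above also hint at where the parameters sit. As a rough sketch, the count below is done by hand in pure Python under the same settings as the code in this post (one input channel, 3x3 convolutions with bias, 7 * 7 final feature maps, 10 classes); `count_vgg_params` is a hypothetical helper, not part of d2l or PyTorch.

```python
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))


def count_vgg_params(conv_arch, in_channels=1, final_hw=7, num_classes=10):
    """Return (conv_params, fc_params), counting weights and biases."""
    conv_total = 0
    for num_convs, out_channels in conv_arch:
        for _ in range(num_convs):
            conv_total += 3 * 3 * in_channels * out_channels + out_channels
            in_channels = out_channels
    # Fully connected part: flattened features -> 4096 -> 4096 -> num_classes
    fc_sizes = [in_channels * final_hw * final_hw, 4096, 4096, num_classes]
    fc_total = sum(a * b + b for a, b in zip(fc_sizes, fc_sizes[1:]))
    return conv_total, fc_total


conv_total, fc_total = count_vgg_params(conv_arch)
print(conv_total, fc_total, conv_total + fc_total)
```

Under these assumptions the three fully connected layers hold well over 90% of the parameters, most of them in the first `Linear(25088, 4096)` layer.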

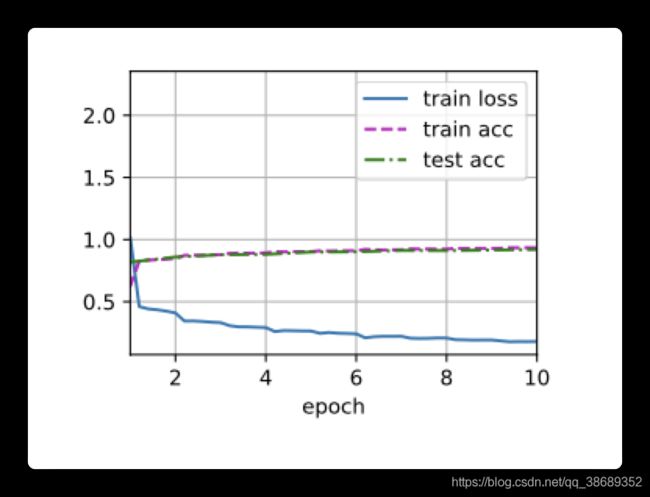

# VGG-11 is more computationally expensive than AlexNet,
# so we build a network with fewer channels for training
ratio = 4
small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
net = vgg(small_conv_arch)

lr, num_epochs, batch_size = 0.05, 10, 128
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
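For reference, the list comprehension that builds `small_conv_arch` can be evaluated on its own. This sketch only repeats that one line in isolation; it keeps the number of convolutions per block and divides every channel count by `ratio`.

```python
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))
ratio = 4

# Same block layout, but every channel count is divided by ratio
small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
print(small_conv_arch)

# The first fully connected layer shrinks accordingly
print(small_conv_arch[-1][1] * 7 * 7)
```

The reduced network therefore uses 16, 32, 64, 128 and 128 channels, and the flattened feature vector drops from 25088 to 6272 entries.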

Training on Colab

import torch
import time
from torch import nn
from d2l import torch as d2l

# VGG block
def vgg_block(num_convs, in_channels, out_channels):
    layers = []
    for _ in range(num_convs):
        layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU())
        in_channels = out_channels
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)

# VGG architecture
conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

def vgg(conv_arch):
    conv_blks = []
    in_channel = 1
    for (num_conv, out_channels) in conv_arch:
        conv_blks.append(vgg_block(num_conv, in_channel, out_channels))
        in_channel = out_channels
    return nn.Sequential(
        *conv_blks, nn.Flatten(),
        nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(),
        nn.Dropout(0.5), nn.Linear(4096, 4096), nn.ReLU(),
        nn.Dropout(0.5), nn.Linear(4096, 10)
    )

# VGG-11 is more computationally expensive than AlexNet,
# so we build a network with fewer channels for training
ratio = 4
small_conv_arch = [(pair[0], pair[1] // ratio) for pair in conv_arch]
net = vgg(small_conv_arch)

# Measure wall-clock time for data loading plus training
start = time.time()
lr, num_epochs, batch_size = 0.05, 10, 128
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
end = time.time()
print(f"time: {end - start}")

loss 0.180, train acc 0.933, test acc 0.920

388.2 examples/sec on cuda:0

time: 1701.2966752052307
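The two timing figures in this log can be cross-checked with a rough calculation. The sketch below rests on two assumptions that the log does not state: that the reported throughput covers only the training batches, and that each of the 60,000 Fashion-MNIST training images is processed once per epoch.

```python
train_examples = 60_000            # Fashion-MNIST training set size
num_epochs = 10                    # from the script above
examples_per_sec = 388.2           # from the log above
total_seconds = 1701.2966752052307 # from the log above

# Estimated time spent in training batches alone
train_seconds = train_examples * num_epochs / examples_per_sec
# Remainder: data loading, per-epoch evaluation, plotting, and other overhead
overhead_seconds = total_seconds - train_seconds
print(f"{train_seconds:.1f} s training, {overhead_seconds:.1f} s other")
```

If those assumptions hold, about 1546 of the 1701 seconds go to the training batches themselves, and the remaining two and a half minutes or so to everything else the timer wraps.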