SVM算法

目录

一、SVM算法介绍

二、例子代码

三、总结

四、参考资料

一、SVM算法介绍

支持向量机(support vector machines, SVM)是一种二分类模型,它的基本模型是定义在特征空间上的间隔最大的线性分类器,间隔最大使它有别于感知机;SVM还包括核技巧,这使它成为实质上的非线性分类器。SVM的的学习策略就是间隔最大化,可形式化为一个求解凸二次规划的问题,也等价于正则化的合页损失函数的最小化问题。SVM的的学习算法就是求解凸二次规划的最优化算法。SVM的 算法核心是 找到几何间距, 找到几何间距margin,处理线性可分问题,对应的非线性问题处理方法是:非线性VM,由于前面我已经讲解过SVM算法,这里不过多介绍。

二、例子代码

import matplotlib.pyplot as plt

import numpy as np

from sklearn import datasets

from sklearn.preprocessing import StandardScaler

from sklearn.svm import LinearSVC

iris=datasets.load_iris()

X=iris.data

y=iris.target

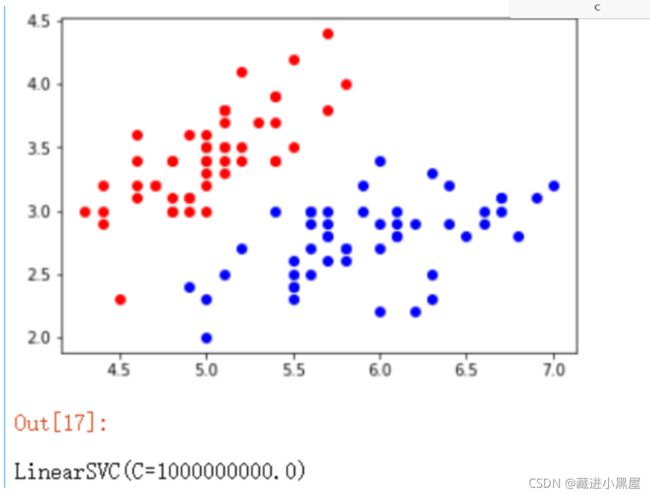

X=X[y< 2,:2]#只取y<2的类别,也就是0 1 并且只取前两个特征

y=y[y< 2]# 只取y<2的类别

# 分别画出类别0和1的点

plt.scatter(X[y==0,0],X[y==0,1],color='red')

plt.scatter(X[y==1,0],X[y==1,1],color='blue')

plt.show()

# 标准化

standardScaler=StandardScaler()

standardScaler.fit(X)#计算训练数据的均值和方差

X_standard=standardScaler.transform(X)#再用scaler中的均值和方差来转换X,使X标准化

svc=LinearSVC(C=1e9)#线性SVM分类器

svc.fit(X_standard,y)#训练svm

import matplotlib.pyplot as plt

import numpy as np

import sklearn

from sklearn import datasets

from sklearn.preprocessing import StandardScaler

from sklearn.svm import LinearSVC

iris=datasets.load_iris()

X=iris.data

y=iris.target

X=X[y<2,:2]#只取y<2的类别,也就是0 1 并且只取前两个特征

y=y[y<2]# 只取y<2的类别

# 分别画出类别0和1的点

plt.scatter(X[y==0,0],X[y==0,1],color='red')

plt.scatter(X[y==1,0],X[y==1,1],color='blue')

plt.show()

standardScaler=StandardScaler()

standardScaler.fit(X)#计算训练数据的均值和方差

X_standard=standardScaler.transform(X)#再用scaler中的均值和方差来转换X,使X标准化

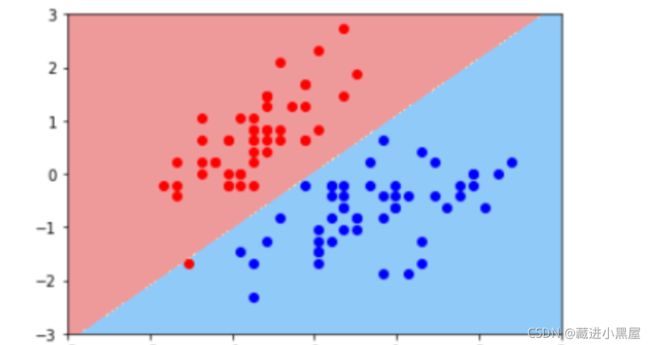

svc2=LinearSVC(C=0.01)#分类器

svc2.fit(X_standard,y)

plot_decision_boundary(svc2,axis=[-3,3,-3,3])# x,y轴都在-3到3之间

#绘制原始数据

plt.scatter(X_standard[y==0,0],X_standard[y==0,1],color='red')

plt.scatter(X_standard[y==1,0],X_standard[y==1,1],color='blue')

plt.show()svc2=LinearSVC(C=0.01)

svc2.fit(X_standard,y)

plot_decision_boundary(svc2,axis=[-3,3,-3,3])# x,y轴都在-3到3之间

# 绘制原始数据

plt.scatter(X_standard[y==0,0],X_standard[y==0,1],color='red')

plt.scatter(X_standard[y==1,0],X_standard[y==1,1],color='blue')

plt.show()

# 接下来我们看下如何处理非线性的数据。

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

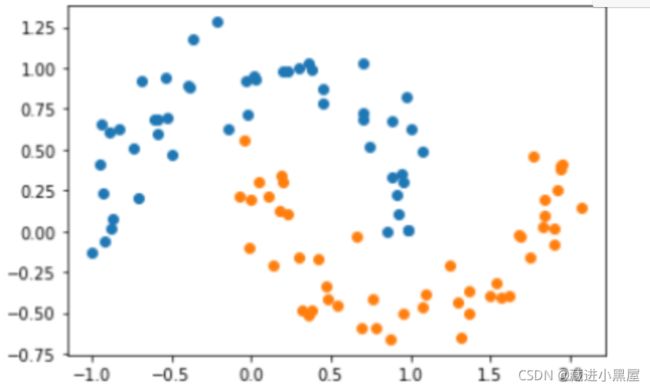

X, y = datasets.make_moons() #使用生成的数据

print(X.shape) # (100,2)

print(y.shape) # (100,)

# 接下来绘制下生成的数据

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()X, y = datasets.make_moons(noise=0.15,random_state=777)

#随机生成噪声点,random_state是随机种子,noise是方差

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

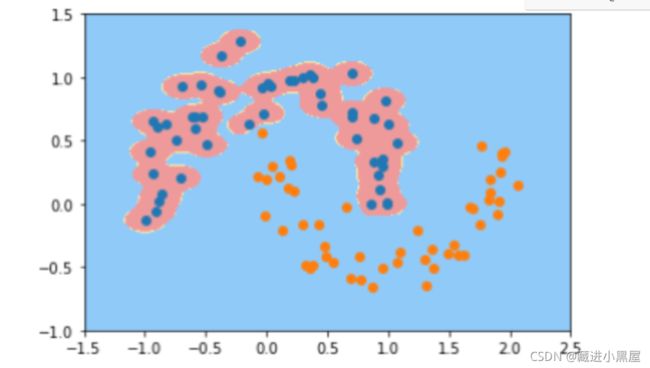

from sklearn.preprocessing import PolynomialFeatures,StandardScaler

from sklearn.svm import LinearSVC

from sklearn.pipeline import Pipeline

def PolynomialSVC(degree,C=1.0):

return Pipeline([ ("poly",PolynomialFeatures(degree=degree)),#生成多项式

("std_scaler",StandardScaler()),#标准化

("linearSVC",LinearSVC(C=C))#最后生成svm

])

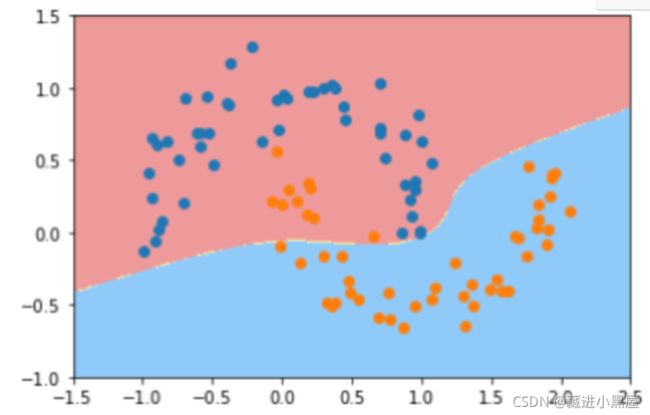

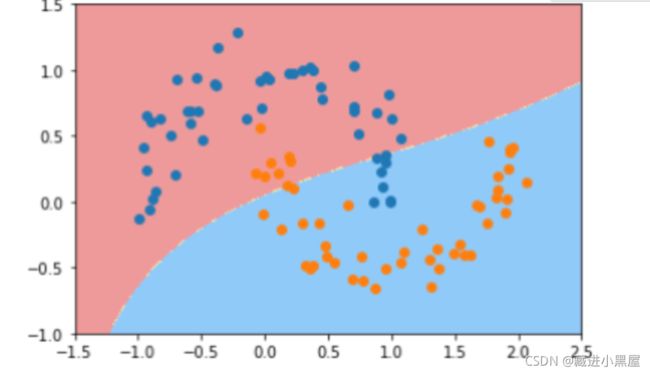

poly_svc = PolynomialSVC(degree=3)

poly_svc.fit(X,y)

plot_decision_boundary(poly_svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

from sklearn.svm import SVC

def PolynomialKernelSVC(degree,C=1.0):

return Pipeline([ ("std_scaler",StandardScaler()),

("kernelSVC",SVC(kernel="poly"))# poly代表多项式特征

])

poly_kernel_svc = PolynomialKernelSVC(degree=3)

poly_kernel_svc.fit(X,y)

plot_decision_boundary(poly_kernel_svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()import numpy as np

import matplotlib.pyplot as plt

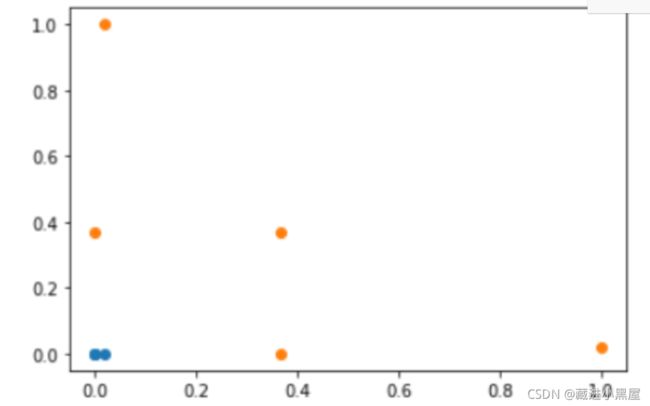

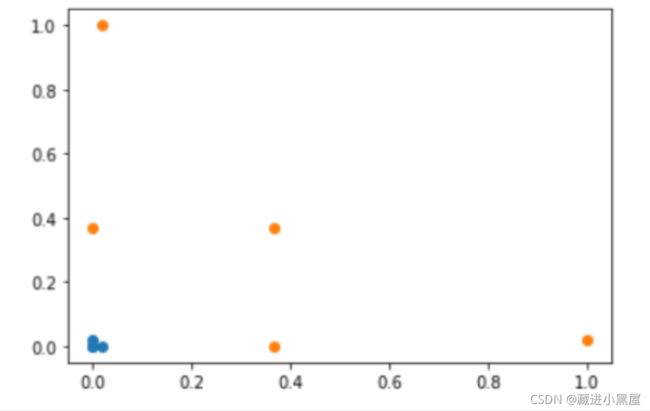

x = np.arange(-4,5,1)

#生成测试数据

y = np.array((x >= -2 ) & (x 2),dtype='int')

plt.scatter(x[y==0],[0]*len(x[y==0]))

# x取y=0的点, y取0,有多少个x,就有多少个y

plt.scatter(x[y==1],[0]*len(x[y==1]))

plt.show()

# 高斯核函数

def gaussian(x,l):

gamma = 1.0

return np.exp(-gamma * (x -l)**2)

l1,l2 = -1,1

X_new = np.empty((len(x),2))#len(x) ,2

for i,data in enumerate(x):

X_new[i,0] = gaussian(data,l1)

X_new[i,1] = gaussian(data,l2)

plt.scatter(X_new[y==0,0],X_new[y==0,1])

plt.scatter(X_new[y==1,0],X_new[y==1,1])

plt.show()

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

X,y = datasets.make_moons(noise=0.15,random_state=777)

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.pipeline import Pipeline

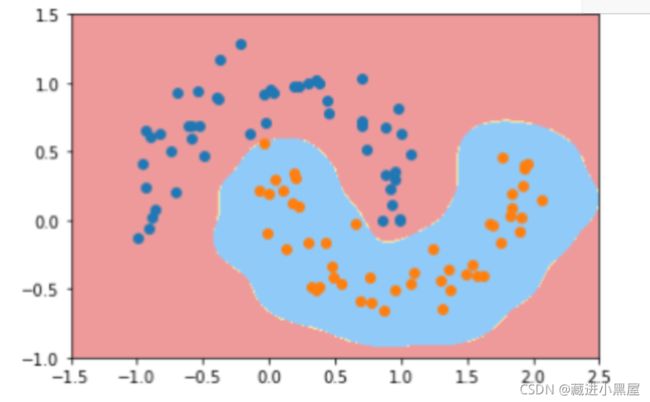

def RBFKernelSVC(gamma=1.0):

return Pipeline([ ('std_scaler',StandardScaler()), ('svc',SVC(kernel='rbf',gamma=gamma)) ])

svc = RBFKernelSVC()

svc.fit(X,y)

plot_decision_boundary(svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.pipeline import Pipeline

def RBFKernelSVC(gamma=100):

return Pipeline([ ('std_scaler',StandardScaler()), ('svc',SVC(kernel='rbf',gamma=gamma)) ])

svc = RBFKernelSVC()

svc.fit(X,y)

plot_decision_boundary(svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.pipeline import Pipeline

def RBFKernelSVC(gamma=10):

return Pipeline([ ('std_scaler',StandardScaler()), ('svc',SVC(kernel='rbf',gamma=gamma)) ])

svc = RBFKernelSVC()

svc.fit(X,y)

plot_decision_boundary(svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.pipeline import Pipeline

def RBFKernelSVC(gamma=0.1):

return Pipeline([ ('std_scaler',StandardScaler()), ('svc',SVC(kernel='rbf',gamma=gamma)) ])

svc = RBFKernelSVC()

svc.fit(X,y)

plot_decision_boundary(svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

boston = datasets.load_boston()

X = boston.data

y = boston.target

from sklearn.model_selection import train_test_split

X_train,X_test,y_train,y_test = train_test_split(X,y,random_state=777)

# 把数据集拆分成训练数据和测试数据

from sklearn.svm import LinearSVR

from sklearn.svm import SVR

from sklearn.preprocessing import StandardScaler

def StandardLinearSVR(epsilon=0.1):

return Pipeline([ ('std_scaler',StandardScaler()), ('linearSVR',LinearSVR(epsilon=epsilon)) ])

svr = StandardLinearSVR()

svr.fit(X_train,y_train)

svr.score(X_test,y_test)

三、总结

SVM算法有很重要的意义它有很多优点,在高维空间有效,在维度数量大于样本数量的情况下仍然有效。仅用支持向量即训练点的小部分子集,节省空间。多功能,可以为决策功能指定不同的核函数。学习这个算法很有用。

四、参考资料

SVM深入理解:解决线性不可分类时,对特征集进行多项式、核函数转换将其转换为线性可分类问题 线性判别准则与线性分类编程实践

[SVM算法补充_一只特立独行的猪️的博客-CSDN博客_svm模式]: