pytorch教程 (二) -- 处理数据

处理数据

- 1.加载系统数据集

- 2.创建自定义数据集

- 3.迭代和可视化数据集

- 4.DataLoaders为模型处理数据

- 5.通过DataLoader迭代

1.加载系统数据集

系统数据集可以从torchVision 中加载获取,

这里以 Fashion-MNIST 数据集的示例。Fashion-MNIST是Zalando文章图像的数据集,由6万个训练样本和10,000个样本组成。每个样本包括一个28×28灰度图像和来自10个类之一的相关标签

参数说明:

- root:指定数据集下载的路径

- train:指导下载的是训练集还是测试机,train=True为训练集,train=False为测试集

- download:download=True表示下载数据集,download=False表示不下载(如果下载了一次,程序为自动跳过下载)

- transform:指定数据集的数据转换(包含数据正则化,归一化,或者是图像旋转、图像灰度化等)

- ToTensor:将PIL图像或NumPy ndarray转换为Tensor。并将图像的像素值缩放到[0,1]范围内

import torch

from torch.utils.data import Dataset

from torchvision import datasets

from torchvision.transforms import ToTensor

import matplotlib.pyplot as plt

training_data = datasets.FashionMNIST(

root="data",

train=True,

download=True,

transform=ToTensor()

)

test_data = datasets.FashionMNIST(

root="data",

train=False,

download=True,

transform=ToTensor()

)

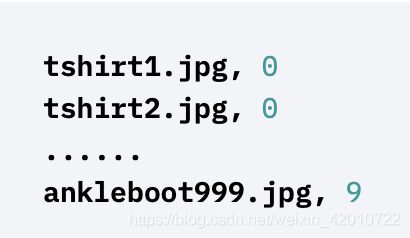

2.创建自定义数据集

自定义数据集,首先先规定数据集需要的相关文件,以及文件的存储位置。

import os

import pandas as pd

from torchvision.io import read_image

class CustomImageDataset(Dataset):

def __init__(self, annotations_file, img_dir, transform=None, target_transform=None):

self.img_labels = pd.read_csv(annotations_file)

self.img_dir = img_dir

self.transform = transform

self.target_transform = target_transform

def __len__(self):

return len(self.img_labels)

def __getitem__(self, idx):

img_path = os.path.join(self.img_dir, self.img_labels.iloc[idx, 0])

image = read_image(img_path)

label = self.img_labels.iloc[idx, 1]

if self.transform:

image = self.transform(image)

if self.target_transform:

label = self.target_transform(label)

return image, label

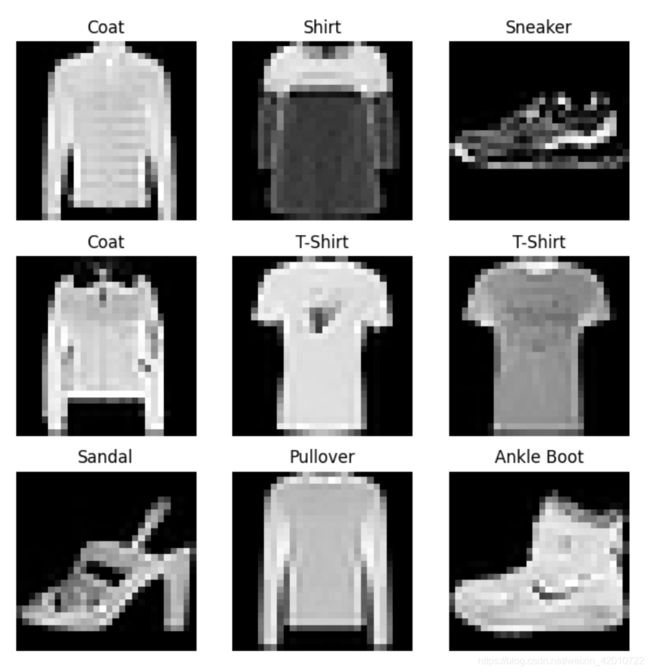

3.迭代和可视化数据集

labels_map = {

0: "T-Shirt",

1: "Trouser",

2: "Pullover",

3: "Dress",

4: "Coat",

5: "Sandal",

6: "Shirt",

7: "Sneaker",

8: "Bag",

9: "Ankle Boot",

}

figure = plt.figure(figsize=(8, 8))

cols, rows = 3, 3

for i in range(1, cols * rows + 1):

sample_idx = torch.randint(len(training_data), size=(1,)).item()

img, label = training_data[sample_idx]

figure.add_subplot(rows, cols, i)

plt.title(labels_map[label])

plt.axis("off")

plt.imshow(img.squeeze(), cmap="gray")

plt.show()

这里的img.squeeze()函数解释一下:

原始的img.shape为(H,W,1)

squeeze()函数的功能是:从矩阵shape中,去掉维度为1的。例如一个矩阵是的shape是(5, 1),使用过这个函数后,结果为(5,),因此img.squeeze() 对应的shape为(H,W)

4.DataLoaders为模型处理数据

DataLoader是一个迭代器,将数据集分成相同小批量,且每个小批量中样本,对应着样本的索引、数据和标签。

参数说明:

- batch_size:每个批次的样本个数

- shuffle:是否打乱数据集顺序

- num_workers:使用几个线程来处理这些数据,线程越多处理速度越快

from torch.utils.data import DataLoader

train_dataloader = DataLoader(training_data, batch_size=64, shuffle=True,num_workers=2)

test_dataloader = DataLoader(test_data, batch_size=64, shuffle=True,num_workers=2)

5.通过DataLoader迭代

# Display image and label.

train_features, train_labels = next(iter(train_dataloader))

print(f"Feature batch shape: {train_features.size()}")

print(f"Labels batch shape: {train_labels.size()}")

img = train_features[0].squeeze()

label = train_labels[0]

plt.imshow(img, cmap="gray")

plt.show()

print(f"Label: {label}")