Pytorch实战2——卷积神经网络对MNIST数据集分类

目录

一、卷积神经网络简介

二、CNN编程对mnist数据集分类

1. 导入相关的模块

2. 准备数据集,训练、测试数据集

3. 设计模型

4. 构建损失函数和优化器

5. 训练

6. 测试

7. 训练结果:

一、卷积神经网络简介

输入图像n*5*5 经过 1个 n*3*3的卷积核,得到 1*3*3的输出

输入图像n*5*5 经过 1个 n*3*3的卷积核,得到 1*3*3的输出

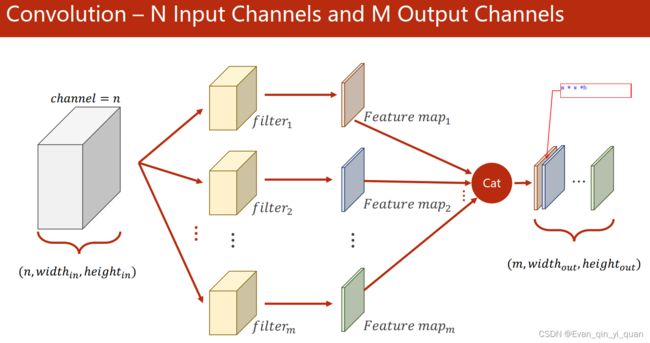

输入n*wi*hi的输入 经过 m个n*3*3的卷积核,得到 m*wo*ho的输出,m个特征图按照channel的方向拼接起来。

二、CNN编程对mnist数据集分类

1. 导入相关的模块

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim2. 准备数据集,训练、测试数据集

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307, ), (0.3081, ))

])

train_dataset = datasets.MNIST(root='../dataset/mnist/',train=True,download=True,transform=transform)

train_loader = DataLoader(train_dataset,shuffle=True,batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist/',train=False,download=True,transform=transform)

test_loader = DataLoader(test_dataset,shuffle=False,batch_size=batch_size)3. 设计模型

#卷积神经网络模型,卷积、池化、激活,卷积、池化、激活,reshape、全连接输出

class Net(torch.nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)#输入图像通道数,卷积输出的通道数,卷积核尺寸,默认stride = 1

self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)#填充层默认 padding=0

self.pooling = torch.nn.MaxPool2d(2)

# batch*20*10*10

self.fc = torch.nn.Linear(320, 10)

def forward(self, x):

# Flatten data from (n, 1, 28, 28) to (n, 784)

batch_size = x.size(0) #x是4维的,size(0)表示batch_size

#输入 batch*1*28*28

x = F.relu(self.poo ling(self.conv1(x)))# 输出 batch*10*12*12

x = F.relu(self.pooling(self.conv2(x)))# batch*20*4*4

x = x.view(batch_size, -1) # flatten, -1表示自动计算列数,行数固定为batch_size

# x batch*320 #(x.size(0),-1)将tensor的结构转换为了(batchsize, channels*w*h),即将(channels,w,h)拉直

x = self.fc(x) #最后输出不做激活,在交叉熵损失函数里做了

return x

model = Net()4. 构建损失函数和优化器

#构建损失函数和优化器

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)5. 训练

#训练

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

# forward + backward + update

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.06. 测试

#测试

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

# print(total) # 10000个测试样本

print('Accuracy on test set: %d %%' % (100 * correct / total))if __name__ == '__main__':

for epoch in range(2):

train(epoch)

test()7. 训练结果:

[1, 300] loss: 0.628

[1, 600] loss: 0.188

[1, 900] loss: 0.147

Accuracy on test set: 96 %

[2, 300] loss: 0.109

[2, 600] loss: 0.094

[2, 900] loss: 0.092

Accuracy on test set: 97 %