Implementing DenseNet in PyTorch


Key characteristics of DenseNet:

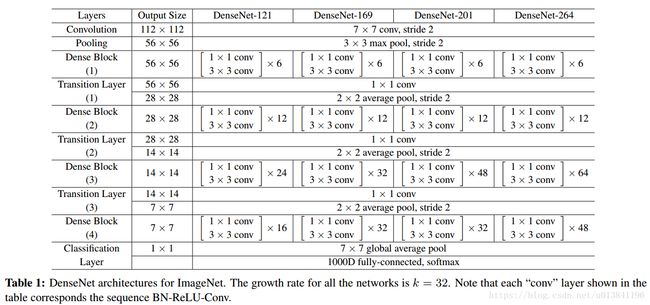

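(1) Neural networks generally use pooling to shrink the feature maps and extract semantic features. Dense connectivity, however, requires every feature map inside a block to have the same spatial size so that they can be concatenated. DenseNet is therefore divided into several Dense Blocks, typically 4, with the downsampling placed between blocks rather than inside them.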

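(2) The part between two adjacent Dense Blocks is called a Transition layer. It consists of BN, ReLU, a 1x1 convolution, and 2x2 average pooling. The 1x1 convolution reduces the number of channels, which compresses the model, while the average pooling reduces the spatial size of the feature maps.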
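The two points above can be illustrated with a minimal sketch. The `Transition` class below is a hypothetical illustration and is not part of the code in this post; its name, its argument names, and the choice to halve the channel count (96 to 48) are assumptions made for the example. It shows that feature maps of equal spatial size concatenate along the channel dimension, and that a transition layer then reduces both the channel count and the spatial size.

```python
import torch
from torch import nn
import torch.nn.functional as F


class Transition(nn.Module):
    """Hypothetical Transition layer: BN -> ReLU -> 1x1 conv -> 2x2 average pooling."""

    def __init__(self, n_channels, n_out_channels):
        super().__init__()
        self.bn = nn.BatchNorm2d(n_channels)
        # The 1x1 convolution reduces the number of channels.
        self.conv = nn.Conv2d(n_channels, n_out_channels, kernel_size=1, bias=False)

    def forward(self, x):
        out = self.conv(F.relu(self.bn(x)))
        # 2x2 average pooling halves the height and the width.
        return F.avg_pool2d(out, 2)


# Feature maps with the same height and width can be concatenated along channels.
x = torch.randn(1, 64, 8, 8)
new_features = torch.randn(1, 32, 8, 8)
concatenated = torch.cat((x, new_features), 1)
print(concatenated.shape)  # torch.Size([1, 96, 8, 8])

# The transition layer changes the channel count and the spatial size
# (the 96 -> 48 reduction is an assumed setting for this example).
transition = Transition(96, 48)
reduced = transition(concatenated)
print(reduced.shape)  # torch.Size([1, 48, 4, 4])
```

Because `reduced` is 4x4 while the earlier feature maps are 8x8, it could not be concatenated with them, which is why the pooling sits between Dense Blocks.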

DenseNet architecture diagram:

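Four advantages of the network:

- Alleviates the vanishing-gradient problem

- Improves feature propagation

- Improves feature reuse

- Reduces the number of parameters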

PyTorch implementation:

    import torch
    from torch import nn
    import torch.nn.functional as F

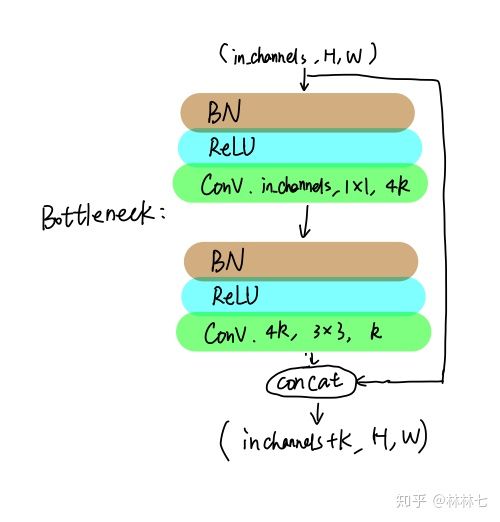

    # Bottleneck layer; initialized with the input channel count and the growth rate
    class Bottleneck(nn.Module):
        def __init__(self, nChannels, growthRate):
            super(Bottleneck, self).__init__()
            # The 1x1 convolution usually outputs 4x growthRate channels
            interChannels = 4 * growthRate
            self.bn1 = nn.BatchNorm2d(nChannels)
            self.conv1 = nn.Conv2d(nChannels, interChannels, kernel_size=1, bias=False)
            self.bn2 = nn.BatchNorm2d(interChannels)
            self.conv2 = nn.Conv2d(interChannels, growthRate, kernel_size=3, padding=1, bias=False)

        def forward(self, x):
            out = self.conv1(F.relu(self.bn1(x)))
            out = self.conv2(F.relu(self.bn2(out)))
            # Concatenate the input x with the computed result out along the channel dimension
            out = torch.cat((x, out), 1)
            return out

    class Denseblock(nn.Module):
        def __init__(self, nChannels, growthRate, nDenseBlocks):
            super(Denseblock, self).__init__()
            layers = []
            # Chain the Bottleneck layers with nn.Sequential(); the input channel
            # count grows linearly by growthRate per layer
            for i in range(int(nDenseBlocks)):
                layers.append(Bottleneck(nChannels, growthRate))
                nChannels += growthRate
            self.denseblock = nn.Sequential(*layers)

        def forward(self, x):
            return self.denseblock(x)

    # .cuda() requires a CUDA-capable GPU
    module = Denseblock(64, 32, 6).cuda()

Structure of module:

    Denseblock(
      (denseblock): Sequential(
        (0): Bottleneck(
          (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(64, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
        (1): Bottleneck(
          (bn1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(96, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
        (2): Bottleneck(
          (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
        (3): Bottleneck(
          (bn1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(160, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
        (4): Bottleneck(
          (bn1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(192, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
        (5): Bottleneck(
          (bn1): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv1): Conv2d(224, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
        )
      )
    )
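The input channel counts in this printout (64, 96, 128, 160, 192, 224) follow from the growth rate: each Bottleneck concatenates 32 new channels onto its input. As a quick check, here is a minimal pure-Python sketch of that bookkeeping. The helper name `dense_block_channels` is hypothetical and not part of the code above.

```python
def dense_block_channels(n_channels, growth_rate, n_layers):
    """Return each layer's input channel count and the block's output channel count."""
    input_channels = []
    for _ in range(n_layers):
        input_channels.append(n_channels)
        # Each Bottleneck concatenates growth_rate new channels onto its input.
        n_channels += growth_rate
    return input_channels, n_channels


input_channels, out_channels = dense_block_channels(64, 32, 6)
print(input_channels)  # [64, 96, 128, 160, 192, 224]
print(out_channels)    # 256
```

The block therefore outputs 64 + 6 * 32 = 256 channels, which is the channel count a following Transition layer would receive.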