MindArmour 使用

文章首发于笔者华为云,欢迎关注MindArmour 使用(万字详解,gitee同步发表)_MindSpore_昇腾论坛_华为云论坛

配置环境:CPU环境

首先下载mindspore,参考官网[MindSpore官网]

安装MindArmour

确认系统环境信息

-

硬件平台为Ascend、GPU或CPU。

-

参考MindSpore安装指南,完成MindSpore的安装。 MindArmour与MindSpore的版本需保持一致。

-

其余依赖请参见setup.py。

安装方式

可以采用pip安装或者源码编译安装两种方式。

pip安装

pip install https://ms-release.obs.cn-north-4.myhuaweicloud.com/{version}/MindArmour/any/mindarmour-{version}-py3-none-any.whl --trusted-host ms-release.obs.cn-north-4.myhuaweicloud.com -i https://pypi.tuna.tsinghua.edu.cn/simple

在联网状态下,安装whl包时会自动下载MindArmour安装包的依赖项(依赖项详情参见setup.py),其余情况需自行安装。

{version}表示MindArmour版本号,例如下载1.3.0版本MindArmour时,{version}应写为1.3.0。

源码安装

-

从Gitee下载源码。

git clone https://gitee.com/mindspore/mindarmour.git

-

在源码根目录下,执行如下命令编译并安装MindArmour。

cd mindarmour python setup.py install

验证是否成功安装

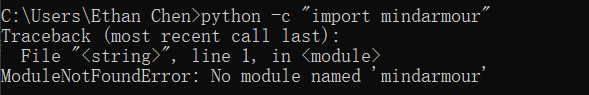

执行如下命令,如果没有报错No module named 'mindarmour',则说明安装成功。

python -c 'import mindarmour'

具体操作如下:

如图,最开始没有安装,显示没有mindarmour库

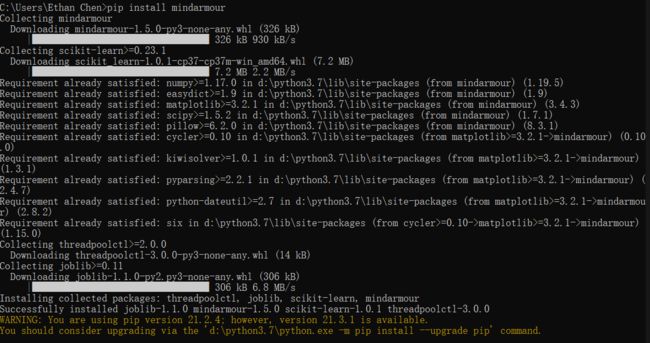

pip命令直接安装。

输入enter之后,没有错误报告,安装正确。

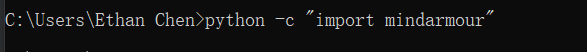

进入python环境,安装正确。

那我们跑一下测试玩玩。

使用NAD算法提升模型安全性

![]()

![]()

![]()

![]()

开始

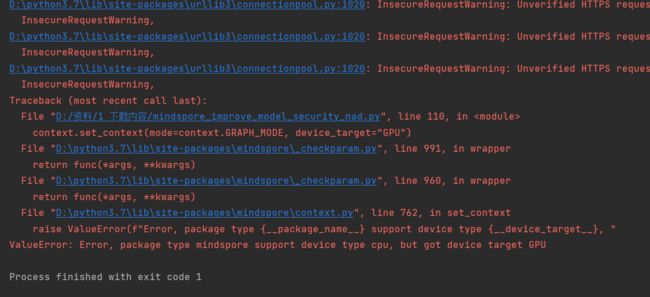

刚一开始就报错啦。没事,我们看看信息。

貌似这,暂时CPU还跑不了。

“got device target GPU”。但是仔细分析,我们发现前面这句“support type cpu”。

我们再结合报错信息,只用修改代码中的target即可。

MindSpore的兼容性还是很强的,

稍微调试就好。

果不其然,搞成了target="CPU"就可以了

这就真不错。

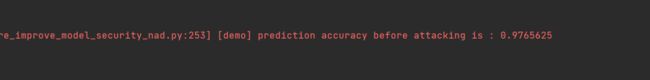

经过三轮训练,精确度已经达到97%了

GPU上演示

还没玩够,那我们在gpu上再玩一遍

(差点都忘了自己创建的环境叫什么了,原来叫mindspore1.5-gpu)

遇见的一些问题

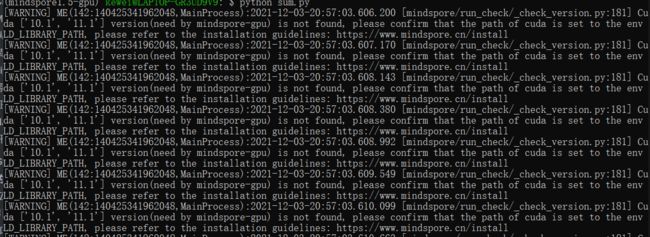

GPU运行armour

运行的时候,莫名奇妙出了些小故障,难道python命令出问题了?

原来是c盘满了,我把cuda卸了。看来寒假得重新加一块存储卡...那寒假再跟大家写gpu版本的吧。

完整演示

pycharm加装jupyter

1、安装Jupyter pip install jupyter

2、安装pycharm专业版,然后开始

建立被攻击模型

以MNIST为示范数据集,自定义的简单模型作为被攻击模型。

引入相关包

import os

import numpy as np

from scipy.special import softmax

from mindspore import dataset as ds

from mindspore import dtype as mstype

import mindspore.dataset.vision.c_transforms as CV

import mindspore.dataset.transforms.c_transforms as C

from mindspore.dataset.vision import Inter

import mindspore.nn as nn

from mindspore.nn import SoftmaxCrossEntropyWithLogits

from mindspore.common.initializer import TruncatedNormal

from mindspore import Model, Tensor, context

from mindspore.train.callback import LossMonitor

from mindarmour.adv_robustness.attacks import FastGradientSignMethod

from mindarmour.utils import LogUtil

from mindarmour.adv_robustness.evaluations import AttackEvaluate

context.set_context(mode=context.GRAPH_MODE, device_target="Ascend")

LOGGER = LogUtil.get_instance()

LOGGER.set_level("INFO")

TAG = 'demo'

下载文件的时候,会报不信任http,没关系,不用管。

注意,在CPU上运行,设置为target="CPU"

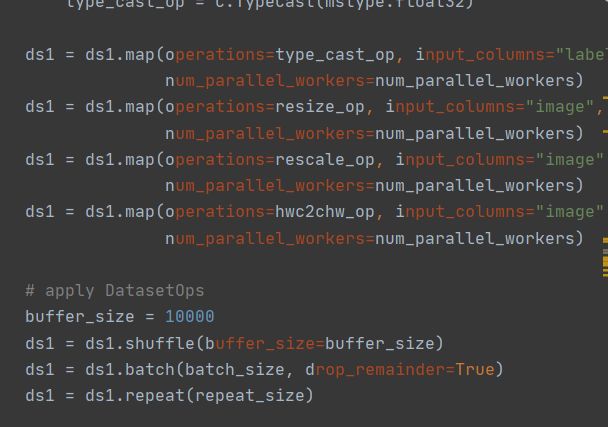

加载数据集

利用MindSpore的dataset提供的MnistDataset接口加载MNIST数据集。

# generate dataset for train of test def generate_mnist_dataset(data_path, batch_size=32, repeat_size=1, num_parallel_workers=1, sparse=True): """ create dataset for training or testing """ # define dataset ds1 = ds.MnistDataset(data_path) # define operation parameters resize_height, resize_width = 32, 32 rescale = 1.0 / 255.0 shift = 0.0 # define map operations resize_op = CV.Resize((resize_height, resize_width), interpolation=Inter.LINEAR) rescale_op = CV.Rescale(rescale, shift) hwc2chw_op = CV.HWC2CHW() type_cast_op = C.TypeCast(mstype.int32) # apply map operations on images if not sparse: one_hot_enco = C.OneHot(10) ds1 = ds1.map(operations=one_hot_enco, input_columns="label", num_parallel_workers=num_parallel_workers) type_cast_op = C.TypeCast(mstype.float32) ds1 = ds1.map(operations=type_cast_op, input_columns="label", num_parallel_workers=num_parallel_workers) ds1 = ds1.map(operations=resize_op, input_columns="image", num_parallel_workers=num_parallel_workers) ds1 = ds1.map(operations=rescale_op, input_columns="image", num_parallel_workers=num_parallel_workers) ds1 = ds1.map(operations=hwc2chw_op, input_columns="image", num_parallel_workers=num_parallel_workers) # apply DatasetOps buffer_size = 10000 ds1 = ds1.shuffle(buffer_size=buffer_size) ds1 = ds1.batch(batch_size, drop_remainder=True) ds1 = ds1.repeat(repeat_size) return ds1

建立模型

这里以LeNet模型为例,您也可以建立训练自己的模型。

-

定义LeNet模型网络。

def conv(in_channels, out_channels, kernel_size, stride=1, padding=0): weight = weight_variable() return nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size, stride=stride, padding=padding, weight_init=weight, has_bias=False, pad_mode="valid") def fc_with_initialize(input_channels, out_channels): weight = weight_variable() bias = weight_variable() return nn.Dense(input_channels, out_channels, weight, bias) def weight_variable(): return TruncatedNormal(0.02) class LeNet5(nn.Cell): """ Lenet network """ def __init__(self): super(LeNet5, self).__init__() self.conv1 = conv(1, 6, 5) self.conv2 = conv(6, 16, 5) self.fc1 = fc_with_initialize(16*5*5, 120) self.fc2 = fc_with_initialize(120, 84) self.fc3 = fc_with_initialize(84, 10) self.relu = nn.ReLU() self.max_pool2d = nn.MaxPool2d(kernel_size=2, stride=2) self.flatten = nn.Flatten() def construct(self, x): x = self.conv1(x) x = self.relu(x) x = self.max_pool2d(x) x = self.conv2(x) x = self.relu(x) x = self.max_pool2d(x) x = self.flatten(x) x = self.fc1(x) x = self.relu(x) x = self.fc2(x) x = self.relu(x) x = self.fc3(x) return x

-

训练LeNet模型。利用上面定义的数据加载函数

generate_mnist_dataset载入数据。mnist_path = "../common/dataset/MNIST/" batch_size = 32 # train original model ds_train = generate_mnist_dataset(os.path.join(mnist_path, "train"), batch_size=batch_size, repeat_size=1, sparse=False) net = LeNet5() loss = SoftmaxCrossEntropyWithLogits(sparse=False) opt = nn.Momentum(net.trainable_params(), 0.01, 0.09) model = Model(net, loss, opt, metrics=None) model.train(10, ds_train, callbacks=[LossMonitor()], dataset_sink_mode=False)

以下是训练模型的结果

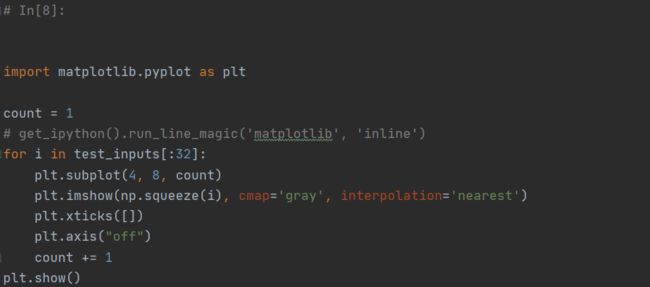

# 2. get test data

ds_test = generate_mnist_dataset(os.path.join(mnist_path, "test"),

batch_size=batch_size, repeat_size=1,

sparse=False)

inputs = []

labels = []

for data in ds_test.create_tuple_iterator():

inputs.append(data[0].asnumpy().astype(np.float32))

labels.append(data[1].asnumpy())

test_inputs = np.concatenate(inputs)

test_labels = np.concatenate(labels)

-

测试模型。

# prediction accuracy before attack net.set_train(False) test_logits = net(Tensor(test_inputs)).asnumpy() tmp = np.argmax(test_logits, axis=1) == np.argmax(test_labels, axis=1) accuracy = np.mean(tmp) LOGGER.info(TAG, 'prediction accuracy before attacking is : %s', accuracy)

测试结果中分类精度达到了97%。

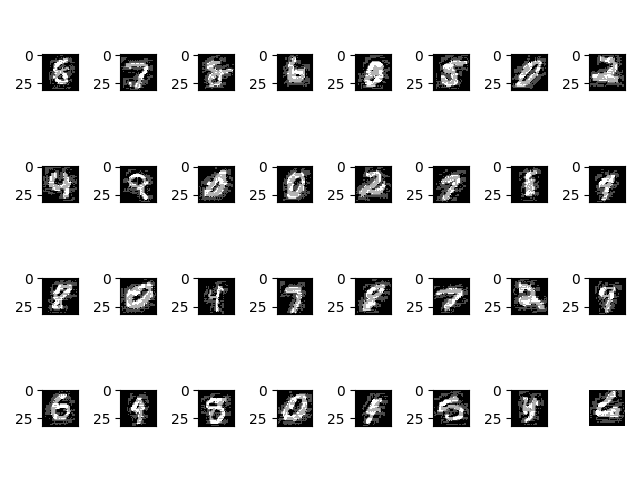

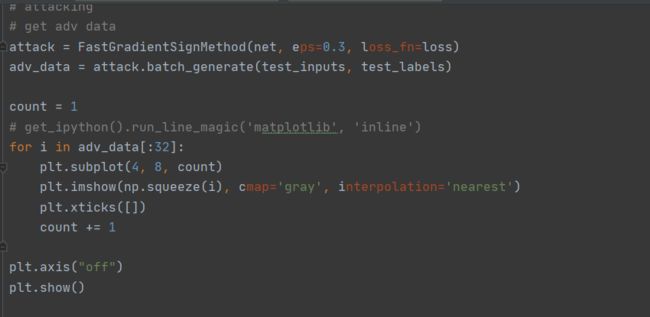

对抗性攻击

调用MindArmour提供的FGSM接口(FastGradientSignMethod)。

# attacking

# get adv data

attack = FastGradientSignMethod(net, eps=0.3, loss_fn=loss)

adv_data = attack.batch_generate(test_inputs, test_labels)

# get accuracy of adv data on original model

adv_logits = net(Tensor(adv_data)).asnumpy()

adv_proba = softmax(adv_logits, axis=1)

tmp = np.argmax(adv_proba, axis=1) == np.argmax(test_labels, axis=1)

accuracy_adv = np.mean(tmp)

LOGGER.info(TAG, 'prediction accuracy after attacking is : %s', accuracy_adv)

attack_evaluate = AttackEvaluate(test_inputs.transpose(0, 2, 3, 1),

test_labels,

adv_data.transpose(0, 2, 3, 1),

adv_proba)

LOGGER.info(TAG, 'mis-classification rate of adversaries is : %s',

attack_evaluate.mis_classification_rate())

LOGGER.info(TAG, 'The average confidence of adversarial class is : %s',

attack_evaluate.avg_conf_adv_class())

LOGGER.info(TAG, 'The average confidence of true class is : %s',

attack_evaluate.avg_conf_true_class())

LOGGER.info(TAG, 'The average distance (l0, l2, linf) between original '

'samples and adversarial samples are: %s',

attack_evaluate.avg_lp_distance())

LOGGER.info(TAG, 'The average structural similarity between original '

'samples and adversarial samples are: %s',

attack_evaluate.avg_ssim())

攻击结果如下:

prediction accuracy after attacking is : 0.052083 mis-classification rate of adversaries is : 0.947917 The average confidence of adversarial class is : 0.803375 The average confidence of true class is : 0.042139 The average distance (l0, l2, linf) between original samples and adversarial samples are: (1.698870, 0.465888, 0.300000) The average structural similarity between original samples and adversarial samples are: 0.332538

结果如下。

对模型进行FGSM无目标攻击后,模型精度有11%,误分类率高达89%,成功攻击的对抗样本的预测类别的平均置信度(ACAC)为 0.721933,成功攻击的对抗样本的真实类别的平均置信度(ACTC)为 0.05756182,同时给出了生成的对抗样本与原始样本的零范数距离、二范数距离和无穷范数距离,平均每个对抗样本与原始样本间的结构相似性为0.5708779。

对抗性防御

NaturalAdversarialDefense(NAD)是一种简单有效的对抗样本防御方法,使用对抗训练的方式,在模型训练的过程中构建对抗样本,并将对抗样本与原始样本混合,一起训练模型。随着训练次数的增加,模型在训练的过程中提升对于对抗样本的鲁棒性。NAD算法使用FGSM作为攻击算法,构建对抗样本。

防御实现

调用MindArmour提供的NAD防御接口(NaturalAdversarialDefense)。

from mindarmour.adv_robustness.defenses import NaturalAdversarialDefense

# defense

net.set_train()

nad = NaturalAdversarialDefense(net, loss_fn=loss, optimizer=opt,

bounds=(0.0, 1.0), eps=0.3)

nad.batch_defense(test_inputs, test_labels, batch_size=32, epochs=10)

# get accuracy of test data on defensed model

net.set_train(False)

test_logits = net(Tensor(test_inputs)).asnumpy()

tmp = np.argmax(test_logits, axis=1) == np.argmax(test_labels, axis=1)

accuracy = np.mean(tmp)

LOGGER.info(TAG, 'accuracy of TEST data on defensed model is : %s', accuracy)

# get accuracy of adv data on defensed model

adv_logits = net(Tensor(adv_data)).asnumpy()

adv_proba = softmax(adv_logits, axis=1)

tmp = np.argmax(adv_proba, axis=1) == np.argmax(test_labels, axis=1)

accuracy_adv = np.mean(tmp)

attack_evaluate = AttackEvaluate(test_inputs.transpose(0, 2, 3, 1),

test_labels,

adv_data.transpose(0, 2, 3, 1),

adv_proba)

LOGGER.info(TAG, 'accuracy of adv data on defensed model is : %s',

np.mean(accuracy_adv))

LOGGER.info(TAG, 'defense mis-classification rate of adversaries is : %s',

attack_evaluate.mis_classification_rate())

LOGGER.info(TAG, 'The average confidence of adversarial class is : %s',

attack_evaluate.avg_conf_adv_class())

LOGGER.info(TAG, 'The average confidence of true class is : %s',

attack_evaluate.avg_conf_true_class())

在CPU上跑起来了,我已经听到了风扇的声音!

每次跑深度学习模型,都能够听见散热扇呼啸~

数秒后,风扇声音降低,准备查看结果。

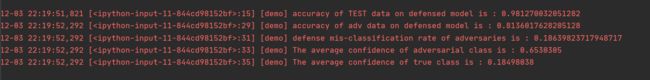

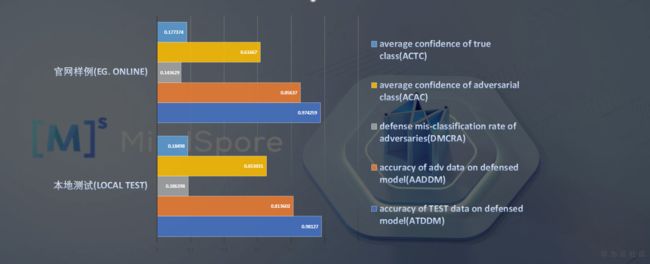

防御效果

accuracy of TEST data on defensed model is : 0.981270 accuracy of adv data on defensed model is : 0.813602 defense mis-classification rate of adversaries is : 0.186398 The average confidence of adversarial class is : 0.653031 The average confidence of true class is : 0.184980

使用NAD进行对抗样本防御后,模型对于对抗样本的误分类率降至18%,模型有效地防御了对抗样本。同时,模型对于原来测试数据集的分类精度达98%。

与官网数据对比:

accuracy of TEST data on defensed model is : 0.974259 accuracy of adv data on defensed model is : 0.856370 defense mis-classification rate of adversaries is : 0.143629 The average confidence of adversarial class is : 0.616670 The average confidence of true class is : 0.177374

使用NAD进行对抗样本防御后,模型对于对抗样本的误分类率从95%降至14%,模型有效地防御了对抗样本。同时,模型对于原来测试数据集的分类精度达97%。

开源代码

亲爱的朋友,我已将本文中MindArmour的实操代码开源到gitee,代码已经在CPU上调试通过,欢迎大家下载使用,亲手调试后会有更加深入的理解。

链接:MindSporeArmour: Show details of how to use MindSpore Armour.