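PyTorch Deep Learning Practice (《PyTorch深度学习实践》), Lecture 10: Convolutional Neural Network (CNN) Basics

Key points and code reproduction for Lecture 10 of "PyTorch Deep Learning Practice" by 刘二大人 on Bilibili.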

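Lecture 10: Convolutional Neural Network

I. Key Points: Structure of a Convolutional Neural Network

(I) Convolutional layer: feature extraction

1. Determining the kernel size

(1) Single input channel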

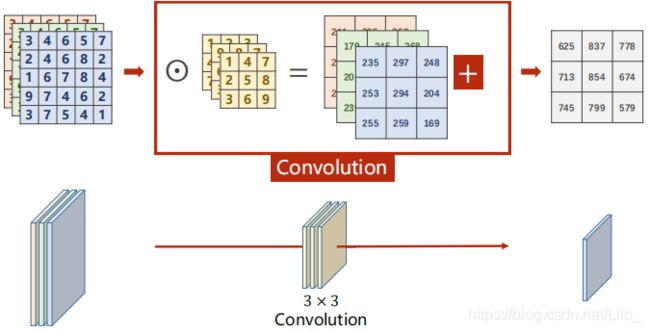

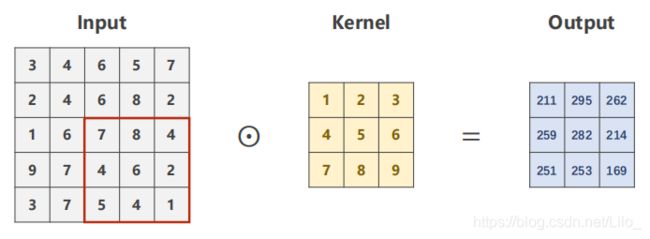

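The filter (kernel) slides across the input image. At each position it multiplies the overlapping elements pairwise and sums the products (this is the cross-correlation operation), which gives one value of the output.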

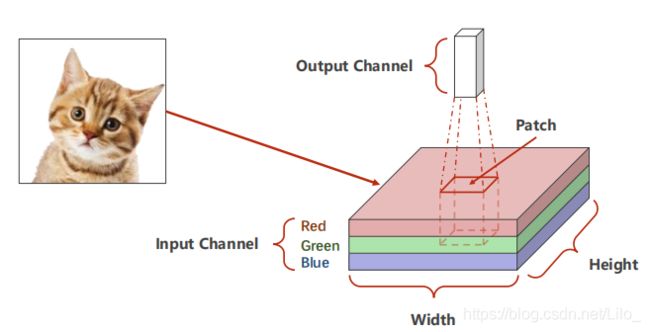
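The sliding-window operation described above can be written out by hand and compared with PyTorch. This is only a minimal sketch: `cross_correlate2d`, the 5x5 test image, and the vertical-edge kernel are illustrative choices, not taken from the lecture.

```python
import torch
import torch.nn.functional as F

def cross_correlate2d(image: torch.Tensor, kernel: torch.Tensor) -> torch.Tensor:
    """Slide a 2-D kernel over a 2-D image, multiply element-wise, and sum."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = torch.zeros(out_h, out_w)
    for i in range(out_h):
        for j in range(out_w):
            # One output value = sum of the element-wise products in this window
            out[i, j] = (image[i:i + kh, j:j + kw] * kernel).sum()
    return out

# Hypothetical inputs chosen for illustration
image = torch.arange(25, dtype=torch.float32).view(5, 5)
kernel = torch.tensor([[1., 0., -1.],
                       [1., 0., -1.],
                       [1., 0., -1.]])

manual = cross_correlate2d(image, kernel)
# F.conv2d expects (batch, channels, height, width) for the input
# and (out_channels, in_channels, kH, kW) for the weight
reference = F.conv2d(image.view(1, 1, 5, 5), kernel.view(1, 1, 3, 3)).view(3, 3)

print(manual.shape)                        # torch.Size([3, 3])
print(torch.allclose(manual, reference))   # True
```

The agreement with `F.conv2d` reflects that PyTorch's convolution does not flip the kernel, so it is a cross-correlation in the strict sense.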

(2) Three input channels

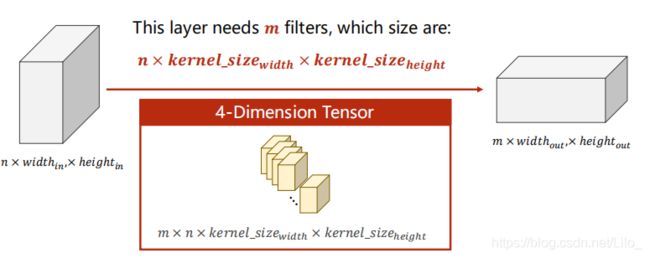

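(3) N input channels

Output height and width = input height and width - kernel height and width + 1 (no padding, stride 1)

Number of kernel channels = number of input channels

Number of output channels = number of kernels

(4) N input channels, M output channels

My understanding: each channel of the input image is convolved with the matching channel of one kernel, and the n per-channel results are summed to give one feature map, which is one channel of the output. Each kernel therefore performs n single-channel convolutions.

This takes m kernels with n channels each. With no padding and stride 1, the kernel size is input width/height - output width/height + 1.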
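The "n per-channel convolutions summed into one output channel" view can be checked numerically. The sketch below assumes small illustrative sizes (n = 3, m = 4, a 3x3 kernel, an 8x8 input) and ignores the bias term; it rebuilds the multi-channel result one channel pair at a time and compares it with `F.conv2d`.

```python
import torch
import torch.nn.functional as F

n, m, k = 3, 4, 3              # input channels, output channels, kernel size (illustrative)
x = torch.randn(1, n, 8, 8)    # one 8x8 input with n channels
w = torch.randn(m, n, k, k)    # m kernels, each with n channels

reference = F.conv2d(x, w)     # shape (1, m, 6, 6), since 8 - 3 + 1 = 6

# Rebuild the same result: for every output channel, add up the
# single-channel cross-correlations over all n input channels.
manual = torch.zeros_like(reference)
for out_c in range(m):
    for in_c in range(n):
        manual[0, out_c] += F.conv2d(
            x[:, in_c:in_c + 1],                    # (1, 1, 8, 8)
            w[out_c:out_c + 1, in_c:in_c + 1],      # (1, 1, 3, 3)
        )[0, 0]

print(reference.shape)                                # torch.Size([1, 4, 6, 6])
print(torch.allclose(manual, reference, atol=1e-4))   # True
```

The weight tensor shape `(m, n, k, k)` is the same layout that `conv_layer.weight.shape` reports for a `torch.nn.Conv2d` layer.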

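2. Padding and Stride

Padding adds zeros around the border of the input image. Stride is the step by which the kernel moves between positions.

Without padding and with stride 1: output width/height = input width/height - kernel width/height + 1. With padding p on each side and stride s: output width/height = floor((input width/height + 2p - kernel width/height) / s) + 1.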

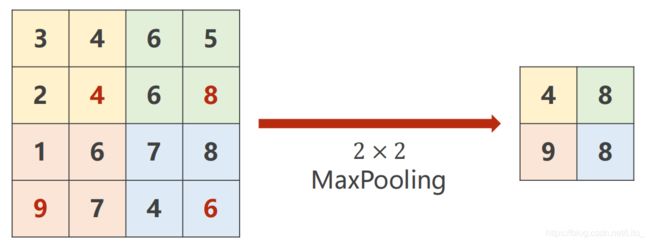
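The effect of padding and stride on the output size can be checked against `torch.nn.Conv2d`. The helper `conv_output_size` below is a hypothetical name introduced for illustration; it implements the standard formula floor((input + 2 * padding - kernel) / stride) + 1 for a square input with dilation 1.

```python
import torch

def conv_output_size(size: int, kernel: int, padding: int = 0, stride: int = 1) -> int:
    """Output width/height of a convolution (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1

x = torch.randn(1, 1, 5, 5)   # a single 5x5 single-channel input
results = []
for padding, stride in [(0, 1), (1, 1), (0, 2), (1, 2)]:
    conv = torch.nn.Conv2d(1, 1, kernel_size=3, padding=padding, stride=stride)
    expected = conv_output_size(5, 3, padding, stride)
    actual = conv(x).shape[-1]
    results.append((padding, stride, expected, actual))
    print(f"padding={padding} stride={stride} formula={expected} Conv2d={actual}")
```

With a 3x3 kernel, `padding=1` and `stride=1` keep the 5x5 input at 5x5, while `stride=2` without padding shrinks it to 2x2.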

(II) Pooling layer: reduces the amount of data and lowers the computational cost

1. Max pooling

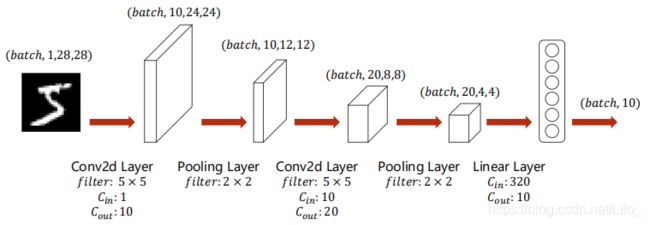
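A 2x2 max pooling with stride 2 keeps only the largest value in each non-overlapping 2x2 block, so the number of channels is unchanged while height and width are halved. As a rough sketch, the block-wise maximum can be computed with a reshape and compared with `F.max_pool2d`; the 4x4 values are the ones used in the max pooling code later in this post.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[3., 4., 6., 5.],
                  [2., 4., 6., 8.],
                  [1., 6., 7., 8.],
                  [9., 7., 4., 6.]])

# View the 4x4 map as (block_row, row_in_block, block_col, col_in_block),
# then take the maximum inside each 2x2 block.
blocks = x.view(2, 2, 2, 2)
manual = blocks.amax(dim=(1, 3))

reference = F.max_pool2d(x.view(1, 1, 4, 4), kernel_size=2).view(2, 2)

print(manual)                            # tensor([[4., 8.], [9., 8.]])
print(torch.equal(manual, reference))    # True
```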

(III) Fully connected layer: classification, produces the final output

II. Code Reproduction

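# Input and output shapes of a convolutional layer
import torch

in_channels, out_channels = 5, 10
height, width = 100, 100
kernel_size = 3
batch_size = 1

# Conv2d expects input of shape (batch, channels, height, width)
input = torch.randn(batch_size, in_channels, height, width)
conv_layer = torch.nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size)
output = conv_layer(input)

print(input.shape)              # torch.Size([1, 5, 100, 100])
print(output.shape)             # torch.Size([1, 10, 98, 98])
print(conv_layer.weight.shape)  # torch.Size([10, 5, 3, 3])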

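# Output values of a convolution (3x3 kernel, stride 2, no bias)
input2 = [3,4,6,5,7, 2,4,6,8,2, 1,6,7,8,4, 9,7,4,6,2, 3,7,5,4,1]
input2 = torch.Tensor(input2).view(1, 1, 5, 5)

conv_layer2 = torch.nn.Conv2d(1, 1, kernel_size=3, stride=2, bias=False)

# Overwrite the randomly initialized weights with a fixed kernel
kernel = torch.Tensor([1,2,3,4,5,6,7,8,9]).view(1, 1, 3, 3)
conv_layer2.weight.data = kernel.data

output2 = conv_layer2(input2)
print(output2)  # values: [[211., 262.], [251., 169.]], shape (1, 1, 2, 2)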

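# Max pooling
input3 = [3,4,6,5, 2,4,6,8, 1,6,7,8, 9,7,4,6]
input3 = torch.Tensor(input3).view(1, 1, 4, 4)

# kernel_size=2 also sets the stride to 2 by default
maxpooling_layer = torch.nn.MaxPool2d(kernel_size=2)

output3 = maxpooling_layer(input3)
print(output3)  # values: [[4., 8.], [9., 8.]], shape (1, 1, 2, 2)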

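# Move Module to GPU
# (excerpt from the full code below, where the model is moved with model.to(device))
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Move Tensors to GPU
# Send the inputs and targets at every step to the GPU (put this inside the train and test loops).
# Here `data` is one batch yielded by the DataLoader.
inputs, target = data
inputs, target = inputs.to(device), target.to(device)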
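The two steps above, moving the module and moving each batch, can be tried in isolation. The following is a minimal sketch with a stand-in `torch.nn.Linear` model and random data (both are assumptions for illustration, not the lecture's network); it falls back to the CPU when no GPU is available.

```python
import torch

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Stand-in model and batch, used only to illustrate device placement
model = torch.nn.Linear(4, 2).to(device)
inputs = torch.randn(3, 4)
target = torch.tensor([0, 1, 0])

# The model and every tensor it consumes must live on the same device
inputs, target = inputs.to(device), target.to(device)

outputs = model(inputs)
loss = torch.nn.functional.cross_entropy(outputs, target)
print(outputs.device, loss.item())
```

If the inputs stayed on the CPU while the model was on the GPU, the forward pass would raise a device-mismatch error, which is why the transfer sits inside the loop body.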

Full code:

import torch
import torch.nn.functional as F
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.optim as optim

# Prepare Dataset
batch_size = 64
transform = transforms.Compose([
    transforms.ToTensor(),  # Convert the PIL Image to Tensor
    transforms.Normalize((0.1307,), (0.3081,))  # MNIST mean and std
])

train_dataset = datasets.MNIST(root='./dataset/mnist/', train=True, download=True, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='./dataset/mnist/', train=False, download=True, transform=transform)
# Evaluate on the held-out test split, not on the training data
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

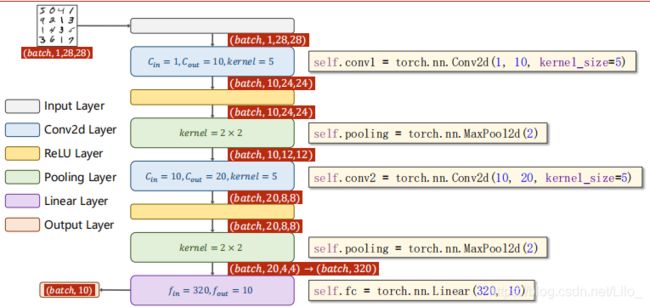

class Net(torch.nn.Module):
    """Two conv + max-pool stages followed by one fully connected layer."""

    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
        self.pooling = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(320, 10)

    def forward(self, x):
        # x has shape (n, 1, 28, 28)
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))  # (n, 10, 12, 12)
        x = F.relu(self.pooling(self.conv2(x)))  # (n, 20, 4, 4)
        x = x.view(batch_size, -1)  # Flatten to (n, 320)
        x = self.fc(x)
        return x

model = Net()

# Move Module to GPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(device)
model = model.to(device)

# Construct Loss and Optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        # Move Tensors to GPU
        inputs, target = data
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()

        # Forward, backward, update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            # Average loss over the last 300 mini-batches
            print('[%d,%5d] loss:%.3f' % (epoch+1, batch_idx+1, running_loss/300))
            running_loss = 0.0

def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            # Move Tensors to GPU
            inputs, target = data
            inputs, target = inputs.to(device), target.to(device)
            outputs = model(inputs)
            # The index of the largest logit is the predicted class
            _, predicted = torch.max(outputs.data, dim=1)
            total += target.size(0)
            correct += (predicted == target).sum().item()
    print('Accuracy on test set: %d %%' % (100*correct/total))

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
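To see where the 320 input features of `torch.nn.Linear(320, 10)` come from, the shapes can be traced through the same layer settings with a dummy batch. This is only a sketch: the layers are freshly created with random weights and the input is random noise of MNIST size, so only the shapes are meaningful.

```python
import torch

# Same layer settings as in Net, created standalone for a shape check
conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
pooling = torch.nn.MaxPool2d(2)

x = torch.randn(1, 1, 28, 28)          # dummy MNIST-sized batch of one image
after_conv1 = conv1(x)                  # 28 - 5 + 1 = 24  -> (1, 10, 24, 24)
after_pool1 = pooling(after_conv1)      # 24 / 2 = 12      -> (1, 10, 12, 12)
after_conv2 = conv2(after_pool1)        # 12 - 5 + 1 = 8   -> (1, 20, 8, 8)
after_pool2 = pooling(after_conv2)      # 8 / 2 = 4        -> (1, 20, 4, 4)
flat = after_pool2.view(1, -1)          # 20 * 4 * 4 = 320 -> (1, 320)

for name, t in [("conv1", after_conv1), ("pool1", after_pool1),
                ("conv2", after_conv2), ("pool2", after_pool2), ("flat", flat)]:
    print(name, tuple(t.shape))
```

Changing a kernel size or adding padding changes the flattened size, so the first argument of the fully connected layer has to be recomputed the same way.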