Building a TextRNN for text classification in PyTorch, compared with TextRNN + Attention

Dataset: the Tianchi competition 零基础入门NLP - 新闻文本分类 (Getting Started with NLP: News Text Classification).

Click here for the complete project code.

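The dataset is fairly large: 14 classes, with an average text length of about 900 tokens. I started with a very simple RNN and immediately ran into trouble: the model did not converge. Later I looked at the baselines other experienced competitors had shared and found that almost all of them truncate the texts, to around 250 tokens.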
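A minimal pure-Python sketch of that truncation step, assuming each text is a string of space-separated token ids as in this dataset. The helper name `truncate_and_pad`, the default length of 250 and the use of 0 as the padding id are illustrative choices, not taken from any particular baseline.

```python
def truncate_and_pad(token_ids, max_len=250, pad_id=0):
    """Keep the first max_len tokens and right-pad shorter sequences with pad_id."""
    clipped = token_ids[:max_len]
    return clipped + [pad_id] * (max_len - len(clipped))


# Made-up document in the dataset's format: space-separated integer token ids
doc = "12 7 305 48 9"
ids = [int(t) + 1 for t in doc.split(' ')]   # shift by 1 so that 0 stays free for padding

print(truncate_and_pad(ids, max_len=3))   # → [13, 8, 306]
print(truncate_and_pad(ids, max_len=8))   # → [13, 8, 306, 49, 10, 0, 0, 0]
```

Truncation simply discards everything after the first `max_len` tokens, which is why it shortens the sequences the RNN has to carry information across.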

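So I truncated the texts as well. The model started to converge a little, but even after several dozen epochs the accuracy was stuck at around 0.3.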

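Then I looked at someone else's TextRNN implementation and noticed one very subtle difference: their LSTM used dropout. That confused me. Isn't dropout meant to prevent overfitting? My model hadn't even converged yet, so what was going on?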

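Following the principle that practice is the sole criterion of truth, I added dropout as a quick experiment, and to my surprise the model converged quickly, with accuracy heading straight toward 0.8.

I was speechless. Why would that happen?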

So I searched around and found the following explanation:

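RNNs tend to amplify noise, which can sometimes end up hurting the model's ability to learn. Adding dropout discards part of the information during training; strictly speaking, dropout zeroes a random subset of activations rather than picking out the useless ones.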
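To make the mechanism concrete, here is a minimal NumPy sketch of inverted dropout, which is what PyTorch's `nn.Dropout` does: in training mode each activation is zeroed independently with probability `p` (chosen at random, not by usefulness) and the survivors are scaled by `1/(1-p)` so the expected value is unchanged; in eval mode it is the identity. The function and variable names are illustrative.

```python
import numpy as np


def dropout(x, p, training, rng):
    """Inverted dropout: zero each activation with probability p during training
    and rescale the survivors by 1 / (1 - p); do nothing at inference time."""
    if not training or p == 0.0:
        return x
    keep_mask = rng.random(x.shape) >= p   # True for the activations that survive
    return x * keep_mask / (1.0 - p)


rng = np.random.default_rng(0)
h = np.ones((4, 10000))                    # stand-in for a batch of hidden activations
h_train = dropout(h, p=0.5, training=True, rng=rng)
h_eval = dropout(h, p=0.5, training=False, rng=rng)

print((h_train == 0).mean())      # roughly half of the units are dropped
print(h_train.mean())             # the expected value is preserved (about 1.0)
print(np.array_equal(h_eval, h))  # dropout is the identity at inference time
```

One PyTorch detail worth knowing when reproducing this: the `dropout` argument of `nn.LSTM` only adds dropout between stacked LSTM layers, so it has no effect when `num_layers=1` (PyTorch prints a warning about it). With a single layer, dropout has to be applied separately, for example with an `nn.Dropout` on the embeddings or on the LSTM outputs.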

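That was an instant eye-opener. Why? Because at the time I was comparing against TextCNN: I had also built a simple TextCNN on the same news classification data, and it converged quickly, with accuracy soon climbing to about 0.8.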

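Under the hood, TextCNN effectively works on segments: its convolution filters look at many short windows of the text, and (in the standard TextCNN) max pooling keeps only the strongest response of each filter. So in a very long text, useless or distracting segments tend to be thrown away by the CNN. An RNN, in contrast, is a recurrent network: it reads from left to right without discarding any input, recurring all the way to the end. Along the way it uncontrollably absorbs the useless, distracting information as well, which keeps the model from converging.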
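The CNN half of this intuition can be illustrated with a toy NumPy sketch of a single TextCNN filter followed by max-over-time pooling (the pooling used in the standard TextCNN; whether the author's TextCNN used exactly this is an assumption). The embeddings, filter and documents are all made up: the pooled feature comes out the same whether the key phrase sits in a short text or is buried in about 900 noise tokens, because pooling keeps only the best-matching window.

```python
import numpy as np


def conv1d_max_pool(embeddings, kernel):
    """One TextCNN-style filter: slide `kernel` (width k) over the token
    embeddings, then keep only the strongest window (max-over-time pooling)."""
    k = kernel.shape[0]
    n_windows = embeddings.shape[0] - k + 1
    responses = np.array([np.sum(embeddings[i:i + k] * kernel) for i in range(n_windows)])
    return float(responses.max()), int(responses.argmax())


def make_doc(rng, keyword, n_before, n_after, dim):
    """Toy document: low-amplitude noise tokens around one keyword phrase."""
    before = 0.01 * rng.normal(size=(n_before, dim))
    after = 0.01 * rng.normal(size=(n_after, dim))
    return np.vstack([before, keyword, after])


rng = np.random.default_rng(0)
dim, k = 8, 3
keyword = 3.0 * np.eye(k, dim)   # a 3-token phrase with orthogonal toy embeddings
kernel = keyword.copy()          # toy filter tuned exactly to that phrase

short_doc = make_doc(rng, keyword, 5, 5, dim)
long_doc = make_doc(rng, keyword, 500, 400, dim)   # same phrase buried in ~900 noisy tokens

print(conv1d_max_pool(short_doc, kernel))   # → (27.0, 5)
print(conv1d_max_pool(long_doc, kernel))    # → (27.0, 500)
```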

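Summary

After repeated experiments, my conclusions are:

1. TextCNN is far more robust than TextRNN. On both long and short texts it resists noise well, and it performs well on ordinary text classification tasks. For tasks that require analysing more complex semantic information, however, TextRNN does better than TextCNN because it can extract deeper information. Choose the model to suit the task.

2. Long texts are a real test of an RNN's learning ability and place high demands on the model. Splitting each long text into several short texts for training works much better.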
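The chunking that the scripts in this post use can be written as a standalone helper: every full 256-token chunk becomes a separate sample that shares the document's label, and a leftover tail is kept, right-padded with 0, only if it has at least 150 tokens. The name `split_into_chunks` is hypothetical; the logic mirrors the preprocessing loop in the scripts.

```python
def split_into_chunks(token_ids, chunk_len=256, min_tail=150, pad_id=0):
    """Cut one long document into fixed-length chunks.

    Every full chunk of chunk_len tokens becomes its own sample; a leftover
    tail is kept (right-padded with pad_id) only if it has at least min_tail
    tokens, otherwise it is dropped. Every chunk inherits the document's label.
    """
    chunks = [token_ids[i:i + chunk_len]
              for i in range(0, len(token_ids) - chunk_len + 1, chunk_len)]
    tail = token_ids[len(chunks) * chunk_len:]
    if tail and len(tail) >= min_tail:
        chunks.append(tail + [pad_id] * (chunk_len - len(tail)))
    return chunks


doc = list(range(1, 11))   # a toy 10-token document
print(split_into_chunks(doc, chunk_len=4, min_tail=2))
# → [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 0, 0]]
print(split_into_chunks(doc, chunk_len=4, min_tail=3))
# → [[1, 2, 3, 4], [5, 6, 7, 8]]
```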

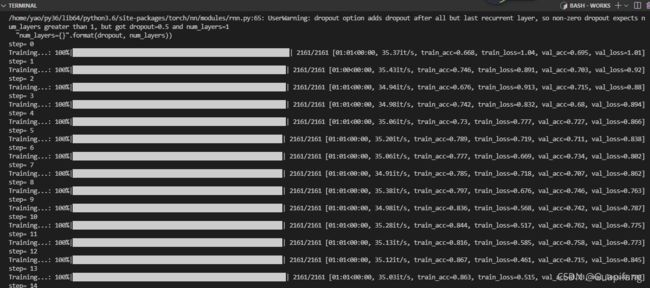

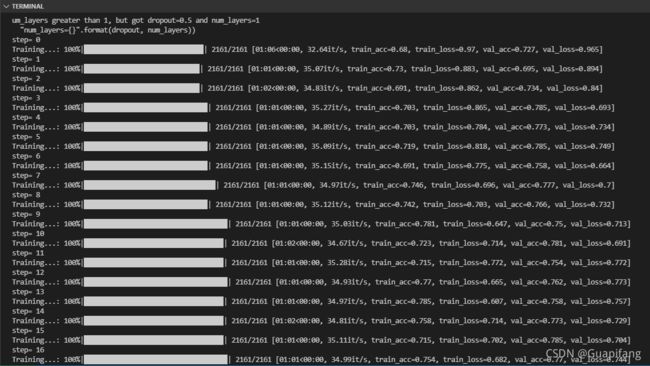

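To get results quickly, the code below uses a scaled-down model, so its accuracy does not reach 0.80. If you need higher accuracy, adjust the model structure yourself.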

Plain TextRNN code

```python
import numpy as np
import pandas as pd
import torch
import torch.nn as nn
from sklearn.model_selection import KFold
from tqdm import tqdm

# Use the GPU if one is available, otherwise fall back to the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

#--------------------------load data----------------------------
df = pd.read_csv('/新闻文本分类/train_set.csv', sep='\t')

vocabs_size = 0      # largest token id after shifting; the embedding gets vocabs_size + 1 rows
n_class = 14         # number of news categories
training_step = 20   # number of epochs
batch_size = 256     # mini-batch size
seq_len = 256        # each text is cut into chunks of this many tokens
min_tail = 150       # a leftover tail shorter than this is dropped

train = []
targets = []
label = df['label'].values
text = df['text'].values

for doc_id, val in enumerate(tqdm(text)):
    single_data = []
    for tok in val.split(' '):
        token = int(tok) + 1                # shift ids by 1 so that 0 is reserved for padding
        vocabs_size = max(vocabs_size, token)
        single_data.append(token)
        if len(single_data) >= seq_len:     # a full chunk becomes one training sample
            train.append(single_data)
            targets.append(int(label[doc_id]))
            single_data = []
    if len(single_data) >= min_tail:        # keep a long enough tail, right-padded with 0
        single_data = single_data + [0] * (seq_len - len(single_data))
        train.append(single_data)
        targets.append(int(label[doc_id]))

train = np.array(train)
targets = np.array(targets)
```

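```python
class Bi_Lstm(nn.Module):
    # Despite the name, this LSTM is unidirectional (bidirectional=False)
    def __init__(self):
        super(Bi_Lstm, self).__init__()
        self.embeding = nn.Embedding(vocabs_size + 1, 100)
        # With bidirectional=True the output feature size would double to 2 * hidden_size.
        # Note: nn.LSTM applies `dropout` only between stacked layers, so with
        # num_layers=1 this dropout=0.5 has no effect (PyTorch warns about it).
        self.lstm = nn.LSTM(input_size=100, hidden_size=100, num_layers=1,
                            bidirectional=False, batch_first=True, dropout=0.5)
        self.l1 = nn.BatchNorm1d(100)
        self.l2 = nn.ReLU()
        self.l3 = nn.Linear(100, n_class)   # classifier: hidden features -> class logits
        self.l4 = nn.Dropout(0.3)
        self.l5 = nn.BatchNorm1d(n_class)

    def forward(self, x):
        x = self.embeding(x)                # [batch, seq_len] -> [batch, seq_len, 100]
        out, _ = self.lstm(x)               # out: [batch, seq_len, 100]
        out = self.l1(out[:, -1, :])        # use the output at the last time step
        out = self.l2(out)
        out = self.l3(out)
        out = self.l4(out)
        out = self.l5(out)
        return out
```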
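`out[:, -1, :]` is the LSTM output at position 255 for every sample. For the tail chunks that were right-padded with 0, that position comes after all the padding, so the unidirectional LSTM has already read the padding tokens by then. One possible refinement, sketched here in NumPy and not part of the author's code, is to take each sequence's last real step instead; it relies on the scripts' convention that real token ids are shifted by 1, so 0 only ever means padding. The helper name `last_real_step` is hypothetical; in PyTorch, `torch.nn.utils.rnn.pack_padded_sequence` is the usual way to make an LSTM skip padding altogether.

```python
import numpy as np


def last_real_step(outputs, token_ids, pad_id=0):
    """outputs: [batch, seq_len, hidden]; token_ids: [batch, seq_len], right-padded with pad_id.
    Returns [batch, hidden]: each row's output at its last non-padding position."""
    lengths = (token_ids != pad_id).sum(axis=1)        # number of real tokens per row
    return outputs[np.arange(len(outputs)), lengths - 1]


token_ids = np.array([[5, 8, 2, 9],    # full chunk, no padding
                      [7, 3, 0, 0]])   # tail chunk: 2 real tokens, then padding
outputs = np.arange(2 * 4 * 3, dtype=float).reshape(2, 4, 3)   # fake RNN outputs
picked = last_real_step(outputs, token_ids)
print(picked)   # row 0 is outputs[0, 3], row 1 is outputs[1, 1]
```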

```python
print(train.shape)
print(targets.shape)

kf = KFold(n_splits=5, shuffle=True, random_state=2021)   # 5-fold cross-validation
for fold, (train_idx, test_idx) in enumerate(kf.split(train, targets)):
    print('-' * 15, '>', f'Fold {fold + 1}', '<', '-' * 15)
    x_train, x_val = train[train_idx], train[test_idx]
    y_train, y_val = targets[train_idx], targets[test_idx]
    M_train = len(x_train)
    M_val = len(x_val)
    x_train = torch.from_numpy(x_train).long().to(device)
    x_val = torch.from_numpy(x_val).long().to(device)
    y_train = torch.from_numpy(y_train).long().to(device)
    y_val = torch.from_numpy(y_val).long().to(device)

    model = Bi_Lstm().to(device)
    optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
    loss_func = nn.CrossEntropyLoss()   # multi-class classification

    for step in range(training_step):
        print('step=', step)
        #-----------------training------------------
        model.train()   # BN and Dropout behave differently in train and eval mode
        with tqdm(range(0, M_train, batch_size), desc='Training...') as tbar:
            for L in tbar:   # iterating over tbar already advances the progress bar
                R = min(M_train, L + batch_size)
                if R - L < 2:   # BatchNorm1d needs more than one sample per batch in train mode
                    continue
                train_pre = model(x_train[L:R])   # forward pass: predicted logits
                train_loss = loss_func(train_pre, y_train[L:R])
                optimizer.zero_grad()   # clear gradients left over from the previous step
                train_loss.backward()   # back-propagate the training loss
                optimizer.step()        # apply the update to the parameters
                train_acc = (train_pre.argmax(dim=1) == y_train[L:R]).float().mean().item()
                tbar.set_postfix(train_loss=train_loss.item(), train_acc=train_acc)

        #-----------------validation: eval mode, no gradients, batched to save memory------------------
        model.eval()
        val_loss, val_correct = 0.0, 0
        with torch.no_grad():
            for L in range(0, M_val, batch_size):
                R = min(M_val, L + batch_size)
                val_pre = model(x_val[L:R])
                val_loss += loss_func(val_pre, y_val[L:R]).item() * (R - L)
                val_correct += (val_pre.argmax(dim=1) == y_val[L:R]).sum().item()
        print(f'val_loss={val_loss / M_val:.4f} val_acc={val_correct / M_val:.4f}')

    del model
```
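Because every document is cut into several chunks and `KFold` splits the chunks, chunks from the same document can land in both the training and the validation fold, which can make the validation scores optimistic. One way to avoid this, sketched below on toy arrays, is scikit-learn's `GroupKFold`, which keeps every group entirely on one side of the split. It assumes you also record the source document of each chunk; the `groups` array here is hypothetical and is not built by the script above.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Toy stand-ins for the script's arrays: 6 chunks cut from 3 documents
X = np.arange(6 * 4).reshape(6, 4)     # 6 chunks of 4 token ids each
y = np.array([3, 3, 7, 7, 7, 1])       # each chunk carries its document's label
groups = np.array([0, 0, 1, 1, 1, 2])  # hypothetical: source document index of each chunk

gkf = GroupKFold(n_splits=3)
for fold, (train_idx, val_idx) in enumerate(gkf.split(X, y, groups=groups)):
    train_docs = set(groups[train_idx].tolist())
    val_docs = set(groups[val_idx].tolist())
    # Every document's chunks end up entirely on one side of the split
    print(f'Fold {fold + 1}: train docs {sorted(train_docs)}, val docs {sorted(val_docs)}, '
          f'shared {sorted(train_docs & val_docs)}')
```

In the scripts this would mean appending `doc_id` to a `groups` list next to each `targets.append(...)` call and iterating over `GroupKFold(n_splits=5).split(train, targets, groups=groups)` instead of `kf.split(train, targets)`.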

Code with Attention

```python
import numpy as np
import pandas as pd
import torch
import torch.nn as nn
import torch.nn.functional as F
from sklearn.model_selection import KFold
from tqdm import tqdm

# Use the GPU if one is available, otherwise fall back to the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

#--------------------------load data (same as the plain TextRNN script)----------------------------
df = pd.read_csv('/新闻文本分类/train_set.csv', sep='\t')

vocabs_size = 0      # largest token id after shifting; the embedding gets vocabs_size + 1 rows
n_class = 14         # number of news categories
training_step = 20   # number of epochs
batch_size = 256     # mini-batch size
seq_len = 256        # each text is cut into chunks of this many tokens
min_tail = 150       # a leftover tail shorter than this is dropped

train = []
targets = []
label = df['label'].values
text = df['text'].values

for doc_id, val in enumerate(tqdm(text)):
    single_data = []
    for tok in val.split(' '):
        token = int(tok) + 1                # shift ids by 1 so that 0 is reserved for padding
        vocabs_size = max(vocabs_size, token)
        single_data.append(token)
        if len(single_data) >= seq_len:     # a full chunk becomes one training sample
            train.append(single_data)
            targets.append(int(label[doc_id]))
            single_data = []
    if len(single_data) >= min_tail:        # keep a long enough tail, right-padded with 0
        single_data = single_data + [0] * (seq_len - len(single_data))
        train.append(single_data)
        targets.append(int(label[doc_id]))

train = np.array(train)
targets = np.array(targets)
```

```python
class Bi_Lstm(nn.Module):
    # Same unidirectional LSTM as above, with dot-product attention over all time steps
    def __init__(self):
        super(Bi_Lstm, self).__init__()
        self.embeding = nn.Embedding(vocabs_size + 1, 100)
        # As above: with num_layers=1, nn.LSTM's dropout argument has no effect
        self.lstm = nn.LSTM(input_size=100, hidden_size=100, num_layers=1,
                            bidirectional=False, batch_first=True, dropout=0.5)
        self.l3 = nn.Linear(100, n_class)   # classifier: attention context -> class logits
        self.l4 = nn.Dropout(0.3)
        self.l5 = nn.BatchNorm1d(n_class)

    def attention_net(self, lstm_output, final_state):
        # lstm_output: [batch, seq_len, hidden]
        # final_state: [num_layers * num_directions, batch, hidden]
        # Query = last layer's final hidden state: [batch, hidden, 1]. For this 1-layer
        # unidirectional LSTM it equals final_state.view(batch_size, -1, 1), but it stays
        # correct if more unidirectional layers are stacked.
        hidden = final_state[-1].unsqueeze(2)
        attn_weights = torch.bmm(lstm_output, hidden).squeeze(2)   # [batch, seq_len]
        soft_attn_weights = F.softmax(attn_weights, dim=1)
        # Weighted sum of the outputs over time -> context: [batch, hidden]
        context = torch.bmm(lstm_output.transpose(1, 2), soft_attn_weights.unsqueeze(2)).squeeze(2)
        return context, soft_attn_weights

    def forward(self, x):
        x = self.embeding(x)
        out, (final_hidden_state, final_cell_state) = self.lstm(x)
        # Attend over all time steps instead of keeping only the last one
        attn_output, attention = self.attention_net(out, final_hidden_state)
        out = self.l3(attn_output)          # [batch, n_class]
        out = self.l4(out)
        out = self.l5(out)
        return out
```
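The `attention_net` method scores every time step by its dot product with the final hidden state, normalises the scores with a softmax over time, and returns the weighted sum of the LSTM outputs. The sketch below reproduces that computation in NumPy so the shapes are easy to follow, with one addition the post's model does not have: an optional mask that gives padding positions (id 0) zero weight. The names and the masking are assumptions for illustration; in PyTorch the masking step would typically be `scores.masked_fill(~mask, float('-inf'))` before `F.softmax`.

```python
import numpy as np


def dot_product_attention(lstm_output, query, mask=None):
    """lstm_output: [batch, seq_len, hidden]; query: [batch, hidden] (e.g. final hidden state).
    mask: optional bool array [batch, seq_len], True for real tokens.
    Returns context [batch, hidden] and attention weights [batch, seq_len]."""
    scores = np.einsum('bth,bh->bt', lstm_output, query)   # dot product per time step
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)            # padding gets zero weight
    scores = scores - scores.max(axis=1, keepdims=True)    # numerically stable softmax over time
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    context = np.einsum('bt,bth->bh', weights, lstm_output)  # weighted sum of outputs
    return context, weights


rng = np.random.default_rng(0)
outputs = rng.normal(size=(2, 5, 4))     # fake LSTM outputs: batch 2, 5 steps, hidden size 4
h_n = rng.normal(size=(2, 4))            # fake final hidden state used as the query
token_ids = np.array([[3, 1, 4, 1, 5],
                      [9, 2, 6, 0, 0]])  # the second row is right-padded with 0
context, weights = dot_product_attention(outputs, h_n, mask=token_ids != 0)
print(weights.round(3))   # each row sums to 1; the two padded positions get weight 0
print(context.shape)      # (2, 4)
```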

```python
print(train.shape)
print(targets.shape)

kf = KFold(n_splits=5, shuffle=True, random_state=2021)   # 5-fold cross-validation
for fold, (train_idx, test_idx) in enumerate(kf.split(train, targets)):
    print('-' * 15, '>', f'Fold {fold + 1}', '<', '-' * 15)
    x_train, x_val = train[train_idx], train[test_idx]
    y_train, y_val = targets[train_idx], targets[test_idx]
    M_train = len(x_train)
    M_val = len(x_val)
    x_train = torch.from_numpy(x_train).long().to(device)
    x_val = torch.from_numpy(x_val).long().to(device)
    y_train = torch.from_numpy(y_train).long().to(device)
    y_val = torch.from_numpy(y_val).long().to(device)

    model = Bi_Lstm().to(device)
    optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
    loss_func = nn.CrossEntropyLoss()   # multi-class classification

    for step in range(training_step):
        print('step=', step)
        #-----------------training------------------
        model.train()   # BN and Dropout behave differently in train and eval mode
        with tqdm(range(0, M_train, batch_size), desc='Training...') as tbar:
            for L in tbar:   # iterating over tbar already advances the progress bar
                R = min(M_train, L + batch_size)
                if R - L < 2:   # BatchNorm1d needs more than one sample per batch in train mode
                    continue
                train_pre = model(x_train[L:R])   # forward pass: predicted logits
                train_loss = loss_func(train_pre, y_train[L:R])
                optimizer.zero_grad()   # clear gradients left over from the previous step
                train_loss.backward()   # back-propagate the training loss
                optimizer.step()        # apply the update to the parameters
                train_acc = (train_pre.argmax(dim=1) == y_train[L:R]).float().mean().item()
                tbar.set_postfix(train_loss=train_loss.item(), train_acc=train_acc)

        #-----------------validation: eval mode, no gradients, batched to save memory------------------
        model.eval()
        val_loss, val_correct = 0.0, 0
        with torch.no_grad():
            for L in range(0, M_val, batch_size):
                R = min(M_val, L + batch_size)
                val_pre = model(x_val[L:R])
                val_loss += loss_func(val_pre, y_val[L:R]).item() * (R - L)
                val_correct += (val_pre.argmax(dim=1) == y_val[L:R]).sum().item()
        print(f'val_loss={val_loss / M_val:.4f} val_acc={val_correct / M_val:.4f}')

    del model
```

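PS: Strangely, adding attention raised the validation score noticeably while the training score was only average. Overall, though, attention did give the model's overall expressive power a certain boost.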