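To keep strengthening MobTech's (袤博科技) capabilities in digital-intelligence innovation and technical evangelism, this issue of Geek Planet (极客星球) invited 勤佳, an engineer in MobTech's Enterprise Services R&D department, to share experience with Elasticsearch. The talk covers cluster installation, the query DSL, deep pagination, the IK analyzer and rollover indices.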

I. Cluster Environment Setup

Elasticsearch is a distributed, highly scalable, near-real-time search and data analytics engine.

1.1 Installing Elasticsearch

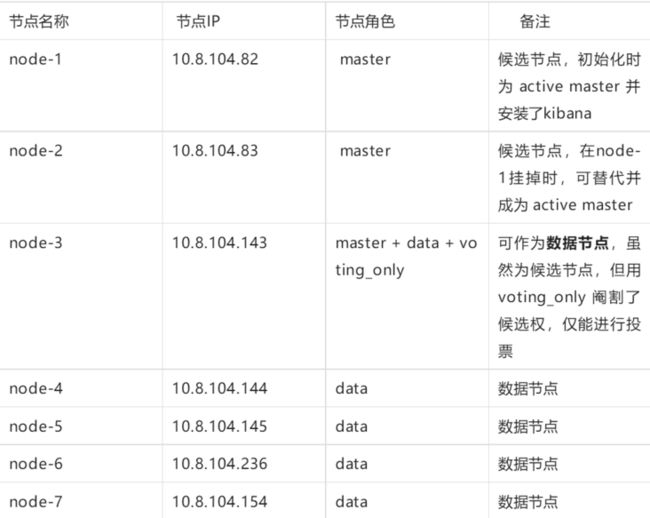

1.1.1 Nodes

This installation uses version 8.4.1, so I also chose a recent JDK: JDK 18.

1.1.2 Installation steps

Refer to the official documentation:

(https://www.elastic.co/guide/...)

Download the package:

(wget https://artifacts.elastic.co/...)

Extract it:

```shell
tar -xzf elasticsearch-8.4.1-linux-x86_64.tar.gz
cd elasticsearch-8.4.1/
```

Edit the configuration file (on every node):

```shell
vim config/elasticsearch.yml
```

```yaml
# Cluster name: must be identical on every node of the same cluster
cluster.name: my-application
# Node name: must be different on every node of the cluster; can be omitted for a single-node deployment
node.name: node-1
# Node roles, e.g. master, data, voting_only
node.roles: [master]
network.host: 10.8.104.82
http.port: 9200
# Discovery seed hosts of the cluster; remove for a single-node deployment
discovery.seed_hosts: ["10.8.104.82:9300","10.8.104.83:9300","10.8.104.143:9300"]
# Master-eligible nodes used to bootstrap the cluster the first time it starts
cluster.initial_master_nodes: ["node-1", "node-2","node-3"]
# Security is enabled by default in 8.4.1; it can be switched off here
xpack.security.enabled: false
xpack.security.enrollment.enabled: false
# Enable encryption for HTTP API client connections, such as Kibana, Logstash, and Agents
xpack.security.http.ssl:
  enabled: false
# Disable machine learning
xpack.ml.enabled: false
```

Start it. Start the master node first, and run Elasticsearch as a regular user, not root:

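```shell
useradd elastic
cd ..
chown -R elastic:elastic elasticsearch-8.4.1
su elastic
# su keeps the current directory, so step back into the installation directory
cd elasticsearch-8.4.1
./bin/elasticsearch -d
```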

1.2 Installing Kibana

Kibana is an open-source analytics and visualization platform designed for Elasticsearch.

1.2.1 Installation steps

Refer to the official documentation:

(https://www.elastic.co/guide/...)

Download the package:

(curl -O https://artifacts.elastic.co/...)

Extract it:

```shell
tar -xzf kibana-8.4.1-linux-x86_64.tar.gz
cd kibana-8.4.1/
```

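Edit the configuration file:

```shell
vim config/kibana.yml
```

```yaml
server.port: 5601
server.host: "10.8.104.82"
server.name: "my-kibana"
# URL(s) of the Elasticsearch HTTP endpoint(s)
elasticsearch.hosts:
```

Start Kibana. It cannot be started as root, so use a regular user: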

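```shell
useradd elastic   # skip if the user already exists
cd ..
chown -R elastic:elastic kibana-8.4.1
su elastic
# su keeps the current directory, so step back into the installation directory
cd kibana-8.4.1
nohup bin/kibana &
```

Web UI: (http://10.8.104.82:5601)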

1.3 The elasticsearch-head plugin

Download it from the Chrome Web Store:

(https://chrome.google.com/web...)

Search for Multi Elasticsearch Head and install it.

II. Query DSL (Domain Specific Language)

2.1 Basic queries

Query all documents:

```
GET /product/_search
```

With query-string parameters:

```
GET /product/_search?q=name.keyword:苹果AirPods
```

Pagination and sorting:

```
GET /product/_search?from=0&size=5&sort=price:desc
```

2.2 Full-text queries

match: returns documents that contain at least one term of the analyzed query text.

```
GET /_analyze
{
  "analyzer": "ik_max_word",
  "text": ["联想电脑"]
}
```

ik_max_word splits 联想电脑 into two terms, 联想 and 电脑. With match, a document only needs to contain one of them:

```
GET /product/_search
{
  "query": {
    "match": { "name": "联想电脑" }
  }
}
```

match_all: matches all documents

```
GET /product/_search
{
  "query": {
    "match_all": {}
  }
}
```

multi_match: queries several fields at once

```
GET /product/_search
{
  "query": {
    "multi_match": {
      "query": "苹果",
      "fields": ["desc", "name"]
    }
  }
}
```

match_phrase: phrase query

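ik_max_word splits 联想电脑 into 联想 and 电脑. Unlike match, match_phrase requires a document to contain both 联想 and 电脑, adjacent and in that order:

```
GET /product/_search
{
  "query": {
    "match_phrase": { "name": "联想电脑" }
  }
}
```

match_phrase_prefix: phrase-prefix query. It behaves like match_phrase, except that the last term is treated as a prefix and expanded, wildcard-style, against the terms in the inverted index. Compare: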

```
GET /product/_search
{
  "query": {
    "match_phrase": { "name": "联" }
  }
}

GET /product/_search
{
  "query": {
    "match_phrase_prefix": { "name": "联" }
  }
}
```

2.3 Exact queries: term query

term: matches documents whose indexed term is exactly equal to the search term.

Difference between term and match_phrase:

match_phrase analyzes the search text. Every resulting token must appear among the tokens of the searched field, in the same order and, by default, adjacent to each other.

term does not analyze the search text.
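A toy Java model makes the three behaviours above concrete. It is not Elasticsearch code and rests on several assumptions:

- The field and the query have already been analyzed into token lists, as in the 联想 / 电脑 example.
- Scoring, slop and operator options are ignored.
- A keyword field is modelled as a single token holding the whole value.

```java
import java.util.List;

/**
 * Toy model, not Elasticsearch code: decides whether a field matches a query under
 * match, match_phrase and term semantics. Token lists are assumed to be already analyzed.
 */
final class QueryModes {
    // match (default operator OR): at least one query token occurs in the field
    static boolean match(List<String> fieldTokens, List<String> queryTokens) {
        return queryTokens.stream().anyMatch(fieldTokens::contains);
    }

    // match_phrase with slop 0: all query tokens occur next to each other, in order
    static boolean matchPhrase(List<String> fieldTokens, List<String> queryTokens) {
        if (queryTokens.isEmpty()) return false;
        int n = queryTokens.size();
        for (int start = 0; start + n <= fieldTokens.size(); start++) {
            if (fieldTokens.subList(start, start + n).equals(queryTokens)) return true;
        }
        return false;
    }

    // term: the search value is NOT analyzed and must equal one indexed token exactly
    static boolean term(List<String> fieldTokens, String value) {
        return fieldTokens.contains(value);
    }

    public static void main(String[] args) {
        List<String> name = List.of("联想", "电脑");      // analyzed text field "name" (assumed tokens)
        List<String> nameKeyword = List.of("联想电脑");   // keyword field: the whole value is one token
        System.out.println(match(name, List.of("华为", "电脑")));       // true, shares 电脑
        System.out.println(matchPhrase(name, List.of("电脑", "联想"))); // false, wrong order
        System.out.println(matchPhrase(name, List.of("联想", "电脑"))); // true
        System.out.println(term(name, "联想电脑"));                     // false, no such single token
        System.out.println(term(nameKeyword, "联想电脑"));              // true
    }
}
```

The last two lines mirror the term-versus-keyword distinction below: the same unanalyzed value fails against the text field but matches the keyword field.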

Difference between term and keyword:

term means the search text is not analyzed.

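keyword is a field type: the field value in the source data is not analyzed and is indexed as a single token.

```
# name.keyword holds the whole value as one token, so this exact term matches
GET /product/_search
{
  "query": {
    "term": {
      "name.keyword": {
        "value": "联想电脑"
      }
    }
  }
}
```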

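```
# name is analyzed (into 联想 / 电脑), so the unanalyzed term 联想电脑 usually matches nothing
GET /product/_search
{
  "query": {
    "term": {
      "name": {
        "value": "联想电脑"
      }
    }
  }
}
```

terms: matches documents containing any of the listed terms, similar to SQL IN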

```
GET /product/_search
{
  "query": {
    "terms": {
      "name.keyword": ["联想电脑", "华为电脑"]
    }
  }
}
```

range: range query

```
GET /product/_search
{
  "query": {
    "range": {
      "price": {
        "gte": 5000,
        "lte": 10000
      }
    }
  }
}
```

2.4 Filters

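Difference between filter and query: a query computes relevance scores, whereas a filter does not. Filter results can also be cached, which makes filtering more efficient.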

```
GET /product/_search
{
  "query": {
    "constant_score": {
      "filter": {
        "term": { "name.keyword": "苹果电脑" }
      },
      "boost": 1
    }
  }
}
```
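A toy cache shows why filters are cheaper. A filter only answers yes or no per document, so its result fits in a bitset that can be reused. constant_score then gives every hit the same score, the boost. The class below is hypothetical; Elasticsearch's real query cache works per segment and applies its own policy about which filters to cache.

```java
import java.util.BitSet;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Toy model (hypothetical, not Elasticsearch's cache): a filter answers only yes/no per
 * document, so its result can be stored as a bitset and reused; constant_score then gives
 * every matching document the same score, equal to boost.
 */
final class FilterCacheDemo {
    private final List<String> nameKeyword;              // nameKeyword.get(i) = name.keyword of doc i
    private final Map<String, BitSet> cache = new HashMap<>();
    int evaluations = 0;                                  // times a filter was actually computed

    FilterCacheDemo(List<String> nameKeyword) {
        this.nameKeyword = nameKeyword;
    }

    // term filter on name.keyword, computed at most once per value
    BitSet termFilter(String value) {
        return cache.computeIfAbsent("term:name.keyword:" + value, key -> {
            evaluations++;
            BitSet hits = new BitSet(nameKeyword.size());
            for (int doc = 0; doc < nameKeyword.size(); doc++) {
                if (nameKeyword.get(doc).equals(value)) hits.set(doc);
            }
            return hits;
        });
    }

    // constant_score: no relevance calculation, every hit scores `boost`
    Map<Integer, Float> constantScore(String value, float boost) {
        Map<Integer, Float> scores = new HashMap<>();
        BitSet hits = termFilter(value);
        for (int doc = hits.nextSetBit(0); doc >= 0; doc = hits.nextSetBit(doc + 1)) {
            scores.put(doc, boost);
        }
        return scores;
    }

    public static void main(String[] args) {
        FilterCacheDemo demo = new FilterCacheDemo(List.of("苹果电脑", "联想电脑", "苹果电脑"));
        System.out.println(demo.constantScore("苹果电脑", 1f)); // {0=1.0, 2=1.0}
        demo.constantScore("苹果电脑", 1f);                      // answered from the cache
        System.out.println(demo.evaluations);                    // 1
    }
}
```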

2.5 Compound queries: bool query

bool: combines multiple query clauses:

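- must: the clause must appear in matching documents and contributes to the score.
- filter: the clause must appear in matching documents, but it does not contribute to the score and is considered for caching.
- should: the clause should appear in matching documents (OR semantics).
- minimum_should_match: the number or percentage of should clauses a returned document must match. If the bool query has at least one should clause and no must or filter clauses, the default is 1; otherwise it is 0.
- must_not: the clause must not appear in matching documents. It does not contribute to the score and acts like NOT.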
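The clause rules above can be restated as a small evaluator that only decides whether a document matches; scores are ignored. This is a hypothetical sketch. The Product record and its fields are invented for illustration, loosely following the product index used in the example query below.

```java
import java.util.List;
import java.util.function.Predicate;

/**
 * Toy evaluator deciding whether one document matches a bool query (scoring ignored).
 * Not Elasticsearch code; it only restates the clause rules of the bool query.
 */
final class BoolQuery {
    private final List<Predicate<Product>> must, filter, should, mustNot;
    private final Integer minimumShouldMatch; // null means "not set"

    BoolQuery(List<Predicate<Product>> must, List<Predicate<Product>> filter,
              List<Predicate<Product>> should, List<Predicate<Product>> mustNot,
              Integer minimumShouldMatch) {
        this.must = must;
        this.filter = filter;
        this.should = should;
        this.mustNot = mustNot;
        this.minimumShouldMatch = minimumShouldMatch;
    }

    // Default: 1 if there are should clauses but no must/filter clauses, otherwise 0
    int effectiveMinimumShouldMatch() {
        if (minimumShouldMatch != null) return minimumShouldMatch;
        return (!should.isEmpty() && must.isEmpty() && filter.isEmpty()) ? 1 : 0;
    }

    boolean matches(Product doc) {
        // must and filter: every clause has to match (only must would affect the score)
        if (!must.stream().allMatch(p -> p.test(doc))) return false;
        if (!filter.stream().allMatch(p -> p.test(doc))) return false;
        // must_not: no clause may match
        if (mustNot.stream().anyMatch(p -> p.test(doc))) return false;
        // should: at least the effective minimum_should_match clauses have to match
        long hits = should.stream().filter(p -> p.test(doc)).count();
        return hits >= effectiveMinimumShouldMatch();
    }

    public static void main(String[] args) {
        // Roughly the example: filter type=电脑, must tag=商务办公 and price>=10000, should type=耳机
        BoolQuery q = new BoolQuery(
                List.of(p -> p.tag().equals("商务办公"), p -> p.price() >= 10000),
                List.of(p -> p.type().equals("电脑")),
                List.of(p -> p.type().equals("耳机")),
                List.of(), 0);
        System.out.println(q.matches(new Product("电脑", "商务办公", 12000))); // true
        System.out.println(q.matches(new Product("耳机", "商务办公", 12000))); // false: fails the filter

        // Only should clauses: minimum_should_match falls back to 1
        BoolQuery shouldOnly = new BoolQuery(List.of(), List.of(),
                List.of(p -> p.type().equals("耳机")), List.of(), null);
        System.out.println(shouldOnly.matches(new Product("电脑", "商务办公", 12000))); // false
    }
}

// Hypothetical document type used only by this sketch
record Product(String type, String tag, double price) {}
```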

```
GET /product/_search
{
  "query": {
    "bool": {
      "filter": [
        { "term": { "type": "电脑" } }
      ],
      "must": [
        { "term": { "tag.keyword": { "value": "商务办公" } } },
        { "range": { "price": { "gte": 10000 } } }
      ],
      "should": [
        { "term": { "type": { "value": "耳机" } } }
      ],
      "minimum_should_match": 0,
      "must_not": [
        { "exists": { "field": "noField" } }
      ]
    }
  }
}
```

2.6 Script queries

Computed fields in a query (script_fields):

```
GET product/_search
{
  "_source": ["price"],
  "script_fields": {
    "myprice": {
      "script": {
        "source": "doc['price'].value*2"
      }
    }
  }
}
```

Update with a script:

```
GET product/_doc/2

POST product/_update/2
{
  "script": {
    "source": "ctx._source.price+=1"
  }
}
```

Reindex with a script (_reindex):

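```
POST _reindex
{
  "source": { "index": "product" },
  "dest": { "index": "product1" },
  "script": { "source": "ctx._source.price+=2" }
}
```

Parameterized scripts. Passing values through params, instead of hard-coding them in source, lets Elasticsearch reuse the compiled script when only the values change: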

```
GET product/_search
{
  "_source": ["price"],
  "script_fields": {
    "my_price": {
      "script": {
        "source": "doc['price'].value * params.discount",
        "params": { "discount": 0.9 }
      }
    },
    "multi_my_price": {
      "script": {
        "source": "[doc['price'].value * params.discount_9,doc['price'].value * params.discount_8,doc['price'].value * params.discount_7,doc['price'].value * params.discount_6,doc['price'].value * params.discount_5]",
        "params": {
          "discount_9": 0.9,
          "discount_8": 0.8,
          "discount_7": 0.7,
          "discount_6": 0.6,
          "discount_5": 0.5
        }
      }
    }
  }
}
```

2.7 Aggregations

Bucket aggregation:

```
GET /product/_search?size=0
{
  "aggs": {
    "type_agg": {
      "terms": {
        "field": "type",
        "size": 10
      }
    }
  }
}
```
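Conceptually, the terms aggregation above groups documents by the value of type, counts each group (doc_count) and keeps the size largest buckets. The single-node Java sketch below shows only that idea; it is not how Elasticsearch executes the aggregation. In a real cluster each shard returns its own top buckets and the coordinating node merges them, which is why terms doc counts can be approximate. Breaking ties by key is a choice made for this sketch.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Toy single-node model of a terms aggregation (not Elasticsearch code). */
final class TermsAgg {
    // Group by value, count, order by count descending (ties: key ascending), keep the top `size`
    static Map<String, Long> terms(List<String> fieldValues, int size) {
        Map<String, Long> counts = fieldValues.stream()
                .collect(Collectors.groupingBy(v -> v, Collectors.counting()));
        return counts.entrySet().stream()
                .sorted(Map.Entry.<String, Long>comparingByValue().reversed()
                        .thenComparing(Map.Entry.<String, Long>comparingByKey()))
                .limit(size)
                .collect(Collectors.toMap(Map.Entry::getKey, Map.Entry::getValue,
                        (a, b) -> a, LinkedHashMap::new)); // LinkedHashMap keeps bucket order
    }

    public static void main(String[] args) {
        // hypothetical values of the "type" field across six documents
        List<String> types = List.of("电脑", "耳机", "电脑", "手机", "电脑", "耳机");
        System.out.println(terms(types, 2)); // {电脑=3, 耳机=2}
    }
}
```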

Date histogram (date_histogram):

```
GET product/_search?size=0
{
  "aggs": {
    "date_range": {
      "date_histogram": {
        "field": "create_time",
        "fixed_interval": "1d",
        "min_doc_count": 0,
        "format": "yyyy-MM-dd",
        "keyed": false,
        // documents without create_time are treated as having this value
        "missing": "1990-11-28",
        "order": { "_key": "desc" },
        "extended_bounds": {
          "min": "2022-09-01",
          "max": "2022-12-10"
        }
      }
    }
  }
}
```

Metric aggregations:

```
GET /product/_search?size=0
{
  "aggs": {
    "price_sum":   { "sum":         { "field": "price" } },
    "price_avg":   { "avg":         { "field": "price" } },
    "price_max":   { "max":         { "field": "price" } },
    "price_min":   { "min":         { "field": "price" } },
    "price_count": { "value_count": { "field": "price" } },
    "price_stats": { "stats":       { "field": "price" } }
  }
}
```

Pipeline aggregations:

```
GET product/_search?size=0
{
  "aggs": {
    "type_bucket": {
      "terms": { "field": "type", "size": 10 },
      "aggs": {
        "price_sum": { "sum": { "field": "price" } }
      }
    },
    "min_sum_bucket": {
      "min_bucket": { "buckets_path": "type_bucket>price_sum" }
    },
    "max_sum_bucket": {
      "max_bucket": { "buckets_path": "type_bucket>price_sum" }
    },
    "create_time_bucket": {
      "date_histogram": {
        "field": "create_time",
        "calendar_interval": "month",
        "format": "yyyy-MM"
      },
      "aggs": {
        "price_sum": { "sum": { "field": "price" } }
      }
    },
    "min_sum_create_bucket": {
      "min_bucket": { "buckets_path": "create_time_bucket>price_sum" }
    }
  }
}
```

III. The IK Analyzer

3.1 IK files

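IK provides two analyzers:

- ik_smart: the coarsest split. For example, 中华人民共和国国歌 becomes 中华人民共和国 and 国歌. The text is segmented only once, so each character belongs to exactly one token.
- ik_max_word: the finest split. A character may appear in several tokens, and every word found in the dictionary is emitted. A single character is not emitted on its own if it already appears inside an emitted word.

Files:

- IKAnalyzer.cfg.xml: the IK configuration file
- main.dic: the main dictionary
- stopword.dic: English stop words; these are not added to the inverted index
- Special dictionaries:
  - quantifier.dic: units of measure and similar words
  - suffix.dic: administrative-division suffixes
  - surname.dic: Chinese surnames
  - preposition.dic: modal particles
- Custom dictionaries: internet slang, trending words, coined words, etc.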
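The difference between the two modes can be approximated with a toy dictionary segmenter. This is not IK's algorithm; ik_smart, for instance, also resolves ambiguous splits. The sketch assumes a tiny hand-picked dictionary, DEMO_DICT, which is hypothetical. It shows only the idea:

- max-word emits every dictionary word that starts at each position.
- smart uses forward maximum matching and keeps the longest word at each step.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

/**
 * Toy dictionary segmenter approximating the two IK modes (not IK's real algorithm).
 * Characters not covered by any dictionary word are emitted as single characters.
 */
final class ToySegmenter {
    // Hypothetical demo dictionary
    static final Set<String> DEMO_DICT = Set.of(
            "中华", "中华人民", "中华人民共和国", "华人", "人民", "人民共和国", "共和", "共和国", "国歌");

    // Fine-grained: every dictionary word starting at each position (longest first)
    static List<String> maxWord(String text, Set<String> dict) {
        List<String> out = new ArrayList<>();
        int coveredUntil = 0; // end (exclusive) of the furthest word emitted so far
        for (int i = 0; i < text.length(); i++) {
            boolean found = false;
            for (int j = text.length(); j > i + 1; j--) {
                String w = text.substring(i, j);
                if (dict.contains(w)) {
                    out.add(w);
                    found = true;
                    coveredUntil = Math.max(coveredUntil, j);
                }
            }
            // a character not inside any emitted word becomes a single-character token
            if (!found && i >= coveredUntil) out.add(text.substring(i, i + 1));
        }
        return out;
    }

    // Coarse-grained: forward maximum matching, no overlaps
    static List<String> smart(String text, Set<String> dict) {
        List<String> out = new ArrayList<>();
        int i = 0;
        while (i < text.length()) {
            int end = i + 1; // fall back to a single character
            for (int j = text.length(); j > i + 1; j--) {
                if (dict.contains(text.substring(i, j))) {
                    end = j;
                    break;
                }
            }
            out.add(text.substring(i, end));
            i = end;
        }
        return out;
    }

    public static void main(String[] args) {
        String text = "中华人民共和国国歌";
        System.out.println(smart(text, DEMO_DICT));   // [中华人民共和国, 国歌]
        System.out.println(maxWord(text, DEMO_DICT)); // [中华人民共和国, 中华人民, 中华, 华人, 人民共和国, 人民, 共和国, 共和, 国歌]
    }
}
```

With DEMO_DICT, smart reproduces the 中华人民共和国 / 国歌 split described above. maxWord also emits overlapping words such as 中华, 华人, 人民 and 共和国.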

3.2 Installing the IK analyzer plugin

3.2.1 Official ik-analysis repository

(https://github.com/medcl/elas...)

Run this on every node, or do it on one node and scp the files to the others:

```shell
cd elasticsearch-8.4.1/
./bin/elasticsearch-plugin install https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v8.4.1/elasticsearch-analysis-ik-8.4.1.zip
```

To add extension dictionaries, edit the configuration:

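```shell
vim config/analysis-ik/IKAnalyzer.cfg.xml
```

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE properties SYSTEM "http://java.sun.com/dtd/properties.dtd">
<properties>
    <comment>IK Analyzer 扩展配置</comment>
    <!-- local extension dictionary -->
    <entry key="ext_dict">extra_single_word.dic</entry>
</properties>
```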

Restart the Elasticsearch cluster after changing the configuration.

3.3 Remote dictionaries in IK

3.3.1 Remote dictionaries over HTTP

Change the following settings in the IK configuration file:

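```xml
<!-- remote extension dictionary -->
<entry key="remote_ext_dict">http://yoursite.com/getCustomDict?dicType=1</entry>
<!-- remote stop-word dictionary -->
<entry key="remote_ext_stopwords">http://yoursite.com/getCustomDict?dicType=2</entry>
```

A sample endpoint that serves both word lists (Spring MVC):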

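```java
import java.io.File;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.util.Objects;
import javax.servlet.http.HttpServletResponse; // jakarta.servlet.http on Spring Boot 3
import org.apache.commons.codec.digest.DigestUtils;
import org.apache.commons.io.IOUtils;
import org.springframework.core.io.ClassPathResource;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController // the class name is illustrative
public class IkDictController {
    private static final String HEAD_LAST_MODIFIED = "Last-Modified";
    private static final String HEAD_ETAG = "ETag";

    // Point remote_ext_dict / remote_ext_stopwords at this path: ...?dicType=1 (words), dicType=2 (stop words)
    @RequestMapping("extra_dic")
    public void extraDic(String dicType, HttpServletResponse response) throws IOException {
        String pathName = Objects.equals(dicType, "1") ? "extra.dic" : "stop.dic";
        // getFile() only works when the resource is a file on disk, not packed inside a jar
        final File file = new ClassPathResource(pathName).getFile();
        final String md5Hex;
        try (InputStream in = new FileInputStream(file)) {
            md5Hex = DigestUtils.md5Hex(in);
        }
        // The plugin expects two string headers, Last-Modified and ETag; when either changes it
        // fetches the word list again. Both carry the file's MD5, so any edit triggers a reload.
        response.setHeader(HEAD_LAST_MODIFIED, md5Hex);
        response.setHeader(HEAD_ETAG, md5Hex);
        response.setCharacterEncoding(StandardCharsets.UTF_8.name());
        // text/plain, one word per line
        response.setContentType("text/plain;charset=UTF-8");
        try (InputStream inputStream = new FileInputStream(file);
             OutputStream outputStream = response.getOutputStream()) {
            IOUtils.copy(inputStream, outputStream);
            outputStream.flush();
        } finally {
            response.flushBuffer();
        }
    }
}
```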

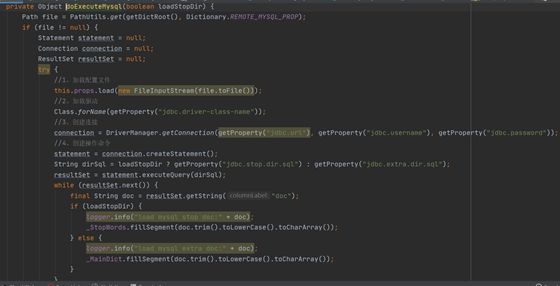
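On the plugin side, the rule in the controller's comment (reload when Last-Modified or ETag changes) amounts to remembering the last pair of header values. A hypothetical, simplified model follows. The polling schedule, the HTTP requests and the actual reload are left out.

```java
import java.util.Objects;

/**
 * Simplified model (hypothetical class, not the plugin's code) of the remote-dictionary
 * change check: remember the last Last-Modified and ETag values and reload when either changes.
 */
final class RemoteDictChangeDetector {
    private String lastModified; // null until the first response is seen
    private String eTag;

    /** Returns true, and remembers the new values, if the word list should be (re)loaded. */
    synchronized boolean shouldReload(String newLastModified, String newETag) {
        boolean changed = !Objects.equals(lastModified, newLastModified)
                || !Objects.equals(eTag, newETag);
        if (changed) {
            lastModified = newLastModified;
            eTag = newETag;
        }
        return changed;
    }

    public static void main(String[] args) {
        RemoteDictChangeDetector detector = new RemoteDictChangeDetector();
        // With the controller above, both headers carry the MD5 of the dictionary file
        System.out.println(detector.shouldReload("md5-a", "md5-a")); // true: first response
        System.out.println(detector.shouldReload("md5-a", "md5-a")); // false: unchanged
        System.out.println(detector.shouldReload("md5-b", "md5-b")); // true: file edited
    }
}
```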

3.3.2 Remote dictionaries from MySQL

Add a jdbc.properties file to the IK plugin's configuration directory:

properties

jdbc.url=jdbc:mysql://127.0.0.1:3306/test_elastic?useUnicode=true&characterEncoding=utf8&serverTimezone=Asia/Shanghai

jdbc.username=root

jdbc.password=root

jdbc.driver-class-name=com.mysql.cj.jdbc.Driver

jdbc.extra.dir.sql=select doc from elastic_extra_doc;

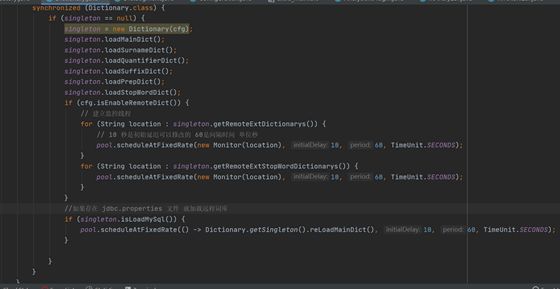

jdbc.stop.dir.sql=select doc from elastic_stop_doc;org.wltea.analyzer.dic.Dictionary#initial 入口处新增加载mysql逻辑
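A possible shape for the loading step is sketched below. It rests on these assumptions:

- The jdbc.properties keys are the ones shown above.
- Each word is one row in a column named doc, as the configured SQL selects.
- The class and method names are hypothetical.
- The MySQL JDBC driver is on the plugin's classpath.

The sketch deliberately stops at returning the words. How it is called from Dictionary#initial, and how the words reach IK's in-memory dictionary, depend on the plugin's source and version.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

/** Hypothetical loader: reads jdbc.properties and fetches one word per row from the `doc` column. */
final class JdbcDictLoader {
    static Properties loadConfig(InputStream in) {
        Properties props = new Properties();
        try {
            props.load(in);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    /** key is "jdbc.extra.dir.sql" (extension words) or "jdbc.stop.dir.sql" (stop words). */
    static String normalizedSql(Properties cfg, String key) {
        String sql = cfg.getProperty(key, "").trim();
        // drop the trailing ';' used in the properties file; a single JDBC statement does not need it
        return sql.endsWith(";") ? sql.substring(0, sql.length() - 1).trim() : sql;
    }

    static List<String> loadWords(Properties cfg, String key) throws SQLException {
        try {
            Class.forName(cfg.getProperty("jdbc.driver-class-name")); // register the driver
        } catch (ClassNotFoundException e) {
            throw new SQLException("JDBC driver not found on the classpath", e);
        }
        List<String> words = new ArrayList<>();
        try (Connection conn = DriverManager.getConnection(cfg.getProperty("jdbc.url"),
                     cfg.getProperty("jdbc.username"), cfg.getProperty("jdbc.password"));
             PreparedStatement ps = conn.prepareStatement(normalizedSql(cfg, key));
             ResultSet rs = ps.executeQuery()) {
            while (rs.next()) {
                String word = rs.getString("doc");
                if (word != null && !word.isBlank()) words.add(word.trim());
            }
        }
        return words;
    }

    public static void main(String[] args) {
        // Parsing only; loadWords needs a reachable MySQL instance
        String sample = "jdbc.extra.dir.sql=select doc from elastic_extra_doc;\n";
        Properties cfg = loadConfig(new ByteArrayInputStream(sample.getBytes(StandardCharsets.ISO_8859_1)));
        System.out.println(normalizedSql(cfg, "jdbc.extra.dir.sql")); // select doc from elastic_extra_doc
    }
}
```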

IV. rollIndex: Rollover Indices

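When the current index becomes too large or too old, the rollover API creates a new index and moves the alias to it. Rollover is usually combined with an index template.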

4.1 Rollover walkthrough

Create the index template:

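```
# legacy template API (deprecated since 7.8 in favour of PUT _index_template, still available in 8.x)
PUT _template/log_template
{
  "index_patterns": ["mylog*", "testlog*"],
  "settings": {
    "number_of_shards": 5,
    "number_of_replicas": 2
  },
  "mappings": {
    "properties": {
      "id":   { "type": "keyword" },
      "name": { "type": "keyword" },
      "code": { "type": "keyword" }
    }
  }
}
```

Create the index: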

The template already supplies the mappings and settings, so none are needed here:

```
PUT /testlog-000001
```

Create an alias and set is_write_index to true. An alias can have only one write index; the other indices behind it serve reads.

```
POST /_aliases
{
  "actions": [
    {
      "add": {
        "index": "testlog-000001",
        "alias": "testlog_roll",
        "is_write_index": true
      }
    }
  ]
}
```

Insert data:

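```
# bulk insert through the alias (documents go to its write index)
POST testlog_roll/_bulk
{"create":{}}
{"name":"jimas01","code":"test01"}
{"create":{}}
{"name":"jimas02","code":"test02"}
{"create":{}}
{"name":"jimas03","code":"test03"}
{"create":{}}
{"name":"jimas04","code":"test04"}
{"create":{}}
{"name":"jimas05","code":"test05"}
```

Run the rollover with conditions. A rollover only happens when this request is executed, and only if at least one of the max_* conditions is met: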

```
POST /testlog_roll/_rollover
{
  "conditions": {
    "max_age": "1d",
    "max_docs": 10,
    "max_size": "5kb"
  }
}
```

Check the alias:

```
GET /_alias/testlog_roll
```

After two rollovers, testlog-000003 is the write index and the older indices remain readable through the alias:

```json
{
  "testlog-000003" : {
    "aliases" : {
      "testlog_roll" : {
        "is_write_index" : true
      }
    }
  },
  "testlog-000002" : {
    "aliases" : {
      "testlog_roll" : {
        "is_write_index" : false
      }
    }
  },
  "testlog-000001" : {
    "aliases" : {
      "testlog_roll" : {
        "is_write_index" : false
      }
    }
  }
}
```

You can write a rollover script and run it on a schedule to roll the indices over.
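If you script the rollover yourself, two rules matter:

- The alias rolls over when any one of the max_* conditions is met.
- When the current write index name ends in a hyphen and a number, the new index gets that number plus one, zero-padded to six digits; testlog-000001 becomes testlog-000002.

The Java sketch below models only these two rules, using hypothetical index statistics. It does not call Elasticsearch.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Toy model of the rollover decision and index naming (not Elasticsearch code). */
final class RolloverSketch {
    record IndexStats(long ageMillis, long docs, long primarySizeBytes) {}
    record Conditions(long maxAgeMillis, long maxDocs, long maxPrimarySizeBytes) {}

    private static final Pattern NUMBERED = Pattern.compile("^(.*-)(\\d+)$");

    // Roll over when ANY max_* condition is reached
    static boolean shouldRollOver(IndexStats s, Conditions c) {
        return s.ageMillis() >= c.maxAgeMillis()
                || s.docs() >= c.maxDocs()
                || s.primarySizeBytes() >= c.maxPrimarySizeBytes();
    }

    // testlog-000001 -> testlog-000002; the number is always zero-padded to six digits
    static String nextIndexName(String current) {
        Matcher m = NUMBERED.matcher(current);
        if (!m.matches()) {
            throw new IllegalArgumentException("index name must end with -<number>: " + current);
        }
        return m.group(1) + String.format("%06d", Long.parseLong(m.group(2)) + 1);
    }

    public static void main(String[] args) {
        // conditions from the request above: max_age 1d, max_docs 10, max_size 5kb (5 * 1024 bytes)
        Conditions c = new Conditions(24L * 60 * 60 * 1000, 10, 5L * 1024);
        // hypothetical stats of the current write index: 1 hour old, 5 docs, 6000 bytes
        IndexStats s = new IndexStats(60L * 60 * 1000, 5, 6_000);
        System.out.println(shouldRollOver(s, c));            // true: size condition reached
        System.out.println(nextIndexName("testlog-000001")); // testlog-000002
    }
}
```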

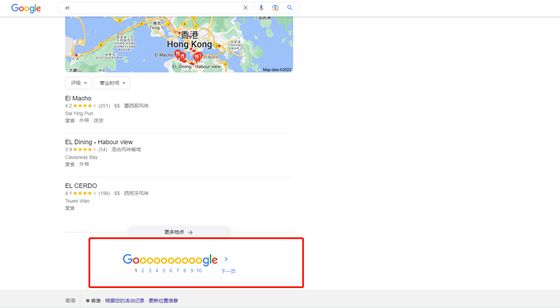

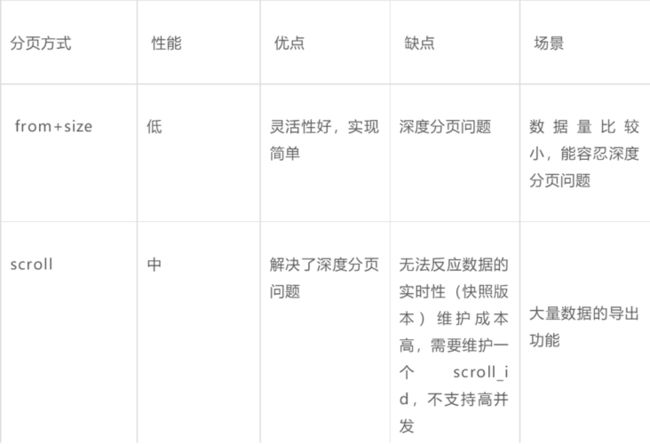

V. Deep Pagination

5.1 Anatomy of from/size deep pagination

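Elasticsearch pages results with from + size, and from starts at 0 by default. Suppose you need documents 10000 to 10010. from + size is then 10000 + 10, so the top 10010 hits must be collected and sorted before the last 10 are kept. Because Elasticsearch is distributed, every shard has to return its own top from + size hits. The coordinating node then merges them to pick the final 10. With n shards that is n × (from + size) entries, so a large from can run the node out of memory (OOM).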
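The arithmetic above can be written down directly. This back-of-the-envelope Java model covers only the query phase and uses a hypothetical shard count of 5. The value 10,000 is the default of index.max_result_window.

```java
/** Back-of-the-envelope model of from + size paging (query phase only, hypothetical numbers). */
final class DeepPagingCost {
    static final long DEFAULT_MAX_RESULT_WINDOW = 10_000; // default index.max_result_window

    // each shard must return its own top (from + size) sort keys
    static long perShard(long from, long size) {
        return from + size;
    }

    // the coordinating node merges shards * (from + size) entries to keep only `size` of them
    static long mergedAtCoordinator(int shards, long from, long size) {
        return shards * (from + size);
    }

    // requests beyond the window are rejected ("Result window is too large")
    static boolean exceedsDefaultWindow(long from, long size) {
        return from + size > DEFAULT_MAX_RESULT_WINDOW;
    }

    public static void main(String[] args) {
        int shards = 5;                 // hypothetical shard count
        long from = 10_000, size = 10;  // the request shown below
        System.out.println(perShard(from, size));                    // 10010
        System.out.println(mergedAtCoordinator(shards, from, size)); // 50050
        System.out.println(exceedsDefaultWindow(from, size));        // true
        System.out.println(exceedsDefaultWindow(9_990, 10));         // false: exactly 10000 is allowed
    }
}
```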

GET /fz_chance_visit_record/_search?from=10000&size=10

## 报错信息如下:

Result window is too large, from + size must be less than or equal to: [10000] but was [10010]. See the scroll api for a more efficient way to request large data sets. This limit can be set by changing the [index.max_result_window] index level setting.

## 最直观的方法 直接修改max_result_window,但要考虑到自身集群内存大小,否则会频繁发生FGC

PUT /_settings

{

"index": {

"max_result_window": 500000

}

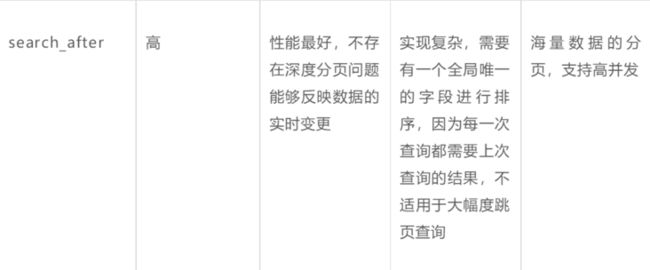

} 5.2 深度分页解决方案

5.2.1 Scroll queries

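Elastic no longer recommends the scroll API for deep pagination. To preserve the index state while paging through more than 10,000 hits, it recommends search_after with a point in time (PIT).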

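Scroll is meant for retrieving a large number of results in a single logical request. It does not suit high-concurrency scenarios, because every open scroll_id holds resources on the cluster, especially for sorted requests.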

```
GET /fz_chance_visit_record/_search?scroll=1m&size=10
{
  "query": {
    "match_all": {}
  }
}

# fetch the next batch with the scroll_id returned by the previous response
GET _search/scroll
{
  "scroll_id":"DnF1ZXJ5VGhlbkZldGNoCwAAAAAdL0Y3FlhlTXJmYXp2UlltMU1ianBPREZITncAAAAAHS9GNhZYZU1yZmF6dlJZbTFNYmpwT0RGSE53AAAAAAEerdMWTTFEWjR6N1dRM2kzaWZhS1hJQ1BHQQAAAAAdL0Y4FlhlTXJmYXp2UlltMU1ianBPREZITncAAAAAHS9GORZYZU1yZmF6dlJZbTFNYmpwT0RGSE53AAAAAAEerdYWTTFEWjR6N1dRM2kzaWZhS1hJQ1BHQQAAAAABHq3UFk0xRFo0ejdXUTNpM2lmYUtYSUNQR0EAAAAAHS9GOhZYZU1yZmF6dlJZbTFNYmpwT0RGSE53AAAAAAEerdUWTTFEWjR6N1dRM2kzaWZhS1hJQ1BHQQAAAAAdL0Y7FlhlTXJmYXp2UlltMU1ianBPREZITncAAAAAHS9GPBZYZU1yZmF6dlJZbTFNYmpwT0RGSE53"
}

# sliced scroll: slices can be consumed in parallel; max should normally not exceed the number of shards
GET /fz_chance_visit_record/_search?scroll=1m&size=10
{
  "query": {
    "match_all": {}
  },
  "slice": {
    // id runs from 0 to max - 1 (0 1 2 3 4)
    "id": 0,
    "max": 5
  }
}
```

5.2.2 search_after

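```
# set max_result_window to 5 so the limit is easy to hit in the demo
PUT product1/_settings
{
  "index": {
    "max_result_window": 5
  }
}

# plain pagination
GET product1/_search?size=5

# first page: sort by price descending, with _id as the tie-breaker
GET product1/_search?size=5
{
  "sort": [
    { "price": { "order": "desc" } },
    { "_id": { "order": "asc" } }
  ]
}

# next page: pass the sort values of the previous page's last hit
GET product1/_search?size=5
{
  "search_after": [5012, "9"],
  "sort": [
    { "price": { "order": "desc" } },
    { "_id": { "order": "asc" } }
  ]
}

GET product1/_search?size=5
{
  "search_after": [991, "4"],
  "sort": [
    { "price": { "order": "desc" } },
    { "_id": { "order": "asc" } }
  ]
}
```

Large companies have largely dropped arbitrary page jumps and use search_after for deep pagination. By prefetching a few pages ahead and behind, they can still offer a simple, limited page-jump feature.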
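The search_after mechanics can be sketched in memory:

- Sort with a total order: price descending, then _id ascending as the tie-breaker.
- Start each page strictly after the sort values of the previous page's last hit, so no page has to collect and discard `from` documents.

The data and the class are hypothetical. One caveat is worth checking for your version. Elastic's documentation advises against sorting on _id and recommends pairing search_after with a point in time (PIT), whose implicit _shard_doc tie-breaker keeps pages consistent while the index changes. That is not modelled here.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** In-memory sketch of search_after paging (hypothetical data, not Elasticsearch code). */
final class SearchAfterDemo {
    record Hit(String id, long price) {}

    // sort: price desc, then _id asc as the tie-breaker, so the order is total
    static final Comparator<Hit> ORDER =
            Comparator.comparingLong(Hit::price).reversed().thenComparing(Hit::id);

    /** Up to `size` hits that sort strictly after `after` (null for the first page). */
    static List<Hit> page(List<Hit> all, Hit after, int size) {
        List<Hit> sorted = new ArrayList<>(all);
        sorted.sort(ORDER);
        List<Hit> out = new ArrayList<>();
        for (Hit h : sorted) {
            if (after != null && ORDER.compare(h, after) <= 0) continue; // at or before the cursor
            if (out.size() == size) break;
            out.add(h);
        }
        return out;
    }

    public static void main(String[] args) {
        List<Hit> all = List.of(new Hit("1", 5012), new Hit("9", 5012), new Hit("2", 3000),
                new Hit("4", 991), new Hit("3", 991), new Hit("5", 100));
        List<Hit> page1 = page(all, null, 2);
        List<Hit> page2 = page(all, page1.get(page1.size() - 1), 2); // cursor = last hit's sort values
        List<Hit> page3 = page(all, page2.get(page2.size() - 1), 2);
        System.out.println(page1); // [Hit[id=1, price=5012], Hit[id=9, price=5012]]
        System.out.println(page2); // [Hit[id=2, price=3000], Hit[id=3, price=991]]
        System.out.println(page3); // [Hit[id=4, price=991], Hit[id=5, price=100]]
    }
}
```

Jumping to a page still means walking the cursors in between, which is why the text above settles for prefetching only a few nearby pages.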