Week 4 Programming Assignment - Programming Exercise 3: Multi-class Classification and Neural Networks - Machine Learning - Andrew Ng

Contents

- ex3-Multi-class Classification and Neural Networks
  - Exercise 3 | Part 1: One-vs-all Multi-class Classification
    - Part 1: Loading and Visualizing Data
    - Part 2 a: Vectorize Logistic Regression
      - Theory
      - Code
    - Part 2 b: One-vs-All Training
    - Part 3: Predict for One-Vs-All
  - Exercise 3 | Part 2: Neural Networks
    - Part 1: Loading and Visualizing Data
    - Part 2: Loading Parameters
    - Part 3: Implement Predict

ex3-Multi-class Classification and Neural Networks

Exercise 3 | Part 1: One-vs-all Multi-class Classification

Part 1: Loading and Visualizing Data

Part 1 of ex3.m:

%% =========== Part 1: Loading and Visualizing Data =============

% We start the exercise by first loading and visualizing the dataset.

% You will be working with a dataset that contains handwritten digits.

%

% Load Training Data

fprintf('Loading and Visualizing Data ...\n')

load('ex3data1.mat'); % training data stored in arrays X, y

m = size(X, 1);

% Randomly select 100 data points to display

rand_indices = randperm(m);

sel = X(rand_indices(1:100), :);

displayData(sel);

fprintf('Program paused. Press enter to continue.\n');

pause;

displayData.m, with explanatory comments added:

function [h, display_array] = displayData(X, example_width)

%DISPLAYDATA Display 2D data in a nice grid

% [h, display_array] = DISPLAYDATA(X, example_width) displays 2D data

% stored in X in a nice grid. It returns the figure handle h and the

% displayed array if requested.

% Set example_width automatically if not passed in

% If 'example_width' was not passed in or is empty, set it to the rounded square root of the number of columns of X

if ~exist('example_width', 'var') || isempty(example_width)

example_width = round(sqrt(size(X, 2)));

end

% Gray Image

colormap(gray);

% Compute rows, cols

% m=100, n=400 , example_height=20

[m n] = size(X);

example_height = (n / example_width);

% Compute number of items to display

% display_rows=10, display_cols=10: the digits are shown in 10 rows of 10 images each

display_rows = floor(sqrt(m));

display_cols = ceil(m / display_rows);

% Between images padding

% pad is the border width; the black lines between the images are this border. Set pad to 0 and compare the result.

pad = 1;

% Setup blank display

% display_array is a (1 + 10 * (20 + 1)) x (1 + 10 * (20 + 1)) matrix, i.e. 211 x 211

display_array = - ones(pad + display_rows * (example_height + pad), ...

pad + display_cols * (example_width + pad));

% Copy each example into a patch on the display array

% This is the key part

curr_ex = 1;

for j = 1:display_rows % j runs from 1 to 10

for i = 1:display_cols % i runs from 1 to 10

if curr_ex > m, % stop once all 100 examples have been placed

break;

end

% Copy the patch

% Get the max value of the patch

% Take the largest absolute value in this row of X so the patch can be scaled down proportionally (a kind of normalization)

max_val = max(abs(X(curr_ex, :)));

% Reshape the scaled row of X into a 20 x 20 patch and assign it to the matching block of display_array

display_array(pad + (j - 1) * (example_height + pad) + (1:example_height), ...

pad + (i - 1) * (example_width + pad) + (1:example_width)) = ...

reshape(X(curr_ex, :), example_height, example_width) / max_val;

curr_ex = curr_ex + 1;

end

if curr_ex > m,

break;

end

end

% Display Image

h = imagesc(display_array, [-1 1]);

% Do not show axis

axis image off

drawnow;

end

The key part is the following:

% Copy each example into a patch on the display array

% This is the key part

curr_ex = 1;

for j = 1:display_rows % j runs from 1 to 10

for i = 1:display_cols % i runs from 1 to 10

if curr_ex > m, % stop once all 100 examples have been placed

break;

end

% Copy the patch

% Get the max value of the patch

% Take the largest absolute value in this row of X so the patch can be scaled down proportionally (a kind of normalization)

max_val = max(abs(X(curr_ex, :)));

% Reshape the scaled row of X into a 20 x 20 patch and assign it to the matching block of display_array

display_array(pad + (j - 1) * (example_height + pad) + (1:example_height), ...

pad + (i - 1) * (example_width + pad) + (1:example_width)) = ...

reshape(X(curr_ex, :), example_height, example_width) / max_val;

curr_ex = curr_ex + 1;

end

if curr_ex > m,

break;

end

end

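In the excerpt, reshape(X(curr_ex, :), example_height, example_width), here reshape(X(curr_ex, :), 20, 20), turns row curr_ex of X (400 columns) into a 20x20 matrix. Dividing the whole matrix by max_val, the largest absolute value in that row, scales every entry into [-1, 1], much like the normalization covered earlier.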
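As a minimal sketch of this reshape-and-scale step, the snippet below applies the same two operations to a made-up 4-element row instead of a 400-pixel image. The values in `x` and the name `tile` are illustrative only. Note that `reshape` fills the result column by column.

```octave
% Toy stand-in for one row of X (4 "pixels" instead of 400); values are made up.
x = [0 -2 1 4];

% Same scaling as displayData.m: divide by the largest absolute value in the row.
max_val = max(abs(x));

% reshape fills column by column, so the first two entries form column 1.
tile = reshape(x, 2, 2) / max_val;

disp(tile);
```

After scaling, the entry with the largest magnitude becomes exactly 1 or -1, which is why imagesc can be called with the fixed range [-1 1].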

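The assignment display_array(1+(j-1)*21+(1:20), 1+(i-1)*21+(1:20)) = ... then copies that scaled matrix into the matching block of display_array. The row index 1+(j-1)*21+(1:20) is built from the three parts below; the column index 1+(i-1)*21+(1:20) works the same way.

- 1: the border, pad=1 (with pad=0 the result looks different)

- (1:20): the image is 20 pixels high, so (1:20) addresses the 20 rows of the image one by one

- (j-1)*21: 21 is the image height 20 plus one border line. For example, the second image in the first column is preceded by one border line, one full image, and another border line.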

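Example: take curr_ex = 1, i = 1, j = 1. Then

display_array ( 1 + 0 * 21 + (1:20), 1 + 0 * 21 + (1:20)) is the same as

display_array((2:21),(2:21)) = reshape(X(1,:), 20, 20) / max_val;

Each small image matrix is written into display_array block by block in this way. In this example the block is the image in row 1, column 1 of the output, which is the digit 7 in the resulting figure.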
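The index arithmetic above can be checked with a short sketch. It reuses the variable names from displayData.m; the helpers `tile_rows` and `tile_cols` are hypothetical names introduced here only for illustration.

```octave
% Values mirror displayData.m for 20x20 images with a 1-pixel border.
pad = 1;
example_height = 20;
example_width = 20;
display_rows = 10;
display_cols = 10;

% Row and column index ranges of the tile in grid row j / grid column i.
tile_rows = @(j) pad + (j - 1) * (example_height + pad) + (1:example_height);
tile_cols = @(i) pad + (i - 1) * (example_width + pad) + (1:example_width);

first_tile  = tile_rows(1);    % rows of the first image in a column
second_tile = tile_rows(2);    % rows of the image directly below it
last_cols   = tile_cols(10);   % columns of the last image in a row

% Overall canvas size, computed the same way as display_array.
canvas_size = [pad + display_rows * (example_height + pad), ...
               pad + display_cols * (example_width + pad)];

fprintf('tile 1 rows: %d..%d\n', first_tile(1), first_tile(end));
fprintf('tile 2 rows: %d..%d\n', second_tile(1), second_tile(end));
fprintf('canvas: %d x %d\n', canvas_size(1), canvas_size(2));
```

Row 22 belongs to neither tile: it is the border line between the first and second image, and column 211 is the closing border on the right.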

Reference: the Q&A thread "A question about the displayData function in week 4 of Andrew Ng's ML course (neural networks)".

Some of the syntax used in displayData.m:

p = randperm(n)

% Returns a row vector containing a random permutation of the integers from 1 to `n` inclusive.

p = randperm(n,k)

% Returns a row vector containing k unique integers selected at random from 1 to `n` inclusive.

load(filename)

%{

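Loads data from `filename`

- If `filename` is a MAT-file, `load(filename)` loads the variables in the MAT-file into the MATLAB® workspace.

- If `filename` is an ASCII file, `load(filename)` creates a double-precision array containing the data from the file. %}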

... % line continuation: the statement continues on the next line

Y = round(X)

% Rounds each element of `X` to the nearest integer. In a tie, where the fractional part is exactly `0.5`, `round` rounds away from zero to the integer with larger magnitude.

X = [2.11 3.5; -3.5 0.78];

Y = round(X)

Y = 2×2

2 4

-4 1

Y = floor(X)

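% Rounds each element of `X` to the nearest integer less than or equal to that element.

Y = ceil(X)

% Rounds each element of `X` to the nearest integer greater than or equal to that element.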

imagesc(C)

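% Displays the data in array `C` as an image that uses the full range of colors in the colormap. Each element of `C` specifies the color of one pixel of the image. The resulting image is an `m`-by-`n` grid of pixels, where `m` and `n` are the number of rows and columns in `C`. The row and column indices of the elements determine the centers of the corresponding pixels.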

B = reshape(A,sz1,...,szN)

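%{ Reshapes `A` into a `sz1`-by-`...`-by-`szN` array, where `sz1,...,szN` give the size of each dimension.

You can pass `[]` for a single dimension so that its size is computed automatically, such that the number of elements in `B` matches the number of elements in `A`. For example, if `A` is a 10-by-10 matrix, `reshape(A,2,2,[])` reshapes the 100 elements of `A` into a 2-by-2-by-25 array. %}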

c = exist(name ,type)

% Checks whether 'name' exists as a variable, function, file, folder, or class; 'type' restricts the check to one kind, e.g. 'var'.
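The functions listed above can be tried on small inputs. The following is a minimal sketch; all variable names are made up for illustration, and the randperm results differ from run to run.

```octave
% randperm(n): every integer 1..n appears exactly once.
p = randperm(10);

% randperm(n, k): k distinct integers drawn from 1..n.
k_sel = randperm(10, 3);

% round / floor / ceil on the same matrix used in the round example.
X = [2.11 3.5; -3.5 0.78];
Y_round = round(X);   % ties round away from zero
Y_floor = floor(X);   % toward minus infinity
Y_ceil  = ceil(X);    % toward plus infinity

% reshape with [] infers the remaining dimension from the element count.
A = reshape(1:100, 10, 10);
B = reshape(A, 2, 2, []);

% exist(name, 'var') returns 1 only when the variable is defined, else 0.
has_p = exist('p', 'var');
has_q = exist('undefined_variable_q', 'var');

disp(size(B));
```

The `exist('example_width', 'var')` test in displayData.m relies on the same return value to detect an omitted argument.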

Part 2 a: Vectorize Logistic Regression

Theory

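- Cost function and gradient of logistic regression

(1) Cost function:

$$J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \left[ -y^{(i)} \log\left(h_\theta(x^{(i)})\right) - \left(1-y^{(i)}\right) \log\left(1-h_\theta(x^{(i)})\right) \right]$$

Vectorized, this becomes:

$$h = g(X\theta)$$

$$J(\theta) = \frac{1}{m} \cdot \left(-y^{T}\log(h)-(1-y)^{T}\log(1-h)\right)$$

(2) Gradient:

$$\frac{\partial J(\theta)}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right) x_j^{(i)}, \qquad \nabla J(\theta) = \frac{1}{m} X^{T}(h - y)$$

- Cost function and gradient of regularized logistic regression (the formulas above with a penalty term added):

$$J(\theta) = \frac{1}{m} \cdot \left(-y^{T}\log(h)-(1-y)^{T}\log(1-h)\right) + \frac{\lambda}{2m} \sum_{j=1}^{n} \theta_j^2$$

$$\frac{\partial J(\theta)}{\partial \theta_0} = \frac{1}{m} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right) x_0^{(i)}, \qquad \frac{\partial J(\theta)}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right) x_j^{(i)} + \frac{\lambda}{m}\theta_j \quad (j \ge 1)$$

Note in particular: $\theta_0$ is not included in the regularization terms.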

Code

Part 2 a of ex3.m:

%% ============ Part 2a: Vectorize Logistic Regression ============

% In this part of the exercise, you will reuse your logistic regression

% code from the last exercise. Your task here is to make sure that your

% regularized logistic regression implementation is vectorized. After

% that, you will implement one-vs-all classification for the handwritten

% digit dataset.

%

% Test case for lrCostFunction

fprintf('\nTesting lrCostFunction() with regularization');

theta_t = [-2; -1; 1; 2];

X_t = [ones(5,1) reshape(1:15,5,3)/10];

y_t = ([1;0;1;0;1] >= 0.5);

lambda_t = 3;

[J grad] = lrCostFunction(theta_t, X_t, y_t, lambda_t);

fprintf('\nCost: %f\n', J);

fprintf('Expected cost: 2.534819\n');

fprintf('Gradients:\n');

fprintf(' %f \n', grad);

fprintf('Expected gradients:\n');

fprintf(' 0.146561\n -0.548558\n 0.724722\n 1.398003\n');

fprintf('Program paused. Press enter to continue.\n');

pause;

lrCostFunction.m needs to implement the regularized logistic regression cost function and gradient:

Tips:

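sum(z(2:end).^2) % square each element of z from the second to the last, then sum

% One way to write the regularized cost and gradient: write the unregularized logistic regression first, then add the extra terms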

grad = (unregularized gradient for logistic regression)

temp = theta;

temp(1) = 0; % because we don't add anything for j = 0

grad = grad + YOUR_CODE_HERE (using the temp variable)

lrCostFunction.m should be completed as follows:

function [J, grad] = lrCostFunction(theta, X, y, lambda)

%LRCOSTFUNCTION Compute cost and gradient for logistic regression with

%regularization

% J = LRCOSTFUNCTION(theta, X, y, lambda) computes the cost of using

% theta as the parameter for regularized logistic regression and the

% gradient of the cost w.r.t. to the parameters.

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

grad = zeros(size(theta));

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost of a particular choice of theta.

% You should set J to the cost.

% Compute the partial derivatives and set grad to the partial

% derivatives of the cost w.r.t. each parameter in theta

%

% Hint: The computation of the cost function and gradients can be

% efficiently vectorized. For example, consider the computation

%

% sigmoid(X * theta)

%

% Each row of the resulting matrix will contain the value of the

% prediction for that example. You can make use of this to vectorize

% the cost function and gradient computations.

%

% Hint: When computing the gradient of the regularized cost function,

% there're many possible vectorized solutions, but one solution

% looks like:

% grad = (unregularized gradient for logistic regression)

% temp = theta;

% temp(1) = 0; % because we don't add anything for j = 0

% grad = grad + YOUR_CODE_HERE (using the temp variable)

%

h = sigmoid(X * theta);

J = 1/m .* ( -y' * log(h)- (1-y)' * log(1-h)) + ...

lambda /(2*m) * sum(theta(2:end) .^2 ) ;

grad = 1/m .* X' * (h - y);

temp = theta;

temp(1) = 0;

grad = grad + lambda/m * temp;

% =============================================================

grad = grad(:);

end
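One way to gain confidence in the gradient is a finite-difference check. The sketch below is self-contained: `cost_fn` and `grad_fn` are hypothetical anonymous-function stand-ins that use the same formulas as lrCostFunction.m, and the inline `sigmoid` replaces the course's sigmoid.m. The test case and the expected values printed by ex3.m come from the script above.

```octave
% Stand-in for the course's sigmoid.m so the snippet runs on its own.
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Regularized cost and gradient (same formulas as lrCostFunction.m).
cost_fn = @(t, X, y, lambda) ...
    (-y' * log(sigmoid(X * t)) - (1 - y)' * log(1 - sigmoid(X * t))) / numel(y) ...
    + lambda / (2 * numel(y)) * sum(t(2:end) .^ 2);
grad_fn = @(t, X, y, lambda) ...
    X' * (sigmoid(X * t) - y) / numel(y) + lambda / numel(y) * [0; t(2:end)];

% Test case from ex3.m.
theta_t = [-2; -1; 1; 2];
X_t = [ones(5, 1) reshape(1:15, 5, 3) / 10];
y_t = [1; 0; 1; 0; 1];
lambda_t = 3;

J = cost_fn(theta_t, X_t, y_t, lambda_t);
grad = grad_fn(theta_t, X_t, y_t, lambda_t);

% Central-difference approximation of each partial derivative.
step = 1e-4;
num_grad = zeros(size(theta_t));
for j = 1:numel(theta_t)
    e = zeros(size(theta_t));
    e(j) = step;
    num_grad(j) = (cost_fn(theta_t + e, X_t, y_t, lambda_t) ...
                 - cost_fn(theta_t - e, X_t, y_t, lambda_t)) / (2 * step);
end

fprintf('Cost: %f\n', J);
fprintf('Largest analytic/numeric gradient gap: %g\n', max(abs(grad - num_grad)));
```

If the regularization term were wrongly applied to theta(1), the first component of the analytic and numeric gradients would still agree with each other, but both would differ from the expected value 0.146561 printed by ex3.m.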

Part 2 b: One-vs-All Training

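The exercise handout spells this out: oneVsAll.m must return the classifier parameters as a matrix $\theta \in \mathbb{R}^{K \times (N+1)}$, where each row holds the learned logistic regression parameters for one class. There are K classes, so there are K rows.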

In ex3.m:

%% ============ Part 2b: One-vs-All Training ============

fprintf('\nTraining One-vs-All Logistic Regression...\n')

lambda = 0.1;

[all_theta] = oneVsAll(X, y, num_labels, lambda);

fprintf('Program paused. Press enter to continue.\n');

pause;

oneVsAll.m is completed as follows:

function [all_theta] = oneVsAll(X, y, num_labels, lambda)

%ONEVSALL trains multiple logistic regression classifiers and returns all

%the classifiers in a matrix all_theta, where the i-th row of all_theta

%corresponds to the classifier for label i

% [all_theta] = ONEVSALL(X, y, num_labels, lambda) trains num_labels

% logistic regression classifiers and returns each of these classifiers

% in a matrix all_theta, where the i-th row of all_theta corresponds

% to the classifier for label i

% Some useful variables

m = size(X, 1);

n = size(X, 2);

% You need to return the following variables correctly

all_theta = zeros(num_labels, n + 1);

% Add ones to the X data matrix

X = [ones(m, 1) X];

% ====================== YOUR CODE HERE ======================

% Instructions: You should complete the following code to train num_labels

% logistic regression classifiers with regularization

% parameter lambda.

%

% Hint: theta(:) will return a column vector.

%

% Hint: You can use y == c to obtain a vector of 1's and 0's that tell you

% whether the ground truth is true/false for this class.

%

% Note: For this assignment, we recommend using fmincg to optimize the cost

% function. It is okay to use a for-loop (for c = 1:num_labels) to

% loop over the different classes.

%

% fmincg works similarly to fminunc, but is more efficient when we

% are dealing with large number of parameters.

%

% Example Code for fmincg:

%

% % Set Initial theta

% initial_theta = zeros(n + 1, 1);

%

% % Set options for fminunc

% options = optimset('GradObj', 'on', 'MaxIter', 50);

%

% % Run fmincg to obtain the optimal theta

% % This function will return theta and the cost

% [theta] = ...

% fmincg (@(t)(lrCostFunction(t, X, (y == c), lambda)), ...

% initial_theta, options);

%

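% Start every classifier from an all-zero parameter vector

initial_theta = zeros(n + 1, 1);

options = optimset('GradObj', 'on', 'MaxIter', 50);

% Train one regularized classifier per class; (y == c) marks class c as 1 and all others as 0

for c = 1:num_labels

all_theta(c, :) = fmincg(@(t) lrCostFunction(t, X, (y == c), lambda), ...

initial_theta, options);

end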

% =========================================================================

end
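The one-vs-all loop can be illustrated on a toy problem. This is a minimal sketch under stated assumptions: the nine 2-D points are made up, and plain gradient descent (with a hypothetical step size `alpha` and iteration count `num_iters`) stands in for fmincg so that the snippet runs without the course files.

```octave
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Toy data (made up): three well-separated 2-D clusters labelled 1, 2, 3.
X = [0 0; 1 0; 0 1;  5 0; 6 0; 5 1;  0 5; 1 5; 0 6];
y = [1; 1; 1; 2; 2; 2; 3; 3; 3];
num_labels = 3;
lambda = 0.1;

[m, n] = size(X);
Xb = [ones(m, 1) X];                 % add the intercept column
all_theta = zeros(num_labels, n + 1);

% Plain gradient descent stands in for fmincg here (an assumption of this sketch).
alpha = 0.1;
num_iters = 20000;
for c = 1:num_labels
    yc = double(y == c);             % 1 for class c, 0 for every other class
    theta = zeros(n + 1, 1);
    for it = 1:num_iters
        h = sigmoid(Xb * theta);
        grad = Xb' * (h - yc) / m + lambda / m * [0; theta(2:end)];
        theta = theta - alpha * grad;
    end
    all_theta(c, :) = theta';        % row c holds the classifier for class c
end

% Predict the class whose classifier gives the highest score.
[~, pred] = max(Xb * all_theta', [], 2);
fprintf('Training accuracy: %.1f%%\n', mean(double(pred == y)) * 100);
```

Each pass of the outer loop solves an ordinary binary problem; only the target vector `(y == c)` changes, which is why a single cost function serves all K classifiers.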

Part 3: Predict for One-Vs-All

In ex3.m:

%% ================ Part 3: Predict for One-Vs-All ================

pred = predictOneVsAll(all_theta, X);

fprintf('\nTraining Set Accuracy: %f\n', mean(double(pred == y)) * 100);

predictOneVsAll.m is completed as follows:

function p = predictOneVsAll(all_theta, X)

%PREDICT Predict the label for a trained one-vs-all classifier. The labels

%are in the range 1..K, where K = size(all_theta, 1).

% p = PREDICTONEVSALL(all_theta, X) will return a vector of predictions

% for each example in the matrix X. Note that X contains the examples in

% rows. all_theta is a matrix where the i-th row is a trained logistic

% regression theta vector for the i-th class. You should set p to a vector

% of values from 1..K (e.g., p = [1; 3; 1; 2] predicts classes 1, 3, 1, 2

% for 4 examples)

m = size(X, 1);

num_labels = size(all_theta, 1);

% You need to return the following variables correctly

p = zeros(size(X, 1), 1);

% Add ones to the X data matrix

X = [ones(m, 1) X];

% ====================== YOUR CODE HERE ======================

% Instructions: Complete the following code to make predictions using

% your learned logistic regression parameters (one-vs-all).

% You should set p to a vector of predictions (from 1 to

% num_labels).

%

% Hint: This code can be done all vectorized using the max function.

% In particular, the max function can also return the index of the

% max element, for more information see 'help max'. If your examples

% are in rows, then, you can use max(A, [], 2) to obtain the max

% for each row.

%

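% sigmoid is monotonically increasing, so the class with the largest X * theta

% is also the class with the largest predicted probability

h = sigmoid(X * all_theta');

[~, p] = max(h, [], 2);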

% =========================================================================

end
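The prediction step rests on the second output of max. The sketch below uses a made-up score matrix (3 examples, 4 classes) to show that the row-wise argmax is the same whether or not the sigmoid is applied first; the variable names are illustrative only.

```octave
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Made-up raw scores X * all_theta' for 3 examples (rows) and 4 classes (columns).
scores = [ 2.0 -1.0  0.5 -3.0;
          -4.0 -0.2 -1.5 -0.9;
           0.1  0.3  3.2  3.1];

% The second output of max is the column index of each row's maximum.
[best_score, p_raw] = max(scores, [], 2);

% sigmoid is strictly increasing, so it does not change which column wins.
[best_prob, p_prob] = max(sigmoid(scores), [], 2);

disp([p_raw p_prob]);
```

Applying the sigmoid only matters when the probabilities themselves are needed, for example to report how confident a prediction is.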

Exercise 3 | Part 2: Neural Networks

Part 1: Loading and Visualizing Data

Same as in Part 1 of the one-vs-all exercise above.

randperm % randomly shuffles the sequence of indices 1..n; n must be an integer.

>> randperm(5)

ans =

5 3 4 1 2

Part 2: Loading Parameters

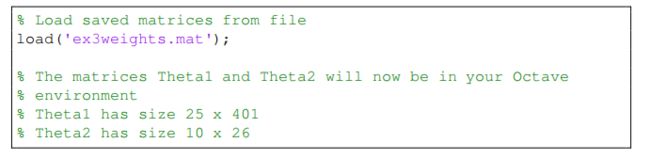

Theta1 and Theta2 have already been trained and are provided; they only need to be loaded, not trained.

Part 3: Implement Predict

In ex3_nn.m:

%% ================= Part 3: Implement Predict =================

% After training the neural network, we would like to use it to predict

% the labels. You will now implement the "predict" function to use the

% neural network to predict the labels of the training set. This lets

% you compute the training set accuracy.

pred = predict(Theta1, Theta2, X);

fprintf('\nTraining Set Accuracy: %f\n', mean(double(pred == y)) * 100);

fprintf('Program paused. Press enter to continue.\n');

pause;

% To give you an idea of the network's output, you can also run

% through the examples one at a time to see what it is predicting.

% Randomly permute examples

rp = randperm(m);

for i = 1:m

% Display

fprintf('\nDisplaying Example Image\n');

displayData(X(rp(i), :));

pred = predict(Theta1, Theta2, X(rp(i),:));

fprintf('\nNeural Network Prediction: %d (digit %d)\n', pred, mod(pred, 10));

% Pause with quit option

s = input('Paused - press enter to continue, q to exit:','s');

if s == 'q'

break

end

end

predict.m is completed as follows:

function p = predict(Theta1, Theta2, X)

%PREDICT Predict the label of an input given a trained neural network

% p = PREDICT(Theta1, Theta2, X) outputs the predicted label of X given the

% trained weights of a neural network (Theta1, Theta2)

% Useful values

m = size(X, 1);

num_labels = size(Theta2, 1);

% You need to return the following variables correctly

p = zeros(size(X, 1), 1);

% ====================== YOUR CODE HERE ======================

% Instructions: Complete the following code to make predictions using

% your learned neural network. You should set p to a

% vector containing labels between 1 to num_labels.

%

% Hint: The max function might come in useful. In particular, the max

% function can also return the index of the max element, for more

% information see 'help max'. If your examples are in rows, then, you

% can use max(A, [], 2) to obtain the max for each row.

%

X = [ones(m,1) X];

hidden = sigmoid(X * Theta1');

hidden = [ones(m , 1) hidden];

[M,p] = max(sigmoid(hidden * Theta2') ,[], 2);

% =========================================================================

end
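The feedforward pass in predict.m can be traced on a tiny network. This is a sketch with hand-picked weights for a hypothetical 2-input, 2-hidden-unit, 2-class network, not the 400-25-10 network from the exercise; the names `a1`, `a2`, `a3` are introduced here for the layer activations.

```octave
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Hand-picked weights (illustrative only): each row is [bias, weight 1, weight 2].
Theta1 = [-5 10  0;
          -5  0 10];     % hidden layer: 2 units x (2 inputs + bias)
Theta2 = [-5 10  0;
          -5  0 10];     % output layer: 2 classes x (2 hidden units + bias)

X = [1 0;
     0 1];               % two examples, one per row
m = size(X, 1);

a1 = [ones(m, 1) X];                        % input activations with bias
a2 = [ones(m, 1) sigmoid(a1 * Theta1')];    % hidden activations with bias
a3 = sigmoid(a2 * Theta2');                 % one output column per class

% The predicted label is the index of the largest output in each row.
[~, p] = max(a3, [], 2);
disp(p');

% In this dataset the label 10 stands for the digit 0, hence mod(pred, 10) in ex3_nn.m.
digit_for_label_10 = mod(10, 10);
```

The bias column is added twice, once before each weight matrix, which matches the two `ones(m, 1)` concatenations in predict.m.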