Hadoop: YARN

Contents


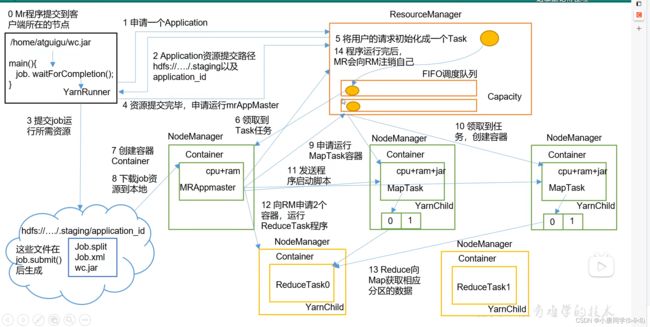

How YARN Works:

End-to-End Job Flow:

YARN Schedulers and Scheduling Algorithms:

FIFO Scheduler (First In, First Out):

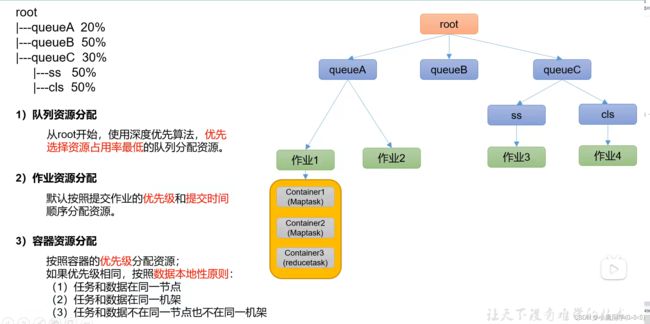

Capacity Scheduler:

Capacity Scheduler Resource Allocation Algorithm:


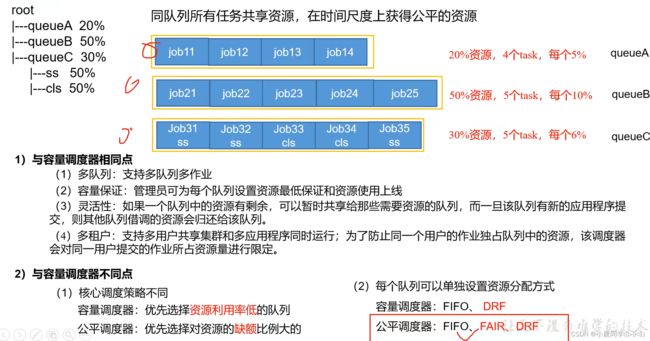

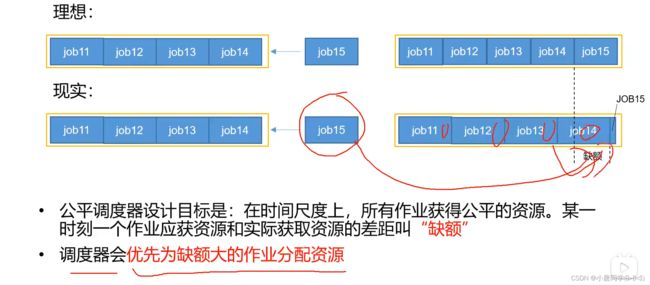

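Fair Scheduler:

Common YARN Commands:

yarn application: view applications

(1) List all applications:

(2) Filter applications by state:

(3) Kill an application:

yarn logs: view logs:

(1) Query application logs:

(2) Query container logs:

yarn applicationattempt: view application attempts

yarn container: view containers

(1) List all containers:

(2) Print container status:

Note: container status is only visible while the job is running

yarn node: view node status:

List all nodes:

yarn rmadmin: refresh configuration

Reload the queue configuration:

yarn queue: view queues:

Print queue information:

Core YARN Parameters for Production:

Configuration:

2.2.4 Task Priority

Fair Scheduler Example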

How YARN Works:

(In practice, this is mainly the interaction between YARN and MapReduce.)

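(0) Run the packaged Java program (wc.jar) on Linux. Its entry point is the main method.

The last line of the driver, job.waitForCompletion(), creates a YarnRunner on the local client.

(1) The YarnRunner applies to the cluster (the ResourceManager) for an Application. Its role is explained in more detail later.

(2) The RM returns the resource submission path for the Application, together with the application ID.

(3) The client uploads the resources the job needs (job.split, job.xml, wc.jar) to the path provided in step (2).

(4) Once the resources are uploaded, the client applies to run the MRAppMaster (the "boss" of the program).

(5) The RM initializes the user's request as a Task and then puts it into the task queue (a FIFO scheduling queue in this example).

(6) A NodeManager picks up the Task.

(7) The NodeManager creates a Container and starts an MRAppMaster in it. Every task runs inside a container, which bundles CPU, RAM and network resources.

(8) The MRAppMaster downloads the job resources to the local node.

(9) Based on the job resources (the splits), the MRAppMaster requests containers to run MapTasks.

(10) NodeManagers pick up the tasks and create MapTask containers (CPU + RAM + jar).

(11) The MRAppMaster sends the program startup script to launch the MapTasks.

(12) When the MapTasks finish, the MRAppMaster is notified. It then requests containers from the RM to run the ReduceTask program (two in this example, one per ReduceTask).

(13) Each ReduceTask fetches the data of its partition from the MapTasks.

(14) When the program finishes, the MRAppMaster unregisters itself from the RM, releasing its resources.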
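Steps (9) to (12) determine how many containers a job asks for: one for the MRAppMaster, one per MapTask (one MapTask per input split), and one per ReduceTask. The sketch below estimates that count.

It rests on a few assumptions:
- The split size equals the default HDFS block size (128 MB).
- Every input file is non-empty and splittable.
- It mimics FileInputFormat's rule that the last split of a file may be up to 10% larger than the split size.

The function names are hypothetical. Real jobs can also be affected by the minimum/maximum split size settings.

```python
MB = 1024 * 1024
SPLIT_SLOP = 1.1  # the last split of a file may be up to 10% larger than split_size


def compute_splits(file_size, split_size=128 * MB):
    """Split lengths for one non-empty, splittable file (FileInputFormat-style)."""
    splits = []
    remaining = file_size
    # Keep cutting full-size splits while what is left exceeds 1.1 x split_size.
    while remaining / split_size > SPLIT_SLOP:
        splits.append(split_size)
        remaining -= split_size
    if remaining > 0:
        splits.append(remaining)  # the tail becomes the last split
    return splits


def containers_needed(file_sizes, num_reduces, split_size=128 * MB):
    """Containers a MapReduce job requests: 1 MRAppMaster + MapTasks + ReduceTasks."""
    num_maps = sum(len(compute_splits(size, split_size)) for size in file_sizes)
    return {"am": 1, "maps": num_maps, "reduces": num_reduces,
            "total": 1 + num_maps + num_reduces}


if __name__ == "__main__":
    # Two hypothetical input files (300 MB and 129 MB) and 2 ReduceTasks, as in step (12).
    print(containers_needed([300 * MB, 129 * MB], num_reduces=2))
    # {'am': 1, 'maps': 4, 'reduces': 2, 'total': 7}
```

The 129 MB file stays a single split because 129/128 is below 1.1. The 300 MB file becomes 128 + 128 + 44 MB.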

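End-to-End Job Flow:

The key point is to understand how HDFS, YARN and MapReduce relate to one another:
- HDFS stores the input data, the job resources and the output.
- YARN allocates the containers.
- MapReduce performs the computation inside those containers.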

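YARN Schedulers and Scheduling Algorithms:

When many clients submit jobs to the cluster, the cluster places the jobs in queues and manages them there. The scheduler decides which job receives resources next.

Hadoop has three main job schedulers: FIFO, the Capacity Scheduler and the Fair Scheduler. The default resource scheduler in Apache Hadoop 3.1.3 is the Capacity Scheduler.

The default scheduler in CDH is the Fair Scheduler.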

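FIFO Scheduler (First In, First Out):

FIFO Scheduler (First In First Out): a single queue in which jobs are served in the order they were submitted (first come, first served).

Advantage: simple and easy to understand.

Disadvantage: it does not support multiple queues, so it is rarely used in production. Big data workloads involve large volumes and high concurrency, which a single queue cannot handle well.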
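To make the disadvantage concrete, here is a minimal single-queue FIFO simulation. It simplifies heavily by assuming each job occupies the whole cluster, so jobs run strictly one after another. The function name and the job tuples are hypothetical.

```python
from collections import deque


def fifo_schedule(jobs):
    """Simulate a single FIFO queue.

    jobs: list of (name, submit_time, duration).
    Assumption: each job uses the whole cluster, so jobs never overlap.
    Returns a list of (name, start, finish).
    """
    # sorted() is stable, so jobs submitted at the same time keep their input order.
    queue = deque(sorted(jobs, key=lambda job: job[1]))
    clock = 0
    timeline = []
    while queue:
        name, submit, duration = queue.popleft()  # always serve the head of the queue
        start = max(clock, submit)                # wait for submission and for the cluster
        finish = start + duration
        timeline.append((name, start, finish))
        clock = finish
    return timeline


if __name__ == "__main__":
    # A long job A blocks the short jobs B and C that arrive just after it.
    for name, start, finish in fifo_schedule([("A", 0, 10), ("B", 1, 2), ("C", 2, 1)]):
        print(name, start, finish)
```

Job B needs only 2 time units but cannot start until A finishes at time 10. Multi-queue schedulers are designed to avoid exactly this behavior.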

Capacity Scheduler:

The Capacity Scheduler is a multi-user scheduler developed by Yahoo (multiple users can submit jobs).

Capacity Scheduler Resource Allocation Algorithm:

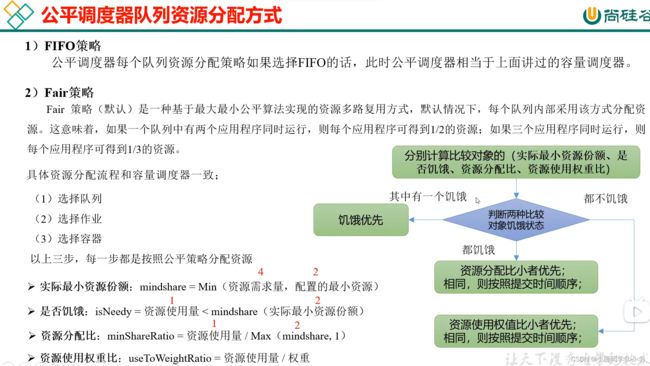
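The figure is not reproduced in text. A commonly taught summary of the Capacity Scheduler's allocation order has three levels:
- Queue level: starting from root, pick the child queue with the lowest resource usage ratio (used / capacity).
- Job level: inside a queue, serve higher-priority jobs first, then earlier submissions.
- Container level: order requests by container priority, then by data locality.

The sketch below covers only the first two levels for a single layer of queues. It ignores user limits, maximum capacity and queue elasticity. The function names and sample queues are hypothetical.

```python
def pick_queue(queues):
    """Choose the queue with the lowest used/capacity ratio.

    queues: dict of queue name -> (used_resources, guaranteed_capacity).
    Ties go to the queue that appears first in the dict.
    """
    return min(queues, key=lambda name: queues[name][0] / queues[name][1])


def order_jobs(jobs):
    """Order jobs inside a queue: higher priority first, then earlier submission.

    jobs: list of (job_name, priority, submit_time).
    """
    return [name for name, _prio, _t in sorted(jobs, key=lambda j: (-j[1], j[2]))]


if __name__ == "__main__":
    # Hypothetical queues whose usage ratios are 0.5, 0.5 and 0.25.
    queues = {"default": (20, 40), "hive": (30, 60), "etl": (5, 20)}
    print(pick_queue(queues))  # etl
    print(order_jobs([("j1", 0, 1), ("j2", 5, 3), ("j3", 5, 2)]))  # ['j3', 'j2', 'j1']
```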

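Fair Scheduler:

The Fair Scheduler is a multi-user scheduler developed by Facebook.

The deficit problem: at any moment, the gap between the resources a job should receive (its fair share) and the resources it actually holds is called its deficit. The Fair Scheduler gives priority to jobs with a larger deficit.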
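One way to picture fair share and deficit is equal-weight max-min fairness ("water-filling"):
1. Split the capacity equally among the jobs.
2. Jobs that need less than their slice keep only what they need.
3. The leftover is re-split among the remaining jobs.

This is a simplification. The real Fair Scheduler also honors queue weights and min/max shares. The function names and numbers below are hypothetical.

```python
def max_min_fair_share(capacity, demands):
    """Equal-weight max-min fair shares via water-filling.

    demands: dict of job -> resources it asks for.
    """
    shares = {job: 0.0 for job in demands}
    remaining = float(capacity)
    active = {job for job, d in demands.items() if d > 0}
    while active and remaining > 1e-9:
        equal = remaining / len(active)
        satisfied = set()
        for job in sorted(active):
            give = min(equal, demands[job] - shares[job])  # never exceed the demand
            shares[job] += give
            remaining -= give
            if shares[job] >= demands[job] - 1e-9:
                satisfied.add(job)
        if not satisfied:
            break  # every active job took an equal slice, so capacity is used up
        active -= satisfied
    return shares


def deficits(shares, used):
    """Deficit = fair share minus the resources the job currently holds."""
    return {job: shares[job] - used.get(job, 0) for job in shares}


if __name__ == "__main__":
    shares = max_min_fair_share(12, {"A": 1, "B": 2, "C": 6, "D": 5})
    print(shares)  # {'A': 1.0, 'B': 2.0, 'C': 4.5, 'D': 4.5}
    gaps = deficits(shares, {"A": 1, "B": 0, "C": 4, "D": 2})
    print(max(gaps, key=gaps.get))  # D has the largest deficit, so it is served first
```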

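Common YARN Commands:

yarn application: view applications

(1) List all applications:

yarn application -list shows applications (for example, running MapReduce jobs) and their states. Without a filter it lists applications in the SUBMITTED, ACCEPTED and RUNNING states.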

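The following command is often used in testing. A brief explanation:

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount /input /output

- hadoop: Hadoop's command-line tool.
- jar: the subcommand that runs a MapReduce program packaged as a Java jar.
- share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar: the MapReduce examples jar shipped with Hadoop. It contains several common example programs, wordcount among them.
- wordcount: the example program to run. It counts how many times each word appears in the input text. Because it is simple and ships with Hadoop, wordcount is usually one of the first programs studied when learning MapReduce. It also serves as a basic template for writing custom MapReduce jobs.
- /input: the input file or directory. The path is resolved against the default file system, which is normally HDFS on a cluster.
- /output: the output directory on HDFS. It must not exist yet: the job creates it, and the job fails if the directory already exists.

Running this command therefore starts a wordcount MapReduce job on the Hadoop cluster. The job counts the occurrences of each word in the text under /input and writes the result to /output.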
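The map, shuffle and reduce phases of wordcount can be imitated on a single machine to see what the job computes. This is an illustrative sketch, not the Hadoop example's code.
- Tokens are split on whitespace, which approximates the example's default tokenizer.
- Sorting by key mirrors the sorted input each reducer receives.

```python
from collections import defaultdict


def map_phase(lines):
    """Map: emit (word, 1) for every whitespace-separated token."""
    for line in lines:
        for word in line.split():
            yield word, 1


def shuffle(pairs):
    """Shuffle/sort: group values by key and sort by key, as reducers receive them."""
    groups = defaultdict(list)
    for word, count in pairs:
        groups[word].append(count)
    return sorted(groups.items())


def reduce_phase(grouped):
    """Reduce: sum the counts for each word."""
    return {word: sum(counts) for word, counts in grouped}


def wordcount(lines):
    return reduce_phase(shuffle(map_phase(lines)))


if __name__ == "__main__":
    print(wordcount(["hello world", "hello hadoop"]))
    # {'hadoop': 1, 'hello': 2, 'world': 1}
```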

(2) Filter applications by state:

yarn application -list -appStates <state> (possible states: ALL, NEW, NEW_SAVING, SUBMITTED, ACCEPTED, RUNNING, FINISHED, FAILED, KILLED)

For example, yarn application -list -appStates FINISHED lists the applications that have finished.
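When these commands are scripted, validating the state names before calling the CLI catches typos early. A minimal sketch follows.
- The helper name app_list_cmd is hypothetical.
- It only builds the argument list; it does not run anything.
- Several states are joined with commas, which -appStates accepts.

```python
# State names taken from the list above.
VALID_STATES = {"ALL", "NEW", "NEW_SAVING", "SUBMITTED", "ACCEPTED",
                "RUNNING", "FINISHED", "FAILED", "KILLED"}


def app_list_cmd(*states):
    """Build argv for `yarn application -list`, optionally filtered by state."""
    cmd = ["yarn", "application", "-list"]
    if states:
        upper = [s.upper() for s in states]
        bad = [s for s in upper if s not in VALID_STATES]
        if bad:
            raise ValueError(f"unknown application state(s): {bad}")
        cmd += ["-appStates", ",".join(upper)]
    return cmd
```

On a node with the YARN client installed, the result can be passed to subprocess.run(app_list_cmd("FINISHED"), check=True).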

(3) Kill an application:

yarn application -kill <ApplicationId>

Use this command to kill a job that is taking too long.

yarn logs: view logs:

(1) Query application logs:

yarn logs -applicationId <ApplicationId>

(2) Query container logs:

yarn logs -applicationId <ApplicationId> -containerId <ContainerId>
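A container ID embeds the ID of the application it belongs to and the attempt number. The application ID needed by yarn logs, and the attempt ID needed by yarn container -list, can therefore be derived from a container ID.

The regular expression assumes the common formats:
- container_<clusterTimestamp>_<appSeq>_<attempt>_<containerSeq>
- the same with an optional e<epoch>_ segment after "container_"

The IDs in the example are made up, and the helper names are hypothetical.

```python
import re

# container_[e<epoch>_]<clusterTimestamp>_<appSeq>_<attempt>_<containerSeq>
_CONTAINER_RE = re.compile(r"^container_(?:e(\d+)_)?(\d+)_(\d+)_(\d+)_(\d+)$")


def parse_container_id(container_id):
    """Return (application_id, application_attempt_id) for a container ID."""
    m = _CONTAINER_RE.match(container_id)
    if not m:
        raise ValueError(f"not a container ID: {container_id!r}")
    _epoch, cluster_ts, app_seq, attempt, _seq = m.groups()
    app_id = f"application_{cluster_ts}_{app_seq}"
    # Attempt IDs pad the attempt number to 6 digits.
    attempt_id = f"appattempt_{cluster_ts}_{app_seq}_{int(attempt):06d}"
    return app_id, attempt_id


def container_logs_cmd(container_id):
    """Build argv for `yarn logs -applicationId ... -containerId ...`."""
    app_id, _ = parse_container_id(container_id)
    return ["yarn", "logs", "-applicationId", app_id, "-containerId", container_id]


if __name__ == "__main__":
    print(container_logs_cmd("container_1612577921195_0001_01_000001"))
```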

yarn applicationattempt: view application attempts

This lists the attempts of an application and their state.

yarn applicationattempt -list <ApplicationId>

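yarn container: view containers

(1) List all containers:

yarn container -list <ApplicationAttemptId>

(2) Print container status:

yarn container -status <ContainerId>

Note: container status is only visible while the job is running.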

yarn node: view node status:

List all nodes:

yarn node -list -all

yarn rmadmin: refresh configuration

Reload the queue configuration without restarting the ResourceManager:

yarn rmadmin -refreshQueues

yarn queue: view queues:

Print queue information:

yarn queue -status <QueueName>

(Every cluster has a default queue.)

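Core YARN Parameters for Production:

Configuration:

Add the following properties to yarn-site.xml.

Then distribute the file to every node.

Restart the cluster after the change for it to take effect.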

yarn.resourcemanager.scheduler.class = org.apache.hadoop.yarn.server.resourcemanager.scheduler.capacity.CapacityScheduler

The class to use as the resource scheduler.

yarn.resourcemanager.scheduler.client.thread-count = 8

Number of threads to handle scheduler interface.

yarn.nodemanager.resource.detect-hardware-capabilities = false

Enable auto-detection of node capabilities such as memory and CPU.

yarn.nodemanager.resource.count-logical-processors-as-cores = false

Flag to determine if logical processors (such as hyperthreads) should be counted as cores. Only applicable on Linux when yarn.nodemanager.resource.cpu-vcores is set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true.

yarn.nodemanager.resource.pcores-vcores-multiplier = 1.0

Multiplier to determine how to convert physical cores to vcores. This value is used if yarn.nodemanager.resource.cpu-vcores is set to -1 (which implies auto-calculate vcores) and yarn.nodemanager.resource.detect-hardware-capabilities is set to true. The number of vcores will be calculated as number of CPUs * multiplier.
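The vcore-related descriptions above reduce to a small decision rule. The sketch below follows them directly. The function name and parameters are hypothetical. Rounding the product to the nearest integer (with a floor of 1) is an assumption about how fractional results are handled.

```python
def node_vcores(physical_cores, logical_processors, cpu_vcores=-1,
                detect_hardware=False, count_logical=False, multiplier=1.0):
    """Vcores a NodeManager advertises, per the property descriptions above."""
    if cpu_vcores != -1:
        return cpu_vcores  # an explicit yarn.nodemanager.resource.cpu-vcores is used as-is
    if not detect_hardware:
        return 8           # documented default when detection is off
    # count-logical-processors-as-cores decides which CPU count feeds the formula.
    cpus = logical_processors if count_logical else physical_cores
    return max(1, round(cpus * multiplier))  # rounding rule is an assumption


if __name__ == "__main__":
    # The configuration in this article: detection off, cpu-vcores = 4.
    print(node_vcores(4, 8, cpu_vcores=4))  # 4
```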

yarn.nodemanager.resource.memory-mb = 4096

Amount of physical memory, in MB, that can be allocated for containers. If set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true, it is automatically calculated (in case of Windows and Linux). In other cases, the default is 8192MB.

yarn.nodemanager.resource.cpu-vcores = 4

Number of vcores that can be allocated for containers. This is used by the RM scheduler when allocating resources for containers. This is not used to limit the number of CPUs used by YARN containers. If it is set to -1 and yarn.nodemanager.resource.detect-hardware-capabilities is true, it is automatically determined from the hardware in case of Windows and Linux. In other cases, number of vcores is 8 by default.

yarn.scheduler.minimum-allocation-mb = 1024

The minimum allocation for every container request at the RM in MBs. Memory requests lower than this will be set to the value of this property. Additionally, a node manager that is configured to have less memory than this value will be shut down by the resource manager.

yarn.scheduler.maximum-allocation-mb = 2048

The maximum allocation for every container request at the RM in MBs. Memory requests higher than this will throw an InvalidResourceRequestException.

yarn.scheduler.minimum-allocation-vcores = 1

The minimum allocation for every container request at the RM in terms of virtual CPU cores. Requests lower than this will be set to the value of this property. Additionally, a node manager that is configured to have fewer virtual cores than this value will be shut down by the resource manager.

yarn.scheduler.maximum-allocation-vcores = 2

The maximum allocation for every container request at the RM in terms of virtual CPU cores. Requests higher than this will throw an InvalidResourceRequestException.
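The four minimum/maximum properties above act as a filter on every container request. The sketch below applies exactly the rules in their descriptions, using the values configured here as defaults, and counts how many containers of a given size fit on one NodeManager (4096 MB, 4 vcores).

Caveats:
- The exception class and function names are hypothetical.
- The real scheduler may additionally round requests up to a multiple of an increment, which this sketch does not model.
- It counts both memory and vcores. The Capacity Scheduler's default DefaultResourceCalculator considers memory only.

```python
class InvalidResourceRequest(Exception):
    """Hypothetical stand-in for YARN's InvalidResourceRequestException."""


def normalize_request(mem_mb, vcores, min_mb=1024, max_mb=2048,
                      min_vcores=1, max_vcores=2):
    """Apply the min/max allocation rules described above."""
    if mem_mb > max_mb or vcores > max_vcores:
        raise InvalidResourceRequest(
            f"{mem_mb} MB / {vcores} vcores exceeds the maximum allocation")
    # Requests below the minimum are raised to the minimum.
    return max(mem_mb, min_mb), max(vcores, min_vcores)


def max_containers_per_node(mem_mb, vcores, node_mb=4096, node_vcores=4):
    """How many containers of one request size fit on a NodeManager."""
    mem_mb, vcores = normalize_request(mem_mb, vcores)
    return min(node_mb // mem_mb, node_vcores // vcores)


if __name__ == "__main__":
    print(normalize_request(512, 1))           # (1024, 1)
    print(max_containers_per_node(2048, 2))    # 2
```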

yarn.nodemanager.vmem-check-enabled = false

Whether virtual memory limits will be enforced for containers.

yarn.nodemanager.vmem-pmem-ratio = 2.1

Ratio between virtual memory to physical memory when setting memory limits for containers. Container allocations are expressed in terms of physical memory, and virtual memory usage is allowed to exceed this allocation by this ratio.
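The ratio translates directly into a virtual-memory ceiling per container: allocated physical memory times the ratio. For example, a 1024 MB container may use up to 1024 × 2.1 = 2150.4 MB of virtual memory.

The sketch below shows that arithmetic. It also shows that, with vmem-check-enabled set to false as above, the check never fires. The function names are hypothetical.

```python
def vmem_limit_mb(allocated_mb, vmem_pmem_ratio=2.1):
    """Virtual-memory ceiling for a container: physical allocation x ratio."""
    return allocated_mb * vmem_pmem_ratio


def violates_vmem(vmem_used_mb, allocated_mb, vmem_pmem_ratio=2.1,
                  vmem_check_enabled=False):
    """True if the virtual-memory check would flag the container."""
    if not vmem_check_enabled:
        return False  # with vmem-check-enabled = false the limit is not enforced
    return vmem_used_mb > vmem_limit_mb(allocated_mb, vmem_pmem_ratio)


if __name__ == "__main__":
    print(vmem_limit_mb(1024))                                  # about 2150.4
    print(violates_vmem(2200, 1024, vmem_check_enabled=True))   # True
    print(violates_vmem(2200, 1024))                            # False
```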

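2.2.4 Task Priority

The Capacity Scheduler supports job priorities: when resources are tight, higher-priority jobs get resources first. By default, YARN caps the priority of every job at 0, so you must lift this cap to use job priorities.

- Modify yarn-site.xml and add the following parameter:

Then distribute the configuration and restart YARN.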
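The sketch below shows why the cap matters. In Hadoop 3 the cap is the cluster-wide yarn.cluster.max-application-priority (default 0). It assumes that a requested priority above the cap is reduced to the cap. Within a queue, apps are ordered by higher priority first, then earlier submission. The function names and app tuples are hypothetical.

```python
def effective_priority(requested, cluster_max=0):
    """Requested priorities above the cluster-wide maximum are capped to it."""
    return min(requested, cluster_max)


def scheduling_order(apps, cluster_max=0):
    """apps: list of (app_id, requested_priority, submit_time).

    Higher effective priority first; ties fall back to submission order (FIFO).
    """
    ranked = sorted(apps, key=lambda a: (-effective_priority(a[1], cluster_max), a[2]))
    return [app_id for app_id, _prio, _t in ranked]


if __name__ == "__main__":
    apps = [("a", 5, 1), ("b", 0, 0), ("c", 3, 2)]
    print(scheduling_order(apps))                  # ['b', 'a', 'c']: cap 0 makes priorities irrelevant
    print(scheduling_order(apps, cluster_max=5))   # ['a', 'c', 'b']
```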

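Fair Scheduler Example

The Fair Scheduler is commonly used at medium and large companies, and it also has a default queue.

Create two queues, test and atguigu, each named after a user group. The goal:
- If a user specifies a queue when submitting a job, the job runs in that queue.
- If no queue is specified, jobs submitted by user test run in the root.group.test queue, and jobs submitted by user atguigu run in root.group.atguigu. Here, group is the user's group.

Configuring the Fair Scheduler involves two files: yarn-site.xml and the Fair Scheduler queue allocation file fair-scheduler.xml (the file name is customizable).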
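The placement rules in this example can be written as a small function:
- If the submitter names a queue, use that queue.
- Otherwise use root.<primary group>.<user>.

In fair-scheduler.xml such rules are usually expressed as queue placement policy rules (for example "specified", then a nested user queue under the primary group). The Python below only mirrors the intended outcome, and its names are hypothetical. Adding a "root." prefix to a bare queue name is an assumption about how short names are resolved.

```python
def place_application(user, primary_group, requested_queue=None):
    """Queue a job lands in under the rules described above."""
    if requested_queue:
        # Rule 1: an explicitly specified queue wins.
        if requested_queue.startswith("root."):
            return requested_queue
        return "root." + requested_queue
    # Rule 2: no queue given -> root.<primary group>.<user name>.
    return f"root.{primary_group}.{user}"


if __name__ == "__main__":
    print(place_application("test", "test"))                  # root.test.test
    print(place_application("test", "test", "root.atguigu")) # root.atguigu
```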