Building a ClickHouse + ZooKeeper Cluster with Docker

Create an Ubuntu virtual machine in VMware

Switch the apt sources

    # Back up the current source list
    sudo cp /etc/apt/sources.list /etc/apt/sources.list.bak
    # Open the file for editing
    sudo vi /etc/apt/sources.list
    # Delete everything in it and paste the following
    deb http://mirrors.aliyun.com/ubuntu/ focal main restricted universe multiverse
    deb-src http://mirrors.aliyun.com/ubuntu/ focal main restricted universe multiverse
    deb http://mirrors.aliyun.com/ubuntu/ focal-security main restricted universe multiverse
    deb-src http://mirrors.aliyun.com/ubuntu/ focal-security main restricted universe multiverse
    deb http://mirrors.aliyun.com/ubuntu/ focal-updates main restricted universe multiverse
    deb-src http://mirrors.aliyun.com/ubuntu/ focal-updates main restricted universe multiverse
    deb http://mirrors.aliyun.com/ubuntu/ focal-backports main restricted universe multiverse
    deb-src http://mirrors.aliyun.com/ubuntu/ focal-backports main restricted universe multiverse
    deb http://mirrors.aliyun.com/ubuntu/ focal-proposed main restricted universe multiverse
    deb-src http://mirrors.aliyun.com/ubuntu/ focal-proposed main restricted universe multiverse
    deb [arch=amd64] https://download.docker.com/linux/ubuntu focal stable
    # deb-src [arch=amd64] https://download.docker.com/linux/ubuntu focal stable
    # Update the package index
    sudo apt-get update

Set up the Docker environment

    # Remove any old Docker installation
    sudo apt-get remove docker docker-engine docker-ce docker.io
    # Install the packages that let apt use a repository over HTTPS
    sudo apt-get install -y apt-transport-https ca-certificates curl software-properties-common
    # Add Docker's official GPG key
    curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -
    # Set up the stable repository
    sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"
    # Install the latest Docker CE (this can be slow, depending on the network)
    sudo apt-get install -y docker-ce
    # Check the Docker service status
    systemctl status docker
    # Start the Docker service if it is not running
    sudo systemctl start docker
    # Switch Docker to a registry mirror:
    # log in to Aliyun and copy your accelerator address from
    # https://cr.console.aliyun.com/cn-hangzhou/instances/mirrors
    # Create or edit the daemon configuration file /etc/docker/daemon.json to use the accelerator
    sudo vim /etc/docker/daemon.json
    # Paste the following
    { "registry-mirrors": ["https://xxxxxxxx.mirror.aliyuncs.com"] }
    # Restart Docker so that the new configuration takes effect
    sudo systemctl daemon-reload
    sudo systemctl restart docker
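The daemon.json step can also be scripted instead of edited by hand. The following is a minimal sketch, not part of the original procedure: `write_daemon_json` is a hypothetical helper name, and it overwrites the target file, so it only suits a host whose daemon.json holds nothing else.

```shell
# Hypothetical helper: write a daemon.json that sets a single registry mirror.
# $1 = mirror URL, $2 = target file (defaults to /etc/docker/daemon.json)
# Note: this overwrites the target file.
write_daemon_json() (
    mirror_url=$1
    target=${2:-/etc/docker/daemon.json}
    printf '{\n  "registry-mirrors": ["%s"]\n}\n' "$mirror_url" > "$target"
)

# Example (needs root to write the real file; restart Docker afterwards):
# write_daemon_json "https://xxxxxxxx.mirror.aliyuncs.com"
```

The second argument exists mainly so that the helper can be tried against a scratch file first.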

Pull the yandex/clickhouse-server and zookeeper images

    docker pull yandex/clickhouse-server
    docker pull zookeeper

Clone the virtual machine

Clone it directly in VMware so that there are three servers in total.

Edit the hosts file

    # The IP addresses of the three servers end in 117, 105 and 103
    # Edit the hosts file on each of the three servers
    vim /etc/hosts
    # IP of server 1
    192.168.3.117 server01
    # IP of server 2
    192.168.3.105 server02
    # IP of server 3
    192.168.3.103 server03
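Since the same three entries go onto every server, the edit can be made repeatable. This is a sketch only; `add_host_entry` is a hypothetical helper that appends an entry unless the host name is already mapped, so running it twice does not duplicate lines.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already mapped.
# $1 = IP address, $2 = host name, $3 = hosts file (defaults to /etc/hosts)
add_host_entry() (
    ip=$1
    name=$2
    hosts_file=${3:-/etc/hosts}
    # Look for NAME as a whole word on a line that is not commented out
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
)

# Example (needs root to modify /etc/hosts):
# add_host_entry 192.168.3.117 server01
# add_host_entry 192.168.3.105 server02
# add_host_entry 192.168.3.103 server03
```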

Set up the ZooKeeper cluster

    # Create the directory that holds the ZooKeeper configuration
    sudo mkdir /usr/soft
    sudo mkdir /usr/soft/zookeeper

Run on server01:

    docker run -d -p 2181:2181 -p 2888:2888 -p 3888:3888 --name zookeeper_node --restart always \
      -v /usr/soft/zookeeper/data:/data \
      -v /usr/soft/zookeeper/datalog:/datalog \
      -v /usr/soft/zookeeper/logs:/logs \
      -v /usr/soft/zookeeper/conf:/conf \
      --network host \
      -e ZOO_MY_ID=1 zookeeper

Run on server02:

    docker run -d -p 2181:2181 -p 2888:2888 -p 3888:3888 --name zookeeper_node --restart always \
      -v /usr/soft/zookeeper/data:/data \
      -v /usr/soft/zookeeper/datalog:/datalog \
      -v /usr/soft/zookeeper/logs:/logs \
      -v /usr/soft/zookeeper/conf:/conf \
      --network host \
      -e ZOO_MY_ID=2 zookeeper

Run on server03:

    docker run -d -p 2181:2181 -p 2888:2888 -p 3888:3888 --name zookeeper_node --restart always \
      -v /usr/soft/zookeeper/data:/data \
      -v /usr/soft/zookeeper/datalog:/datalog \
      -v /usr/soft/zookeeper/logs:/logs \
      -v /usr/soft/zookeeper/conf:/conf \
      --network host \
      -e ZOO_MY_ID=3 zookeeper

The only difference between the three commands is the value of -e ZOO_MY_ID. Note that with --network host Docker discards the -p mappings, so here they only document which ports are in use.

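Edit the ZooKeeper configuration file

On each server, edit /usr/soft/zookeeper/conf/zoo.cfg:

    dataDir=/data
    dataLogDir=/datalog
    tickTime=2000
    initLimit=5
    syncLimit=2
    clientPort=2181
    autopurge.snapRetainCount=3
    autopurge.purgeInterval=0
    maxClientCnxns=60
    # IP of server 1
    server.1=192.168.3.117:2888:3888
    # IP of server 2
    server.2=192.168.3.105:2888:3888
    # IP of server 3
    server.3=192.168.3.103:2888:3888

The file is read when ZooKeeper starts, so restart the container on each server afterwards (docker restart zookeeper_node).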
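The `server.N` lines must be identical on all three servers, and N must match the ZOO_MY_ID given to the container on that host. One way to avoid typing them three times is to generate them. This is a sketch; `zk_server_lines` is a hypothetical helper, and the ports 2888 and 3888 are the ones used in the configuration above.

```shell
# Hypothetical helper: print one "server.N=IP:2888:3888" line per argument,
# numbering the servers from 1 in the order given.
zk_server_lines() (
    id=1
    for ip in "$@"; do
        printf 'server.%d=%s:2888:3888\n' "$id" "$ip"
        id=$((id + 1))
    done
)

# Prints the three server lines used in this setup
zk_server_lines 192.168.3.117 192.168.3.105 192.168.3.103
```

The output can be appended to zoo.cfg on each host, which keeps the ID-to-IP mapping in a single place.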

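Verify the ZooKeeper configuration

    docker exec -it zookeeper_node /bin/bash
    ./bin/zkServer.sh status

Output on success:

    ZooKeeper JMX enabled by default
    Using config: /conf/zoo.cfg
    Client port found: 2181. Client address: localhost. Client SSL: false.
    Mode: follower

In a healthy three-node ensemble, one server reports Mode: leader and the other two report Mode: follower.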
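Checking the status on three hosts by eye is easy to get wrong, so the check can be reduced to two small filters. This is a sketch under assumptions: `zk_mode` and `zk_quorum_ok` are hypothetical helper names, and the commented usage assumes password-less ssh between the servers, which the original text does not set up at this point.

```shell
# Hypothetical helper: read `zkServer.sh status` output on stdin and print only the mode.
zk_mode() {
    sed -n 's/^Mode: *//p'
}

# Hypothetical helper: read one mode per line on stdin and succeed only when
# there is exactly one leader and at least one follower.
zk_quorum_ok() (
    modes=$(cat)
    leaders=$(printf '%s\n' "$modes" | grep -c '^leader$' || true)
    followers=$(printf '%s\n' "$modes" | grep -c '^follower$' || true)
    [ "$leaders" -eq 1 ] && [ "$followers" -ge 1 ]
)

# Example (assumes ssh access to each server):
# for h in server01 server02 server03; do
#     ssh "$h" docker exec zookeeper_node ./bin/zkServer.sh status 2>/dev/null | zk_mode
# done | zk_quorum_ok && echo "quorum looks healthy"
```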

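Deploy the ClickHouse cluster

Copy the configuration out of a temporary container

    # Run a temporary container so that its configuration, data and log layout can be copied to the host
    docker run --rm -d --name=temp-ch yandex/clickhouse-server
    # Copy the files out of the container
    docker cp temp-ch:/etc/clickhouse-server/ /etc/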

Edit the configuration file

Edit /etc/clickhouse-server/config.xml:

    <!-- Listen on all IPv4 interfaces; use :: instead to accept IPv6 as well -->
    <listen_host>0.0.0.0</listen_host>
    <!-- Set the time zone -->
    <timezone>Asia/Shanghai</timezone>
    <!-- Delete the sample contents of the original remote_servers node, then add: -->
    <remote_servers incl="clickhouse_remote_servers" />
    <!-- Add at the same level as remote_servers -->
    <include_from>/etc/clickhouse-server/metrika.xml</include_from>
    <!-- Add at the same level as remote_servers -->
    <zookeeper incl="zookeeper-servers" optional="true" />
    <!-- Add at the same level as remote_servers -->
    <macros incl="macros" optional="true" />

Copy the configuration into the mount directories

    # Create the directories on each of server01, server02 and server03
    # Mount directory for the main instance
    sudo mkdir -p /usr/soft/clickhouse-server/main/conf
    # Mount directory for the sub instance
    sudo mkdir -p /usr/soft/clickhouse-server/sub/conf
    # Copy the contents of the configuration directory (note the trailing "/.",
    # which avoids creating a nested clickhouse-server directory inside conf)
    sudo cp -rf /etc/clickhouse-server/. /usr/soft/clickhouse-server/main/conf/
    sudo cp -rf /etc/clickhouse-server/. /usr/soft/clickhouse-server/sub/conf/

Edit the SSH configuration on every server so that scp works

    vim /etc/ssh/sshd_config
    # Change this setting
    PermitRootLogin yes
    # Restart the service
    systemctl restart sshd

Distribute to the other servers

    # Copy the configuration to server02
    scp -r /usr/soft/clickhouse-server/main/conf/ server02:/usr/soft/clickhouse-server/main/
    scp -r /usr/soft/clickhouse-server/sub/conf/ server02:/usr/soft/clickhouse-server/sub/
    # Copy the configuration to server03
    scp -r /usr/soft/clickhouse-server/main/conf/ server03:/usr/soft/clickhouse-server/main/
    scp -r /usr/soft/clickhouse-server/sub/conf/ server03:/usr/soft/clickhouse-server/sub/

Remove the temporary container

    docker rm -f temp-ch

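On server01, edit /usr/soft/clickhouse-server/sub/conf/config.xml so that its ports do not clash with those of the main instance, since both instances share the host's network.

Original ports: 8123, 9000, 9004, 9005, 9009

Changed to: 8124, 9001, 9005, 9010

Make the same change on server02 and server03, or distribute the edited file with scp.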
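The port changes can be applied with a small substitution helper instead of a manual edit. This is a sketch with stated assumptions: `set_port` is a hypothetical helper, and the element names (`http_port`, `tcp_port`, `interserver_http_port`, `mysql_port`) come from ClickHouse's stock config.xml, whereas the text above lists only the numbers. Check the names and values against your own file before running it.

```shell
# Hypothetical helper: change <TAG>OLD</TAG> to <TAG>NEW</TAG> in a config file.
# $1 = file, $2 = element name, $3 = old port, $4 = new port
set_port() (
    file=$1 tag=$2 old=$3 new=$4
    tmp=$(mktemp)
    # Write to a temporary file first, then copy back so the file keeps its permissions
    sed "s|<${tag}>${old}</${tag}>|<${tag}>${new}</${tag}>|" "$file" > "$tmp" && cat "$tmp" > "$file"
    rm -f "$tmp"
)

# Example for the sub instance (element-to-port mapping is an assumption):
# conf=/usr/soft/clickhouse-server/sub/conf/config.xml
# set_port "$conf" http_port 8123 8124
# set_port "$conf" tcp_port 9000 9001
# set_port "$conf" mysql_port 9004 9005
# set_port "$conf" interserver_http_port 9009 9010
```

Matching on the element name as well as the number keeps the substitution from touching an unrelated setting that happens to contain the same digits.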

On server01, add the cluster configuration file /usr/soft/clickhouse-server/main/conf/metrika.xml:

    <yandex>
      <clickhouse_remote_servers>
        <cluster_3s_1r>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server01</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server03</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server02</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server01</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server03</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server02</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
        </cluster_3s_1r>
      </clickhouse_remote_servers>
      <zookeeper-servers>
        <node index="1">
          <host>192.168.3.117</host>
          <port>2181</port>
        </node>
        <node index="2">
          <host>192.168.3.105</host>
          <port>2181</port>
        </node>
        <node index="3">
          <host>192.168.3.103</host>
          <port>2181</port>
        </node>
      </zookeeper-servers>
      <macros>
        <layer>01</layer>
        <shard>01</shard>
        <replica>cluster01-01-1</replica>
      </macros>
      <networks>
        <ip>::/0</ip>
      </networks>
      <clickhouse_compression>
        <case>
          <min_part_size>10000000000</min_part_size>
          <min_part_size_ratio>0.01</min_part_size_ratio>
          <method>lz4</method>
        </case>
      </clickhouse_compression>
    </yandex>

On server01, add the cluster configuration file /usr/soft/clickhouse-server/sub/conf/metrika.xml. It differs from the main file only in the macros section:

    <yandex>
      <clickhouse_remote_servers>
        <cluster_3s_1r>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server01</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server03</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server02</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server01</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
          <shard>
            <internal_replication>true</internal_replication>
            <replica>
              <host>server03</host>
              <port>9000</port>
              <user>default</user>
              <password></password>
            </replica>
            <replica>
              <host>server02</host>
              <port>9001</port>
              <user>default</user>
              <password></password>
            </replica>
          </shard>
        </cluster_3s_1r>
      </clickhouse_remote_servers>
      <zookeeper-servers>
        <node index="1">
          <host>192.168.3.117</host>
          <port>2181</port>
        </node>
        <node index="2">
          <host>192.168.3.105</host>
          <port>2181</port>
        </node>
        <node index="3">
          <host>192.168.3.103</host>
          <port>2181</port>
        </node>
      </zookeeper-servers>
      <macros>
        <layer>01</layer>
        <shard>02</shard>
        <replica>cluster01-02-2</replica>
      </macros>
      <networks>
        <ip>::/0</ip>
      </networks>
      <clickhouse_compression>
        <case>
          <min_part_size>10000000000</min_part_size>
          <min_part_size_ratio>0.01</min_part_size_ratio>
          <method>lz4</method>
        </case>
      </clickhouse_compression>
    </yandex>

Distribute the two new metrika.xml files from server01 to server02 and server03

    # server02
    scp -r /usr/soft/clickhouse-server/main/conf/metrika.xml server02:/usr/soft/clickhouse-server/main/conf
    scp -r /usr/soft/clickhouse-server/sub/conf/metrika.xml server02:/usr/soft/clickhouse-server/sub/conf
    # server03
    scp -r /usr/soft/clickhouse-server/main/conf/metrika.xml server03:/usr/soft/clickhouse-server/main/conf
    scp -r /usr/soft/clickhouse-server/sub/conf/metrika.xml server03:/usr/soft/clickhouse-server/sub/conf

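Edit the macros section of the metrika.xml files on server02 and server03. Each main instance (port 9000) holds the first replica of its own shard; each sub instance (port 9001) holds the second replica of whichever shard lists that host and port in remote_servers.

server02 main:

    <macros>
      <layer>01</layer>
      <shard>02</shard>
      <replica>cluster01-02-1</replica>
    </macros>

server02 sub:

    <macros>
      <layer>01</layer>
      <shard>03</shard>
      <replica>cluster01-03-2</replica>
    </macros>

server03 main:

    <macros>
      <layer>01</layer>
      <shard>03</shard>
      <replica>cluster01-03-1</replica>
    </macros>

server03 sub:

    <macros>
      <layer>01</layer>
      <shard>01</shard>
      <replica>cluster01-01-2</replica>
    </macros>

This completes the configuration. Other settings, such as passwords, can be added as needed.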
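The six macros blocks follow a pattern that can be derived from the remote_servers layout: server N's main instance is the first replica of shard N, and its sub instance (port 9001) is the second replica of the next shard, wrapping around after shard 3. For example, server03:9001 is listed under the first shard, so the sub instance on server03 belongs to shard 01. The sketch below encodes that rule; `macros_for` is a hypothetical helper and assumes exactly three servers.

```shell
# Hypothetical helper: print the <macros> block for one instance.
# $1 = server number (1..3), $2 = role ("main" or "sub")
macros_for() (
    n=$1 role=$2
    if [ "$role" = main ]; then
        # Main instance: first replica of its own shard
        shard=$n replica_no=1
    else
        # Sub instance: second replica of the next shard, wrapping 3 -> 1
        shard=$(( n % 3 + 1 )) replica_no=2
    fi
    printf '<macros>\n  <layer>01</layer>\n  <shard>%02d</shard>\n  <replica>cluster01-%02d-%d</replica>\n</macros>\n' \
        "$shard" "$shard" "$replica_no"
)

# Prints the block for the sub instance on server03 (shard 01, replica cluster01-01-2)
macros_for 3 sub
```

Generating the blocks this way makes it harder for the shard number and the replica name to drift apart, which would otherwise register a replica under the wrong shard path in ZooKeeper.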

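Run and test the cluster

Start the instances on each server in turn. ZooKeeper was started in the earlier steps; if it is not running, start the ZooKeeper cluster first.

Run the commands below on every server. The only parameter that differs between servers is --hostname.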

Run the main instance

    docker run -d --name=ch-main -p 8123:8123 -p 9000:9000 -p 9009:9009 --ulimit nofile=262144:262144 \
      -v /usr/soft/clickhouse-server/main/data:/var/lib/clickhouse:rw \
      -v /usr/soft/clickhouse-server/main/conf:/etc/clickhouse-server:rw \
      -v /usr/soft/clickhouse-server/main/log:/var/log/clickhouse-server:rw \
      --add-host server01:192.168.3.117 \
      --add-host server02:192.168.3.105 \
      --add-host server03:192.168.3.103 \
      --hostname server01 \
      --network host \
      --restart=always \
      yandex/clickhouse-server

Run the sub instance

    docker run -d --name=ch-sub -p 8124:8124 -p 9001:9001 -p 9010:9010 --ulimit nofile=262144:262144 \
      -v /usr/soft/clickhouse-server/sub/data:/var/lib/clickhouse:rw \
      -v /usr/soft/clickhouse-server/sub/conf:/etc/clickhouse-server:rw \
      -v /usr/soft/clickhouse-server/sub/log:/var/log/clickhouse-server:rw \
      --add-host server01:192.168.3.117 \
      --add-host server02:192.168.3.105 \
      --add-host server03:192.168.3.103 \
      --hostname server01 \
      --network host \
      --restart=always \
      yandex/clickhouse-server
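The main and sub commands differ only in the instance name and the mount paths, and the commands on different servers differ only in --hostname. A small generator can make that explicit. This is a sketch; `ch_run_cmd` is a hypothetical helper that only prints the command. It leaves out the -p flags because Docker discards published ports when --network host is used; the ports actually in effect are the ones set in each instance's config.xml.

```shell
# Hypothetical helper: print the docker run command for one ClickHouse instance.
# $1 = role ("main" or "sub"), $2 = host name of the server it runs on
ch_run_cmd() (
    role=$1 host=$2
    base=/usr/soft/clickhouse-server/$role
    printf 'docker run -d --name=ch-%s --ulimit nofile=262144:262144' "$role"
    printf ' -v %s/data:/var/lib/clickhouse:rw' "$base"
    printf ' -v %s/conf:/etc/clickhouse-server:rw' "$base"
    printf ' -v %s/log:/var/log/clickhouse-server:rw' "$base"
    printf ' --add-host server01:192.168.3.117'
    printf ' --add-host server02:192.168.3.105'
    printf ' --add-host server03:192.168.3.103'
    printf ' --hostname %s --network host --restart=always yandex/clickhouse-server\n' "$host"
)

# Print the command for the sub instance on server02; pipe it to sh to execute it
ch_run_cmd sub server02
```

Printing rather than executing keeps the helper safe to try out, and the output can be reviewed before it is run on each server.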

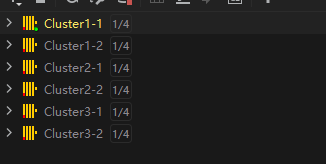

Once the instances on every server have started, connect to them with a database client. A licensed copy of DataGrip is used here.


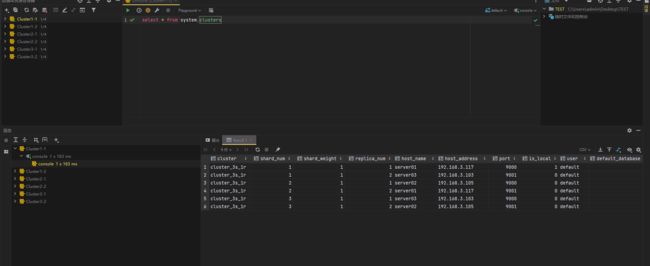

Query the cluster by running:

    select * from system.clusters

Open a new query on any instance

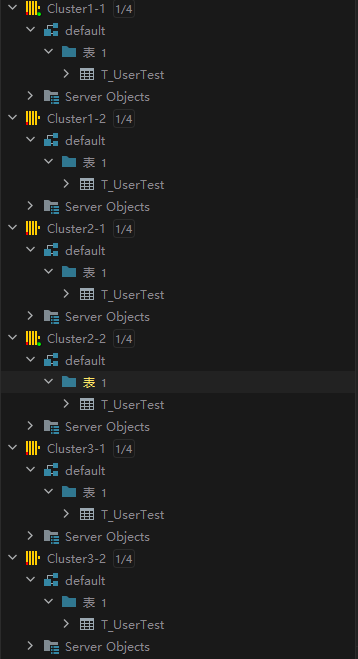

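    create table T_UserTest on cluster cluster_3s_1r
    (
        ts DateTime,
        uid String,
        biz String
    )
    engine = ReplicatedMergeTree('/clickhouse/tables/{layer}-{shard}/T_UserTest', '{replica}')
    PARTITION BY toYYYYMMDD(ts)
    ORDER BY ts
    SETTINGS index_granularity = 8192;

cluster_3s_1r is the cluster name configured earlier and must match it exactly. /clickhouse/tables/ is the conventional path prefix, and {layer}, {shard} and {replica} are filled in from each instance's macros. See the official documentation for the full syntax.

Refresh each instance and the T_UserTest table appears on all of them. Because ZooKeeper is already in place, distributed DDL works with little extra effort.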

Create the Distributed table

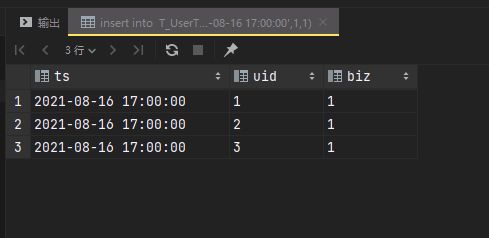

    CREATE TABLE T_UserTest_All ON CLUSTER cluster_3s_1r AS T_UserTest
    ENGINE = Distributed(cluster_3s_1r, default, T_UserTest, rand())

Insert a row on the main instance of each shard:

    -- server01
    insert into T_UserTest values ('2021-08-16 17:00:00','1','1')
    -- server02
    insert into T_UserTest values ('2021-08-16 17:00:00','2','1')
    -- server03
    insert into T_UserTest values ('2021-08-16 17:00:00','3','1')

Querying the corresponding replica tables, or stopping the Docker instance on one of the servers, should leave queries unaffected as well. For lack of time, this was not tested here.
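The replica check left untested above could be approached from the command line with clickhouse-client, which ships in the server image. This is a sketch under assumptions: `ch_query_cmd` is a hypothetical helper that only prints the command, and the ports 9000 and 9001 are the main and sub TCP ports configured earlier. SQL containing double quotes would need extra escaping.

```shell
# Hypothetical helper: print the command that runs one query inside an instance.
# $1 = role ("main" or "sub"), $2 = SQL text (must not contain double quotes)
ch_query_cmd() (
    role=$1 sql=$2
    if [ "$role" = main ]; then port=9000; else port=9001; fi
    printf 'docker exec ch-%s clickhouse-client --port %s --query "%s"\n' "$role" "$port" "$sql"
)

# Row count held locally by each instance on this server
ch_query_cmd main 'select count() from T_UserTest'
ch_query_cmd sub 'select count() from T_UserTest'
# Row count across all shards through the Distributed table
ch_query_cmd main 'select count() from T_UserTest_All'
```

If replication works as configured, each local table should hold one row after the three inserts (its own shard's row, or the replicated copy on the sub instance), and the Distributed table should report three.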
