《VINS-mono框架入门及代码解析》笔记2:IMU预积分

系列文章目录

第一讲 前端与特征点管理

文章目录

- 系列文章目录

- 1. VINS后端逻辑

-

- 1.1 后端流程

- 1.2 process函数

- 1.3 imu和图像的回调函数

- 2. getMeasurements函数

- 3. processIMU函数

- 4. IMU预积分

-

- 4.1 对测量值的求解

- 5. 预积分代码

1. VINS后端逻辑

1.1 后端流程

输入信息:IMU信息,特征点信息,回环帧信息,重启信息

四个信息分别对应各自的回调函数

getMeasurements中的processImage在第1讲中已经讲过

本讲主要讲述processIMU函数的流程

1.2 process函数

process函数是一个while(true)循环,会一直进行下去

std::vector<std::pair<std::vector<sensor_msgs::ImuConstPtr>, sensor_msgs::PointCloudConstPtr>> measurements;

std::unique_lock<std::mutex> lk(m_buf);

con.wait(lk, [&]

{

return (measurements = getMeasurements()).size() != 0;

});

使用条件变量的wait函数阻塞线程

如果被唤醒,调用getMeasurements读取buffer中的imu和图像信息,进行时间对齐。

for (auto &measurement : measurements)

遍历每组image-imu组合

auto img_msg = measurement.second;

double dx = 0, dy = 0, dz = 0, rx = 0, ry = 0, rz = 0;

// 遍历imu

for (auto &imu_msg : measurement.first)

{

double t = imu_msg->header.stamp.toSec();

double img_t = img_msg->header.stamp.toSec() + estimator.td;

if (t <= img_t)

{

if (current_time < 0)

current_time = t;

double dt = t - current_time;

ROS_ASSERT(dt >= 0);

current_time = t;

dx = imu_msg->linear_acceleration.x;

dy = imu_msg->linear_acceleration.y;

dz = imu_msg->linear_acceleration.z;

rx = imu_msg->angular_velocity.x;

ry = imu_msg->angular_velocity.y;

rz = imu_msg->angular_velocity.z;

// 时间差和imu数据送进去

estimator.processIMU(dt, Vector3d(dx, dy, dz), Vector3d(rx, ry, rz));

//printf("imu: dt:%f a: %f %f %f w: %f %f %f\n",dt, dx, dy, dz, rx, ry, rz);

}

else // 这就是针对最后一个imu数据,需要做一个简单的线性插值

{

double dt_1 = img_t - current_time;

double dt_2 = t - img_t;

current_time = img_t;

ROS_ASSERT(dt_1 >= 0);

ROS_ASSERT(dt_2 >= 0);

ROS_ASSERT(dt_1 + dt_2 > 0);

double w1 = dt_2 / (dt_1 + dt_2);

double w2 = dt_1 / (dt_1 + dt_2);

dx = w1 * dx + w2 * imu_msg->linear_acceleration.x;

dy = w1 * dy + w2 * imu_msg->linear_acceleration.y;

dz = w1 * dz + w2 * imu_msg->linear_acceleration.z;

rx = w1 * rx + w2 * imu_msg->angular_velocity.x;

ry = w1 * ry + w2 * imu_msg->angular_velocity.y;

rz = w1 * rz + w2 * imu_msg->angular_velocity.z;

estimator.processIMU(dt_1, Vector3d(dx, dy, dz), Vector3d(rx, ry, rz));

//printf("dimu: dt:%f a: %f %f %f w: %f %f %f\n",dt_1, dx, dy, dz, rx, ry, rz);

}

}

进行imu预积分

// set relocalization frame

// 回环相关部分

sensor_msgs::PointCloudConstPtr relo_msg = NULL;

while (!relo_buf.empty()) // 取出最新的回环帧

{

relo_msg = relo_buf.front();

relo_buf.pop();

}

if (relo_msg != NULL) // 有效回环信息

{

vector<Vector3d> match_points;

double frame_stamp = relo_msg->header.stamp.toSec(); // 回环的当前帧时间戳

for (unsigned int i = 0; i < relo_msg->points.size(); i++)

{

Vector3d u_v_id;

u_v_id.x() = relo_msg->points[i].x; // 回环帧的归一化坐标和地图点idx

u_v_id.y() = relo_msg->points[i].y;

u_v_id.z() = relo_msg->points[i].z;

match_points.push_back(u_v_id);

}

// 回环帧的位姿

Vector3d relo_t(relo_msg->channels[0].values[0], relo_msg->channels[0].values[1], relo_msg->channels[0].values[2]);

Quaterniond relo_q(relo_msg->channels[0].values[3], relo_msg->channels[0].values[4], relo_msg->channels[0].values[5], relo_msg->channels[0].values[6]);

Matrix3d relo_r = relo_q.toRotationMatrix();

int frame_index;

frame_index = relo_msg->channels[0].values[7];

estimator.setReloFrame(frame_stamp, frame_index, match_points, relo_t, relo_r);

}

设置回环帧信息

// 特征点id->特征点信息

map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> image;

for (unsigned int i = 0; i < img_msg->points.size(); i++)

{

int v = img_msg->channels[0].values[i] + 0.5;

int feature_id = v / NUM_OF_CAM;

int camera_id = v % NUM_OF_CAM;

double x = img_msg->points[i].x; // 去畸变后归一滑像素坐标

double y = img_msg->points[i].y;

double z = img_msg->points[i].z;

double p_u = img_msg->channels[1].values[i]; // 特征点像素坐标

double p_v = img_msg->channels[2].values[i];

double velocity_x = img_msg->channels[3].values[i]; // 特征点速度

double velocity_y = img_msg->channels[4].values[i];

ROS_ASSERT(z == 1); // 检查是不是归一化

Eigen::Matrix<double, 7, 1> xyz_uv_velocity;

xyz_uv_velocity << x, y, z, p_u, p_v, velocity_x, velocity_y;

image[feature_id].emplace_back(camera_id, xyz_uv_velocity);

}

estimator.processImage(image, img_msg->header);

处理图像信息

1.3 imu和图像的回调函数

imu_callback

m_buf.lock();

imu_buf.push(imu_msg);

m_buf.unlock();

con.notify_one();

feature_callback

m_buf.lock();

feature_buf.push(feature_msg);

m_buf.unlock();

con.notify_one();

m_buf被多线程共享,因此数据放入之前加锁,放入之后解锁。

此外,还要设置条件锁,唤醒process中的获取观测值数据的函数getMeasurements.

2. getMeasurements函数

std::vector<std::pair<std::vector<sensor_msgs::ImuConstPtr>, sensor_msgs::PointCloudConstPtr>>

getMeasurements()

主体同样是一个while(true)循环

首先是三个判断,任意一个条件被满足,都退出。

判断1:

此时feature_buf和imu_buf为空

此时feature_buf不为空,但imu_buf为空

if (imu_buf.empty() || feature_buf.empty())

return measurements;

// imu *******

// image *****

// 这就是imu还没来

if (!(imu_buf.back()->header.stamp.toSec() > feature_buf.front()->header.stamp.toSec() + estimator.td))

{

//ROS_WARN("wait for imu, only should happen at the beginning");

sum_of_wait++;

return measurements;

}

判断3:

imu信息到了,但图像迟迟没更新,此时扔掉太旧的图像

// imu ****

// image ******

// 这种只能扔掉一些image帧

if (!(imu_buf.front()->header.stamp.toSec() < feature_buf.front()->header.stamp.toSec() + estimator.td))

{

ROS_WARN("throw img, only should happen at the beginning");

feature_buf.pop();

continue;

}

// 此时就保证了图像前一定有imu数据

sensor_msgs::PointCloudConstPtr img_msg = feature_buf.front();

feature_buf.pop();

// 一般第一帧不会严格对齐,但是后面就都会对齐,当然第一帧也不会用到

std::vector<sensor_msgs::ImuConstPtr> IMUs;

while (imu_buf.front()->header.stamp.toSec() < img_msg->header.stamp.toSec() + estimator.td)

{

IMUs.emplace_back(imu_buf.front());

imu_buf.pop();

}

// 保留图像时间戳后一个imu数据,但不会从buffer中扔掉

// imu * *

// image *

IMUs.emplace_back(imu_buf.front());

if (IMUs.empty())

ROS_WARN("no imu between two image");

measurements.emplace_back(IMUs, img_msg);

}

return measurements;

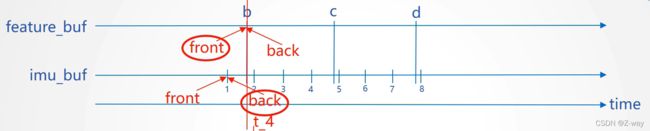

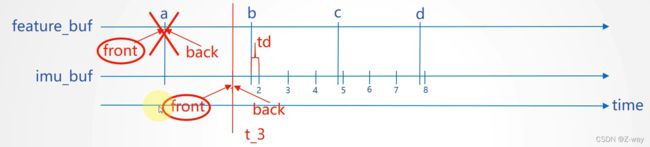

首先读取图像信息b

然后读取图像时间戳+td以内的所有imu信息,1和2

接下来如下图所示

此时满足条件一

将读取到的measurements返回

这两种情况符合条件二,等待下一个imu信号

再次提取数据

将读取图像信息c

读取imu信息3,4,5

3. processIMU函数

void Estimator::processIMU(double dt, const Vector3d &linear_acceleration, const Vector3d &angular_velocity)

if (!first_imu)

{

first_imu = true;

acc_0 = linear_acceleration;

gyr_0 = angular_velocity;

}

首先判断是否是第一个imu信息,如果是,则把当前传入的加速度和角速度设为初始值。

// 滑窗中保留11帧,frame_count表示现在处理第几帧,一般处理到第11帧时就保持不变了

// 由于预积分是帧间约束,因此第1个预积分量实际上是用不到的

if (!pre_integrations[frame_count])

{

pre_integrations[frame_count] = new IntegrationBase{acc_0, gyr_0, Bas[frame_count], Bgs[frame_count]};

}

判断是否分配内存,如果没有分配内存,则创建一个新的预积分对象

if (frame_count != 0)

从第二帧开始处理(第一帧已经是初始值了)

pre_integrations[frame_count]->push_back(dt, linear_acceleration, angular_velocity);

//if(solver_flag != NON_LINEAR)

// 这个量用来做初始化用的

tmp_pre_integration->push_back(dt, linear_acceleration, angular_velocity);

把imu数据push到pre_integrations和tmp_pre_integration中,计算imu预积分

注:pre_integrations代表了滑动窗口中的关键帧,tmp_pre_integration代表了每一个帧(包括关键帧和非关键帧)

// 保存传感器数据

dt_buf[frame_count].push_back(dt);

linear_acceleration_buf[frame_count].push_back(linear_acceleration);

angular_velocity_buf[frame_count].push_back(angular_velocity);

把imu信息存到buffer中

// 又是一个中值积分,更新滑窗中状态量,本质是给非线性优化提供可信的初始值

int j = frame_count;

Vector3d un_acc_0 = Rs[j] * (acc_0 - Bas[j]) - g;

Vector3d un_gyr = 0.5 * (gyr_0 + angular_velocity) - Bgs[j];

Rs[j] *= Utility::deltaQ(un_gyr * dt).toRotationMatrix();

Vector3d un_acc_1 = Rs[j] * (linear_acceleration - Bas[j]) - g;

Vector3d un_acc = 0.5 * (un_acc_0 + un_acc_1);

Ps[j] += dt * Vs[j] + 0.5 * dt * dt * un_acc;

Vs[j] += dt * un_acc;

中值积分,预测最新帧的位置,作为视觉的初始位姿。

4. IMU预积分

4.1 对测量值的求解

k+1时刻的p,v,q与k时刻的p,v,q相关,但k时刻的p,v,q随着后端优化可能发生变化,那么k+1时刻的p,v,q就需要重新积分,十分耗时。

为了避免重复计算,将关键帧之间的imu测量的积分转化为相对运动的约束,即增量形式。

将原本的公式从世界坐标系转化到第k帧的imu坐标系。

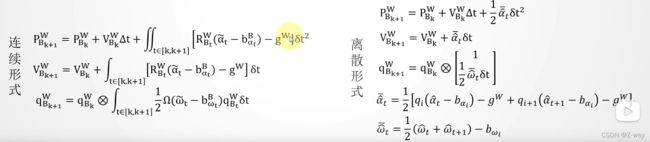

给出预积分的连续和离散形式,离散形式由中值法计算。

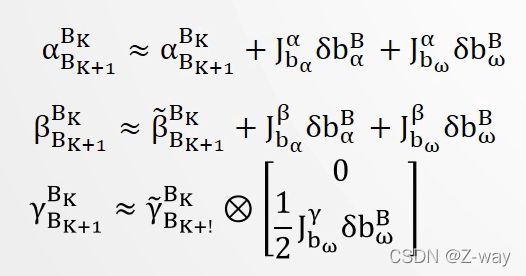

加速度和角速度的偏差也会因为优化而发生改变,仍然需要重复计算预积分。

为了避免这一问题,假设偏差的更新很小,偏差的预积分按照其对偏差的一阶近似来计算。

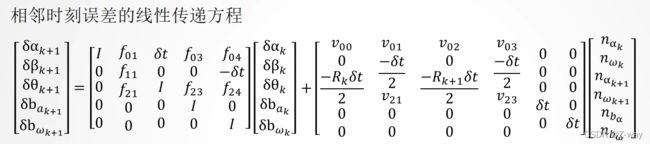

给出相邻时刻误差的线性传递方程

可以写成如下形式

![]()

可以看出,误差的传递分为两部分:

- 当前时刻误差传递给下一时刻

- 当前时刻测量噪声传递给下一时刻

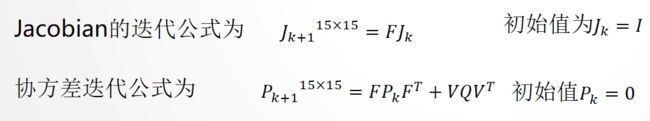

从而得到Jacobian矩阵和协方差矩阵的迭代公式

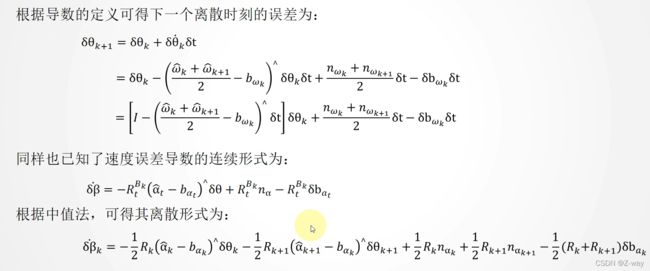

推导速度误差的导数

推导旋转误差的导数

对旋转误差和速度误差做一阶泰勒展开,将上述结果代入,得

将第一个结果带入第二个结果,得

速度误差为

5. 预积分代码

IntegrationBase *pre_integrations[(WINDOW_SIZE + 1)]; // 关键帧

IntegrationBase *tmp_pre_integration; // 所有帧

预积分类

IntegrationBase() = delete;

IntegrationBase(const Eigen::Vector3d &_acc_0, const Eigen::Vector3d &_gyr_0,

const Eigen::Vector3d &_linearized_ba, const Eigen::Vector3d &_linearized_bg)

: acc_0{_acc_0}, gyr_0{_gyr_0}, linearized_acc{_acc_0}, linearized_gyr{_gyr_0},

linearized_ba{_linearized_ba}, linearized_bg{_linearized_bg},

jacobian{Eigen::Matrix<double, 15, 15>::Identity()}, covariance{Eigen::Matrix<double, 15, 15>::Zero()},

sum_dt{0.0}, delta_p{Eigen::Vector3d::Zero()}, delta_q{Eigen::Quaterniond::Identity()}, delta_v{Eigen::Vector3d::Zero()}

{

noise = Eigen::Matrix<double, 18, 18>::Zero();

noise.block<3, 3>(0, 0) = (ACC_N * ACC_N) * Eigen::Matrix3d::Identity();

noise.block<3, 3>(3, 3) = (GYR_N * GYR_N) * Eigen::Matrix3d::Identity();

noise.block<3, 3>(6, 6) = (ACC_N * ACC_N) * Eigen::Matrix3d::Identity();

noise.block<3, 3>(9, 9) = (GYR_N * GYR_N) * Eigen::Matrix3d::Identity();

noise.block<3, 3>(12, 12) = (ACC_W * ACC_W) * Eigen::Matrix3d::Identity();

noise.block<3, 3>(15, 15) = (GYR_W * GYR_W) * Eigen::Matrix3d::Identity();

}

构造函数,进行初始化

push_back函数

void push_back(double dt, const Eigen::Vector3d &acc, const Eigen::Vector3d &gyr)

{

// 相关时间差和传感器数据保留,方便后续repropagate

dt_buf.push_back(dt);

acc_buf.push_back(acc);

gyr_buf.push_back(gyr);

propagate(dt, acc, gyr);

}

propagate函数

void propagate(double _dt, const Eigen::Vector3d &_acc_1, const Eigen::Vector3d &_gyr_1)

{

dt = _dt;

acc_1 = _acc_1;

gyr_1 = _gyr_1;

Vector3d result_delta_p;

Quaterniond result_delta_q;

Vector3d result_delta_v;

Vector3d result_linearized_ba;

Vector3d result_linearized_bg;

midPointIntegration(_dt, acc_0, gyr_0, _acc_1, _gyr_1, delta_p, delta_q, delta_v,

linearized_ba, linearized_bg,

result_delta_p, result_delta_q, result_delta_v,

result_linearized_ba, result_linearized_bg, 1);

//checkJacobian(_dt, acc_0, gyr_0, acc_1, gyr_1, delta_p, delta_q, delta_v,

// linearized_ba, linearized_bg);

delta_p = result_delta_p;

delta_q = result_delta_q;

delta_v = result_delta_v;

linearized_ba = result_linearized_ba;

linearized_bg = result_linearized_bg;

delta_q.normalize();

sum_dt += dt;

acc_0 = acc_1;

gyr_0 = gyr_1;

}

midPointIntegration函数

使用中值法计算预积分

void midPointIntegration(double _dt,

const Eigen::Vector3d &_acc_0, const Eigen::Vector3d &_gyr_0,

const Eigen::Vector3d &_acc_1, const Eigen::Vector3d &_gyr_1,

const Eigen::Vector3d &delta_p, const Eigen::Quaterniond &delta_q, const Eigen::Vector3d &delta_v,

const Eigen::Vector3d &linearized_ba, const Eigen::Vector3d &linearized_bg,

Eigen::Vector3d &result_delta_p, Eigen::Quaterniond &result_delta_q, Eigen::Vector3d &result_delta_v,

Eigen::Vector3d &result_linearized_ba, Eigen::Vector3d &result_linearized_bg, bool update_jacobian)

{

//ROS_INFO("midpoint integration");

// 首先中值积分更新状态量

Vector3d un_acc_0 = delta_q * (_acc_0 - linearized_ba);

Vector3d un_gyr = 0.5 * (_gyr_0 + _gyr_1) - linearized_bg;

result_delta_q = delta_q * Quaterniond(1, un_gyr(0) * _dt / 2, un_gyr(1) * _dt / 2, un_gyr(2) * _dt / 2);

Vector3d un_acc_1 = result_delta_q * (_acc_1 - linearized_ba);

Vector3d un_acc = 0.5 * (un_acc_0 + un_acc_1);

result_delta_p = delta_p + delta_v * _dt + 0.5 * un_acc * _dt * _dt;

result_delta_v = delta_v + un_acc * _dt;

result_linearized_ba = linearized_ba;

result_linearized_bg = linearized_bg;

// 随后更新方差矩阵及雅克比

if(update_jacobian)

{

Vector3d w_x = 0.5 * (_gyr_0 + _gyr_1) - linearized_bg;

Vector3d a_0_x = _acc_0 - linearized_ba;

Vector3d a_1_x = _acc_1 - linearized_ba;

Matrix3d R_w_x, R_a_0_x, R_a_1_x;

R_w_x<<0, -w_x(2), w_x(1),

w_x(2), 0, -w_x(0),

-w_x(1), w_x(0), 0;

R_a_0_x<<0, -a_0_x(2), a_0_x(1),

a_0_x(2), 0, -a_0_x(0),

-a_0_x(1), a_0_x(0), 0;

R_a_1_x<<0, -a_1_x(2), a_1_x(1),

a_1_x(2), 0, -a_1_x(0),

-a_1_x(1), a_1_x(0), 0;

MatrixXd F = MatrixXd::Zero(15, 15);

F.block<3, 3>(0, 0) = Matrix3d::Identity();

F.block<3, 3>(0, 3) = -0.25 * delta_q.toRotationMatrix() * R_a_0_x * _dt * _dt +

-0.25 * result_delta_q.toRotationMatrix() * R_a_1_x * (Matrix3d::Identity() - R_w_x * _dt) * _dt * _dt;

F.block<3, 3>(0, 6) = MatrixXd::Identity(3,3) * _dt;

F.block<3, 3>(0, 9) = -0.25 * (delta_q.toRotationMatrix() + result_delta_q.toRotationMatrix()) * _dt * _dt;

F.block<3, 3>(0, 12) = -0.25 * result_delta_q.toRotationMatrix() * R_a_1_x * _dt * _dt * -_dt;

F.block<3, 3>(3, 3) = Matrix3d::Identity() - R_w_x * _dt;

F.block<3, 3>(3, 12) = -1.0 * MatrixXd::Identity(3,3) * _dt;

F.block<3, 3>(6, 3) = -0.5 * delta_q.toRotationMatrix() * R_a_0_x * _dt +

-0.5 * result_delta_q.toRotationMatrix() * R_a_1_x * (Matrix3d::Identity() - R_w_x * _dt) * _dt;

F.block<3, 3>(6, 6) = Matrix3d::Identity();

F.block<3, 3>(6, 9) = -0.5 * (delta_q.toRotationMatrix() + result_delta_q.toRotationMatrix()) * _dt;

F.block<3, 3>(6, 12) = -0.5 * result_delta_q.toRotationMatrix() * R_a_1_x * _dt * -_dt;

F.block<3, 3>(9, 9) = Matrix3d::Identity();

F.block<3, 3>(12, 12) = Matrix3d::Identity();

//cout<<"A"<

MatrixXd V = MatrixXd::Zero(15,18);

V.block<3, 3>(0, 0) = 0.25 * delta_q.toRotationMatrix() * _dt * _dt;

V.block<3, 3>(0, 3) = 0.25 * -result_delta_q.toRotationMatrix() * R_a_1_x * _dt * _dt * 0.5 * _dt;

V.block<3, 3>(0, 6) = 0.25 * result_delta_q.toRotationMatrix() * _dt * _dt;

V.block<3, 3>(0, 9) = V.block<3, 3>(0, 3);

V.block<3, 3>(3, 3) = 0.5 * MatrixXd::Identity(3,3) * _dt;

V.block<3, 3>(3, 9) = 0.5 * MatrixXd::Identity(3,3) * _dt;

V.block<3, 3>(6, 0) = 0.5 * delta_q.toRotationMatrix() * _dt;

V.block<3, 3>(6, 3) = 0.5 * -result_delta_q.toRotationMatrix() * R_a_1_x * _dt * 0.5 * _dt;

V.block<3, 3>(6, 6) = 0.5 * result_delta_q.toRotationMatrix() * _dt;

V.block<3, 3>(6, 9) = V.block<3, 3>(6, 3);

V.block<3, 3>(9, 12) = MatrixXd::Identity(3,3) * _dt;

V.block<3, 3>(12, 15) = MatrixXd::Identity(3,3) * _dt;

//step_jacobian = F;

//step_V = V;

jacobian = F * jacobian;

covariance = F * covariance * F.transpose() + V * noise * V.transpose();

}

}