Integrating Spring Boot with Kafka: From 0 to 1 (Technical Guide)

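Kafka is an open-source stream-processing platform from the Apache Software Foundation, written in Scala and Java. It is a high-throughput, distributed publish-subscribe messaging system that can handle all of the user activity stream data of a website. This chapter shows how to integrate Kafka with Spring Boot to send and receive messages.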

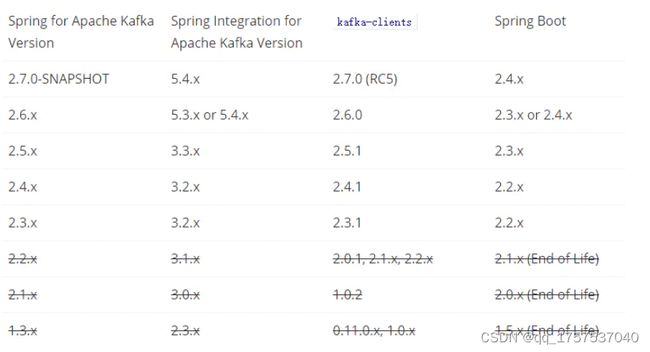

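Spring has a dedicated project for Kafka support, Spring for Apache Kafka. When adding the dependency, pay attention to version compatibility; see the Spring for Apache Kafka version compatibility list for the supported combinations.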

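I. Install the Java JDK

1. Download the installer

http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

Note: download the installer that matches your operating system (32-bit or 64-bit).

2. Add a system environment variable: JAVA_HOME=C:\Program Files (x86)\Java\jdk1.8.0_144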
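To confirm that the JDK and the variable from step 2 are visible to new processes, a small check like the following can help. It is only a sketch: the class name `JdkCheck` is made up for this article, and `JAVA_HOME` will show as not set in any command window that was opened before the variable was added.

```java
// Hypothetical helper: reports the running JVM and the JAVA_HOME variable.
final class JdkCheck {
    static String describe() {
        String javaHome = System.getenv("JAVA_HOME"); // the variable added in step 2
        return "java.version=" + System.getProperty("java.version")
                + ", java.home=" + System.getProperty("java.home")
                + ", JAVA_HOME=" + (javaHome == null ? "<not set>" : javaHome);
    }

    public static void main(String[] args) {
        System.out.println(describe());
    }
}
```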

II. Install ZooKeeper

1. Download the package

http://zookeeper.apache.org/releases.html#download

2. Extract it, go to the ZooKeeper directory, and open the conf folder.

3. Rename "zoo_sample.cfg" to "zoo.cfg".

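4. Open "zoo.cfg", find dataDir, and set it to dataDir=D:\\Kafka\\zookeeper-3.4.9\\tmp (zoo.cfg is read as a Java properties file, so each backslash in the path must be doubled; forward slashes also work).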

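5. Add a system environment variable: ZOOKEEPER_HOME=D:\Kafka\zookeeper-3.4.9

6. Edit the Path system variable and add: %ZOOKEEPER_HOME%\bin

7. If needed, change the ZooKeeper port in zoo.cfg (clientPort, default 2181).

8. Open a new cmd window and run "zkServer" to start ZooKeeper.

9. Output like the following means the local ZooKeeper started successfully.

Note: do not close this window.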

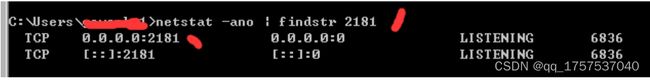
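Step 4 above sets a Windows path in zoo.cfg. Because the file is read as a Java properties file, a single backslash is treated as an escape character. The sketch below uses `java.util.Properties` directly to show what happens to the path; the class name `CfgPathDemo` is made up for illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

final class CfgPathDemo {
    // Parses one "key=value" line the way java.util.Properties does and returns the value.
    static String load(String line, String key) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(line));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty(key);
    }

    public static void main(String[] args) {
        // In Java source code "\\" is ONE backslash in the file content.
        String single = load("dataDir=D:\\Kafka\\zookeeper-3.4.9\\tmp", "dataDir");
        String doubled = load("dataDir=D:\\\\Kafka\\\\zookeeper-3.4.9\\\\tmp", "dataDir");
        String forward = load("dataDir=D:/Kafka/zookeeper-3.4.9/tmp", "dataDir");

        // Single backslashes are consumed as escapes ("\t" even becomes a tab).
        System.out.println("single keeps separators: " + single.contains("\\"));
        System.out.println("doubled: " + doubled);
        System.out.println("forward: " + forward);
    }
}
```

The same applies to log.dirs in Kafka's server.properties, which is also a properties file.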

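If you are not sure whether it started successfully, run netstat -ano | findstr 2181 in a command prompt (2181 is ZooKeeper's default port); a line in the LISTENING state means the server is up.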
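The same check can be done from Java by trying to open a TCP connection to the port. This is a minimal sketch, not part of ZooKeeper or Kafka; the class name `PortCheck` and the one-second timeout are assumptions made for the example.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

final class PortCheck {
    // Returns true if something accepts TCP connections on host:port within timeoutMillis.
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            return false; // connection refused, timed out, or host unreachable
        }
    }

    public static void main(String[] args) {
        System.out.println("ZooKeeper (2181) listening: " + isListening("localhost", 2181, 1000));
    }
}
```

A successful connection only shows that some process is listening on the port; it does not prove that the process is ZooKeeper.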

III. Install Kafka

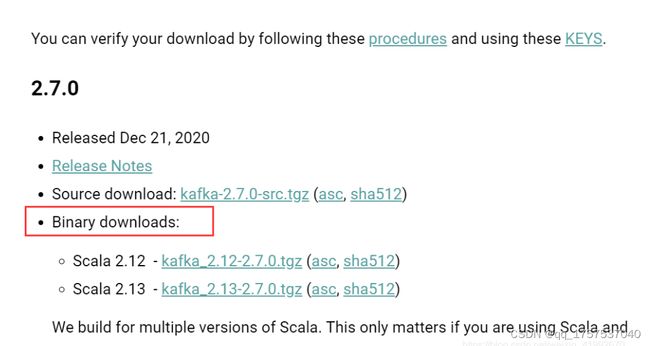

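1. Download the package

http://kafka.apache.org/downloads

Note: download the binary release, not the source release.

2. Extract it and go to the Kafka directory; the author's is D:\Kafka\kafka_2.12-0.11.0.0

3. Go to the config directory and open server.properties.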

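4. Find log.dirs and set it to log.dirs=D:\\Kafka\\kafka_2.12-0.11.0.0\\kafka-logs (backslashes are doubled because server.properties is a Java properties file; forward slashes also work).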

5. Find zookeeper.connect and set it to zookeeper.connect=localhost:2181

6. By default Kafka runs on port 9092 and connects to ZooKeeper on its default port, 2181.

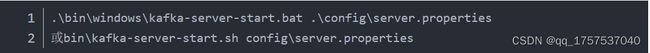

7. Go to the Kafka installation directory D:\Kafka\kafka_2.12-0.11.0.0, hold Shift and right-click, choose "Open command window here", and enter:

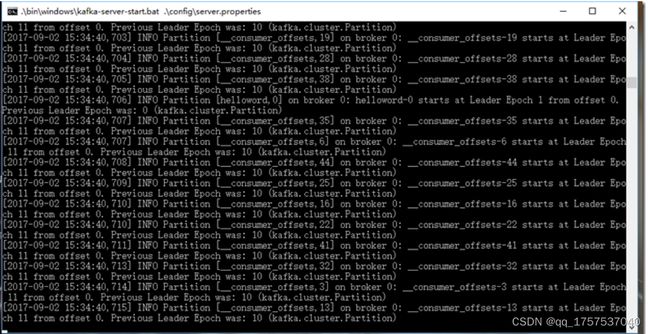

Note: do not close this window. Before starting Kafka, make sure the ZooKeeper instance is up and running.

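IV. Integrate Kafka with Spring Boot

1. Add the dependency to the pom file (it is best to let spring-boot-dependencies manage the version number)

    <dependency>
        <groupId>org.springframework.kafka</groupId>
        <artifactId>spring-kafka</artifactId>
    </dependency>

Pin the Scala version to resolve Jackson/Scala compatibility problems:

    <dependency>
        <groupId>org.scala-lang</groupId>
        <artifactId>scala-library</artifactId>
        <version>{version}</version>
        <scope>test</scope>
    </dependency>
    <dependency>
        <groupId>org.scala-lang</groupId>
        <artifactId>scala-reflect</artifactId>
        <version>{version}</version>
        <scope>test</scope>
    </dependency>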

2. Configure application.yml

    spring:
      application:
        name: demo
      kafka:
        # Kafka broker address
        bootstrap-servers: ${KAFKA_HOST:localhost}:${KAFKA_PORT:9092}
        # producer settings
        producer:
          retries: 0 # number of retries
          acks: 1 # acknowledgement level: how many partition replicas must have the record before the producer gets an ack (0, 1, or all/-1)
          batch-size: 16384 # batch size in bytes
          buffer-memory: 33554432 # producer buffer size in bytes
          # class used to serialize keys
          key-serializer: org.apache.kafka.common.serialization.StringSerializer
          # class used to serialize values
          value-serializer: org.apache.kafka.common.serialization.StringSerializer
        # consumer settings
        consumer:
          group-id: javagroup # default consumer group ID
          enable-auto-commit: true # whether offsets are committed automatically
          auto-commit-interval: 100 # how often offsets are auto-committed, in milliseconds
          # earliest: if a partition has a committed offset, resume from it; otherwise consume from the beginning
          # latest: if a partition has a committed offset, resume from it; otherwise consume only newly produced records
          # none: resume from the committed offsets if every partition has one; throw an exception if any partition has none
          auto-offset-reset: latest
          # class used to deserialize keys
          key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
          # class used to deserialize values
          value-deserializer: org.apache.kafka.common.serialization.StringDeserializer
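The three auto-offset-reset values described in the configuration comments amount to a small decision rule per partition. The sketch below spells it out in plain Java. It is a simplification for illustration only: the names `OffsetResetDemo`, `Policy`, and `startPosition` are made up, `IllegalStateException` stands in for the exception the real client throws, and the case of a committed offset that is out of range is ignored.

```java
import java.util.OptionalLong;

final class OffsetResetDemo {
    enum Policy { EARLIEST, LATEST, NONE }

    // Picks the position a consumer starts from for one partition.
    // committed: the group's committed offset, if there is one
    // logStart:  first offset still available in the partition
    // logEnd:    offset that the next produced record will get
    static long startPosition(OptionalLong committed, Policy policy, long logStart, long logEnd) {
        if (committed.isPresent()) {
            return committed.getAsLong(); // a committed offset always wins
        }
        switch (policy) {
            case EARLIEST:
                return logStart;          // replay everything that is still retained
            case LATEST:
                return logEnd;            // only records produced from now on
            default:
                throw new IllegalStateException("no committed offset and auto-offset-reset=none");
        }
    }

    public static void main(String[] args) {
        System.out.println(startPosition(OptionalLong.of(120), Policy.LATEST, 0, 500));
        System.out.println(startPosition(OptionalLong.empty(), Policy.EARLIEST, 0, 500));
        System.out.println(startPosition(OptionalLong.empty(), Policy.LATEST, 0, 500));
    }
}
```

With latest, a brand-new consumer group does not see records that were produced before it first connected, which is worth keeping in mind when a test listener appears to receive nothing.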

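Details:

Common settings: spring.kafka.*

Settings under admin, producer, consumer, and streams override properties of the same name in the common spring.kafka.* settings.

Producer settings: spring.kafka.producer.*

Consumer settings: spring.kafka.consumer.*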
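The override rule can be pictured as a map merge: start from the common values, then let the role-specific values replace any entry with the same key. The sketch below shows only that idea in plain Java; the class name `PropertyOverrideDemo` and the broker name `broker-a` are made up, and the keys are written as Kafka client property names.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class PropertyOverrideDemo {
    // Effective client config: common values first, role-specific values win on conflict.
    static Map<String, String> effective(Map<String, String> common, Map<String, String> specific) {
        Map<String, String> merged = new LinkedHashMap<>(common);
        merged.putAll(specific);
        return merged;
    }

    public static void main(String[] args) {
        Map<String, String> common = new LinkedHashMap<>();
        common.put("bootstrap.servers", "localhost:9092"); // spring.kafka.bootstrap-servers
        common.put("client.id", "demo");                   // spring.kafka.client-id

        Map<String, String> producer = new LinkedHashMap<>();
        producer.put("bootstrap.servers", "broker-a:9092"); // spring.kafka.producer.bootstrap-servers
        producer.put("acks", "1");                          // spring.kafka.producer.acks

        // The producer sees broker-a:9092, but still inherits client.id from the common block.
        System.out.println(effective(common, producer));
    }
}
```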

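By default, value-serializer is org.apache.kafka.common.serialization.StringSerializer and value-deserializer is org.apache.kafka.common.serialization.StringDeserializer, so only text messages are supported. Switching to org.springframework.kafka.support.serializer.JsonSerializer and org.springframework.kafka.support.serializer.JsonDeserializer lets messages carry other types.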
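Kafka itself only stores byte arrays, which is why a serializer and a matching deserializer have to be configured on each side. As a rough picture of what the String pair does, the sketch below converts text to UTF-8 bytes and back. It is plain Java written for this article (the class name `StringCodecDemo` is made up), not the Kafka classes themselves.

```java
import java.nio.charset.StandardCharsets;

final class StringCodecDemo {
    // What a String serializer does in essence: text -> UTF-8 bytes (null stays null).
    static byte[] serialize(String value) {
        return value == null ? null : value.getBytes(StandardCharsets.UTF_8);
    }

    // The matching deserializer: UTF-8 bytes -> text (null stays null).
    static String deserialize(byte[] data) {
        return data == null ? null : new String(data, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        byte[] onTheWire = serialize("hello kafka");
        System.out.println(onTheWire.length + " bytes");
        System.out.println(deserialize(onTheWire));
    }
}
```

If the two sides disagree, for example a JSON serializer on the producer and a String deserializer on the consumer, the consumer still receives the bytes but interprets them as raw text.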

3. Main application class

Create the KafkaMQApplication.java startup class.

Note the added @EnableKafka annotation (Spring Boot's Kafka auto-configuration normally enables it already, so declaring it explicitly is optional):

    package com.yuwen.spring.kafka;

    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.kafka.annotation.EnableKafka;

    @SpringBootApplication
    @EnableKafka
    public class KafkaMQApplication {

        public static void main(String[] args) {
            SpringApplication.run(KafkaMQApplication.class, args);
        }
    }

4. Sending messages from the producer:

Spring Kafka provides the KafkaTemplate class for sending messages; inject it wherever it is needed.

Add the ProviderService.java producer service class:

    package com.yuwen.spring.kafka.provider;

    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.stereotype.Service;

    @Service
    public class ProviderService {

        public static final String TOPIC = "testTopic";

        // typed to match the String key/value serializers in application.yml
        @Autowired
        private KafkaTemplate<String, String> kafkaTemplate;

        public void send(String message) {
            // send the message to the topic
            kafkaTemplate.send(TOPIC, message);
            System.out.println("Provider= " + message);
        }
    }

Note that you specify the topic and the message content to send.
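One thing the service above does not show: KafkaTemplate.send is asynchronous and returns a future (a ListenableFuture in Spring for Apache Kafka 2.x, a CompletableFuture from 3.0), so the "Provider=" line is printed before the broker has confirmed anything. The sketch below illustrates the callback pattern with plain Java only. `fakeSend` is a hypothetical stand-in for the real send, and the offset 42 is invented, so that the example runs without a broker.

```java
import java.util.concurrent.CompletableFuture;

final class AsyncSendDemo {
    // Hypothetical stand-in for a broker round trip; completes on another thread.
    static CompletableFuture<Long> fakeSend(String topic, String message) {
        return CompletableFuture.supplyAsync(() -> {
            if (message == null) {
                throw new IllegalArgumentException("message must not be null");
            }
            return 42L; // made-up offset, for illustration only
        });
    }

    // Attaches success and failure handling instead of assuming the send worked.
    static CompletableFuture<String> sendAndReport(String topic, String message) {
        return fakeSend(topic, message)
                .thenApply(offset -> "sent to " + topic + " at offset " + offset)
                .exceptionally(ex -> "send to " + topic + " failed");
    }

    public static void main(String[] args) {
        // join() is used here only so the demo prints before the JVM exits.
        System.out.println(sendAndReport("testTopic", "hello").join());
        System.out.println(sendAndReport("testTopic", null).join());
    }
}
```

In a real service the callbacks would typically log the failure or trigger a retry rather than block the calling thread.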

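5. Receiving messages in the consumer:

Add the ConsumerService.java class.

Note the @KafkaListener annotation:

    package com.yuwen.spring.kafka.consumer;

    import org.springframework.kafka.annotation.KafkaListener;
    import org.springframework.stereotype.Service;

    import com.yuwen.spring.kafka.provider.ProviderService;

    @Service
    public class ConsumerService {

        @KafkaListener(topics = ProviderService.TOPIC, groupId = "testGroup", topicPartitions = {})
        public void receive(String message) {
            System.out.println("Consumer= " + message);
        }
    }

Parameters:

topics: the topic(s) to listen to, the same as the topic used when sending; more than one can be given

groupId: the consumer group ID for this listener; it overrides spring.kafka.consumer.group-id

topicPartitions: explicit topic partitions, more than one can be given; it is an alternative to topics and is left empty here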
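The groupId matters because, within one consumer group, each partition is read by only one member. The startup log later in this article lists RangeAssignor as the partition.assignment.strategy. The sketch below shows the range idea for a single topic: contiguous blocks of partitions, with the first few consumers getting one extra. It is a simplified illustration in plain Java (the class name `RangeAssignmentDemo` is made up), not Kafka's implementation.

```java
import java.util.ArrayList;
import java.util.List;

final class RangeAssignmentDemo {
    // Splits partitions 0..partitionCount-1 into contiguous ranges, one per consumer.
    // The first (partitionCount % consumerCount) consumers get one extra partition.
    static List<List<Integer>> assign(int partitionCount, int consumerCount) {
        List<List<Integer>> result = new ArrayList<>();
        int base = partitionCount / consumerCount;
        int extra = partitionCount % consumerCount;
        int next = 0;
        for (int c = 0; c < consumerCount; c++) {
            int size = base + (c < extra ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int i = 0; i < size; i++) {
                owned.add(next++);
            }
            result.add(owned);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(assign(1, 1)); // one partition, one consumer
        System.out.println(assign(3, 2)); // uneven split
        System.out.println(assign(1, 2)); // more consumers than partitions: one stays idle
    }
}
```

This is also why starting a second listener in the same group on a single-partition topic does not double the throughput: the extra consumer has nothing assigned.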

6. Generating messages automatically

To test the producer, write AutoGenerate.java. When the project starts, it generates random strings and sends them to Kafka as the producer:

    package com.yuwen.spring.kafka.provider;

    import java.util.UUID;
    import java.util.concurrent.TimeUnit;

    import org.springframework.beans.factory.InitializingBean;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.stereotype.Component;

    @Component
    public class AutoGenerate implements InitializingBean {

        @Autowired
        private ProviderService providerService;

        @Override
        public void afterPropertiesSet() throws Exception {
            // run in a background thread so application startup is not blocked
            Thread t = new Thread(new Runnable() {
                @Override
                public void run() {
                    while (true) {
                        // send one random UUID string per second
                        String message = UUID.randomUUID().toString();
                        providerService.send(message);
                        try {
                            TimeUnit.SECONDS.sleep(1);
                        } catch (InterruptedException e) {
                            // restore the interrupt flag and stop the loop
                            Thread.currentThread().interrupt();
                            return;
                        }
                    }
                }
            });
            t.start();
        }
    }

7. Start the project

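Run the KafkaMQApplication.java startup class. The log output below shows that the random strings generated by the producer are received correctly by the consumer. Some values printed in the log, such as the broker address and the JSON serializer classes, reflect the environment in which it was captured and differ from the sample application.yml: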

      .   ____          _            __ _ _
     /\\ / ___'_ __ _ _(_)_ __  __ _ \ \ \ \
    ( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \
     \\/  ___)| |_)| | | | | || (_| |  ) ) ) )
      '  |____| .__|_| |_|_| |_\__, | / / / /
     =========|_|==============|___/=/_/_/_/
     :: Spring Boot ::        (v2.3.1.RELEASE)

2022-04-28 19:37:49.687 INFO 14424 --- [ main] c.yuwen.spring.kafka.KafkaMQApplication : Starting KafkaMQApplication on yuwen-asiainfo with PID 14424 (D:\Code\Learn\SpringBoot\spring-boot-demo\MessageQueue\kafka\target\classes started by yuwen in D:\Code\Learn\SpringBoot\spring-boot-demo\MessageQueue\kafka)

2022-04-28 19:37:49.689 INFO 14424 --- [ main] c.yuwen.spring.kafka.KafkaMQApplication : No active profile set, falling back to default profiles: default

2022-04-28 19:37:51.282 INFO 14424 --- [ main] o.s.b.w.embedded.tomcat.TomcatWebServer : Tomcat initialized with port(s): 8028 (http)

2022-04-28 19:37:51.290 INFO 14424 --- [ main] o.apache.catalina.core.StandardService : Starting service [Tomcat]

2022-04-28 19:37:51.291 INFO 14424 --- [ main] org.apache.catalina.core.StandardEngine : Starting Servlet engine: [Apache Tomcat/9.0.36]

2022-04-28 19:37:51.371 INFO 14424 --- [ main] o.a.c.c.C.[Tomcat].[localhost].[/] : Initializing Spring embedded WebApplicationContext

2022-04-28 19:37:51.371 INFO 14424 --- [ main] w.s.c.ServletWebServerApplicationContext : Root WebApplicationContext: initialization completed in 1645 ms

2022-04-28 19:37:51.491 INFO 14424 --- [ Thread-119] o.a.k.clients.producer.ProducerConfig : ProducerConfig values:

acks = 1

batch.size = 16384

bootstrap.servers = [10.21.13.14:9092]

buffer.memory = 33554432

client.dns.lookup = default

client.id = producer-1

compression.type = none

connections.max.idle.ms = 540000

delivery.timeout.ms = 120000

enable.idempotence = false

interceptor.classes = []

key.serializer = class org.apache.kafka.common.serialization.StringSerializer

linger.ms = 0

max.block.ms = 60000

max.in.flight.requests.per.connection = 5

max.request.size = 1048576

metadata.max.age.ms = 300000

metadata.max.idle.ms = 300000

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = INFO

metrics.sample.window.ms = 30000

partitioner.class = class org.apache.kafka.clients.producer.internals.DefaultPartitioner

receive.buffer.bytes = 32768

reconnect.backoff.max.ms = 1000

reconnect.backoff.ms = 50

request.timeout.ms = 30000

retries = 2147483647

retry.backoff.ms = 100

sasl.client.callback.handler.class = null

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism = GSSAPI

security.protocol = PLAINTEXT

security.providers = null

send.buffer.bytes = 131072

ssl.cipher.suites = null

ssl.enabled.protocols = [TLSv1.2]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.protocol = TLSv1.2

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

transaction.timeout.ms = 60000

transactional.id = null

value.serializer = class org.springframework.kafka.support.serializer.JsonSerializer

2022-04-28 19:37:51.563 INFO 14424 --- [ main] o.s.s.concurrent.ThreadPoolTaskExecutor : Initializing ExecutorService 'applicationTaskExecutor'

2022-04-28 19:37:51.590 INFO 14424 --- [ Thread-119] o.a.kafka.common.utils.AppInfoParser : Kafka version: 2.5.0

2022-04-28 19:37:51.592 INFO 14424 --- [ Thread-119] o.a.kafka.common.utils.AppInfoParser : Kafka commitId: 66563e712b0b9f84

2022-04-28 19:37:51.592 INFO 14424 --- [ Thread-119] o.a.kafka.common.utils.AppInfoParser : Kafka startTimeMs: 1651145871589

2022-04-28 19:37:51.851 INFO 14424 --- [ main] o.a.k.clients.consumer.ConsumerConfig : ConsumerConfig values:

allow.auto.create.topics = true

auto.commit.interval.ms = 5000

auto.offset.reset = latest

bootstrap.servers = [10.21.13.14:9092]

check.crcs = true

client.dns.lookup = default

client.id =

client.rack =

connections.max.idle.ms = 540000

default.api.timeout.ms = 60000

enable.auto.commit = false

exclude.internal.topics = true

fetch.max.bytes = 52428800

fetch.max.wait.ms = 500

fetch.min.bytes = 1

group.id = testGroup

group.instance.id = null

heartbeat.interval.ms = 3000

interceptor.classes = []

internal.leave.group.on.close = true

isolation.level = read_uncommitted

key.deserializer = class org.apache.kafka.common.serialization.StringDeserializer

max.partition.fetch.bytes = 1048576

max.poll.interval.ms = 300000

max.poll.records = 500

metadata.max.age.ms = 300000

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = INFO

metrics.sample.window.ms = 30000

partition.assignment.strategy = [class org.apache.kafka.clients.consumer.RangeAssignor]

receive.buffer.bytes = 65536

reconnect.backoff.max.ms = 1000

reconnect.backoff.ms = 50

request.timeout.ms = 30000

retry.backoff.ms = 100

sasl.client.callback.handler.class = null

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism = GSSAPI

security.protocol = PLAINTEXT

security.providers = null

send.buffer.bytes = 131072

session.timeout.ms = 10000

ssl.cipher.suites = null

ssl.enabled.protocols = [TLSv1.2]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.protocol = TLSv1.2

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

value.deserializer = class org.springframework.kafka.support.serializer.JsonDeserializer

2022-04-28 19:37:51.888 INFO 14424 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka version: 2.5.0

2022-04-28 19:37:51.888 INFO 14424 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka commitId: 66563e712b0b9f84

2022-04-28 19:37:51.888 INFO 14424 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka startTimeMs: 1651145871888

2022-04-28 19:37:51.890 INFO 14424 --- [ main] o.a.k.clients.consumer.KafkaConsumer : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Subscribed to topic(s): testTopic

2022-04-28 19:37:51.892 INFO 14424 --- [ main] o.s.s.c.ThreadPoolTaskScheduler : Initializing ExecutorService

2022-04-28 19:37:51.911 INFO 14424 --- [ main] o.s.b.w.embedded.tomcat.TomcatWebServer : Tomcat started on port(s): 8028 (http) with context path ''

2022-04-28 19:37:51.921 INFO 14424 --- [ main] c.yuwen.spring.kafka.KafkaMQApplication : Started KafkaMQApplication in 2.582 seconds (JVM running for 2.957)

2022-04-28 19:37:51.939 INFO 14424 --- [ntainer#0-0-C-1] org.apache.kafka.clients.Metadata : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Cluster ID: zdSPCGGvT8qBnM4LSjz9Hw

2022-04-28 19:37:51.939 INFO 14424 --- [ad | producer-1] org.apache.kafka.clients.Metadata : [Producer clientId=producer-1] Cluster ID: zdSPCGGvT8qBnM4LSjz9Hw

2022-04-28 19:37:51.940 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.AbstractCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Discovered group coordinator 10.21.13.14:9092 (id: 2147483647 rack: null)

2022-04-28 19:37:51.942 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.AbstractCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] (Re-)joining group

2022-04-28 19:37:51.959 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.ConsumerCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Finished assignment for group at generation 5: {consumer-testGroup-1-35e34543-5bf3-4a4a-a590-9a4c6f7e1ae3=Assignment(partitions=[testTopic-0])}

Provider= c30a2e6c-e2e8-419e-865c-04885d1a90b5

2022-04-28 19:37:51.966 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.AbstractCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Successfully joined group with generation 5

2022-04-28 19:37:51.970 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.ConsumerCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Adding newly assigned partitions: testTopic-0

2022-04-28 19:37:51.984 INFO 14424 --- [ntainer#0-0-C-1] o.a.k.c.c.internals.ConsumerCoordinator : [Consumer clientId=consumer-testGroup-1, groupId=testGroup] Setting offset for partition testTopic-0 to the committed offset FetchPosition{offset=310751, offsetEpoch=Optional.empty, currentLeader=LeaderAndEpoch{leader=Optional[10.21.13.14:9092 (id: 0 rack: null)], epoch=absent}}

2022-04-28 19:37:51.985 INFO 14424 --- [ntainer#0-0-C-1] o.s.k.l.KafkaMessageListenerContainer : testGroup: partitions assigned: [testTopic-0]

Consumer= 19210493-d0df-4cd9-993b-f99500523eb2

Consumer= baee3749-307f-4894-ad88-a9610700ab80

Consumer= bea5e807-b003-4c90-89be-20439e2fa921

Consumer= 98258208-8a95-495d-917e-84d30d965e2b

Consumer= 4301851e-ab19-4c9e-89d6-7b604acdf077

Consumer= c30a2e6c-e2e8-419e-865c-04885d1a90b5

Provider= a6d47e9e-de74-481f-82f8-02bd7384fdd8

Consumer= a6d47e9e-de74-481f-82f8-02bd7384fdd8

Provider= bd935ef1-cc61-4014-a971-1ad76c5e82bf

Consumer= bd935ef1-cc61-4014-a971-1ad76c5e82bf