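CDH (Cloudera's Distribution Including Apache Hadoop, 6.2.1) deployment on CentOS 6/7, with installation and verification of common components (ZooKeeper, Hive, HDFS, YARN, Oozie, Hue, Impala, HBase)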

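This article covers the base environment setup for CDH, the deployment of the Cloudera Manager (CM) Server, and the deployment and verification of the related components.

For how to use each component, see the author's other series on these topics.

- Note:

This deployment was carried out on CentOS 6, but the commands are written for CentOS 7. Where the two differ, a comment points it out.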

The deployment plan is server1

Table of contents

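- I. CM base environment setup

- 1. Download the installation packages

- 2. Install dependency packages

- 3. Install httpd

- 4. Configure hosts

- 5. Disable the firewall

- 6. Disable SELinux

- 7. Install and start the httpd service

- 8. Generate the repodata directory

- 9. Configure the local yum repository

- 10. Create the cloudera-scm user

- II. Install the Server service

- 1. Install the Server service

- 2. Use MySQL as the metadata database

- 3. Start the server process (check port 7180)

- 4. Steps to shut down the CDH cluster

- 5. Configure the local parcel

- 6. Start installing CDH

- 7. Swappiness and transparent huge pages

- 8. Add the Cloudera Management Service

- 1) Service overview

- 2) Configuration

- 9. Add the ZooKeeper service

- 10. Add the HDFS and YARN services (HDFS HA and YARN HA) and verify

- 11. Add the Hive service and verify

- 12. Add the Oozie service

- 13. Add the Hue service

- 14. Add the Impala service

- 15. Add the HBase service (with HA) and verify

- 16. Add the Sqoop service and verify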

I. CM base environment setup

1. Download the installation packages

https://archive.cloudera.com/cdh6/6.2.1/parcels/

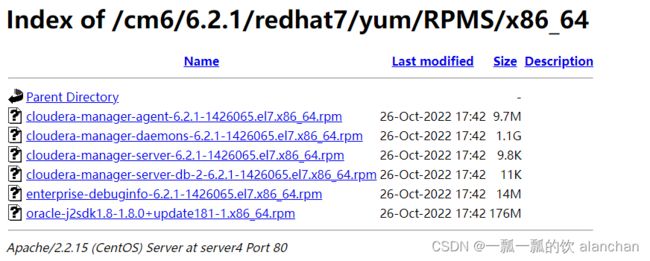

https://archive.cloudera.com/cm6/6.2.1/redhat7/yum/RPMS/x86_64/

2. Install dependency packages

yum install -y cyrus-sasl-plain cyrus-sasl-gssapi portmap fuse-libs bind-utils libxslt fuse

yum install -y /lib/lsb/init-functions createrepo deltarpm python-deltarpm

yum install -y mod_ssl openssl-devel python-psycopg2 MySQL-python

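MySQL-python may conflict with a version that is already installed on the machine.

3. Install httpd

Install it only on the machine that hosts the local yum repository. There is no need to install it on all four machines.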

yum install httpd

yum install createrepo

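4. Configure hosts

All four machines need this configuration, and each server's hostname must be changed to match. Reboot after changing a hostname. Use the hostnames of your own environment.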

vim /etc/hosts

192.168.10.41 server1

192.168.10.42 server2

192.168.10.43 server3

192.168.10.44 server4
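
Because the same entries have to exist on all four machines, a quick check can catch a missed host early. The sketch below is a hypothetical helper (`hosts_missing` is not part of any tool) that prints every name without an entry in a hosts-format file. The host names in the example are the ones used in this article.

```shell
# hosts_missing FILE NAME...
# Hypothetical helper: print each NAME that has no entry in a hosts-format FILE.
hosts_missing() (
  file=$1
  shift
  for name in "$@"; do
    awk -v n="$name" '
      /^[[:space:]]*#/ { next }          # skip comment lines
      {
        for (i = 2; i <= NF; i++) {      # field 1 is the IP address
          if ($i ~ /^#/) break           # stop at a trailing comment
          if ($i == n) found = 1
        }
      }
      END { exit (found ? 0 : 1) }
    ' "$file" || echo "$name"
  done
)

# Example: hosts_missing /etc/hosts server1 server2 server3 server4
```

An empty result means every listed name resolves through the file.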

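5. Disable the firewall

The CentOS 6 commands differ from the CentOS 7 ones. The firewall was already off on this machine, so nothing was run this time. The CentOS 7 commands are:

# Check the firewall status

systemctl status firewalld.service

# "running" means the firewall is on

# Stop the firewall

systemctl stop firewalld.service

# Check the firewall status again

systemctl status firewalld.service

# Disable the firewall at boot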

systemctl disable firewalld.service
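
The article does not list the CentOS 6 equivalents. As a sketch, assuming the stock iptables service on CentOS 6 and firewalld on CentOS 7, the hypothetical helper below only prints the commands for a given major version, so they can be reviewed before anything is executed.

```shell
# firewall_off_cmds MAJOR
# Print the commands that stop the firewall and disable it at boot
# for the given CentOS major version (6 or 7). Nothing is executed here.
firewall_off_cmds() {
  case "$1" in
    6)
      echo "service iptables stop"
      echo "chkconfig iptables off"
      ;;
    7)
      echo "systemctl stop firewalld.service"
      echo "systemctl disable firewalld.service"
      ;;
    *)
      echo "unsupported CentOS major version: $1" >&2
      return 1
      ;;
  esac
}

# Example (as root, after reviewing the output): firewall_off_cmds 6 | sh
```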

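6. Disable SELinux

A change made in the configuration file takes effect only after the server is rebooted.

# Check the current mode (works on both CentOS 6 and CentOS 7)

getenforce

# Switch to permissive mode until the next reboot (works on both CentOS 6 and CentOS 7)

setenforce 0

vim /etc/selinux/config

Change SELINUX=enforcing to SELINUX=disabled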

reboot
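
When several machines need the same change, the manual edit can be scripted. This is a minimal sketch, not the article's own method: `disable_selinux_config` is a hypothetical helper that rewrites the `SELINUX=` line with `sed` and keeps a `.bak` copy of the original file.

```shell
# disable_selinux_config FILE
# Rewrite the SELINUX= line of FILE to "disabled", keeping a FILE.bak backup.
# SELINUXTYPE= is left untouched because the pattern requires "=" right after SELINUX.
disable_selinux_config() {
  sed -i.bak 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# Example (as root): disable_selinux_config /etc/selinux/config
```

A reboot is still needed afterwards for the setting to take effect.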

7. Install and start the httpd service

# Already installed above

yum install httpd -y

# CentOS 6

/etc/rc.d/init.d/httpd start

# CentOS 7

systemctl start httpd.service

cd /var/www/html/

# Create the cm6 directory

mkdir -p cm6/6.2.1/redhat7/yum/RPMS/x86_64/

# Upload the cm6 files to /var/www/html/cm6/6.2.1/redhat7/yum/RPMS/x86_64/

# Upload allkeys.asc to /var/www/html/cm6/6.2.1/

# Test: open http://server4/cm6/6.2.1/redhat7/yum/RPMS/x86_64/ in a browser; the page should list the uploaded files

# If httpd fails to start, edit /etc/httpd/conf/httpd.conf and change

# #ServerName www.example.com:80 to ServerName localhost:80

8. Generate the repodata directory

cd /var/www/html/cm6/6.2.1/redhat7/yum

createrepo .

[root@server4 yum]# pwd

/var/www/html/cm6/6.2.1/redhat7/yum

[root@server4 yum]# createrepo .

Spawning worker 0 with 6 pkgs

Workers Finished

Gathering worker results

Saving Primary metadata

Saving file lists metadata

Saving other metadata

Generating sqlite DBs

Sqlite DBs complete

# If createrepo . fails, try updating through yum with the following commands

yum update

yum install -y yum-utils device-mapper-persistent-data lvm2

9. Configure the local yum repository

cd /etc/yum.repos.d/

vim cloudera-manager.repo

# File contents:

[cloudera-manager]

name=Cloudera Manager

baseurl=http://server4/cm6/6.2.1/redhat7/yum/

gpgcheck=0

enabled=1

# Verify with:

yum clean all

yum list | grep cloudera

[root@server4 yum.repos.d]# yum clean all

已加载插件:fastestmirror, priorities, security

Repository epel is listed more than once in the configuration

Cleaning repos: base cloudera-manager docker-ce-stable epel erlang-solutions extras jenkins nginx other remi-safe updates

清理一切

Cleaning up list of fastest mirrors
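
If the same repo file is needed on more than one machine, it can be generated instead of typed. A minimal sketch, assuming the repository is served at the base URL configured above; `write_cm_repo` is a hypothetical helper, and its default output path is the file edited in this step.

```shell
# write_cm_repo BASEURL [OUTFILE]
# Write a yum repo definition for a local Cloudera Manager mirror.
# OUTFILE defaults to the path used in this article.
write_cm_repo() (
  baseurl=$1
  outfile=${2:-/etc/yum.repos.d/cloudera-manager.repo}
  cat > "$outfile" <<EOF
[cloudera-manager]
name=Cloudera Manager
baseurl=$baseurl
gpgcheck=0
enabled=1
EOF
)

# Example (as root): write_cm_repo http://server4/cm6/6.2.1/redhat7/yum/
```

Run `yum clean all` afterwards so that yum picks up the new definition.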

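10. Create the cloudera-scm user

# Required on CentOS 7; not required on CentOS 6

useradd cloudera-scm

passwd cloudera-scm

# Enter the password when prompted (test123456 in this example)

# Grant passwordless sudo

echo "cloudera-scm ALL=(root)NOPASSWD:ALL" >> /etc/sudoers

su - cloudera-scm

exit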

[root@server4 yum.repos.d]# passwd cloudera-scm

更改用户 cloudera-scm 的密码 。

新的 密码:

无效的密码: 过于简单化/系统化

重新输入新的 密码:

重新输入新的 密码:

passwd: 所有的身份验证令牌已经成功更新。

[root@server4 yum.repos.d]# echo "cloudera-scm ALL=(root)NOPASSWD:ALL" >> /etc/sudoers

[root@server4 yum.repos.d]# su - cloudera-scm

[cloudera-scm@server4 ~]$ exit

logout

II. Install the Server service

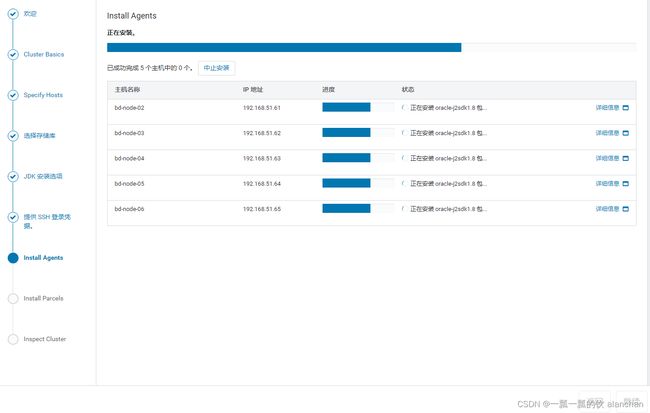

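1. Install the Server service

# On CentOS 6, if a JDK is already installed, the JDK package below can be skipped. On CentOS 7, the installation appears to need the bundled JDK even when another JDK is already present.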

yum install -y oracle-j2sdk1.8-1.8.0+update181-1.x86_64

yum install -y enterprise-debuginfo-6.2.1-1426065.el7.x86_64

yum install -y cloudera-manager-server-6.2.1-1426065.el7.x86_64

yum install -y cloudera-manager-server-db-2-6.2.1-1426065.el7.x86_64

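# If the commands fail with the error below, fix it as follows

Error: Cannot retrieve repository metadata (repomd.xml) for repository: docker-ce

cd /etc/yum.repos.d

vim docker-ce.repo

# Change enabled=1 to enabled=0

# Note: whenever new packages are added to the yum repository:

# 1. Delete the old repodata directory and regenerate it

# 2. Restart the httpd service

# 3. Clear the yum cache: yum clean all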

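2. Use MySQL as the metadata database

Prerequisite: the database must already exist. Its name must match the one passed to the script (the first attempt below passes cdh6, the successful run passes cm6).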
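
The statement for creating that database is not shown in the article. A minimal sketch, assuming a MySQL server reachable with the `mysql` client and the utf8 character set that Cloudera's installation guide uses for its example databases. `create_db_sql` is a hypothetical helper that only prints the statement and rejects names containing anything other than letters, digits, and underscores.

```shell
# create_db_sql NAME
# Print a CREATE DATABASE statement for NAME; refuse unsafe names.
create_db_sql() {
  case "$1" in
    ''|*[!A-Za-z0-9_]*)
      echo "invalid database name: $1" >&2
      return 1
      ;;
  esac
  printf 'CREATE DATABASE IF NOT EXISTS %s DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;\n' "$1"
}

# Example: create_db_sql cm6 | mysql -h 192.168.10.44 -u root -p
```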

# Point Cloudera Manager at MySQL as its metadata database. Prerequisite: the Cloudera Manager Server package is installed

/opt/cloudera/cm/schema/scm_prepare_database.sh -h 192.168.10.44 mysql cdh6 root 123456

[root@server4 ~]# /opt/cloudera/cm/schema/scm_prepare_database.sh -h 192.168.10.44 mysql cdh6 root 123456

JAVA_HOME=/usr/java/jdk1.8.0_144

Verifying that we can write to /etc/cloudera-scm-server

Creating SCM configuration file in /etc/cloudera-scm-server

Executing: /usr/java/jdk1.8.0_144/bin/java -cp /usr/share/java/mysql-connector-java.jar:/usr/share/java/oracle-connector-java.jar:/usr/share/java/postgresql-connector-java.jar:/opt/cloudera/cm/schema/../lib/* com.cloudera.enterprise.dbutil.DbCommandExecutor /etc/cloudera-scm-server/db.properties com.cloudera.cmf.db.

[ main] DbCommandExecutor INFO Unable to find JDBC driver for database type: MySQL

[ main] DbCommandExecutor ERROR JDBC Driver com.mysql.jdbc.Driver not found.

[ main] DbCommandExecutor ERROR Exiting with exit code 3

--> Error 3, giving up (use --force if you wish to ignore the error)

# The output reports: ERROR JDBC Driver com.mysql.jdbc.Driver not found.

# Fix: upload the MySQL JDBC driver jar to /opt/cloudera/cm/lib.

[root@server4 ~]# /opt/cloudera/cm/schema/scm_prepare_database.sh -h 192.168.10.44 mysql cm6 root 123456

JAVA_HOME=/usr/java/jdk1.8.0_144

Verifying that we can write to /etc/cloudera-scm-server

Creating SCM configuration file in /etc/cloudera-scm-server

Executing: /usr/java/jdk1.8.0_144/bin/java -cp /usr/share/java/mysql-connector-java.jar:/usr/share/java/oracle-connector-java.jar:/usr/share/java/postgresql-connector-java.jar:/opt/cloudera/cm/schema/../lib/* com.cloudera.enterprise.dbutil.DbCommandExecutor /etc/cloudera-scm-server/db.properties com.cloudera.cmf.db.

Thu Oct 27 09:35:03 CST 2022 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

[ main] DbCommandExecutor INFO Successfully connected to database.

All done, your SCM database is configured correctly!

# Check the result: cat /etc/cloudera-scm-server/db.properties

[root@server4 ~]# cat /etc/cloudera-scm-server/db.properties

# Auto-generated by scm_prepare_database.sh on 2022年 10月 27日 星期四 09:35:02 CST

#

# For information describing how to configure the Cloudera Manager Server

# to connect to databases, see the "Cloudera Manager Installation Guide."

#

com.cloudera.cmf.db.type=mysql

com.cloudera.cmf.db.host=192.168.10.44

com.cloudera.cmf.db.name=cm6

com.cloudera.cmf.db.user=root

com.cloudera.cmf.db.setupType=EXTERNAL

com.cloudera.cmf.db.password=123456

3. Start the server process (check port 7180)

# CentOS 6: stop any running instance and the embedded database, then start

service cloudera-scm-server stop

service cloudera-scm-server-db stop

service cloudera-scm-server start

service cloudera-scm-server status

# CentOS 7: the start, stop, and status commands

systemctl start cloudera-scm-server

systemctl stop cloudera-scm-server

systemctl status cloudera-scm-server

# Follow the startup log:

tail -f /var/log/cloudera-scm-server/cloudera-scm-server.log

# Check the startup status:

systemctl status cloudera-scm-server

netstat -an | grep 7180
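
The server can take a few minutes before port 7180 answers, so a script may prefer to wait rather than watch `tail -f`. The sketch below is a hypothetical helper that polls a log file for a fixed string. The marker text "Started Jetty server" is an assumption about what the Cloudera Manager log prints once the web UI is up; check your own log and adjust it.

```shell
# wait_for_log FILE PATTERN TIMEOUT_SECONDS
# Poll FILE once per second until a line contains PATTERN (fixed string).
# Returns 0 when found, non-zero when TIMEOUT_SECONDS elapse first.
wait_for_log() (
  file=$1
  pattern=$2
  remaining=$3
  while [ "$remaining" -gt 0 ]; do
    if grep -qF -- "$pattern" "$file" 2>/dev/null; then
      exit 0
    fi
    sleep 1
    remaining=$((remaining - 1))
  done
  # one last check after the final sleep
  grep -qF -- "$pattern" "$file" 2>/dev/null
)

# Example:
#   wait_for_log /var/log/cloudera-scm-server/cloudera-scm-server.log "Started Jetty server" 600
```

The log may still contain the marker from an earlier start, so this is most reliable on a fresh log or right after log rotation.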

[chenwei@server5 ~]$ sudo systemctl status cloudera-scm-server

● cloudera-scm-server.service - Cloudera CM Server Service

Loaded: loaded (/usr/lib/systemd/system/cloudera-scm-server.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2022-10-27 16:04:05 CST; 3s ago

Main PID: 29418 (java)

CGroup: /system.slice/cloudera-scm-server.service

└─29418 /usr/java/jdk1.8.0_181-cloudera/bin/java -cp .:/usr/share/java/mysql-connector-java.jar:/usr/share/java/oracle-connector-java.jar:/usr/share/java/postgresql-connector-java.jar:lib/* -server -Dlog4j.configuration=file:/etc/cloudera-scm-server/log4j.prope...

10月 27 16:04:05 server5 systemd[1]: Started Cloudera CM Server Service.

10月 27 16:04:05 server5 cm-server[29418]: JAVA_HOME=/usr/java/jdk1.8.0_181-cloudera

10月 27 16:04:05 server5 cm-server[29418]: Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=256m; support was removed in 8.0

10月 27 16:04:07 server5 cm-server[29418]: ERROR StatusLogger No log4j2 configuration file found. Using default configuration: logging only errors to the console. Set system property 'org.apache.logging.log4j.simplelog.StatusLogger.level' to TRACE to...itialization logging.

Hint: Some lines were ellipsized, use -l to show in full..

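URL: http://server5:7180/cmf/login

# Username/password: admin/admin

# If the log shows the following message:

java.lang.Exception: Non-thrown exception for stack trace.

# comment out the localhost mappings in /etc/hosts and keep only the "IP hostname" entries, for example:

192.168.52.5 server5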

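4. Steps to shut down the CDH cluster

- Stop the component services from the CDH management UI.

- Stop the CDH cluster from the CDH management UI.

- Stop the management UI itself from the command line with service cloudera-scm-server stop. Use the form of the command that matches your operating system and environment configuration.

# Command to stop the CDH management UI:

service cloudera-scm-server stop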

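5. Configure the local parcel

Place the parcel files in /opt/cloudera/parcel-repo only after the database has been initialized.

# Upload the CDH 6 parcel and its companion files to /opt/cloudera/parcel-repo

# Rename the checksum file from .sha1 to .sha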

mv CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.sha1 CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.sha

[root@server6 parcel-repo]# pwd

/opt/cloudera/parcel-repo

[root@server6 parcel-repo]# ll

总用量 2044360

-rw-r--r-- 1 root root 2093332003 11月 4 08:38 CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel

-rw-r--r-- 1 root root 40 11月 4 08:35 CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.sha

-rw-r--r-- 1 root root 64 11月 4 08:35 CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.sha256

-rw-r----- 1 cloudera-scm cloudera-scm 80030 11月 4 08:42 CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.torrent

# Once CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel.torrent appears, the CDH installation can proceed
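
A parcel of about 2 GB can be damaged during upload, so it is worth checking it against the checksum file before continuing. This is a sketch under the assumption that the `.sha` file holds the 40-character SHA-1 hex digest of the parcel (its size in the listing above is 40 bytes). `verify_parcel` is a hypothetical helper built on `sha1sum`.

```shell
# verify_parcel PARCEL
# Compare the SHA-1 of PARCEL with the hash stored in PARCEL.sha.
# Returns 0 on a match, non-zero otherwise.
verify_parcel() (
  parcel=$1
  expected=$(tr -d '[:space:]' < "$parcel.sha") || exit 1
  actual=$(sha1sum "$parcel" | awk '{ print $1 }')
  [ -n "$expected" ] && [ "$expected" = "$actual" ]
)

# Example:
#   cd /opt/cloudera/parcel-repo
#   verify_parcel CDH-6.2.1-1.cdh6.2.1.p0.1425774-el7.parcel && echo "checksum OK"
```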

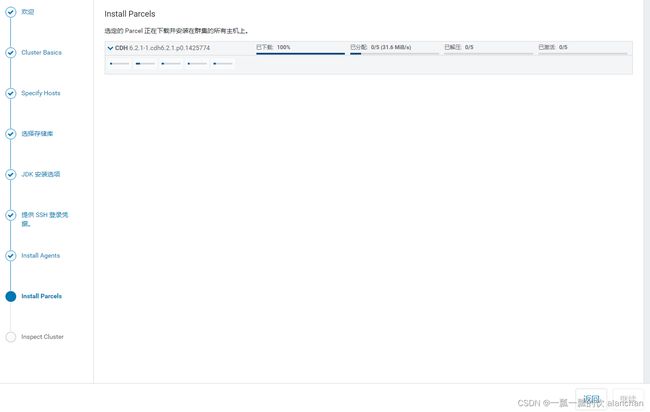

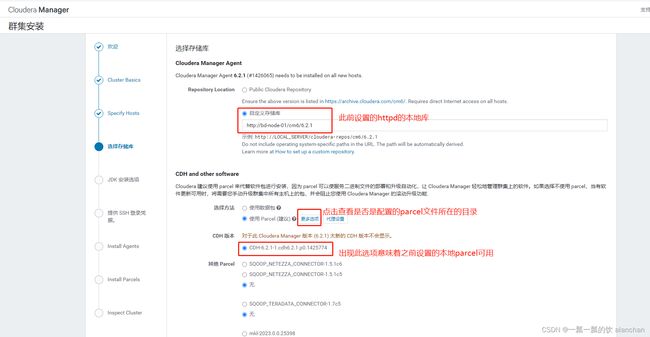

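6. Start installing CDH

The screenshots below may show a custom repository address that differs from the one above, because they were captured later while adding other services.

The address is the one served by the httpd setup above.

Open the Server UI: http://server5:7180/cmf/login

The username and password are both admin.

Set the parcel update frequency to 1 minute so that the locally configured parcel is picked up quickly.

For granting sudo rights to a non-root user, see the linked article: Setting sudo permissions for a non-root user

Select "I understand the risks, let me continue with cluster creation." to continue.

Click Back.

At this point the parcel has been downloaded, distributed, unpacked, and activated. Installing the services of the individual CDH components starts from here.

Return to the main page.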

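7. Swappiness and transparent huge pages

If the host inspection reports no warnings about these settings after installation, skip this step.

# Takes effect immediately, lost on reboot

sysctl -w vm.swappiness=10

echo never > /sys/kernel/mm/transparent_hugepage/defrag

echo never > /sys/kernel/mm/transparent_hugepage/enabled

# Persists across reboots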

echo "vm.swappiness=10" >> /etc/sysctl.conf

echo "echo never > /sys/kernel/mm/transparent_hugepage/defrag" >> /etc/rc.local

echo "echo never > /sys/kernel/mm/transparent_hugepage/enabled" >> /etc/rc.local
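
Appending with `>>` adds a duplicate line every time the commands are re-run. A small sketch of an idempotent alternative; `ensure_line` is a hypothetical helper that appends a line only when the file does not already contain exactly that line.

```shell
# ensure_line FILE LINE
# Append LINE to FILE only if FILE does not already contain exactly that line.
ensure_line() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Examples (as root):
#   ensure_line /etc/sysctl.conf "vm.swappiness=10"
#   ensure_line /etc/rc.local "echo never > /sys/kernel/mm/transparent_hugepage/defrag"
#   ensure_line /etc/rc.local "echo never > /sys/kernel/mm/transparent_hugepage/enabled"
```

On CentOS 7, /etc/rc.d/rc.local is not executable by default, so the lines added to it run at boot only after `chmod +x /etc/rc.d/rc.local`.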

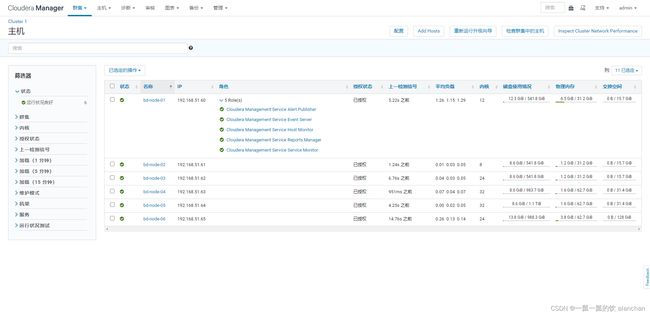

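8. Add the Cloudera Management Service

1) Service overview

The service implements various management functions as a set of roles:

- Activity Monitor: collects information about activities run by the MapReduce service. This role is not added by default and is generally not needed in production.

- Host Monitor: collects health and metric information about hosts.

- Service Monitor: collects health and metric information about services, and activity information from the YARN and Impala services.

- Event Server: aggregates component events and makes them available for alerting and search.

- Alert Publisher: generates and delivers alerts for specific types of events. It is rarely used in practice.

- Reports Manager: generates reports that give a historical view of disk utilization by user, user group, and directory, of processing activity by user and YARN pool, and of HBase tables and namespaces.

2) Configuration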

mkdir -p /var/lib/cloudera-host-monitor

mkdir /var/lib/cloudera-service-monitor

chown -R cloudera-scm:cloudera-scm /var/lib/cloudera-host-monitor

chown -R cloudera-scm:cloudera-scm /var/lib/cloudera-service-monitor/

[root@bd-node-01 ~]# cd /var/lib/cloudera-host-monitor

-bash: cd: /var/lib/cloudera-host-monitor: No such file or directory

You have new mail in /var/spool/mail/root

[root@bd-node-01 ~]# mkdir -p /var/lib/cloudera-host-monitor

[root@bd-node-01 ~]# mkdir /var/lib/cloudera-service-monitor

[root@bd-node-01 ~]# chown -R cloudera-scm:cloudera-scm /var/lib/cloudera-host-monitor

[root@bd-node-01 ~]# chown -R cloudera-scm:cloudera-scm /var/lib/cloudera-service-monitor/

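# All CDH monitoring roles run on bd-node-01, so the directories only need to be created there

This completes the deployment and verification of the CDH environment.

9. Add the ZooKeeper service

- Set the environment variables

# ZooKeeper installation directory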

/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/zookeeper/bin

vim /etc/profile

export ZOOKEEPER_HOME=/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/zookeeper

export PATH=$PATH:$ZOOKEEPER_HOME/bin

source /etc/profile

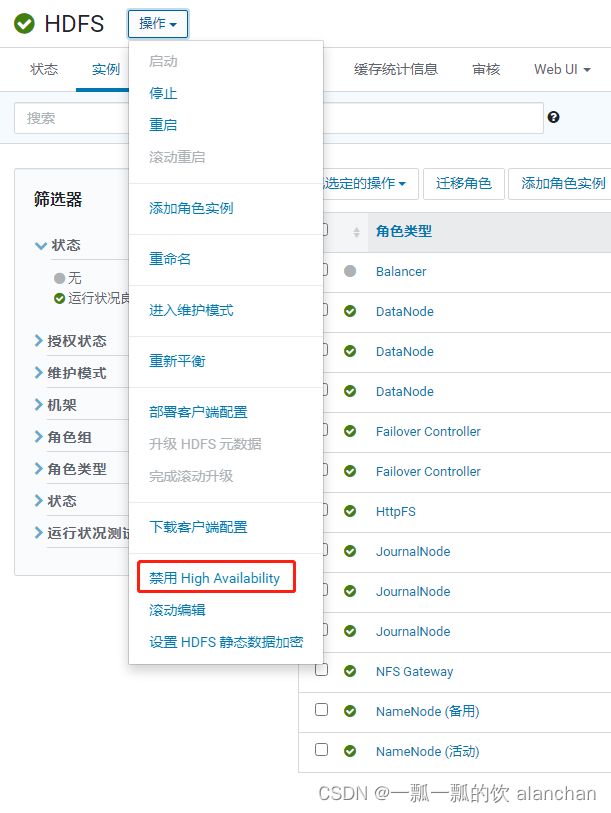

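10. Add the HDFS and YARN services (HDFS HA and YARN HA) and verify

Installing HDFS and YARN through the CDH management UI is straightforward. The main thing to get right is the role assignment (NameNode, DataNode, and so on), which depends on your environment. After the services are added, apply the following core-site.xml settings.

<property>

<name>hadoop.http.staticuser.user</name>

<value>alanchan</value>

</property>

<property>

<name>hadoop.proxyuser.alanchan.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.alanchan.groups</name>

<value>*</value>

</property>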

- Set the environment variables

# Verify that the HDFS shell and web UI work

# Hadoop installation directory

/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hadoop/bin

vim /etc/profile

export HADOOP_HOME=/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hadoop

export PATH=$PATH:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin

source /etc/profile

# Test YARN:

vim testinput.txt

I love BeiJing

I love China

Beijing is the capital of China

# Create the HDFS directory and upload the file

hadoop fs -mkdir -p /test

hadoop fs -put testinput.txt /test

hadoop fs -ls /test

# Run wordcount

yarn jar /opt/cloudera/parcels/CDH/jars/hadoop-mapreduce-examples-3.0.0-cdh6.2.1.jar wordcount /test /output
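
To confirm that the job produced the right counts, the same tally can be computed locally and compared with the job output (for example via `hadoop fs -cat /output/part-r-00000`). This is a sketch, assuming the example job splits on whitespace, is case-sensitive, and writes tab-separated `word<TAB>count` lines in byte order from a single reducer. `local_wordcount` is a hypothetical helper.

```shell
# local_wordcount FILE
# Count whitespace-separated tokens in FILE and print "word<TAB>count",
# sorted in byte order (case-sensitive, so BeiJing and Beijing stay distinct).
local_wordcount() {
  tr -s '[:space:]' '\n' < "$1" |
    grep -v '^$' |
    LC_ALL=C sort |
    uniq -c |
    awk '{ printf "%s\t%s\n", $2, $1 }'
}

# Example: local_wordcount testinput.txt
```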

11. Add the Hive service and verify

Adding the Hive service is straightforward and is not repeated here. This section only verifies that Hive works.

# Create the MySQL database for Hive beforehand

# If you see: ERROR JDBC Driver com.mysql.jdbc.Driver not found.

# upload the MySQL JDBC driver jar to /opt/cloudera/cm/lib.

# If you see: java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

cp /opt/cloudera/cm/lib/mysql-connector-java-5.1.40.jar /opt/cloudera/parcels/CDH/lib/hive/lib/

# Hive installation directory

/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hive

vim /etc/profile

export HIVE_HOME=/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hive

export PATH=$PATH:$HIVE_HOME/bin

source /etc/profile

# In this example Hive is installed only on server8

! connect jdbc:hive2://server8:10000

[root@server8 bin]# beeline

WARNING: Use "yarn jar" to launch YARN applications.

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/jars/log4j-slf4j-impl-2.8.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/jars/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Beeline version 2.1.1-cdh6.2.1 by Apache Hive

beeline> ! connect jdbc:hive2://server8:10000

Connecting to jdbc:hive2://server8:10000

Enter username for jdbc:hive2://server8:10000: root

Enter password for jdbc:hive2://server8:10000: ********

Connected to: Apache Hive (version 2.1.1-cdh6.2.1)

Driver: Hive JDBC (version 2.1.1-cdh6.2.1)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://server8:10000> show databases;

INFO : Compiling command(queryId=hive_20221107140032_17f94dd8-176b-4033-8b99-67b74a5e12cf): show databases

INFO : Semantic Analysis Completed

INFO : Returning Hive schema: Schema(fieldSchemas:[FieldSchema(name:database_name, type:string, comment:from deserializer)], properties:null)

INFO : Completed compiling command(queryId=hive_20221107140032_17f94dd8-176b-4033-8b99-67b74a5e12cf); Time taken: 1.145 seconds

INFO : Executing command(queryId=hive_20221107140032_17f94dd8-176b-4033-8b99-67b74a5e12cf): show databases

INFO : Starting task [Stage-0:DDL] in serial mode

INFO : Completed executing command(queryId=hive_20221107140032_17f94dd8-176b-4033-8b99-67b74a5e12cf); Time taken: 0.035 seconds

INFO : OK

+----------------+

| database_name |

+----------------+

| default |

+----------------+

1 row selected (1.693 seconds)

0: jdbc:hive2://server8:10000>

0: jdbc:hive2://server8:10000> create database test;

0: jdbc:hive2://server8:10000> show databases;

+----------------+

| database_name |

+----------------+

| default |

| test |

+----------------+

0: jdbc:hive2://server8:10000> create table the_nba_championship(

. . . . . . . . . . . . . . .> team_name string,

. . . . . . . . . . . . . . .> champion_year array<string>

. . . . . . . . . . . . . . .> ) row format delimited

. . . . . . . . . . . . . . .> fields terminated by ','

. . . . . . . . . . . . . . .> collection items terminated by '|';

# Upload the data file to HDFS

0: jdbc:hive2://server8:10000> select * from the_nba_championship;

+---------------------------------+----------------------------------------------------+

| the_nba_championship.team_name | the_nba_championship.champion_year |

+---------------------------------+----------------------------------------------------+

| Chicago Bulls | ["1991","1992","1993","1996","1997","1998"] |

| San Antonio Spurs | ["1999","2003","2005","2007","2014"] |

| Golden State Warriors | ["1947","1956","1975","2015","2017","2018","2022"] |

| Boston Celtics | ["1957","1959","1960","1961","1962","1963","1964","1965","1966","1968","1969","1974","1976","1981","1984","1986","2008"] |

| L.A. Lakers | ["1949","1950","1952","1953","1954","1972","1980","1982","1985","1987","1988","2000","2001","2002","2009","2010","2020"] |

| Miami Heat | ["2006","2012","2013"] |

| Philadelphia 76ers | ["1955","1967","1983"] |

| Detroit Pistons | ["1989","1990","2004"] |

| Houston Rockets | ["1994","1995"] |

| New York Knicks | ["1970","1973"] |

| Cleveland Cavaliers | ["2016"] |

| Toronto Raptors | ["2019"] |

| Milwaukee Bucks | ["2021"] |

+---------------------------------+----------------------------------------------------+

0: jdbc:hive2://server8:10000> select a.team_name ,count(*) as nums

. . . . . . . . . . . . . . .> from the_nba_championship a lateral view explode(champion_year) b as year

. . . . . . . . . . . . . . .> group by a.team_name

. . . . . . . . . . . . . . .> order by nums desc;

+------------------------+-------+

| a.team_name | nums |

+------------------------+-------+

| Boston Celtics | 17 |

| L.A. Lakers | 17 |

| Golden State Warriors | 7 |

| Chicago Bulls | 6 |

| San Antonio Spurs | 5 |

| Philadelphia 76ers | 3 |

| Miami Heat | 3 |

| Detroit Pistons | 3 |

| New York Knicks | 2 |

| Houston Rockets | 2 |

| Milwaukee Bucks | 1 |

| Toronto Raptors | 1 |

| Cleveland Cavaliers | 1 |

+------------------------+-------+
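
The query result can be cross-checked against the raw data file without Hive. The sketch below assumes the file layout implied by the table definition: one team per line, a comma between team name and years, and `|` between years (for example `Miami Heat,2006|2012|2013`). `titles_per_team` is a hypothetical helper that mirrors the `lateral view explode` count.

```shell
# titles_per_team FILE
# For each "team,year|year|..." line print "count<TAB>team", highest count first.
titles_per_team() {
  awk -F',' '{ n = split($2, years, "|"); printf "%d\t%s\n", n, $1 }' "$1" |
    sort -rn
}

# Example: titles_per_team the_nba_championship.txt   (file name is hypothetical)
```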

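12. Add the Oozie service

Adding the Oozie service is straightforward and is not repeated here. This section only covers fixes for errors encountered.

# Create the database cdhoozie (choose your own name)

# If you see: java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

cp /opt/cloudera/cm/lib/mysql-connector-java-5.1.40.jar /opt/cloudera/parcels/CDH/lib/oozie/lib/

cp /opt/cloudera/cm/lib/mysql-connector-java-5.1.40.jar /var/lib/oozie/

# Cause of the problem (typically the Oozie web console being unavailable): the ExtJS 2.2 package is missing

http://archive.cloudera.com/gplextras/misc/ext-2.2.zip

# Upload it to the server and unzip it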

unzip ext-2.2.zip

[root@server8 libext]# pwd

/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/oozie/libext

[root@server8 libext]# ll

总用量 968

drwxr-xr-x 9 root root 254 11月 17 09:20 ext-2.2

-rw-r--r-- 1 root root 990924 11月 7 14:26 mysql-connector-java-5.1.40.jar

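13. Add the Hue service

Adding the Hue service is straightforward and is not repeated here.

# Create the database cdhhue (choose your own name)

# If you see: java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

cp /opt/cloudera/cm/lib/mysql-connector-java-5.1.40.jar /opt/cloudera/parcels/CDH/lib/oozie/lib/

# Set the login username and password at first login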

hue/hue

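14. Add the Impala service

Adding the Impala service is straightforward and is not repeated here.

15. Add the HBase service (with HA) and verify

Adding the HBase service is straightforward and is not repeated here.

# Two masters are enough

# HBase installation directory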

/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hbase/bin

vim /etc/profile

export HBASE_HOME=/opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/lib/hbase

export PATH=$PATH:${HBASE_HOME}/bin:${HBASE_HOME}/sbin

source /etc/profile

[root@server6 bin]# hbase shell

Java HotSpot(TM) 64-Bit Server VM warning: Using incremental CMS is deprecated and will likely be removed in a future release

HBase Shell

Use "help" to get list of supported commands.

Use "exit" to quit this interactive shell.

For Reference, please visit: http://hbase.apache.org/2.0/book.html#shell

Version 2.1.0-cdh6.2.1, rUnknown, Wed Sep 11 01:05:56 PDT 2019

Took 0.0037 seconds

hbase(main):001:0> help

HBase Shell, version 2.1.0-cdh6.2.1, rUnknown, Wed Sep 11 01:05:56 PDT 2019

Type 'help "COMMAND"', (e.g. 'help "get"' -- the quotes are necessary) for help on a specific command.

hbase(main):002:0> status

1 active master, 1 backup masters, 3 servers, 0 dead, 0.6667 average load

Took 1.1054 seconds

16. Add the Sqoop service and verify

[root@server7 lib]# sqoop version

Warning: /opt/cloudera/parcels/CDH-6.2.1-1.cdh6.2.1.p0.1425774/bin/../lib/sqoop/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

22/11/07 16:05:38 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7-cdh6.2.1

Sqoop 1.4.7-cdh6.2.1

git commit id

Compiled by jenkins on Wed Sep 11 01:28:03 PDT 2019

sqoop list-databases --connect jdbc:mysql://192.168.10.44/test --username root --password rootroot

# Import from MySQL into Hive

sqoop import \

--connect 'jdbc:mysql://192.168.10.44/test' \

--username root \

--password '122456' \

--table person \

--hive-import \

--hive-database test \

--hive-table person \

--delete-target-dir \

--m 1

# The target Hive table is created automatically; there is no need to create it by hand
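
Passing `--password` on the command line leaves the password in the shell history and the process list. Sqoop also accepts `-P` (prompt) and `--password-file`. The sketch below prepares such a file; `write_password_file` is a hypothetical helper, and the file is written without a trailing newline on the assumption that Sqoop would otherwise treat the newline as part of the password.

```shell
# write_password_file FILE PASSWORD
# Store PASSWORD in FILE without a trailing newline, readable only by the owner.
write_password_file() (
  umask 077
  printf '%s' "$2" > "$1"
  chmod 400 "$1"
)

# Example (the path is hypothetical):
#   write_password_file "$HOME/.mysql.pw" 'your-password'
#   sqoop import --connect 'jdbc:mysql://192.168.10.44/test' --username root \
#     --password-file "file://$HOME/.mysql.pw" --table person ...
```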

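This completes the CDH base environment setup, the Cloudera Manager Server deployment, and the deployment and verification of the related components.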