YOLOv5 utils/activations.py Walkthrough

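Code related to the activation function implementations.

The activation functions are reimplemented here so that they still work in models where the built-in nn versions cannot be called directly (the code comments describe them as export-friendly versions).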
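As a minimal sketch of how such a reimplementation might be put to use, the snippet below swaps every `nn.SiLU` in a small model for the hand-written `SiLU` before export. The helper name `replace_silu` and the toy `nn.Sequential` model are assumptions made for illustration and are not part of activations.py.

```python
import torch
import torch.nn as nn


class SiLU(nn.Module):
    """Hand-written SiLU, same expression as in activations.py."""

    @staticmethod
    def forward(x):
        return x * torch.sigmoid(x)


def replace_silu(module):
    """Hypothetical helper: recursively swap nn.SiLU children for SiLU."""
    for name, child in list(module.named_children()):
        if isinstance(child, nn.SiLU):
            setattr(module, name, SiLU())
        else:
            replace_silu(child)
    return module


# Toy model, used only to exercise the helper
model = nn.Sequential(
    nn.Conv2d(3, 8, 3, padding=1),
    nn.SiLU(),
    nn.Sequential(nn.Conv2d(8, 8, 1), nn.SiLU()),
).eval()

x = torch.randn(1, 3, 8, 8)
before = model(x)
replace_silu(model)
after = model(x)

remaining = sum(isinstance(m, nn.SiLU) for m in model.modules())
print(remaining, torch.allclose(before, after, atol=1e-6))
```

The outputs before and after the swap should agree to within floating-point tolerance, since both versions compute x * sigmoid(x).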

    # Activation functions

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

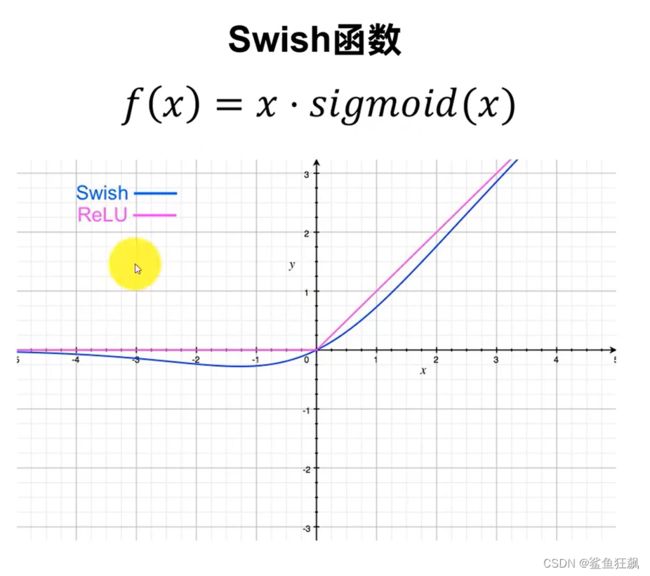

    # SiLU https://arxiv.org/pdf/1606.08415.pdf ----------------------------------------------------------------------------
    class SiLU(nn.Module):  # export-friendly version of nn.SiLU()
        @staticmethod
        def forward(x):
            return x * torch.sigmoid(x)


    class Hardswish(nn.Module):  # export-friendly version of nn.Hardswish()
        @staticmethod
        def forward(x):
            # return x * F.hardsigmoid(x)  # for torchscript and CoreML
            return x * F.hardtanh(x + 3, 0., 6.) / 6.  # for torchscript, CoreML and ONNX


    class MemoryEfficientSwish(nn.Module):
        class F(torch.autograd.Function):
            @staticmethod
            def forward(ctx, x):
                ctx.save_for_backward(x)
                return x * torch.sigmoid(x)

            @staticmethod
            def backward(ctx, grad_output):
                x = ctx.saved_tensors[0]
                sx = torch.sigmoid(x)
                return grad_output * (sx * (1 + x * (1 - sx)))

        def forward(self, x):
            return self.F.apply(x)

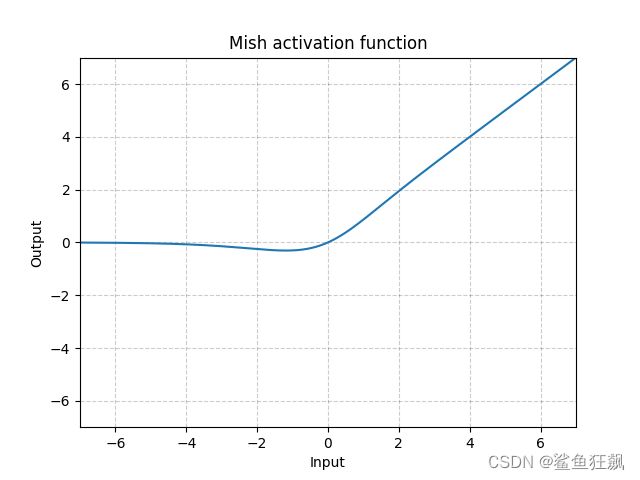

    # Mish https://github.com/digantamisra98/Mish --------------------------------------------------------------------------
    class Mish(nn.Module):
        @staticmethod
        def forward(x):
            return x * F.softplus(x).tanh()


    class MemoryEfficientMish(nn.Module):
        # Inside the methods below, F resolves to the module-level torch.nn.functional,
        # not to this nested class, because class scopes are skipped in function name lookup
        class F(torch.autograd.Function):
            @staticmethod
            def forward(ctx, x):
                ctx.save_for_backward(x)
                return x.mul(torch.tanh(F.softplus(x)))  # x * tanh(ln(1 + exp(x)))

            @staticmethod
            def backward(ctx, grad_output):
                x = ctx.saved_tensors[0]
                sx = torch.sigmoid(x)
                fx = F.softplus(x).tanh()
                return grad_output * (fx + x * sx * (1 - fx * fx))

        def forward(self, x):
            return self.F.apply(x)


    # FReLU https://arxiv.org/abs/2007.11824 -------------------------------------------------------------------------------
    class FReLU(nn.Module):
        def __init__(self, c1, k=3):  # ch_in, kernel
            super().__init__()
            self.conv = nn.Conv2d(c1, c1, k, 1, 1, groups=c1, bias=False)
            self.bn = nn.BatchNorm2d(c1)

        def forward(self, x):
            return torch.max(x, self.bn(self.conv(x)))
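The hand-written backward passes of MemoryEfficientSwish and MemoryEfficientMish follow from the product and chain rules: for Swish, d/dx [x * s(x)] = s(x) * (1 + x * (1 - s(x))) with s the sigmoid; for Mish, d/dx [x * t(x)] = t(x) + x * s(x) * (1 - t(x)^2) with t(x) = tanh(softplus(x)). One way to check them numerically is `torch.autograd.gradcheck`, as in the sketch below. The names `SwishFn` and `MishFn` are chosen here for illustration; the bodies copy the nested `F` classes from the listing.

```python
import torch
import torch.nn.functional as F


class SwishFn(torch.autograd.Function):
    @staticmethod
    def forward(ctx, x):
        ctx.save_for_backward(x)
        return x * torch.sigmoid(x)

    @staticmethod
    def backward(ctx, grad_output):
        x = ctx.saved_tensors[0]
        sx = torch.sigmoid(x)
        return grad_output * (sx * (1 + x * (1 - sx)))


class MishFn(torch.autograd.Function):
    @staticmethod
    def forward(ctx, x):
        ctx.save_for_backward(x)
        return x.mul(torch.tanh(F.softplus(x)))

    @staticmethod
    def backward(ctx, grad_output):
        x = ctx.saved_tensors[0]
        sx = torch.sigmoid(x)
        fx = F.softplus(x).tanh()
        return grad_output * (fx + x * sx * (1 - fx * fx))


torch.manual_seed(0)
# gradcheck compares analytic and finite-difference gradients; it needs double precision
x = torch.randn(8, dtype=torch.double, requires_grad=True)

swish_ok = torch.autograd.gradcheck(SwishFn.apply, (x,))
mish_ok = torch.autograd.gradcheck(MishFn.apply, (x,))
print(swish_ok, mish_ok)
```

Both versions save only the input x and recompute the sigmoid or tanh terms in backward, which is where the memory saving comes from.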

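staticmethod

@staticmethod decorates a method of a class so that it can be called without creating an instance, for example SiLU.forward(x). It can also be called on an instance like any ordinary method. Such a method is called a static method. It receives no implicit self argument, so it cannot reach instance attributes or other methods through self; anything it needs from the class must be referenced explicitly by class name. Any speed gain from skipping the instance binding is small; the main point is that these activations keep no state, so forward does not need self.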
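A minimal sketch of both call styles, using the SiLU class from this file; the input values are arbitrary and chosen only for illustration:

```python
import torch
import torch.nn as nn


class SiLU(nn.Module):
    # forward takes no `self` because it uses no module state
    @staticmethod
    def forward(x):
        return x * torch.sigmoid(x)


x = torch.tensor([-1.0, 0.0, 2.0])

# Called on the class, without creating an instance
y_class = SiLU.forward(x)

# Called the usual way: nn.Module.__call__ looks up forward on the
# instance and passes x through unchanged
y_instance = SiLU()(x)

same = torch.equal(y_class, y_instance)
print(same)
```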

Swish

    class SiLU(nn.Module):  # export-friendly version of nn.SiLU()
        @staticmethod
        def forward(x):
            return x * torch.sigmoid(x)

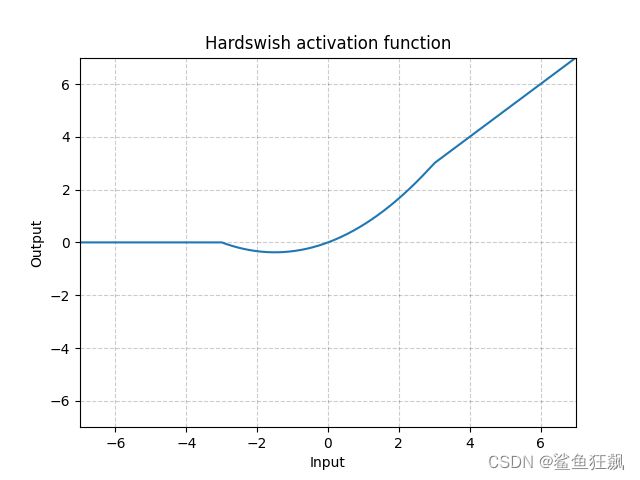
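As a quick sanity check, the expression x * sigmoid(x) can be compared against PyTorch's built-in `F.silu`. The sample points are arbitrary; this is only a sketch of how one might confirm the two agree.

```python
import torch
import torch.nn.functional as F

x = torch.linspace(-6.0, 6.0, steps=13)

custom = x * torch.sigmoid(x)  # expression used by the SiLU class above
builtin = F.silu(x)            # PyTorch's own implementation

max_diff = (custom - builtin).abs().max().item()
print(max_diff)
```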

Hardswish

    class Hardswish(nn.Module):  # export-friendly version of nn.Hardswish()
        @staticmethod
        def forward(x):
            # return x * F.hardsigmoid(x)  # for torchscript and CoreML
            return x * F.hardtanh(x + 3, 0., 6.) / 6.
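Written out, the hardtanh form is a piecewise function: 0 for x <= -3, x for x >= 3, and x * (x + 3) / 6 in between. The sketch below checks that reading against both an explicit piecewise reference and the built-in `F.hardswish`; the function name `hardswish` and the sample points are chosen here for illustration.

```python
import torch
import torch.nn.functional as F


def hardswish(x):
    # Same expression as Hardswish.forward above
    return x * F.hardtanh(x + 3, 0., 6.) / 6.


x = torch.tensor([-4.0, -3.0, 0.0, 1.5, 3.0, 5.0])
y = hardswish(x)

# Piecewise reference: 0 for x <= -3, x for x >= 3, x * (x + 3) / 6 otherwise
reference = torch.where(
    x <= -3,
    torch.zeros_like(x),
    torch.where(x >= 3, x, x * (x + 3) / 6),
)

matches_piecewise = torch.allclose(y, reference)
matches_builtin = torch.allclose(y, F.hardswish(x))
print(matches_piecewise, matches_builtin)
```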

Mish

Mish(x) = x * tanh(softplus(x)), where softplus(x) = ln(1 + exp(x))

    class Mish(nn.Module):
        @staticmethod
        def forward(x):
            return x * F.softplus(x).tanh()

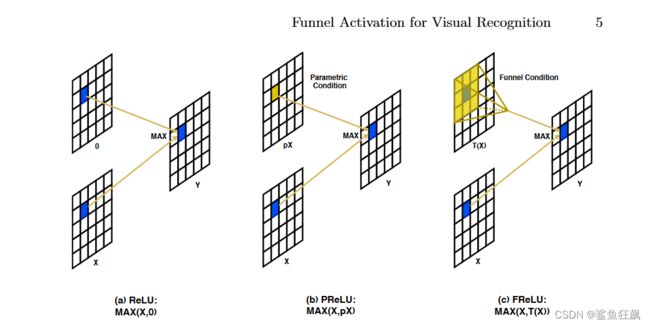
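To connect the code to the formula, the sketch below evaluates Mish both through `F.softplus` (as the class does) and through the formula written out with `log1p` and `exp`. The sample range is an arbitrary choice for illustration.

```python
import torch
import torch.nn.functional as F

x = torch.linspace(-5.0, 5.0, steps=11)

# As in Mish.forward above
mish = x * F.softplus(x).tanh()

# Formula written out: x * tanh(ln(1 + exp(x)))
by_formula = x * torch.tanh(torch.log1p(torch.exp(x)))

max_diff = (mish - by_formula).abs().max().item()
print(max_diff)
```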

FReLU

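Comparison of three activation functions

FReLU: takes the max of x and T(x). Compared with a plain max against a constant, it adds a funnel condition T(x), which in the code below is a depthwise convolution followed by batch normalization.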

    class FReLU(nn.Module):
        def __init__(self, c1, k=3):  # ch_in, kernel
            super().__init__()
            # nn.Conv2d(in_channels, out_channels, kernel_size, stride, padding, dilation=1, groups=1, bias=True)
            # groups=c1 makes this a depthwise convolution: one k x k filter per channel
            self.conv = nn.Conv2d(c1, c1, k, 1, 1, groups=c1)
            self.bn = nn.BatchNorm2d(c1)

        def forward(self, x):
            return torch.max(x, self.bn(self.conv(x)))
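A small sketch of FReLU in use. Note that the class above hardcodes padding=1, which keeps the spatial size unchanged only for k=3; the variant below uses padding=k // 2 instead, which is an assumption added here for odd kernel sizes and is not what the file does. The tensor sizes are arbitrary.

```python
import torch
import torch.nn as nn


class FReLU(nn.Module):
    def __init__(self, c1, k=3):  # ch_in, kernel
        super().__init__()
        # padding=k // 2 is an assumption of this sketch (the file uses padding=1)
        self.conv = nn.Conv2d(c1, c1, k, 1, k // 2, groups=c1, bias=False)
        self.bn = nn.BatchNorm2d(c1)

    def forward(self, x):
        # Element-wise max of the input and the funnel condition T(x)
        return torch.max(x, self.bn(self.conv(x)))


x = torch.randn(2, 8, 16, 16)

act3 = FReLU(8).eval()
y3 = act3(x)

act5 = FReLU(8, k=5).eval()
y5 = act5(x)

# Depthwise conv: one 3x3 filter per channel, so the weight is (8, 1, 3, 3)
print(y3.shape, y5.shape, tuple(act3.conv.weight.shape))
```

Because the output is a max with x itself, every output element is at least as large as the corresponding input element, and the output shape matches the input shape.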