Linux Kernel Source Analysis: Virtual Memory Mapping

Contents

How memory mapping works

System calls

The three stages of an mmap memory mapping

The sys_mmap system call

The munmap system call

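Memory mapping means creating a mapping in a process's virtual address space. There are two kinds:

1) File mapping: a memory mapping backed by a file. A range of the file is mapped into the process's virtual address space, and the data source is the file on a storage device.

2) Anonymous mapping: a memory mapping with no file behind it. Physical memory is mapped into the process's virtual address space, and there is no data source.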
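To make the two kinds concrete, here is a minimal user-space sketch using the POSIX `mmap` call. The helper names `map_file_readonly` and `map_anonymous` are made up for this illustration, and the choice of `MAP_PRIVATE` and read-only protection for the file case is an assumption, not something the text prescribes.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <sys/mman.h>
#include <sys/stat.h>
#include <unistd.h>

/* File mapping (hypothetical helper): map the whole file at `path` read-only.
 * Returns the mapping, or MAP_FAILED on error; stores the length in *len_out. */
void *map_file_readonly(const char *path, size_t *len_out)
{
    assert(len_out != NULL);

    int fd = open(path, O_RDONLY);
    if (fd < 0)
        return MAP_FAILED;

    struct stat st;
    if (fstat(fd, &st) < 0 || st.st_size == 0) {
        close(fd);
        return MAP_FAILED;
    }

    void *p = mmap(NULL, (size_t)st.st_size, PROT_READ, MAP_PRIVATE, fd, 0);
    close(fd);                      /* the mapping stays valid after close() */
    if (p != MAP_FAILED)
        *len_out = (size_t)st.st_size;
    return p;
}

/* Anonymous mapping (hypothetical helper): no file behind it, so fd is -1
 * and the pages read back as zeros until written. */
void *map_anonymous(size_t len)
{
    return mmap(NULL, len, PROT_READ | PROT_WRITE,
                MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
}
```

The only difference at the call site is the data source: the file case passes a descriptor and an offset, while the anonymous case passes `MAP_ANONYMOUS` with `fd` set to -1.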

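How memory mapping works

When a memory mapping is created, the kernel allocates a virtual memory area in the process's user virtual address space. Physical memory is allocated lazily: no physical page is assigned until the process first accesses a virtual page, which triggers a page fault.

- For a file mapping, the kernel allocates a physical page, reads the data for the specified file range into it, and then maps the virtual page to the physical page in the page table;

- For an anonymous mapping, the kernel allocates a physical page and then maps the virtual page to the physical page in the page table.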
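The lazy allocation described above can be observed from user space. The following is a sketch, assuming a Linux system where `mincore` is available; `resident_pages` and `touch_pages` are names invented for this example. The idea is to ask the kernel which pages of a mapping are resident, then write to each page and ask again.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <stdlib.h>
#include <sys/mman.h>
#include <unistd.h>

/* Count how many of the `npages` pages starting at `addr` are resident in RAM.
 * Returns -1 if the query fails. */
long resident_pages(void *addr, size_t npages)
{
    long page = sysconf(_SC_PAGESIZE);
    assert(page > 0);

    unsigned char *vec = malloc(npages);    /* one status byte per page */
    if (!vec)
        return -1;

    long count = -1;
    if (mincore(addr, npages * (size_t)page, vec) == 0) {
        count = 0;
        for (size_t i = 0; i < npages; i++)
            count += vec[i] & 1;            /* bit 0 set: page is resident */
    }
    free(vec);
    return count;
}

/* Write one byte into each page so that every page is accessed at least once. */
void touch_pages(void *addr, size_t npages)
{
    long page = sysconf(_SC_PAGESIZE);
    assert(page > 0);

    volatile char *p = addr;
    for (size_t i = 0; i < npages; i++)
        p[i * (size_t)page] = 1;
}
```

A typical use is to create an anonymous mapping, call `resident_pages` before and after `touch_pages`, and compare the two counts. On an ordinary Linux kernel one would expect the count to rise to `npages` only after the pages are touched, though the value before touching can vary with kernel configuration.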

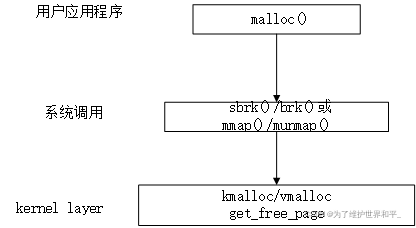

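System calls

Applications request memory with malloc or mmap. For malloc, glibc's memory allocator, ptmalloc, obtains virtual memory from the kernel in page-sized units through brk or mmap, and then carves those pages into smaller blocks that it hands to the application.

The default threshold is 128 KB: if the requested size is below the threshold, ptmalloc uses brk; otherwise it uses mmap.

Note: the user-space mmap and the kernel's mmap take different parameters.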
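One way to watch the threshold at work is to check whether the program break moves during a `malloc` call. This is a sketch that assumes glibc with default tuning; `break_delta_for_malloc` is a hypothetical helper, and other allocators or tuned thresholds may behave differently. glibc can also raise the threshold at run time after a large block is freed, so the large-request measurement is best taken once per process.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>
#include <unistd.h>

/* Return how many bytes the program break moved while malloc(size) ran.
 * A result of 0 means this request was not satisfied by growing the heap
 * with brk. */
ptrdiff_t break_delta_for_malloc(size_t size)
{
    /* Warm up first so the allocator's one-time setup is not counted.
     * The volatile store keeps the compiler from removing the call. */
    volatile char *warm = malloc(16);
    if (warm)
        warm[0] = 1;
    free((void *)warm);

    char *before = sbrk(0);             /* current program break */
    assert(before != (char *)-1);

    volatile char *p = malloc(size);
    if (p)
        p[0] = 1;
    char *after = sbrk(0);

    free((void *)p);
    return after - before;
}
```

With a 1 MB request, which is above the 128 KB default, the expectation under these assumptions is a delta of 0, because the block comes from mmap rather than from the brk heap.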

Library function

    #include <sys/mman.h>

    void *mmap(void *start, size_t length, int prot, int flags, int fd, off_t offset);

Kernel (the mmap method in struct file_operations)

    int mmap(struct file *filp, struct vm_area_struct *vma)

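The three stages of an mmap memory mapping

- The process starts the mapping, and a virtual memory area for the mapping is created in its virtual address space;

- The kernel-side mmap is invoked, establishing the one-to-one correspondence between physical addresses and the process's virtual addresses;

- The process accesses the mapped range, which triggers a page fault, and the file contents are copied into physical memory.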

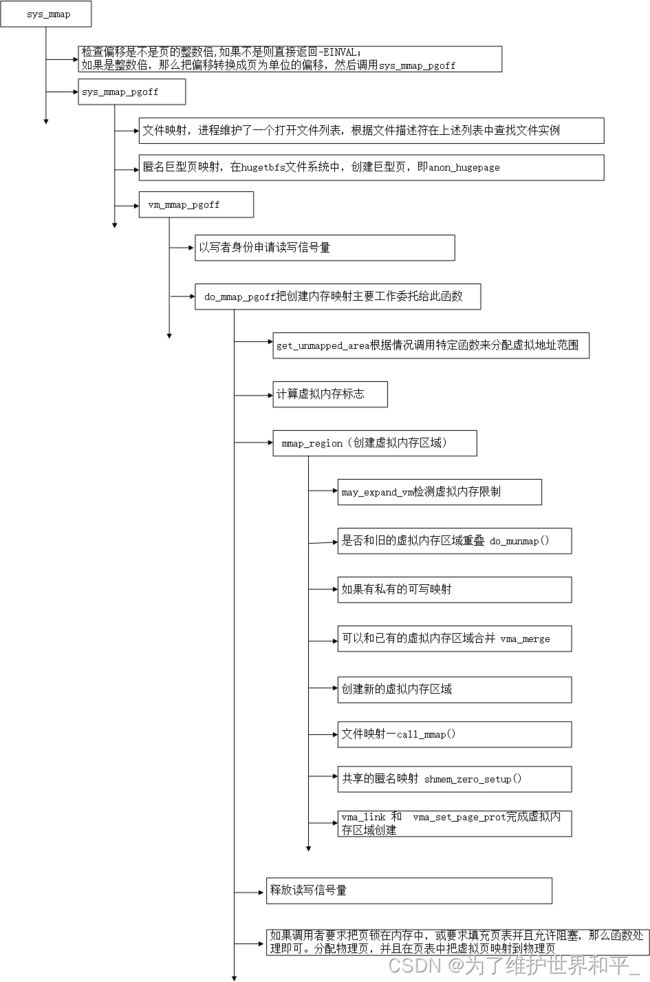

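The sys_mmap system call

Two system calls create a memory mapping: mmap and mmap2.

mmap takes the file offset in bytes, whereas mmap2 takes it in pages.

The arm64 architecture does not implement mmap2; it implements only the mmap system call.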
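The difference in offset units is simple arithmetic, sketched below. This assumes the 4096-byte unit that the mmap2 manual page documents for most architectures and a 32-bit offset argument; the function names are illustrative only.

```c
#include <assert.h>
#include <stdint.h>

#define MMAP2_UNIT_SHIFT 12     /* assumed unit: 4096 bytes = 1 << 12 */

/* An offset can be expressed in mmap2 units only if it is a multiple of 4096. */
int mmap2_offset_is_valid(uint64_t byte_offset)
{
    return (byte_offset & ((UINT64_C(1) << MMAP2_UNIT_SHIFT) - 1)) == 0;
}

/* Convert a byte offset (what mmap takes) into the unit count mmap2 takes. */
uint64_t mmap2_offset_from_bytes(uint64_t byte_offset)
{
    assert(mmap2_offset_is_valid(byte_offset));
    return byte_offset >> MMAP2_UNIT_SHIFT;
}

/* Number of bytes addressable through a 32-bit offset counted in 4096-byte
 * units: 2^32 * 2^12 = 2^44. */
uint64_t mmap2_reach_bytes(void)
{
    return (UINT64_C(1) << 32) << MMAP2_UNIT_SHIFT;
}
```

Counting in units rather than bytes is what lets a 32-bit offset argument reach far past 4 GB into a file, which is the usual motivation given for mmap2 on 32-bit systems.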

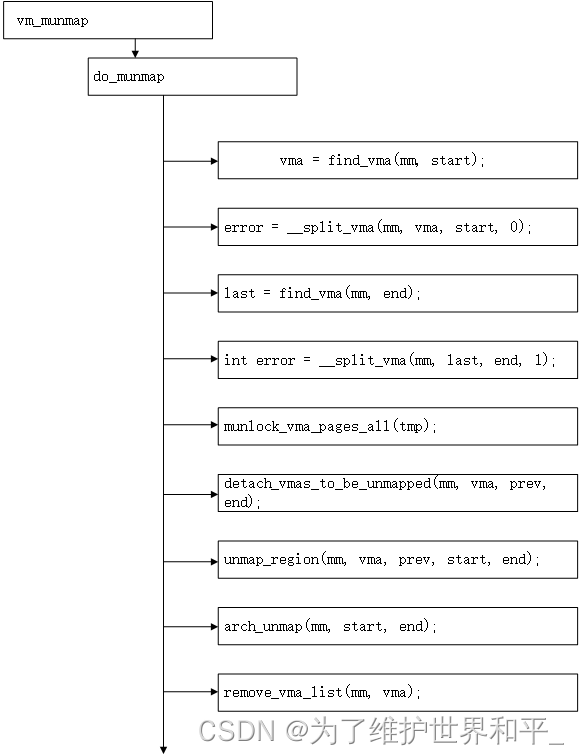

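The munmap system call

The munmap system call removes a memory mapping. It takes two arguments: the start address and the length. Most of its work is done in the kernel source file mm/mmap.c.

Step-by-step

- vma = find_vma(mm, start); // find the first virtual memory area (VMA) to remove, based on the start address

- If only part of that VMA is to be removed, split it

- Find the last VMA to remove, based on the end address

- If only part of the last VMA (last) is to be removed, split it

- For every target, if the VMA is locked in memory, call the function that unlocks it

- Remove all targets from the process's VMA linked list and red-black tree, and chain them into a temporary list

- For every target, remove its mappings from the process's page tables and flush them from the processor's TLB

- Perform architecture-specific handling (in the source listed below, arch_unmap() is called earlier, right after argument validation)

- Free all targets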
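The two arguments are validated before anything is unmapped. The sketch below probes that from user space on Linux; `map_one_page` and `munmap_errno` are hypothetical helpers written for this example.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Map one anonymous read/write page. Returns NULL on failure and stores the
 * page size in *page_out on success. */
char *map_one_page(size_t *page_out)
{
    long page = sysconf(_SC_PAGESIZE);
    assert(page > 0);

    char *p = mmap(NULL, (size_t)page, PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (p == MAP_FAILED)
        return NULL;
    *page_out = (size_t)page;
    return p;
}

/* Call munmap() and return 0 on success, or the errno value on failure. */
int munmap_errno(void *addr, size_t len)
{
    errno = 0;
    return munmap(addr, len) == 0 ? 0 : errno;
}
```

On Linux, a start address that is not page-aligned or a length of zero is rejected with `EINVAL`, while unmapping a range that contains no mapping is not treated as an error.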
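The splitting steps can be exercised from user space by unmapping the middle of a mapping. This is a minimal sketch assuming Linux; the helper names are invented here, and `msync` is used only as a probe, relying on it failing with `ENOMEM` when the range contains an unmapped page.

```c
#define _DEFAULT_SOURCE
#include <assert.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Return 1 if every page in [addr, addr + len) is mapped, 0 otherwise. */
int range_is_mapped(void *addr, size_t len)
{
    return msync(addr, len, MS_ASYNC) == 0;
}

/* Map three anonymous pages, then unmap only the middle one. The kernel has
 * to split the original area so that pages 0 and 2 stay mapped.
 * Returns the base address (NULL on failure) and the page size in *page_out. */
char *map_three_unmap_middle(size_t *page_out)
{
    long page = sysconf(_SC_PAGESIZE);
    assert(page > 0);

    size_t pg = (size_t)page;
    char *base = mmap(NULL, 3 * pg, PROT_READ | PROT_WRITE,
                      MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (base == MAP_FAILED)
        return NULL;

    if (munmap(base + pg, pg) != 0) {   /* start > vm_start and end < vm_end */
        munmap(base, 3 * pg);
        return NULL;
    }
    *page_out = pg;
    return base;
}
```

After the call, the first and third pages remain usable and the middle page is gone. A later `munmap` over the full three-page range cleans up both remaining pieces in one call.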

The source is as follows:

    int __do_munmap(struct mm_struct *mm, unsigned long start, size_t len,
                    struct list_head *uf, bool downgrade)
    {
        unsigned long end;
        struct vm_area_struct *vma, *prev, *last;

        if ((offset_in_page(start)) || start > TASK_SIZE || len > TASK_SIZE-start)
            return -EINVAL;

        len = PAGE_ALIGN(len);
        end = start + len;
        if (len == 0)
            return -EINVAL;

        /*
         * arch_unmap() might do unmaps itself.  It must be called
         * and finish any rbtree manipulation before this code
         * runs and also starts to manipulate the rbtree.
         */
        // Architecture-specific handling for removing the mapping
        arch_unmap(mm, start, end);

        /* Find the first overlapping VMA */
        // i.e. the first vm_area_struct instance to be removed
        vma = find_vma(mm, start);
        if (!vma)
            return 0;
        prev = vma->vm_prev;
        /* we have start < vma->vm_end */

        /* if it doesn't overlap, we have nothing.. */
        if (vma->vm_start >= end)
            return 0;

        /*
         * If we need to split any vma, do it now to save pain later.
         *
         * Note: mremap's move_vma VM_ACCOUNT handling assumes a partially
         * unmapped vm_area_struct will remain in use: so lower split_vma
         * places tmp vma above, and higher split_vma places tmp vma below.
         */
        if (start > vma->vm_start) {
            int error;

            /*
             * Make sure that map_count on return from munmap() will
             * not exceed its limit; but let map_count go just above
             * its limit temporarily, to help free resources as expected.
             */
            if (end < vma->vm_end && mm->map_count >= sysctl_max_map_count)
                return -ENOMEM;

            // Only part of this vma is being removed, so split it at start
            error = __split_vma(mm, vma, start, 0);
            if (error)
                return error;
            prev = vma;
        }

        /* Does it split the last one? */
        last = find_vma(mm, end);
        if (last && end > last->vm_start) {
            int error = __split_vma(mm, last, end, 1);
            if (error)
                return error;
        }
        vma = prev ? prev->vm_next : mm->mmap;

        if (unlikely(uf)) {
            /*
             * If userfaultfd_unmap_prep returns an error the vmas
             * will remain splitted, but userland will get a
             * highly unexpected error anyway. This is no
             * different than the case where the first of the two
             * __split_vma fails, but we don't undo the first
             * split, despite we could. This is unlikely enough
             * failure that it's not worth optimizing it for.
             */
            int error = userfaultfd_unmap_prep(vma, start, end, uf);
            if (error)
                return error;
        }

        /*
         * unlock any mlock()ed ranges before detaching vmas
         */
        // Unlock
        if (mm->locked_vm) {
            struct vm_area_struct *tmp = vma;
            while (tmp && tmp->vm_start < end) {
                if (tmp->vm_flags & VM_LOCKED) {
                    mm->locked_vm -= vma_pages(tmp);
                    munlock_vma_pages_all(tmp);
                }
                tmp = tmp->vm_next;
            }
        }

        /* Detach vmas from rbtree */
        // Remove the VMAs from the process's VMA linked list and red-black tree
        detach_vmas_to_be_unmapped(mm, vma, prev, end);

        if (downgrade)
            downgrade_write(&mm->mmap_sem);

        // Remove the page-table mappings of the removed areas and flush
        // the corresponding entries from the processor's TLB
        unmap_region(mm, vma, prev, start, end);

        /* Fix up all other VM information */
        // Free all of the removed VMAs
        remove_vma_list(mm, vma);

        return downgrade ? 1 : 0;
    }

    int do_munmap(struct mm_struct *mm, unsigned long start, size_t len,
                  struct list_head *uf)
    {
        return __do_munmap(mm, start, len, uf, false);
    }

    int vm_munmap(unsigned long addr, size_t len)
    {
        struct mm_struct *mm = current->mm;
        int ret;

        down_write(&mm->mmap_sem);
        ret = do_munmap(mm, addr, len, NULL);
        up_write(&mm->mmap_sem);
        return ret;
    }

References:

《深入理解Linux内核》 (Understanding the Linux Kernel)

《Linux内核深度解析》