python-ue4-metahuman-nerf: I Created a Digital Human!

Original article: https://zhuanlan.zhihu.com/p/561152870

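Contents

1. Preparation: Creating a MetaHuman Character

1.1 Creating a MetaHuman Character

1.2 Downloading the MetaHuman with Quixel Bridge

1.3 Exporting the MetaHuman to UE4

2. Rendering Multi-View Images of the MetaHuman in UE

2.1 How to Render a Video Manually in UE

2.2 Automated Rendering with Python

2.3 Full Code

3. Rendering Results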

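Since NeRF appeared at ECCV 2020, it has opened up countless research directions in computer vision, computer graphics, and 3D reconstruction; that single paper has kept a great many researchers and industry engineers busy. Training a Neural Radiance Field, however, is not easy. Start with the data: training a NeRF requires multi-view images of the scene together with the camera parameters of every image, and collecting such data is time-consuming and labor-intensive. So is there a way to simulate a set of NeRF training data virtually?

Yes, and in more than one way. This article shares a method that uses UE4 to render multi-view images with known camera parameters. Before starting, a word about MetaHuman, the subject we are going to render. Face research has long been a hot topic in computer vision and 3D reconstruction, and MetaHuman is Epic Games' high-fidelity digital human framework. We can use it to design the digital human we want, render multi-view images of it, and use them for further research.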

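1. Preparation: Creating a MetaHuman Character

Before rendering a MetaHuman in UE4, we first need to create a MetaHuman character and import it into UE4. The whole process breaks down into the following steps:

- Create a MetaHuman character with MetaHuman Creator. Alternatively, skip creating a new one and use one of the built-in characters directly.

- Download the MetaHuman character with the Quixel Bridge tool.

- Export the MetaHuman character from Quixel Bridge to UE4.

Each step is described below.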

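1.1 Creating a MetaHuman Character

Log in to the MetaHuman Creator website at https://metahuman.unrealengine.com/ and you will see the interface below. CREATE METAHUMAN on the left lists the default MetaHuman characters provided by the system. You can modify one of them directly to create a new MetaHuman, which is then stored under MY METAHUMAN on the left.

The editing interface is shown below. It is divided into three parts: face, hair, and body. Every detail within these three parts can be adjusted; feel free to experiment.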

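1.2 Downloading the MetaHuman with Quixel Bridge

The MetaHuman character we just created is stored on the official servers. Before working with it, we need to download it with the Quixel Bridge tool.

Quixel Bridge download link: https://quixel.com/bridge

Sign in with your Epic Games account when downloading; the same account is used throughout the rest of the process. After downloading and signing in, you will see the following interface:

In the left navigation bar, MetaHuman Presets and My MetaHuman are the default characters and the characters created in the previous step, respectively. Click the green download button in the upper-right corner to download a character locally.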

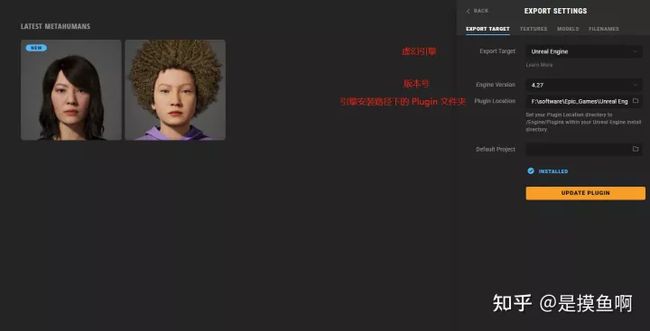

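1.3 Exporting the MetaHuman to UE4

Before exporting the MetaHuman character to UE4, we need to configure the target engine, the engine version, and the engine location, as shown below:

Then click the Export button in the upper-right corner of the MetaHuman character to export it to the UE engine (note: UE must be open during the export).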

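2. Rendering Multi-View Images of the MetaHuman in UE

Before using Python to automate the rendering of multi-view images, we first need to understand one thing: how do we render a video sequence manually in UE4? (Video and images are essentially the same; the images are just the individual frames of the video.)

2.1 How to Render a Video Manually in UE

Step 1: Import the MetaHuman into the UE4 scene and place it at a suitable location. Add a cine camera, CineCameraActor, to the scene, as shown below:

Step 2: Create a cinematic and add a level sequence, as shown below:

Step 3: Click Track, then Actor To Sequencer, then CineCameraActor to create a track for the CineCameraActor, as shown below:

Step 4: Add keyframes to the CineCameraActor track (the figure below adds 5 keyframes, each specifying the camera location and rotation) so that the camera moves as intended, as shown below:

Step 5: The video sequence is now complete. Click the option to render the movie to a video or image sequence to finish the export.

That is the whole process of rendering a MetaHuman in UE4. It takes only a few mouse clicks and is fairly simple. There is one serious problem, though: to render a multi-view MetaHuman dataset for NeRF training, we must know the camera pose of every image. In the process above, we know the exact pose only at the keyframes; the poses of all other frames are interpolated. Defining hundreds or thousands of keyframes by hand would be extremely tedious, so below we use Python to automate the process: the script defines the keyframes and specifies the camera motion.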
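To make the interpolation problem concrete, here is a small sketch in plain Python. It assumes simple linear interpolation between two keyframe positions (Sequencer has its own interpolation modes, so this is only an illustration), and `sphere_point` and `lerp` are hypothetical helper names. Both keyframes lie on a circle of radius 35, yet the interpolated midpoint does not, so the pose of an in-between frame cannot simply be read off the intended orbit.

```python
import math


def sphere_point(radius, yaw_deg):
    """Position on a horizontal circle of the given radius (pitch = 0)."""
    yaw = math.radians(yaw_deg)
    return (radius * math.cos(yaw), radius * math.sin(yaw), 0.0)


def lerp(a, b, t):
    """Linear interpolation between two 3D points (an assumed interpolation mode)."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


key_a = sphere_point(35, 0)    # keyframe 1
key_b = sphere_point(35, 90)   # keyframe 2
mid = lerp(key_a, key_b, 0.5)  # frame halfway between the two keyframes
mid_distance = math.sqrt(sum(c * c for c in mid))
print(mid_distance)  # smaller than 35: the midpoint has left the circle
```

Placing one keyframe on every rendered frame, as the script in the next section does, avoids relying on interpolated poses altogether.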

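2.2 Automated Rendering with Python

First, to summarize the manual rendering process from the previous section, it consists of four steps:

- Import the MetaHuman character and place it at a suitable location.

- Create a level sequence and a CineCameraActor track.

- Set the camera parameters, specify the camera location and rotation, and define how the camera moves.

- Export the rendered video or image sequence.

Of these four steps, only the first still has to be done by hand; the rest can all be implemented with Python scripts. Using the Python interface in UE4 requires some simple configuration; for details, see: https://www.bilibili.com/video/BV1PE411d7z8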

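Step 1: Import the character and position the face

Since I only want to render the MetaHuman's face, the rest of the body needs to be moved out of the camera's view. The body parts to move are: Torso, Legs, and Feet.

Next, position the MetaHuman. For convenience, place its Face at the coordinate origin (0, 0, 0), which makes it easier to specify the camera location and rotation later.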

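Step 2: Specify the camera motion: location and rotation

In this step, I want the camera to move on a sphere centered on the MetaHuman's face (that is, the coordinate origin). The camera extrinsics are set as follows:

- The distance from the camera to the origin (the sphere radius) is 35.

- The camera pitch takes the following angles: [-30, -15, 0, 15, 30].

- For each pitch, the yaw covers the interval [-180, 180], with one keyframe (one captured image) every 10 degrees.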
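The sampling scheme in the list above can be checked with a short sketch. It relies on one assumption not stated in the article: that the forward direction of a UE rotator with zero roll is (cos pitch · cos yaw, cos pitch · sin yaw, sin pitch). Under that assumption, placing the camera at minus the radius times the forward vector makes it look at the origin. `forward_vector` and `camera_position` are hypothetical helper names.

```python
import math

RADIUS = 35
PITCHES = [-30, -15, 0, 15, 30]
YAWS = list(range(-180, 181, 10))  # 37 values, both endpoints included


def forward_vector(pitch_deg, yaw_deg):
    """Assumed forward direction of a rotator with zero roll."""
    p, y = math.radians(pitch_deg), math.radians(yaw_deg)
    return (math.cos(p) * math.cos(y), math.cos(p) * math.sin(y), math.sin(p))


def camera_position(pitch_deg, yaw_deg, radius=RADIUS):
    """Place the camera so that its forward vector points at the origin."""
    fx, fy, fz = forward_vector(pitch_deg, yaw_deg)
    return (-radius * fx, -radius * fy, -radius * fz)


# One (position, (roll, pitch, yaw)) pair per view
poses = [(camera_position(p, y), (0, p, y)) for p in PITCHES for y in YAWS]
print(len(poses))  # 5 pitches * 37 yaws = 185
```

Note that yaw -180 and yaw 180 describe the same viewpoint, so each pitch ring contains one duplicated view; dropping one endpoint would leave 36 distinct views per ring.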

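The render settings are as follows:

- Camera field of view: 100

- Resolution of the rendered images: 512

- Delay between frames: 1.5

For the camera intrinsics, see the rendering code in Step 3.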
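For NeRF training, the field of view and the resolution are usually turned into a pinhole intrinsic matrix. The sketch below assumes an ideal pinhole camera with square pixels, the principal point at the image center, and the value 100 interpreted as the horizontal field of view in degrees. `intrinsics_from_fov` is a hypothetical helper, not part of the article's scripts.

```python
import math


def intrinsics_from_fov(fov_deg, width, height):
    """Build a 3x3 pinhole intrinsic matrix from a horizontal FOV (assumption)."""
    focal_px = (width / 2.0) / math.tan(math.radians(fov_deg) / 2.0)
    cx, cy = width / 2.0, height / 2.0  # principal point assumed at the center
    return [
        [focal_px, 0.0, cx],
        [0.0, focal_px, cy],
        [0.0, 0.0, 1.0],
    ]


K = intrinsics_from_fov(100, 512, 512)
print(K[0][0])  # focal length in pixels, roughly 214.8
```

Whether these numbers match the rendered images depends on the field of view the camera actually ends up with, so they are worth checking against the camera settings applied in the render script.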

Now we save the camera motion data (location and rotation) and the render settings to a .json file, which Step 3 reads directly at render time. The code is as follows:

import json

import numpy as np


# Compute the camera motion data (location and rotation)
def get_camera_transform():
    radius = 35
    camera_transform = []
    for y_pitch in range(-30, 31, 15):
        for z_yaw in range(-180, 181, 10):
            location_x = radius * np.cos(z_yaw / 180 * np.pi) * np.cos(y_pitch / 180 * np.pi)
            location_y = radius * np.sin(z_yaw / 180 * np.pi) * np.cos(y_pitch / 180 * np.pi)
            location_z = -radius * np.sin(y_pitch / 180 * np.pi)
            # Each entry is [x, y, z, roll, pitch, yaw]
            pose = [-location_x, -location_y, location_z, 0, y_pitch, z_yaw]
            print(pose)
            camera_transform.append(pose)
    return camera_transform


# Save the basic data to a .json file
def make_json():
    camera_transform = get_camera_transform()
    data_dict = {
        "film_fov": 100,
        "film_resolution": 512,
        "delay_every_frame": 1.5,
        "output_image_path": "F:/projects/Unreal projects/MetaHumanRender/RenderImages/Danielle/face_hair",
        "camera_data": {
            "camera_qRrq9DqY": {
                "type": "Camera",
                "camera_transform": camera_transform
            }
        }
    }
    data_json = json.dumps(data_dict, indent=4)
    with open("camera_data.json", 'w') as json_file:
        json_file.write(data_json)


if __name__ == '__main__':
    make_json()

Step 3: Read the camera motion data, create a level sequence, and add keyframes

First, read the .json file generated in the previous step to get the camera motion data.

import json

import unreal


def read_json_file(path):
    # Load the JSON file written in Step 2
    with open(path, "rb") as file:
        file_json = json.load(file)

    export_path = file_json['output_image_path']  # image export path
    film_fov = file_json.get('film_fov', None)  # camera film fov
    film_resolution = file_json.get("film_resolution", 512)  # camera film resolution
    delay_every_frame = file_json.get("delay_every_frame", 3.0)  # sequence run delay every frame
    camera_json = file_json['camera_data']  # camera data

    camera_transform_array = []
    camera_rotation_array = []
    camera_name_array = []
    for camera in camera_json:
        camera_transform = camera_json[camera]["camera_transform"]
        # Each pose is [x, y, z, roll, pitch, yaw]
        for index in range(len(camera_transform)):
            camera_transform_array.append(unreal.Transform(
                location=[camera_transform[index][0], camera_transform[index][1], camera_transform[index][2]],
                rotation=[camera_transform[index][3], camera_transform[index][4], camera_transform[index][5]],
                scale=[1.0, 1.0, 1.0]
            ))
            camera_rotation_array.append(unreal.Rotator(
                roll=camera_transform[index][3],
                pitch=camera_transform[index][4],
                yaw=camera_transform[index][5]
            ))
        # One name per camera
        camera_name_array.append(camera)
    return camera_transform_array, camera_rotation_array, camera_name_array, export_path, film_fov, film_resolution, delay_every_frame

Next, create the level sequence and add keyframes, one keyframe per camera pose. This part does the following; see the code for details:

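- Create a cine camera, CineCameraActor, in the scene and specify its location and rotation.

- Set the size of the film back or digital sensor.

- Set the lens parameters: minimum focal length, maximum focal length, and so on.

- Set the focus parameters: focal length, aperture, field of view, and so on.

- Bind the camera and add its keyframes, one keyframe per camera pose.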
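The sensor size, focal length, and field of view in the list above are tied together by the pinhole relation FOV = 2 · atan(sensor_width / (2 · focal_length)). The sketch below evaluates it for the values that appear in the article's code (a 60 mm sensor and a 45 mm focal length) and for the field of view of 100 stored in the JSON file. It assumes this relation describes the horizontal field of view of the cine camera; both helper names are hypothetical.

```python
import math


def fov_from_focal_length(sensor_width_mm, focal_length_mm):
    """Horizontal field of view in degrees for an ideal pinhole camera."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


def focal_length_from_fov(sensor_width_mm, fov_deg):
    """Focal length in millimeters that yields the requested horizontal FOV."""
    return sensor_width_mm / (2.0 * math.tan(math.radians(fov_deg) / 2.0))


print(fov_from_focal_length(60, 45))   # FOV implied by a 45 mm lens on a 60 mm sensor
print(focal_length_from_fov(60, 100))  # focal length that a 100 degree FOV would need
```

Under these assumptions, a 45 mm focal length on a 60 mm sensor gives roughly 67.4 degrees, while a 100 degree field of view would need a focal length of about 25.2 mm. It is therefore worth checking which value the rendered images actually use before computing intrinsics for NeRF.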

Code:

import math

import unreal


def create_sequence(asset_name, camera_transform_array, camera_rotation_array, camera_name_array, film_fov,
                    package_path='/Game/'):
    # create a sequence
    sequence = unreal.AssetToolsHelpers.get_asset_tools().create_asset(asset_name, package_path, unreal.LevelSequence,
                                                                       unreal.LevelSequenceFactoryNew())
    sequence.set_display_rate(unreal.FrameRate(numerator=1, denominator=1))
    sequence.set_playback_start_seconds(0)
    sequence.set_playback_end_seconds(len(camera_transform_array))

    # add a camera cut track
    camera_cut_track = sequence.add_master_track(unreal.MovieSceneCameraCutTrack)
    print(camera_transform_array[0], '=======================================================================')
    print(camera_rotation_array[0].get_editor_property("roll"))

    # Add keyframes: one camera and one camera cut section per camera name
    for index in range(0, len(camera_name_array)):
        camera_cut_section = camera_cut_track.add_section()
        camera_cut_section.set_start_frame_bounded(6 * index)
        camera_cut_section.set_end_frame_bounded(6 * index + len(camera_transform_array))
        camera_cut_section.set_start_frame_seconds(6 * index)
        camera_cut_section.set_end_frame_seconds(6 * index + len(camera_transform_array))

        # Create a cine camera actor in the level with an initial location and rotation
        camera_actor = unreal.EditorLevelLibrary().spawn_actor_from_class(unreal.CineCameraActor,
                                                                          unreal.Vector(0, 0, 0),
                                                                          unreal.Rotator(0, 0, 0))
        # Set the label of the CineCameraActor
        camera_actor.set_actor_label(camera_name_array[index])
        # Get the CineCamera component
        camera_component = camera_actor.get_cine_camera_component()
        # Computed from film_fov but not used anywhere below
        ratio = math.tan(film_fov / 360.0 * math.pi) * 2

        # step1: filmback settings
        filmback = camera_component.get_editor_property("filmback")
        # sensor_height: vertical size of the film or digital sensor, in millimeters
        filmback.set_editor_property("sensor_height", 60)
        # sensor_width: horizontal size of the film or digital sensor, in millimeters
        filmback.set_editor_property("sensor_width", 60)

        # step2: lens settings
        lens_settings = camera_component.get_editor_property("lens_settings")
        lens_settings.set_editor_property("min_focal_length", 4.0)
        lens_settings.set_editor_property("max_focal_length", 1000)
        lens_settings.set_editor_property("min_f_stop", 1.2)
        lens_settings.set_editor_property("max_f_stop", 500)
        print(lens_settings)

        # step3: focus settings
        focal_length = 45  # focal length
        current_aperture = 500  # aperture
        camera_component.set_editor_property("current_focal_length", focal_length)  # set the current focal length
        camera_component.set_editor_property("current_aperture", current_aperture)  # set the current aperture
        fov = camera_component.get_editor_property("field_of_view")
        print(camera_component.get_editor_property("current_focal_length"))
        print(camera_component.get_editor_property("current_aperture"))
        print(f"fov - {fov}")

        # add a binding for the camera
        camera_binding = sequence.add_possessable(camera_actor)
        transform_track = camera_binding.add_track(unreal.MovieScene3DTransformTrack)
        transform_section = transform_track.add_section()
        transform_section.set_start_frame_bounded(6 * index)
        transform_section.set_end_frame_bounded(6 * index + len(camera_transform_array))
        transform_section.set_start_frame_seconds(6 * index)
        transform_section.set_end_frame_seconds(6 * index + len(camera_transform_array))

        # get the channels for location x/y/z and rotation roll/pitch/yaw
        channels = transform_section.get_channels()
        channel_location_x = channels[0]
        channel_location_y = channels[1]
        channel_location_z = channels[2]
        channel_rotation_x = channels[3]
        channel_rotation_y = channels[4]
        channel_rotation_z = channels[5]

        # add one key per camera pose, at frame i
        for i in range(len(camera_transform_array)):
            camera_transform = camera_transform_array[i]
            camera_location_x = camera_transform.get_editor_property("translation").get_editor_property("x")
            camera_location_y = camera_transform.get_editor_property("translation").get_editor_property("y")
            camera_location_z = camera_transform.get_editor_property("translation").get_editor_property("z")
            camera_rotation = camera_rotation_array[i]
            camera_rotate_roll = camera_rotation.get_editor_property("roll")
            camera_rotate_pitch = camera_rotation.get_editor_property("pitch")
            camera_rotate_yaw = camera_rotation.get_editor_property("yaw")

            new_time = unreal.FrameNumber(value=i)
            channel_location_x.add_key(new_time, camera_location_x, 0.0)
            channel_location_y.add_key(new_time, camera_location_y, 0.0)
            channel_location_z.add_key(new_time, camera_location_z, 0.0)
            channel_rotation_x.add_key(new_time, camera_rotate_roll, 0.0)
            channel_rotation_y.add_key(new_time, camera_rotate_pitch, 0.0)
            channel_rotation_z.add_key(new_time, camera_rotate_yaw, 0.0)

        # add the binding for the camera cut section
        camera_binding_id = sequence.make_binding_id(camera_binding, unreal.MovieSceneObjectBindingSpace.LOCAL)
        camera_cut_section.set_camera_binding_id(camera_binding_id)

    # save sequence asset
    unreal.EditorAssetLibrary.save_loaded_asset(sequence, False)
    return sequence

Step 4: Render the level sequence to a video or image sequence

Render the level sequence created above to a movie sequence. This part mainly sets rendering parameters and corresponds to Step 5 of the manual process; for the meaning of each parameter, see the official Unreal documentation. The code is as follows:

import unreal


# sequence movie
# Note: sequence_asset_path is the module-level variable defined in the full script in section 2.3.
def render_sequence_to_movie(export_path, film_resolution, delay_every_frame, on_finished_callback):
    # 1) Create an instance of our UAutomatedLevelSequenceCapture and override all of the settings on it. This class is currently
    # set as a config class so settings will leak between the Unreal Sequencer Render-to-Movie UI and this object. To work around
    # this, we set every setting via the script so that no changes the user has made via the UI will affect the script version.
    # The user's UI settings will be reset as an unfortunate side effect of this.
    capture_settings = unreal.AutomatedLevelSequenceCapture()

    # Set all POD settings on the UMovieSceneCapture
    capture_settings.settings.output_directory = unreal.DirectoryPath(export_path)

    # If your game mode is implemented in Blueprint, load_asset(...) is going to return you the C++ type ('Blueprint') and not what the BP says it inherits from.
    # Instead, because game_mode_override is a TSubclassOf we can use unreal.load_class to get the UClass which is implicitly convertible.
    # ie: capture_settings.settings.game_mode_override = unreal.load_class(None, "/Game/AI/TestingSupport/AITestingGameMode.AITestingGameMode_C")
    capture_settings.settings.game_mode_override = None
    capture_settings.settings.output_format = "{camera}" + "_{frame}"
    capture_settings.settings.overwrite_existing = False
    capture_settings.settings.use_relative_frame_numbers = False
    capture_settings.settings.handle_frames = 0
    capture_settings.settings.zero_pad_frame_numbers = 4

    # If you wish to override the output framerate you can use these two lines, otherwise the framerate will be derived from the sequence being rendered
    capture_settings.settings.use_custom_frame_rate = False
    # capture_settings.settings.custom_frame_rate = unreal.FrameRate(24,1)
    capture_settings.settings.resolution.res_x = film_resolution
    capture_settings.settings.resolution.res_y = film_resolution
    capture_settings.settings.enable_texture_streaming = False
    capture_settings.settings.cinematic_engine_scalability = True
    capture_settings.settings.cinematic_mode = True
    capture_settings.settings.allow_movement = False  # Requires cinematic_mode = True
    capture_settings.settings.allow_turning = False  # Requires cinematic_mode = True
    capture_settings.settings.show_player = False  # Requires cinematic_mode = True
    capture_settings.settings.show_hud = False  # Requires cinematic_mode = True
    capture_settings.use_separate_process = False
    capture_settings.close_editor_when_capture_starts = False  # Requires use_separate_process = True
    capture_settings.additional_command_line_arguments = "-NOSCREENMESSAGES"  # Requires use_separate_process = True
    capture_settings.inherited_command_line_arguments = ""  # Requires use_separate_process = True

    # Set all the POD settings on UAutomatedLevelSequenceCapture
    capture_settings.use_custom_start_frame = False  # If False, the system will automatically calculate the start based on sequence content
    capture_settings.use_custom_end_frame = False  # If False, the system will automatically calculate the end based on sequence content
    capture_settings.custom_start_frame = unreal.FrameNumber(0)  # Requires use_custom_start_frame = True
    capture_settings.custom_end_frame = unreal.FrameNumber(0)  # Requires use_custom_end_frame = True
    capture_settings.warm_up_frame_count = 0
    capture_settings.delay_before_warm_up = 0
    capture_settings.delay_before_shot_warm_up = 0
    capture_settings.delay_every_frame = delay_every_frame
    capture_settings.write_edit_decision_list = True

    # Tell the capture settings which level sequence to render with these settings. The asset does not need to be loaded,
    # as we're only capturing the path to it and when the PIE instance is created it will load the specified asset.
    # If you only had a reference to the level sequence, you could use "unreal.SoftObjectPath(mysequence.get_path_name())"
    capture_settings.level_sequence_asset = unreal.SoftObjectPath(sequence_asset_path)

    # To configure the video output we need to tell the capture settings which capture protocol to use. The various supported
    # capture protocols can be found by setting the Unreal Content Browser to "Engine C++ Classes" and filtering for "Protocol"
    # ie: CompositionGraphCaptureProtocol, ImageSequenceProtocol_PNG, etc. Do note that some of the listed protocols are not intended
    # to be used directly.
    # Right click on a Protocol and use "Copy Reference" and then remove the extra formatting around it. ie:
    # Class'/Script/MovieSceneCapture.ImageSequenceProtocol_PNG' gets transformed into "/Script/MovieSceneCapture.ImageSequenceProtocol_PNG"
    capture_settings.set_image_capture_protocol_type(
        unreal.load_class(None, "/Script/MovieSceneCapture.ImageSequenceProtocol_PNG"))

    # After we have set the capture protocol to a soft class path we can start editing the settings for the instance of the protocol that is internally created.
    capture_settings.get_image_capture_protocol().compression_quality = 85

    # Finally invoke Sequencer's Render to Movie functionality. This will examine the specified settings object and either construct a new PIE instance to render in,
    # or create and launch a new process (optionally shutting down your editor).
    unreal.SequencerTools.render_movie(capture_settings, on_finished_callback)

2.3 Full Code

import json
import math
import os

import unreal

# sequence asset path
sequence_asset_path = '/Game/Render_Sequence.Render_Sequence'


# read json file
def read_json_file(path):
    with open(path, "rb") as file:
        file_json = json.load(file)

    export_path = file_json['output_image_path']  # image export path
    film_fov = file_json.get('film_fov', None)  # camera film fov
    film_resolution = file_json.get("film_resolution", 512)  # camera film resolution
    delay_every_frame = file_json.get("delay_every_frame", 3.0)  # sequence run delay every frame
    camera_json = file_json['camera_data']  # camera data

    camera_transform_array = []
    camera_rotation_array = []
    camera_name_array = []
    for camera in camera_json:
        camera_transform = camera_json[camera]["camera_transform"]
        # Each pose is [x, y, z, roll, pitch, yaw]
        for index in range(len(camera_transform)):
            camera_transform_array.append(unreal.Transform(
                location=[camera_transform[index][0], camera_transform[index][1], camera_transform[index][2]],
                rotation=[camera_transform[index][3], camera_transform[index][4], camera_transform[index][5]],
                scale=[1.0, 1.0, 1.0]
            ))
            camera_rotation_array.append(unreal.Rotator(
                roll=camera_transform[index][3],
                pitch=camera_transform[index][4],
                yaw=camera_transform[index][5]
            ))
        # One name per camera
        camera_name_array.append(camera)
    return camera_transform_array, camera_rotation_array, camera_name_array, export_path, film_fov, film_resolution, delay_every_frame


def create_sequence(asset_name, camera_transform_array, camera_rotation_array, camera_name_array, film_fov,
                    package_path='/Game/'):
    # create a sequence
    sequence = unreal.AssetToolsHelpers.get_asset_tools().create_asset(asset_name, package_path, unreal.LevelSequence,
                                                                       unreal.LevelSequenceFactoryNew())
    sequence.set_display_rate(unreal.FrameRate(numerator=1, denominator=1))
    sequence.set_playback_start_seconds(0)
    sequence.set_playback_end_seconds(len(camera_transform_array))

    # add a camera cut track
    camera_cut_track = sequence.add_master_track(unreal.MovieSceneCameraCutTrack)
    print(camera_transform_array[0], '=======================================================================')
    print(camera_rotation_array[0].get_editor_property("roll"))

    # Add keyframes: one camera and one camera cut section per camera name
    for index in range(0, len(camera_name_array)):
        camera_cut_section = camera_cut_track.add_section()
        camera_cut_section.set_start_frame_bounded(6 * index)
        camera_cut_section.set_end_frame_bounded(6 * index + len(camera_transform_array))
        camera_cut_section.set_start_frame_seconds(6 * index)
        camera_cut_section.set_end_frame_seconds(6 * index + len(camera_transform_array))

        # Create a cine camera actor in the level with an initial location and rotation
        camera_actor = unreal.EditorLevelLibrary().spawn_actor_from_class(unreal.CineCameraActor,
                                                                          unreal.Vector(0, 0, 0),
                                                                          unreal.Rotator(0, 0, 0))
        # Set the label of the CineCameraActor
        camera_actor.set_actor_label(camera_name_array[index])
        # Get the CineCamera component
        camera_component = camera_actor.get_cine_camera_component()
        # Computed from film_fov but not used anywhere below
        ratio = math.tan(film_fov / 360.0 * math.pi) * 2

        # step1: filmback settings
        filmback = camera_component.get_editor_property("filmback")
        # sensor_height: vertical size of the film or digital sensor, in millimeters
        filmback.set_editor_property("sensor_height", 60)
        # sensor_width: horizontal size of the film or digital sensor, in millimeters
        filmback.set_editor_property("sensor_width", 60)

        # step2: lens settings
        lens_settings = camera_component.get_editor_property("lens_settings")
        lens_settings.set_editor_property("min_focal_length", 4.0)
        lens_settings.set_editor_property("max_focal_length", 1000)
        lens_settings.set_editor_property("min_f_stop", 1.2)
        lens_settings.set_editor_property("max_f_stop", 500)
        print(lens_settings)

        # step3: focus settings
        focal_length = 45  # focal length
        current_aperture = 500  # aperture
        camera_component.set_editor_property("current_focal_length", focal_length)  # set the current focal length
        camera_component.set_editor_property("current_aperture", current_aperture)  # set the current aperture
        fov = camera_component.get_editor_property("field_of_view")
        print(camera_component.get_editor_property("current_focal_length"))
        print(camera_component.get_editor_property("current_aperture"))
        print(f"fov - {fov}")

        # add a binding for the camera
        camera_binding = sequence.add_possessable(camera_actor)
        transform_track = camera_binding.add_track(unreal.MovieScene3DTransformTrack)
        transform_section = transform_track.add_section()
        transform_section.set_start_frame_bounded(6 * index)
        transform_section.set_end_frame_bounded(6 * index + len(camera_transform_array))
        transform_section.set_start_frame_seconds(6 * index)
        transform_section.set_end_frame_seconds(6 * index + len(camera_transform_array))

        # get the channels for location x/y/z and rotation roll/pitch/yaw
        channels = transform_section.get_channels()
        channel_location_x = channels[0]
        channel_location_y = channels[1]
        channel_location_z = channels[2]
        channel_rotation_x = channels[3]
        channel_rotation_y = channels[4]
        channel_rotation_z = channels[5]

        # add one key per camera pose, at frame i
        for i in range(len(camera_transform_array)):
            camera_transform = camera_transform_array[i]
            camera_location_x = camera_transform.get_editor_property("translation").get_editor_property("x")
            camera_location_y = camera_transform.get_editor_property("translation").get_editor_property("y")
            camera_location_z = camera_transform.get_editor_property("translation").get_editor_property("z")
            camera_rotation = camera_rotation_array[i]
            camera_rotate_roll = camera_rotation.get_editor_property("roll")
            camera_rotate_pitch = camera_rotation.get_editor_property("pitch")
            camera_rotate_yaw = camera_rotation.get_editor_property("yaw")

            new_time = unreal.FrameNumber(value=i)
            channel_location_x.add_key(new_time, camera_location_x, 0.0)
            channel_location_y.add_key(new_time, camera_location_y, 0.0)
            channel_location_z.add_key(new_time, camera_location_z, 0.0)
            channel_rotation_x.add_key(new_time, camera_rotate_roll, 0.0)
            channel_rotation_y.add_key(new_time, camera_rotate_pitch, 0.0)
            channel_rotation_z.add_key(new_time, camera_rotate_yaw, 0.0)

        # add the binding for the camera cut section
        camera_binding_id = sequence.make_binding_id(camera_binding, unreal.MovieSceneObjectBindingSpace.LOCAL)
        camera_cut_section.set_camera_binding_id(camera_binding_id)

    # save sequence asset
    unreal.EditorAssetLibrary.save_loaded_asset(sequence, False)
    return sequence


# sequence movie
def render_sequence_to_movie(export_path, film_resolution, delay_every_frame, on_finished_callback):
    # 1) Create an instance of our UAutomatedLevelSequenceCapture and override all of the settings on it. This class is currently
    # set as a config class so settings will leak between the Unreal Sequencer Render-to-Movie UI and this object. To work around
    # this, we set every setting via the script so that no changes the user has made via the UI will affect the script version.
    # The user's UI settings will be reset as an unfortunate side effect of this.
    capture_settings = unreal.AutomatedLevelSequenceCapture()

    # Set all POD settings on the UMovieSceneCapture
    capture_settings.settings.output_directory = unreal.DirectoryPath(export_path)

    # If your game mode is implemented in Blueprint, load_asset(...) is going to return you the C++ type ('Blueprint') and not what the BP says it inherits from.
    # Instead, because game_mode_override is a TSubclassOf we can use unreal.load_class to get the UClass which is implicitly convertible.
    # ie: capture_settings.settings.game_mode_override = unreal.load_class(None, "/Game/AI/TestingSupport/AITestingGameMode.AITestingGameMode_C")
    capture_settings.settings.game_mode_override = None
    capture_settings.settings.output_format = "{camera}" + "_{frame}"
    capture_settings.settings.overwrite_existing = False
    capture_settings.settings.use_relative_frame_numbers = False
    capture_settings.settings.handle_frames = 0
    capture_settings.settings.zero_pad_frame_numbers = 4

    # If you wish to override the output framerate you can use these two lines, otherwise the framerate will be derived from the sequence being rendered
    capture_settings.settings.use_custom_frame_rate = False
    # capture_settings.settings.custom_frame_rate = unreal.FrameRate(24,1)
    capture_settings.settings.resolution.res_x = film_resolution
    capture_settings.settings.resolution.res_y = film_resolution
    capture_settings.settings.enable_texture_streaming = False
    capture_settings.settings.cinematic_engine_scalability = True
    capture_settings.settings.cinematic_mode = True
    capture_settings.settings.allow_movement = False  # Requires cinematic_mode = True
    capture_settings.settings.allow_turning = False  # Requires cinematic_mode = True
    capture_settings.settings.show_player = False  # Requires cinematic_mode = True
    capture_settings.settings.show_hud = False  # Requires cinematic_mode = True
    capture_settings.use_separate_process = False
    capture_settings.close_editor_when_capture_starts = False  # Requires use_separate_process = True
    capture_settings.additional_command_line_arguments = "-NOSCREENMESSAGES"  # Requires use_separate_process = True
    capture_settings.inherited_command_line_arguments = ""  # Requires use_separate_process = True

    # Set all the POD settings on UAutomatedLevelSequenceCapture
    capture_settings.use_custom_start_frame = False  # If False, the system will automatically calculate the start based on sequence content
    capture_settings.use_custom_end_frame = False  # If False, the system will automatically calculate the end based on sequence content
    capture_settings.custom_start_frame = unreal.FrameNumber(0)  # Requires use_custom_start_frame = True
    capture_settings.custom_end_frame = unreal.FrameNumber(0)  # Requires use_custom_end_frame = True
    capture_settings.warm_up_frame_count = 0
    capture_settings.delay_before_warm_up = 0
    capture_settings.delay_before_shot_warm_up = 0
    capture_settings.delay_every_frame = delay_every_frame
    capture_settings.write_edit_decision_list = True

    # Tell the capture settings which level sequence to render with these settings. The asset does not need to be loaded,
    # as we're only capturing the path to it and when the PIE instance is created it will load the specified asset.
    # If you only had a reference to the level sequence, you could use "unreal.SoftObjectPath(mysequence.get_path_name())"
    capture_settings.level_sequence_asset = unreal.SoftObjectPath(sequence_asset_path)

    # To configure the video output we need to tell the capture settings which capture protocol to use. The various supported
    # capture protocols can be found by setting the Unreal Content Browser to "Engine C++ Classes" and filtering for "Protocol"
    # ie: CompositionGraphCaptureProtocol, ImageSequenceProtocol_PNG, etc. Do note that some of the listed protocols are not intended
    # to be used directly.
    # Right click on a Protocol and use "Copy Reference" and then remove the extra formatting around it. ie:
    # Class'/Script/MovieSceneCapture.ImageSequenceProtocol_PNG' gets transformed into "/Script/MovieSceneCapture.ImageSequenceProtocol_PNG"
    capture_settings.set_image_capture_protocol_type(
        unreal.load_class(None, "/Script/MovieSceneCapture.ImageSequenceProtocol_PNG"))

    # After we have set the capture protocol to a soft class path we can start editing the settings for the instance of the protocol that is internally created.
    capture_settings.get_image_capture_protocol().compression_quality = 85

    # Finally invoke Sequencer's Render to Movie functionality. This will examine the specified settings object and either construct a new PIE instance to render in,
    # or create and launch a new process (optionally shutting down your editor).
    unreal.SequencerTools.render_movie(capture_settings, on_finished_callback)


# callback invoked when the sequence render ends
def on_render_movie_finished(success):
    unreal.log('on_render_movie_finished is called')
    if success:
        unreal.log('---end LevelSequenceTask')


# check whether the sequence asset already exists and remove it if so
def check_sequence_asset_exist(root_dir):
    # 1) the .uasset file on disk
    sequence_path = os.path.join(root_dir, 'Content', 'Render_Sequence.uasset')
    if os.path.exists(sequence_path):
        unreal.log('---sequence exists on disk---')
        os.remove(sequence_path)
    else:
        unreal.log('---sequence does not exist on disk---')

    # 2) the asset as seen by the editor asset library
    if unreal.EditorAssetLibrary.does_asset_exist(sequence_asset_path):
        unreal.log('---sequence asset exists---')
        unreal.EditorAssetLibrary.delete_asset(sequence_asset_path)
    else:
        unreal.log('---sequence asset does not exist---')

    # 3) the asset as seen by the asset registry
    sequence_asset_data = unreal.AssetRegistryHelpers.get_asset_registry().get_asset_by_object_path(sequence_asset_path)
    if sequence_asset_data:
        unreal.log('---sequence exists in content---')
        sequence_asset = unreal.AssetData.get_asset(sequence_asset_data)
        unreal.EditorAssetLibrary.delete_loaded_asset(sequence_asset)
    else:
        unreal.log('---sequence does not exist in content---')


def main():
    # bind event
    on_finished_callback = unreal.OnRenderMovieStopped()
    on_finished_callback.bind_callable(on_render_movie_finished)

    # get json file
    root_dir = unreal.SystemLibrary.get_project_directory()
    json_path = os.path.join(root_dir, 'RawData', 'camera_data.json')

    # get json data
    camera_transform_array, camera_rotation_array, camera_name_array, export_path, film_fov, film_resolution, delay_every_frame = read_json_file(
        json_path)

    # remove any existing sequence asset
    check_sequence_asset_exist(root_dir)

    # create sequence
    create_sequence('Render_Sequence', camera_transform_array, camera_rotation_array, camera_name_array, film_fov)

    # run sequence
    render_sequence_to_movie(export_path, film_resolution, delay_every_frame, on_finished_callback)


# Entry point: the original listing defines main() without calling it
main()

3. Rendering Results

The figure above shows the rendered multi-view images; the camera pose of each image is known exactly.
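Because each keyframe index corresponds to one entry in `camera_transform`, the rendered images can be paired with their poses after the fact. The sketch below assumes the output files are named `<camera name>_<4-digit frame>.png`, following the `"{camera}_{frame}"` output format and the zero padding of 4 in the render settings, and that frame i holds pose i. Both assumptions should be checked against the actual output folder. `pair_images_with_poses` is a hypothetical helper.

```python
def pair_images_with_poses(camera_name, camera_transform, extension="png"):
    """Pair an assumed image file name with each [x, y, z, roll, pitch, yaw] pose."""
    pairs = []
    for frame, pose in enumerate(camera_transform):
        file_name = f"{camera_name}_{frame:04d}.{extension}"  # assumed naming pattern
        pairs.append({
            "file": file_name,
            "location": pose[:3],
            "rotation_rpy": pose[3:],
        })
    return pairs


# Two example poses in the same layout as camera_data.json
example = pair_images_with_poses(
    "camera_qRrq9DqY",
    [[-35.0, 0.0, 0.0, 0, 0, 0], [0.0, -35.0, 0.0, 0, 0, 90]],
)
print(example[1]["file"])  # camera_qRrq9DqY_0001.png
```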

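This concludes the whole process of automating rendering in UE4 with Python. One small question is left open:

The camera pose data from UE4 cannot be used directly. How can it be converted into the camera pose format that NeRF expects? Feel free to explore this yourself; a later article will cover it.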
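As one possible starting point for that exploration, here is a hedged sketch, not the author's method. It assumes UE's left-handed world (X forward, Y right, Z up), a rotator whose forward vector is (cos pitch · cos yaw, cos pitch · sin yaw, sin pitch), zero roll as in the poses generated above, and a target camera-to-world matrix in the OpenGL-style convention used by the original NeRF code (camera X right, Y up, looking along negative Z). The world is made right-handed by flipping the Y axis. `ue_pose_to_c2w` is a hypothetical name.

```python
import math


def ue_pose_to_c2w(location, pitch_deg, yaw_deg):
    """Convert a UE location and (pitch, yaw) into a 4x4 camera-to-world matrix.

    Assumptions: roll is zero, and the output uses a right-handed world
    obtained by negating UE's Y axis.
    """
    p, y = math.radians(pitch_deg), math.radians(yaw_deg)
    # Camera axes expressed in the UE world frame (roll = 0)
    forward = (math.cos(p) * math.cos(y), math.cos(p) * math.sin(y), math.sin(p))
    right = (-math.sin(y), math.cos(y), 0.0)
    up = (-math.sin(p) * math.cos(y), -math.sin(p) * math.sin(y), math.cos(p))

    def flip_y(v):
        # Left-handed to right-handed world by negating Y
        return (v[0], -v[1], v[2])

    r, u, f, t = flip_y(right), flip_y(up), flip_y(forward), flip_y(location)
    # Columns: camera X (right), camera Y (up), camera Z (backward), position
    return [
        [r[0], u[0], -f[0], t[0]],
        [r[1], u[1], -f[1], t[1]],
        [r[2], u[2], -f[2], t[2]],
        [0.0, 0.0, 0.0, 1.0],
    ]


c2w = ue_pose_to_c2w((-35.0, 0.0, 0.0), 0, 0)
view_direction = [-c2w[i][2] for i in range(3)]  # the camera looks along its -Z axis
print(view_direction)  # points from the camera toward the origin
```

UE distances are in engine units, so the translation usually needs rescaling to match the scene bounds the NeRF implementation expects. It is also worth verifying the result against a few rendered views before relying on it.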