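Converting BEVFormer to ONNX and optimizing it

All notes below come from experiments on the bevformer_tiny variant, and temporal input is not considered.

I referred to https://github.com/DerryHub/BEVFormer_tensorrt, but that project targets TensorRT deployment and defines some custom operators, which is not what I need, so I tried doing the ONNX conversion myself.

1. Environment setup

The ONNX conversion is done directly in the environment officially recommended by BEVFormer: https://github.com/fundamentalvision/BEVFormer/blob/master/docs/install.md

2. Preparation

Add a function to mmdetection3d/BEVFormer/projects/mmdet3d_plugin/bevformer/apis/test.py: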

def custom_multi_gpu_test_onnx(model, data_loader,tmpdir=None, gpu_collect=False):

"""Test model with multiple gpus.

This method tests model with multiple gpus and collects the results

under two different modes: gpu and cpu modes. By setting 'gpu_collect=True'

it encodes results to gpu tensors and use gpu communication for results

collection. On cpu mode it saves the results on different gpus to 'tmpdir'

and collects them by the rank 0 worker.

Args:

model (nn.Module): Model to be tested.

data_loader (nn.Dataloader): Pytorch data loader.

tmpdir (str): Path of directory to save the temporary results from

different gpus under cpu mode.

gpu_collect (bool): Option to use either gpu or cpu to collect results.

Returns:

list: The prediction results.

"""

model.eval()

bbox_results = []

mask_results = []

dataset = data_loader.dataset

rank, world_size = get_dist_info()

if rank == 0:

prog_bar = mmcv.ProgressBar(len(dataset))

time.sleep(2) # This line can prevent deadlock problem in some cases.

have_mask = False

repetitions = 100

for i, data in enumerate(data_loader):

with torch.no_grad():

inputs = {}

inputs['img'] = data['img'][0].data[0].float().unsqueeze(0) #torch.randn(6,3,736,1280)#.cuda()

#inputs['return_loss'] = False

inputs['img_metas'] = [1]

inputs['img_metas'][0] = [1]

inputs['img_metas'][0][0] = {}

inputs['img_metas'][0][0]['can_bus'] = torch.from_numpy(data['img_metas'][0].data[0][0]['can_bus']).float()#torch.randn(18)#.cuda()

inputs['img_metas'][0][0]['lidar2img'] = torch.from_numpy(np.array(data['img_metas'][0].data[0][0]['lidar2img'])).float().unsqueeze(0)#torch.randn(1,6,4,4)#.cuda()

inputs['img_metas'][0][0]['scene_token'] = 'fcbccedd61424f1b85dcbf8f897f9754'

inputs['img_metas'][0][0]['img_shape'] = torch.Tensor([[480,800]])

output_file = '/×××/BEVformer/mmdetection3d/BEVFormer/J5/bevformer_tiny.onnx'

torch.onnx.export(

model,

inputs,

output_file,

export_params=True,

keep_initializers_as_inputs=True,

do_constant_folding=False,

verbose=False,

opset_version=11,

)

print(f"ONNX file has been saved in {output_file}")

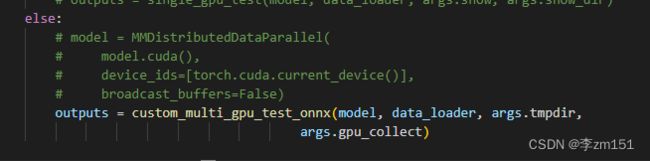

return {0:'1'} 然后使用mmdetection3d/BEVFormer/tools/test.py这个用来测试的脚本进行转onnx操作,把233行的custom_multi_gpu_test改成上面定义的函数custom_multi_gpu_test_onnx,我是在cpu上操作的,所以把上面分布式操作去掉了,如图所示

按照如下图修改配置信息,方便调试

3. Debugging

Error 1: KeyError: 'RANK'

Solution: open dist_utils.py and modify it as shown below.

    def _init_dist_pytorch(backend, **kwargs):
        # TODO: use local_rank instead of rank % num_gpus
        # Hard-code single-process settings so that no launcher is required.
        os.environ['RANK'] = '0'
        os.environ['MASTER_ADDR'] = 'localhost'
        os.environ['MASTER_PORT'] = '5678'
        rank = int(os.environ['RANK'])
        num_gpus = torch.cuda.device_count()
        torch.cuda.set_device(rank % num_gpus)
        dist.init_process_group(backend=backend, world_size=int(1), **kwargs)

Error 2: AttributeError: 'NoneType' object has no attribute 'size'

The cause is that the forward input of the BEVFormer model is unusual: it is not a plain dict or list. To make the ONNX export easier, rewrite it as follows:

(1) In mmdetection3d/BEVFormer/projects/mmdet3d_plugin/bevformer/detectors/bevformer.py, change the forward function at line 143 to:

    def forward(self, input):  # return_loss=True,
        """Calls either forward_train or forward_test depending on whether
        return_loss=True.

        Note this setting will change the expected inputs. When
        `return_loss=True`, img and img_metas are single-nested (i.e.
        torch.Tensor and list[dict]), and when `return_loss=False`, img and
        img_metas should be double nested (i.e. list[torch.Tensor],
        list[list[dict]]), with the outer list indicating test time
        augmentations.
        """
        # return_loss = input['return_loss']
        # if return_loss:
        #     return self.forward_train(**kwargs)
        # else:
        #     input['rescale'] = True
        # return_loss=False, rescale=True,
        return self.forward_test(input['img_metas'], input['img'])

(2) Remove **kwargs from the definition of forward_test, and also remove **kwargs from the arguments passed to self.simple_test().

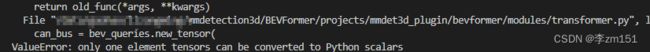
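To make the nesting that the rewritten forward expects easier to see, here is a minimal sketch that builds the same structure in one step. The helper name build_export_inputs and the placeholder string values are hypothetical; in the real export each value is a tensor, as in custom_multi_gpu_test_onnx above.

```python
from typing import Any, Dict


def build_export_inputs(img: Any, can_bus: Any, lidar2img: Any,
                        img_shape: Any, scene_token: str) -> Dict[str, Any]:
    """Pack the export inputs into the single dict that forward(input) reads.

    Hypothetical helper: img_metas is double nested (list[list[dict]]),
    which is the layout forward_test expects.
    """
    meta = {
        "can_bus": can_bus,
        "lidar2img": lidar2img,
        "scene_token": scene_token,
        "img_shape": img_shape,
    }
    return {"img": img, "img_metas": [[meta]]}


# Placeholder strings stand in for the tensors used in the real export.
inputs = build_export_inputs(
    img="IMG", can_bus="CAN_BUS", lidar2img="LIDAR2IMG",
    img_shape="IMG_SHAPE", scene_token="TOKEN",
)
print(sorted(inputs["img_metas"][0][0]))
```

With this layout, forward only has to read input['img_metas'] and input['img'], which is what the modified function does.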

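Error 3: ValueError: only one element tensors can be converted to Python scalars

Cause: BEVFormer originally receives the 'can_bus' parameter as a numpy array, but for the export every variable should be a tensor (the export inputs are created as tensors, for example with torch.randn()), so make the following change:

Change the get_bev_features function in bevformer/modules/transformer.py to: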

    def get_bev_features(
            self,
            mlvl_feats,
            bev_queries,
            bev_h,
            bev_w,
            grid_length=[0.512, 0.512],
            bev_pos=None,
            prev_bev=None,
            **kwargs):
        """
        obtain bev features.
        """
        bs = mlvl_feats[0].size(0)
        bev_queries = bev_queries.unsqueeze(1).repeat(1, bs, 1)
        bev_pos = bev_pos.flatten(2).permute(2, 0, 1)

        # obtain rotation angle and shift with ego motion
        # can_bus is now a tensor, so convert each element explicitly
        delta_x = np.array([each['can_bus'][0].cpu().numpy()
                            for each in kwargs['img_metas']])
        delta_x = torch.from_numpy(delta_x)
        delta_y = np.array([each['can_bus'][1].cpu().numpy()
                            for each in kwargs['img_metas']])
        delta_y = torch.from_numpy(delta_y)
        ego_angle = np.array(
            [each['can_bus'][-2] / np.pi * 180 for each in kwargs['img_metas']])
        ego_angle = torch.from_numpy(ego_angle.astype(np.float32))
        grid_length_y = grid_length[0]
        grid_length_x = grid_length[1]
        translation_length = torch.sqrt(delta_x ** 2 + delta_y ** 2)
        translation_angle = (
            (
                torch.atan(delta_y / (delta_x + 1e-8))
                + ((1 - torch.sign(delta_x)) / 2) * torch.sign(delta_y) * np.pi
            )
            / np.pi
            * 180
        )
        bev_angle = ego_angle - translation_angle
        shift_y = translation_length * \
            torch.cos(bev_angle / 180 * np.pi) / grid_length_y / bev_h
        shift_x = translation_length * \
            torch.sin(bev_angle / 180 * np.pi) / grid_length_x / bev_w
        shift_y = shift_y * int(self.use_shift)
        shift_x = shift_x * int(self.use_shift)
        shift = torch.stack([shift_x, shift_y]).permute(1, 0)
        # shift = 0

        if prev_bev is not None:
            if prev_bev.shape[1] == bev_h * bev_w:
                prev_bev = prev_bev.permute(1, 0, 2)
            if self.rotate_prev_bev:
                for i in range(bs):
                    # num_prev_bev = prev_bev.size(1)
                    rotation_angle = kwargs['img_metas'][i]['can_bus'][-1]
                    tmp_prev_bev = prev_bev[:, i].reshape(
                        bev_h, bev_w, -1).permute(2, 0, 1)
                    tmp_prev_bev = rotate(tmp_prev_bev, rotation_angle,
                                          center=self.rotate_center)
                    tmp_prev_bev = tmp_prev_bev.permute(1, 2, 0).reshape(
                        bev_h * bev_w, 1, -1)
                    prev_bev[:, i] = tmp_prev_bev[:, 0]

        # add can bus signals
        can_bus = bev_queries.new_tensor(
            [each['can_bus'].cpu().numpy() for each in kwargs['img_metas']])  # [:, :]
        can_bus = self.can_bus_mlp(can_bus)[None, :, :]
        bev_queries = bev_queries + can_bus * int(self.use_can_bus)

        feat_flatten = []
        spatial_shapes = []
        for lvl, feat in enumerate(mlvl_feats):
            bs, num_cam, c, h, w = feat.shape
            spatial_shape = (h, w)
            feat = feat.flatten(3).permute(1, 0, 3, 2)
            if self.use_cams_embeds:
                feat = feat + self.cams_embeds[:, None, None, :].to(feat.dtype)
            feat = feat + self.level_embeds[None,
                                            None, lvl:lvl + 1, :].to(feat.dtype)
            spatial_shapes.append(spatial_shape)
            feat_flatten.append(feat)

        feat_flatten = torch.cat(feat_flatten, 2)
        spatial_shapes = torch.as_tensor(
            spatial_shapes, dtype=torch.long, device=bev_pos.device)
        level_start_index = torch.cat((spatial_shapes.new_zeros(
            (1,)), spatial_shapes.prod(1).cumsum(0)[:-1]))
        feat_flatten = feat_flatten.permute(
            0, 2, 1, 3)  # (num_cam, H*W, bs, embed_dims)

        bev_embed = self.encoder(
            bev_queries,
            feat_flatten,
            feat_flatten,
            bev_h=bev_h,
            bev_w=bev_w,
            bev_pos=bev_pos,
            spatial_shapes=spatial_shapes,
            level_start_index=level_start_index,
            prev_bev=prev_bev,
            shift=shift,
            **kwargs
        )
        return bev_embed

Error 4: ValueError: only one element tensors can be converted to Python scalars

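The same problem occurs in the point_sampling function in encoder.py. Comment out lines 95-99 and replace them with:

    lidar2img = img_metas[0]['lidar2img']

Error 5: KeyError: 'box_type_3d'

This is another unusual part of the BEVFormer model input: this variable is a class name rather than data, and its rough purpose is to wrap the model output during post-processing. Here we can simply comment out that line.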

Error 6: RuntimeError: Exporting the operator linspace to ONNX opset version 11 is not supported.

If you have to export with opset 11 of torch.onnx, this step reports that the torch.linspace operator is not supported. The operator is located in the BEVFormerEncoder.get_reference_points function in bevformer/modules/encoder.py.
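The idea behind replacing linspace can be checked without torch: a half-open range with step (end - start) / (steps - 1), followed by the end point appended separately, yields the same values as linspace. The sketch below is a plain-Python illustration under that assumption; the function names and sample sizes are illustrative and are not part of BEVFormer.

```python
import math


def linspace(start, end, steps):
    """Reference: evenly spaced points with both end points included."""
    if steps == 1:
        return [float(start)]
    step = (end - start) / (steps - 1)
    return [start + i * step for i in range(steps)]


def arange_plus_endpoint(start, end, steps):
    """Half-open range over [start, end) with `end` appended (needs steps >= 2)."""
    step = (end - start) / (steps - 1)
    count = math.ceil((end - start) / step)  # element count of the half-open range
    return [start + i * step for i in range(count)] + [float(end)]


# Illustrative sizes: Z = 8 with 4 points per pillar, and a BEV width of 50.
for start, end, steps in [(0.5, 7.5, 4), (0.5, 49.5, 50)]:
    a = linspace(start, end, steps)
    b = arange_plus_endpoint(start, end, steps)
    assert len(a) == len(b) == steps
    assert all(abs(x - y) < 1e-9 for x, y in zip(a, b))
```

Because the step is a floating-point quotient, rounding could in principle change the element count of the half-open range for other sizes, so it is worth checking the resulting length against the expected number of points after making the replacement.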

Either torch.range() or torch.arange() can be used as a replacement. I use torch.arange() here; the replacement is as follows:

    def get_reference_points(H, W, Z=8, num_points_in_pillar=4, dim='3d', bs=1, device='cuda', dtype=torch.float):
        """Get the reference points used in SCA and TSA.
        Args:
            H, W: spatial shape of bev.
            Z: height of pillar.
            D: sample D points uniformly from each pillar.
            device (obj:`device`): The device where
                reference_points should be.
        Returns:
            Tensor: reference points used in decoder, has \
                shape (bs, num_keys, num_levels, 2).
        """
        # reference points in 3D space, used in spatial cross-attention (SCA)
        # torch.arange excludes the end point, so it is appended with torch.cat
        if dim == '3d':
            zs = torch.cat((torch.arange(0.5, Z-0.5, (Z-1)/(num_points_in_pillar-1)), torch.Tensor([Z-0.5])), dim=0).view(-1, 1, 1).expand(num_points_in_pillar, H, W) / Z
            xs = torch.cat((torch.arange(0.5, W-0.5, (W-1)/(W-1)), torch.Tensor([W-0.5])), dim=0).view(1, 1, W).expand(num_points_in_pillar, H, W) / W
            ys = torch.cat((torch.arange(0.5, H-0.5, (H-1)/(H-1)), torch.Tensor([H-0.5])), dim=0).view(1, H, 1).expand(num_points_in_pillar, H, W) / H
            ref_3d = torch.stack((xs, ys, zs), -1)
            ref_3d = ref_3d.permute(0, 3, 1, 2).flatten(2).permute(0, 2, 1)
            ref_3d = ref_3d[None].repeat(bs, 1, 1, 1)
            return ref_3d

        # reference points on 2D bev plane, used in temporal self-attention (TSA).
        elif dim == '2d':
            ref_y, ref_x = torch.meshgrid(
                torch.cat((torch.arange(0.5, H-0.5, (H-1)/(H-1)), torch.Tensor([H-0.5])), dim=0),
                torch.cat((torch.arange(0.5, W-0.5, (W-1)/(W-1)), torch.Tensor([W-0.5])), dim=0)
            )
            ref_y = ref_y.reshape(-1)[None] / H
            ref_x = ref_x.reshape(-1)[None] / W
            ref_2d = torch.stack((ref_x, ref_y), -1)
            ref_2d = ref_2d.repeat(bs, 1, 1).unsqueeze(2)
            return ref_2d

Error 7: RuntimeError: Exporting the operator maximum to ONNX opset version 11 is not supported

The maximum operator is not supported. It is located in the BEVFormerEncoder.point_sampling function in bevformer/modules/encoder.py. Simply changing torch.maximum() to torch.max() gives the same result.

Error 8: RuntimeError: Exporting the operator nan_to_num to ONNX opset version 11 is not supported.

Slightly below the location of Error 7 there is a line bev_mask = torch.nan_to_num(bev_mask). It can simply be removed when exporting to ONNX.

Error 9: RuntimeError: Exporting the operator grid_sampler to ONNX opset version 11 is not supported

A classic error. To locate the operator, step into this function:

    from mmcv.ops.multi_scale_deform_attn import multi_scale_deformable_attn_pytorch

First import the function that is needed:

    from mmcv.ops.point_sample import bilinear_grid_sample

Then, in multi_scale_deformable_attn_pytorch, replace

    sampling_value_l_ = F.grid_sample(
        value_l_,
        sampling_grid_l_,
        mode='bilinear',
        padding_mode='zeros',
        align_corners=False)

with:

    sampling_value_l_ = bilinear_grid_sample(value_l_, sampling_grid_l_)

The result is the same.

Also change the reshape on the last line of this function to view.
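As background for why the swap in Error 9 is safe, the following is a minimal plain-Python sketch of what bilinear sampling with padding_mode='zeros' and align_corners=False computes for a single point. It illustrates the arithmetic only and is not mmcv's implementation; the function name and the tiny 2x2 image are made up for the example.

```python
import math


def bilinear_sample(image, gx, gy):
    """Sample a 2D list (H rows, W columns) at normalized coords in [-1, 1].

    Follows the align_corners=False convention with zero padding.
    """
    h, w = len(image), len(image[0])
    # Map normalized coordinates to pixel coordinates (align_corners=False).
    x = ((gx + 1) * w - 1) / 2
    y = ((gy + 1) * h - 1) / 2
    x0, y0 = math.floor(x), math.floor(y)
    wx, wy = x - x0, y - y0

    def pixel(row, col):
        # Zero padding: anything outside the image contributes 0.
        return image[row][col] if 0 <= row < h and 0 <= col < w else 0.0

    top = pixel(y0, x0) * (1 - wx) + pixel(y0, x0 + 1) * wx
    bottom = pixel(y0 + 1, x0) * (1 - wx) + pixel(y0 + 1, x0 + 1) * wx
    return top * (1 - wy) + bottom * wy


image = [[1.0, 2.0],
         [3.0, 4.0]]
center = bilinear_sample(image, 0.0, 0.0)      # average of all four pixels
top_left = bilinear_sample(image, -0.5, -0.5)  # exactly on pixel (0, 0)
corner = bilinear_sample(image, -1.0, -1.0)    # three of four neighbors are padding
print(center, top_left, corner)
```

This uses only multiplications, additions, and indexing, which is why a hand-written version built from such basic operators can be exported where grid_sampler cannot.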

Error 10: RuntimeError: view size is not compatible with input tensor's size and stride (at least one dimension spans across two contiguous subspaces).

Open /mmcv/ops/point_sample.py from the error message, find x = x.view(n, -1), and change it to:

    x = x.contiguous().view(n, -1)
    y = y.contiguous().view(n, -1)

Error 11: RuntimeError: Exporting the operator atan2 to ONNX opset version 11 is not supported.

atan2算子不支持,定位到算子位置在mmdetection3d/BEVFormer/projects/mmdet3d_plugin/core/bbox/util.py的31行,替换为:

rot = (

(

torch.atan((rot_sine / (rot_cosine + 1e-8)).sigmoid())

+ ((1 - torch.sign(rot_cosine)) / 2) * torch.sign(rot_sine) * np.pi

)

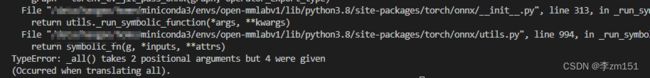

)报错12:TypeError: _all() takes 2 positional arguments but 4 were given

(Occurred when translating all).

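This error is caused by the relatively old torch version. Because the BEVFormer environment pins torch==1.9.1, upgrading torch directly is not convenient. See https://blog.csdn.net/andrewchen1985/article/details/125197226

Starting from

    from torch.onnx import symbolic_opset9

open the symbolic_opset9 file, go to line 2440, and change the two functions def _any(g, input) and def _all(g, input) to: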

    def _any(g, *args):
        # aten::any(Tensor self)
        if len(args) == 1:
            input = args[0]
            dim, keepdim = None, 0
        # aten::any(Tensor self, int dim, bool keepdim)
        else:
            input, dim, keepdim = args
            dim = [_parse_arg(dim, "i")]
            keepdim = _parse_arg(keepdim, "i")
        input = _cast_Long(g, input, False)  # type: ignore[name-defined]
        input_sum = sym_help._reducesum_helper(g, input,
                                               axes_i=dim, keepdims_i=keepdim)
        return gt(g, input_sum, g.op("Constant", value_t=torch.LongTensor([0])))

    def _all(g, *args):
        input = g.op("Not", args[0])
        # aten::all(Tensor self)
        if len(args) == 1:
            return g.op("Not", _any(g, input))
        # aten::all(Tensor self, int dim, bool keepdim)
        else:
            return g.op("Not", _any(g, input, args[1], args[2]))

Copyright notice: the code above is from an original article by the CSDN blogger "andrewchen1985", licensed under CC 4.0 BY-SA. Reproduction must include the original source link and this notice.

Original link: https://blog.csdn.net/andrewchen1985/article/details/125197226

Error 13: RuntimeError: Exporting the operator __iand_ to ONNX opset version 11 is not supported.

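This operator is not supported, and it took a long time to find. It is at line 80 of mmdetection3d/BEVFormer/projects/mmdet3d_plugin/core/bbox/coders/nms_free_coder.py: the in-place AND in mask &= ... is the problem. Replace it with:

    mask = (mask.float() * ((final_box_preds[..., :3] <= self.post_center_range[3:]).all(1)).float()).bool()

OK, at this point the ONNX model has been converted, at least initially: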

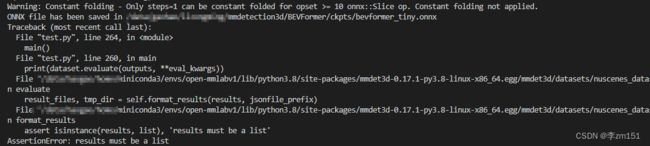
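A side note on the fix for Error 13: replacing the in-place AND with a float multiplication relies on the fact that, for values restricted to 0 and 1, the product equals the logical AND. The following is a small plain-Python check of that identity; the function name is illustrative.

```python
from itertools import product


def and_via_multiply(a: bool, b: bool) -> bool:
    """Logical AND expressed as cast-to-float, multiply, cast back to bool."""
    return bool(float(a) * float(b))


# Exhaustively compare against the built-in `and` for all four combinations.
truth_table = {
    (a, b): and_via_multiply(a, b)
    for a, b in product([False, True], repeat=2)
}
assert all(result == (a and b) for (a, b), result in truth_table.items())
print(truth_table)
```

The tensor expression in the fix applies the same identity elementwise, which avoids the unsupported in-place operator at the cost of two casts.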

四、优化onnx

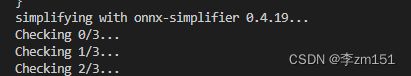

虽然转好了onnx,但是可以看到输出很多警告信息,实际上这个onnx可能还是有点问题的,我们先用onnx simplifier包优化一下:

import onnx

import onnxsim

onnx_path = '/×××/mmdetection3d/BEVFormer/ckpts/bevformer_tiny.onnx'

model_onnx = onnx.load(onnx_path) # load onnx model

onnx.checker.check_model(model_onnx) # check onnx model

print(onnx.helper.printable_graph(model_onnx.graph)) # print

sim_onnx_path = '/×××/mmdetection3d/BEVFormer/ckpts/bevformer_tiny_sim.onnx'

print(f'simplifying with onnx-simplifier {onnxsim.__version__}...')

model_onnx, check = onnxsim.simplify(model_onnx, check_n=3,skip_shape_inference=True)

assert check, 'assert check failed'

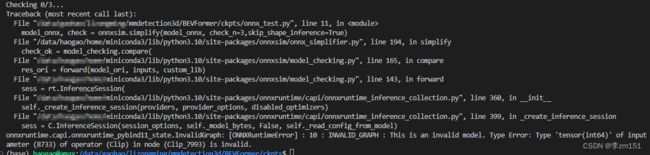

onnx.save(model_onnx, sim_onnx_path)报错1:onnxruntime.capi.onnxruntime_pybind11_state.InvalidGraph: [ONNXRuntimeError] : 10 : INVALID_GRAPH : This is an invalid model. Type Error: Type 'tensor(int64)' of input parameter (8733) of operator (Clip) in node (Clip_7993) is invalid.

Locating this problem is rather tedious: from self.attn in mmcv.cnn.bricks.transformer.MultiheadAttention, go into nn.MultiheadAttention; from the forward of nn.MultiheadAttention, go into F.multi_head_attention_forward(); and from F.multi_head_attention_forward(), step into _in_projection_packed().

In short, open functional via

    import torch.nn.functional

and search for _in_projection_packed. On line 4729, change

    w_q, w_k, w_v = w.chunk(3)

to:

    w_q, w_k, w_v = w.split(int(w.shape[0]/3))

On line 4734, change

    b_q, b_k, b_v = b.chunk(3)

to:

    b_q, b_k, b_v = b.split(int(b.shape[0]/3))

In addition, the forward of SpatialCrossAttention contains the line

    count = torch.clamp(count, min=1.0)

Change it to

    count[count<1]=1

The inverse_sigmoid function in decoder.py also uses torch.clamp, so it needs to be rewritten as:

    def inverse_sigmoid(x, eps=1e-5):
        """Inverse function of sigmoid.
        Args:
            x (Tensor): The tensor to do the
                inverse.
            eps (float): EPS avoid numerical
                overflow. Defaults 1e-5.
        Returns:
            Tensor: The x has passed the inverse
                function of sigmoid, has same
                shape with input.
        """
        # x = x.clamp(min=0, max=1)
        x[x<0] = 0
        x[x>1] = 1
        # x1 = x#.clamp(min=eps)
        x1 = x.clone()
        x1[x1 < eps] = eps

In addition, this function must also be placed in bevformer_head, to replace the inverse_sigmoid imported from mmdet.models.utils.transformer.
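When rewriting inverse_sigmoid without clamp, a scalar reference is handy for spot-checking the tensor version. The sketch below follows the clamp-based definition that the commented-out lines point to: clip x to [0, 1], clip both x and 1 - x from below by eps, then take the log of the ratio. The name inverse_sigmoid_scalar is hypothetical and the function is only a checking aid, not the code used in the export.

```python
import math


def inverse_sigmoid_scalar(x: float, eps: float = 1e-5) -> float:
    """Scalar reference for inverse sigmoid with the same clipping steps."""
    x = min(max(x, 0.0), 1.0)   # same effect as x[x<0] = 0; x[x>1] = 1
    x1 = max(x, eps)            # same effect as x1[x1 < eps] = eps
    x2 = max(1.0 - x, eps)      # the symmetric lower bound on 1 - x
    return math.log(x1 / x2)


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Round trip: sigmoid(inverse_sigmoid(p)) should return p away from the edges.
for p in (0.1, 0.3, 0.5, 0.9):
    assert abs(sigmoid(inverse_sigmoid_scalar(p)) - p) < 1e-12

# At the edges the eps bound keeps the result finite.
print(inverse_sigmoid_scalar(0.0), inverse_sigmoid_scalar(1.0))
```

Feeding the same values through the tensor version and comparing against this reference is a quick way to confirm the masked assignments behave like the original clamp calls.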

报错2:onnxruntime.capi.onnxruntime_pybind11_state.InvalidArgument: [ONNXRuntimeError] : 2 : INVALID_ARGUMENT : Non-zero status code returned while running Expand node. Name:'Expand_1855' Status Message: invalid expand shape

关于expand算子的问题,

虽然还没搞清楚原因是啥,但是我知道咋改。定位到mmdetection3d/BEVFormer/projects/mmdet3d_plugin/bevformer/modules/spatial_cross_attention.py的SpatialCrossAttention的forward的forward里面,将

queries_rebatch[j, i, :len(index_query_per_img)] = query[j, index_query_per_img]改为:

queries_rebatch[j, i, :len(index_query_per_img)] = query[j, np.array(index_query_per_img)]下面一行的

reference_points_rebatch[j, i, :len(index_query_per_img)] = reference_points_per_img[j, index_query_per_img]改为:

reference_points_rebatch[j, i, :len(index_query_per_img)] = reference_points_per_img[j, np.array(index_query_per_img)]再在下面的

slots[j, index_query_per_img] += queries[j, i, :len(index_query_per_img)]前面加一行

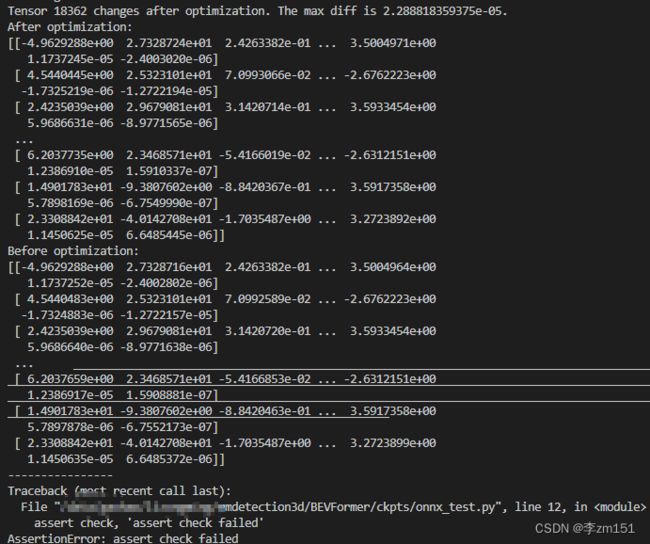

index_query_per_img = np.array(index_query_per_img)报错3:Tensor 18362 changes after optimization. The max diff is 2.288818359375e-05.

提示优化结果有偏差,初步定位了一下位置,发现在后处理部分,也就是bevformer.py的self.pts_bbox_head.get_bboxes,暂且把这个去掉,让def simple_test_pts(self, x, img_metas, prev_bev=None, rescale=False):只输出outs,如下所示

    def simple_test_pts(self, x, img_metas, prev_bev=None, rescale=False):
        """Test function"""
        outs = self.pts_bbox_head(x, img_metas, prev_bev=prev_bev)
        return outs

Then regenerate the ONNX model and run the optimization again.
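To judge whether a deviation such as the 2.288818359375e-05 reported above matters, it can help to compare the outputs before and after simplification against an explicit tolerance. The sketch below works on flat lists of floats; the function names, the sample values, and the default tolerances are assumptions chosen for illustration and should be adapted to the accuracy the deployment actually needs.

```python
def max_abs_diff(reference, candidate):
    """Largest elementwise absolute difference between two flat sequences."""
    if len(reference) != len(candidate):
        raise ValueError("outputs must have the same number of elements")
    return max(abs(r - c) for r, c in zip(reference, candidate))


def within_tolerance(reference, candidate, atol=1e-4, rtol=1e-3):
    """True if every element satisfies |r - c| <= atol + rtol * |r|."""
    return all(
        abs(r - c) <= atol + rtol * abs(r)
        for r, c in zip(reference, candidate)
    )


# Illustrative values: the last element differs by the reported max diff.
original = [0.125, -2.5, 10.0]
simplified = [0.125, -2.5, 10.0 + 2.288818359375e-05]

diff = max_abs_diff(original, simplified)
ok = within_tolerance(original, simplified)
print(f"max diff = {diff:.3e}, within tolerance: {ok}")
```

In practice the two lists would be the flattened outputs of the original and the simplified model for the same input.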

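With that, the ONNX conversion and optimization of bevformer_tiny is essentially complete!

Debugging like this is hard work, so please like and bookmark this post!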