Building a Ceph Cluster

Ceph cluster deployment (preparation)

1. Configure a static network (optional)

Configure a static IP address on each node.

2. Set the hostnames (required)

ceph01:

hostnamectl set-hostname ceph01

ceph02:

hostnamectl set-hostname ceph02

ceph03:

hostnamectl set-hostname ceph03

3. Configure hostname resolution (required)

ceph01 is used as the example here; repeat the same steps on the other nodes.

vi /etc/hosts

Add the following entries:

192.168.100.30 ceph01

192.168.100.40 ceph02

192.168.100.50 ceph03
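Because the same three entries have to go into /etc/hosts on every node, an idempotent script avoids duplicate lines when it is rerun. This is a minimal sketch: the node list mirrors the entries above, and the script name add-ceph-hosts.sh, the CEPH_NODES array and the add_host_entry function are hypothetical names introduced here. It only writes to a file when one is passed as an argument.

```shell
#!/usr/bin/env bash
# Sketch: idempotently append the Ceph node entries to a hosts file.
# Usage (as root on each node): ./add-ceph-hosts.sh /etc/hosts

# Node list copied from the entries above; adjust to your own addresses.
CEPH_NODES=(
  "192.168.100.30 ceph01"
  "192.168.100.40 ceph02"
  "192.168.100.50 ceph03"
)

# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless NAME already appears there as a whole word.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if grep -qw -- "$name" "$file" 2>/dev/null; then
    echo "skip: $name already present in $file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$file"
    echo "added: $ip $name"
  fi
}

# Only touch a file when one is passed explicitly.
if [ "$#" -ge 1 ]; then
  for entry in "${CEPH_NODES[@]}"; do
    # Unquoted on purpose: "IP NAME" is split into two arguments.
    add_host_entry "$1" $entry
  done
fi
```

Running it twice on the same file leaves exactly one line per node, so it is safe to include in a provisioning script.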

4. Install the command-completion package (optional)

yum list all | grep bash

yum install bash-completion.noarch -y

reboot

Wait a moment, then reconnect to the server.

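5. Add one disk to each server (required)

Add it yourself (for a virtual machine, attach a new virtual disk). The OSD step below assumes it appears as /dev/sdb.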

Ceph cluster deployment

1. Configure passwordless SSH login (run on every storage node)

ssh-keygen

ssh-copy-id ceph01

ssh-copy-id ceph02

ssh-copy-id ceph03
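The key setup above can also be run non-interactively, which helps when it has to be repeated on every storage node. This is a sketch under stated assumptions: DRY_RUN, NODES, run and setup_ssh_keys are hypothetical names, and by default the script only prints the commands; set DRY_RUN=0 to execute them. ssh-keygen's -N "" (empty passphrase) and -f (key file) options are standard OpenSSH flags.

```shell
#!/usr/bin/env bash
# Sketch: non-interactive version of the passwordless SSH setup.
# Prints the commands by default (DRY_RUN=1); run with DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"
NODES=(ceph01 ceph02 ceph03)

# run CMD...: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

setup_ssh_keys() {
  local node
  # Create a key pair with an empty passphrase only if none exists yet.
  if [ ! -f "$HOME/.ssh/id_rsa" ]; then
    run ssh-keygen -t rsa -N "" -f "$HOME/.ssh/id_rsa"
  fi
  # Copy the public key to every node (asks for each node's password once).
  for node in "${NODES[@]}"; do
    run ssh-copy-id "$node"
  done
}

setup_ssh_keys
```

Skipping ssh-keygen when a key already exists keeps reruns from prompting to overwrite the existing key.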

2. Switch to network mirrors (on all nodes) and install the Ceph packages

2.1 Back up the existing yum repos

mkdir /etc/yum.repos.d/ch

mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/ch/

2.2 Install the Aliyun mirror repos and wget

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

yum install -y wget

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

yum -y install yum-plugin-priorities.noarch

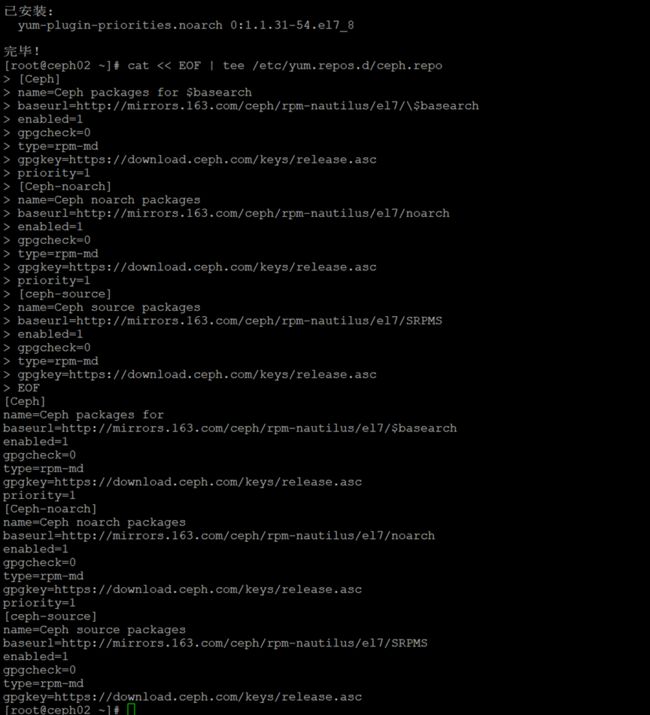

2.3 Configure the Ceph repo

cat << EOF | tee /etc/yum.repos.d/ceph.repo

[Ceph]

name=Ceph packages for \$basearch

baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/\$basearch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

priority=1

[Ceph-noarch]

name=Ceph noarch packages

baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/noarch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/SRPMS

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

EOF
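In an unquoted heredoc (<< EOF) the shell expands $basearch itself, which is why it has to be escaped as \$basearch. Quoting the delimiter (<< 'EOF') turns expansion off entirely, so yum receives the variable literally without any escaping. The following sketch writes the same three sections that way; the function name write_ceph_repo and the script name are hypothetical, and the file is only written when a target path is passed.

```shell
#!/usr/bin/env bash
# Sketch: write the Ceph repo file with a quoted heredoc delimiter ('EOF'),
# so $basearch reaches yum literally without backslash escaping.

# write_ceph_repo TARGET: write the repo definitions to TARGET.
write_ceph_repo() {
  local target="${1:-/etc/yum.repos.d/ceph.repo}"
  cat > "$target" << 'EOF'
[Ceph]
name=Ceph packages for $basearch
baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/$basearch
enabled=1
gpgcheck=0
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
priority=1

[Ceph-noarch]
name=Ceph noarch packages
baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/noarch
enabled=1
gpgcheck=0
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
priority=1

[ceph-source]
name=Ceph source packages
baseurl=http://mirrors.163.com/ceph/rpm-nautilus/el7/SRPMS
enabled=1
gpgcheck=0
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
EOF
}

# Write the real file only when asked: ./write-ceph-repo.sh /etc/yum.repos.d/ceph.repo
if [ "$#" -ge 1 ]; then
  write_ceph_repo "$1"
fi
```

Afterwards, yum repolist should list the Ceph, Ceph-noarch and ceph-source repos.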

2.4 Install Ceph on all cluster and client nodes

yum -y install ceph --skip-broken

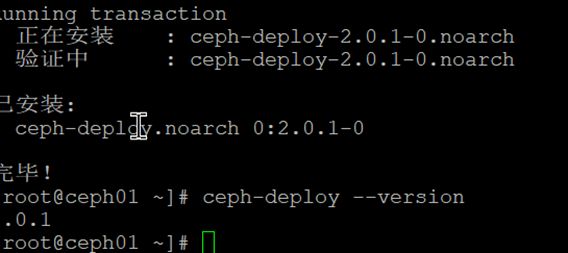

2.5 On ceph01, additionally install ceph-deploy

yum install -y python-setuptools

yum -y install ceph-deploy

2.6 Check the version

ceph-deploy --version

3. Initialize the Ceph cluster

3.1 On the admin node, create a directory to hold the configuration files and keys that are generated for the cluster.

mkdir cluster

cd cluster

3.2 Create the cluster with ceph-deploy

ceph-deploy new --public-network 192.168.200.0/24 --cluster-network 192.168.200.0/24 ceph01

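This sets the cluster network (internal cluster traffic) and the public network (external access to the Ceph cluster) directly on the command line; alternatively, you can set them by editing the configuration file after running the command. Both networks must contain the addresses the nodes actually use, so adjust them to your own subnet.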
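A mismatch between the node addresses and the network given to --public-network is easy to miss: the /etc/hosts entries earlier use 192.168.100.x, while this command uses 192.168.200.0/24. The pure-bash sketch below (IPv4 only) checks whether each address falls inside a CIDR network; ip_to_int, ip_in_cidr and the PUBLIC_NETWORK variable are hypothetical names introduced for illustration.

```shell
#!/usr/bin/env bash
# Sketch: check that IPv4 addresses fall inside a CIDR network (IPv4 only).

# ip_to_int A.B.C.D -> prints the address as a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# ip_in_cidr IP NET/PREFIX -> exit status 0 if IP is inside NET/PREFIX.
ip_in_cidr() {
  local ip="$1" net="${2%/*}" prefix="${2#*/}"
  local mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Compare each node address with the network passed to ceph-deploy new.
PUBLIC_NETWORK="${PUBLIC_NETWORK:-192.168.200.0/24}"
for ip in 192.168.100.30 192.168.100.40 192.168.100.50; do
  if ip_in_cidr "$ip" "$PUBLIC_NETWORK"; then
    echo "$ip is inside $PUBLIC_NETWORK"
  else
    echo "WARNING: $ip is outside $PUBLIC_NETWORK"
  fi
done
```

With the values from this guide every address is reported as outside the network, which is the signal to change either the hosts entries or the --public-network/--cluster-network values before continuing.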

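3.3 Check the output in the current directory

ls

It should contain the configuration file ceph.conf, the monitor keyring ceph.mon.keyring and a log file (ceph-deploy-ceph.log).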

3.4 View the Ceph configuration file

cat ceph.conf

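3.5 Install the Ceph packages on the specified nodes

The --no-adjust-repos option makes ceph-deploy use the repos already configured on each node instead of writing the official Ceph repos.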

ceph-deploy install --no-adjust-repos ceph01 ceph02 ceph03

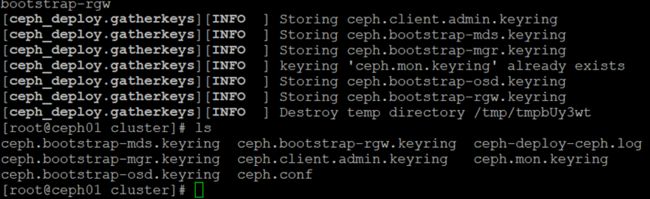

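4. Create the first monitor

Create and initialize the monitor and gather all the keys. From the official documentation: for high availability, a production Ceph cluster should run at least three monitors.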

ceph-deploy mon create-initial

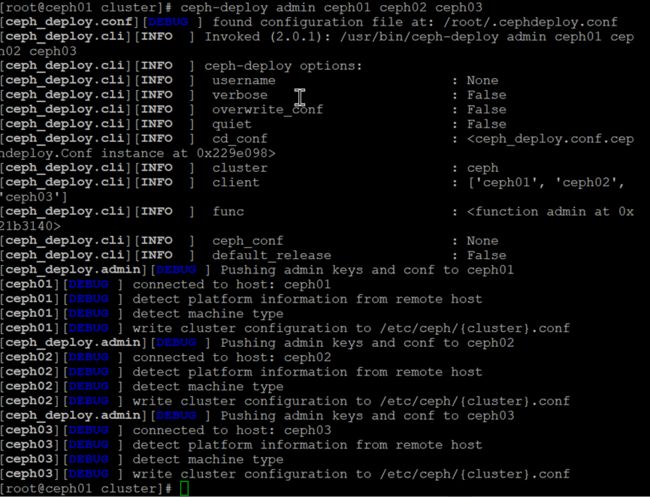
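Later steps (admin, mgr create, osd create, rgw create, mds create) rely on the keyrings that mon create-initial gathers into the cluster directory, so it can be worth checking for them before moving on. The file list in this sketch is an assumption based on ceph-deploy 2.x behaviour; compare it with your own ls output. check_keyrings and the script name are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: verify that the keyrings needed by later steps were gathered.
# The file list is an assumption based on ceph-deploy 2.x; compare with your own `ls` output.

# check_keyrings DIR -> prints each missing file, exit status 1 if any are missing.
check_keyrings() {
  local dir="${1:-.}" missing=0 f
  for f in ceph.client.admin.keyring \
           ceph.bootstrap-osd.keyring \
           ceph.bootstrap-mgr.keyring \
           ceph.bootstrap-mds.keyring \
           ceph.bootstrap-rgw.keyring; do
    # -s: the file must exist and be non-empty.
    if [ ! -s "$dir/$f" ]; then
      echo "missing: $f"
      missing=1
    fi
  done
  return "$missing"
}

# Usage: ./check-keyrings.sh /root/cluster
if [ "$#" -ge 1 ]; then
  check_keyrings "$1" && echo "all keyrings present in $1"
fi
```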

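4.1 Use ceph-deploy to copy the configuration file and the admin key to the admin node and the Ceph nodes, so that you can run ceph CLI commands without specifying the monitor address and ceph.client.admin.keyring each time.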

ceph-deploy admin ceph01 ceph02 ceph03

4.2 Check the copied files

ssh ceph03 ls /etc/ceph

5. Create the first manager

ceph-deploy mgr create ceph01

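6. Add OSD disks

Add three OSDs, assuming each node has one unused disk, /dev/sdb. Make sure the device is not currently in use and does not contain any important data. (This step takes a while.)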

ceph-deploy osd create --data /dev/sdb ceph01

ceph-deploy osd create --data /dev/sdb ceph02

ceph-deploy osd create --data /dev/sdb ceph03

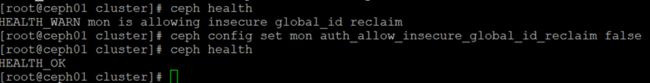
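The three osd create commands differ only in the node name, so they can be driven by a loop that stops at the first failure. This is a sketch: DRY_RUN, DISK, NODES, run and create_osds are hypothetical names, DISK must be an empty, unused device on every node, and the script prints the commands unless DRY_RUN=0 is set (run it from the cluster directory).

```shell
#!/usr/bin/env bash
# Sketch: create one OSD per node in a loop instead of three separate commands.
# Prints the commands by default (DRY_RUN=1); set DRY_RUN=0 to run them from the cluster directory.
# DISK is an assumption: it must be an empty, unused device on every node.
DRY_RUN="${DRY_RUN:-1}"
DISK="${DISK:-/dev/sdb}"
NODES=(ceph01 ceph02 ceph03)

# run CMD...: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

create_osds() {
  local node
  for node in "${NODES[@]}"; do
    # Stop at the first failure so a bad disk is noticed immediately.
    run ceph-deploy osd create --data "$DISK" "$node" || return 1
  done
  # Show the resulting OSDs and the hosts they belong to.
  run ceph osd tree
}

create_osds
```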

6.1 Check the cluster health

ceph health

ceph health detail

Note: if the output shows "HEALTH_WARN mon is allowing insecure global_id reclaim",

fix it by disabling the insecure mode:

ceph config set mon auth_allow_insecure_global_id_reclaim false

Check again after a short while; the status should now be HEALTH_OK.

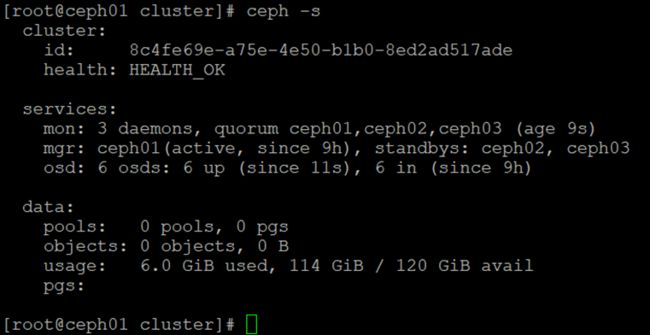
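Instead of rerunning ceph health by hand until the warning clears, the check can be polled. In this sketch the command to poll is passed as arguments, so the same function works with ceph health on the admin node; wait_for_health_ok and the --run flag are hypothetical, and the retry count and interval are arbitrary example values.

```shell
#!/usr/bin/env bash
# Sketch: poll the cluster until it reports HEALTH_OK.

# wait_for_health_ok TRIES INTERVAL CMD... -> exit status 0 once CMD prints HEALTH_OK.
wait_for_health_ok() {
  local tries="$1" interval="$2" i status
  shift 2
  for (( i = 1; i <= tries; i++ )); do
    status=$("$@" 2>/dev/null)
    # `ceph health` prints the status word first, e.g. "HEALTH_WARN mon is allowing ..."
    if [ "${status%% *}" = "HEALTH_OK" ]; then
      echo "HEALTH_OK after $i check(s)"
      return 0
    fi
    echo "attempt $i/$tries: ${status:-no output}"
    sleep "$interval"
  done
  return 1
}

# On the admin node: up to 30 checks, 10 seconds apart.
if [ "${1:-}" = "--run" ]; then
  wait_for_health_ok 30 10 ceph health
fi
```

Passing the command as arguments keeps the polling logic separate from Ceph, so it can be tried with a stand-in such as echo HEALTH_OK.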

6.2 View detailed cluster status

ceph -s

7. Expand the cluster

7.1 Add monitors

ceph-deploy mon add ceph02

ceph-deploy mon add ceph03

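Once the new Ceph monitors are added, Ceph starts synchronizing them and forms a quorum. Check the quorum status with the following command (make sure the nodes' clocks are synchronized):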

ceph quorum_status --format json-pretty

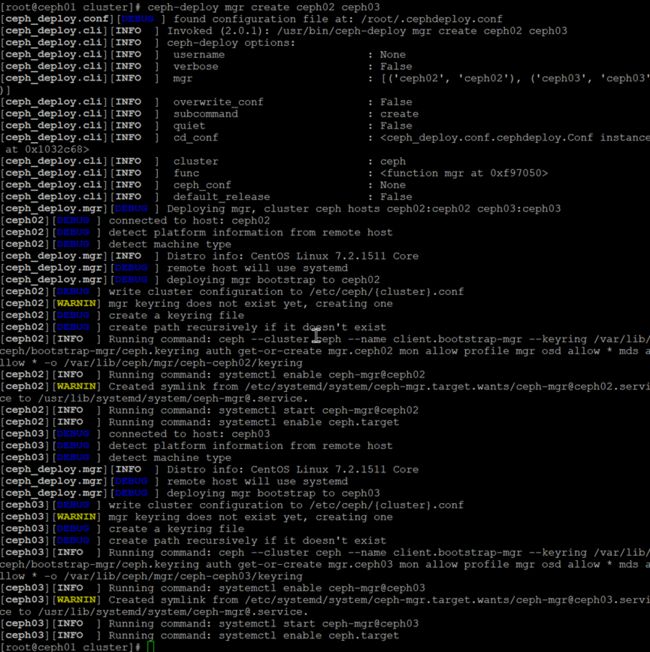
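The quorum_status output contains a quorum_names list, so counting its entries is a quick way to confirm that all three monitors joined. This sketch reads the JSON with whichever Python interpreter is installed (CentOS 7 ships Python 2; the one-liner works with Python 2 and 3). quorum_size and the --run flag are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: count the monitors in quorum from `ceph quorum_status` JSON.

# quorum_size: reads quorum_status JSON on stdin, prints the number of monitors in quorum.
quorum_size() {
  local py
  py=$(command -v python3 || command -v python) || { echo "no python found" >&2; return 1; }
  "$py" -c 'import json, sys; print(len(json.load(sys.stdin)["quorum_names"]))'
}

# On the admin node, once the three monitors have been added:
if [ "${1:-}" = "--run" ]; then
  n=$(ceph quorum_status --format json | quorum_size)
  echo "monitors in quorum: $n"
  [ "$n" -ge 3 ] || echo "WARNING: expected 3 monitors in quorum (check time sync)"
fi
```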

7.2 Add managers

ceph-deploy mgr create ceph02 ceph03

Check the cluster status again

ceph -s

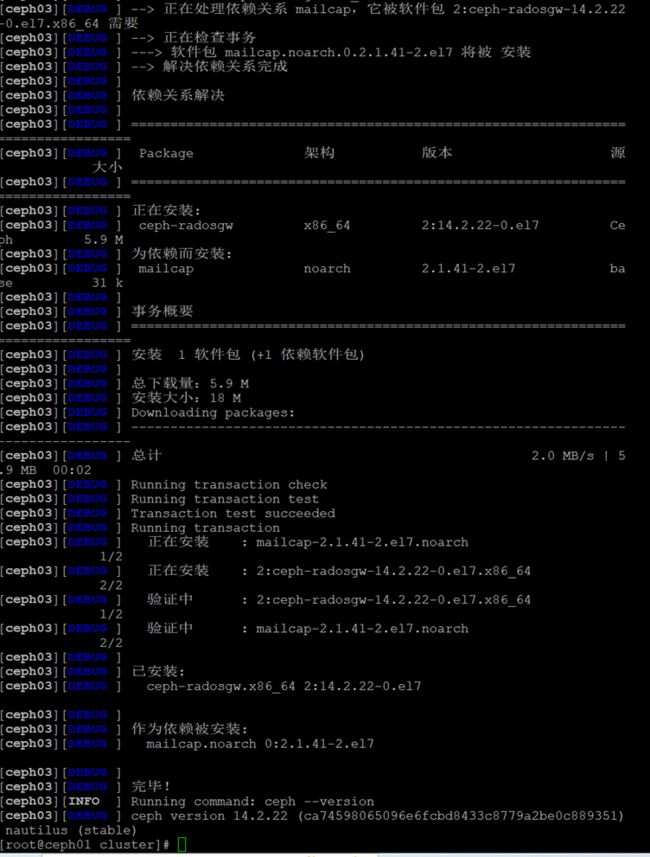

7.3 Create RGW instances

7.3.1 Create new RGW instances

ceph-deploy rgw create ceph01 ceph02 ceph03

By default, the RGW instances listen on port 7480.

7.3.2 To change this port, edit ceph.conf on the node running RGW

vi ceph.conf

Change it as follows:

[client]

rgw frontends = civetweb port=80

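7.3.3 Verify RGW

Open http://192.168.200.30:7480/ in a browser; if it returns content, RGW is working. If you changed the port in 7.3.2, the new port only takes effect after the configuration has been pushed to the nodes and the gateways restarted; after that, use the new port in the URL.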
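Applying the port change means pushing the edited ceph.conf and restarting the gateways, after which each gateway can be checked with curl instead of a browser. This is a sketch under stated assumptions: it uses the systemd target ceph-radosgw.target installed with the RGW packages, RGW_PORT (80 after the change above, 7480 if the default was kept), DRY_RUN, run and apply_rgw_port are hypothetical names, and commands are only printed unless DRY_RUN=0 is set.

```shell
#!/usr/bin/env bash
# Sketch: apply the RGW port change and check each gateway over HTTP.
# Prints commands by default (DRY_RUN=1); set DRY_RUN=0 to execute from /root/cluster.
# RGW_PORT is an assumption: 80 after the change above, 7480 if the default was kept.
DRY_RUN="${DRY_RUN:-1}"
RGW_PORT="${RGW_PORT:-80}"
NODES=(ceph01 ceph02 ceph03)

# run CMD...: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

apply_rgw_port() {
  local node
  # Push the edited ceph.conf from the cluster directory to every node.
  run ceph-deploy --overwrite-conf config push "${NODES[@]}"
  for node in "${NODES[@]}"; do
    # Restart the gateway so it re-reads "rgw frontends".
    run ssh "$node" systemctl restart ceph-radosgw.target
    # An anonymous request should return an XML ListAllMyBucketsResult document.
    run curl -s "http://$node:$RGW_PORT/"
  done
}

apply_rgw_port
```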

7.4 Create MDS instances

ceph-deploy --overwrite-conf mds create ceph01 ceph02 ceph03

7.5 Check the current OSD status

ceph osd tree

7.6 Allow pool deletion

By default, pools cannot be deleted; apply the following configuration:

vi ceph.conf

Add the following:

[mon]

mon_allow_pool_delete = true

Push the updated configuration to all nodes

cd /root/cluster/

ceph-deploy --overwrite-conf config push ceph01 ceph02 ceph03

8. Restart the monitor service on each node

systemctl restart ceph-mon.target
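Steps 7.6 and 8 can be combined into one rerunnable script: add the option to ceph.conf only if it is not already set, push the file, then restart the monitors on every node over SSH. This is a sketch: ensure_pool_delete, apply_and_restart, DRY_RUN and run are hypothetical names, the sed one-line append syntax is GNU-specific, and the cluster commands are only printed unless DRY_RUN=0 is set.

```shell
#!/usr/bin/env bash
# Sketch: add mon_allow_pool_delete to ceph.conf once, push it, and restart the monitors.
# Prints the cluster commands by default (DRY_RUN=1); set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"
NODES=(ceph01 ceph02 ceph03)

# run CMD...: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# ensure_pool_delete FILE: set mon_allow_pool_delete = true under [mon] unless already set.
ensure_pool_delete() {
  local conf="$1"
  # Ceph accepts spaces or underscores in option names, so match both spellings.
  if grep -Eq '^[[:space:]]*mon[ _]allow[ _]pool[ _]delete' "$conf"; then
    echo "already set in $conf"
  elif grep -q '^\[mon\]' "$conf"; then
    # GNU sed: add the option right after the existing [mon] header.
    sed -i '/^\[mon\]/a mon_allow_pool_delete = true' "$conf"
  else
    printf '\n[mon]\nmon_allow_pool_delete = true\n' >> "$conf"
  fi
}

# apply_and_restart: push ceph.conf to all nodes, then restart each monitor.
apply_and_restart() {
  local node
  run ceph-deploy --overwrite-conf config push "${NODES[@]}"
  for node in "${NODES[@]}"; do
    run ssh "$node" systemctl restart ceph-mon.target
  done
}

# Usage from /root/cluster: DRY_RUN=0 ./allow-pool-delete.sh ceph.conf
if [ "$#" -ge 1 ]; then
  ensure_pool_delete "$1" && apply_and_restart
fi
```

On Nautilus the same option can also be set at runtime through the centralized configuration (ceph config set mon mon_allow_pool_delete true), which avoids the restart.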

Thanks for reading! Likes, bookmarks, follows and comments are all welcome!