0628-6.2-How to Enable Kerberos in CDH 6.2

Fayson's GitHub: https://github.com/fayson/cdhproject

Recommended WeChat official account: "Hadoop实操", ID: gh_c4c535955d0f

1 Purpose of This Document

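In a previous article, "0610-6.2.0-How to Install CDH 6.2 on Redhat 7.4", Fayson described how to install CDH 6.2; this article builds on that environment to set up Kerberos. Kerberos is a third-party protocol for secure authentication. It is not specific to Hadoop and can be used with other systems as well. It relies on traditional shared-key cryptography to let a client and a server communicate over a network that cannot be assumed to be secure, which makes it a good fit for the client/server model. It was developed and implemented by MIT. Cloudera Manager makes Kerberos integration relatively easy through its web UI, and this article focuses on how to enable Kerberos in a CDH 6.2 environment on Redhat 7.4.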

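- Overview:

1. How to install and configure the KDC service

2. How to enable Kerberos through CDH

3. How to log in to Kerberos and access Hadoop services

4. Summary

- Test environment:

1. Operating system: Redhat 7.4

2. CDH 6.2

3. All operations are performed as the root user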

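2 KDC Service Installation and Configuration

In this document the KDC service is installed on the server that hosts Cloudera Manager Server (it can be installed on a different server if you prefer).

1. Install the KDC service on the Cloudera Manager server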

[root@ip-172-31-6-83 ~]# yum -y install krb5-server krb5-libs krb5-auth-dialog krb5-workstation

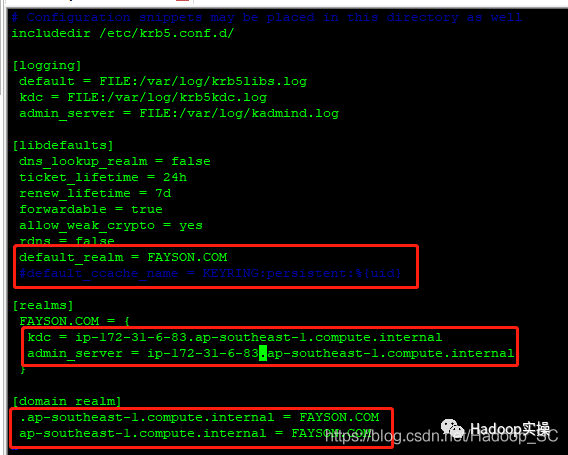

2. Modify the /etc/krb5.conf configuration

[root@ip-172-31-6-83 ~]# vim /etc/krb5.conf

# Configuration snippets may be placed in this directory as well

includedir /etc/krb5.conf.d/

[logging]

default = FILE:/var/log/krb5libs.log

kdc = FILE:/var/log/krb5kdc.log

admin_server = FILE:/var/log/kadmind.log

[libdefaults]

dns_lookup_realm = false

ticket_lifetime = 24h

renew_lifetime = 7d

forwardable = true

rdns = false

default_realm = FAYSON.COM

#default_ccache_name = KEYRING:persistent:%{uid}

[realms]

FAYSON.COM = {

kdc = ip-172-31-6-83.ap-southeast-1.compute.internal

admin_server = ip-172-31-6-83.ap-southeast-1.compute.internal

}

[domain_realm]

.ap-southeast-1.compute.internal = FAYSON.COM

ap-southeast-1.compute.internal = FAYSON.COM

The entries highlighted in red are the values that need to be changed for your environment.
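Because every client has to agree on the realm, one quick sanity check after editing is to read `default_realm` back out of the file. The following is only a minimal sketch and not part of the original article: the function name `get_default_realm` is hypothetical, and it assumes the single-line `key = value` layout shown above.

```shell
#!/bin/bash
# Hypothetical helper (not from the original article): print the
# default_realm value found in a krb5.conf-style file.
get_default_realm() {
  local conf="${1:-/etc/krb5.conf}"
  # Match an uncommented "default_realm = VALUE" line, strip whitespace
  # from the value, and print the first hit only.
  awk -F'=' '/^[ \t]*default_realm[ \t]*=/ {
    gsub(/[ \t\r]/, "", $2)
    print $2
    exit
  }' "$conf"
}

# Example usage:
#   get_default_realm /etc/krb5.conf
```

Running it against the file on each node is a cheap way to spot a host that still carries a stale configuration.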

3. Modify the /var/kerberos/krb5kdc/kadm5.acl configuration

[root@ip-172-31-6-83 ~]# vim /var/kerberos/krb5kdc/kadm5.acl

*/admin@FAYSON.COM *

4. Modify the /var/kerberos/krb5kdc/kdc.conf configuration

[root@ip-172-31-6-83 ~]# vim /var/kerberos/krb5kdc/kdc.conf

[root@ip-172-31-6-83 ~]# cat /var/kerberos/krb5kdc/kdc.conf

[kdcdefaults]

kdc_ports = 88

kdc_tcp_ports = 88

[realms]

FAYSON.COM = {

#master_key_type = aes256-cts

max_renewable_life= 7d 0h 0m 0s

acl_file = /var/kerberos/krb5kdc/kadm5.acl

dict_file = /usr/share/dict/words

admin_keytab = /var/kerberos/krb5kdc/kadm5.keytab

supported_enctypes = aes256-cts:normal aes128-cts:normal des3-hmac-sha1:normal arcfour-hmac:normal camellia256-cts:normal camellia128-cts:normal des-hmac-sha1:normal des-cbc-md5:normal des-cbc-crc:normal

}

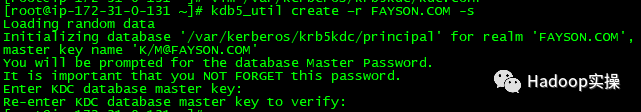

5. Create the Kerberos database

[root@ip-172-31-6-83 ~]# kdb5_util create -r FAYSON.COM -s

Loading random data

Initializing database '/var/kerberos/krb5kdc/principal' for realm 'FAYSON.COM',

master key name 'K/M@FAYSON.COM'

You will be prompted for the database Master Password.

It is important that you NOT FORGET this password.

Enter KDC database master key:

Re-enter KDC database master key to verify:

At these prompts, enter and confirm the master password for the Kerberos database.

6. Create the Kerberos administrator account

[root@ip-172-31-6-83 ~]# kadmin.local

Authenticating as principal root/admin@FAYSON.COM with password.

kadmin.local: addprinc admin/admin@FAYSON.COM

WARNING: no policy specified for admin/admin@FAYSON.COM; defaulting to no policy

Enter password for principal "admin/admin@FAYSON.COM":

Re-enter password for principal "admin/admin@FAYSON.COM":

Principal "admin/admin@FAYSON.COM" created.

kadmin.local: exit

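The highlighted part is the Kerberos administrator account; you are prompted to set its password.

7. Enable the Kerberos services at boot, then start the krb5kdc and kadmin services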

[root@ip-172-31-6-83 ~]# systemctl enable krb5kdc

Created symlink from /etc/systemd/system/multi-user.target.wants/krb5kdc.service to /usr/lib/systemd/system/krb5kdc.service.

[root@ip-172-31-6-83 ~]# systemctl enable kadmin

Created symlink from /etc/systemd/system/multi-user.target.wants/kadmin.service to /usr/lib/systemd/system/kadmin.service.

[root@ip-172-31-6-83 ~]# systemctl start krb5kdc

[root@ip-172-31-6-83 ~]# systemctl start kadmin

8. Test the Kerberos administrator account

[root@ip-172-31-6-83 ~]# kinit admin/admin@FAYSON.COM

Password for admin/admin@FAYSON.COM:

[root@ip-172-31-6-83 ~]# klist

Ticket cache: FILE:/tmp/krb5cc_0

Default principal: admin/admin@FAYSON.COM

Valid starting Expires Service principal

05/11/2019 11:26:56 05/12/2019 11:26:56 krbtgt/FAYSON.COM@FAYSON.COM

renew until 05/18/2019 11:26:56

[root@ip-172-31-6-83 ~]#

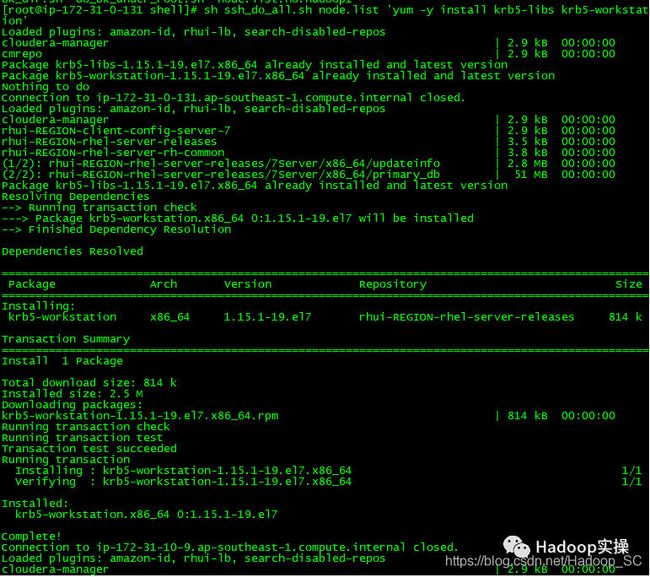
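In scripts it is often handier to test for a valid ticket than to read the `klist` output by eye. A minimal sketch, assuming MIT Kerberos's `klist -s` (silent mode, which reports through its exit status whether the cache holds a non-expired ticket); the function name `have_valid_ticket` is hypothetical and not from the original article:

```shell
#!/bin/bash
# Hypothetical helper: succeed only if the current credential cache
# holds a valid (non-expired) ticket. `klist -s` prints nothing and
# signals the result through its exit status.
have_valid_ticket() {
  klist -s
}

# Example usage:
#   have_valid_ticket || echo "no valid Kerberos ticket; run kinit first" >&2
```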

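9. Install the Kerberos client on all cluster nodes, including the Cloudera Manager host

Use a batch script to install the Kerberos client on every node in the cluster: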

[root@ip-172-31-6-83 shell]# sh ssh_do_all.sh node.list 'yum -y install krb5-libs krb5-workstation'

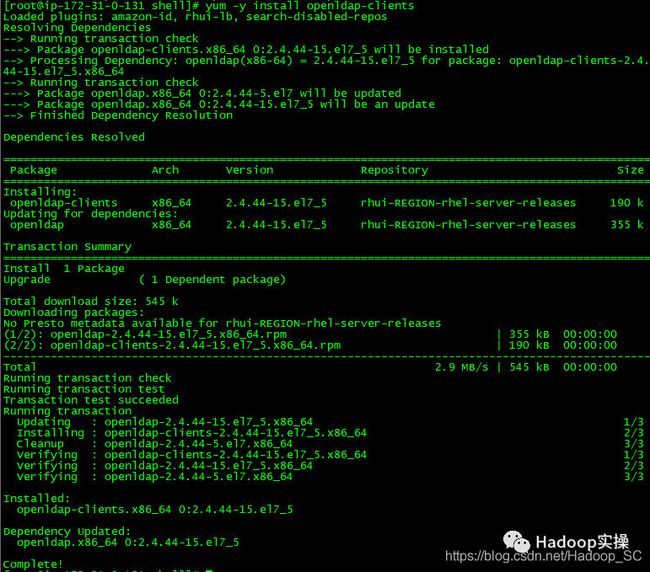

10. Install an additional package on the Cloudera Manager Server host

[root@ip-172-31-6-83 shell]# yum -y install openldap-clients

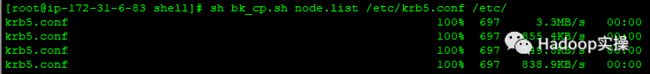

11. Copy the krb5.conf file from the KDC server to all Kerberos clients

Use a batch script to copy the KDC server's krb5.conf into the /etc directory on every node in the cluster:

[root@ip-172-31-6-83 shell]# sh bk_cp.sh node.list /etc/krb5.conf /etc/
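The article does not show the contents of `ssh_do_all.sh` and `bk_cp.sh`. For readers without those scripts, the sketch below is one possible stand-in, not the author's implementation. The function names, the `DRY_RUN` switch, and the node list format (one hostname per line, `#` for comments) are all assumptions, as is passwordless root SSH to every node.

```shell
#!/bin/bash
# Hypothetical stand-ins for the ssh_do_all.sh / bk_cp.sh helpers.
# Assumes passwordless root SSH to every host in the node list.
# Set DRY_RUN=1 to print the commands instead of running them.

# Print the hosts in a node list, skipping blank lines and "#" comments.
list_nodes() {
  grep -v -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$1"
}

# Run one command on every node.
run_on_all() {
  local node_list="$1" cmd="$2" host
  for host in $(list_nodes "$node_list"); do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh root@${host} ${cmd}"
    else
      ssh "root@${host}" "$cmd"
    fi
  done
}

# Copy one local file into the given directory on every node.
copy_to_all() {
  local node_list="$1" src="$2" dest_dir="$3" host
  for host in $(list_nodes "$node_list"); do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp ${src} root@${host}:${dest_dir}"
    else
      scp "$src" "root@${host}:${dest_dir}"
    fi
  done
}

# Example usage:
#   run_on_all node.list 'yum -y install krb5-libs krb5-workstation'
#   copy_to_all node.list /etc/krb5.conf /etc/
```

Running with `DRY_RUN=1` first makes it easy to review the exact commands before they touch the cluster.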

3 Enabling Kerberos on the CDH Cluster

1. Add an administrator account for Cloudera Manager in the KDC

[root@ip-172-31-6-83 shell]# kadmin.local

Authenticating as principal admin/admin@FAYSON.COM with password.

kadmin.local: addprinc cloudera-scm/admin@FAYSON.COM

WARNING: no policy specified for cloudera-scm/admin@FAYSON.COM; defaulting to no policy

Enter password for principal "cloudera-scm/admin@FAYSON.COM":

Re-enter password for principal "cloudera-scm/admin@FAYSON.COM":

Principal "cloudera-scm/admin@FAYSON.COM" created.

kadmin.local: exit

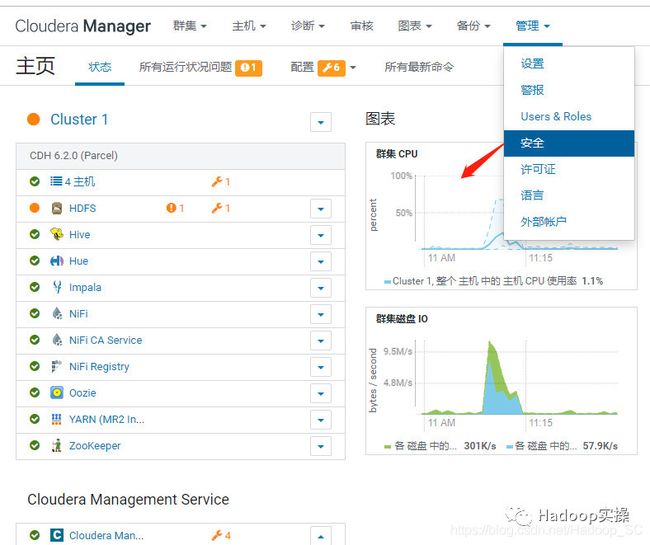

2. In Cloudera Manager, go to "Administration" -> "Security"

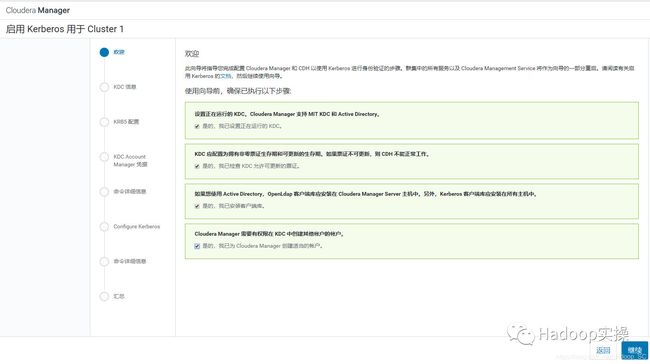

3. Select "Enable Kerberos" to open the following screen

4. Make sure every check item listed has been completed, then tick all of the checkboxes

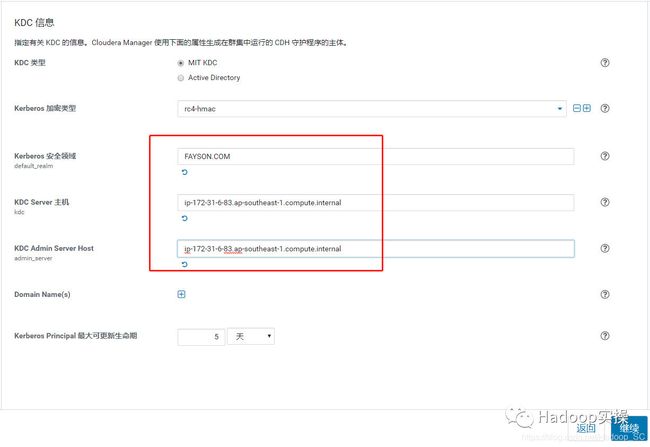

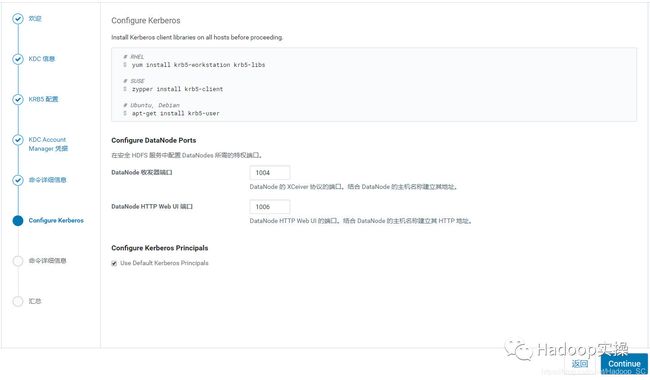

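5. Click "Continue" and configure the KDC information, including the KDC type, KDC server, KDC realm, encryption types, and the renewable lifetime of the service principals to be created (hdfs, yarn, hbase, hive, etc.)

6. Letting Cloudera Manager manage krb5.conf is not recommended; click "Continue"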

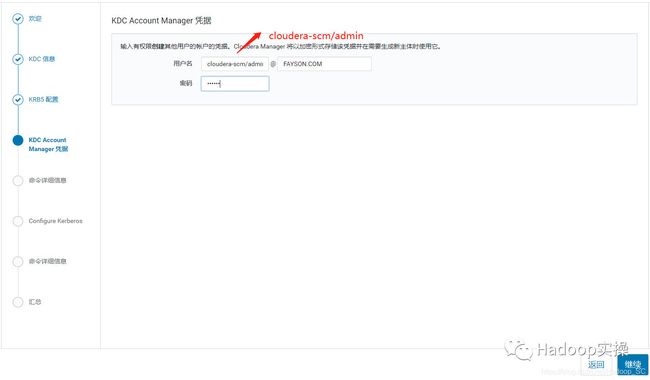

7. Enter the Kerberos administrator account for Cloudera Manager; it must match the account created earlier. Then click "Continue"

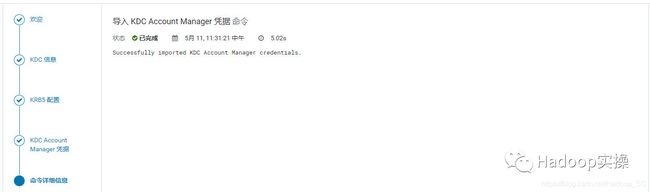

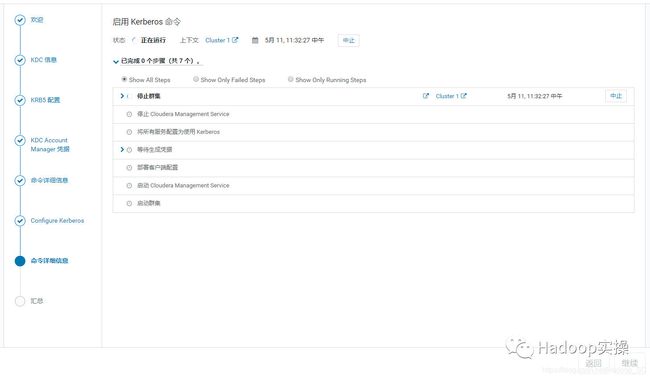

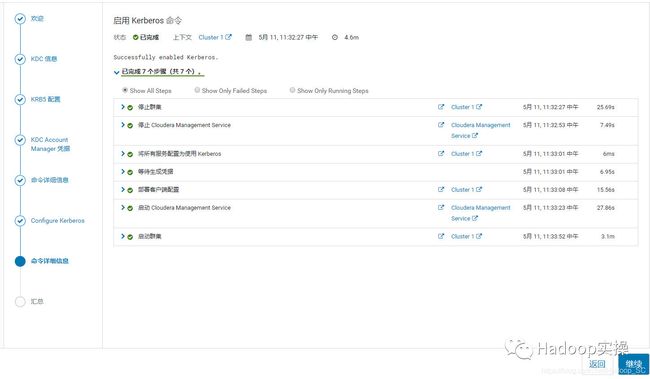

8. Click "Continue" to enable Kerberos

9. Once Kerberos has been enabled, click "Continue"

10. Once the cluster restart has finished, click "Continue"

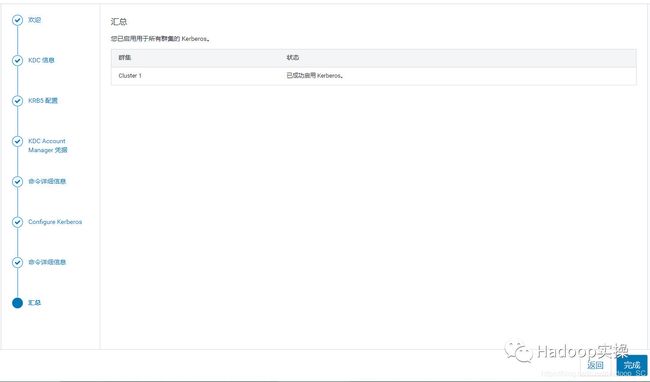

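11. Click "Finish". Kerberos is now enabled.

12. Return to the home page and confirm that everything is healthy. "Administration" -> "Security" now reports that Kerberos has been successfully enabled.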

4 Using Kerberos

Running MapReduce jobs and working with Hive as the fayson user requires the fayson user to exist on every node in the cluster.

1. Create a principal for fayson with kadmin

[root@ip-172-31-6-83 shell]# kadmin.local

Authenticating as principal admin/admin@FAYSON.COM with password.

kadmin.local: addprinc fayson@FAYSON.COM

WARNING: no policy specified for fayson@FAYSON.COM; defaulting to no policy

Enter password for principal "fayson@FAYSON.COM":

Re-enter password for principal "fayson@FAYSON.COM":

Principal "fayson@FAYSON.COM" created.

kadmin.local: exit

You have new mail in /var/spool/mail/root

2. Test the fayson principal

[root@ip-172-31-6-83 ~]# kdestroy

[root@ip-172-31-6-83 ~]# kinit fayson

Password for fayson@FAYSON.COM:

[root@ip-172-31-6-83 ~]# klist

Ticket cache: FILE:/tmp/krb5cc_0

Default principal: fayson@FAYSON.COM

Valid starting Expires Service principal

05/11/2019 11:38:33 05/12/2019 11:38:33 krbtgt/FAYSON.COM@FAYSON.COM

renew until 05/18/2019 11:38:33

[root@ip-172-31-6-83 ~]#

3. Add the fayson user on all cluster nodes

Use the batch script to add the fayson user on every node:

[root@ip-172-31-6-83 shell]# sh ssh_do_all.sh node.list "useradd fayson"

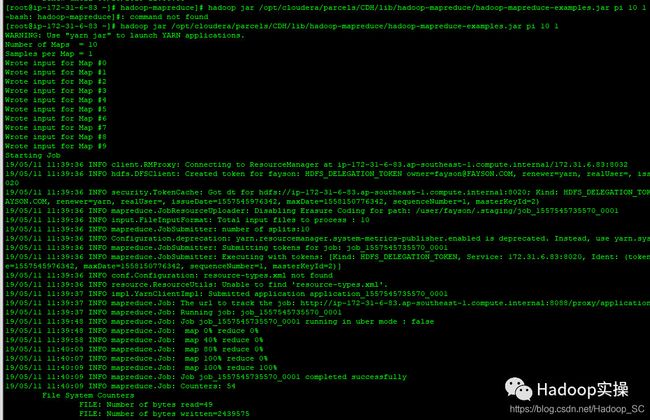
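A plain `useradd` fails on nodes where the user already exists, which is awkward when the batch command is re-run. The sketch below shows one way to make the step repeatable. It is an illustration only, and the function name `ensure_user` is hypothetical rather than part of the original article.

```shell
#!/bin/bash
# Hypothetical helper: create an OS user only if it does not exist yet,
# so the command can be re-run safely on every node.
ensure_user() {
  local user="$1"
  if id -u "$user" >/dev/null 2>&1; then
    echo "user ${user} already exists"
  else
    useradd "$user"
  fi
}

# Example usage on a single node:
#   ensure_user fayson
```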

4. Run a MapReduce job

[root@ip-172-31-6-83 hadoop-mapreduce]# hadoop jar /opt/cloudera/parcels/CDH/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar pi 10 1

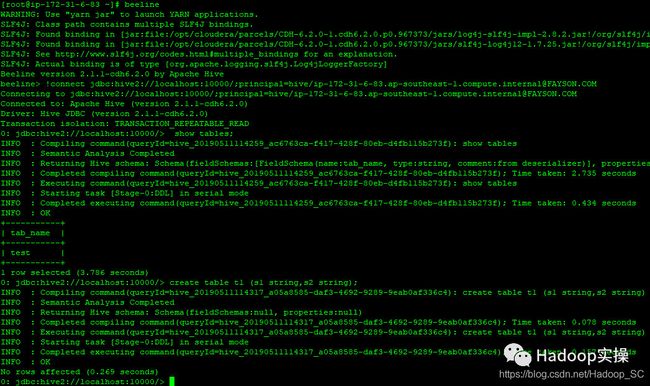

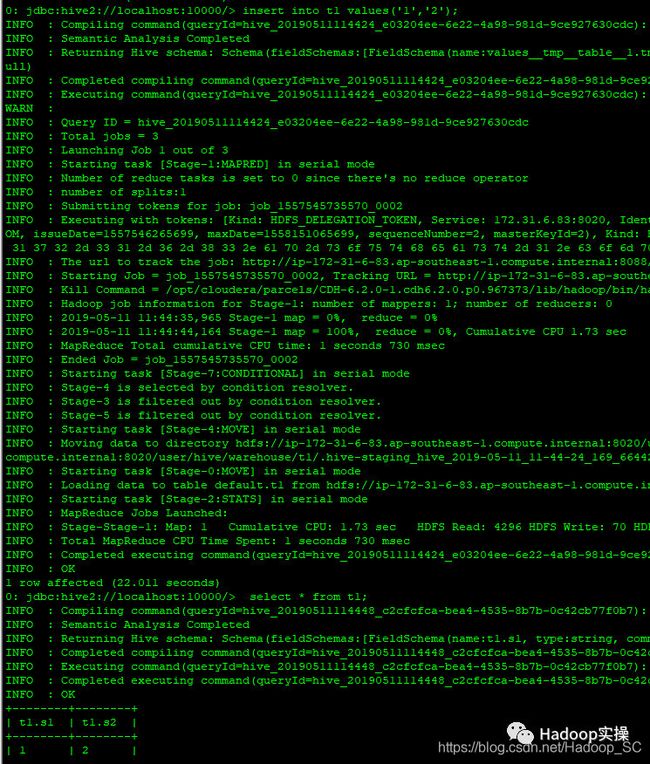

5. Connect to Hive with beeline to test

[root@ip-172-31-6-83 ~]# beeline

WARNING: Use "yarn jar" to launch YARN applications.

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/jars/log4j-slf4j-impl-2.8.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/jars/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Beeline version 2.1.1-cdh6.2.0 by Apache Hive

beeline> !connect jdbc:hive2://localhost:10000/;principal=hive/[email protected]

Connecting to jdbc:hive2://localhost:10000/;principal=hive/[email protected]

Connected to: Apache Hive (version 2.1.1-cdh6.2.0)

Driver: Hive JDBC (version 2.1.1-cdh6.2.0)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://localhost:10000/> show tables;

INFO : Compiling command(queryId=hive_20190511114259_ac6763ca-f417-428f-80eb-d4fb115b273f): show tables

INFO : Semantic Analysis Completed

INFO : Returning Hive schema: Schema(fieldSchemas:[FieldSchema(name:tab_name, type:string, comment:from deserializer)], properties:null)

INFO : Completed compiling command(queryId=hive_20190511114259_ac6763ca-f417-428f-80eb-d4fb115b273f); Time taken: 2.735 seconds

INFO : Executing command(queryId=hive_20190511114259_ac6763ca-f417-428f-80eb-d4fb115b273f): show tables

INFO : Starting task [Stage-0:DDL] in serial mode

INFO : Completed executing command(queryId=hive_20190511114259_ac6763ca-f417-428f-80eb-d4fb115b273f); Time taken: 0.434 seconds

INFO : OK

+-----------+

| tab_name |

+-----------+

| test |

+-----------+

1 row selected (3.786 seconds)

0: jdbc:hive2://localhost:10000/> create table t1 (s1 string,s2 string);

INFO : Compiling command(queryId=hive_20190511114317_a05a8585-daf3-4692-9289-9eab0af336c4): create table t1 (s1 string,s2 string)

INFO : Semantic Analysis Completed

INFO : Returning Hive schema: Schema(fieldSchemas:null, properties:null)

INFO : Completed compiling command(queryId=hive_20190511114317_a05a8585-daf3-4692-9289-9eab0af336c4); Time taken: 0.078 seconds

INFO : Executing command(queryId=hive_20190511114317_a05a8585-daf3-4692-9289-9eab0af336c4): create table t1 (s1 string,s2 string)

INFO : Starting task [Stage-0:DDL] in serial mode

INFO : Completed executing command(queryId=hive_20190511114317_a05a8585-daf3-4692-9289-9eab0af336c4); Time taken: 0.157 seconds

INFO : OK

No rows affected (0.269 seconds)

0: jdbc:hive2://localhost:10000/>

Insert data into the t1 table

0: jdbc:hive2://localhost:10000/> insert into t1 values('1','2');

0: jdbc:hive2://localhost:10000/> select * from t1;

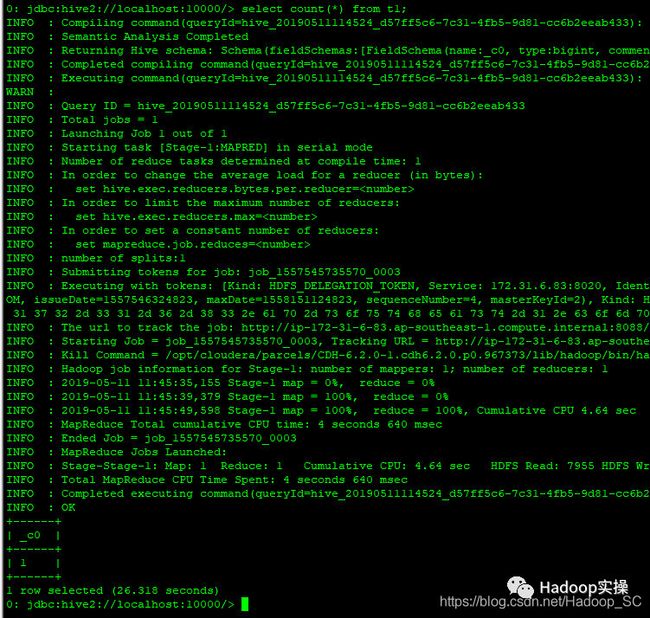

Run a count query

0: jdbc:hive2://localhost:10000/> select count(*) from t1;

5 Common Problems

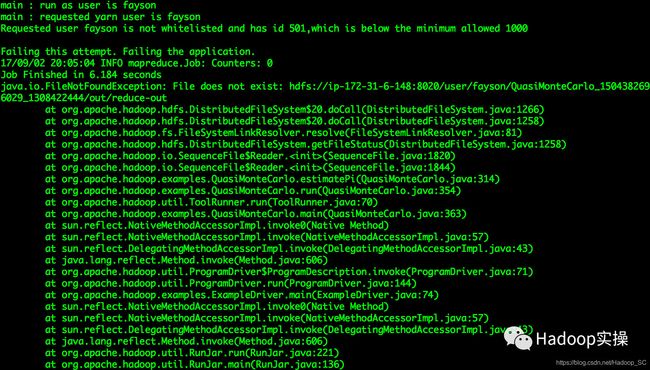

1. Running a MapReduce job as a Kerberos user fails with an error

main : run as user is fayson

main : requested yarn user is fayson

Requested user fayson is not whitelisted and has id 501,which is below the minimum allowed 1000

Failing this attempt. Failing the application.

17/09/02 20:05:04 INFO mapreduce.Job: Counters: 0

Job Finished in 6.184 seconds

java.io.FileNotFoundException: File does not exist: hdfs://ip-172-31-6-148:8020/user/fayson/QuasiMonteCarlo_1504382696029_1308422444/out/reduce-out

at org.apache.hadoop.hdfs.DistributedFileSystem$20.doCall(DistributedFileSystem.java:1266)

at org.apache.hadoop.hdfs.DistributedFileSystem$20.doCall(DistributedFileSystem.java:1258)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1258)

at org.apache.hadoop.io.SequenceFile$Reader.<init>(SequenceFile.java:1820)

at org.apache.hadoop.io.SequenceFile$Reader.<init>(SequenceFile.java:1844)

at org.apache.hadoop.examples.QuasiMonteCarlo.estimatePi(QuasiMonteCarlo.java:314)

at org.apache.hadoop.examples.QuasiMonteCarlo.run(QuasiMonteCarlo.java:354)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.hadoop.examples.QuasiMonteCarlo.main(QuasiMonteCarlo.java:363)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.util.ProgramDriver$ProgramDescription.invoke(ProgramDriver.java:71)

at org.apache.hadoop.util.ProgramDriver.run(ProgramDriver.java:144)

at org.apache.hadoop.examples.ExampleDriver.main(ExampleDriver.java:74)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.util.RunJar.run(RunJar.java:221)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

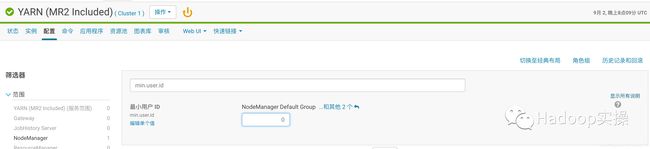

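Cause: YARN refuses jobs submitted by users whose user ID is below 1000.

Solution: change YARN's min.user.id setting so that the submitting user's ID is no longer below the minimum.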

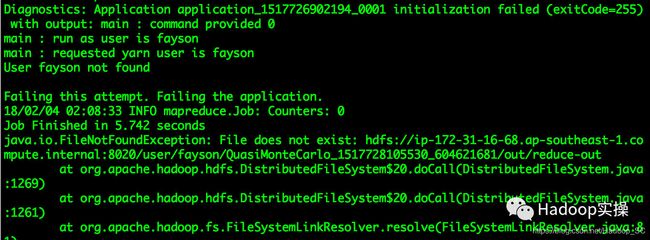
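Before submitting a job it can help to check a user's UID against the minimum. A minimal sketch, assuming the threshold of 1000 quoted in the error message above; the function name `uid_meets_minimum` is hypothetical and not part of YARN or the original article:

```shell
#!/bin/bash
# Hypothetical helper: succeed if a numeric UID is at or above the
# YARN minimum (min.user.id; defaults here to the 1000 seen in the error).
uid_meets_minimum() {
  local uid="$1" min="${2:-1000}"
  [ "$uid" -ge "$min" ]
}

# Example usage on a cluster node:
#   uid_meets_minimum "$(id -u fayson)" 1000 \
#     || echo "fayson's UID is below min.user.id" >&2
```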

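2. After kinit, running an MR job reports "User fayson not found"

This happens when the fayson operating-system user does not exist on every cluster node; create it on all nodes as described in section 4.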

6 Summary

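- Enabling Kerberos on CDH 6 is essentially the same as on CDH 5, apart from some minor changes to the CDH 6 UI.

- Before enabling Kerberos on a CDH cluster, the Kerberos services (krb5kdc and kadmin) must be installed.

- The Kerberos client must be installed on every node in the cluster so that the node can communicate with the KDC service.

- The openldap-clients package must additionally be installed on the Cloudera Manager Server node.

- After Kerberos is enabled on the CDH cluster, submitting jobs as a custom user such as fayson requires that user to exist in the operating system of every cluster node; otherwise the jobs fail.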