7.4 并行连接网路GoogLeNet

由来:吸收了NiN网络的串联网络思想,并在此基础上做了改进

解决的问题:什么样大小的卷积核最合适的问题。使用不同大小的卷积核组合是有利的。

GoogLeNet架构

GoogLeNet的Inception块的架构

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

import time

class Inception(nn.Module):

# c1--c4是每条路径的输出通道数 | **kwargs表示接收任意数量的参数,并以普通字典的方式存储

def __init__(self,in_channels,c1,c2,c3,c4,**kwargs):

super(Inception,self).__init__(**kwargs)

# 线路1,单1x1卷积层

self.p1_1 = nn.Conv2d(in_channels,c1,kernel_size=1)

# 线路2,1x1卷基层后接3x3卷积层

self.p2_1 = nn.Conv2d(in_channels,c2[0],kernel_size=1)

self.p2_2 = nn.Conv2d(c2[0],c2[1],kernel_size=3,padding=1)

# 线路3,1x1卷积层后接5x5卷积层

self.p3_1 = nn.Conv2d(in_channels,c3[0],kernel_size=1)

self.p3_2 = nn.Conv2d(c3[0],c3[1],kernel_size=5,padding=2)

# 线路4,3x3最大汇聚层后接1x1卷积层

self.p4_1 = nn.MaxPool2d(kernel_size=3,stride=1,padding=1)

self.p4_2 = nn.Conv2d(in_channels,c4,kernel_size=1)

def forward(self,x):

# 1x1卷积层后面都有relu

p1= F.relu(self.p1_1(x))

p2= F.relu(self.p2_2(F.relu(self.p2_1(x))))

p3= F.relu(self.p3_2(F.relu(self.p3_1(x))))

p4= F.relu(self.p4_2(self.p4_1(x)))

# 在通道维度上连结输出

return torch.cat((p1,p2,p3,p4),dim=1)

# 逐一实现每个模块

# 第一个模块: 一个64通道,7x7的卷积层 (卷积层包含relu和最大池化)

b1 = nn.Sequential(nn.Conv2d(1,64,kernel_size=7,stride=2,padding=3),

nn.ReLU(),

nn.MaxPool2d(kernel_size=3,stride=2,padding=1))

# 第二个模块:1x1的卷积层 和 3x3的卷积层

b2 = nn.Sequential(nn.Conv2d(64,64,kernel_size=1),

nn.ReLU(),

nn.Conv2d(64,192,kernel_size=3,padding=1),

nn.MaxPool2d(kernel_size=3,stride=2,padding=1))

'''第三个模块:由两个Inception堆积而成'''

b3 = nn.Sequential(Inception(in_channels=192,c1=64,c2=(96,128),c3=(16,32),c4=32),

# 第一个Inception的四条路径的输出通道汇合成输入通道数:192+64+128+32+32=256

Inception(in_channels=256,c1=128,c2=(128,192),c3=(32,96),c4=64),

nn.MaxPool2d(kernel_size=3,stride=2,padding=1))

b4 = nn.Sequential(Inception(480, 192, (96, 208), (16, 48), 64),

Inception(512, 160, (112, 224), (24, 64), 64),

Inception(512, 128, (128, 256), (24, 64), 64),

Inception(512, 112, (144, 288), (32, 64), 64),

Inception(528, 256, (160, 320), (32, 128), 128),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

b5 = nn.Sequential(Inception(832, 256, (160, 320), (32, 128), 128),

Inception(832, 384, (192, 384), (48, 128), 128),

nn.AdaptiveAvgPool2d((1,1)),

nn.Flatten())

net = nn.Sequential(b1, b2, b3, b4, b5, nn.Linear(1024, 10))

X = torch.rand(size=(1, 1, 96, 96))

for layer in net:

X = layer(X)

print(layer.__class__.__name__,'output shape:\t', X.shape)

输出结果:

Sequential output shape: torch.Size([1, 64, 24, 24])

Sequential output shape: torch.Size([1, 192, 12, 12])

Sequential output shape: torch.Size([1, 480, 6, 6])

Sequential output shape: torch.Size([1, 832, 3, 3])

Sequential output shape: torch.Size([1, 1024])

Linear output shape: torch.Size([1, 10])

d2l库中的训练函数最后得到的是平均准确率而不是最优的准确率,自己实现一个

def train_ch6(net, train_iter, test_iter, num_epochs, lr, device):

"""Train a model with a GPU (defined in Chapter 6).

Defined in :numref:`sec_lenet`"""

def init_weights(m):

if type(m) == nn.Linear or type(m) == nn.Conv2d:

nn.init.xavier_uniform_(m.weight)

net.apply(init_weights)

print('training on', device)

net.to(device)

optimizer = torch.optim.SGD(net.parameters(), lr=lr)

loss = nn.CrossEntropyLoss()

animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],

legend=['train loss', 'train acc', 'test acc'])

timer, num_batches = d2l.Timer(), len(train_iter)

best_test_acc = 0

for epoch in range(num_epochs):

# Sum of training loss, sum of training accuracy, no. of examples

metric = d2l.Accumulator(3)

net.train()

for i, (X, y) in enumerate(train_iter):

timer.start()

optimizer.zero_grad()

X, y = X.to(device), y.to(device)

y_hat = net(X)

l = loss(y_hat, y)

l.backward()

optimizer.step()

with torch.no_grad():

metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])

timer.stop()

train_l = metric[0] / metric[2]

train_acc = metric[1] / metric[2]

if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:

animator.add(epoch + (i + 1) / num_batches,

(train_l, train_acc, None))

test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)

if test_acc>best_test_acc:

best_test_acc = test_acc

animator.add(epoch + 1, (None, None, test_acc))

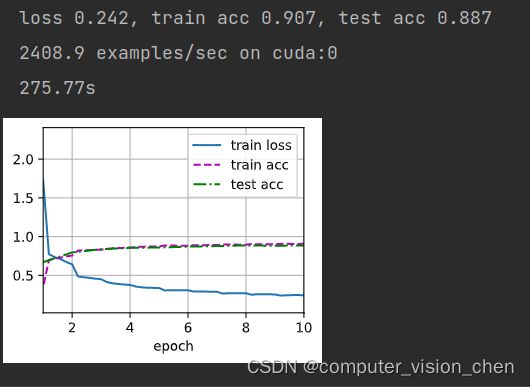

print(f'loss {train_l:.3f}, train acc {train_acc:.3f}, '

f'test acc {test_acc:.3f}, best test acc {best_test_acc:.3f}')

# 取的好像是平均准备率

print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec '

f'on {str(device)}')

'''开始计时'''

start_time = time.time()

# 设置参数,训练模型

lr, num_epochs, batch_size = 0.05, 20, 128

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)

# d2l自带的训练函数,最后得到的是test_iter是平均的准确率

# d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

# 用自己的训练函数

train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

'''计时结束'''

end_time = time.time()

run_time = end_time - start_time

# 将输出的秒数保留两位小数

print(f'{round(run_time,2)}s')

思考

为什么1x1卷积层后面必有relu函数?

1x1卷积相当于全连接,要激活的。

AlexNet、VGG和NiN的模型参数大小与GoogLeNet相比,后两个网络架构是如何显著减少模型参数大小的?

AlexNet和VGG都有三个全连接层,而NiN用1x1卷积层替换掉了全连接层,GoogLeNet只有一层全连接层。所以显著的减少了模型参数大小。