YOLOv5 and YOLOv8 Improvements: Adding DCNv2

1. Overview

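Deep & Cross Network (DCN) [1] was proposed by Google in 2017 for click-through-rate (CTR) prediction, as a further improvement on the Wide & Deep [2] model. Linear models cannot learn feature crosses and need a lot of manual feature engineering, whereas deep networks are naturally suited to learning them. In Wide & Deep, the Deep side is already a DNN, but the Wide side is a linear LR model that cannot learn cross features effectively. DCN replaces the Wide side with a Cross network, which learns more cross features and improves the expressive power of the whole model. Note that this recommendation model only shares the abbreviation DCN with Deformable Convolutional Networks; the DCNv1 and DCNv2 discussed in the rest of this post are the deformable-convolution ones.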

2. Algorithm principle

2.1. DCN network structure

The network structure of the DCN model is shown in the figure below:

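DCNv1 targets the geometric transformations of objects that conventional image augmentation and affine transforms (a linear transform plus a translation) cannot handle.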

DCN v2

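For positive samples, the sampled features should focus inside the RoI; if they include too much content from outside the RoI, the result is disturbed. Negative samples are the opposite: bringing in some features from beyond the RoI helps the network decide that the region is background.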

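DCNv1 introduced deformable convolution, which adapts better to geometric transformations of the target. However, visualizations of v1 show that its sampling locations spread beyond the target region, so the features end up influenced by irrelevant image content (ideally, all sampling locations would fall inside the target).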

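To solve this problem, v2 was proposed. Its main changes are:

1. Extending deformable convolution to strengthen its modeling capacity

2. Proposing a feature mimicking scheme to guide network training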

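What does this mean in plain terms? The region sampled by the deformable convolution is larger than the region the target occupies, so non-target areas get picked up and recognized incorrectly.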

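The natural fix is to add a weight term that penalizes those samples. (It is simple to implement: predict one extra weight per sampling point and multiply it with the feature value sampled at the input position plus offset.)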

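$$y(p) = \sum_{k=1}^{K} w_k \cdot x(p + p_k + \Delta p_k) \cdot \Delta m_k$$

Here $p_k$ is the fixed position of the $k$-th kernel tap, $\Delta p_k$ is its learned offset, and $\Delta m_k \in [0, 1]$ is its learned weight (the modulation scalar).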
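To make the weight term concrete, here is a minimal pure-Python sketch of a single-channel 3x3 modulated deformable convolution evaluated at one output location. The function names (`bilinear`, `modulated_deform_point`) and the toy 3x3 input are assumptions made up for illustration; real implementations run this over every location, channel and group on the GPU.

```python
import math


def bilinear(img, y, x):
    """Sample a 2-D list at fractional (y, x); positions outside the image read as 0."""
    h, w = len(img), len(img[0])
    y0, x0 = math.floor(y), math.floor(x)
    val = 0.0
    for yy in (y0, y0 + 1):
        for xx in (x0, x0 + 1):
            if 0 <= yy < h and 0 <= xx < w:
                # Bilinear weight falls off linearly with distance to the grid point
                val += (1 - abs(y - yy)) * (1 - abs(x - xx)) * img[yy][xx]
    return val


def modulated_deform_point(img, weight, offsets, masks, py, px):
    """y(p) = sum_k w_k * x(p + p_k + dp_k) * dm_k for one output location (py, px).

    weight:  3x3 kernel
    offsets: 9 learned (dy, dx) pairs, one per kernel tap
    masks:   9 modulation scalars in [0, 1], one per kernel tap
    """
    out, k = 0.0, 0
    for ky in (-1, 0, 1):
        for kx in (-1, 0, 1):
            dy, dx = offsets[k]
            sample = bilinear(img, py + ky + dy, px + kx + dx)
            out += weight[ky + 1][kx + 1] * sample * masks[k]
            k += 1
    return out


img = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
w = [[1.0] * 3 for _ in range(3)]
no_offset = [(0.0, 0.0)] * 9

plain = modulated_deform_point(img, w, no_offset, [1.0] * 9, 1, 1)   # ordinary conv: 45.0
halved = modulated_deform_point(img, w, no_offset, [0.5] * 9, 1, 1)  # every tap down-weighted: 22.5
shifted = no_offset[:4] + [(0.0, 0.5)] + no_offset[5:]               # move only the centre tap
moved = modulated_deform_point(img, w, shifted, [1.0] * 9, 1, 1)     # 45.5
print(plain, halved, moved)
```

With zero offsets and all masks equal to 1 this reduces to an ordinary convolution; lowering a tap's mask toward 0 is how the network can ignore a sampling point that has drifted outside the target.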

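Modulated RoI pooling works the same way. For the $k$-th bin, which contains $n_k$ sampled pixels $p_{kj}$:

$$y(k) = \sum_{j=1}^{n_k} x(p_{kj} + \Delta p_k) \cdot \Delta m_k / n_k$$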

The code follows.


Add the following code to common.py:

    import math

    import torch
    import torch.nn as nn
    import torchvision  # noqa: F401  importing it registers torch.ops.torchvision.deform_conv2d


    class DCNv2(nn.Module):
        # Modulated deformable convolution (DCNv2) followed by BatchNorm and activation
        def __init__(self, in_channels, out_channels, kernel_size, stride=1,
                     padding=1, dilation=1, groups=1, deformable_groups=1):
            super().__init__()
            self.in_channels = in_channels
            self.out_channels = out_channels
            self.kernel_size = (kernel_size, kernel_size)
            self.stride = (stride, stride)
            self.padding = (padding, padding)
            self.dilation = (dilation, dilation)
            self.groups = groups
            self.deformable_groups = deformable_groups

            # Grouped conv weight: each group sees in_channels // groups input channels
            self.weight = nn.Parameter(
                torch.empty(out_channels, in_channels // groups, *self.kernel_size)
            )
            self.bias = nn.Parameter(torch.empty(out_channels))

            # Per deformable group and kernel tap: 2 offset channels + 1 mask channel
            out_channels_offset_mask = (self.deformable_groups * 3 *
                                        self.kernel_size[0] * self.kernel_size[1])
            # Same geometry as the main conv so offsets/masks match the output size
            self.conv_offset_mask = nn.Conv2d(
                self.in_channels,
                out_channels_offset_mask,
                kernel_size=self.kernel_size,
                stride=self.stride,
                padding=self.padding,
                dilation=self.dilation,
                bias=True,
            )
            self.bn = nn.BatchNorm2d(out_channels)
            # Conv is defined earlier in common.py; needs a version where Conv has default_act
            self.act = Conv.default_act
            self.reset_parameters()

        def forward(self, x):
            offset_mask = self.conv_offset_mask(x)
            o1, o2, mask = torch.chunk(offset_mask, 3, dim=1)
            offset = torch.cat((o1, o2), dim=1)
            mask = torch.sigmoid(mask)  # modulation scalars in (0, 1)
            x = torch.ops.torchvision.deform_conv2d(
                x,
                self.weight,
                offset,
                mask,
                self.bias,
                self.stride[0], self.stride[1],
                self.padding[0], self.padding[1],
                self.dilation[0], self.dilation[1],
                self.groups,
                self.deformable_groups,
                True,  # use_mask
            )
            x = self.bn(x)
            x = self.act(x)
            return x

        def reset_parameters(self):
            # Uniform init for the conv weight, scaled by fan-in
            n = self.in_channels
            for k in self.kernel_size:
                n *= k
            std = 1. / math.sqrt(n)
            self.weight.data.uniform_(-std, std)
            self.bias.data.zero_()
            # Zero init: training starts with zero offsets and every mask at sigmoid(0) = 0.5
            self.conv_offset_mask.weight.data.zero_()
            self.conv_offset_mask.bias.data.zero_()

    class Bottleneck_DCN(nn.Module):
        # Standard bottleneck with the 3x3 conv replaced by DCNv2
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = DCNv2(c_, c2, 3, 1, groups=g)
            self.add = shortcut and c1 == c2

        def forward(self, x):
            return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

    class C3_DCN(C3):
        # C3 module with DCNv2
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
            super().__init__(c1, c2, n, shortcut, g, e)
            c_ = int(c2 * e)  # hidden channels
            self.m = nn.Sequential(*(Bottleneck_DCN(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

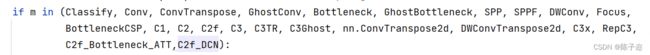

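Register the module

It looks like the screenshot above. The change is the same one you make when adding an attention module, so it is simple.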
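Why registration matters can be sketched as follows. In YOLOv5, `parse_model` in models/yolo.py only prepends the input channel count, scales the output channels by `width_multiple`, and passes the repeat count into the module for classes listed in its module sets, so `C3_DCN` has to be added next to `C3` there. The code below is a simplified stand-in written for this post, not the real function: `resolve_layer`, `CHANNEL_SCALED` and `REPEAT_INSIDE` are hypothetical names, strings replace the module classes, and the special case for the detection output channels is left out.

```python
import math

# Hypothetical stand-ins for the module sets in parse_model (which hold classes, not strings)
CHANNEL_SCALED = {"Conv", "C3", "C3_DCN", "SPPF"}  # modules called as m(c1, c2, ...)
REPEAT_INSIDE = {"C3", "C3_DCN"}                   # modules that take the repeat count n as an argument


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def resolve_layer(module, c1, n, args, depth_multiple, width_multiple):
    """Turn one yaml row into (outer repeat count, constructor args)."""
    n = max(round(n * depth_multiple), 1) if n > 1 else n  # depth scaling
    if module in CHANNEL_SCALED:
        c2 = make_divisible(args[0] * width_multiple, 8)   # width scaling
        args = [c1, c2, *args[1:]]
        if module in REPEAT_INSIDE:
            args.insert(2, n)  # the module builds its n bottlenecks itself
            n = 1
    return n, args


# Row "[-1, 3, C3_DCN, [128]]" with the multiples from the yaml in this post
registered = resolve_layer("C3_DCN", c1=32, n=3, args=[128],
                           depth_multiple=0.33, width_multiple=0.25)
# The same row if the name had not been added to the sets
unregistered = resolve_layer("C3_DCN_typo", c1=32, n=3, args=[128],
                             depth_multiple=0.33, width_multiple=0.25)
print(registered)    # (1, [32, 32, 1])
print(unregistered)  # (1, [128])
```

In the unregistered case the module would be constructed with only `128` as its argument, which does not match the `C3_DCN(c1, c2, n, ...)` signature. That mismatch is what the registration step avoids.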

Then use C3_DCN in the model yaml, for example:

    # YOLOv5 by Ultralytics, GPL-3.0 license

    # Parameters
    nc: 6  # number of classes
    depth_multiple: 0.33  # model depth multiple
    width_multiple: 0.25  # layer channel multiple
    anchors:
      - [10,13, 16,30, 33,23]  # P3/8
      - [30,61, 62,45, 59,119]  # P4/16
      - [116,90, 156,198, 373,326]  # P5/32

    # YOLOv5 v6.0 backbone
    backbone:
      # [from, number, module, args]
      [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
       [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
       [-1, 3, C3_DCN, [128]],
       [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
       [-1, 6, C3_DCN, [256]],
       [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
       [-1, 9, C3_DCN, [512]],
       [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
       [-1, 3, C3_DCN, [1024]],
       [-1, 1, SPPF, [1024, 5]],  # 9
      ]

    # YOLOv5 v6.0 head
    head:
      [[-1, 1, Conv, [512, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 6], 1, Concat, [1]],  # cat backbone P4
       [-1, 3, C3, [512, False]],  # 13

       [-1, 1, Conv, [256, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 4], 1, Concat, [1]],  # cat backbone P3
       [-1, 3, C3, [256, False]],  # 17 (P3/8-small)

       [-1, 1, Conv, [256, 3, 2]],
       [[-1, 14], 1, Concat, [1]],  # cat head P4
       [-1, 3, C3, [512, False]],  # 20 (P4/16-medium)

       [-1, 1, Conv, [512, 3, 2]],
       [[-1, 10], 1, Concat, [1]],  # cat head P5
       [-1, 3, C3, [1024, False]],  # 23 (P5/32-large)

       [[17, 20, 23], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
      ]

The same approach applies to YOLOv8.