Edge Detection of Moving Objects

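1. Development tools

Python version: Python 3.8 from the Anaconda distribution

IDE: PyCharm Community Edition

Recognition model: a basic, conventional model

Modules: those bundled with Anaconda, plus opencv-python==3.4.8.29

2. Environment setup

Install Anaconda and add its path to the environment variables, install PyCharm and add its path to the environment variables as well, then use pip to install the required modules.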

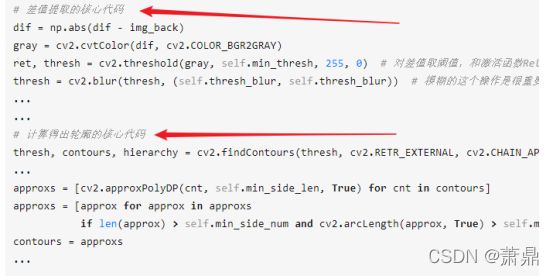
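Once the modules are installed, a quick check can confirm that the pinned OpenCV release is the one Python actually sees. The snippet below is a minimal sketch, not part of the original program: the helper names `installed_version` and `major` are made up for illustration, and it assumes OpenCV was installed with pip under the distribution name `opencv-python` (a conda-installed OpenCV may be registered under a different name and would be reported as missing).

```python
from importlib import metadata  # available in the standard library since Python 3.8

REQUIRED = "3.4.8.29"  # the version pinned in the tool list above


def installed_version(package):
    """Return the installed version string of `package`, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def major(version):
    """Return the major version number of a dotted version string."""
    return int(version.split(".")[0])


found = installed_version("opencv-python")
if found is None:
    print("opencv-python is not installed (or was installed under another name)")
elif major(found) >= 4:
    print("found %s, but this tutorial expects a 3.x release such as %s" % (found, REQUIRED))
else:
    print("opencv-python %s looks compatible" % found)
```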

3. How it works

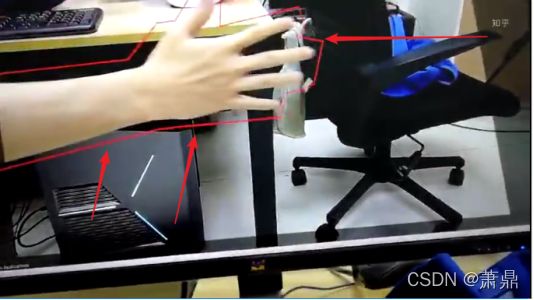

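The program takes real-time video from a fixed camera and outlines the contours of moving objects (so-called FrogEyes moving-object detection). First, you mark the region of the camera view that you want to monitor. Whenever an object moves inside that region, the program detects the edges of the moving object and draws an outline around them, which gives edge detection and recognition of moving objects.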
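The core idea, comparing each frame against a background image and keeping the pixels that changed, can be sketched with NumPy alone. This is a simplified illustration under stated assumptions, not the program's actual pipeline: the function names `motion_mask` and `bounding_box` are hypothetical, a plain channel mean stands in for `cv2.cvtColor`, and a bounding box stands in for the polygon outline that the full source code draws. The threshold of 56 is the `min_thresh` value used in the source.

```python
import numpy as np


def motion_mask(frame, background, min_thresh=56):
    """Return a boolean mask of pixels that differ from the background by more than min_thresh."""
    # Widen to int16 so the subtraction cannot wrap around as it would in uint8
    diff = np.abs(frame.astype(np.int16) - background.astype(np.int16))
    # Collapse the colour channels; a plain mean stands in for a grayscale conversion
    gray = diff.mean(axis=2)
    return gray > min_thresh


def bounding_box(mask):
    """Return (x_min, y_min, x_max, y_max) of the True pixels, or None if there are none."""
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())


# Synthetic data: a black background and a frame in which a bright block appears
background = np.zeros((120, 160, 3), dtype=np.uint8)
frame = background.copy()
frame[40:60, 70:100] = 200

print(bounding_box(motion_mask(frame, background)))       # (70, 40, 99, 59)
print(bounding_box(motion_mask(background, background)))  # None
```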

4. Program flow

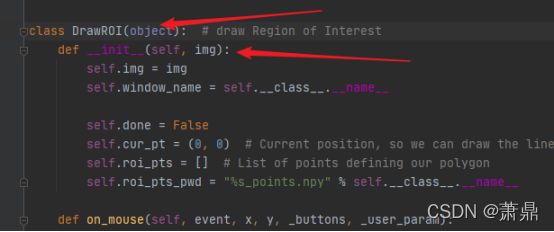

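(1) First, create a class that lets you mark the region you want to monitor, so that moving-object edge detection can be limited to a selected part of the frame.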

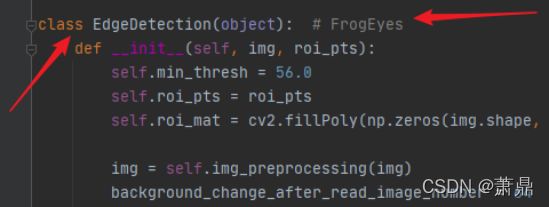
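What the marked region does to each frame can be shown without OpenCV. The sketch below builds a 0/1 mask from polygon vertices and multiplies it into an image, which is the same effect the source code gets from `cv2.fillPoly` followed by `img *= self.roi_mat`. The function `polygon_mask` is a hypothetical stand-in written for this illustration (an even-odd ray-casting test on pixel centres); it is not how OpenCV rasterises polygons, so pixels right on the border may differ.

```python
import numpy as np


def polygon_mask(height, width, pts):
    """Return a uint8 mask that is 1 inside the polygon `pts` and 0 outside (even-odd rule)."""
    ys, xs = np.mgrid[0:height, 0:width]
    px, py = xs + 0.5, ys + 0.5  # test pixel centres
    inside = np.zeros((height, width), dtype=bool)
    n = len(pts)
    for i in range(n):
        x1, y1 = pts[i]
        x2, y2 = pts[(i + 1) % n]
        if y1 == y2:
            continue  # a horizontal edge never crosses a horizontal ray
        # Rows whose ray (going right from the pixel centre) can cross this edge
        crosses = (y1 > py) != (y2 > py)
        # x coordinate where the edge meets each row
        x_at_y = x1 + (py - y1) * (x2 - x1) / (y2 - y1)
        inside ^= crosses & (px < x_at_y)
    return inside.astype(np.uint8)


mask = polygon_mask(8, 8, [(2, 2), (6, 2), (6, 6), (2, 6)])
frame = np.full((8, 8, 3), 255, dtype=np.uint8)
masked = frame * mask[:, :, None]  # pixels outside the region become 0

print(mask.sum())          # 16
print(masked[3, 3], masked[0, 0])
```

Because everything outside the region is zeroed in both the current frame and the background, movement outside the marked area produces no difference and is ignored.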

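(2) Next, create a FrogEyes class. It detects the edges of moving objects in the video and outlines them, which implements the moving-object edge detection.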
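One detail of this class that is easy to miss in the source is how the background image gets refreshed: the current frame becomes the new background either when nothing differs from the background, or when the frame no longer differs from the frame seen a fixed number of frames earlier (64 in the source). The sketch below isolates that rule using plain values instead of images. `BackgroundTracker` and its `differs` parameter are hypothetical names for this illustration; in the real code the comparison is done by `get_polygon_contours`.

```python
from collections import deque


class BackgroundTracker:
    """Keeps a background and a short frame history, mirroring the update rule in the source."""

    def __init__(self, first_frame, history=64, differs=lambda a, b: a != b):
        self.background = first_frame
        self.history = deque([first_frame] * history, maxlen=history)
        self.differs = differs  # stand-in for "contours were found between two frames"

    def update(self, frame):
        moving = self.differs(frame, self.background)
        oldest = self.history[0]
        self.history.append(frame)  # maxlen silently drops the oldest entry
        # Refresh when the scene matches the background, or has been static for `history` frames
        if not moving or not self.differs(frame, oldest):
            self.background = frame
        return moving


tracker = BackgroundTracker(0, history=3)
results = [tracker.update(f) for f in [0, 5, 5, 5, 5, 5]]
print(results)             # [False, True, True, True, True, False]
print(tracker.background)  # 5
```

In the demo, the value 5 is reported as "moving" until it has stayed unchanged for the whole history window, after which it is absorbed into the background. This is why an object that enters the scene and then stops is eventually no longer outlined.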

(3) Define two functions that read the video and display it on the computer.
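The two functions form a producer/consumer pair connected by a small bounded queue: one side reads frames, the other processes and displays them. The sketch below shows that pattern in isolation. It deliberately swaps in `threading` and `queue.Queue` for the `multiprocessing` primitives used in the source so that it runs anywhere, uses integers in place of frames, and adds a `STOP` sentinel so the consumer can finish, which the original code does not have (it loops forever).

```python
import queue
import threading

STOP = None  # sentinel meaning "no more frames" (an addition for this sketch)


def producer(q, frames):
    for frame in frames:
        q.put(frame)  # blocks while the queue is full, so the reader cannot run far ahead
    q.put(STOP)


def consumer(q, handled):
    while True:
        frame = q.get()  # blocks until a frame is available
        if frame is STOP:
            break
        handled.append(frame)  # stand-in for detection plus cv2.imshow


q = queue.Queue(maxsize=2)
handled = []
workers = [threading.Thread(target=producer, args=(q, range(5))),
           threading.Thread(target=consumer, args=(q, handled))]
for worker in workers:
    worker.start()
for worker in workers:
    worker.join()

print(handled)  # [0, 1, 2, 3, 4]
```

Keeping `maxsize` small bounds memory use and keeps the displayed frame close to the most recently read one.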

(4) Source code

import multiprocessing as mp
import os

import cv2
import numpy as np

class DrawROI(object):  # draw Region of Interest
    def __init__(self, img):
        self.img = img
        self.window_name = self.__class__.__name__
        self.done = False
        self.cur_pt = (0, 0)  # Current position, so we can draw the line-in-progress
        self.roi_pts = []  # List of points defining our polygon
        self.roi_pts_pwd = "%s_points.npy" % self.__class__.__name__  # file that caches the polygon

    def on_mouse(self, event, x, y, _buttons, _user_param):
        if self.done:  # Nothing more to do
            return
        if event == cv2.EVENT_MOUSEMOVE:
            # We want to be able to draw the line-in-progress, so update current mouse position
            self.cur_pt = (x, y)
        elif event == cv2.EVENT_LBUTTONDOWN:
            # Left click means adding a point at current position to the list of points
            print("Adding point #%d with position(%d,%d)" % (len(self.roi_pts), x, y))
            self.roi_pts.append((x, y))
        elif event == cv2.EVENT_RBUTTONDOWN:
            # Right click means we're done
            print("Completing polygon with %d points." % len(self.roi_pts))
            self.done = True

    def confirm(self, img):
        # Dim everything outside the ROI to 50% and wait for Enter (accept) or Backspace (redraw).
        # np.float was removed from recent NumPy releases, so np.float64 is used instead.
        high_light_roi_mat = np.ones(img.shape, dtype=np.float64) * 0.5
        high_light_roi_mat = cv2.fillPoly(high_light_roi_mat, self.roi_pts, (1.0, 1.0, 1.0))  # highlight the roi
        canvas = np.array(img * high_light_roi_mat, dtype=np.uint8)
        cv2.imshow(self.window_name, canvas)
        while os.path.exists(self.roi_pts_pwd):
            wait_key = cv2.waitKey(50)
            if wait_key == 13:  # ord('\r'), Enter
                self.done = True
                break
            elif wait_key == 8:  # ord('\b'), Backspace
                os.remove(self.roi_pts_pwd)  # delete the *.npy and redraw
                self.roi_pts = []  # initialize the pts
                self.done = False

    def draw_roi(self, line_color=(234, 234, 234)):
        img = self.img
        cv2.namedWindow(self.window_name, flags=cv2.WINDOW_FREERATIO)
        cv2.imshow(self.window_name, img)
        cv2.waitKey(1)
        cv2.setMouseCallback(self.window_name, self.on_mouse)
        canvas = np.copy(img)
        canvas_cur = canvas
        pre_pts_len = len(self.roi_pts)  # previous points of polygon number
        if os.path.exists(self.roi_pts_pwd):
            # Reuse the polygon saved by an earlier run; cv2.fillPoly needs int32 points
            self.roi_pts = np.load(self.roi_pts_pwd).astype(np.int32)
            self.confirm(img)
        while not self.done:
            if len(self.roi_pts) != pre_pts_len:
                # A point was added: commit the line-in-progress to the canvas
                pre_pts_len = len(self.roi_pts)
                cv2.line(canvas_cur, self.roi_pts[-1], self.cur_pt, line_color, 2)
                canvas = canvas_cur
            else:
                canvas_cur = np.copy(canvas)
                if len(self.roi_pts) > 0:
                    cv2.line(canvas_cur, self.roi_pts[-1], self.cur_pt, line_color, 2)
            cv2.imshow(self.window_name, canvas_cur)  # Update the window
            if cv2.waitKey(50) == 13:  # ENTER hit, ord('\r') == 13
                # Wrap the points in an outer array (one polygon) and force int32 for cv2.fillPoly
                self.roi_pts = np.array([self.roi_pts], dtype=np.int32)
                print(self.roi_pts_pwd)
                np.save(self.roi_pts_pwd, self.roi_pts)
                self.confirm(img)
                if not self.done:  # Backspace was pressed in confirm(): restart on a clean canvas
                    canvas = np.copy(img)
                    canvas_cur = canvas
                    pre_pts_len = 0
        cv2.destroyWindow(self.window_name)
        return self.roi_pts

class EdgeDetection(object):  # FrogEyes
    def __init__(self, img, roi_pts):
        self.min_thresh = 56.0
        self.roi_pts = roi_pts
        self.roi_mat = cv2.fillPoly(np.zeros(img.shape, dtype=np.uint8), self.roi_pts, (1, 1, 1))
        img = self.img_preprocessing(img)
        # Number of frames kept in the history; at 0.04 s per frame, 0.04 * 64 = 2.56 s
        background_change_after_read_image_number = 64
        self.img_list = [img for _ in range(background_change_after_read_image_number)]
        # np.float was removed from recent NumPy releases, so np.float64 is used instead
        self.high_light_roi_mat = cv2.fillPoly(np.ones(self.roi_mat.shape, dtype=np.float64) * 0.25,
                                               self.roi_pts, (1.0, 1.0, 1.0))  # highlight the roi
        self.img_len0 = int(360)
        self.img_len1 = int(self.img_len0 / (img.shape[0] / img.shape[1]))
        self.img_back = self.img_preprocessing(img)  # background
        self.min_side_num = 3
        self.min_side_len = int(self.img_len0 / 24)  # min side len of polygon
        self.min_poly_len = int(self.img_len0 / 12)
        self.thresh_blur = int(self.img_len0 / 8)

    def img_preprocessing(self, img):
        img = np.copy(img)
        img *= self.roi_mat  # zero out everything outside the ROI
        # img = cv2.resize(img, (self.img_len1, self.img_len0))
        # img = cv2.bilateralFilter(img, d=3, sigmaColor=16, sigmaSpace=32)
        return img

    def get_polygon_contours(self, img, img_back):
        # img = np.copy(img)
        dif = np.array(img, dtype=np.int16)  # int16 so the subtraction cannot wrap around
        dif = np.abs(dif - img_back)
        dif = np.array(dif, dtype=np.uint8)  # absolute difference between the two images
        gray = cv2.cvtColor(dif, cv2.COLOR_BGR2GRAY)
        _, thresh = cv2.threshold(gray, self.min_thresh, 255, cv2.THRESH_BINARY)
        thresh = cv2.blur(thresh, (self.thresh_blur, self.thresh_blur))
        if np.max(thresh) == 0:  # no difference at all
            contours = []
        else:
            # OpenCV 3.x returns (image, contours, hierarchy); 4.x returns only (contours, hierarchy)
            thresh, contours, hierarchy = cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
            # hulls = [cv2.convexHull(cnt) for cnt, hie in zip(contours, hierarchy[0]) if hie[2] == -1]
            # hulls = [hull for hull in hulls if cv2.arcLength(hull, True) > self.min_hull_len]
            # contours = hulls
            approxs = [cv2.approxPolyDP(cnt, self.min_side_len, True) for cnt in contours]
            # Keep only polygons with enough vertices and a long enough perimeter
            approxs = [approx for approx in approxs
                       if len(approx) > self.min_side_num and cv2.arcLength(approx, True) > self.min_poly_len]
            contours = approxs
        return contours

    def main_get_img_show(self, origin_img):
        img = self.img_preprocessing(origin_img)
        contours = self.get_polygon_contours(img, self.img_back)
        self.img_list.append(img)
        img_prev = self.img_list.pop(0)
        # Refresh the background when nothing differs from it, or when the scene
        # has not changed compared with the oldest frame in the history
        if not contours or not self.get_polygon_contours(img, img_prev):
            self.img_back = img
        show_img = np.array(self.high_light_roi_mat * origin_img, dtype=np.uint8)
        if contours:
            show_img = cv2.polylines(show_img, contours, True, (0, 0, 255), 2)
        return show_img

def queue_img_put(q, name, pwd, ip, channel=1):
    # name, pwd, ip and channel are not used here: this version reads the local file "1.mp4"
    cap = cv2.VideoCapture("1.mp4")
    while True:
        is_opened, frame = cap.read()
        if is_opened:  # only queue frames that were read successfully
            q.put(frame)
        # q.get() if q.qsize() > 1 else None


def queue_img_get(q, window_name):
    frame = q.get()
    # Let the user draw (or confirm) the region of interest on the first frame
    region_of_interest_pts = DrawROI(frame).draw_roi()
    cv2.namedWindow(window_name, flags=cv2.WINDOW_FREERATIO)
    frog_eye = EdgeDetection(frame, region_of_interest_pts)
    while True:
        frame = q.get()
        img_show = frog_eye.main_get_img_show(frame)
        cv2.imshow(window_name, img_show)
        cv2.waitKey(1)

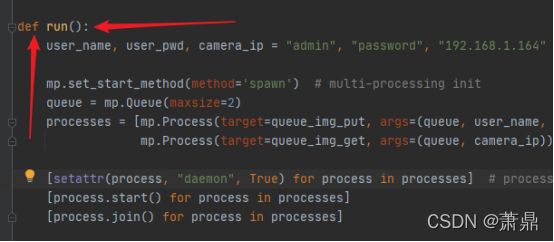

def run():
    user_name, user_pwd, camera_ip = "admin", "password", "192.168.1.164"
    # mp.set_start_method(method='spawn')  # multi-processing init
    queue = mp.Queue(maxsize=2)
    processes = [mp.Process(target=queue_img_put, args=(queue, user_name, user_pwd, camera_ip)),
                 mp.Process(target=queue_img_get, args=(queue, camera_ip))]
    for process in processes:
        process.daemon = True
    for process in processes:
        process.start()
    for process in processes:
        process.join()


if __name__ == '__main__':
    run()

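(5) Finally, define a run() function that starts the reading and display processes, so that the video being read goes through moving-object edge detection correctly.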

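5. Notes

The opencv-python version must be below 4.0; otherwise the program raises an error. In 3.x, cv2.findContours returns three values, while 4.x returns only two, so the three-value unpacking in get_polygon_contours fails on 4.0 and later.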
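If staying on a 3.x release is not possible, one common workaround is to read the contour list by position from the end of the returned tuple, since it is the second-to-last element in both layouts. The snippet below is only a sketch of that idea: `contours_from` is a hypothetical helper, the return values are simulated so that it runs without OpenCV, and the rest of the program has not been verified here against OpenCV 4.x.

```python
def contours_from(result):
    """Pick the contour list out of a cv2.findContours return value.

    OpenCV 3.x returns (image, contours, hierarchy); 4.x returns (contours, hierarchy).
    In both layouts the contours are the second-to-last element.
    """
    return result[-2]


# Simulated return values, so the snippet runs without OpenCV installed
fake_3x = ("image", ["contour_a"], "hierarchy")
fake_4x = (["contour_a"], "hierarchy")

print(contours_from(fake_3x) == contours_from(fake_4x))  # True
```

In the program, this would be used as `contours = contours_from(cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE))` in place of the three-value unpacking.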