Hung-yi Lee Machine Learning HW4: a self-attention model that identifies 600 speakers

I. Working through the PyTorch modules used here: what their parameters mean, how to call them, and what they do:

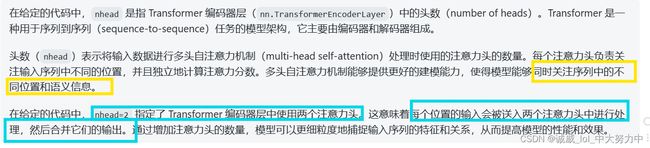

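1. The nhead parameter of the encoder layer:

self.encoder_layer = nn.TransformerEncoderLayer(d_model=d_model, dim_feedforward=256, nhead=2)

nhead is the number of attention heads in the multi-head self-attention; it is not a window size. Every output vector b is still computed from all input vectors a in the sequence. With nhead=2, the d_model features are split into 2 heads of d_model/2 dimensions each, every head computes its own attention weights, and the head outputs are concatenated. d_model must therefore be divisible by nhead.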
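To make the point about heads concrete, here is a minimal sketch using nn.MultiheadAttention, the attention module that nn.TransformerEncoderLayer builds internally. The tensor sizes (length 5, batch 3) are arbitrary values chosen for illustration, and the sketch assumes the default dropout of 0.

```python
import torch
import torch.nn as nn

d_model, nhead = 80, 2
attn = nn.MultiheadAttention(embed_dim=d_model, num_heads=nhead)

# Illustrative input: (length, batch, d_model), the default layout.
x = torch.randn(5, 3, d_model)
out, weights = attn(x, x, x)

print(out.shape)       # same shape as the input
print(weights.shape)   # (batch, length, length): every b looks at every a
print(attn.head_dim)   # d_model // nhead features per head

# d_model must be divisible by the number of heads.
try:
    nn.MultiheadAttention(embed_dim=d_model, num_heads=3)
    divisible_check_failed = False
except (AssertionError, ValueError):
    divisible_check_failed = True
print(divisible_check_failed)
```

The attention weights returned here are averaged over the heads; each row sums to 1 across all 5 input positions, which is what "one b is computed from all a" means in practice.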

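2. Using tensor.permute to reorder dimensions:

# Try out permute; it can be called directly on a tensor.
import torch
import numpy as np

x = np.array([[[1, 1, 1], [2, 2, 2]], [[3, 3, 3], [4, 4, 4]]])
y = torch.tensor(x)
z = y.permute(2, 1, 0)  # reverse the order of the three dimensions
print(y.shape)  # torch.Size([2, 2, 3]): the original shape, outermost dimension first
print(z.shape)  # torch.Size([3, 2, 2]) after permute
print(z)
print(y)

3. tensor.mean: averaging along one dimension, so each vector along that dimension becomes a single number: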
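A small sketch of what averaging along one dimension does to the shape. The tensor values are made up for illustration; the layout (batch, length, d_model) matches the one used by the model later in this post.

```python
import torch

# Illustrative tensor: batch=1, length=3, d_model=2.
x = torch.tensor([[[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]])

pooled = x.mean(dim=1)   # average over the length dimension
overall = x.mean()       # no dim given: average over every element

print(pooled.shape)  # torch.Size([1, 2])
print(pooled)        # tensor([[3., 4.]])
print(overall)       # tensor(3.5000)
```

With dim=1 the length dimension disappears and one d_model-sized vector per batch item remains; only mean() without a dim argument collapses everything to one number.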

4. Using Python dictionaries (mappings):
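A minimal sketch of the two lookups this homework relies on: speaker name to integer id, and integer id back to speaker name. The name "id00464" appears in the dataset notes later in this post; the other two names are hypothetical placeholders.

```python
# Hypothetical speaker names, for illustration only.
speakers = ["id00464", "id00559", "id00578"]

# Forward mapping: speaker name -> class index used for training.
speaker2id = {name: idx for idx, name in enumerate(speakers)}

# Reverse mapping: class index -> speaker name, used when writing predictions.
id2speaker = {idx: name for name, idx in speaker2id.items()}

print(speaker2id["id00464"])    # 0
print(id2speaker[2])            # id00578
print(len(speaker2id.keys()))   # 3
```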

II. Designing the model's neural network:

import torch
import torch.nn as nn
import torch.nn.functional as F


class Classifier(nn.Module):
    def __init__(self, d_model=80, n_spks=600, dropout=0.1):
        super().__init__()
        # Project the dimension of features from that of input into d_model.
        # A linear input layer maps the 40 input dimensions to d_model.
        self.prenet = nn.Linear(40, d_model)
        # The Conformer is not needed for this experiment yet.
        # TODO:
        #   Change Transformer to Conformer.
        #   https://arxiv.org/abs/2005.08100
        # No hand-written self-attention layer is needed here, because the
        # Transformer encoder layer already contains one.
        # d_model is the input and output width of the layer, dim_feedforward=256
        # is the hidden width of its feed-forward sublayer, and nhead=2 is the
        # number of attention heads.
        self.encoder_layer = nn.TransformerEncoderLayer(
            d_model=d_model, dim_feedforward=256, nhead=2
        )
        # Not used for now:
        # self.encoder = nn.TransformerEncoder(self.encoder_layer, num_layers=2)

        # Project the dimension of features from d_model into speaker nums.
        # Prediction head: its final output is an n_spks-dimensional (600) vector.
        self.pred_layer = nn.Sequential(
            nn.Linear(d_model, d_model),
            nn.ReLU(),
            nn.Linear(d_model, n_spks),
        )

    def forward(self, mels):
        """
        args:
            mels: (batch size, length, 40)  # 3-D tensor obtained from the audio; the innermost dimension is 40
        return:
            out: (batch size, n_spks)  # one row of n_spks scores per item in the batch
        """
        # out: (batch size, length, d_model)
        out = self.prenet(mels)  # after prenet the innermost dimension is d_model
        # out: (length, batch size, d_model)
        out = out.permute(1, 0, 2)  # swap dimensions 0 and 1
        # The encoder layer expects features in the shape of (length, batch size, d_model).
        out = self.encoder_layer(out)
        # out: (batch size, length, d_model)
        out = out.transpose(0, 1)  # back to the original order; equivalent to the permute above
        # mean pooling
        # Average over dimension 1 (length): each length x d_model matrix becomes
        # one d_model vector, so stats has shape (batch, d_model).
        stats = out.mean(dim=1)
        # out: (batch, n_spks)
        out = self.pred_layer(stats)  # n_spks raw scores (logits), not a one-hot vector
        return out

III. Designing the learning-rate warmup:
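Before reading the scheduler code in this section, it may help to see the numbers it produces. The sketch below restates the same formula (linear warmup, then half-cosine decay) as a plain function without PyTorch. The name lr_multiplier is chosen here for illustration; the step counts 1000 and 70000 are the warmup_steps and total_steps from the training config later in this post.

```python
import math


def lr_multiplier(step, num_warmup_steps, num_training_steps, num_cycles=0.5):
    """Factor that scales the optimizer's initial learning rate at a given step."""
    # Warmup: grow linearly from 0 to 1.
    if step < num_warmup_steps:
        return step / max(1, num_warmup_steps)
    # Decay: follow a cosine from 1 down to 0 over the remaining steps.
    progress = (step - num_warmup_steps) / max(1, num_training_steps - num_warmup_steps)
    return max(0.0, 0.5 * (1.0 + math.cos(math.pi * num_cycles * 2.0 * progress)))


for step in (0, 500, 1000, 35500, 70000):
    print(step, round(lr_multiplier(step, 1000, 70000), 4))
# 0 0.0
# 500 0.5
# 1000 1.0
# 35500 0.5
# 70000 0.0
```

LambdaLR multiplies the optimizer's initial learning rate (1e-3 in this post) by this factor, so the rate peaks at 1e-3 exactly when warmup ends.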

import math
import torch
from torch.optim import Optimizer
from torch.optim.lr_scheduler import LambdaLR


# This part looked a little odd at first: it sets up a learning-rate warmup
# followed by a cosine decay. Worth rereading later.
def get_cosine_schedule_with_warmup(
    optimizer: Optimizer,
    num_warmup_steps: int,
    num_training_steps: int,
    num_cycles: float = 0.5,
    last_epoch: int = -1,
):
    """
    Create a schedule with a learning rate that decreases following the values of the cosine function between the
    initial lr set in the optimizer to 0, after a warmup period during which it increases linearly between 0 and the
    initial lr set in the optimizer.

    Args:
        optimizer (:class:`~torch.optim.Optimizer`):
            The optimizer for which to schedule the learning rate.
        num_warmup_steps (:obj:`int`):
            The number of steps for the warmup phase.
        num_training_steps (:obj:`int`):
            The total number of training steps.
        num_cycles (:obj:`float`, `optional`, defaults to 0.5):
            The number of waves in the cosine schedule (the default is to just decrease from the max value to 0
            following a half-cosine).
        last_epoch (:obj:`int`, `optional`, defaults to -1):
            The index of the last epoch when resuming training.

    Return:
        :obj:`torch.optim.lr_scheduler.LambdaLR` with the appropriate schedule.
    """

    def lr_lambda(current_step):
        # Warmup
        if current_step < num_warmup_steps:
            return float(current_step) / float(max(1, num_warmup_steps))
        # Decay
        progress = float(current_step - num_warmup_steps) / float(
            max(1, num_training_steps - num_warmup_steps)
        )
        return max(
            0.0, 0.5 * (1.0 + math.cos(math.pi * float(num_cycles) * 2.0 * progress))
        )

    return LambdaLR(optimizer, lr_lambda, last_epoch)

IV. Processing one batch during training:
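The per-batch function in this section turns model outputs into predictions with argmax(1) and then averages the matches. Here is a plain-Python sketch of that same computation on made-up scores; batch_accuracy is a name chosen here, and the numbers are illustrative only.

```python
def batch_accuracy(outs, labels):
    """Pick the highest-scoring class per row, then compare with the labels."""
    # argmax along dimension 1: one predicted class index per row.
    preds = [max(range(len(row)), key=row.__getitem__) for row in outs]
    correct = sum(int(p == y) for p, y in zip(preds, labels))
    return preds, correct / len(labels)


# Illustrative scores for 3 utterances and 3 speakers.
outs = [[0.1, 2.0, -1.0], [1.5, 0.2, 0.3], [0.0, 0.1, 0.9]]
labels = [1, 2, 2]

preds, acc = batch_accuracy(outs, labels)
print(preds)          # [1, 0, 2]
print(round(acc, 4))  # 0.6667
```

Because softmax preserves ordering, taking argmax on the raw scores gives the same prediction as taking it on the probabilities.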

import torch


# This is what the training code does with a single batch.
def model_fn(batch, model, criterion, device):  # arguments: batch data, model, loss function, device
    """Forward a batch through the model."""
    mels, labels = batch  # unpack the mel features and the labels
    mels = mels.to(device)
    labels = labels.to(device)

    outs = model(mels)  # model outputs
    loss = criterion(outs, labels)  # loss from comparing the outputs with the labels

    # Get the speaker id with highest probability.
    preds = outs.argmax(1)  # index of the largest score along dimension 1, one per row

    # Compute accuracy.
    accuracy = torch.mean((preds == labels).float())  # fraction of predictions that match the labels

    return loss, accuracy

V. The validation function:
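The validation function in this section returns running_accuracy / len(dataloader), which is the mean of the per-batch accuracies. That matches the true per-utterance accuracy only when every batch has the same size. A small sketch with hypothetical numbers (the batch sizes and accuracies below are invented for illustration):

```python
# (batch size, accuracy on that batch); the last batch is smaller.
batches = [(32, 0.75), (32, 0.50), (8, 1.00)]

# What averaging per-batch accuracies gives.
mean_of_batches = sum(acc for _, acc in batches) / len(batches)

# Accuracy weighted by how many utterances each batch holds.
weighted = sum(n * acc for n, acc in batches) / sum(n for n, _ in batches)

print(round(mean_of_batches, 4))  # 0.75
print(round(weighted, 4))         # 0.6667
```

Whether this matters here depends on how the validation DataLoader is built (for example, whether it drops the last incomplete batch), which this post does not show.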

from tqdm import tqdm
import torch


def valid(dataloader, model, criterion, device):  # runs over the whole validation set
    """Validate on validation set."""
    model.eval()  # switch to evaluation mode
    running_loss = 0.0
    running_accuracy = 0.0
    pbar = tqdm(total=len(dataloader.dataset), ncols=0, desc="Valid", unit=" uttr")  # progress bar

    for i, batch in enumerate(dataloader):  # i is the batch index
        with torch.no_grad():  # no gradients are tracked; nothing is updated during validation
            loss, accuracy = model_fn(batch, model, criterion, device)  # per-batch loss and accuracy
            running_loss += loss.item()
            running_accuracy += accuracy.item()

        pbar.update(dataloader.batch_size)  # progress-bar bookkeeping; can be ignored for now
        pbar.set_postfix(
            loss=f"{running_loss / (i+1):.2f}",
            accuracy=f"{running_accuracy / (i+1):.2f}",
        )

    pbar.close()
    model.train()

    return running_accuracy / len(dataloader)  # mean of the per-batch accuracies

VI. The training entry point (main):
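The training loop in this section runs for a fixed number of steps rather than epochs, so it restarts the data iterator whenever it runs out. A plain-Python sketch of that try/except pattern, with a list of strings standing in for the DataLoader (take_batches is a name chosen here):

```python
def take_batches(loader, total_steps):
    """Draw total_steps batches, restarting the iterator when it is exhausted."""
    iterator = iter(loader)
    batches = []
    for _ in range(total_steps):
        try:
            batch = next(iterator)
        except StopIteration:
            # One pass over the data is finished: start the next pass.
            iterator = iter(loader)
            batch = next(iterator)
        batches.append(batch)
    return batches


print(take_batches(["b0", "b1", "b2"], 7))
# ['b0', 'b1', 'b2', 'b0', 'b1', 'b2', 'b0']
```

With a shuffling DataLoader, each call to iter() starts a freshly shuffled pass, so the restart also reshuffles the data.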

from tqdm import tqdm
import torch
import torch.nn as nn
from torch.optim import AdamW
from torch.utils.data import DataLoader, random_split


def parse_args():  # builds the config dictionary
    """arguments"""
    config = {
        "data_dir": "./Dataset",
        "save_path": "model.ckpt",
        "batch_size": 32,
        "n_workers": 1,  # larger values raised an error on my machine
        "valid_steps": 2000,
        "warmup_steps": 1000,
        "save_steps": 10000,
        "total_steps": 70000,
    }
    return config


def main(  # the config keys above are passed in as the parameters of main
    data_dir,
    save_path,
    batch_size,
    n_workers,
    valid_steps,
    warmup_steps,
    total_steps,
    save_steps,
):
    """Main function."""
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    print(f"[Info]: Use {device} now!")

    # Load the data through get_dataloader (called here, defined elsewhere in the homework code).
    train_loader, valid_loader, speaker_num = get_dataloader(data_dir, batch_size, n_workers)
    train_iterator = iter(train_loader)  # iterator over the training data
    print("[Info]: Finish loading data!", flush=True)

    model = Classifier(n_spks=speaker_num).to(device)  # model instance
    criterion = nn.CrossEntropyLoss()  # loss function
    optimizer = AdamW(model.parameters(), lr=1e-3)  # optimizer
    scheduler = get_cosine_schedule_with_warmup(optimizer, warmup_steps, total_steps)  # warmup schedule
    print("[Info]: Finish creating model!", flush=True)

    best_accuracy = -1.0
    best_state_dict = None

    pbar = tqdm(total=valid_steps, ncols=0, desc="Train", unit=" step")  # progress bar; can be ignored

    for step in range(total_steps):  # loop over the total number of steps
        # Get data
        try:
            batch = next(train_iterator)  # next batch of training data
        except StopIteration:
            train_iterator = iter(train_loader)
            batch = next(train_iterator)

        # Forward the batch to get its loss and accuracy.
        loss, accuracy = model_fn(batch, model, criterion, device)
        batch_loss = loss.item()
        batch_accuracy = accuracy.item()

        # Update model
        loss.backward()
        optimizer.step()
        scheduler.step()
        optimizer.zero_grad()  # gradient-descent update done; clear the gradients

        # Log
        pbar.update()  # progress bar; can be ignored
        pbar.set_postfix(
            loss=f"{batch_loss:.2f}",
            accuracy=f"{batch_accuracy:.2f}",
            step=step + 1,
        )

        # Do validation
        if (step + 1) % valid_steps == 0:
            pbar.close()

            valid_accuracy = valid(valid_loader, model, criterion, device)  # accuracy of this validation run

            # keep the best model
            if valid_accuracy > best_accuracy:  # always keep the best validation accuracy
                best_accuracy = valid_accuracy
                # state_dict() tensors share memory with the live parameters, so
                # clone them; otherwise later updates overwrite the "best" weights.
                best_state_dict = {k: v.detach().clone() for k, v in model.state_dict().items()}

            pbar = tqdm(total=valid_steps, ncols=0, desc="Train", unit=" step")

        # Save the best model so far.
        if (step + 1) % save_steps == 0 and best_state_dict is not None:
            torch.save(best_state_dict, save_path)  # save the best model parameters
            pbar.write(f"Step {step + 1}, best model saved. (accuracy={best_accuracy:.4f})")

    pbar.close()


if __name__ == "__main__":  # run main with the config
    main(**parse_args())

VII. Inference on the test set:
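One detail in the inference code of this section is the lookup mapping["id2speaker"][str(pred)]. The str() is needed because JSON object keys are always strings, so an integer class index cannot be used directly. A minimal sketch with a hypothetical two-speaker mapping (the speaker names are placeholders):

```python
import json

# Round-trip a mapping with integer keys through JSON, as mapping.json would be.
mapping = json.loads(json.dumps({"id2speaker": {0: "id00464", 1: "id00559"}}))

pred = 1  # a predicted class index
print(list(mapping["id2speaker"].keys()))  # ['0', '1']
print(pred in mapping["id2speaker"])       # False: the key is the string '1'

speaker = mapping["id2speaker"][str(pred)]
print(speaker)                             # id00559
```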

import os
import json
import torch
from pathlib import Path
from torch.utils.data import Dataset


class InferenceDataset(Dataset):
    def __init__(self, data_dir):
        testdata_path = Path(data_dir) / "testdata.json"
        metadata = json.load(testdata_path.open())
        self.data_dir = data_dir
        self.data = metadata["utterances"]

    def __len__(self):
        return len(self.data)

    def __getitem__(self, index):
        utterance = self.data[index]
        feat_path = utterance["feature_path"]
        mel = torch.load(os.path.join(self.data_dir, feat_path))

        return feat_path, mel


def inference_collate_batch(batch):
    """Collate a batch of data."""
    feat_paths, mels = zip(*batch)

    return feat_paths, torch.stack(mels)

import json
import csv
from pathlib import Path
from tqdm.notebook import tqdm

import torch
from torch.utils.data import DataLoader


def parse_args():
    """arguments"""
    config = {
        "data_dir": "./Dataset",
        "model_path": "./model.ckpt",
        "output_path": "./output.csv",
    }

    return config


def main(
    data_dir,
    model_path,
    output_path,
):
    """Main function."""
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    print(f"[Info]: Use {device} now!")

    mapping_path = Path(data_dir) / "mapping.json"
    mapping = json.load(mapping_path.open())

    dataset = InferenceDataset(data_dir)
    dataloader = DataLoader(
        dataset,
        batch_size=1,
        shuffle=False,
        drop_last=False,
        num_workers=8,
        collate_fn=inference_collate_batch,
    )
    print("[Info]: Finish loading data!", flush=True)

    speaker_num = len(mapping["id2speaker"])
    model = Classifier(n_spks=speaker_num).to(device)
    model.load_state_dict(torch.load(model_path))
    model.eval()
    print("[Info]: Finish creating model!", flush=True)

    results = [["Id", "Category"]]
    for feat_paths, mels in tqdm(dataloader):
        with torch.no_grad():
            mels = mels.to(device)
            outs = model(mels)  # run the model to get outs
            preds = outs.argmax(1).cpu().numpy()  # argmax over outs; the indices go into preds
            for feat_path, pred in zip(feat_paths, preds):
                # Append each prediction to results.
                results.append([feat_path, mapping["id2speaker"][str(pred)]])

    with open(output_path, 'w', newline='') as csvfile:
        writer = csv.writer(csvfile)
        writer.writerows(results)


if __name__ == "__main__":
    main(**parse_args())

For now the inference code is only here for reading: the 2022 version of the data returns a 404 on GitHub.

VIII. How the Dataset is processed:
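The Dataset code in this section crops each long utterance to a random window of segment_len frames. A plain-Python sketch of that cropping step, with a list of integers standing in for the mel frames (random_segment is a name chosen here, and the sizes are illustrative):

```python
import random


def random_segment(frames, segment_len):
    """Return a random contiguous window of segment_len frames, or all frames if too short."""
    if len(frames) > segment_len:
        # random.randint includes both endpoints, so the last valid start is allowed.
        start = random.randint(0, len(frames) - segment_len)
        return frames[start:start + segment_len]
    return frames


random.seed(0)  # fixed seed only to make the demo repeatable
frames = list(range(10))
seg = random_segment(frames, 4)
print(seg, len(seg))

short = random_segment([1, 2, 3], 4)
print(short)  # shorter than segment_len: returned unchanged
```

Utterances shorter than segment_len keep their own length, which is why the imports in this section include pad_sequence: batches of unequal length need padding before they can be stacked.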

import os
import json
import torch
import random
from pathlib import Path
from torch.utils.data import Dataset
from torch.nn.utils.rnn import pad_sequence


class myDataset(Dataset):
    def __init__(self, data_dir, segment_len=128):
        self.data_dir = data_dir
        self.segment_len = segment_len

        # Load the mapping from speaker name to their corresponding id.
        mapping_path = Path(data_dir) / "mapping.json"
        mapping = json.load(mapping_path.open())  # load the JSON file into mapping
        self.speaker2id = mapping["speaker2id"]  # speaker2id is one entry of mapping
        # It turns an original dataset name such as "id00464" into an id from
        # 0 to 599 for the 600 speakers used here.

        # Load metadata of training data.
        metadata_path = Path(data_dir) / "metadata.json"
        metadata = json.load(open(metadata_path))["speakers"]
        # Same idea as above: metadata holds the contents of that JSON file.
        # As I understand it from the lecture, n_mels means each frame is
        # described by 40 mel features (the 40 input dimensions of the model).
        # For each speaker id, the file lists the paths of that speaker's audio
        # feature files and their mel_len.

        # Get the total number of speaker.
        self.speaker_num = len(metadata.keys())
        self.data = []  # the samples held by this class
        for speaker in metadata.keys():  # go through every speaker
            for utterances in metadata[speaker]:  # go through every recording of that speaker
                # Store each recording as (path, new id).
                self.data.append([utterances["feature_path"], self.speaker2id[speaker]])

    def __len__(self):
        return len(self.data)  # total number of samples

    def __getitem__(self, index):
        feat_path, speaker = self.data[index]  # path and speaker id of the recording at this index
        # Load preprocessed mel-spectrogram.
        mel = torch.load(os.path.join(self.data_dir, feat_path))  # load the mel features from disk

        # Segment mel-spectrogram into "segment_len" frames.
        if len(mel) > self.segment_len:  # longer than segment_len (128): crop a random segment
            # Randomly get the starting point of the segment.
            start = random.randint(0, len(mel) - self.segment_len)
            # Get a segment with "segment_len" frames.
            mel = torch.FloatTensor(mel[start:start+self.segment_len])
        else:
            mel = torch.FloatTensor(mel)
        # Turn the speaker id into long for computing loss later.
        speaker = torch.FloatTensor([speaker]).long()  # convert the speaker id to long
        return mel, speaker  # the mel features and the matching speaker id

    def get_speaker_number(self):
        return self.speaker_num

Here are the download locations for the data files I used:

ML2022Spring-hw4 | Kaggle

The Dropbox links below can be downloaded:

!wget https://www.dropbox.com/s/vw324newiku0sz0/Dataset.tar.gz.aa?d1=0

!wget https://www.dropbox.com/s/z840g69e71nkayo/Dataset.tar.gz.ab?d1=0

!wget https://www.dropbox.com/s/h1081e1ggonio81/Dataset.tar.gz.ac?d1=0

!wget https://www.dropbox.com/s/fh3zd8ow668c4th/Dataset.tar.gz.ad?d1=0

!wget https://www.dropbox.com/s/ydzygoy2pv6gw9d/Dataset.tar.gz.ae?d1=0

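!cat Dataset.tar.gz.* | tar zxvf -

This is how the parts are joined and extracted to get the data.

In the end, though, the Dropbox download turned out to be incomplete: some files are missing.

One workaround is to download the 5.2 GB archive directly from Kaggle. It may take about 70 GB once extracted, which is rather large, but after everything is extracted into the Dataset folder the code runs.

Option 3: try the files on Google Drive:

That failed as well, so in the end I downloaded the archive myself and uploaded it.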

!gdown --id '1CtHZhJ-mTpNsO-MqvAPIi4Yrt3oSBXYV' --output Dataset.zip

!gdown --id '14hmoMgB1fe6v50biIceKyndyeYABGrRq' --output Dataset.zip

!gdown --id '1e9x-Pj13n7-9tK9LS_WjiMo21ru4UBH9' --output Dataset.zip

!gdown --id '10TC0g46bcAz_jkiM165zNmwttT4RiRgY' --output Dataset.zip

!gdown --id '1MUGBvG_Jjq00C2JYHuyV3B01vaf1kWIm' --output Dataset.zip

!gdown --id '18M91P5DHwILNy01ssZ57AiPOR0OwutOM' --output Dataset.zip

!unzip Dataset.zip