Getting Started with k8s and Deploying a Project

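1. Introduction to k8s

1.1 What is k8s

Kubernetes, Greek for "helmsman" and abbreviated K8s, is the container orchestration engine open-sourced by Google. Built on top of container engines such as Docker, it provides deployment, resource scheduling, service discovery and dynamic scaling for containerized applications, which makes large container clusters much easier to manage.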

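1.2 What k8s can do

Large microservice projects today often consist of dozens or even hundreds of services that have to be deployed across many servers. How can that many services be deployed and managed quickly?

k8s provides the following:

- Automated deployment: once the deployment files are defined, a large number of services can be deployed automatically

- Elastic scaling: the number of running replicas is increased or decreased automatically, based on how the containers are doing

- Self-healing: when a node crashes, its workload and traffic are moved to other nodes automatically, and the node is used again once it recovers

- Load balancing: network components distribute traffic across the instances of each service in the cluster

- Rolling upgrade and rollback: one portion of the instances is upgraded or rolled back first, then the rest, so the service stays available throughout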

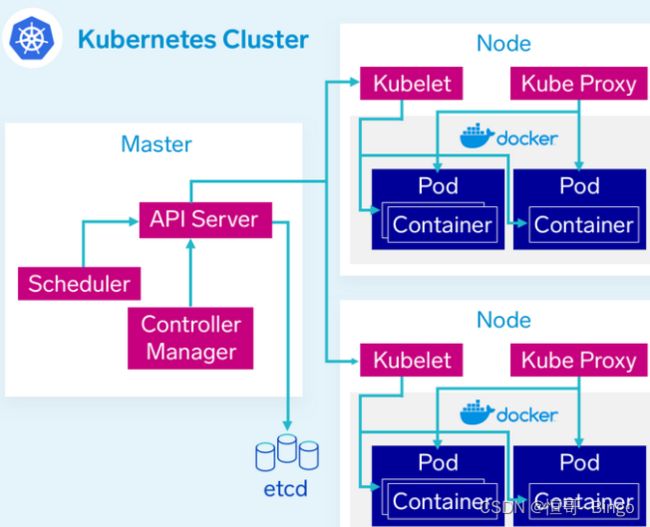
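
As a rough illustration of the scaling and rolling-upgrade features listed above, the sketch below wraps the corresponding `kubectl` subcommands in small shell functions. The Deployment name `web`, the container name and the image tag are hypothetical, and a running cluster is assumed.

```shell
# Sketch only: "web" and the image tag used below are hypothetical names.

# Scale a Deployment to the given number of replicas.
scale_app() {
  kubectl scale deployment "$1" --replicas="$2"
}

# Roll out a new image, then wait until the rollout has finished.
rolling_update() {
  local deploy="$1" container="$2" image="$3"
  kubectl set image "deployment/$deploy" "$container=$image" &&
    kubectl rollout status "deployment/$deploy"
}

# Roll a Deployment back to its previous revision.
rollback() {
  kubectl rollout undo "deployment/$1"
}

# Usage (requires a running cluster):
#   scale_app web 3
#   rolling_update web web nginx:1.25
#   rollback web
```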

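1.3 Core components of k8s

k8s has many components and a fairly complex architecture. Only the main ones are introduced here:

- Master: the control center of the K8S cluster, responsible for managing the state and configuration of the whole cluster. It contains:

  - API Server: exposes the REST API of the K8S cluster, used to manage and control the resources in the cluster

  - Scheduler: assigns Pods to suitable nodes

  - Controller Manager: runs the controllers of the K8S cluster, such as the Replication Controller and the Deployment Controller

  - etcd: a distributed key-value store that holds the state and configuration of the K8S cluster

- Node: a worker machine in the K8S cluster, responsible for running the containerized applications. It contains:

  - Kubelet: manages the Pods and containers on the node and talks to the Master to receive Pod scheduling information

  - Kube Proxy: provides network proxying and load balancing for Pods

  - Container Runtime: runs the containers, for example Docker or rkt

  - Pod: the smallest deployable unit in K8S. It holds one or more containers that share a network namespace and storage volumes. All containers of one Pod run on the same node, while different Pods can be spread across nodes

- Service: the network abstraction in K8S. It gives a group of Pods a stable IP address and DNS name through which other applications can reach them

- Volume: the storage abstraction in K8S. It provides storage to Pods, with data kept on local disk, network storage or cloud storage

- Namespace: the logical isolation unit in K8S. It divides the resources of a cluster into several virtual clusters, so that different users or applications can share one cluster while staying isolated from each other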

2. Installing k8s

The following uses kubeadm to set up an entry-level k8s environment.

2.1 Installation environment

Base software:

| Software | Version |
|---|---|
| Docker | 24-ce |
| Kubernetes | 1.23 |
| Operating system | CentOS7_x64 |

The minimal cluster layout is one master and two worker nodes:

| Server name | Server IP |
|---|---|
| master | 192.168.223.188 |
| node1 | 192.168.223.177 |
| node2 | 192.168.223.166 |

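2.2 Preliminary configuration

Do the following on master first, then clone the machine to create the nodes.

1) Edit the hosts file

vi /etc/hosts

Add the following entries:

192.168.223.188 master
192.168.223.177 node1
192.168.223.166 node2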
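
Because the same entries have to end up on every machine, the hosts edit can also be scripted. The function below is a minimal sketch (the name `add_host_entry` is made up for this example) that appends an entry only when the host name is not present yet, so it is safe to run more than once.

```shell
# Append "ip name" to a hosts file unless the name is already listed.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (as root, on each machine):
#   add_host_entry /etc/hosts 192.168.223.188 master
#   add_host_entry /etc/hosts 192.168.223.177 node1
#   add_host_entry /etc/hosts 192.168.223.166 node2
```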

2) Change the hostname

vi /etc/hostname

Set it to master, node1 or node2, matching the hosts entries above.

3) Synchronize the time

systemctl start chronyd

systemctl enable chronyd

date

4) Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

5) Disable SELinux (the change to the config file takes effect after a reboot)

sed -i 's/enforcing/disabled/' /etc/selinux/config
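
The `sed` expression above replaces the first "enforcing" on every line, including comment lines. A slightly stricter variant is sketched below; the function name is made up for this example, and it assumes GNU `sed`, as shipped with CentOS 7. It rewrites only the `SELINUX=` setting.

```shell
# Set SELINUX=disabled in an SELinux config file. Only the SELINUX= line is
# rewritten; comments and the SELINUXTYPE= line are left alone.
disable_selinux_config() {
  local file="${1:-/etc/selinux/config}"
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# Usage (as root):
#   disable_selinux_config    # persistent, applies after the next reboot
#   setenforce 0              # switch to permissive mode for the current boot
```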

6) Disable the swap partition

vi /etc/fstab

# Comment out the following entry

/dev/mapper/centos-swap swap
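
Editing /etc/fstab only keeps swap off after the next reboot. The sketch below (function name made up for this example, GNU `sed` assumed) comments out the swap entries non-interactively, and the usage lines show how swap can also be turned off for the running system.

```shell
# Comment out every active swap entry in an fstab-style file.
comment_out_swap() {
  local file="${1:-/etc/fstab}"
  sed -i -E 's/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Usage (as root):
#   swapoff -a          # turn swap off immediately
#   comment_out_swap    # keep it off after a reboot
#   free -m             # the Swap row should now show 0
```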

7) Enable bridge filtering and IP forwarding

cat > /etc/sysctl.d/kubernetes.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF

# Then apply the settings

sysctl --system
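
The `net.bridge.*` keys exist only while the `br_netfilter` kernel module is loaded. If `sysctl --system` reports them as missing, loading the module first should help. The following is a sketch under that assumption; the function name and the file name `k8s.conf` are choices made for this example.

```shell
# Load br_netfilter now, register it for loading at boot, re-apply the sysctl
# files and print the two settings that matter most here.
enable_bridge_netfilter() {
  local conf="${1:-/etc/modules-load.d/k8s.conf}"
  modprobe br_netfilter &&
    echo br_netfilter > "$conf" &&
    sysctl --system > /dev/null &&
    sysctl net.bridge.bridge-nf-call-iptables net.ipv4.ip_forward
}

# Usage (as root):
#   enable_bridge_netfilter
```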

2.3 Install Docker

1) Install the Docker dependencies

yum install -y yum-utils

2) Add the Docker repository (Alibaba Cloud mirror)

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3) Install Docker

yum install -y docker-ce docker-ce-cli containerd.io

4) Enable Docker at boot and start it

systemctl enable docker && systemctl start docker

5) Configure the Docker registry mirrors

# Create the file

vi /etc/docker/daemon.json

# Set its content to

{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": [
    "https://registry.docker-cn.com",
    "http://hub-mirror.c.163.com",
    "https://docker.mirrors.ustc.edu.cn"
  ]
}

6) Restart Docker

systemctl restart docker
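
The `exec-opts` line switches Docker to the systemd cgroup driver, which is also the driver a kubeadm-configured kubelet uses by default in this Kubernetes version. A mismatch between the two is a common reason for the kubelet failing to start. A small check, sketched with a made-up function name:

```shell
# Succeeds only when Docker reports the systemd cgroup driver.
docker_uses_systemd() {
  [ "$(docker info --format '{{.CgroupDriver}}')" = "systemd" ]
}

# Usage:
#   docker_uses_systemd && echo "cgroup driver OK" \
#     || echo "check /etc/docker/daemon.json and restart docker"
```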

2.4 Install the k8s cluster

1) Switch the Kubernetes yum repository to a mirror in China

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

2) Install specific versions of kubeadm, kubelet and kubectl

yum install -y kubelet-1.23.0 kubeadm-1.23.0 kubectl-1.23.0

3) Enable kubelet at boot

systemctl enable kubelet

At this point the basic k8s setup on master is complete. Clone master to create the two node machines, node1 and node2, then change the IP address and hostname on each of them.

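4) Initialize k8s on the master

kubeadm init \
  --apiserver-advertise-address=192.168.223.188 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16 \
  --ignore-preflight-errors=all

Parameter notes

--apiserver-advertise-address # address the API server advertises to the cluster (the master's IP)

--image-repository # image registry to pull from, here the Alibaba Cloud mirror

--kubernetes-version # K8s version, matching the packages installed above

--service-cidr # virtual IP range for Services, the stable entry points in front of Pods

--pod-network-cidr # IP range of the Pod network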

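5) When initialization finishes, kubeadm prints follow-up instructions. Copy them and run them on the master and on the nodes as indicated.

On the master, create the kubectl configuration:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

On each node, run the join command from that output to join the cluster (use the token and hash printed by your own kubeadm init; the values below are only an example):

kubeadm join 192.168.223.188:6443 --token uxe262.qnvanyd72ojy5c3x \
  --discovery-token-ca-cert-hash sha256:e3c90c7cc1bab7ccd4093a7d7a9a2293ef17e1c4a477bf29b8ec4bf45e662f10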
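
Bootstrap tokens created by `kubeadm init` expire after 24 hours by default, so the printed join command stops working for nodes added later. The sketch below (function names made up for this example) wraps the two kubeadm subcommands that deal with this; both are meant to be run on the master.

```shell
# Print a ready-to-run "kubeadm join ..." line backed by a newly created token.
fresh_join_command() {
  kubeadm token create --print-join-command
}

# Show the existing bootstrap tokens and their remaining lifetime.
show_tokens() {
  kubeadm token list
}

# Usage (as root on the master):
#   show_tokens
#   fresh_join_command    # run the printed line on the node that should join
```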

6) View the cluster

At this point the nodes show the status NotReady, because no network plugin has been installed yet.

kubectl get nodes

2.5 Install the CNI network

1) Create kube-flannel.yml

Change Network in "net-conf.json" to the address range that was passed to kubeadm as --pod-network-cidr.

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.19.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.19.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate
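
Before applying the manifest, it can be worth confirming that its Network value really matches the --pod-network-cidr passed to kubeadm init. The check below is a minimal sketch with a made-up function name; it does a plain text match, so it assumes the `"Network": "..."` line is formatted as in the manifest above.

```shell
# Succeeds when the flannel manifest declares the given pod CIDR as "Network".
flannel_network_matches() {
  local manifest="$1" cidr="$2"
  grep -qF "\"Network\": \"$cidr\"" "$manifest"
}

# Usage:
#   flannel_network_matches kube-flannel.yml 10.244.0.0/16 \
#     || echo "Network in net-conf.json differs from --pod-network-cidr"
```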

2) Apply the manifest

kubectl apply -f kube-flannel.yml

3) Check the cluster status

# List the nodes

kubectl get nodes

# List the system pods

kubectl get pods -n kube-system

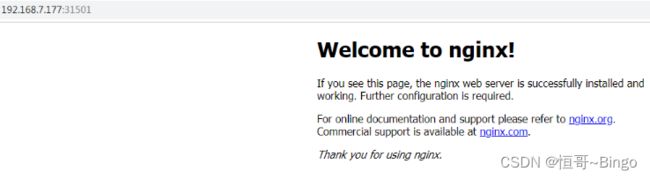
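
It usually takes a little while after the network plugin is applied for the nodes to switch to Ready. Instead of re-running `kubectl get nodes` by hand, the wait can be scripted. The functions below are a sketch with made-up names; the 5-second interval and the default of 60 attempts are arbitrary choices.

```shell
# Succeeds when at least one node exists and every node reports Ready.
all_nodes_ready() {
  kubectl get nodes --no-headers |
    awk '$2 !~ /^Ready/ { bad = 1 } END { exit (bad || NR == 0) }'
}

# Poll every 5 seconds until all nodes are Ready or the attempts run out.
wait_for_nodes() {
  local attempts="${1:-60}"
  while [ "$attempts" -gt 0 ]; do
    all_nodes_ready && return 0
    attempts=$((attempts - 1))
    sleep 5
  done
  return 1
}

# Usage:
#   wait_for_nodes && kubectl get nodes
```

`kubectl wait --for=condition=Ready nodes --all --timeout=300s` achieves much the same with a single built-in command.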

2.6 部署nginx服务

这里部署简单的nginx服务作为k8s的测试

1) 如果没有nginx,先拉取镜像

docker pull nginx

2) 创建pod部署

kubectl create deployment nginx --image=nginx

3) 查看pod资源

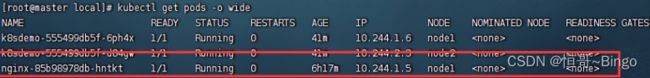

kubectl get pods -o wide

状态是Running代表pod正常运行

4) 暴露端口提供服务

kubectl expose deployment nginx --port=80 --type=NodePort

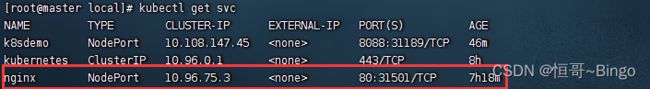

5)查看服务部署的ip和端口

kubectl get svc

可以查看到k8s暴露出的端口是31501,使用此端口就可以访问nginx了
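
Rather than reading the port from the `kubectl get svc` table, it can be extracted with a JSONPath query and passed straight to `curl`. A minimal sketch, with a made-up function name and the node IP taken from the table in 2.1:

```shell
# Print the NodePort assigned to a Service (first entry in its port list).
service_node_port() {
  kubectl get svc "$1" -o jsonpath='{.spec.ports[0].nodePort}'
}

# Usage:
#   port="$(service_node_port nginx)"
#   curl -I "http://192.168.223.177:${port}"
```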

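Troubleshooting

1) After creation, the pod stays in the ContainerCreating state

2) kubectl describe reports: network: open /run/flannel/subnet.env: no such file or directory

# Inspect the pod's events

kubectl describe pod <pod-name>

3) Create /run/flannel/subnet.env on each node. This file is normally written by flanneld itself, so it is worth checking that the flannel pods are running before creating it by hand.

FLANNEL_NETWORK=10.244.0.0/16
FLANNEL_SUBNET=10.244.0.1/24
FLANNEL_MTU=1450
FLANNEL_IPMASQ=true

4) Delete the deployment and deploy it again

kubectl delete deployment <deployment-name>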
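
One caveat about the subnet.env workaround: with identical content on every node, all nodes claim the same /24, whereas flannel normally gives each node its own subnet taken from the node's `spec.podCIDR`. The sketch below derives the file content from that value. The function name is made up, and the arithmetic assumes an aligned subnet such as 10.244.1.0/24, so treat it as an illustration rather than a general solution.

```shell
# Render subnet.env content for one node from its pod CIDR
# (for example 10.244.1.0/24) and the cluster-wide pod network.
render_subnet_env() {
  local pod_cidr="$1" network="${2:-10.244.0.0/16}"
  printf 'FLANNEL_NETWORK=%s\n' "$network"
  printf 'FLANNEL_SUBNET=%s.1/%s\n' "${pod_cidr%.*}" "${pod_cidr#*/}"
  printf 'FLANNEL_MTU=1450\n'
  printf 'FLANNEL_IPMASQ=true\n'
}

# Usage (on the master; copy the output to /run/flannel/subnet.env on node1):
#   kubectl get pods -n kube-flannel -o wide       # is flannel itself running?
#   kubectl logs -n kube-flannel -l app=flannel    # if not, why?
#   cidr="$(kubectl get node node1 -o jsonpath='{.spec.podCIDR}')"
#   render_subnet_env "$cidr"
```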

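3. Deploying Spring Boot on k8s

Here a Java project is deployed, and k8s places it on two machines automatically to form a cluster.

1) Create a simple Spring Boot project and package it as a Docker image. For details see

https://blog.csdn.net/u013343114/article/details/112179420

2) Create a personal image repository on Alibaba Cloud, log in to it from Docker and push the image

https://cr.console.aliyun.com/

# Log in to the Alibaba Cloud registry

docker login --username=<account> registry.cn-hangzhou.aliyuncs.com

# Tag the image

docker tag [ImageId] registry.cn-hangzhou.aliyuncs.com/<namespace>/<repository>:[image version]

# Push the image to the repository

docker push registry.cn-hangzhou.aliyuncs.com/<namespace>/<repository>:[image version]

3) Create a registry secret in k8s named demo-docker-secret

kubectl create secret docker-registry demo-docker-secret --docker-server=registry.cn-hangzhou.aliyuncs.com --docker-username=<username> --docker-password=<password>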

4) Generate the deployment file automatically

kubectl create deployment k8sdemo --image=registry.cn-hangzhou.aliyuncs.com/chenheng666/repo01:1.0 --dry-run=client -o yaml > demo-k8s.yaml

Then edit demo-k8s.yaml: set replicas to 2 and add the secret under imagePullSecrets

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: k8sdemo
  name: k8sdemo
spec:
  replicas: 2
  selector:
    matchLabels:
      app: k8sdemo
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: k8sdemo
    spec:
      imagePullSecrets:
      - name: demo-docker-secret
      containers:
      - image: registry.cn-hangzhou.aliyuncs.com/chenheng666/repo01:1.0
        name: repo01
        resources: {}
status: {}

5) Apply the deployment file to create the deployment

kubectl apply -f demo-k8s.yaml
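
`kubectl apply` returns as soon as the objects are accepted, not when the pods are actually running. The sketch below (function name and the 120-second timeout are choices made for this example) waits for the rollout and then prints the ready and desired replica counts.

```shell
# Wait for a Deployment rollout, then print "<ready>/<desired>" replicas.
deployment_replicas() {
  local name="$1"
  kubectl rollout status "deployment/$name" --timeout=120s > /dev/null &&
    kubectl get deployment "$name" \
      -o jsonpath='{.status.readyReplicas}/{.spec.replicas}'
}

# Usage:
#   deployment_replicas k8sdemo
```

If the rollout does not finish in time, `kubectl describe pod <pod-name>` is the usual next step; image pull failures caused by a wrong secret show up there.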

6) Expose the service port

kubectl expose deploy k8sdemo --port=8088 --target-port=8088 --type=NodePort

7) 查看pod

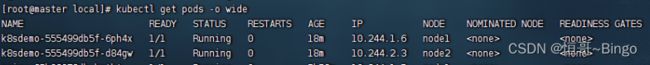

可以看到项目已经部署到两台node上了

kubectl get pods -o wide

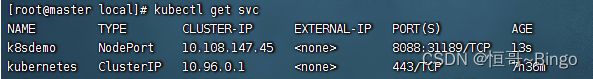

8)查看端口

kubectl get svc

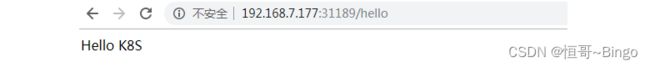

9) 使用node1和node2都可以访问接口
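
To confirm that both nodes really answer, the same request can be sent to each of them. This is a sketch with a made-up function name; `<nodePort>` stands for the port shown by `kubectl get svc`, and the path `/` is only a placeholder for whatever endpoint the Spring Boot project exposes.

```shell
# Request the same path on every given node and print the HTTP status per node.
check_nodes() {
  local port="$1" path="$2"
  shift 2
  local host
  for host in "$@"; do
    printf '%s -> %s\n' "$host" \
      "$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "http://${host}:${port}${path}")"
  done
}

# Usage:
#   check_nodes <nodePort> / 192.168.223.177 192.168.223.166
```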