libtorch Deployment 1: Model Training and Conversion

I have already looked at deployment options for TensorFlow and ONNX models. This post records how to deploy a PyTorch model in C++.

For PyTorch, my basic approach is:

- train and test the model with PyTorch;

- implement the forward pass (inference and deployment) with libtorch.

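Some caveats up front:

- Match the libtorch version with the Visual C++ (VC) version. For example, libtorch 1.4 corresponds to VC2015 and libtorch 1.6 to VC2017.

- Match the libtorch and PyTorch versions, using the same version number whenever possible. For example, I use PyTorch 1.4 with libtorch 1.4.

- Match libtorch/PyTorch with the CUDA version. For example, CUDA 10 and CUDA 10.1 builds are interchangeable, but CUDA 10.2 and CUDA 11.0 builds are not.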

Basic environment

- Operating system: Windows 10

- Compiler: VS 2015

- CUDA: CUDA 10 + cuDNN 7.6.5

- Python: Anaconda3-5.2.0-Windows-x86_64 (Python 3.6.5)

- PyTorch: 1.4.0

- libtorch: 1.4.0

Note:

Installing the base environment (Anaconda, VC, CUDA/cuDNN) is straightforward, so I skip it here.

1. Installing PyTorch

Previous PyTorch versions can be downloaded from:

https://pytorch.org/get-started/previous-versions/

```shell
# CUDA 10.0/10.1
conda install pytorch==1.4.0 torchvision==0.5.0 cudatoolkit=10.1 -c pytorch
```

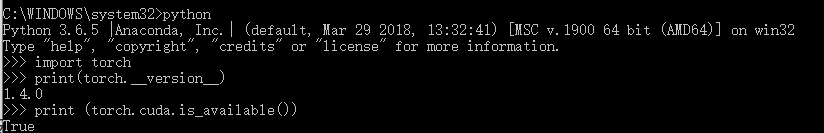

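After installation, start `python` and test it directly:

```python
import torch

print(torch.__version__)          # installed PyTorch version, e.g. 1.4.0
print(torch.cuda.is_available())  # True if this CUDA build can see a GPU
```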
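Because the caveats above stress matching PyTorch, libtorch, CUDA and cuDNN versions, it can help to print exactly what the installed PyTorch was built against. A minimal sketch, assuming only the standard attributes `torch.version.cuda` and `torch.backends.cudnn.version()` (both can be `None` on a CPU-only build); the helper name `build_info` is hypothetical:

```python
import torch


def build_info():
    """Collect the versions that matter when pairing PyTorch with libtorch and CUDA."""
    return {
        "torch": torch.__version__,               # PyTorch version string
        "cuda_build": torch.version.cuda,         # CUDA version PyTorch was compiled with (None on CPU builds)
        "cudnn": torch.backends.cudnn.version(),  # cuDNN version as an int, or None if unavailable
        "cuda_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    for key, value in build_info().items():
        print("{}: {}".format(key, value))
```

The libtorch package you download for C++ should then match the `torch` and `cuda_build` values printed here.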

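2. Training the model

You can download a pretrained model directly through torchvision, or train your own.

If you already have a pretrained model, skip ahead to the model conversion step.

To document the whole workflow, I train a model here.

Below is the training code for MNIST handwritten-digit recognition: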

```python
import argparse
import time

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
        self.conv2_drop = nn.Dropout2d()
        self.fc1 = nn.Linear(320, 50)
        self.fc2 = nn.Linear(50, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = F.relu(F.max_pool2d(x, 2))
        x = self.conv2(x)
        x = F.relu(F.max_pool2d(self.conv2_drop(x), 2))
        x = x.view(-1, 320)
        x = F.relu(self.fc1(x))
        x = F.dropout(x, training=self.training)
        x = self.fc2(x)
        return F.log_softmax(x, dim=1)


def train(args, model, device, train_loader, optimizer, epoch):
    model.train()
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        loss = F.nll_loss(output, target)
        loss.backward()
        optimizer.step()
        if batch_idx % args.log_interval == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))


def test(args, model, device, test_loader):
    model.eval()
    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output, target, reduction='sum').item()  # sum up batch loss
            pred = output.max(1, keepdim=True)[1]  # get the index of the max log-probability
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)
    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))


def main():
    # Hyperparameters for training
    parser = argparse.ArgumentParser(description='PyTorch MNIST example')
    parser.add_argument('--batch-size', type=int, default=64,
                        help='input batch size for training (default: 64)')
    parser.add_argument('--test-batch-size', type=int, default=1000,
                        help='input batch size for testing (default: 1000)')
    parser.add_argument('--epochs', type=int, default=10,
                        help='number of epochs to train (default: 10)')
    parser.add_argument('--lr', type=float, default=0.01,
                        help='learning rate (default: 0.01)')
    parser.add_argument('--momentum', type=float, default=0.5,
                        help='SGD momentum (default: 0.5)')
    parser.add_argument('--no-cuda', action='store_true', default=False,
                        help='disables CUDA training')
    parser.add_argument('--seed', type=int, default=1,
                        help='random seed (default: 1)')
    parser.add_argument('--log-interval', type=int, default=10,
                        help='how many batches to wait before logging training status')
    args = parser.parse_args()

    use_cuda = not args.no_cuda and torch.cuda.is_available()  # train on the GPU if possible
    print(use_cuda)

    torch.manual_seed(args.seed)  # fix the random seed so training is reproducible
    device = torch.device("cuda" if use_cuda else "cpu")
    kwargs = {'num_workers': 8, 'pin_memory': True} if use_cuda else {}

    # Load the training data
    train_loader = torch.utils.data.DataLoader(
        datasets.MNIST('data', train=True, download=True,
                       transform=transforms.Compose([
                           transforms.ToTensor(),
                           transforms.Normalize((0.1307,), (0.3081,))
                       ])),
        batch_size=args.batch_size, shuffle=True, **kwargs)

    # Load the test data
    test_loader = torch.utils.data.DataLoader(
        datasets.MNIST('data', train=False, transform=transforms.Compose([
            transforms.ToTensor(),
            transforms.Normalize((0.1307,), (0.3081,))
        ])),
        batch_size=args.test_batch_size, shuffle=True, **kwargs)

    model = Net().to(device)
    optimizer = optim.SGD(model.parameters(), lr=args.lr, momentum=args.momentum)

    for epoch in range(1, args.epochs + 1):
        train(args, model, device, train_loader, optimizer, epoch)
        test(args, model, device, test_loader)

    # Save the whole model object (pickled class reference + parameters)
    torch.save(model, "model.pth")


if __name__ == '__main__':
    start = time.time()
    main()
    end = time.time()
    running_time = end - start
    print('time cost : %.5f s' % running_time)
```
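The constant 320 in `x.view(-1, 320)` and `nn.Linear(320, 50)` follows from the 28×28 MNIST input: each 5×5 convolution without padding shrinks each side by 4, and each 2×2 max-pool halves it, leaving 20 channels of 4×4. A small sketch of that arithmetic (the helper names are hypothetical) also shows why the deployed network only accepts 28×28 images:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial size after a convolution (same formula as nn.Conv2d with dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2):
    """Spatial size after max pooling whose stride equals the kernel (the F.max_pool2d default)."""
    return (size - kernel) // kernel + 1


def flattened_features(side=28):
    s = conv_out(side, 5)  # conv1: 28 -> 24
    s = pool_out(s)        # pool:  24 -> 12
    s = conv_out(s, 5)     # conv2: 12 -> 8
    s = pool_out(s)        # pool:   8 -> 4
    return 20 * s * s      # conv2 produces 20 channels


print(flattened_features())  # 320
```

For any other input size the flattened length differs (for example 2420 for a 56×56 image), so `x.view(-1, 320)` no longer lines up with `fc1`.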

3. Testing the model

After training, run a quick sanity check.

I picked an arbitrary handwritten-digit image, saved as `img.jpg`.


The test code is as follows:

```python
import cv2
import torch
from PIL import Image
from torchvision import transforms

if __name__ == '__main__':
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model = torch.load('model.pth', map_location=device)  # load the full model (needs the Net class)
    model = model.to(device)
    model.eval()  # switch to evaluation mode (disables dropout)

    img = cv2.imread("img.jpg", 0)   # read the image to predict as grayscale
    img = cv2.resize(img, (28, 28))  # the network expects 28x28 input, like MNIST
    img = Image.fromarray(img)
    trans = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])
    img = trans(img)
    img = img.unsqueeze(0)  # add a batch dimension: [batch_size, channels, height, width], batch_size = 1
    img = img.to(device)

    output = model(img)
    pred = output.max(1, keepdim=True)[1]  # index of the largest log-probability
    pred = torch.squeeze(pred)
    print('Prediction: %d' % pred.item())
```
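Since the C++ side will have to reproduce this preprocessing without torchvision, it helps to spell out what the two transforms compute: `ToTensor()` scales 8-bit pixels from [0, 255] to [0, 1] and adds a channel dimension, and `Normalize((0.1307,), (0.3081,))` then computes (x - 0.1307) / 0.3081, using the MNIST mean and standard deviation. A NumPy-only sketch of the same steps for a grayscale `uint8` array; the function name `preprocess` is hypothetical:

```python
import numpy as np

MNIST_MEAN = 0.1307
MNIST_STD = 0.3081


def preprocess(gray_u8):
    """Mimic ToTensor() + Normalize() for an HxW uint8 grayscale image.

    Returns a float32 array of shape [1, 1, H, W] (batch, channel, height, width).
    """
    x = gray_u8.astype(np.float32) / 255.0  # ToTensor: [0, 255] -> [0.0, 1.0]
    x = (x - MNIST_MEAN) / MNIST_STD         # Normalize with the MNIST mean/std
    return x[np.newaxis, np.newaxis, :, :]   # add channel and batch dimensions


if __name__ == "__main__":
    img = np.array([[0, 255]], dtype=np.uint8)
    print(preprocess(img))  # values are about -0.4242 (black) and 2.8215 (white)
```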

If you get

`AttributeError: Can't get attribute 'Net' on <module '__main__' from 'test.py'>`

copy the `Net` class from the training script into test.py.

After running `python test.py`, the output shows that the model's prediction is correct:

Prediction: 2
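The `AttributeError` above happens because `torch.save(model, ...)` pickles the model object. Pickle stores only a reference to the class (`__main__.Net`), so that class must exist wherever the file is loaded. A common alternative is to save only the `state_dict` and rebuild the network before loading it. The sketch below assumes `Net` is defined in (or imported by) the loading script, and the file name `model_state.pth` is hypothetical. Recent PyTorch releases (2.6 and later) also default `torch.load` to `weights_only=True`, which rejects fully pickled models but accepts a plain `state_dict`:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    # Same architecture as the training script
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
        self.conv2_drop = nn.Dropout2d()
        self.fc1 = nn.Linear(320, 50)
        self.fc2 = nn.Linear(50, 10)

    def forward(self, x):
        x = F.relu(F.max_pool2d(self.conv1(x), 2))
        x = F.relu(F.max_pool2d(self.conv2_drop(self.conv2(x)), 2))
        x = x.view(-1, 320)
        x = F.relu(self.fc1(x))
        x = F.dropout(x, training=self.training)
        return F.log_softmax(self.fc2(x), dim=1)


def save_weights(model, path="model_state.pth"):
    # Stores only the parameter tensors, not a reference to the class
    torch.save(model.state_dict(), path)


def load_weights(path="model_state.pth", device="cpu"):
    model = Net()                                  # rebuild the architecture first
    state = torch.load(path, map_location=device)  # a plain dict of tensors
    model.load_state_dict(state)                   # copy the saved tensors in
    return model.to(device).eval()                 # eval() disables dropout
```

In the training script, `save_weights(model)` would replace `torch.save(model, "model.pth")`, and the test and conversion scripts would call `load_weights()` instead of `torch.load('model.pth')`.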

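4. Converting the model

A model saved by PyTorch this way cannot be loaded directly by libtorch. It must first be serialized into a TorchScript module.

The conversion is simple: take a sample image and pass it through the model once with `torch.jit.trace`, which records the operations executed during that forward pass. Then save the result.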

```python
import cv2
import torch
import torch.nn as nn
import torch.nn.functional as F
from PIL import Image
from torchvision import transforms


class Net(nn.Module):
    # Same definition as in the training script; torch.load needs it to unpickle model.pth
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
        self.conv2_drop = nn.Dropout2d()
        self.fc1 = nn.Linear(320, 50)
        self.fc2 = nn.Linear(50, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = F.relu(F.max_pool2d(x, 2))
        x = self.conv2(x)
        x = F.relu(F.max_pool2d(self.conv2_drop(x), 2))
        x = x.view(-1, 320)
        x = F.relu(self.fc1(x))
        x = F.dropout(x, training=self.training)
        x = self.fc2(x)
        return F.log_softmax(x, dim=1)


if __name__ == '__main__':
    # Trace on the CPU (CUDA also works); libtorch should then load the model on the same device
    device = torch.device('cpu')
    model = torch.load('model.pth', map_location=device)  # load the model
    model = model.to(device)
    model.eval()  # evaluation mode, so dropout behaves as in inference

    img = cv2.imread("img.jpg", 0)   # read a sample grayscale image
    img = cv2.resize(img, (28, 28))  # the network expects 28x28 input
    img = Image.fromarray(img)
    trans = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])
    img = trans(img)
    img = img.unsqueeze(0)  # add a batch dimension: [batch_size, channels, height, width]
    img = img.to(device)

    traced_net = torch.jit.trace(model, img)  # run one forward pass and record it
    traced_net.save("model_cpu.pt")
    print("Model serialized and exported successfully")
```

Running the conversion produces `model_cpu.pt`.
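Before moving to C++, it can be worth checking in Python that the exported file loads on its own and reproduces the eager model's output. A minimal sketch, assuming the eager model and the `model_cpu.pt` path from the step above, with a random 1×1×28×28 tensor standing in for a real image. `torch.jit.load` is the Python counterpart of libtorch's `torch::jit::load`; the helper name `check_traced` is hypothetical:

```python
import torch


def check_traced(eager_model, traced_path, atol=1e-5):
    """Return True if the saved TorchScript file matches the eager model on a random input."""
    eager_model = eager_model.eval()                         # dropout off, as during tracing
    loaded = torch.jit.load(traced_path, map_location="cpu")
    example = torch.randn(1, 1, 28, 28)                      # shape of one preprocessed MNIST image
    with torch.no_grad():
        expected = eager_model(example)
        actual = loaded(example)
    return torch.allclose(expected, actual, atol=atol)
```

In the conversion script, `print(check_traced(model, "model_cpu.pt"))` after `traced_net.save(...)` should print `True`.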

That completes the training and conversion workflow. In the next post, I will load this model with libtorch and verify it.

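5. Supplement: DataParallel

Sometimes a server has more than one GPU, and you may use `nn.DataParallel` to run the model on several GPUs in parallel. In that case, serializing the model works slightly differently.

Serialization: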

```python
import torch

device = torch.device('cuda')
model = get_model(args_in)  # get_model and args_in come from the author's own project
model = torch.nn.DataParallel(model, device_ids=[0])
model.load_state_dict(torch.load(args_in.test_model_path))
model.to(device)

# Use evaluation mode to disable dropout, etc.
model.eval()

# The tracing input does not need to have the same size as later inputs,
# as long as the model itself accepts variable input sizes.
example = torch.rand(1, 3, 256, 256).to(device)

# Use torch.jit.trace to generate a torch.jit.ScriptModule via tracing;
# trace model.module, the network wrapped inside DataParallel.
traced_script_module = torch.jit.trace(model.module, example)
traced_script_module.save("traced_model.pt")
```

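Notes:

1. Parallelization happens on the GPU, so tensors must be moved there with `.to(device)` or `.cuda()`.

2. For a model trained with `DataParallel`, trace `model.module` (the original network wrapped by `DataParallel`) rather than `model` itself.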
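A related pitfall: every key in the `state_dict` of a `DataParallel`-wrapped model carries a `module.` prefix, so loading such a checkpoint into a plain, unwrapped network fails with missing and unexpected keys. One common workaround, offered here as an assumption rather than the author's method, is to strip the prefix before calling `load_state_dict`. The helper name `strip_module_prefix` is hypothetical:

```python
from collections import OrderedDict

import torch.nn as nn


def strip_module_prefix(state_dict, prefix="module."):
    """Return a copy of state_dict with the DataParallel 'module.' prefix removed from its keys."""
    cleaned = OrderedDict()
    for key, value in state_dict.items():
        if key.startswith(prefix):
            key = key[len(prefix):]
        cleaned[key] = value
    return cleaned


if __name__ == "__main__":
    plain = nn.Linear(4, 2)
    wrapped = nn.DataParallel(nn.Linear(4, 2))  # construction also works on CPU-only machines
    print(list(wrapped.state_dict().keys()))    # ['module.weight', 'module.bias']
    plain.load_state_dict(strip_module_prefix(wrapped.state_dict()))
```

With this, a checkpoint saved from the wrapped model can be loaded into the bare network and traced directly, without wrapping it in `DataParallel` first.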

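6. Tracing and scripting

libtorch does not depend on Python, but a model trained in Python must be converted into a TorchScript (script) model before libtorch can load it for inference. The official documentation offers two ways to do this:

tracing (`torch.jit.trace`) and scripting (`torch.jit.script`).

Everything above uses tracing, which is simple and sufficient for my needs. Its drawback is that it only suits models without data-dependent branches. Tracing records the operations executed for the example input, so an `if`/`else` in `forward` is frozen into whichever branch that input took.

If the model is more complex and its `forward` contains branches, compile the module with `torch.jit.script` to convert it into a ScriptModule. For example: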

```python
import torch


class MyModule(torch.nn.Module):
    def __init__(self, N, M):
        super(MyModule, self).__init__()
        self.weight = torch.nn.Parameter(torch.rand(N, M))

    def forward(self, input):
        # Data-dependent branch: scripting keeps both paths
        if input.sum() > 0:
            output = self.weight.mv(input)
        else:
            output = self.weight + input
        return output


my_module = MyModule(10, 20)
sm = torch.jit.script(my_module)
```
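To see why tracing is not enough here, the sketch below repeats the `MyModule` class so it runs on its own, traces it with a positive input, and then feeds it a negative one. The traced version replays the recorded `mv` branch, while the scripted version takes the `else` branch just like eager PyTorch. The input values are illustrative, and the trace call is expected to emit a `TracerWarning` about converting a tensor to a Python boolean. The helper name `compare` is hypothetical:

```python
import torch


class MyModule(torch.nn.Module):
    def __init__(self, N, M):
        super(MyModule, self).__init__()
        self.weight = torch.nn.Parameter(torch.rand(N, M))

    def forward(self, input):
        if input.sum() > 0:
            output = self.weight.mv(input)  # shape [N]
        else:
            output = self.weight + input    # broadcasts to shape [N, M]
        return output


def compare(N=10, M=20):
    module = MyModule(N, M)
    positive = torch.ones(M)
    negative = -torch.ones(M)
    traced = torch.jit.trace(module, positive)  # records only the mv branch
    scripted = torch.jit.script(module)         # compiles both branches
    with torch.no_grad():
        eager_out = module(negative)
        traced_out = traced(negative)
        scripted_out = scripted(negative)
    return eager_out.shape, traced_out.shape, scripted_out.shape


if __name__ == "__main__":
    print(compare())  # (torch.Size([10, 20]), torch.Size([10]), torch.Size([10, 20]))
```

The traced module silently returns a result of the wrong shape for the negative input, which is exactly the failure mode that makes scripting necessary for models with branches.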

Official documentation:

https://pytorch.org/tutorials/advanced/cpp_export.html